Map Reduce - an overview

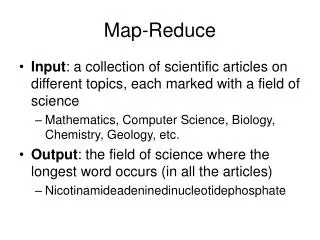

This document provides an extensive overview of the MapReduce programming model, which enables the processing of large datasets across distributed environments. It covers foundational concepts such as the WordCount example, programming paradigms, supported languages (Java, Ruby, Python, C++), and inherent parallelism. Key challenges related to data segregation, network transfer bottlenecks, and disk-based data handling are also discussed, alongside pseudo-code illustrations for practical understanding. The content is designed for developers and data engineers interested in scalable data processing solutions.

Presentation Transcript

Nagarjuna K Map Reduce - an overview nagarjuna@outlook.com

AGENDA
• Understanding MapReduce
• Map Reduce - An Introduction
• Word count – default
• Word count – custom

Map Reduce
• Programming model to process large datasets
• Supported languages for MR
  • Java
  • Ruby
  • Python
  • C++
• Map Reduce programs are inherently parallel.
• More data means more machines to analyze it.
• No need to change anything in the code.

Understanding MapReduce
• Start with the WORDCOUNT example
• “Do as I say, not as I do”

Understanding MapReduce - pseudo code
define wordCount as Map<String,long>;
for each document in documentSet {
    T = tokenize(document);
    for each token in T {
        wordCount[token]++;
    }
}
display(wordCount);
• This works as long as the number of documents to process is not very large
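
The single-machine pseudo code above can be sketched in Python roughly as follows. The `tokenize` rule (lowercase, split on non-alphanumeric characters) and the sample documents are assumptions made for illustration; the slides do not define them.

```python
import re
from collections import Counter


def tokenize(document):
    # Assumed tokenizer: lowercase the text and keep runs of letters,
    # digits and apostrophes. The slides leave tokenize() undefined.
    return re.findall(r"[a-z0-9']+", document.lower())


def word_count(document_set):
    # One in-memory map from token to count, as in the pseudo code.
    counts = Counter()
    for document in document_set:
        for token in tokenize(document):
            counts[token] += 1
    return counts


documents = ["Do as I say", "not as I do"]  # illustrative input
counts = word_count(documents)
print(counts["do"], counts["say"])
```

Everything lives in one process and one dictionary, which is exactly the limitation the following slides address.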

Understanding MapReduce - pseudo code
• Spam filter
  • Millions of emails
  • Word count for analysis
• Working from a single computer is time consuming
• Rewrite the program to count from multiple machines

Understanding MapReduce - pseudo code
• How do we attain parallel computing?
  • Each machine processes a fraction of the documents
  • Combine the results from all the machines

Understanding MapReduce - pseudo code
STAGE 1
define wordCount as Map<String,long>;
for each document in documentSUBSet {
    T = tokenize(document);
    for each token in T {
        wordCount[token]++;
    }
}

Understanding MapReduce - pseudo code
STAGE 2
define totalWordCount as Multiset;
for each wordCount received from firstPhase {
    multisetAdd(totalWordCount, wordCount);
}
display(totalWordCount);
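
A minimal sketch of the two stages, assuming each "machine" is simulated by a plain function call in one process and that whitespace splitting is an acceptable tokenizer. The names `stage1_count` and `stage2_merge` are hypothetical; Python's `Counter` plays the role of the multiset.

```python
from collections import Counter


def stage1_count(document_subset):
    # STAGE 1: each machine counts words in its own subset of documents.
    counts = Counter()
    for document in document_subset:
        counts.update(document.lower().split())
    return counts


def stage2_merge(partial_counts):
    # STAGE 2: a single machine adds up the partial counts it receives.
    total = Counter()
    for counts in partial_counts:
        total.update(counts)  # Counter.update adds counts, it does not overwrite
    return total


# Two simulated machines, one document subset each (illustrative data).
subsets = [["do as i say"], ["not as i do"]]
partials = [stage1_count(subset) for subset in subsets]
total = stage2_merge(partials)
print(total["do"], total["not"])
```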

Understanding MapReduce - pseudo code
[Diagram: Master, Documents, Comp-1, Comp-2, Comp-3, Comp-4]

Understanding MapReduce - pseudo code
• Problems
• STAGE 1
  • Document segregation needs to be well defined
  • Bottleneck in network transfer
    • Data-intensive processing
    • Not computationally intensive
    • So it is better to store files on the processing machines
  • BIGGEST FLAW
    • Storing the words and counts in memory
    • Disk-based hash-table implementation needed
[Diagram: Master, Documents, Comp-1, Comp-2, Comp-3, Comp-4]
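
One way to picture keeping the counts on disk instead of in RAM is sketched below. It swaps in SQLite from the Python standard library as the disk-backed key/value store, which is a B-tree rather than a true hash table, so treat it as a stand-in for the idea and not as what Hadoop does. The class name `DiskWordCount` and the file name are hypothetical.

```python
import os
import sqlite3
import tempfile


class DiskWordCount:
    """Word counts kept in a file on disk rather than in a dictionary."""

    def __init__(self, path):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS counts "
            "(word TEXT PRIMARY KEY, n INTEGER NOT NULL)"
        )

    def add(self, word, n=1):
        # Increment an existing row, or insert the word if it is new.
        cur = self.db.execute(
            "UPDATE counts SET n = n + ? WHERE word = ?", (n, word)
        )
        if cur.rowcount == 0:
            self.db.execute(
                "INSERT INTO counts (word, n) VALUES (?, ?)", (word, n)
            )

    def get(self, word):
        row = self.db.execute(
            "SELECT n FROM counts WHERE word = ?", (word,)
        ).fetchone()
        return row[0] if row else 0


with tempfile.TemporaryDirectory() as tmp:
    table = DiskWordCount(os.path.join(tmp, "wordcount.db"))
    for token in "do as i say not as i do".split():
        table.add(token)
    table.db.commit()
    as_count = table.get("as")
    missing_count = table.get("hadoop")
    table.db.close()
```

The working set is now bounded by disk space instead of memory, at the cost of slower updates.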

Understanding MapReduce - pseudo code
• Problems
• STAGE 2
  • Phase 2 has only one machine
    • Bottleneck
    • Phase 1 is highly distributed, though
  • Make phase 2 distributed as well
    • Needs changes in phase 1
    • Partition the phase-1 output (say, based on the first character of the word)
    • We then have 26 machines in phase 2
    • The single disk-based hash table should now become 26 disk-based hash tables
    • wordcount-a, wordcount-b, wordcount-c
[Diagram: Master, Documents, Comp-1, Comp-2, Comp-3, Comp-4]
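
Partitioning the phase-1 output by first character could look roughly like this. The extra `"other"` bucket for words that do not start with a-z is an assumption added here so the sketch does not lose data; the slides only mention 26 partitions. `partition_for` and `partition_counts` are hypothetical names.

```python
from collections import Counter, defaultdict


def partition_for(word):
    # Partition key = first character of the (non-empty) word.
    # Assumption: anything outside a-z goes to an extra "other" bucket.
    first = word[0].lower()
    return first if "a" <= first <= "z" else "other"


def partition_counts(word_counts):
    # Split one machine's phase-1 output into per-letter tables
    # (wordcount-a, wordcount-b, ...).
    partitions = defaultdict(Counter)
    for word, count in word_counts.items():
        partitions[partition_for(word)][word] += count
    return partitions


phase1_output = Counter({"apple": 3, "avocado": 1, "banana": 2, "42": 5})
parts = partition_counts(phase1_output)
print(sorted(parts))
```

Because every occurrence of a given word lands in the same partition, each phase-2 machine can finish its share of the totals without talking to the others.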

Understanding MapReduce - pseudo code
[Diagram: Master and Documents; phase-1 machines Comp-1, Comp-2, Comp-3, Comp-4; phase-2 machines Comp-10, Comp-20, Comp-30, ..., Comp-40]

Understanding MapReduce - pseudo code
• After phase-1
  • From comp-1
    • WordCount-A goes to comp-10
    • WordCount-B goes to comp-20
    • ...
• Each machine in phase 1 will shuffle its output to different machines in phase 2
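
The shuffle step can be sketched as routing each partition from every phase-1 machine to the phase-2 machine that owns that partition. Machines are simulated with dictionaries here, and `shuffle` and `reduce_partition` are hypothetical names; a real framework moves this data over the network.

```python
from collections import Counter, defaultdict


def shuffle(phase1_outputs):
    # Each phase-1 output maps partition key -> Counter.
    # Group the pieces so one phase-2 machine receives all data for its key.
    inbox = defaultdict(list)
    for partitions in phase1_outputs:
        for partition_key, counts in partitions.items():
            inbox[partition_key].append(counts)
    return inbox


def reduce_partition(received):
    # A phase-2 machine sums everything it received for its partition.
    total = Counter()
    for counts in received:
        total.update(counts)
    return total


# Partitioned outputs of two simulated phase-1 machines (illustrative data).
comp_1 = {"a": Counter({"as": 1}), "d": Counter({"do": 1})}
comp_2 = {"a": Counter({"as": 1}), "d": Counter({"do": 1}), "n": Counter({"not": 1})}

inbox = shuffle([comp_1, comp_2])
results = {key: reduce_partition(received) for key, received in inbox.items()}
print(results["a"]["as"], results["n"]["not"])
```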

Word Count -- retrospection
• This is getting complicated
  • Store files where they are being processed
  • Write a disk-based hash table to get around RAM limitations
  • Partition the phase-1 output
  • Shuffle the phase-1 output and send it to the appropriate reducer

Word Count -- retrospection
• This is more than a lot for word count
• We haven’t even touched fault tolerance
  • What if comp-1 or comp-10 fails?
• So we need a framework to take care of all these things
  • We concentrate only on the business logic

Understanding MapReduce - pseudo code
[Diagram: Master; Documents in HDFS; MAPPER machines (Comp-1, Comp-2, Comp-3, Comp-4); interim output with partitioning and shuffling; REDUCER machines (Comp-10, Comp-20, Comp-30, ..., Comp-40)]

MapReduce
• Mapper
• Reducer
The Mapper filters and transforms the input.
The Reducer collects the mapper output and aggregates it.
Extensive research was done to arrive at this two-phase strategy.

MapReduce
• Mapper, Reducer, Partitioner, Shuffling
• Together they form a common structure for data processing

MapReduce - WordCount
• Mapper
  • <key,words_per_line> : Input
  • <word,1> : Output
• Reducer
  • <word,list(1)> : Input
  • <word,count(list(1))> : Output
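
The input and output shapes listed above can be illustrated with a small in-process sketch. This is not the Hadoop API: `mapper`, `reducer` and `run_job` are hypothetical functions, the line index stands in for the input key, and the grouping that the framework performs between the two phases is simulated with a dictionary.

```python
from collections import defaultdict


def mapper(key, line):
    # Input: <key, words_per_line>. Output: one <word, 1> pair per word.
    for word in line.split():
        yield word, 1


def reducer(word, ones):
    # Input: <word, list(1)>. Output: <word, count(list(1))>.
    return word, sum(ones)


def run_job(lines):
    # Simulated shuffle: group every emitted 1 under its word.
    grouped = defaultdict(list)
    for index, line in enumerate(lines):
        for word, one in mapper(index, line):
            grouped[word].append(one)
    return dict(reducer(word, ones) for word, ones in sorted(grouped.items()))


result = run_job(["do as i say", "not as i do"])
print(result["as"], result["say"])
```

Note that the mapper never keeps a running total; it only emits pairs, and all counting happens in the reducer.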

MapReduce
• As said, don’t store the data in memory
• So keys and values regularly have to be written to disk.
  • They must be serialized.
  • Hadoop provides its own serialization mechanism
  • Any class used as a key or value has to implement the Writable interface.
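
To make the serialization requirement concrete, here is a rough Python analogue of an object that knows how to write itself to a byte stream and read itself back. Hadoop's actual `Writable` is a Java interface with `write(DataOutput)` and `readFields(DataInput)` methods; the class `WordCountRecord` and the byte layout below are assumptions for illustration, not Hadoop's wire format.

```python
import io
import struct


class WordCountRecord:
    """Hypothetical <word, count> pair that serializes itself to bytes."""

    def __init__(self, word="", count=0):
        self.word = word
        self.count = count

    def write(self, out):
        # Assumed layout: 4-byte length, UTF-8 bytes, 8-byte signed count.
        encoded = self.word.encode("utf-8")
        out.write(struct.pack(">I", len(encoded)))
        out.write(encoded)
        out.write(struct.pack(">q", self.count))

    def read_fields(self, inp):
        # Read the fields back in exactly the order write() produced them.
        (length,) = struct.unpack(">I", inp.read(4))
        self.word = inp.read(length).decode("utf-8")
        (self.count,) = struct.unpack(">q", inp.read(8))


buffer = io.BytesIO()  # stands in for a file on disk or a network stream
WordCountRecord("hadoop", 3).write(buffer)
buffer.seek(0)

restored = WordCountRecord()
restored.read_fields(buffer)
print(restored.word, restored.count)
```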

Word Count – default
• Let’s try to execute the following command
  • hadoop jar hadoop-examples-0.20.2-cdh3u4.jar wordcount
  • hadoop jar hadoop-examples-0.20.2-cdh3u4.jar wordcount <input> <output>
• What does this code do?

CUSTOM WORD-COUNT
• Switch to Eclipse