Overview of Operating System Design Philosophies and Approaches

This document provides a comprehensive overview of operating system (OS) design philosophies, including Separatist, Compatibilist, Perfectionist, and Universalist perspectives. It examines different OS design approaches such as Layered, Kernel-Based, and Virtual Machine systems. Advanced OS concepts like Distributed OS, Multiprocessor OS, Database OS, and Real-time OS are discussed, along with essential process definitions, states, interactions, and deadlock management strategies, including Banker’s Algorithm. This overview serves as a foundational guide for understanding OS design principles and methodologies.

Overview of Operating System Design Philosophies and Approaches

E N D

Presentation Transcript

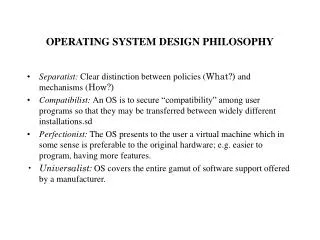

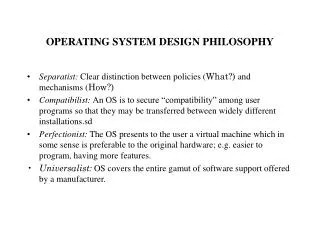

OPERATING SYSTEM DESIGN PHILOSOPHY
• Separatist: Clear distinction between policies (What?) and mechanisms (How?).
• Compatibilist: An OS is to secure “compatibility” among user programs so that they can be transferred between widely different installations.
• Perfectionist: The OS presents to the user a virtual machine that is in some sense preferable to the original hardware, e.g. easier to program or having more features.
• Universalist: The OS covers the entire gamut of software support offered by a manufacturer.

OPERATING SYSTEM DESIGN APPROACHES
• Layered: Functional partitioning into levels of service (compare the seven-layer ISO Open Systems Interconnection model). Example: MULTICS.
• Kernel-Based: A kernel, or nucleus, is a set of primitives that supports the tailoring of higher-level functionality (flexibility maximized). Example: HYDRA.
• Virtual Machine: A software wrapper on the basic hardware gives the user the illusion of total control of all resources (efficiency is the price paid for the illusion). Example: VM/370.

ADVANCED OPERATING SYSTEMS
• Distributed OS: provides OS functions to users of a network of autonomous computer systems such that a single service entity is perceived.
• Multiprocessor OS: supports a tightly coupled set of processors sharing a common address space.
• Database OS: provides transaction-based functions for data creation, access, and transfer, with concurrency and reliability as major goals.
• Real-time OS: supports deadline-driven functions with high reliability; real-time systems are classed as soft or hard.

PROCESS
• Informally, “a program in execution” or “the set of operations comprising a computational task.”
• An important property of the operations included in a computation is precedence. $a < b$, read “a precedes b,” means that all actions of operation a must end before any action of operation b begins.
• Precedence graph models: [Figure: precedence graphs over operations a, b, c, d]

PROCESS
• Formal representation of a process graph: $P = \{p_i \mid 1 \le i \le n\}$ is a set of processes, and ${<} = \{(p_i, p_k) \mid 1 \le i, k \le n\}$ is a partial order on $P$, where $(p_i, p_k) \in {<}$ iff process $p_i$ must terminate before process $p_k$ can initiate. The ordered pair $(P, <)$ is then described as a computation.
• Relation to an object, which is described
  • statically, by enumerating all attribute values of the object, giving its state at some time epoch $t_i$, and
  • dynamically, by the process that expresses the present state of the object in terms of its past states.
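A computation $(P, <)$ can be run sequentially in any order that respects the partial order, that is, in any topological order of its precedence graph. The following Python sketch uses Kahn's algorithm, a standard technique not named on the slide; the function name `execution_order` and the example edges are hypothetical.

```python
from collections import deque


def execution_order(processes, precedes):
    """Return one sequential order consistent with the precedence pairs,
    or None if the pairs contain a cycle (so they are not a partial order)."""
    indegree = {p: 0 for p in processes}
    successors = {p: [] for p in processes}
    for before, after in precedes:
        successors[before].append(after)
        indegree[after] += 1
    # Processes with no unfinished predecessors may start
    ready = deque(p for p in processes if indegree[p] == 0)
    order = []
    while ready:
        p = ready.popleft()
        order.append(p)
        for q in successors[p]:
            indegree[q] -= 1
            if indegree[q] == 0:
                ready.append(q)
    return order if len(order) == len(processes) else None


# Hypothetical computation: p1 < p2, p1 < p3, p2 < p4, p3 < p4
procs = ["p1", "p2", "p3", "p4"]
edges = [("p1", "p2"), ("p1", "p3"), ("p2", "p4"), ("p3", "p4")]
print(execution_order(procs, edges))  # ['p1', 'p2', 'p3', 'p4']
```

Here p2 and p3 are not ordered by <, so they could also run concurrently; the list returned is just one valid sequential order.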

PROCESS STATE TRANSITION MODEL
Consider the states describing a process as:
I = Initiation (origination)
R = Ready (all resources but the CPU)
E = Executing (on a CPU)
B = Blocked (needing a resource)
P = Preempted (by another process)
T = Terminated
[Figure: state transition diagram over states I, R, E, B, P, T]
Self-directed arcs indicate no state change. Are any other transitions possible? Using a discrete characterization of time, a Markov model could be derived.
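Because the transition diagram did not survive extraction, the arcs in this Python sketch are an assumption (one common reading of these six states), not the slide's figure. The sketch checks whether a sequence of states follows the assumed arcs, treating a repeated state as a self-directed arc; attaching a probability to each arc would be the starting point for the Markov model mentioned above.

```python
from enum import Enum


class State(Enum):
    I = "Initiation"
    R = "Ready"
    E = "Executing"
    B = "Blocked"
    P = "Preempted"
    T = "Terminated"


# ASSUMED arcs: the original diagram is not recoverable from the text.
TRANSITIONS = {
    State.I: {State.R},
    State.R: {State.E},
    State.E: {State.B, State.P, State.T},
    State.B: {State.R},
    State.P: {State.R},
    State.T: set(),
}


def is_valid_trace(trace):
    """True if each consecutive pair is a self-loop (no change) or an assumed arc."""
    return all(b == a or b in TRANSITIONS[a] for a, b in zip(trace, trace[1:]))


S = State
print(is_valid_trace([S.I, S.R, S.E, S.P, S.R, S.E, S.T]))  # True
print(is_valid_trace([S.I, S.E]))  # False under the assumed arcs
```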

PROCESS
• Process interaction:
  • to exchange data
  • to effect the sharing of a physical resource
  • to simplify our understanding of the computational correctness
• Sequential computations are important in assuring determinism. Determinacy versus quasi- (or weak) determinacy.
• Threads or lightweight processes:
  • Purpose
  • Impact

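DETERMINACY
Computation: $C = \langle (P_1, P_2, P_3),\; P_2 \Rightarrow P_3 \rangle$
Process definitions:
$D(P_1) = M_1, \quad R(P_1) = M_2$
$D(P_2) = M_2, \quad R(P_2) = M_3$
$D(P_3) = M_4, \quad R(P_3) = M_3$
[Figure: initial state $S_0$ over memory cells $M_1, M_2, M_3, M_4$]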
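Reading D(P) as the set of cells a process reads (its domain) and R(P) as the set of cells it writes (its range), the example can be checked against the standard noninterference condition: two processes that are not ordered by precedence must not write a cell that the other reads or writes. This Python sketch is an illustration under that reading; it checks only the direct precedence pair given on the slide, which is sufficient here, and the function names are hypothetical.

```python
from itertools import combinations

# Domain D(P): cells read; range R(P): cells written (values from the slide)
domain = {"P1": {"M1"}, "P2": {"M2"}, "P3": {"M4"}}
range_ = {"P1": {"M2"}, "P2": {"M3"}, "P3": {"M3"}}
precedence = {("P2", "P3")}  # P2 => P3


def interfere(a, b):
    # Interference: a write by one process touches a cell the other reads or writes
    return bool(range_[a] & range_[b] or range_[a] & domain[b] or domain[a] & range_[b])


def unordered_conflicts():
    """Pairs that interfere but are not ordered by the given precedence pairs."""
    return [
        (a, b)
        for a, b in combinations(sorted(domain), 2)
        if interfere(a, b) and (a, b) not in precedence and (b, a) not in precedence
    ]


print(unordered_conflicts())  # [('P1', 'P2')]
```

The only flagged pair is (P1, P2): P1 writes M2, which P2 reads, and neither precedes the other, so the value P2 writes into M3 depends on whether P1 updates M2 first. Since noninterference is a sufficient condition for determinacy, this computation is not guaranteed to be determinate. P2 and P3 both write M3, but P2 ⇒ P3 orders them.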

GRAPH REDUCTION ALGORITHM
• Select a process p that is neither blocked nor an isolated node, and remove all its edges. (Process p has acquired all its requested resources, executed to completion, and released them.)
• A graph is completely reducible if repeated application of the first step leads to in-degree = out-degree = 0 for all nodes in the graph (all edges are removed).
• A graph is irreducible if it cannot be reduced by the selection of any process in Step 1.
Deadlock Theorem: S is a deadlock state iff the reusable resource graph of S is not completely reducible.
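A minimal Python sketch of the reduction for reusable resources with multiple units, assuming the graph is encoded as vectors: `available` holds the unallocated units of each resource type, and `allocation[p]` and `request[p]` hold the units process p holds and is still waiting for. The encoding and the function name are hypothetical. By the Deadlock Theorem, a result of False signals a deadlock state.

```python
def completely_reducible(available, allocation, request):
    """available: list of free units per resource type.
    allocation, request: dict mapping process -> list of units per type."""
    work = list(available)
    remaining = set(allocation)
    progress = True
    while progress:
        progress = False
        for p in sorted(remaining):
            # p is not blocked if every outstanding request can be met now
            if all(r <= w for r, w in zip(request[p], work)):
                # Reduce p: it completes and releases what it holds (edges removed)
                work = [w + a for w, a in zip(work, allocation[p])]
                remaining.remove(p)
                progress = True
                break
    return not remaining


# Hypothetical graph: one unit each of R1 and R2, both allocated
alloc = {"p1": [1, 0], "p2": [0, 1]}
print(completely_reducible([0, 0], alloc, {"p1": [0, 1], "p2": [1, 0]}))  # False: deadlock
print(completely_reducible([0, 0], alloc, {"p1": [0, 1], "p2": [0, 0]}))  # True
```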

Banker’s Algorithm: Multiple Resource Types
Data Structures (n processes and m resource types)
• Available: A vector of length m giving the number of available units of each resource type. (Available[j] = k means that k units of resource type j are available.)
• Max: The maximum demand of each process i for resource type j. If Max(i,j) = k, then process i may request at most k units of resource type j. The matrix is n × m.
• Allocation: An n × m matrix giving the number of units of each type currently allocated to each process.
• Claim: An n × m matrix giving the remaining resource units that can be claimed by each process: Claim(i,j) := Max(i,j) − Allocation(i,j).
• Request: A vector of length m giving the number of units of each resource type requested by process i.
Notation
Let X and Y be vectors of length m. Then X ≤ Y if and only if X[j] ≤ Y[j] for all j = 1, 2, ..., m. Each request by process i is represented as Request_i. Allocation_i and Claim_i are rows of their respective matrices, treated as vectors.

Banker’s Algorithm
1. If Request_i ≤ Claim_i, go to Step 2; otherwise an error occurs, since the process has exceeded its maximum claim for some resource type.
2. If Request_i ≤ Available, proceed to Step 3. Otherwise, the resources are not available and process i must wait.
3. The Resource Manager pretends to have allocated the requested resources to process i by modifying the state as follows:
   Available := Available − Request_i;
   Allocation_i := Allocation_i + Request_i;
   Claim_i := Claim_i − Request_i;
   then it runs the Safety Algorithm. If the resulting state is safe, the transaction is completed and process i is allocated the requested resources. If the resulting state is unsafe, the request of process i is denied, the original state is restored, and process i waits.

Safety Algorithm
1. Let Work be an integer-valued vector of length m and Finish a boolean-valued vector of length n. Set Work := Available and Finish[i] := false for i = 1, ..., n.
2. Find a process i such that Finish[i] = false and Claim_i ≤ Work. If no such i exists, go to Step 4.
3. Work := Work + Allocation_i; Finish[i] := true; go to Step 2.
4. If Finish[i] = true for all i, then the system is in a safe state; otherwise it is unsafe.
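The request steps and the Safety Algorithm combine into the following Python sketch. The function names and the example state are hypothetical; `claim` stores Claim = Max − Allocation as defined in the data structures, and the caller's lists are updated in place only when a request is granted.

```python
def is_safe(available, allocation, claim):
    """Safety Algorithm: True if every process can finish in some order."""
    work = list(available)
    finish = [False] * len(allocation)
    progress = True
    while progress:
        progress = False
        for i in range(len(allocation)):
            if not finish[i] and all(c <= w for c, w in zip(claim[i], work)):
                # Process i can run to completion and return its allocation
                work = [w + a for w, a in zip(work, allocation[i])]
                finish[i] = True
                progress = True
    return all(finish)


def request(i, req, available, allocation, claim):
    """Banker's Algorithm for Request_i; returns True if the request is granted."""
    if any(r > c for r, c in zip(req, claim[i])):
        raise ValueError("process exceeds its maximum claim")  # Step 1 error
    if any(r > a for r, a in zip(req, available)):
        return False  # Step 2: resources not available, process i waits
    # Step 3: pretend to allocate on copies, then run the Safety Algorithm
    new_avail = [a - r for a, r in zip(available, req)]
    new_alloc = [row[:] for row in allocation]
    new_claim = [row[:] for row in claim]
    new_alloc[i] = [a + r for a, r in zip(allocation[i], req)]
    new_claim[i] = [c - r for c, r in zip(claim[i], req)]
    if not is_safe(new_avail, new_alloc, new_claim):
        return False  # unsafe: original state is kept, process i waits
    available[:], allocation[:], claim[:] = new_avail, new_alloc, new_claim
    return True


# Hypothetical state: 5 processes, 3 resource types
available = [3, 3, 2]
allocation = [[0, 1, 0], [2, 0, 0], [3, 0, 2], [2, 1, 1], [0, 0, 2]]
maximum = [[7, 5, 3], [3, 2, 2], [9, 0, 2], [2, 2, 2], [4, 3, 3]]
claim = [[m - a for m, a in zip(mr, ar)] for mr, ar in zip(maximum, allocation)]

print(is_safe(available, allocation, claim))                # True
print(request(1, [1, 0, 2], available, allocation, claim))  # True: granted
print(request(0, [0, 2, 0], available, allocation, claim))  # False: would be unsafe
```

After the first grant, Available is [2, 3, 0]; the second request would leave [2, 1, 0], with which no process can meet its remaining claim, so it is refused and the state is left unchanged.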

ISSUES IN DISTRIBUTED OPERATING SYSTEMS
• Global/local relationships
• Decentralized or centralized control
• Ordering of events and clock management
• Object identification (naming)
• Replication with storage at different physical locations
• Partitioning among different physical locations
• Combined replication/partitioning
• Scalability
• Compatibility
  • Binary: identical instruction set architectures
  • Execution: all processors can compile and execute the same source
  • Protocol: all processing sites support the same protocols

ISSUES IN DISTRIBUTED OS (Continued)
• Process synchronization: mutual exclusion on a wider scale
• Local versus remote control
• Deadlock
• Resource management
  • Data migration
  • Computation migration
  • Distributed scheduling
• Security
• Structuring: interactions and interrelationships
  • Monolithic kernel
  • Collective kernel
  • Object oriented
  • Client/server model

LAMPORT’S LOGICAL CLOCKS
The “happened before” relation $a \rightarrow b$ is defined as follows:
• $a \rightarrow b$ if a and b are events in the same process and a occurred before b.
• $a \rightarrow b$ if a is the event of sending a message m in one process and b is the event of receiving the same message m in another process.
• If $a \rightarrow b$ and $b \rightarrow c$, then $a \rightarrow c$ (the relation is transitive).
Events ordered by the “happened before” relation are said to be causally related. Two events a and b are concurrent if $a \not\rightarrow b$ and $b \not\rightarrow a$.

SPACE-TIME DIAGRAM
Label the diagram with process and event IDs and vector clock values, and use it to explain and contrast event causality and message causality.
[Figure: space-time diagram; axes Space (processes) and Global Time]

LLC: IMPLEMENTATION RULES
IR1: Clock $C_i$ is incremented between any two successive events in process $P_i$: $C_i := C_i + d \quad (d > 0)$.
IR2: If event a is the sending of message m by process $P_i$, then m is assigned the timestamp $t_m := C_i(a)$ (the value of $C_i(a)$ obtained after applying IR1). On receiving m, process $P_j$ sets $C_j$ to a value greater than or equal to its present value and greater than $t_m$: $C_j := \max(C_j, t_m + d) \quad (d > 0)$.
The “happened before” relation defines an irreflexive partial ordering on the events.
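A minimal Python sketch of the two rules, assuming d = 1 by default; the class and method names are hypothetical.

```python
class LamportClock:
    """Scalar logical clock for one process, following IR1 and IR2."""

    def __init__(self, d=1):
        if d <= 0:
            raise ValueError("d must be positive")
        self.d = d
        self.time = 0

    def local_event(self):
        # IR1: increment between successive events
        self.time += self.d
        return self.time

    def send(self):
        # Sending is an event: apply IR1, then stamp the message with C_i(a)
        return self.local_event()

    def receive(self, t_m):
        # IR2 as stated above: C_j := max(C_j, t_m + d)
        self.time = max(self.time, t_m + self.d)
        return self.time


p1, p2 = LamportClock(), LamportClock()
p1.local_event()        # C1 = 1
t_m = p1.send()         # C1 = 2, message stamped 2
p2.local_event()        # C2 = 1
print(p2.receive(t_m))  # 3
```

The receive is stamped 3, later than the send's 2, as the "happened before" relation requires.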

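VECTOR CLOCKS IMPLEMENTATION RULES
IR1: Clock $C_i$ is incremented between any two successive events in process $P_i$: $C_i[i] := C_i[i] + d \quad (d > 0)$.
IR2: If event a is the sending of message m by process $P_i$, then m is assigned the vector timestamp $t_m = C_i(a)$. On receipt of m by $P_j$, $C_j$ is updated by $C_j[k] := \max(C_j[k], t_m[k])$ for all k.
Note: With vector clocks, $a \rightarrow b$ if and only if $C(a) < C(b)$, where $C(a) < C(b)$ means $C(a)[k] \le C(b)[k]$ for all k and $C(a) \ne C(b)$. For Lamport's scalar clocks, by contrast, $a \rightarrow b$ implies $C(a) < C(b)$, but the converse does not necessarily hold.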
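A Python sketch of these rules with d = 1, together with the vector comparison used in the note. Treating the receive itself as an event, so that IR1 is applied before the merge, follows the "between any two successive events" wording of IR1; the class and function names are hypothetical.

```python
class VectorClock:
    """Vector clock for process i among n processes, following IR1 and IR2."""

    def __init__(self, i, n, d=1):
        self.i, self.d = i, d
        self.v = [0] * n

    def local_event(self):
        self.v[self.i] += self.d  # IR1
        return list(self.v)

    def send(self):
        # t_m = C_i(a), taken after IR1 is applied to the send event
        return self.local_event()

    def receive(self, t_m):
        self.local_event()  # the receive is itself an event (IR1)
        self.v = [max(x, y) for x, y in zip(self.v, t_m)]  # IR2
        return list(self.v)


def happened_before(ta, tb):
    # C(a) < C(b): every component <=, and the vectors differ
    return all(x <= y for x, y in zip(ta, tb)) and ta != tb


def concurrent(ta, tb):
    return not happened_before(ta, tb) and not happened_before(tb, ta)


p0, p1 = VectorClock(0, 2), VectorClock(1, 2)
a = p0.send()          # [1, 0]
b = p1.local_event()   # [0, 1]
c = p1.receive(a)      # [1, 2]
print(happened_before(a, c), concurrent(a, b))  # True True
```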

BIRMAN-SCHIPER-STEPHENSON PROTOCOL
1. Before broadcasting message m, process $P_i$ increments the vector time $VT_{P_i}[i]$ and timestamps m. Note that $VT_{P_i}[i] - 1$ indicates the number of messages sent from $P_i$ that precede m.
2. Process $P_j \ne P_i$, on receiving message m timestamped $VT_m$ from $P_i$, delays its delivery until both of the following conditions are satisfied:
   2.1 $VT_{P_j}[i] = VT_m[i] - 1$
   2.2 $VT_{P_j}[k] \ge VT_m[k]$ for all $k = 1, 2, \ldots, n$, $k \ne i$
   Delayed messages are queued at each process in a queue sorted by the vector time of the messages. (Concurrent messages are ordered by their time of receipt.)
3. When a message is delivered at process $P_j$, $VT_{P_j}$ is updated according to the vector clocks rule (IR2).
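The delivery test and the delay queue can be sketched in Python as follows. Process indices are 0-based here, and the function names are hypothetical; sorting pending timestamps lexicographically is one way to keep them in an order consistent with vector time.

```python
def can_deliver(vt_pj, vt_m, i):
    """Conditions 2.1 and 2.2 at P_j for a message broadcast by P_i."""
    return vt_pj[i] == vt_m[i] - 1 and all(
        vt_pj[k] >= vt_m[k] for k in range(len(vt_m)) if k != i
    )


def deliver_ready(vt_pj, pending):
    """pending: list of (sender i, VT_m). Delivers every message whose conditions
    hold, updating vt_pj in place; returns the delivered messages in order."""
    delivered = []
    progress = True
    while progress:
        progress = False
        # Lexicographic order on timestamps is consistent with vector time
        for msg in sorted(pending, key=lambda m: m[1]):
            i, vt_m = msg
            if can_deliver(vt_pj, vt_m, i):
                vt_pj[:] = [max(x, y) for x, y in zip(vt_pj, vt_m)]  # step 3 (IR2)
                pending.remove(msg)
                delivered.append(msg)
                progress = True
                break
    return delivered


# Hypothetical run at P2 (index 2): P1's broadcast m2 [1, 1, 0] arrives before
# P0's broadcast m1 [1, 0, 0], which P1 had delivered before sending m2.
vt_p2 = [0, 0, 0]
pending = [(1, [1, 1, 0])]
print(deliver_ready(vt_p2, pending))  # []: m2 delayed, condition 2.2 fails for k = 0
pending.append((0, [1, 0, 0]))
print(deliver_ready(vt_p2, pending))  # [(0, [1, 0, 0]), (1, [1, 1, 0])]
```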

S-E-S (SCHIPER-EGGLI-SANDOZ) PROTOCOL EXAMPLE
[Figure: space-time diagram; processes P1 (events e11 through e15), P2 (events e21 through e24) and P3 (events e31 through e34); messages m1 and m2; axes Space and Global Time]
At e31: V_P3 = {( _ ), (0,0,1)}; V_M = {V_P3, t_m1: (0,0,1)}
At e21: V_P2 = {( _ ), (0,1,0)}; V_M = {V_P2, t_m1: (0,1,0)}
At e11: V_P1 = {( _ ), (1,0,0)} on receipt. The message is delivered and t_P1 is updated to (1,1,0), giving V_P1 = {( _, (0,1,0)), (1,0,0)}; continue.

GLOBAL STATES AND CONSISTENT CUTS
[Figure: space-time diagram; P1 with events e11 through e15, P2 with events e21 through e25, P3 with events e31 through e34; messages m1 and m2; axes Space and Global Time]
Consider the local state of each process to be defined at the respective events. Does GS = {e14, e23, e33} define a consistent global state? Is the cut C = {e14, e23, e33} a consistent cut?
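Because the messages in the figure are not recoverable from the text, this sketch does not answer the questions above; it shows the test one would apply. A cut is consistent if every message whose receipt lies inside the cut was also sent inside it. The encoding (each process's part of the cut as a count of its leading events, messages as 1-based event indices) and the example message are hypothetical.

```python
def is_consistent(cut, messages):
    """cut: process -> number of leading events of that process inside the cut.
    messages: (sender, send_idx, receiver, recv_idx), event indices 1-based."""
    for sender, s_idx, receiver, r_idx in messages:
        received_inside = r_idx <= cut[receiver]
        sent_inside = s_idx <= cut[sender]
        if received_inside and not sent_inside:
            return False  # receipt recorded without its send: inconsistent
    return True


# Hypothetical: the 2nd event of P1 sends a message received at the 3rd event of P2
msgs = [("P1", 2, "P2", 3)]
print(is_consistent({"P1": 1, "P2": 3}, msgs))  # False
print(is_consistent({"P1": 2, "P2": 3}, msgs))  # True
```

A message sent inside the cut but received outside it (in transit) does not make the cut inconsistent; it simply belongs to the channel state.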

ALGORITHM SPECIFICATION: Lamport’s Algorithm
R_i = request set = {S_1, ..., S_N}
1. Requesting the CS
   1.1 S_i sends REQUEST(ts_i, i) to all sites in R_i and adds (ts_i, i) to request_queue(i).
   1.2 On receiving REQUEST(ts_i, i), S_j returns a timestamped REPLY to S_i and adds (ts_i, i) to request_queue(j), for all j ≠ i.
2. Executing the CS: S_i enters the CS when both 2.1 and 2.2 hold:
   2.1 S_i has received a message with timestamp larger than (ts_i, i) from all other sites S_j.
   2.2 (ts_i, i) is first in request_queue(i).
3. Releasing the CS
   3.1 S_i sends RELEASE(ts_i, i) to all sites in R_i.
   3.2 On receiving RELEASE(ts_i, i), S_j removes (ts_i, i) from request_queue(j), for all j ≠ i.
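The entry test in step 2 reduces to two comparisons on (timestamp, site) pairs, which Python compares lexicographically: timestamp first, with the site id as tiebreak. In this sketch the function name and the dictionary `latest_from`, holding the stamp of the most recent message S_i has received from each other site, are hypothetical bookkeeping.

```python
def can_enter_cs(i, my_request, request_queue, latest_from):
    """Entry test at site S_i for its request my_request = (ts_i, i)."""
    # 2.1: a message stamped later than (ts_i, i) has arrived from every other site
    heard_from_all = all(
        stamp > my_request for site, stamp in latest_from.items() if site != i
    )
    # 2.2: (ts_i, i) is at the head of request_queue(i), ordered by (ts, site)
    at_head = min(request_queue) == my_request
    return heard_from_all and at_head


# Hypothetical state: sites 1 and 2 both requested at timestamp 3,
# and both hold the same two entries in their request queues.
queue = [(3, 1), (3, 2)]
print(can_enter_cs(1, (3, 1), queue, {0: (4, 0), 2: (5, 2)}))  # True
print(can_enter_cs(2, (3, 2), queue, {0: (4, 0), 1: (4, 1)}))  # False: (3, 1) is ahead
```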

ALGORITHM SPECIFICATION: Maekawa’s Algorithm for Mutual Exclusion
1. Requesting the Critical Section (CS)
   1.1 S_i sends REQUEST(i) messages to all members of R_i.
   1.2 A site S_k receiving a REQUEST(i) message sends REPLY(k) to S_i, provided the last message it received was a RELEASE. Otherwise, it places REQUEST(i) in message_queue(k).
2. Executing the Critical Section
   2.1 S_i accesses the CS only after receiving REPLY messages from all members of R_i.
3. Releasing the Critical Section
   3.1 After completing execution in the CS, S_i sends RELEASE(i) messages to all members of R_i.
   3.2 When S_k in R_i receives RELEASE(i), if message_queue(k) ≠ ∅, S_k sends a REPLY message to the first site in message_queue(k) and deletes that entry.
   3.3 If message_queue(k) = ∅, S_k updates its state to record that no REPLY was sent. (This means that no site in the R_i to which S_k belongs has issued a REQUEST.)
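The per-site bookkeeping in steps 1.2, 3.2 and 3.3 can be sketched in Python as a small state machine for a site S_k. The class and method names are hypothetical, and the queue is FIFO to match "the first site in message_queue(k)". Like the basic algorithm specified above, this version does not handle the deadlock that can arise when requests interleave across overlapping request sets.

```python
from collections import deque


class MaekawaArbiter:
    """State kept by a site S_k that belongs to one or more request sets."""

    def __init__(self):
        self.granted_to = None  # site currently holding S_k's REPLY, if any
        self.message_queue = deque()

    def on_request(self, i):
        """Step 1.2: return the site to send REPLY(k) to, or None if queued."""
        if self.granted_to is None:
            self.granted_to = i
            return i
        self.message_queue.append(i)
        return None

    def on_release(self, i):
        """Steps 3.2/3.3: pass the REPLY to the first queued site, else record none sent."""
        if i != self.granted_to:
            raise ValueError("RELEASE from a site that does not hold the REPLY")
        if self.message_queue:
            self.granted_to = self.message_queue.popleft()
            return self.granted_to
        self.granted_to = None
        return None


arbiter = MaekawaArbiter()
print(arbiter.on_request(1))  # 1: REPLY sent to S_1
print(arbiter.on_request(2))  # None: REQUEST(2) queued
print(arbiter.on_release(1))  # 2: REPLY passed to S_2
print(arbiter.on_release(2))  # None: queue empty, no REPLY outstanding
```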

DEADLOCK DETECTION IN DISTRIBUTED SYSTEMS: ALGORITHMS
• Distributed classes:
  • Path-pushing: wait-for information is disseminated as paths
  • Edge-chasing: probes are circulated along the edges of the wait-for graph (WFG)
  • Diffusion computation: echoes are sent by blocked processes
  • Global state detection: a consistent snapshot reveals stable properties
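Edge-chasing can be illustrated with a single-process Python simulation in the style of the Chandy-Misra-Haas probe computation: a probe (initiator, sender, receiver) travels along wait-for edges, and the initiator declares deadlock if a probe comes back to it. Message passing is omitted, and the function name and graph encoding are hypothetical.

```python
def edge_chasing_detects(wfg, initiator):
    """wfg maps each blocked process to the processes it waits for.
    Returns True if a probe started by `initiator` returns to it."""
    probes = [(initiator, initiator, k) for k in wfg.get(initiator, ())]
    forwarded = set()
    while probes:
        init, _sender, receiver = probes.pop()
        if receiver == init:
            return True  # probe returned: the initiator is on a cycle
        if receiver in forwarded:
            continue  # each process forwards a given initiator's probe once
        forwarded.add(receiver)
        # Only blocked processes (those with outgoing edges) forward the probe
        probes.extend((init, receiver, k) for k in wfg.get(receiver, ()))
    return False


cycle = {"P1": ["P2"], "P2": ["P3"], "P3": ["P1"]}
chain = {"P1": ["P2"], "P2": ["P3"]}
print(edge_chasing_detects(cycle, "P1"))  # True
print(edge_chasing_detects(chain, "P1"))  # False: P3 is not blocked
```

A process that waits on a cycle without being on it (for example, P1 waiting on the cycle P2 → P3 → P2) never gets its probe back, so in this sketch detection must be initiated by a process on the cycle.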

ISSUES IN DISTRIBUTED DEADLOCK DETECTION
• Correctness of algorithms
  • Formal proofs are lacking; intuition is dangerous.
  • Difficulties with formal proof techniques:
    • Multiple forms of TWFG
    • Sensitivity to request timing
    • No global memory, and message latencies
• Algorithm performance
  • Measures are inadequate (the number of messages is deceiving: the number needed to detect “no deadlock”? message size?).
  • Alternative measures and analyses:
    • Deadlock persistence time (average time)
    • Overhead: storage and processing time in both search and resolution

ISSUES IN DISTRIBUTED DEADLOCK DETECTION (Continued)
• Deadlock resolution
  • Often overlooked, although it is a requirement that exacts a cost.
  • Deadlock persistence affects active processes (adds to resource scarcity) and blocked processes (extends response time).
  • Information for effective resolution is not provided by the detection algorithm.
• False deadlocks
  • The search for cycles is done independently, and shared edges are not recognized.
  • While deadlock detection is aided by persistence (a static condition), deadlock resolution causes changes in the WFG (dynamic).

ALGORITHM OF DOLEV et al. (Agreement Protocol)
1. [Initiation] HIGH := 2m + 1, LOW := m + 1, and k := 1. The source broadcasts a value, say “*”.
2. [Update] Set k := k + 1. P_j broadcasts the names of new processors for which it is a direct or indirect supporter. If the initiation condition was true in the prior round and P_j has not yet done so, it broadcasts “*”. Repeat this step for all j = 1, 2, ..., n.
3. [Commit] If the process counter for P_j > HIGH, then the process commits to the value 1.
4. [Termination] If k < 2m + 3, return to Step 2; else, if 1 is committed, the processors agree on 1; otherwise they agree on 0.
NOTES: Initiation condition: a processor initiates when (1) for k = 2, if “*” was received when k = 1, or (2) for k > 2, if |C_j| > LOW + max(0, ⌊k/2⌋ − 2) (source excluded).

DISTRIBUTED FILE SYSTEMS
• Important goals
  • Network transparency: users’ perception of access to file resources is unaffected by the physical distribution and location of those resources.
  • High availability: high reliability of the file access system should be a priority, and scheduled downtime should be constrained.
=> Virtual Uniprocessor Concept

DISTRIBUTED FILE SYSTEMS: TOPICS
• Architecture
• Client/Server
• Services
• Name resolution
• Caching
• Foundational Mechanisms
• Design Issues
• Case Studies
• Log-Structured File Systems

TEMPORAL REFERENCE LOCALITY
[Figure: probability that a joint reference is NOT local (vertical axis, 0 to 1.0) versus proportion of file space stored locally (horizontal axis, 0 to 1.0)]

DISTRIBUTED SHARED MEMORY: Memory Access Schematic
[Figure: two sites, each with a CPU, main memory, and backing store; a private cache; numbered access paths]
1 = Intra-site backing store to main memory
2 = Main memory access
3 = Inter-site access

TYPES OF CONSISTENCY
• Sequential Consistency: the result of any execution of the operations of all processors is identical to some sequential execution in which the operations of each processor appear in the order specified by its program.
• General Consistency: all copies of a memory location eventually contain the same data after all writes issued by every processor have completed.
• Processor Consistency: write operations issued by a processor are observed in the same order in which they were issued. However, the ordering might not be identical when observed from different processors.
• Weak Consistency: synchronization accesses are sequentially consistent. All synchronization accesses must be performed before a regular data access, and vice versa (programmer-imposed consistency).
• Release Consistency: same as weak consistency, except that synchronization accesses need only be processor consistent with respect to each other.

CLASSES OF LOAD DISTRIBUTING ALGORITHMS

| Class | Definition | Effect and Overhead | Relative Performance |
|---|---|---|---|
| Static | “Hard coded” decision logic based on historical data | Little overhead; stable | Cheap; stable under conforming load |
| Dynamic | Responds to state information to adjust load distribution | Higher overhead to collect information | Handles non-conforming load patterns better |
| Adaptive | Adjusts decision parameters, e.g. thresholds | Can adjust if needed, but generally high | Responds better to extreme conditions |

COMPONENTS OF LOAD DISTRIBUTING ALGORITHMS
• Transfer Policy
  • Thresholds are defined for each site.
  • Based on its threshold, a site is a sender or a receiver.
  • Alternatively, the decision is based on the imbalance among nodes.
• Selection Policy
  • Designates the task to be transferred (the overhead incurred in transferring the task must be less than the reduction in response time achieved by the transfer).
  • In general, tasks should incur minimal transfer overhead.
  • Location-dependent system calls should be minimal (location-dependent means that such operations must be performed at the originating node).

COMPONENTS OF LOAD DISTRIBUTING ALGORITHMS (Continued)
• Location Policy
  • Finding and maintaining suitable sites for sending and receiving
  • Techniques include polling and broadcast queries
• Information Policy
  • What data should be collected, from whom, and when
  • An information policy follows one of three types:
    • Demand-driven: a node collects state information about other nodes only on becoming a sender or receiver. Demand-driven policies are sender-initiated, receiver-initiated, or symmetrically initiated.
    • Periodic: load information is exchanged at defined intervals.
    • State-change-driven: nodes disseminate information when their state changes.
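As an illustration of how a transfer policy and a location policy fit together, here is a Python sketch of a sender-initiated scheme with a single queue-length threshold and a polling limit. The threshold rule, the polling limit, and the fixed polling order (used here instead of a random one, for repeatability) are assumptions, and all names are hypothetical.

```python
def choose_receiver(queue_lengths, sender, threshold, poll_limit, poll_order):
    """Return the site that should receive one task from `sender`, or None.
    Transfer policy (assumed): a site is a sender if its queue exceeds `threshold`.
    Location policy (assumed): poll up to `poll_limit` sites in `poll_order` and
    pick the first whose queue would stay within the threshold after the transfer."""
    if queue_lengths[sender] <= threshold:
        return None  # not a sender: the task stays local
    for site in poll_order[:poll_limit]:
        if site != sender and queue_lengths[site] + 1 <= threshold:
            return site
    return None  # polling failed: the task runs locally


loads = {"A": 5, "B": 3, "C": 1}
print(choose_receiver(loads, "A", threshold=2, poll_limit=2, poll_order=["B", "C"]))  # C
print(choose_receiver(loads, "A", threshold=2, poll_limit=1, poll_order=["B", "C"]))  # None
print(choose_receiver(loads, "C", threshold=2, poll_limit=2, poll_order=["A", "B"]))  # None
```

The polling limit bounds the overhead of the location policy: once it is reached, the sender gives up and runs the task locally rather than polling every site.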