Great Food, Lousy Service

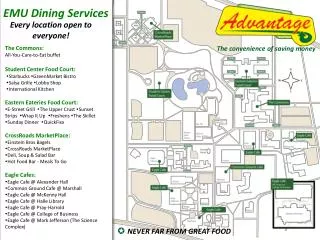

This study explores sentiment analysis of short, sparse restaurant reviews, where a single remark may praise the food and criticize the service. Using an SVM classifier trained on 10,000 reviews, we assess features such as stemming, negation handling, net sentiment counts, and topic modeling. Accuracy improved from a 50% baseline to 58.6% through iterative feature and classifier enhancements, with the frequency-weighted entropy model performing best.

Presentation Transcript

Great Food, Lousy Service: Topic Modeling for Sentiment Analysis in Sparse Reviews
Robin Melnick (rmelnick@stanford.edu), Dan Preston (dpreston@stanford.edu)

Short [figure: review length in words and in characters]

Sparse
“An unexpected combination of Left-Bank Paris and Lower Manhattan in Omaha. Divine. Inspirational and a great value.”
• Food?
• Ambiance?
• Service?
• Noise?

SVM + Features, Features, Features!
• 30+ preprocessing and SVM classification features
• ~50 configurations

Key Features
• Stemming
  • Porter 1980 via NLTK
  • <fast>, <faster>, <fastest> → <fast>
• Negation processing
  • (enhanced approach from Pang et al. 2002)
  • “Not a great experience.” → NOT_great
  • “They never disappoint!” → NOT_disappoint
• Net sentiment count
  • pos/neg lexicon (Harvard General Inquirer)
  • running +/- count
  • “Incredible(+) food, but our server was rude(-).” → (0)
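To make the negation and net-sentiment features concrete, here is a minimal Python sketch. It is not the authors' implementation: it uses the plain Pang et al. (2002) scheme of tagging every word between a negator and the next punctuation mark, since the slides do not describe their "enhanced" variant. The tiny word lists stand in for the Harvard General Inquirer lexicon, and flipping polarity for negated words is an assumption. All names are hypothetical.

```python
import re

# Hypothetical mini-lexicon standing in for the Harvard General Inquirer lists.
POSITIVE = {"incredible", "great", "divine", "fantastic"}
NEGATIVE = {"rude", "lousy", "worst", "mediocre"}
NEGATORS = {"not", "no", "never"}
# Assumption: negation scope ends at the next punctuation mark.
CLAUSE_END = re.compile(r"[.,;:!?]")


def tokenize(text):
    """Lowercase the text and split it into words and punctuation marks."""
    return re.findall(r"[a-z']+|[.,;:!?]", text.lower())


def mark_negation(tokens):
    """Prefix NOT_ to every word between a negator and the next punctuation."""
    out, negating = [], False
    for tok in tokens:
        if CLAUSE_END.fullmatch(tok):
            negating = False
            out.append(tok)
        elif tok in NEGATORS or tok.endswith("n't"):
            negating = True  # the negator itself is dropped
        elif negating:
            out.append("NOT_" + tok)
        else:
            out.append(tok)
    return out


def net_sentiment(tokens):
    """Running +/- count over lexicon hits; negated words flip polarity (assumption)."""
    score = 0
    for tok in tokens:
        negated = tok.startswith("NOT_")
        word = tok[4:] if negated else tok
        polarity = (word in POSITIVE) - (word in NEGATIVE)
        score += -polarity if negated else polarity
    return score


tagged = mark_negation(tokenize("Not a great experience."))
mixed = net_sentiment(tokenize("Incredible food, but our server was rude."))
```

Here `tagged` contains `NOT_great` (along with the other tagged words in the clause), and `mixed` is 0 because the one positive and one negative hit cancel, matching the slide's example.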

Results (so far)
• Trained on 10,000 reviews
• Tested on ~80,000 reviews
• Accuracy
  • Baseline: 50.0%
  • Intermediate model: 56.6% (1.13x)
• abs( average scoring delta ): 0.56

Topic Modeling
Hand-seeded topic-word list expanded via WordNet synsets
• sub-topic classifiers
• topic-filtered n-grams
  • <soup_FOOD was fantastic_ADJ>
  • <fantastic_ADJ soup_FOOD was>
• topic-word proximity filtering
• both above: <fantastic_ADJ/FOOD>
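One possible reading of topic-word proximity filtering, sketched in Python under stated assumptions: each sentiment adjective is paired with the nearest topic word within a small token window, producing features shaped like the adjective/FOOD example on this slide. The topic lists, the adjective set (a stand-in for a part-of-speech tagger), the window size, and the function names are all hypothetical; the slides build their topic lists from hand seeds expanded through WordNet, which is not reproduced here.

```python
import re

# Hypothetical hand-seeded topic lists; the slides expand theirs via WordNet.
TOPIC_WORDS = {
    "FOOD": {"soup", "food", "steak", "dessert"},
    "SERVICE": {"server", "waiter", "service", "staff"},
}
# Hypothetical stand-in for a part-of-speech tagger's adjective output.
ADJECTIVES = {"fantastic", "rude", "great", "lousy"}


def topic_of(word):
    """Return the topic label for a word, or None if it is not a topic word."""
    for label, words in TOPIC_WORDS.items():
        if word in words:
            return label
    return None


def proximity_features(text, window=3):
    """Pair each adjective with the nearest topic word within `window` tokens."""
    tokens = re.findall(r"[a-z']+", text.lower())
    features = []
    for i, tok in enumerate(tokens):
        if tok not in ADJECTIVES:
            continue
        best = None  # (distance, topic label) of the closest topic word so far
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            label = topic_of(tokens[j])
            if label is not None and (best is None or abs(i - j) < best[0]):
                best = (abs(i - j), label)
        if best is not None:
            features.append(f"{tok}/{best[1]}")
    return features


features = proximity_features("The soup was fantastic, but our server was rude.")
```

For this sentence the sketch yields `fantastic/FOOD` and `rude/SERVICE`, so a classifier can see which aspect of the meal each sentiment word refers to. An adjective with no topic word inside the window produces no feature.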

Word-Rating Distributions [figure panels: “decent”, “worst”, “mediocre”, “solid”, “exceeded”]

Frequency-Weighted Entropy Model
• Accuracy
  • Baseline: 50.0%
  • Intermediate model: 56.6%
  • Best (entropy) model: 58.6% (1.17x)
• abs( average scoring delta ): 0.56 → 0.52
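The slides do not define the frequency-weighted entropy model, so the following Python sketch is only one plausible interpretation. It assumes each word gets a distribution over star ratings (as in the word-rating distributions), that words whose distribution has low Shannon entropy are more informative, and that the score is the word's review frequency times one minus its normalized entropy. The weighting formula, the 5-point rating scale, and the function names are assumptions, not the authors' method.

```python
import math
from collections import Counter, defaultdict


def rating_entropy(counts):
    """Shannon entropy (bits) of a word's distribution over star ratings."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values() if c)


def word_weights(reviews, num_ratings=5):
    """Score each word by review frequency times (1 - normalized rating entropy)."""
    by_word = defaultdict(Counter)
    for text, rating in reviews:
        # Count each word once per review so long reviews do not dominate.
        for word in set(text.lower().split()):
            by_word[word][rating] += 1
    max_entropy = math.log2(num_ratings)  # entropy of a uniform rating spread
    return {
        word: sum(counts.values()) * (1 - rating_entropy(counts) / max_entropy)
        for word, counts in by_word.items()
    }


# Toy data for illustration only; not drawn from the study's corpus.
reviews = [
    ("worst meal", 1), ("worst service", 1),
    ("decent meal", 3), ("decent food", 4),
    ("decent place", 2), ("decent soup", 5),
]
weights = word_weights(reviews)
```

In this toy data "worst" appears only in 1-star reviews, so its entropy is zero and it keeps its full frequency as weight, while "decent" is spread across four ratings and is weighted down despite being more frequent.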