Exploring Voice Technologies: VoiceXML and Speech Applications

This document outlines advances in voice technologies, focusing on VoiceXML, automatic speech recognition (ASR), and text-to-speech (TTS). It discusses the underlying technologies, the architectural elements of VoiceXML, and its applications in voice browsing and interactive voice response (IVR) systems. The presentation covers the fundamentals of speech recognition and synthesis, and places them in the context of the evolution of personal computing and the shift toward web-centric models in pervasive computing. By integrating these technologies into virtual environments, we aim to make user interaction with digital systems more natural.

Speech Technologies and VoiceXML
Department of Computer Science, National Cheng-Chi University

References
• [1] Bob Edgar (2001). The VoiceXML Handbook. New York: CMP Books.
• [2] Dave Raggett (2001). “Getting Started with VoiceXML 2.0”. W3C.
• [3] Sun Microsystems (1998). “Java Speech Grammar Format Specification v1.0”. Sun Microsystems.
• [4] Chetan Sharma and Jeff Kunins (2002). VoiceXML: Strategies and Techniques for Effective Voice Application Development with VoiceXML 2.0. Wiley.
• [5] Brian Eberman, Jerry Carter, Darren Meyer, and David Goddeau (2002). “Building VoiceXML Browsers with OpenVXI”. New York: ACM Press.
• [6] Microsoft (2002). “Speech Technology Overview”. http://www.microsoft.com/speech/evaluation/techover/
• [7] VoiceGenie Technologies Inc. (2001). “White Paper: Speaking Freely About the VoiceGenie VoiceXML Gateway and the VoiceXML Interpreter”. VoiceGenie Technologies Inc.
• [8] W3C (2002). “VoiceXML Specification v2.0”. W3C.
• [9] Chun-Feng Liao (2002). “Basics of Speech Recognition”. NCCU Computer Center.

Presentation Agenda
• Voice technology background
• ASR/TTS
• Voice browsing with VoiceXML
• VoiceXML architecture
• Implementations of VoiceXML platforms
• VoiceXML document structure
• Bringing voice technologies into virtual environments

Voice Technologies
• In the mid- to late 1990s, personal computers became powerful enough to support automatic speech recognition (ASR).
• The two key technologies behind these advances are speech recognition (SR) and text-to-speech synthesis (TTS).

Classification of Voice Applications
• Basic interactive voice response (IVR)
    • Computer: “For stock quotes, press 1. For trading, press 2. …”
    • Human: (presses DTMF “1”)
• Basic speech ASR
    • C: “Say the stock name for a price quote.”
    • H: “Lucent Technologies”

Classification of Voice Applications (cont.)
• Advanced speech ASR
    • C: “Stock Services, how may I help you?”
    • H: “Uh, what’s Lucent trading at?”
• “Near-natural language” ASR
    • C: “How may I help you?”
    • H: “Um, yeah, I’d like to get the current price of Lucent Technologies.”
    • C: “Lucent is up two at sixty eight and a half.”
    • H: “OK. I want to buy one hundred shares at market price.”
    • C: “…”
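In the basic IVR class, the caller answers by pressing a key such as “1”. On a phone line each key is sent as a DTMF tone: the sum of one low-group (row) frequency and one high-group (column) frequency from the standard keypad table. The Python sketch below synthesizes a key’s tone and recovers the key with the Goertzel algorithm, a common way to measure signal energy at a handful of known frequencies. It assumes an 8 kHz sampling rate and a clean 50 ms tone with no noise, twist, or timing checks, so it illustrates the idea rather than how any particular gateway detects DTMF.

```python
import math

# Standard DTMF keypad: each key = one row (low) tone + one column (high) tone, in Hz.
ROW_FREQS = (697, 770, 852, 941)
COL_FREQS = (1209, 1336, 1477, 1633)
KEYPAD = ("123A", "456B", "789C", "*0#D")

SAMPLE_RATE = 8000  # assumed telephone-band sampling rate, in Hz


def dtmf_tone(key: str, duration: float = 0.05, rate: int = SAMPLE_RATE) -> list[float]:
    """Synthesize the two-tone DTMF signal for one keypad key."""
    for r, row in enumerate(KEYPAD):
        if len(key) == 1 and key in row:
            low, high = ROW_FREQS[r], COL_FREQS[row.index(key)]
            break
    else:
        raise ValueError(f"not a DTMF key: {key!r}")
    n = round(duration * rate)
    return [0.5 * math.sin(2 * math.pi * low * t / rate)
            + 0.5 * math.sin(2 * math.pi * high * t / rate)
            for t in range(n)]


def goertzel_power(samples: list[float], freq: float, rate: int = SAMPLE_RATE) -> float:
    """Squared magnitude of the signal's spectrum at `freq` (Goertzel algorithm)."""
    coeff = 2 * math.cos(2 * math.pi * freq / rate)
    s1 = s2 = 0.0  # s1 holds s[n-1], s2 holds s[n-2]
    for x in samples:
        s1, s2 = x + coeff * s1 - s2, s1
    return s1 * s1 + s2 * s2 - coeff * s1 * s2


def detect_key(samples: list[float], rate: int = SAMPLE_RATE) -> str:
    """Return the key whose row tone and column tone carry the most energy."""
    row = max(range(4), key=lambda i: goertzel_power(samples, ROW_FREQS[i], rate))
    col = max(range(4), key=lambda i: goertzel_power(samples, COL_FREQS[i], rate))
    return KEYPAD[row][col]


print(detect_key(dtmf_tone("1")))  # prints 1
```

Goertzel is used here instead of a full FFT because an IVR only needs the energy at eight fixed frequencies, which this filter computes with one multiply-add per sample per frequency.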

Speech Recognition
• Capturing the analog speech signal.
• Digitizing the sound waves and converting them into basic language units, or phonemes.
• Constructing words from phonemes and analyzing the words in context to choose the correct spelling for words that sound alike (such as write and right).

Speech Recognition Process Flow
[Figure: speech recognition process flow. Source: Microsoft Speech.NET Home (http://www.microsoft.com/speech/)]

Speech Recognition Process Flow
• Step 1: User input
    • The system captures the user’s voice as an analog acoustic signal.
• Step 2: Digitization
    • The analog acoustic signal is digitized.
• Step 3: Phonetic breakdown
    • The digitized signal is broken down into phonemes.
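Step 2 can be illustrated with a small Python sketch: an analog signal, modeled here as a function of time, is sampled at a fixed rate and each sample is rounded to a signed 16-bit integer (uniform linear PCM quantization). The sketch also cuts the samples into short overlapping frames, which is how recognizers commonly prepare audio for the phonetic analysis of Step 3. The 8 kHz rate, 25 ms frame length, and 10 ms hop are assumed, typical values rather than figures from the source, and the function names are hypothetical.

```python
import math
from typing import Callable

SAMPLE_RATE = 8000  # assumed telephone-quality sampling rate, in Hz


def digitize(signal: Callable[[float], float], duration: float,
             rate: int = SAMPLE_RATE, bits: int = 16) -> list[int]:
    """Sample `signal` (amplitude in [-1, 1] as a function of time in seconds)
    and quantize each sample to a signed `bits`-bit integer (linear PCM)."""
    full_scale = 2 ** (bits - 1) - 1  # 32767 for 16-bit audio
    samples = []
    for i in range(round(duration * rate)):
        x = signal(i / rate)                    # sampling: read the signal at t = i / rate
        x = max(-1.0, min(1.0, x))              # clip anything beyond full scale
        samples.append(round(x * full_scale))   # quantize to the nearest integer level
    return samples


def split_frames(samples: list[int], rate: int = SAMPLE_RATE,
                 frame_ms: int = 25, hop_ms: int = 10) -> list[list[int]]:
    """Cut the PCM stream into short overlapping frames (assumed 25 ms / 10 ms)."""
    size = rate * frame_ms // 1000
    hop = rate * hop_ms // 1000
    return [samples[s:s + size] for s in range(0, len(samples) - size + 1, hop)]


# A 440 Hz tone standing in for the caller's voice, captured for 0.1 s.
pcm = digitize(lambda t: 0.5 * math.sin(2 * math.pi * 440 * t), duration=0.1)
frames = split_frames(pcm)
print(len(pcm), len(frames), len(frames[0]))  # prints 800 8 200
```

Frames overlap so that a phoneme falling on a frame boundary is still seen whole by at least one frame.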

Speech Recognition Process Flow (cont.)
• Step 4: Statistical modeling
    • Phonemes are mapped to their phonetic representation using a statistical model (e.g., a hidden Markov model, HMM).
• Step 5: Matching
    • Using the grammar, the phonetic representation, and the dictionary, the system returns an n-best list (i.e., candidate words, each with a confidence score).
• Grammar: the set of words or phrases that constrains the range of input or output in a voice application.
• Dictionary: the table that maps phonetic representations to words (e.g., thu, thee → the).
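Step 5 can be sketched in Python as a toy n-best matcher. The hypothetical dictionary maps each word to a phoneme sequence (simplified ARPAbet-style symbols chosen for illustration), the hypothetical grammar lists the only phrases the application accepts, and every grammar phrase is scored against the phonemes that were heard. A real recognizer derives its scores from statistical models such as HMMs; here `difflib.SequenceMatcher.ratio()` is swapped in as a crude similarity that plays the role of the confidence score, so the numbers only show the shape of an n-best list.

```python
from difflib import SequenceMatcher

# Hypothetical pronunciation dictionary: word -> phonemes (simplified, ARPAbet-style).
DICTIONARY = {
    "lucent": ["L", "UW", "S", "AH", "N", "T"],
    "technologies": ["T", "EH", "K", "N", "AA", "L", "AH", "JH", "IY", "Z"],
    "intel": ["IH", "N", "T", "EH", "L"],
    "oracle": ["AO", "R", "AH", "K", "AH", "L"],
}

# Hypothetical grammar: the only phrases this application will accept.
GRAMMAR = ["lucent technologies", "lucent", "intel", "oracle"]


def pronounce(phrase: str, dictionary: dict[str, list[str]]) -> list[str]:
    """Look up each word of a grammar phrase and concatenate its phonemes."""
    return [p for word in phrase.split() for p in dictionary[word]]


def n_best(heard: list[str], grammar: list[str],
           dictionary: dict[str, list[str]], n: int = 3) -> list[tuple[str, float]]:
    """Score every grammar phrase against the heard phonemes; return the top n.

    SequenceMatcher.ratio() is a stand-in for an acoustic-model score:
    1.0 means identical phoneme sequences, 0.0 means nothing in common.
    """
    scored = [(phrase, SequenceMatcher(None, heard, pronounce(phrase, dictionary)).ratio())
              for phrase in grammar]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:n]


# The recognizer "heard" Lucent Technologies with one vowel slightly off (AO for AA).
heard = ["L", "UW", "S", "AH", "N", "T",
         "T", "EH", "K", "N", "AO", "L", "AH", "JH", "IY", "Z"]
for phrase, confidence in n_best(heard, GRAMMAR, DICTIONARY):
    print(f"{phrase}: {confidence:.2f}")
```

Because only grammar phrases are scored, an utterance outside the grammar can never be returned; the application can then reject the top result if its confidence falls below a threshold of its choosing.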

Speech Synthesis
• Speech synthesis, or text-to-speech (TTS), is the process of converting text into spoken language. It involves:
    • Breaking the words down into phonemes;
    • Analyzing the text for items that need special handling, such as numbers and currency amounts;
    • Generating the digital audio for playback.
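The special-handling step can be sketched in Python as text normalization: digits and dollar amounts are rewritten as words so that later stages only see pronounceable text. The helper names and the supported forms (whole numbers below one million, and amounts written as $D or $D.CC) are assumptions for this illustration; production TTS engines handle many more cases, such as dates, decimals, and digit grouping with commas.

```python
import re

# Illustrative normalizer (hypothetical helper names); covers only whole numbers
# below 1,000,000 and dollar amounts written as $D or $D.CC.
ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
        "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
        "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]


def number_to_words(n: int) -> str:
    """Spell out a whole number from 0 to 999,999 in English words."""
    if n < 0 or n >= 1_000_000:
        raise ValueError(f"out of supported range: {n}")
    if n < 20:
        return ONES[n]
    if n < 100:
        tens, rest = divmod(n, 10)
        return TENS[tens] if rest == 0 else f"{TENS[tens]} {ONES[rest]}"
    if n < 1000:
        hundreds, rest = divmod(n, 100)
        head = f"{ONES[hundreds]} hundred"
    else:
        thousands, rest = divmod(n, 1000)
        head = f"{number_to_words(thousands)} thousand"
    return head if rest == 0 else f"{head} {number_to_words(rest)}"


def _money(match: re.Match) -> str:
    """Rewrite a matched amount such as $68.50 as dollars and cents."""
    dollars = int(match.group(1))
    words = f"{number_to_words(dollars)} dollar{'' if dollars == 1 else 's'}"
    cents = int(match.group(2) or 0)
    if cents:
        words += f" and {number_to_words(cents)} cent{'' if cents == 1 else 's'}"
    return words


def normalize(text: str) -> str:
    """Expand currency amounts first, then any remaining runs of digits."""
    text = re.sub(r"\$(\d+)(?:\.(\d{2}))?", _money, text)
    return re.sub(r"\d+", lambda m: number_to_words(int(m.group())), text)


print(normalize("Lucent is up 2 at $68.50"))
```

Currency is expanded before plain numbers on purpose: otherwise “68” and “50” would be spelled out separately and the “$” and “.” would be left behind.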

Speech Synthesis
[Figure: speech synthesis. Source: Microsoft Speech.NET Home (http://www.microsoft.com/speech/)]

Pervasive Computing Model
• E-business has moved from a client-server model to a web-centric model.
• Once connected to the Internet, people can get any information they want, but they want more convenient ways to connect.
• Lou Gerstner, CEO of IBM: the pervasive computing model is a billion people interacting with a million e-businesses through a trillion interconnected devices.

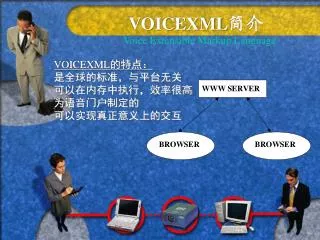

Voice Browsing
• VoiceXML instead of HTML
• A voice browser instead of an ordinary web browser
• A phone instead of a PC

VoiceXML Overview
• A language for specifying voice dialogs.
• Voice dialogs use audio prompts and text-to-speech (TTS) for output, and touch-tone keys (DTMF) and automatic speech recognition (ASR) for input.
• The main input/output device (initially) is the phone.
• Leverages the Internet for application development and delivery.
• A standard language enables portability (it unifies dialog control languages).
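To make the overview concrete, the Python sketch below builds a minimal VoiceXML 2.0 menu with the standard library’s `xml.etree.ElementTree`. The `<field>` holds a TTS `<prompt>`, and each `<option>` accepts either its DTMF key or the spoken phrase, mirroring the stock-service menu from the classification slides. The function name `build_menu` and the menu contents are hypothetical; a hand-written .vxml file or a server-side template would serve equally well.

```python
import xml.etree.ElementTree as ET

VXML_NS = "http://www.w3.org/2001/vxml"


def build_menu(options: dict[str, tuple[str, str]]) -> str:
    """Build a one-field VoiceXML 2.0 menu (hypothetical helper).

    `options` maps a DTMF key to (value, spoken phrase); each <option> lets the
    caller either press the key or say the phrase.
    """
    vxml = ET.Element("vxml", version="2.0", xmlns=VXML_NS)
    form = ET.SubElement(vxml, "form", id="main")
    field = ET.SubElement(form, "field", name="service")

    # Output: a TTS prompt listing the choices.
    prompt = ET.SubElement(field, "prompt")
    prompt.text = " ".join(f"For {phrase}, press {key} or say {phrase}."
                           for key, (_, phrase) in options.items())

    # Input: each option accepts both its DTMF key and its spoken phrase.
    for key, (value, phrase) in options.items():
        ET.SubElement(field, "option", dtmf=key, value=value).text = phrase

    # Once the field is filled, echo the choice back to the caller.
    confirm = ET.SubElement(ET.SubElement(field, "filled"), "prompt")
    confirm.text = "You chose "
    ET.SubElement(confirm, "value", expr="service").tail = "."

    return ET.tostring(vxml, encoding="unicode")


print(build_menu({"1": ("quotes", "stock quotes"), "2": ("trading", "trading")}))
```

Generating the markup with an XML library rather than string concatenation guarantees well-formed output, which a voice browser requires before it will run the dialog.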

History of VoiceXML
[Figure: history of VoiceXML. Source: VoiceXML Forum (http://www.voicexml.org)]

Making Use of Mature Internet Technologies
• Leverage existing web application development tools.
• Leverage existing web infrastructure for application delivery.
• Clean separation of service logic from user interaction.

VoiceXML Platform Architecture (1)
• Telephone and telephone network: connects the caller’s telephone to the telephony server.
• VoiceXML gateway
    • Voice browser
    • Audio input: speech recognition (ASR), touch-tone (DTMF), audio recording
    • Audio output: audio playback, speech synthesis (TTS)
    • Interface and call control

VoiceXML Platform Architecture (2)
• VoiceXML documents
    • Dialog and flow control
    • Client-side scripting (ECMAScript)
    • Speech recognition grammars
    • Speech synthesis pronunciation control
• Document servers (web servers)
    • Serve static VoiceXML documents and audio files.
• Application servers
    • Generate VoiceXML documents dynamically.
    • Run server-side application logic.
    • Connect to databases or database interfaces.
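The application-server role can be sketched as a small Python WSGI application that turns a query such as `?symbol=LU` into a freshly generated VoiceXML page. The `PRICES` dictionary is a hypothetical stand-in for the database, and its quote values are invented for illustration. The response uses `application/voicexml+xml`, the media type registered for VoiceXML; the voice browser in the gateway would fetch this URL like any other document and read the prompt aloud with TTS.

```python
from urllib.parse import parse_qs
from xml.sax.saxutils import escape

# Hypothetical stand-in for the database behind the application server (invented prices).
PRICES = {"LU": 68.50, "IBM": 120.25}


def render_quote(symbol: str, prices: dict[str, float]) -> str:
    """Generate a VoiceXML page that speaks one stock quote."""
    price = prices.get(symbol.upper())
    if price is None:
        text = f"Sorry, I have no quote for {symbol}."
    else:
        text = f"{symbol.upper()} is trading at {price:.2f} dollars."
    return ('<?xml version="1.0" encoding="UTF-8"?>\n'
            '<vxml version="2.0" xmlns="http://www.w3.org/2001/vxml">\n'
            f'  <form><block><prompt>{escape(text)}</prompt></block></form>\n'
            '</vxml>\n')


def app(environ, start_response):
    """WSGI application: GET /?symbol=LU returns a generated VoiceXML page."""
    symbol = parse_qs(environ.get("QUERY_STRING", "")).get("symbol", [""])[0]
    body = render_quote(symbol, PRICES).encode("utf-8")
    start_response("200 OK", [("Content-Type", "application/voicexml+xml"),
                              ("Content-Length", str(len(body)))])
    return [body]
```

For a quick local test, `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` from the standard library serves the page. Escaping the caller-supplied symbol keeps the generated document well-formed even when the input contains characters such as `<` or `&`.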

Implementations of VoiceXML Gateways
• In Taiwan:
    • Yes Mobile
    • Chunghwa Telecom Laboratories
    • eWings Technologies, Inc.
• Free:
    • IBM VoiceServerSDK
• Open source:
    • CMU: OpenVXI

Related Research
• Raymond L. Smith III and Stephen D. Roberts:
    • Used voice input commands to operate simulation animation.
    • Took the efficiency issues of ASR/TTS into account.
• Satoru, Osamu, Katunobu, Takashi, Tomoyoshi, Hideki, Shotaro, Takio, and Katsuhiko:
    • Created a 3D virtual user who can talk with the user through a speaker and microphone.
    • The virtual user can learn words and recognize human faces.

We Can Do More
• Speak to many users who are “moving” in a virtual environment.
• Build the system in a distributed environment (e.g., the web).
• Make use of XML technologies (VoiceXML/SALT).

Problems to Solve
• Voice/animation synchronization
• Protocol integration
• ASR/TTS integration and its performance issues
• Virtual user autonomy
• The “voice propagation range” issue

Summary
• Speech is the most natural way for humans to communicate, so it will become an important modality in human-computer interaction (HCI).
• VoiceXML has revolutionized the development and deployment of speech recognition and telephony applications.
• Adding speech facilities to 3D virtual environments will make user interfaces friendlier and enable multimodal input/output.
• My research on this topic will focus on voice-animation synchronization and on enabling SR/TTS in distributed 3D virtual environments.