Introduction to Markov Chain Monte Carlo: Techniques and Applications in Text Mining

This lecture covers advanced concepts in Markov Chain Monte Carlo (MCMC) methods, including Metropolis-Hastings and Gibbs sampling, as applied to text mining and Bayesian network modeling. Participants will learn about joint distributions, posterior inference, and the computational challenges associated with sampling from complex distributions. Topics also include the nuances of proposal distributions, convergence of algorithms, and practical issues in implementing MCMC techniques. The session is aimed at deepening understanding of MCMC for effective data analysis in text mining.


Presentation Transcript

This work is licensed under a Creative Commons Attribution-Share Alike 3.0 Unported License. CS 679: Text Mining Lecture #9: Introduction to Markov Chain Monte Carlo, part 3 Slide Credit: Teg Grenager of Stanford University, with adaptations and improvements by Eric Ringger.

Announcements • Assignment #4 • Prepare and give lecture on your 2 favorite papers • Starting in approx. 1.5 weeks • Assignment #5 • Pre-proposal • Answer the 5 Questions!

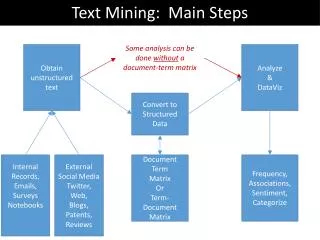

Where are we? • Joint distributions are useful for answering questions (joint or conditional) • Directed graphical models represent joint distributions • Hierarchical Bayesian models: make parameters explicit • We want to ask conditional questions on our models • E.g., posterior distributions over latent variables given large collections of data • Simultaneously inferring values of model parameters and answering the conditional questions: “posterior inference” • Posterior inference is a challenging computational problem • Sampling is an efficient mechanism for performing that inference. • Variety of MCMC methods: random walk on a carefully constructed Markov chain • Convergence to desirable stationary distribution

Agenda • Motivation • The Monte Carlo Principle • Markov Chain Monte Carlo • Metropolis Hastings • Gibbs Sampling • Advanced Topics

Metropolis-Hastings • The symmetry requirement of the Metropolis proposal distribution can be hard to satisfy • Metropolis-Hastings is the natural generalization of the Metropolis algorithm, and the most popular MCMC algorithm • Choose a proposal distribution which is not necessarily symmetric • Define a Markov chain with the following process: • Sample a candidate point $x^*$ from a proposal distribution $q(x^* \mid x^{(t)})$ • Compute the importance ratio: $r = \frac{p(x^*)\, q(x^{(t)} \mid x^*)}{p(x^{(t)})\, q(x^* \mid x^{(t)})}$ • With probability $\min(r, 1)$ transition to $x^*$, otherwise stay in the same state $x^{(t)}$
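The process above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the target (an unnormalized Gamma(3, 1) density) and the asymmetric log-normal random-walk proposal are hypothetical choices, not taken from the lecture, and the names `log_target` and `metropolis_hastings` are made up for this example.

```python
import math
import random


def log_target(x):
    """Log of an unnormalized Gamma(shape=3, rate=1) density: x^2 * exp(-x), x > 0.

    Hypothetical target chosen for illustration only.
    """
    return 2.0 * math.log(x) - x


def metropolis_hastings(n_samples, x0=1.0, sigma=1.0, seed=0):
    """Run Metropolis-Hastings with an asymmetric log-normal random-walk proposal."""
    rng = random.Random(seed)
    x = x0
    samples = []
    for _ in range(n_samples):
        # Propose x* = x * exp(sigma * z), z ~ N(0, 1); x* is always positive.
        x_star = x * math.exp(sigma * rng.gauss(0.0, 1.0))
        # log r = log p(x*) + log q(x | x*) - log p(x) - log q(x* | x).
        # For this proposal, q(x | x*) / q(x* | x) = x* / x.
        log_r = (log_target(x_star) + math.log(x_star)) - (log_target(x) + math.log(x))
        # Accept with probability min(r, 1); otherwise stay in the same state.
        if rng.random() < math.exp(min(0.0, log_r)):
            x = x_star
        samples.append(x)
    return samples
```

Because the proposal is not symmetric, the ratio of proposal densities does not cancel and must be included in `log_r`. After discarding an initial burn-in, the sample mean should be close to the Gamma(3, 1) mean of 3.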

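MH convergence • Theorem: The Metropolis-Hastings algorithm converges to the target distribution $p(x)$. • Proof: • Let $T(x^* \mid x)$ be the transition probability of the chain • For all $x, x^*$, WLOG assume $p(x)\, q(x^* \mid x) \ge p(x^*)\, q(x \mid x^*)$, so the move $x \to x^*$ is accepted with probability $r \le 1$ • $p(x)\, T(x^* \mid x) = p(x)\, q(x^* \mid x)\, \frac{p(x^*)\, q(x \mid x^*)}{p(x)\, q(x^* \mid x)}$ (transition prob.) $= p(x^*)\, q(x \mid x^*)$ (cancel and commute) $= p(x^*)\, q(x \mid x^*) \cdot 1$ (multiply by 1) $= p(x^*)\, T(x \mid x^*)$ (for the reverse move the candidate is always accepted, i.e., its acceptance probability is 1, b/c of the WLOG assumption) • Thus, it satisfies detailed balance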
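Detailed balance can also be checked numerically on a small discrete state space. The sketch below builds the Metropolis-Hastings transition matrix for a hypothetical three-state target `p` and a hypothetical asymmetric proposal matrix `q` (both invented for this example), so that one can verify $p_i T_{ij} = p_j T_{ji}$ directly.

```python
def mh_transition_matrix(p, q):
    """Build the MH transition matrix T for a discrete target p and proposal q.

    p[i] is the target probability of state i; q[i][j] is the probability of
    proposing state j from state i.
    """
    n = len(p)
    T = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j and q[i][j] > 0.0:
                r = (p[j] * q[j][i]) / (p[i] * q[i][j])  # importance ratio
                T[i][j] = q[i][j] * min(1.0, r)          # propose, then accept
        # Rejected proposals (and self-proposals) keep the chain in state i.
        T[i][i] = 1.0 - sum(T[i])
    return T


# Hypothetical target and asymmetric proposal (each row of q sums to 1).
p = [0.2, 0.3, 0.5]
q = [
    [0.10, 0.60, 0.30],
    [0.50, 0.20, 0.30],
    [0.25, 0.25, 0.50],
]
T = mh_transition_matrix(p, q)
```

Summing $p_i T_{ij}$ over $i$ then recovers $p_j$, which is the statement that $p$ is a stationary distribution of the chain.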

Gibbs sampling • A special case of Metropolis-Hastings which is applicable to state spaces in which • we have a factored state space $x = (x_1, \ldots, x_n)$, and • access to the full (“complete”) conditionals: $p(x_j \mid x_{-j}) = p(x_j \mid x_1, \ldots, x_{j-1}, x_{j+1}, \ldots, x_n)$ • Perfect for Bayesian networks! • Idea: To transition from one state (variable assignment) to another, • Pick a variable $x_j$, • Sample its value from the conditional distribution $p(x_j \mid x_{-j})$ • That’s it! • We’ll show in a minute why this is an instance of MH and thus must be sampling from the joint or conditional distribution we wanted.

Markov blanket • Recall that Bayesian networks encode a factored representation of the joint distribution • Variables are independent of their non-descendants given their parents • Variables are independent of everything else in the network given their Markov blanket! • So, to sample each node, we only need to condition on its Markov blanket: $p(x_j \mid x_{-j}) = p(x_j \mid \mathrm{MB}(x_j))$, where $x_{-j}$ denotes all variables other than $x_j$ and $\mathrm{MB}(x_j)$ is the Markov blanket of $x_j$

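Gibbs sampling • More formally, the proposal distribution is $q(x^* \mid x^{(t)}) = p(x_j^* \mid x_{-j}^{(t)})$ if $x_{-j}^* = x_{-j}^{(t)}$, and $0$ otherwise, where $x_{-j}$ denotes all variables other than $x_j$ • The importance ratio is $r = \frac{p(x^*)\, q(x^{(t)} \mid x^*)}{p(x^{(t)})\, q(x^* \mid x^{(t)})}$ $= \frac{p(x^*)\, p(x_j^{(t)} \mid x_{-j}^{(t)})}{p(x^{(t)})\, p(x_j^* \mid x_{-j}^*)}$ (Dfn of proposal distribution and $x_{-j}^* = x_{-j}^{(t)}$) $= \frac{p(x_j^* \mid x_{-j}^*)\, p(x_{-j}^*)\, p(x_j^{(t)} \mid x_{-j}^{(t)})}{p(x_j^{(t)} \mid x_{-j}^{(t)})\, p(x_{-j}^{(t)})\, p(x_j^* \mid x_{-j}^*)}$ (Dfn of conditional probability (twice)) $= \frac{p(x_{-j}^*)}{p(x_{-j}^{(t)})}$ (Cancel common terms) $= 1$ (Definition of $x_{-j}^*$) • So we always accept!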
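The claim that a Gibbs proposal is always accepted can be checked numerically. The sketch below uses a hypothetical joint distribution over two binary variables (the numbers in `JOINT` are invented for illustration) and computes the Metropolis-Hastings importance ratio for every possible single-variable resampling move.

```python
# Hypothetical joint distribution over two binary variables (A, B).
JOINT = {
    (True, True): 0.27,
    (True, False): 0.03,
    (False, True): 0.14,
    (False, False): 0.56,
}


def complete_conditional(joint, state, j):
    """p(state_j | state_{-j}), computed by normalizing over coordinate j."""
    rest = state[:j] + state[j + 1:]
    denom = sum(prob for s, prob in joint.items() if s[:j] + s[j + 1:] == rest)
    return joint[state] / denom


def gibbs_importance_ratio(joint, x, j, new_value):
    """MH ratio r when the proposal resamples coordinate j of x to new_value."""
    x_star = x[:j] + (new_value,) + x[j + 1:]
    q_forward = complete_conditional(joint, x_star, j)  # q(x* | x)
    q_reverse = complete_conditional(joint, x, j)       # q(x | x*)
    return (joint[x_star] * q_reverse) / (joint[x] * q_forward)
```

For every state, coordinate, and proposed value, `gibbs_importance_ratio` evaluates to 1 up to floating-point rounding, so the acceptance probability $\min(r, 1)$ is 1.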

Gibbs sampling example • Consider a simple, 2-variable Bayes net • Initialize randomly • Sample variables alternately (Figure: Bayes net over variables A and B, with a table of successive T/F samples.)
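A minimal sketch of this example in Python follows. The network structure (A is the parent of B) and all probabilities are assumptions made for illustration, since the slide's actual tables are not recoverable from the transcript; `gibbs_two_node` and `p_a_given_b` are hypothetical names.

```python
import random

# Hypothetical parameters for a two-node Bayes net A -> B.
P_A = 0.3                               # P(A = True)
P_B_GIVEN_A = {True: 0.9, False: 0.2}   # P(B = True | A)


def p_a_given_b(b):
    """Complete conditional P(A = True | B = b), via Bayes' rule."""
    like_true = P_B_GIVEN_A[True] if b else 1.0 - P_B_GIVEN_A[True]
    like_false = P_B_GIVEN_A[False] if b else 1.0 - P_B_GIVEN_A[False]
    numerator = P_A * like_true
    return numerator / (numerator + (1.0 - P_A) * like_false)


def gibbs_two_node(n_sweeps, seed=0):
    """Alternately resample A given B and B given A; return the (A, B) samples."""
    rng = random.Random(seed)
    # Initialize randomly.
    a = rng.random() < 0.5
    b = rng.random() < 0.5
    samples = []
    for _ in range(n_sweeps):
        a = rng.random() < p_a_given_b(b)   # sample A from P(A | B)
        b = rng.random() < P_B_GIVEN_A[a]   # sample B from P(B | A)
        samples.append((a, b))
    return samples
```

With these assumed parameters the exact values are P(A = True) = 0.3 and P(A = True, B = True) = 0.27, so the empirical frequencies from a long run can be compared against them.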

Gibbs Sampling (in 2-D) (MacKay, 2002)
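To make the 2-D picture concrete, here is a small sketch that assumes the target is a zero-mean, unit-variance bivariate Gaussian with correlation `rho`. That target is an assumption for illustration; the transcript does not preserve the figure. For this target each complete conditional is a one-dimensional Gaussian, $x_1 \mid x_2 \sim \mathcal{N}(\rho x_2,\, 1 - \rho^2)$, and symmetrically for $x_2$.

```python
import math
import random


def gibbs_bivariate_gaussian(n_sweeps, rho=0.8, seed=0):
    """Gibbs sampling for a standard bivariate Gaussian with correlation rho."""
    rng = random.Random(seed)
    cond_sd = math.sqrt(1.0 - rho * rho)  # std. dev. of each complete conditional
    x1, x2 = 0.0, 0.0
    samples = []
    for _ in range(n_sweeps):
        # Each update moves parallel to one axis, holding the other coordinate fixed.
        x1 = rng.gauss(rho * x2, cond_sd)
        x2 = rng.gauss(rho * x1, cond_sd)
        samples.append((x1, x2))
    return samples
```

The axis-aligned moves are why the sampler explores slowly when `rho` is close to 1: the conditional standard deviation shrinks, so each step is small relative to the long axis of the distribution.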

Practical issues • How many iterations? • How to know when to stop? • M-H: What’s a good proposal function? • Gibbs: How to derive the complete conditionals?

Advanced Topics • Simulated annealing, for global optimization, is a form of MCMC • Mixtures of MCMC transition functions • Monte Carlo EM • stochastic E-step • i.e., sample instead of computing full posterior • Reversible jump MCMC for model selection • Adaptive proposal distributions

Next • Document Clustering by Gibbs Sampling on a Mixture of Multinomials • From Dan Walker’s dissertation!