Coverage Rates and Coverage Bias When Interviewers Create Frames

This study investigates how interviewers contribute to errors in area-probability surveys, focusing on coverage rates and biases that arise during housing unit listings and household rostering. The research involves two primary listing methods—traditional and dependent—and evaluates factors like confirmation bias, incentives for interviewers, and bias/variance relationships. Additionally, it presents pretest findings from Southeast Michigan and offers insights into undercoverage and overcoverage rates based on varying listing methodologies. The aim is to improve accuracy in survey estimates through understanding and addressing interview-related biases.

Coverage Rates and Coverage Bias When Interviewers Create Frames

E N D

Presentation Transcript

Coverage Rates and Coverage BiasWhen Interviewers Create Frames Stephanie Eckman Joint Program in Survey Methodology University of Maryland June 15, 2009 @ NCHS

Interviewers as Source of Error • Interviewers contribute to nonresponse, measurement error • Create frames in area-probability surveys • Housing unit listing • Missed housing unit procedure • Household rostering • Screening for eligibility 2

Literature Review • Previous work concentrates on: • How many people are missed? • What kinds of people are missed? • Errors by respondents • Definitional • Motivated 3

Research Questions • Compare listing methods • Scratch: start with blank frame • Update: start with address list • Mechanisms of lister error • How are errors made? • Incentives of interviewers • Bias & variance 4

Hypotheses on Mechanisms • What makes listing easier or more comfortable for the lister? • Race or language match between residents and lister • High crime areas • Driving 5

Hypotheses on Mechanisms • Confirmation bias in dependent listing • Failure to add • HUs missing from list • Failure to delete • Inappropriate units on list 6

Hypotheses on Mechanisms • Undercoverage and nonresponse • Anecdotal evidence • Hainer 1987 (CPS) • Difficult to test 7

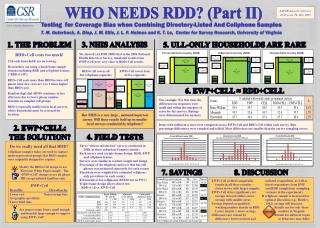

Pretest of Methods • Pretest with masters students • 14 segments in SE Michigan • 2 listings: traditional, dependent • Overall disagreement: 12% • Evidence of confirmation bias • FTA: 13% less likely to add • FTD: 11% less likely to delete 8

Census Dataset: Lister Agreement • 2 identical listings • 211 blocks • Dependent on MAF, where available • Overall: 79% agreement 9

Census Dataset: Drawbacks • Lister characteristics not available • No manipulation to test for confirmation bias 11

NSFG Data Collection • National Survey of Family Growth • Three listings of 49 segments • 1st listing by project • 2nd listing: traditional • 3rd listing: dependent • Manipulate input: add & delete lines 12

Paper 2: Mechanisms of Lister Error • Undercoverage and overcoverage rates • Overall • By housing unit and block characteristics • By listing method • Test hypotheses 13

Paper 3: Coverage Bias • Does lister error lead to bias in survey estimates? • 2 sources of data on undercovered • NSFG response data (27% selected) • Experian data (60% match) 14

Paper 3: Estimating Bias • Direct estimates • Re-estimate key variables without cases undercovered by 1 or 2 listers • Indirect estimates • Correlation between listing propensity & key variables

Questions and Concerns • Analysis of repeated listings • Latent class analysis? • Interviewer debriefing • What do I want to know? • NR and coverage • Available datasets? • How to test for this?

Thank You • Stay tuned for results next year • seckman@survey.umd.edu 17

Pretest: FTA Conf Bias • D listers missed 11 suppressed lines • T listers missed only 4 • Difference-in-differences estimate • Suppression leads to 13% decrease in inclusion 18

Pretest: FTD Conf Bias • D listers confirmed 4 added lines • All in multi-unit buildings • T listers included only 1 (??) • Difference-in-differences estimate • Bad lines in D lead to 11% increase in inclusion 19

Difference-in-Differences Estimate • (0.17-0.89) – (0.04-0.88) = -0.13 rounding 20