Machine Learning and the Big Data Challenge

Explore the advancements and challenges in machine learning, big data, and computing technologies. Learn about algorithms, trends, data volume, and modeling tools shaping the future of AI.

Machine Learning and the Big Data Challenge Max Welling UC Irvine

AI’s Promise 60 years ago • Robots that behave and think like humans • Marvin Minsky: Computer Vision will be easy, chess will be hard

What we got • Deep Blue beat Kasparov in the game of chess […] • Watson won Jeopardy

What is hard for AI? • Computer Vision and Scene Understanding […]

Another Example: Machine Translation • Tremendous progress has been made • Main reason: more and better data (e.g. documents from EU)

Language Processing • Google’s spelling and query correction • Main reason for progress: Google’s massive datasets

Computation: Moore’s Law • Computational power is doubling every two years (approximately).

Trends in Computing: Cloud Computing • Computing will become similar to electricity: take it as you need it. • We need global wifi coverage to make this work well.

Trends in Computing: Distributed Computing (e.g. GPUs) • Cheap and massively parallel computing (up to 300 processing units) • First developed for the gaming community • Now adopted by machine learning for very fast learning (3rd “ReNNaissance”)

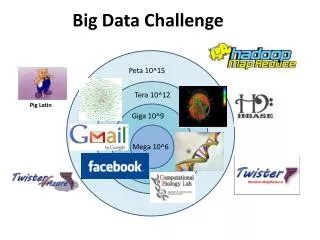

Big Data That’s 38 images every second if you live for 100 years (that’s more visual data than anyone will actually see …) • Current data volume ~ 2 Zettabytes (2 trillion GB), and doubling every 1.5 years. • Data volume has its own Moore’s law!
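The doubling claim above can be turned into a small projection. This is only a sketch: the function name `projected_volume` is hypothetical, and it assumes the doubling time quoted on the slide stays constant, which real growth need not do.

```python
def projected_volume(initial, doubling_time_years, years):
    """Exponential growth: the quantity doubles every `doubling_time_years`."""
    return initial * 2 ** (years / doubling_time_years)


# Figures from the slide: about 2 zettabytes today, doubling every 1.5 years.
for years in (0, 3, 6, 15):
    volume = projected_volume(2.0, 1.5, years)
    print(f"after {years:2d} years: about {volume:,.0f} ZB")
```

The same function applies to the Moore's law slide by setting the doubling time to 2 years.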

Sensors Everywhere • Internet. • There are around 1.85 million surveillance cameras in the UK alone. • That is 1 camera for every 32 people! • On a typical day every person will be recorded on around 70 CCTV cameras. • There are about 5.6 billion cellphone users worldwide.

Machine Learning • Algorithms that learn to make predictions from examples (data)

Generalization • Consider the following regression problem: • Predict the real value on the y-axis from the real value on the x-axis. • You are given 6 examples: {Xi,Yi}. • What is the y-value for a new query point X*?

Generalization • Which curve is best?

Generalization • Ockham’s razor: prefer the simplest hypothesis consistent with data.

Generalization Learning is concerned with accurate prediction of future data, not accurate prediction of training data.
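The point about training versus future data can be illustrated with a small sketch. Everything here is an assumption made for illustration: the six training points are made-up values scattered around the line y = 2x + 1, the held-out points lie on that line, and the helper names are hypothetical. A straight line is compared with a degree-5 polynomial that passes exactly through all six training points.

```python
def fit_line(xs, ys):
    """Least-squares straight line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def fit_interpolant(xs, ys):
    """Lagrange polynomial that passes through every training point."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict


def mse(model, xs, ys):
    """Mean squared prediction error of `model` on the given points."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data: noisy samples of y = 2x + 1 (training), exact values (test).
train_x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
train_y = [1.3, 2.6, 5.4, 6.7, 9.5, 10.8]
test_x = [6.0, 7.0]
test_y = [13.0, 15.0]

line = fit_line(train_x, train_y)
wiggly = fit_interpolant(train_x, train_y)

for name, model in (("line", line), ("degree-5 polynomial", wiggly)):
    print(f"{name}: train MSE = {mse(model, train_x, train_y):.3f}, "
          f"test MSE = {mse(model, test_x, test_y):.3f}")
```

On this toy data the polynomial has zero training error but predicts the held-out points far worse than the line, which is the behaviour Ockham's razor warns about.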

Learning as Compression • Imagine a game where Bob needs to send a dataset to Alice. • They are allowed to meet once before they see the data. • They agree on a precision level (quantization level). • Bob learns a model (red line). • Bob sends the model parameters (offset and slant) only once. • For every datapoint, Bob sends: - the distance along the line (large number) - the orthogonal distance from the line (small number) • Small numbers are cheaper to encode than large numbers.
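The game can be sketched with a toy cost model. The assumptions are loud ones: the cost function `bits` (one sign bit plus the magnitude bits) is a hypothetical stand-in for a real code, the points and the line y = 2x + 1 are made up, and for simplicity Bob sends the x-coordinate and the vertical residual rather than the distance along the line and the orthogonal distance used on the slide.

```python
def bits(n):
    """Hypothetical cost of an integer: one sign bit plus its magnitude bits."""
    return 1 + abs(n).bit_length()


# Made-up quantized data lying close to the line y = 2x + 1.
points = [(10, 21), (20, 42), (30, 60), (40, 82), (50, 101)]
slope, offset = 2, 1

# Without a model, Bob sends both coordinates of every point.
raw_cost = sum(bits(x) + bits(y) for x, y in points)

# With a model, Bob sends the parameters once, then x and a small residual.
model_cost = bits(slope) + bits(offset) + sum(
    bits(x) + bits(y - (slope * x + offset)) for x, y in points
)

print(f"raw encoding:   {raw_cost} bits")
print(f"model encoding: {model_cost} bits")
```

The saving comes entirely from the residuals being small numbers, so a model that fits the data poorly would save little or nothing.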

Generalization • learning = compression = abstraction • The man who couldn’t forget …

Types of Learning • Supervised Learning • Labels are provided, there is a strong learning signal. • e.g. classification, regression. • Semi-supervised Learning. • Only part of the data have labels. • e.g. a child growing up. • Reinforcement learning. • The learning signal is a (scalar) reward and may come with a delay. • e.g. trying to learn to play chess, a mouse in a maze. • Unsupervised learning • There is no direct learning signal. We are simply trying to find structure in data. • e.g. clustering, dimensionality reduction.

Classification: nearest neighbor • Example: Imagine you want to classify monkeys versus humans. • Data: 100 monkey images and 200 human images, with labels saying which is which. • Task: Here is a new image: monkey or human?

1 nearest neighbor • Idea: • Find the picture in the database which is closest to your query image. • Check its label. • Declare the class of your query image to be the same as that of the closest picture.
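The three steps above can be sketched in a few lines. This is a minimal illustration, assuming each image has already been reduced to a numeric feature vector and that Euclidean distance is a sensible notion of "closest"; the function name and the tiny 2-D database are hypothetical.

```python
import math


def nearest_neighbor_label(query, examples):
    """Return the label of the stored example closest to `query`.

    `examples` is a list of (feature_vector, label) pairs.
    """
    _, closest_label = min(examples, key=lambda ex: math.dist(query, ex[0]))
    return closest_label


# Hypothetical 2-D feature vectors standing in for labeled images.
database = [
    ((1.0, 1.2), "monkey"),
    ((0.8, 0.9), "monkey"),
    ((3.0, 3.1), "human"),
    ((3.2, 2.8), "human"),
]

print(nearest_neighbor_label((2.9, 3.0), database))
```

Extending this to k nearest neighbors would mean taking the k closest examples and returning the majority label among them.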

kNN Decision Surface (figure label: decision curve)

Unsupervised Learning: Dimensionality Reduction (LLE – Roweis & Saul)

Collaborative Filtering (Netflix Dataset) • Movies: about 17,770 • Users: about 480,000 • A total of about 100,000,000 nonzero entries (roughly 99% sparse) • Task: predict the missing ratings (the “?” entries) from the observed ones (e.g. 1, 4)

Bayes Rule(s) Riddle: Joe goes to the doctor and tells the doctor he has a stiff neck and a rash. The doctor is worried about meningitis and performs a test that is 80% correct, that is, for 80% of the people that have meningitis it will turn out positive. If 1 in 100,000 people have meningitis in the population and 1 in 1000 people will test positive (sick or not sick) what is the probability that Joe has meningitis? Answer: Bayes Rule. P(meningitis | positive test) = P(positive test | meningitis ) P(meningitis) / P(positive test) = 0.8 * 0.00001 / 0.001 = 0.008 < 1%
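The arithmetic in the riddle can be checked directly. The numbers are the ones given above; the function and variable names are just illustrative.

```python
def posterior(likelihood, prior, evidence):
    """Bayes' rule: P(H | E) = P(E | H) * P(H) / P(E)."""
    return likelihood * prior / evidence


p_pos_given_men = 0.8      # P(positive test | meningitis)
p_men = 1 / 100_000        # P(meningitis) in the population
p_pos = 1 / 1_000          # P(positive test), sick or not

p_men_given_pos = posterior(p_pos_given_men, p_men, p_pos)
print(f"P(meningitis | positive test) = {p_men_given_pos:.4f}")
```

The result stays below 1% because the disease is a hundred times rarer than a positive test, so most positives come from people who are not sick.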

Bayesian Networks & Graphical Models • Main modeling tool for modern machine learning • Reasoning over large collections of random variables with intricate relations (figure nodes: meningitis, test result, stiff neck, rash)

Nonparametric Bayes • Assumption: Real world data is infinitely complex. • Consequence: as the dataset grows, so should the model complexity. • Nonparametric Bayesian models do exactly that. (figure: hierarchical clustering of 10,000 birds)

Trends in ML: Human Computation (Luis Von Ahn) • Old paradigm: Computers assist humans • New paradigm: Humans assist Computers to learn • (The raising of the machines)

HC I: Useful Games • “LabelMe” to segment & label images • ESP game to label images

HC II: Crowd-sourced Marketplaces • Split a problem into many small and simple problems and sell them on a crowd-sourced marketplace such as Amazon’s “Mechanical Turk”.

HC III: Online Competitions • Netflix organized an online competition to improve their movie recommender system. • Prize money: 1 million dollars if a 10% improvement was achieved. • It lasted 3 years; at least 20,000 teams from 150 countries registered. • Kaggle has turned this into a business and hosts numerous competitions. • Latest: Heritage Health Prize at $3M!

What won the Netflix Prize? • Ensemble learning: learn many models (e.g. 200) and average their predictions. • Algorithmic equivalent of “Wisdom of the Crowds”

Wisdom of the Crowds • Estimate the weight of the Space Shuttle (in tons) • Take mean or median of answers. • Does surprisingly well. • Time for experiment? • Mechanism: canceling of independent errors Answer: 2030 tons
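The error-canceling mechanism can be simulated. This is a sketch under a strong assumption, namely that each guess is the true value plus independent, unbiased Gaussian noise; the spread of 500 tons and the crowd size of 200 are arbitrary choices, and real crowds may share biases that do not cancel.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

true_weight = 2030  # tons, the answer given on the slide

# Hypothetical crowd: independent guesses scattered around the truth.
guesses = [true_weight + random.gauss(0, 500) for _ in range(200)]

crowd_error = abs(statistics.mean(guesses) - true_weight)
typical_individual_error = statistics.mean(
    [abs(g - true_weight) for g in guesses]
)

print(f"error of the crowd mean:     {crowd_error:.1f} tons")
print(f"average individual error:    {typical_individual_error:.1f} tons")
print(f"crowd median:                {statistics.median(guesses):.1f} tons")
```

The error of the mean can never exceed the average individual error, and with independent errors it is usually much smaller. Averaging the predictions of many models in an ensemble relies on the same effect.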

Prediction Markets • “Idea Futures” • Use the magnitude of the bet to express confidence.

AI Assisted Learning • Stanford is offering 15 courses online to +/- 100,000 students. • These involve homework, exams and a “certificate of achievement”. • Flipping the classroom: watch the lecture video at home, do homework in class. • AI can find the right set of exercises/hints or cyber-partner for each individual student and track progress.

Outlook (past vision) (now: Google Glass) (a virtual, connected world) • Volume/diversity of data and computing power are growing exponentially. • Proliferation of sensors, internet and “human computing” allows for AI systems that are very different from human intelligence. • Future AIs will: • Sense your location, intention, mood, needs. • Anticipate your next action (order a nonfat cappuccino from Starbucks at 9am). • Monitor your health. • Monitor the environment.