Chapter 7 Memory Systems

Memory Hierarchy
Levels, in order of increasing distance from the CPU:
• CPU registers
• Cache memory (MC)
• Main memory (MM)
• Secondary memory, disk (MD)
• Secondary memory, archival (MA)
Memory content, nearest level first: MC, MM, MD, MA
Memory parameters:
• Access time: increases with distance from the CPU
• Cost/bit: decreases with distance from the CPU
• Capacity: increases with distance from the CPU
ELEC 5200/6200, from Patterson/Hennessy slides

Exploiting Memory Hierarchy
• Users want large and fast memories! (2004 figures)
  • SRAM access times are 0.5–5 ns, at a cost of $4,000 to $10,000 per GB.
  • DRAM access times are 50–70 ns, at a cost of $100 to $200 per GB.
  • Disk access times are 5 to 20 million ns, at a cost of $0.50 to $2 per GB.
• Try to give it to them anyway: build a memory hierarchy

Locality of Reference
• A principle that makes having a memory hierarchy a good idea
• If an item is referenced:
  • temporal locality: it will tend to be referenced again soon
  • spatial locality: nearby items will tend to be referenced soon
• Why does code have locality?
• Our initial focus: two levels (upper, lower)
  • block: minimum unit of data
  • hit: data requested is in the upper level
  • miss: data requested is not in the upper level

Memory Performance
• Access time (latency): from initiation of a memory read to valid data returned to the CPU
  • Cache: TA typically = CPU cycle time
  • Main: TA typically = multiple CPU cycles
  • Disk: TA typically several orders of magnitude greater than CPU cycle time (software controlled)
• Bandwidth (throughput) = number of bytes transferred per second
  BW = (bytes/transfer) x (transfers/second)
  Ex. synchronous dynamic RAM “burst transfers”
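The bandwidth formula can be checked with a quick calculation. This is a minimal sketch; the bus width and transfer rate are illustrative assumptions, not figures from the slides.

```python
def bandwidth_bytes_per_sec(bytes_per_transfer, transfers_per_sec):
    """BW = (bytes/transfer) x (transfers/second)."""
    return bytes_per_transfer * transfers_per_sec

# Assumed example: an 8-byte-wide memory bus doing 100 million transfers/second
bw = bandwidth_bytes_per_sec(8, 100_000_000)
print(f"{bw / 1e6:.0f} MB/s")
```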

Memory Access Modes
• Random access: locations accessed in any order, with access time independent of location
  • SRAM
  • ROM/EPROM/EEPROM/Flash technologies
  • DRAM (some special modes available)
  • Cache
• Serial access: locations must be accessed in a predetermined sequence (ex. tape)
  • Disk: position read element over a track, then serial access within the track
  • CDROM: data recorded in a “spiral”

Memory Write Strategies
• Writing a new value of an item in a hierarchical memory that has copies in multiple memory levels
• “Write-through” strategy
  • Update all levels of memory on each write
  • All levels “consistent” or “coherent” at all times
• “Write-back” strategy
  • Update closest (fastest) copy during write operation
  • Copy the value to other levels at a “convenient time”
  • Memory state temporarily inconsistent
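The difference between the two strategies shows up in how often the slower level is written. The sketch below is a toy two-level model, not a real cache: the class `TwoLevelMemory` and its `flush` method are hypothetical names, and the “convenient time” is simply whenever `flush` is called.

```python
class TwoLevelMemory:
    """Toy model: a dict 'cache' in front of a dict 'main' memory."""

    def __init__(self, write_back):
        self.write_back = write_back
        self.cache = {}
        self.main = {}
        self.dirty = set()      # addresses whose main-memory copy is stale
        self.main_writes = 0    # number of writes that reached main memory

    def write(self, addr, value):
        self.cache[addr] = value              # always update the closest copy
        if self.write_back:
            self.dirty.add(addr)              # main memory is now inconsistent
        else:
            self._write_main(addr, value)     # write-through: update every level

    def flush(self):
        """The 'convenient time': copy modified values to main memory."""
        for addr in sorted(self.dirty):
            self._write_main(addr, self.cache[addr])
        self.dirty.clear()

    def _write_main(self, addr, value):
        self.main[addr] = value
        self.main_writes += 1

wt = TwoLevelMemory(write_back=False)
wb = TwoLevelMemory(write_back=True)
for mem in (wt, wb):
    for value in range(5):
        mem.write(0x10, value)   # five writes to the same address
wb.flush()
print(wt.main_writes, wb.main_writes)
```

Both models end with the same value in main memory, but the write-back model reaches main memory once instead of five times.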

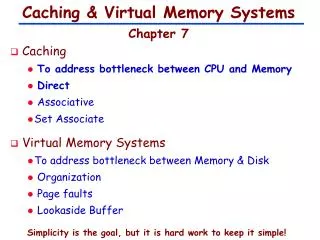

Cache
• Two issues:
  • How do we know if a data item is in the cache?
  • If it is, how do we find it?
• Three basic cache organizations:
  • Fully associative
  • Direct mapped
  • Set associative
• Assume block size is one word of data

Fully Associative Cache
• Associative memory: access by “content” rather than address
• Concurrently compare the CPU address to all cache address fields
[Figure: the address from the CPU is compared (=) in parallel with the address field of every valid cache entry; main memory holds addresses 1–10.]

Hits vs. Misses
• Read hits
  • this is what we want!
• Read misses
  • stall the CPU, fetch block from memory, deliver to cache, restart
• Write hits:
  • can replace data in cache and memory (write-through)
  • write the data only into the cache (write-back the cache later)
• Write misses:
  • read the entire block into the cache, then write the word

Fully Associative Cache
• CPU memory read from address 5
[Figure: address 5 from the CPU matches a cache entry (“hit”); the cache supplies the data to the CPU.]

Fully Associative Cache
• CPU memory read from address 8
• CPU reads main memory after the cache miss
[Figure: address 8 from the CPU matches no cache entry (“miss”, no “hit”).]

Cache Memory Structures
• Update cache with data from address 8
• Use an “open” cache line

Cache Memory Structures
• Where should the CPU place data from address 2?
  • no open cache lines
  • must replace data in some line
[Figure: address 2 from the CPU matches no cache entry (“miss”, no “hit”).]

Multi-Word Blocks
• Cache line holds a multi-word block from main memory
• Take advantage of spatial locality of reference
[Figure: main memory words 0–9 grouped into blocks 0–4; an address splits into a block # and a word offset.]

Direct Mapped Cache
• Mapping: cache line = (block address) modulo (number of blocks in the cache)
[Figure: the main memory address is split into tag and index; the index selects the cache line.]
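The modulo mapping can be sketched in a few lines. This assumes one-word blocks, as stated on the earlier slide; the function name `split_address` and the cache size of 8 lines are illustrative assumptions.

```python
def split_address(block_addr, num_lines):
    """Return (tag, index) for a direct-mapped cache with one-word blocks."""
    index = block_addr % num_lines    # which cache line the block maps to
    tag = block_addr // num_lines     # distinguishes blocks sharing that line
    return tag, index

NUM_LINES = 8  # assumed cache size
for addr in (1, 9, 17, 25):
    print(addr, split_address(addr, NUM_LINES))
```

Addresses 1, 9, 17 and 25 all land on line 1 and differ only in their tag, which is why the tag must be stored and compared on every access.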

Direct Mapped Cache
• For MIPS: What kind of locality are we taking advantage of?

Direct Mapped Cache
• Taking advantage of spatial locality:

Motorola 68020/68030 Cache

Cache Misses
• Compulsory: cache “empty” at startup
• Capacity: cache unable to hold entire working set
• Conflict: two memory locations map to same cache line
[Figure 7.31: miss-rate components, with curves for conflict (1-way), conflict (2-way) and conflict (8-way); the compulsory component is “flat” but negligible in this example.]

Decreasing miss ratio with associativity
• Problems:
  • Fully associative: high cost, but misses occur only when capacity is reached, since any item can go in any line
  • Direct mapped: less expensive, but can hold only one item with a given index, leading to conflicts
• Compromise: set associative cache
  • “N-way” set associative cache has N direct-mapped caches
  • One “set” = N lines with a particular index
  • Item with index K can be placed in line K of any of the N direct-mapped caches
  • Results in fewer misses due to conflicts
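A small simulation makes the conflict-miss argument concrete. This is a sketch under stated assumptions: one-word blocks, LRU replacement within a set (the slide does not name a policy), and a hypothetical helper `count_misses`; lines are keyed by full address rather than tag for simplicity.

```python
from collections import OrderedDict

def count_misses(addresses, num_lines, ways):
    """Count misses for a cache of num_lines one-word lines.

    ways=1 is direct mapped; ways=num_lines is fully associative.
    LRU replacement within a set is an assumption of this sketch.
    """
    num_sets = num_lines // ways
    sets = [OrderedDict() for _ in range(num_sets)]
    misses = 0
    for addr in addresses:
        lines = sets[addr % num_sets]     # the set selected by the index
        if addr in lines:
            lines.move_to_end(addr)       # hit: mark as most recently used
        else:
            misses += 1
            if len(lines) == ways:
                lines.popitem(last=False) # set full: evict least recently used
            lines[addr] = True
    return misses

trace = [0, 8, 0, 8, 0, 8]   # 0 and 8 share an index in an 8-line cache
for ways in (1, 2, 8):
    print(ways, count_misses(trace, 8, ways))
```

With this trace the direct-mapped cache misses on all six accesses, while the 2-way and fully associative caches miss only on the first two.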

Example: 4-way set-associative cache

VAX 11/780 Cache

Intel 80486/Pentium L1 Cache
• 80486: 4-way set associative (Pentium: 2-way)
• 128 lines (sets); 2K (486) / 4K (Pentium) per way
• Per set: LRU (3), valid* (4), tags (20 bit)
• Data line = 16 bytes (486), 32 bytes (Pentium)
  * Instruction cache: 1 valid bit per line
  * Data cache: 2-bit MESI state per line
• Cache sizes: 80486 – 8K unified I/D; Pentium – 8K/8K I/D; Pentium II/III – 16K/16K I/D
• Write-back/write-through programmable

MESI Cache Line State
• MESI = 2-bit cache line “state” for “write-back” cache
  • I = Invalid (line does not contain valid data)
  • S = Line valid, with shared access: no writes allowed
  • E = Line valid, with exclusive access: data may be written
  • M = Line valid and modified since read from main memory (must be rewritten to main memory)
[Figure: state diagram over I, S, E and M, with transitions labeled read, write and invalidate.]

Intel Approximated-LRU Replacement
• 3-bit number B2B1B0 assigned to each “set” of 4 lines
• Access L0/L1: set B0=1
• Access L2/L3: set B0=0
• Access L0: set B1=1; access L1: set B1=0
• Access L2: set B2=1; access L3: set B2=0
• Replacement choice:
  • B0B1 = 00: replace L0
  • B0B1 = 01: replace L1
  • B0B2 = 10: replace L2
  • B0B2 = 11: replace L3
[Figure: decision tree; B0 selects the pair (0: L0/L1 via B1, 1: L2/L3 via B2), and B1 or B2 selects the line within the pair.]
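The bit rules can be written out directly. This is a sketch of the update and replacement rules as listed on the slide, not Intel's implementation; the function names `touch` and `victim` are hypothetical, and the bits are kept as a tuple (B0, B1, B2).

```python
def touch(bits, line):
    """Return the updated (b0, b1, b2) after an access to line 0..3."""
    b0, b1, b2 = bits
    if line in (0, 1):
        b0 = 1                        # the L0/L1 pair was used most recently
        b1 = 1 if line == 0 else 0
    else:
        b0 = 0                        # the L2/L3 pair was used most recently
        b2 = 1 if line == 2 else 0
    return (b0, b1, b2)

def victim(bits):
    """Return the line to replace for the given (b0, b1, b2)."""
    b0, b1, b2 = bits
    if b0 == 0:                       # evict from the older pair, L0/L1
        return 0 if b1 == 0 else 1
    return 2 if b2 == 0 else 3        # evict from the older pair, L2/L3

bits = (0, 0, 0)
for line in (0, 1, 2, 3):
    bits = touch(bits, line)
first = victim(bits)                  # true LRU line here is L0
bits = touch(bits, 0)
second = victim(bits)                 # true LRU line here is L1
print(first, second)
```

After the accesses L0, L1, L2, L3 the scheme picks L0, matching true LRU. After one more access to L0 it picks L2 although L1 is the least recently used line, which shows why the scheme is only an approximation.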

Intel Pentium P4 vs. AMD Opteron (Figure 7.35)

Average Memory Access Time
• Assume main memory is accessed after a cache miss is detected:
  • $T_C$, $T_M$ = cache and main memory access times
  • $H_C$ = cache hit ratio
  $T_{A,avg} = T_C H_C + (1 - H_C)(T_C + T_{M,avg}) = T_C + (1 - H_C)\,T_{M,avg}$
  where $T_{M,avg}$ is the miss penalty
• Extending to a 3rd level (disk):
  $T_{A,avg} = T_C + (1 - H_C)\,T_M + (1 - H_C)(1 - H_M)\,T_{D,avg}$
  Note that $T_M \ll T_{D,avg}$
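Plugging numbers into the average access time formula shows how strongly the slowest level dominates. The access times and hit ratios below are illustrative assumptions, and `amat_look_through` is a hypothetical helper name.

```python
def amat_look_through(t_c, h_c, t_m, h_m=1.0, t_d=0.0):
    """TAavg = TC + (1-HC)*TM + (1-HC)*(1-HM)*TD, all times in ns."""
    return t_c + (1 - h_c) * t_m + (1 - h_c) * (1 - h_m) * t_d

# Assumed values: 1 ns cache, 95% cache hit ratio, 60 ns main memory
two_level = amat_look_through(1.0, 0.95, 60.0)
# Assumed disk level: 99.99% main-memory hit ratio, 10 ms disk access
three_level = amat_look_through(1.0, 0.95, 60.0, h_m=0.9999, t_d=10_000_000.0)
print(f"{two_level:.2f} ns, {three_level:.2f} ns")
```

Under these assumptions the two-level average is about 4 ns, and a disk access on only 0.01% of main-memory references raises it to about 54 ns.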

Average Memory Access Time
• Assume main memory is accessed concurrently with the cache access
  • abort main access on cache hit
  • main access already in progress on cache miss
  $T_A = T_C H_C + (1 - H_C)\,T_M$
• Called a “look-aside” cache (the previous case is “look-through”)
• Problem: main memory is busy with aborted accesses (unacceptable if memory is shared)
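The two organizations differ only in whether the cache lookup time is paid on a miss. The sketch below compares them with assumed timing values; both function names are hypothetical.

```python
def amat_look_aside(t_c, h_c, t_m):
    """TA = TC*HC + (1-HC)*TM: main access starts in parallel with the cache lookup."""
    return t_c * h_c + (1 - h_c) * t_m

def amat_look_through(t_c, h_c, t_m):
    """TA = TC + (1-HC)*TM: main access starts only after the miss is detected."""
    return t_c + (1 - h_c) * t_m

T_C, H_C, T_M = 1.0, 0.95, 60.0   # assumed: cache ns, hit ratio, main memory ns
saving = amat_look_through(T_C, H_C, T_M) - amat_look_aside(T_C, H_C, T_M)
print(f"look-aside saves {saving:.3f} ns per access")
```

The saving equals (1 - HC) x TC, so it is small when the hit ratio is high; that modest gain has to be weighed against keeping main memory busy with aborted accesses.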

Performance
• Increasing the block size tends to decrease miss rate:
• Use split caches because there is more spatial locality in code:

Performance

Performance
• Simplified model:
  execution time = (execution cycles + stall cycles) x cycle time
  stall cycles = # of instructions x miss ratio x miss penalty
• Two ways of improving performance:
  • decreasing the miss ratio
  • decreasing the miss penalty
• What happens if we increase block size?

Example: “gcc” compiler on MIPS
• Instruction count = IC
• Instruction cache miss rate = 5%
• Data cache miss rate = 10%
• Clocks/instruction (CPI) = 4 if no misses/stalls
• Miss penalty = 12 cycles (all misses)
• Instruction frequencies:
  • lw = 22% of instructions executed
  • sw = 11% of instructions executed
• Question: What is the effect of cache misses on CPU performance (e.g., on CPI)?
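One way to answer the question is to compute stall cycles per instruction and add them to the base CPI. This sketch assumes that every instruction makes one instruction-cache access and that both lw and sw access the data cache and pay the full miss penalty; the slide does not state this explicitly.

```python
BASE_CPI = 4
MISS_PENALTY = 12          # cycles, all misses
I_MISS_RATE = 0.05         # instruction cache
D_MISS_RATE = 0.10         # data cache
LW_FREQ, SW_FREQ = 0.22, 0.11

# Stall cycles per instruction (the instruction count IC cancels out)
instr_stalls = I_MISS_RATE * MISS_PENALTY
data_stalls = (LW_FREQ + SW_FREQ) * D_MISS_RATE * MISS_PENALTY

cpi_with_misses = BASE_CPI + instr_stalls + data_stalls
slowdown = cpi_with_misses / BASE_CPI
print(f"CPI = {cpi_with_misses:.3f}, slowdown = {slowdown:.3f}x")
```

Under these assumptions misses add about one cycle per instruction (0.6 from instruction fetches, about 0.4 from data accesses), so the CPI rises from 4 to roughly 5, a slowdown of about 25%.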

Decreasing miss penalty with multilevel caches
• Add a second level cache:
  • often primary cache is on the same chip as the processor
  • use SRAMs to add another cache above primary memory (DRAM)
  • miss penalty goes down if data is in 2nd level cache
• Example:
  • CPI of 1.0 on a 5 GHz machine with a 5% miss rate, 100 ns DRAM access
  • Adding a 2nd level cache with 5 ns access time decreases the miss rate to main memory to 0.5%
• Using multilevel caches:
  • try to optimize the hit time on the 1st level cache
  • try to optimize the miss rate on the 2nd level cache
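The example on this slide can be worked through numerically. The sketch assumes the miss rates are per instruction and that the 0.5% figure is the fraction of instructions that still go all the way to DRAM once the second-level cache is added.

```python
CLOCK_GHZ = 5                 # 5 GHz, so 5 cycles per ns
BASE_CPI = 1.0
L1_MISS_RATE = 0.05           # misses per instruction in the primary cache
DRAM_NS = 100
L2_NS = 5
MAIN_MISS_RATE = 0.005        # assumed: per-instruction rate of going to DRAM with L2

dram_penalty = DRAM_NS * CLOCK_GHZ    # miss penalty to main memory, in cycles
l2_penalty = L2_NS * CLOCK_GHZ        # miss penalty to the L2 cache, in cycles

cpi_l1_only = BASE_CPI + L1_MISS_RATE * dram_penalty
cpi_with_l2 = BASE_CPI + L1_MISS_RATE * l2_penalty + MAIN_MISS_RATE * dram_penalty
print(f"{cpi_l1_only:.2f} {cpi_with_l2:.2f} {cpi_l1_only / cpi_with_l2:.2f}x")
```

With these numbers the DRAM penalty is 500 cycles and the L2 penalty 25 cycles, giving a CPI of 26 without the second-level cache and 4.75 with it, a speedup of about 5.5.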

Hardware Issues
• Make reading multiple words easier by using banks of memory
• It can get a lot more complicated...

Cache Complexities
• Not always easy to understand implications of caches:
[Figures: theoretical behavior of Radix sort vs. Quicksort; observed behavior of Radix sort vs. Quicksort.]

Cache Complexities
• Here is why:
  • Memory system performance is often the critical factor
    • multilevel caches and pipelined processors make it harder to predict outcomes
  • Compiler optimizations to increase locality sometimes hurt ILP
• Difficult to predict best algorithm: need experimental data

Virtual Memory
• Main memory can act as a cache for the secondary storage (disk)
• Advantages:
  • illusion of having more physical memory
  • program relocation
  • protection

Segmentation vs. Paging
• Page: transparent to programmer
  • Virtual address partitioned into fixed-size pages
  • Physical memory likewise partitioned
  • Virtual pages map exactly into physical pages
• Segment: defined by programmer/compiler
  • Compiler partitions program/data into segments of related information (code, data, etc.)
  • Segments easily protected (read-only, execute-only, etc.)
  • Segments can be of variable size
  • Memory manager must find sufficient free memory to hold each segment (fragmentation may occur)
  • Less common than paging

Pages: virtual memory blocks
• Page faults: the data is not in memory, retrieve it from disk
  • huge miss penalty, thus pages should be fairly large (e.g., 4KB)
  • reducing page faults is important (LRU is worth the price)
  • can handle the faults in software instead of hardware
  • using write-through is too expensive, so we use write-back

Page Tables for Address Translation
[Figure: the virtual page number indexes the page table; each entry holds a valid bit and a physical page or disk address. Entries with valid = 1 point into physical memory; entries with valid = 0 point to disk storage.]
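Page-table translation can be sketched as a lookup keyed by virtual page number. This is a minimal model, assuming 4 KB pages (the example size mentioned for pages) and a single-level table stored as a dict; `translate`, `PageFault` and the table contents are hypothetical.

```python
PAGE_SIZE = 4096  # assumed 4 KB pages

class PageFault(Exception):
    """Raised when the valid bit is 0 and the page must be fetched from disk."""

def translate(vaddr, page_table):
    """Map a virtual address to a physical address via the page table."""
    vpn, offset = divmod(vaddr, PAGE_SIZE)   # virtual page number, page offset
    valid, location = page_table[vpn]
    if not valid:
        raise PageFault(f"virtual page {vpn} is on disk at {location}")
    return location * PAGE_SIZE + offset     # the offset is unchanged

# Each entry: (valid bit, physical page number or disk address)
page_table = {0: (1, 5), 1: (0, "disk block 42"), 2: (1, 7)}
print(hex(translate(0x2010, page_table)))
```

Virtual page 2 maps to physical page 7 here, so only the page number changes and the offset 0x010 is carried over; a reference to virtual page 1 would raise `PageFault` instead.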

Page Tables
[Figure: page descriptor.]

Segmented Memory
[Figure: the memory management unit (MMU) uses a segment table to map segments S1–S4 from virtual memory into physical memory, where loaded segments are separated by free regions.]
• Sufficient free memory for segment S2, but fragmented, preventing loading of S2

Mapping Segmented Address
[Figure: the virtual address is split into segment # and displacement. The segment # selects a segment table entry (V, prot, limit, base); base + displacement gives the physical address of the datum in physical memory.]
• Protection check
• Compare segment limit to displacement
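The segment mapping adds a bounds check to the base-plus-displacement addition. This sketch omits the protection bits and assumes the limit is the segment length in bytes, so a displacement must be strictly less than it; `map_segmented` and `SegmentationError` are hypothetical names.

```python
class SegmentationError(Exception):
    """Raised when a segment is not loaded or the displacement exceeds its limit."""

def map_segmented(segment, displacement, segment_table):
    """Return the physical address for (segment #, displacement)."""
    valid, limit, base = segment_table[segment]
    if not valid:
        raise SegmentationError(f"segment {segment} is not in memory")
    if displacement >= limit:                 # compare segment limit to displacement
        raise SegmentationError(f"displacement {displacement:#x} exceeds limit {limit:#x}")
    return base + displacement

# Each entry: (valid bit, limit, base); values are illustrative
segment_table = {1: (True, 0x400, 0x8000), 2: (False, 0x1000, None)}
print(hex(map_segmented(1, 0x3F, segment_table)))
```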

Combining Segmentation and Paging
• Partition segments into pages
• Combine best features of both
[Figure: the virtual address is split into segment #, page # and offset. The segment table entry (V, prot, limit, base) locates the page table; the page table entry (V, prot, page #) gives the physical page. Physical address = page # + offset*, locating the datum at that offset from the top of the physical page.]
* offset same as in virtual address

Making Address Translation Fast
• A cache for address translations: translation lookaside buffer (TLB)
• Typical values: 16–512 entries, miss rate: 0.01%–1%, miss penalty: 10–100 cycles

TLBs and caches

TLBs and Caches

Concurrent TLB & Cache Access
• Normally access the main memory cache after translating the logical address to a physical address
• Can do these concurrently to save time
[Figure: virtual address = virtual page # + offset; translation yields physical address = physical page # + offset; the cache interprets the address as tag + index + byte.]
• Offset is the same in the virtual and physical address
• Index is available for cache line access while translation occurs
• Check the tag after translation and cache line access
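Concurrent access works when the cache index and byte fields fall entirely within the page offset, since those bits are not changed by translation. The check below sketches that condition; the function name is hypothetical, all sizes are assumed to be powers of two, and the 4 KB page size is an assumption.

```python
def index_within_page_offset(page_size, num_sets, line_size):
    """True if the cache index + byte bits fit inside the page offset bits."""
    page_offset_bits = page_size.bit_length() - 1   # log2 for powers of two
    index_bits = num_sets.bit_length() - 1
    byte_bits = line_size.bit_length() - 1
    return index_bits + byte_bits <= page_offset_bits

# 128 sets of 16-byte lines with assumed 4 KB pages: 7 + 4 = 11 bits <= 12
print(index_within_page_offset(4096, 128, 16))
# A hypothetical cache with 1024 sets of 32-byte lines: 10 + 5 = 15 bits > 12
print(index_within_page_offset(4096, 1024, 32))
```

When the check fails, some index bits come from the virtual page number, so the cache line cannot be selected from the untranslated offset alone.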

Modern Systems