The Performance Impact of Kernel Prefetching on Buffer Cache Replacement Algorithms

The Performance Impact of Kernel Prefetching on Buffer Cache Replacement Algorithms (ACM SIGMETRICS ’05, ACM International Conference on Measurement & Modeling of Computer Systems). Ali R. Butt, Chris Gniady, Y. Charlie Hu, Purdue University. Presented by Hsu Hao Chen.


The Performance Impact of Kernel Prefetching on Buffer Cache Replacement Algorithms
ACM SIGMETRICS ’05 (ACM International Conference on Measurement & Modeling of Computer Systems)
Ali R. Butt, Chris Gniady, Y. Charlie Hu, Purdue University
Presented by Hsu Hao Chen

Outline
• Introduction
• Motivation
• Replacement Algorithm
  • OPT
  • LRU
  • LRU-2
  • 2Q
  • LIRS
  • LRFU
  • MQ
  • ARC
• Performance Evaluation
• Conclusion

Introduction
• Improving file system performance
  • Design effective block replacement algorithms for the buffer cache
• Almost all buffer cache replacement algorithms have been proposed and studied comparatively without taking into account file system prefetching, which exists in all modern operating systems
• Cache hit ratio is used as the sole performance metric
  • What about the actual number of disk I/O requests?
  • What about the actual running time of applications?

Introduction (Cont.)
• Kernel prefetching in Linux
  • Beneficial for sequential accesses
Figure: Various kernel components on the path from file system operation to the disk

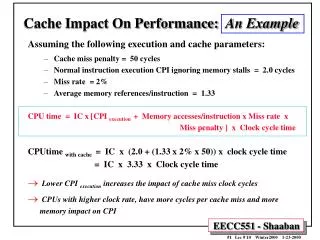

Motivation
• The goal of a buffer replacement algorithm
  • Minimize the number of disk I/Os
  • Reduce the running time of applications
• Example
  • Without prefetching, Belady's algorithm results in 16 misses and LRU results in 23 misses
  • With prefetching, Belady's algorithm is not optimal!

Replacement Algorithm
• OPT
  • Evicts the block that will be referenced farthest in the future
  • Often used for comparative studies
  • Prefetched blocks are assumed to be the most recently accessed; because OPT knows the future references, it can immediately determine whether a prefetch was right or wrong
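The eviction rule above can be sketched as a small trace-driven simulation. This is a minimal illustration of Belady's OPT for demand fetches only (no prefetching); the function name `opt_misses` and the integer block trace are assumptions made for the example, not part of the paper's simulator.

```python
def opt_misses(trace, cache_size):
    """Count misses under Belady's OPT for a demand-fetch cache (no prefetching)."""
    cache = set()
    misses = 0
    for i, block in enumerate(trace):
        if block in cache:
            continue  # hit: nothing to do, OPT keeps no recency state
        misses += 1
        if len(cache) >= cache_size:
            # Position of the next reference to a cached block, or infinity
            # if the block is never referenced again.
            def next_use(b):
                for j in range(i + 1, len(trace)):
                    if trace[j] == b:
                        return j
                return float("inf")

            # Evict the block whose next reference is farthest in the future.
            cache.remove(max(cache, key=next_use))
        cache.add(block)
    return misses


print(opt_misses([1, 2, 3, 1, 2, 3], cache_size=2))
```

The linear scan for the next use keeps the sketch short; a real simulator would precompute next-reference positions.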

Replacement Algorithm
• LRU
  • Replaces the page that has not been accessed for the longest time
  • Prefetched blocks are inserted at the MRU position, just like regular blocks

Replacement Algorithm
• LRU pathological case
  • The working set size is larger than the cache
  • The application has a looping access pattern
  • In this case, LRU will replace all blocks before they are used again
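The pathological case can be reproduced with a few lines of simulation. The sketch below is a plain demand-fetch LRU cache; the helper name `lru_hits` and the 5-block loop are assumptions chosen for illustration.

```python
from collections import OrderedDict


def lru_hits(trace, cache_size):
    """Count hits for a demand-fetch LRU cache of cache_size blocks."""
    cache = OrderedDict()  # ordered from LRU (first) to MRU (last)
    hits = 0
    for block in trace:
        if block in cache:
            hits += 1
            cache.move_to_end(block)       # promote to the MRU position
        else:
            if len(cache) >= cache_size:
                cache.popitem(last=False)  # evict the LRU block
            cache[block] = True
    return hits


loop = list(range(5)) * 10         # a 5-block loop repeated 10 times
print(lru_hits(loop, cache_size=4))  # working set larger than the cache
print(lru_hits(loop, cache_size=5))  # working set fits in the cache
```

With a cache one block smaller than the loop, every block is evicted just before it is needed again, so the hit count drops to zero.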

Replacement Algorithm
• LRU-2
  • Tries to avoid the pathological cases of LRU
  • LRU-K replaces a block based on the Kth-to-last reference
  • The authors recommend K = 2
  • LRU-2 can quickly remove cold blocks from the cache
  • Each block access requires O(log N) operations to manipulate a priority queue, where N is the number of blocks in the cache
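A simplified sketch of the LRU-2 rule follows. It assumes that blocks referenced only once have an infinitely old second-to-last reference, breaks ties by plain LRU, and keeps no history for evicted blocks; for brevity it scans for the victim linearly instead of using the priority queue mentioned above. The name `lru2_misses` is hypothetical.

```python
def lru2_misses(trace, cache_size):
    """Count misses for a simplified LRU-2 cache (no retained history)."""
    # block -> (time of second-to-last reference, time of last reference)
    history = {}
    misses = 0
    for now, block in enumerate(trace):
        if block in history:
            _, last = history[block]
            history[block] = (last, now)
            continue
        misses += 1
        if len(history) >= cache_size:
            # Victim: oldest second-to-last reference; ties fall back to LRU
            # because the tuples compare on the last reference next.
            victim = min(history, key=lambda b: history[b])
            del history[victim]
        history[block] = (float("-inf"), now)
    return misses


# Two hot blocks (100, 101) followed by a scan of cold blocks 1..4.
print(lru2_misses([100, 101, 100, 101, 1, 2, 3, 4, 100, 101], cache_size=3))
```

In this trace the cold blocks evict each other while the twice-referenced hot blocks stay cached, which is the "quickly remove cold blocks" behavior described in the slide.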

Replacement Algorithm
• 2Q
  • Proposed to achieve page replacement performance similar to LRU-2 with low overhead (constant time per access, as in LRU)
  • All missed blocks are placed in the A1in queue
  • Addresses of replaced blocks are kept in the A1out queue
  • Re-referenced blocks are placed in the Am queue
  • Prefetched blocks are treated as on-demand blocks; if a prefetched block is evicted from the A1in queue before its on-demand access, it is simply discarded
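The three queues can be sketched as follows. This is a simplified illustration of the full 2Q scheme without prefetching; the class name `TwoQ` and the parameters `kin` and `kout` (size thresholds for A1in and A1out) are assumptions for the example rather than values taken from the slides.

```python
from collections import OrderedDict


class TwoQ:
    """Simplified 2Q: A1in (FIFO), A1out (ghost addresses), Am (LRU)."""

    def __init__(self, cache_size, kin, kout):
        self.cache_size, self.kin, self.kout = cache_size, kin, kout
        self.a1in = OrderedDict()   # resident blocks seen once, FIFO order
        self.a1out = OrderedDict()  # addresses only, no data
        self.am = OrderedDict()     # resident re-referenced blocks, LRU order

    def _reclaim(self):
        if len(self.a1in) + len(self.am) < self.cache_size:
            return  # a free slot is still available
        if len(self.a1in) > self.kin or not self.am:
            victim, _ = self.a1in.popitem(last=False)
            self.a1out[victim] = True          # remember only the address
            if len(self.a1out) > self.kout:
                self.a1out.popitem(last=False)
        else:
            self.am.popitem(last=False)        # evict the LRU block of Am

    def access(self, block):
        """Return True on a hit, False on a miss."""
        if block in self.am:
            self.am.move_to_end(block)
            return True
        if block in self.a1in:
            return True                        # FIFO: position unchanged
        was_ghost = self.a1out.pop(block, None) is not None
        self._reclaim()
        if was_ghost:
            self.am[block] = True              # re-referenced: goes to Am
        else:
            self.a1in[block] = True            # first miss: goes to A1in
        return False


q = TwoQ(cache_size=4, kin=1, kout=4)
print([q.access(b) for b in [1, 2, 3, 4, 5, 1, 1]])
```

Every operation is a dictionary lookup or a move at a queue end, which is where the constant per-access overhead comes from.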

Replacement Algorithm
• LIRS (Low Inter-reference Recency Set)
  • LIR block: has been accessed again since being inserted on the LRU stack
  • HIR block: referenced less frequently
  • Prefetched blocks are inserted into the part of the cache that maintains HIR blocks

Replacement Algorithm
• LRFU (Least Recently/Frequently Used)
  • Replaces the block with the smallest C(x) value
  • C(x) is maintained for every block x at every time t, with λ a tunable parameter
  • Initially, every block is assigned the value C(x) = 0
  • Prefetched blocks are treated as the most recently accessed
  • Problem: how to assign the initial weight C(x)
  • Solution: a prefetched flag is set, and the initial value is assigned when the block is accessed on demand
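A small sketch of how C(x) could evolve is given below. The update rule is an assumption: it uses the commonly cited LRFU recurrence, C(x) = 1 + 2^(-λ)·C(x) when x is referenced at time t and C(x) = 2^(-λ)·C(x) otherwise, which is not spelled out in the slide text. The function name `lrfu_values` is hypothetical, and prefetching is not modeled.

```python
def lrfu_values(trace, lam):
    """Return the C(x) value of every block after replaying the trace.

    Assumed recurrence (not given in the slides):
      referenced at time t:  C(x) = 1 + 2**(-lam) * C(x)
      otherwise:             C(x) = 2**(-lam) * C(x)
    """
    crf = {}
    decay = 2.0 ** (-lam)
    for block in trace:
        for b in crf:
            crf[b] *= decay                    # age every known block
        crf[block] = crf.get(block, 0.0) + 1.0  # C(x) starts from 0
    return crf


trace = [1, 1, 1, 2]
for lam in (0.0, 1.0):
    values = lrfu_values(trace, lam)
    victim = min(values, key=values.get)       # smallest C(x) is replaced
    print(lam, values, "victim:", victim)
```

Under this assumed rule, λ = 0 counts references (the victim is the less frequently used block 2), while λ = 1 favors recency (the victim is block 1, which was referenced earlier).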

Replacement Algorithm
• MQ (Multi-Queue)
  • Uses m LRU queues (typically m = 8)
  • Q0, Q1, ..., Qm-1, where Qi contains blocks that have been referenced at least 2^i times but no more than 2^(i+1) - 1 times recently
  • The reference counter is not incremented when a block is prefetched
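The mapping from reference count to queue index can be sketched as below. The helper names are hypothetical, capping the index at the last queue is an assumption for counts beyond the range of m queues, and the sketch leaves out the rest of MQ (the per-queue LRU ordering and any demotion logic).

```python
def mq_queue_index(ref_count, m=8):
    """Queue index i such that 2**i <= ref_count <= 2**(i + 1) - 1, capped at m - 1."""
    if ref_count < 1:
        raise ValueError("ref_count must be at least 1")
    # For a positive integer, bit_length() - 1 equals floor(log2(ref_count)).
    return min(ref_count.bit_length() - 1, m - 1)


def updated_ref_count(ref_count, is_prefetch):
    """A prefetch does not count as a reference; an on-demand access does."""
    return ref_count if is_prefetch else ref_count + 1


for count in (1, 2, 3, 4, 7, 8):
    print(count, "-> Q%d" % mq_queue_index(count))
```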

Replacement Algorithm • MQ (Multi-Queue)

Replacement Algorithm
• ARC (Adaptive Replacement Cache)
  • Maintains two LRU lists
    • L1: pages that have been referenced only once
    • L2: pages that have been referenced at least twice
  • Each list has the same length c as the cache
  • The cache contains the tops of both lists, T1 and T2, with |T1| + |T2| = c

Replacement Algorithm
• ARC attempts to maintain a target size B_T1 for list T1
• When the cache is full, ARC replaces
  • the LRU page from T1 if |T1| > B_T1
  • the LRU page from T2 otherwise
• If a prefetched block is already in the ghost queue, it is moved to the first queue rather than the second
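The replacement decision and the prefetch rule above can be sketched as two small functions. This covers only the rules stated on the slide, not the complete ARC algorithm (the adaptation of B_T1 and the ghost-list bookkeeping are left out); the function names and the list-based representation are assumptions.

```python
def arc_choose_victim(t1, t2, target_t1):
    """Pick the block to replace when the cache is full.

    t1 and t2 are lists ordered from LRU (index 0) to MRU.
    Returns a (list name, block) pair.
    """
    if t1 and (len(t1) > target_t1 or not t2):
        return "T1", t1[0]   # T1 exceeds its target size: evict its LRU page
    return "T2", t2[0]       # otherwise evict the LRU page of T2


def arc_destination(in_ghost_queue, is_prefetch):
    """List that receives a block brought into the cache on a miss."""
    # An on-demand hit in the ghost queue signals reuse and goes to T2;
    # a prefetched block goes to T1 even if its address is in the ghost queue.
    if in_ghost_queue and not is_prefetch:
        return "T2"
    return "T1"


print(arc_choose_victim([1, 2, 3], [4], target_t1=2))
print(arc_destination(in_ghost_queue=True, is_prefetch=True))
```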

Performance Evaluation
• Simulation environment
  • The authors implement a buffer cache simulator that functionally mimics Linux's prefetching and I/O clustering
  • With DiskSim, they simulate the I/O time of applications
• Applications are grouped by access pattern: sequential access and random access
• Concurrent workloads
  • Multi1: workload in a code development environment
  • Multi2: workload in graphic development and simulation
  • Multi3: workload in a database and a web index server

Performance Evaluation (Cont.)
Figure: cscope (sequential). Panels: hit ratio, number of clustered disk requests, execution time

Performance Evaluation (Cont.)
Figure: glimpse (sequential). Panels: hit ratio, number of clustered disk requests, execution time

Performance Evaluation (Cont.)
Figure: tpc-h (random). Panels: hit ratio, number of clustered disk requests, execution time

Performance Evaluation (Cont.)
Figure: tpc-r (random). Panels: hit ratio, number of clustered disk requests, execution time

Performance Evaluation (Cont.)
• Concurrent applications
  • Multi1: hit ratios and disk requests, with or without prefetching, behave similarly to cscope
  • Multi2: behavior is similar to Multi1, but prefetching does not improve the execution time (viewperf is CPU-bound)
  • Multi3: behavior is similar to tpc-h
• Synchronous vs. asynchronous prefetching
  • With prefetching, the number of requests is at least 30% lower than without prefetching for all algorithms except OPT, especially when asynchronous prefetching is used
Figure: Number and size of disk I/Os (cscope at 128 MB cache size)

Conclusion
• Kernel prefetching can have a significant impact on the performance of different replacement algorithms
• Application file access patterns are important for prefetching disk data
  • Sequential access
  • Random access
• With or without prefetching, hit ratio should not be the sole performance metric