INF 2914


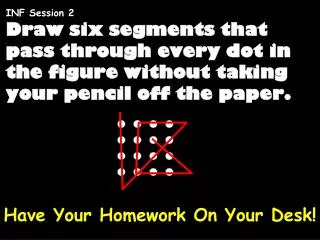

Slide 1:INF 2914 Web Search

Lecture 2: Crawlers

Slide 2:Today's lecture

Crawling

Slide 3:Basic crawler operation

- Begin with known "seed" pages
- Fetch and parse them
- Extract the URLs they point to
- Place the extracted URLs on a queue
- Fetch each URL on the queue and repeat
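The loop described on this slide can be sketched in a few lines. This is a minimal illustration, not a production crawler: `TOY_WEB` is a hypothetical in-memory link graph standing in for real HTTP fetching and HTML parsing, and `crawl` and `fetch_links` are names invented for the example.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> URLs that page links to.
# A real crawler would fetch over HTTP and parse HTML instead.
TOY_WEB = {
    "http://a.example/": ["http://a.example/1", "http://b.example/"],
    "http://a.example/1": ["http://a.example/"],
    "http://b.example/": ["http://c.example/"],
    "http://c.example/": [],
}

def crawl(seeds, fetch_links, max_pages=100):
    """Breadth-first crawl; returns URLs in the order they were fetched."""
    queue = deque(seeds)
    seen = set(seeds)              # URLs already placed on the queue
    fetched = []
    while queue and len(fetched) < max_pages:
        url = queue.popleft()      # fetch each URL on the queue
        fetched.append(url)
        for link in fetch_links(url):   # extract the URLs it points to
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return fetched

order = crawl(["http://a.example/"], lambda u: TOY_WEB.get(u, []))
print(order)
```

The `seen` set is what keeps the loop from revisiting `http://a.example/` when a later page links back to it.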

Slide 4:Crawling picture

[Figure: crawling picture. Labels: seed pages, unseen Web.]

Slide 5:Simple picture: complications

- Web crawling isn't feasible with one machine
  - All of the above steps distributed
- Even non-malicious pages pose challenges
  - Latency/bandwidth to remote servers vary
  - Webmasters' stipulations
    - How "deep" should you crawl a site's URL hierarchy?
  - Site mirrors and duplicate pages
- Malicious pages
  - Spam pages
  - Spider traps, including dynamically generated ones
- Politeness: don't hit a server too often

Slide 6:What any crawler must do

- Be polite: respect implicit and explicit politeness considerations for a website
  - Only crawl pages you're allowed to
  - Respect robots.txt (more on this shortly)
- Be robust: be immune to spider traps and other malicious behavior from web servers

Slide 7:What any crawler should do

- Be capable of distributed operation: designed to run on multiple distributed machines
- Be scalable: designed to increase the crawl rate by adding more machines
- Performance/efficiency: permit full use of available processing and network resources

Slide 8:What any crawler should do

- Fetch pages of "higher quality" first
- Continuous operation: continue fetching fresh copies of a previously fetched page
- Extensible: adapt to new data formats, protocols

Slide 9:Updated crawling picture

[Figure: updated crawling picture. Labels: seed pages, URL frontier, crawling thread, unseen Web.]

Slide 10:URL frontier

- Can include multiple pages from the same host
- Must avoid trying to fetch them all at the same time
- Must try to keep all crawling threads busy

Slide 11:Explicit and implicit politeness

- Explicit politeness: specifications from webmasters on what portions of a site can be crawled
  - robots.txt
- Implicit politeness: even with no specification, avoid hitting any site too often

Slide 12:Robots.txt

- Protocol for giving spiders ("robots") limited access to a website, originally from 1994
  - www.robotstxt.org/wc/norobots.html
- Website announces its request on what can(not) be crawled
  - For a URL, create a file URL/robots.txt
  - This file specifies access restrictions

Slide 13:Robots.txt example

No robot should visit any URL starting with "/yoursite/temp/", except the robot called "searchengine":

User-agent: *
Disallow: /yoursite/temp/

User-agent: searchengine
Disallow:
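To check how a crawler would interpret this file, the rules can be fed to Python's standard `urllib.robotparser`. This is a sketch only: the host `www.example.com` and the agent name `otherbot` are placeholders that do not appear in the slides.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /yoursite/temp/

User-agent: searchengine
Disallow:
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())   # parse from memory, no network access

temp_url = "http://www.example.com/yoursite/temp/a.html"
blocked = parser.can_fetch("otherbot", temp_url)         # falls under "*"
allowed = parser.can_fetch("searchengine", temp_url)     # empty Disallow = allow all
elsewhere = parser.can_fetch("otherbot", "http://www.example.com/index.html")
print(blocked, allowed, elsewhere)   # False True True
```

An empty `Disallow:` line means nothing is disallowed for that agent, which is why `searchengine` may fetch the temp directory.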

Slide 14:Processing steps in crawling

- Pick a URL from the frontier (which one?)
- Fetch the document at the URL
- Parse the fetched document
  - Extract links from it to other docs (URLs)
- Check if the document has content already seen
  - If not, add to indexes
- For each extracted URL
  - Ensure it passes certain URL filter tests (e.g., only crawl .edu, obey robots.txt, etc.)
  - Check if it is already in the frontier (duplicate URL elimination)

Slide 15:Basic crawl architecture

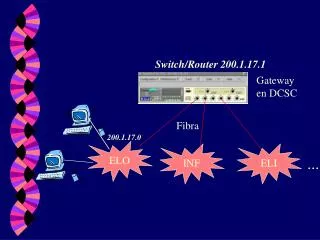

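[Figure: basic crawl architecture. Components: URL frontier, Fetch (with DNS and the WWW), Parse, Content seen? (doc fingerprints), URL filter (robots filters), Dup URL elim (URL set), back to the URL frontier.]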

Slide 16:DNS (Domain Name System)

- A lookup service on the internet
  - Given a URL, retrieve the IP address of its host
  - Service provided by a distributed set of servers, so lookup latencies can be high (even seconds)
- Common OS implementations of DNS lookup are blocking: only one outstanding request at a time
- Solutions
  - DNS caching
  - Batch DNS resolver: collects requests and sends them out together
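DNS caching, the first solution listed, can be sketched as a small wrapper around a blocking lookup. The class name `CachingResolver`, the 300-second TTL, and the fake lookup used in the demo are assumptions for illustration; the slides do not prescribe an implementation.

```python
import socket
import time

class CachingResolver:
    """Caches host -> IP lookups for `ttl` seconds (hypothetical helper)."""

    def __init__(self, lookup=socket.gethostbyname, ttl=300.0, clock=time.monotonic):
        self._lookup = lookup
        self._ttl = ttl
        self._clock = clock
        self._cache = {}            # host -> (ip, expiry time)

    def resolve(self, host):
        now = self._clock()
        hit = self._cache.get(host)
        if hit is not None and hit[1] > now:
            return hit[0]           # served from cache, no blocking call
        ip = self._lookup(host)     # blocking lookup, only on a miss
        self._cache[host] = (ip, now + self._ttl)
        return ip

# Demo with a fake lookup so the example runs without network access.
calls = []

def fake_lookup(host):
    calls.append(host)
    return "192.0.2.1"              # address from a documentation-only range

resolver = CachingResolver(lookup=fake_lookup)
first = resolver.resolve("www.example.com")
second = resolver.resolve("www.example.com")
print(first, second, len(calls))
```

Passing the lookup function in as a parameter also leaves room to swap in a batching or asynchronous resolver later.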

Slide 17:Parsing: URL normalization

- When a fetched document is parsed, some of the extracted links are relative URLs
- E.g., at http://en.wikipedia.org/wiki/Main_Page we have a relative link to /wiki/Wikipedia:General_disclaimer, which is the same as the absolute URL http://en.wikipedia.org/wiki/Wikipedia:General_disclaimer
- During parsing, must normalize (expand) such relative URLs
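The expansion in the Wikipedia example can be reproduced with `urljoin` from Python's standard library; this is one possible way to do it, not necessarily what any particular crawler uses.

```python
from urllib.parse import urljoin

base = "http://en.wikipedia.org/wiki/Main_Page"      # page being parsed
link = "/wiki/Wikipedia:General_disclaimer"          # relative link found in it

absolute = urljoin(base, link)
print(absolute)  # http://en.wikipedia.org/wiki/Wikipedia:General_disclaimer
```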

Slide 18:Content seen?

- Duplication is widespread on the web
- If the page just fetched is already in the index, do not further process it
- This is verified using document fingerprints or shingles
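A rough sketch of both checks follows. It assumes a SHA-1 hash of whitespace-normalized text as the fingerprint and word 3-grams as shingles compared by Jaccard similarity; these specific choices, and the function names, are illustrative assumptions rather than anything stated in the slides.

```python
import hashlib

def fingerprint(text):
    """Exact-duplicate check: hash of whitespace-normalized text."""
    normalized = " ".join(text.split())
    return hashlib.sha1(normalized.encode("utf-8")).hexdigest()

def shingles(text, k=3):
    """Set of k-word shingles (word k-grams) of the text."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}

def jaccard(a, b):
    """Overlap of two shingle sets, between 0.0 and 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

doc_a = "the quick brown fox jumps over the lazy dog"
doc_b = "the quick brown fox jumps over the lazy cat"

same = fingerprint("a  b\n") == fingerprint("a b")
similarity = jaccard(shingles(doc_a), shingles(doc_b))
print(same, similarity)  # True 0.75
```

Fingerprints only catch exact copies, while shingle overlap flags near-duplicates such as the two sentences above, which share 6 of their 8 distinct shingles.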

Slide 19:Filters and robots.txt

- Filters: regular expressions for URLs to be crawled or not
- Once a robots.txt file is fetched from a site, need not fetch it repeatedly
  - Doing so burns bandwidth, hits web server
- Cache robots.txt files

Slide 20:Duplicate URL elimination

- For a non-continuous (one-shot) crawl, test to see if an extracted+filtered URL has already been passed to the frontier
- For a continuous crawl, see details of frontier implementation

Slide 21:Distributing the crawler

- Run multiple crawl threads, under different processes, potentially at different nodes
  - Geographically distributed nodes
- Partition hosts being crawled into nodes
  - Hash used for partition
- How do these nodes communicate?
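One way to partition hosts by hash is sketched below. The function name `node_for_url` and the choice of MD5 are assumptions; a stable hash from `hashlib` is used because Python's built-in `hash()` for strings is salted per process, so different nodes would disagree on the assignment.

```python
import hashlib
from urllib.parse import urlsplit

def node_for_url(url, num_nodes):
    """Map a URL to the crawler node responsible for its host."""
    host = urlsplit(url).hostname or ""
    digest = hashlib.md5(host.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_nodes

n1 = node_for_url("http://a.example/page1", 4)
n2 = node_for_url("http://a.example/other/page2", 4)
print(n1, n2)  # same node, because the host is the same
```

Hashing the host rather than the full URL keeps every page of a site on one node, which also keeps politeness bookkeeping for that site local.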

Slide 22:Communication between nodes

The output of the URL filter at each node is sent to the Duplicate URL Eliminator at all nodes.

[Figure: the basic crawl architecture with a host splitter added after the URL filter, sending URLs to other hosts and receiving URLs from other hosts before Dup URL elim.]

Slide 23:URL frontier: two main considerations

- Politeness: do not hit a web server too frequently
- Freshness: crawl some pages more often than others
  - E.g., pages (such as news sites) whose content changes often
- These goals may conflict with each other.

Slide 24:Politeness: challenges

- Even if we restrict only one thread to fetch from a host, it can hit the host repeatedly
- Common heuristic: insert a time gap between successive requests to a host that is >> the time for the most recent fetch from that host
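The heuristic can be written as a one-line rule. The slide only says the gap should be much larger than the last fetch time; the multiplier of 10 below is an assumed value chosen for illustration, as is the function name.

```python
def next_allowed_time(fetch_started, fetch_finished, factor=10.0):
    """Earliest time the same host may be hit again.

    The gap is `factor` times the duration of the most recent fetch,
    so slow (possibly overloaded) servers get longer breaks.
    `factor=10.0` is an assumption, not a value from the slides.
    """
    duration = fetch_finished - fetch_started
    return fetch_finished + factor * duration

# A fetch that took 0.5 s and ended at t = 100.5 s blocks the host until t = 105.5 s.
t_next = next_allowed_time(100.0, 100.5)
print(t_next)  # 105.5
```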

Slide 25:URL frontier: Mercator scheme

[Figure: Mercator URL frontier. URLs enter the prioritizer, which feeds K front queues; the biased front queue selector and back queue router feed B back queues (a single host on each); the back queue selector serves the crawl thread requesting a URL.]

Slide 26:Mercator URL frontier

- Front queues manage prioritization
- Back queues enforce politeness
- Each queue is FIFO

Slide 27:Front queues

[Figure: the prioritizer feeds front queues 1 to K, which feed the biased front queue selector and the back queue router.]

Slide 28:Front queues

- Prioritizer assigns to each URL an integer priority between 1 and K
  - Appends the URL to the corresponding queue
- Heuristics for assigning priority
  - Refresh rate sampled from previous crawls
  - Application-specific (e.g., "crawl news sites more often")

Slide 29:Biased front queue selector

- When a back queue requests a URL (in a sequence to be described): picks a front queue from which to pull a URL
- This choice can be round robin biased to queues of higher priority, or some more sophisticated variant
  - Can be randomized

Slide 30:Back queues

[Figure: the biased front queue selector and back queue router feed back queues 1 to B; the back queue selector uses a heap.]

Slide 31:Back queue invariants

- Each back queue is kept non-empty while the crawl is in progress
- Each back queue only contains URLs from a single host
  - Maintain a table from hosts to back queues

Slide 32:Back queue heap

- One entry for each back queue
- The entry is the earliest time t_e at which the host corresponding to the back queue can be hit again
- This earliest time is determined from
  - Last access to that host
  - Any time buffer heuristic we choose

Slide 33:Back queue processing

A crawler thread seeking a URL to crawl:

- Extracts the root of the heap
- Fetches the URL at the head of the corresponding back queue q (looked up from the table)
- Checks if queue q is now empty; if so, pulls a URL v from the front queues
  - If there's already a back queue for v's host, appends v to that queue and pulls another URL from the front queues; repeat
  - Else adds v to q
- When q is non-empty, creates a heap entry for it
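The back queue steps above can be simulated in a short sketch. Several simplifications are assumptions of this example, not part of Mercator: a single deque `front` stands in for the K front queues and the biased selector, the politeness gap is a fixed `gap` value, and instead of sleeping until t_e the method returns the time at which the fetch may happen. The class and method names are hypothetical.

```python
import heapq
from collections import deque
from urllib.parse import urlsplit

class BackQueues:
    def __init__(self, front_urls, num_back_queues, gap=1.0):
        self.front = deque(front_urls)  # stand-in for front queues + selector
        self.gap = gap                  # fixed politeness gap (assumption)
        self.queues = {}                # table: host -> its back queue
        self.ready_at = {}              # host -> earliest next hit time
        self.heap = []                  # entries (t_e, host), one per back queue
        for _ in range(num_back_queues):
            self._refill()

    def _refill(self):
        """Pull from the front until a URL for a host with no back queue appears."""
        while self.front:
            url = self.front.popleft()
            host = urlsplit(url).hostname
            if host in self.queues:     # host already has a back queue
                self.queues[host].append(url)
            else:                       # start a back queue for the new host
                self.queues[host] = deque([url])
                heapq.heappush(self.heap, (self.ready_at.get(host, 0.0), host))
                return

    def next_url(self, now):
        """Return (url, fetch time), or None when nothing is left."""
        if not self.heap:
            return None
        t_e, host = heapq.heappop(self.heap)      # root of the heap
        fetch_time = max(now, t_e)                # wait until the host may be hit
        queue = self.queues[host]
        url = queue.popleft()
        self.ready_at[host] = fetch_time + self.gap
        if queue:
            heapq.heappush(self.heap, (self.ready_at[host], host))
        else:                                     # back queue ran empty
            del self.queues[host]
            self._refill()
        return url, fetch_time

urls = ["http://a.example/1", "http://a.example/2", "http://b.example/1",
        "http://a.example/3", "http://c.example/1"]
frontier = BackQueues(urls, num_back_queues=2, gap=2.0)
schedule, now = [], 0.0
while (item := frontier.next_url(now)) is not None:
    schedule.append(item)
    now = item[1]
print(schedule)
```

In the resulting schedule the three hosts are each hit once at time 0, while the remaining `a.example` URLs are pushed out to times 2 and 4, which is the politeness guarantee the heap provides.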

Slide 34:Number of back queues B

- Keep all threads busy while respecting politeness
- Mercator recommendation: three times as many back queues as crawler threads

Slide 35:Determining the page refresh frequency

- Study refresh policies for a local database with N pages
- The pages in the database are copies of pages found on the Web
- Difficulty: when a Web page is updated, the crawler is not informed

Slide 36:Framework

Measuring how up to date the database is:

- Freshness
  - F(e_i, t) = 1 if page e_i is up to date at time t, and 0 otherwise
  - F(S, t): average freshness of database S at time t
- Age
  - A(e_i, t) = 0 if e_i is up to date at time t, and t - t_m(e_i) otherwise, where t_m is the time of the last modification of e_i
  - A(S, t): average age of database S at time t

Slide 37:Framework

The average freshness (or age) over time is used to compare different page refresh policies.
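Written out, the freshness and age measures take the following form. The per-page definitions restate the slides; the averaging over the N pages and the time-average limit are not spelled out there and are given here in the form used in the Cho and Garcia-Molina paper cited at the end of the deck.

```latex
F(e_i, t) =
\begin{cases}
1 & \text{if } e_i \text{ is up to date at time } t \\
0 & \text{otherwise}
\end{cases}
\qquad
A(e_i, t) =
\begin{cases}
0 & \text{if } e_i \text{ is up to date at time } t \\
t - t_m(e_i) & \text{otherwise}
\end{cases}

F(S, t) = \frac{1}{N} \sum_{i=1}^{N} F(e_i, t)
\qquad
A(S, t) = \frac{1}{N} \sum_{i=1}^{N} A(e_i, t)

\bar{F}(S) = \lim_{t \to \infty} \frac{1}{t} \int_{0}^{t} F(S, \tau)\, d\tau
\qquad
\bar{A}(S) = \lim_{t \to \infty} \frac{1}{t} \int_{0}^{t} A(S, \tau)\, d\tau
```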

Slide 38:Framework

- Poisson process N(t)
  - Stochastic process that gives the number of events that occurred in the interval [0, t]
- Properties
  - Memoryless process: the number of events that occur in a bounded time interval after time t is independent of the number of events that occurred before t

Slide 39:Framework

- Hypothesis: a Poisson process is a good approximation for the page modification model
- Probability that a page is modified at least once in the interval (0, t]: P = 1 - e^(-λt)
- The larger the rate λ, the larger the probability of a change

Slide 40:Framework

- Probability that a page is modified at least once in the interval (0, t]
- The larger the rate λ, the larger the probability of a change
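The probability mentioned on these slides follows directly from the Poisson distribution of the number of changes N(t) with rate λ. The numeric example at the end (a page that changes on average every 10 days, observed over 10 days) is an illustration added here, not taken from the slides.

```latex
P\{N(t) = k\} = \frac{(\lambda t)^k e^{-\lambda t}}{k!}, \qquad k = 0, 1, 2, \dots

P\{\text{at least one change in } (0, t]\}
  = 1 - P\{N(t) = 0\}
  = 1 - e^{-\lambda t}

\lambda = \tfrac{1}{10}\ \text{per day},\quad t = 10\ \text{days}
  \;\Rightarrow\; 1 - e^{-1} \approx 0.632
```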

Slide 41:Framework

Evolution of the database:

- Uniform change rate (a single λ): reasonable when the parameter is not known
- Non-uniform change rate (a different λ for each page)

Slide 42:Refresh policies

- Refresh frequency
  - We must decide how many refreshes can be made per unit of time
  - We assume that the N elements are refreshed in I units of time
  - Decreasing I increases the refresh rate
  - The ratio N/I depends on the number of crawlers, available bandwidth, bandwidth at the servers, etc.

Slide 43:Refresh policies

- Resource allocation
  - After deciding how many elements to refresh, we must decide the refresh frequency of each element
  - We can refresh all elements at the same rate, or refresh more frequently the elements that change more often

Slide 44:Refresh policies

- Example
  - A database contains 3 elements {e1, e2, e3}
  - Their change rates are 4/day, 3/day and 2/day, respectively
  - 9 refreshes per day are allowed
  - At what rates should the refreshes be made?

Slide 45:Refresh policies

- Uniform policy
  - 3 refreshes/day for every page
- Proportional policy
  - e1: 4 refreshes/day
  - e2: 3 refreshes/day
  - e3: 2 refreshes/day
- Which of the policies is better?
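The question can be explored numerically. The sketch below assumes Poisson changes and refreshes at fixed intervals, in which case the time-averaged freshness of a page with change rate λ refreshed f times per day is (1 - e^(-λ/f)) / (λ/f); this closed form comes from the Cho and Garcia-Molina analysis rather than from the slides, and the function names are invented for the example.

```python
import math

def expected_freshness(change_rate, refresh_rate):
    """Time-averaged freshness of one page refreshed at fixed intervals,
    assuming Poisson changes: (1 - e^(-r)) / r with r = change_rate / refresh_rate."""
    if change_rate == 0:
        return 1.0          # a page that never changes is always fresh
    if refresh_rate == 0:
        return 0.0          # never refreshed: stale in the long run
    r = change_rate / refresh_rate
    return (1.0 - math.exp(-r)) / r

def database_freshness(change_rates, refresh_rates):
    """Average of the per-page expected freshness."""
    pairs = zip(change_rates, refresh_rates)
    return sum(expected_freshness(c, f) for c, f in pairs) / len(change_rates)

change_rates = [4.0, 3.0, 2.0]                       # e1, e2, e3 (changes/day)
uniform = database_freshness(change_rates, [3.0, 3.0, 3.0])
proportional = database_freshness(change_rates, [4.0, 3.0, 2.0])
print(f"uniform={uniform:.3f} proportional={proportional:.3f}")
# uniform=0.638 proportional=0.632
```

Under these assumptions the uniform policy comes out slightly ahead of the proportional one for this example, even though it ignores the change rates.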

Slide 46:Optimal policy

- Computing the optimal refresh frequency
  - Given an average frequency f = N/I and the average change rate λ_i of each page, compute the frequency f_i with which each page i should be refreshed

Slide 47:Optimal policy

Consider a database with 5 elements whose change rates are 1, 2, 3, 4 and 5, and assume that 5 refreshes per day are possible.

Slide 48:Optimal policy

Optimal refresh frequency: maximizing freshness gives the following curve.

Slide 49:Optimal policy

- Maximizing freshness
  - Pages that change rarely should be refreshed rarely
  - Pages that change too often should also be refreshed rarely
    - A refresh consumes resources and guarantees the freshness of the refreshed page for only a very short time

Slide 50:Experiments

- 270 sites were chosen; for each of them, 3000 pages are refreshed every day
- Experiments ran from 9 PM to 6 AM over 4 months
- 10-second interval between requests to the same site

Slide 51:Estimating change frequencies

The average change interval of a page is estimated by dividing the period over which the experiment ran by the total number of changes in that period.

Slide 52:Experiments

- Verifying the Poisson process
  - Select the pages that change at a given average rate (e.g., every 10 days) and plot the distribution of the intervals between changes

Slide 53:Verifying the Poisson process

- Horizontal axis: time between two changes
- Vertical axis: fraction of the changes that occurred in the given interval
- The lines are the predictions
- For pages that change very often and pages that change very rarely, no conclusions could be drawn

Slide 54:Resource allocation

- Based on the estimated frequencies, the expected freshness is computed for each of the three policies considered
- We assume that 1Gb pages/month can be visited

Slide 55:Resources

- IIR 20
- See also: Effective Page Refresh Policies For Web Crawlers (Cho & Garcia-Molina)

Slide 56:Assignment 2 - Proposal

- Study Web page refresh policies
  - Effective Page Refresh Policies For Web Crawlers
  - Optimal Crawling Strategies for Web Search Engines
  - User-centric Web crawling http://www.cs.cmu.edu/~olston/publications/wic.pdf

Slide 57:Assignment 3 - Proposal

- Study policies for distributing crawlers
  - Parallel Crawlers
  - Carry out a literature survey of the articles that cite this one

Slide 58:Parallel crawling

- Why?
  - Aggregate resources of many machines
  - Network load dispersion
- Issues
  - Quality of pages crawled (as before)
  - Communication overhead
  - Coverage; overlap

Slide 59:Parallel crawling approach [Cho+ 2002]

- Partition URLs; each crawler node is responsible for one partition
  - Many choices for the partition function, e.g., hash(host IP)
- What kind of coordination among crawler nodes? 3 options:
  - Firewall mode
  - Cross-over mode
  - Exchange mode
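The difference between two of these modes shows up in what a node does with links that belong to another partition. The sketch below is a simplified reading of the Cho and Garcia-Molina scheme: in firewall mode such links are dropped, in exchange mode they are forwarded to the owning node. Cross-over mode is omitted, and the names `route_links` and `toy_owner` are hypothetical.

```python
def route_links(links, my_node, owner, mode):
    """Split extracted links into (keep locally, send to other nodes).

    `owner(url)` returns the node responsible for a URL, i.e. the
    partition function (for example a hash of the host).
    """
    if mode not in ("firewall", "exchange"):
        raise ValueError(f"unsupported mode: {mode}")
    keep, send = [], {}
    for url in links:
        node = owner(url)
        if node == my_node:
            keep.append(url)                        # intra-partition link
        elif mode == "exchange":
            send.setdefault(node, []).append(url)   # forward to the owner
        # firewall mode: inter-partition links are simply dropped
    return keep, send

# Toy partition function for the demo: a.example -> node 0, everything else -> node 1.
def toy_owner(url):
    return 0 if "a.example" in url else 1

links = ["http://a.example/1", "http://b.example/1"]
firewall = route_links(links, 0, toy_owner, "firewall")
exchange = route_links(links, 0, toy_owner, "exchange")
print(firewall)
print(exchange)
```

Dropping foreign links costs coverage, while forwarding them costs communication between nodes, which is the trade-off between the two modes.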

Slide 60:Coordination of parallel crawlers

[Figure: pages a to i split between crawler node 1 and crawler node 2. Modes: firewall, cross-over, exchange.]

Slide 61:Empirical findings by Cho+

- Firewall has acceptable coverage if the number of crawler nodes is small (<= 4)
- Exchange incurs small overhead (< 1% of network bandwidth)
  - Can reduce exchanges by replicating the most popular URLs

Slide 62:Assignment 4 - Proposal

- Google File System
- MapReduce
- http://labs.google.com/papers.html

Slide 63:Assignment 5 - Proposal

XML Parsing, Tokenization, and Indexing