Research vs Experiment

Research
• A careful search
• A process of enquiry and investigation
• An effort to obtain new knowledge in order to answer a question or to solve a problem
• A protocol for measuring the values of a set of variables (response variables) under a set of conditions (study conditions)

Purpose of Research
• Review or synthesize existing knowledge.
• Investigate existing situations or problems.
• Provide solutions to problems.
• Explore and analyze more general issues.
• Construct or create new procedures or systems.
• Explain new phenomena.
• Generate new knowledge.
• …or a combination of any of the above! (Collis & Hussey, 2003)

Three Purposes of Research
• Exploration
• Description
• Explanation

Research strategy/design (a plan for research): the outline, plan, or strategy specifying the procedure to be used in answering research questions.

Research strategies
[Figure: The research process ‘onion’]

Research strategy
• FIRST, be clear about your research questions and objectives.
• A strategy is a general plan of how you will go about answering your research question(s).
• It will contain clear objectives derived from the question.
• You must:
  • specify the data sources;
  • consider the constraints, e.g. access, time, location, money and ethical issues.

Research strategies
• Survey
• Case study
• Grounded theory
• Ethnography
• Action research
• Exploratory, descriptive and explanatory studies
• Experiment
Note: these strategies are not mutually exclusive.

Survey
• A collection of information in standardised form from samples of known populations, to create quantifiable data on a number of variables from which correlations and possible causations can be established.

Main advantages of survey
• Ability to collect large amounts of data
• The relatively low cost at which these data may be collected
• Perceived as authoritative
• More respondents can be involved
• Easier coding and pre-coding
• Easier quantification, comparison and measurement
• Easier statistical analysis
• Greater reliability is likely
• Reliability is about accuracy, consistency, precision and lack of error: the ability to produce results which are dependable and repeatable.

Disadvantages of survey
• Less possibility of understanding respondents’ meanings and motives
• Greater possibility of validity problems arising, e.g. do all respondents interpret the questions in the same way?
• Loss of the richness of qualitative accounts
• It tells us little about the subjective world of the respondents, hence the need for a ‘phenomenological/naturalistic’ inquiry.
• It’s easy to do a survey badly!

Case study
• Focuses on understanding the dynamics present within a single setting.
• Often used in the exploratory stages of research.
• Can be: an individual person, a single institution/organisation, a small group, a community, a nation, a decision, a policy, a particular service, a particular event or a process.

Grounded theory
• Data collection starts without any formal theoretical framework.
• Theory is developed from the data through a series of observations, which lead to predictions that are tested in further observations; these may or may not confirm the predictions.
• Theory is grounded in continual reference to the data.
• An attempt to impart rigour to qualitative methods.
[Image: Barney Glaser, GTI]

Ethnography
• Developed out of fieldwork in anthropology.
• The purpose is to interpret the world the way the ‘locals’ interpret it.
• Is time-consuming.
• Linked to participant observation.

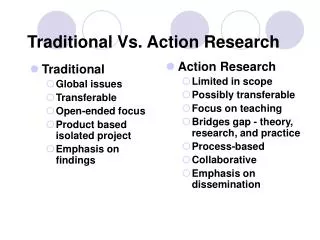

Action research
• May involve practitioners who are also researchers, e.g. professionals in training.
• Research may be part of the organisation, e.g. a school, university or hospital.
• The researcher is actively involved in promoting change within the organisation.
• Raises the issue of transferring knowledge from one context to another.

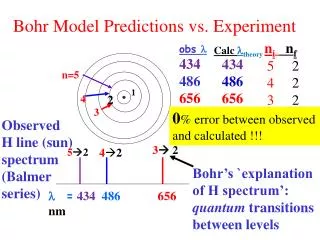

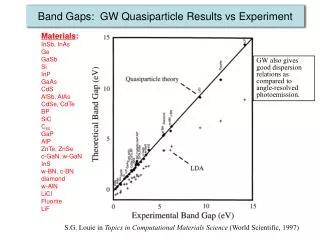

Experiment
• A study in which the investigator selects the levels of at least one factor
• An investigation in which the investigator applies some treatments to experimental units and then observes the effect of the treatments on the experimental units by measuring one or more response variables
• An inquiry in which an investigator chooses the levels (values) of input or independent variables and observes the values of the output or dependent variable(s)

Strengths of experiment
• Causation can be determined (if the experiment is properly designed)
• The researcher has considerable control over the variables of interest
• It can be designed to evaluate multiple independent variables

Limitations of experiment
• Not ethical in many situations
• Often more difficult and costly

Design of Experiments
• Define the objectives of the experiment and the population of interest.
• Identify all sources of variation.
• Choose an experimental design and specify the experimental procedure.

Defining the Objectives
What questions do you hope to answer as a result of your experiment?
To what population do these answers apply?

Identifying Sources of Variation
[Figure: Input Variable, Output Variable]

Choosing an Experimental Design
[Figure: Experimental design?]

Experimental Design
• A controlled study in which one or more treatments are applied to experimental units.
• A plan and a structure to test hypotheses in which the analyst controls or manipulates one or more variables.
• A protocol for measuring the values of a set of variables.
It contains independent and dependent variables.

Statistical experimental design
Determine the levels of the independent variables (factors) and the number of experimental units at each combination of these levels, according to the experimental goal.
• What is the output variable?
• Which (input) factors should we study?
• What are the levels of these factors?
• What combinations of these levels should be studied?
• How should we assign the studied combinations to experimental units?

Steps of Experimental Design
• Plan the experiment.
• Design the experiment.
• Perform the experiment.
• Analyze the data from the experiment.
• Confirm the results of the experiment.
• Evaluate the conclusions of the experiment.

Plan the Experiment
• Identify the dependent or output variable(s).
• Translate output (response) variables into measurable quantities.
• Determine the factors (input or independent variables) that potentially affect the output variables and are to be studied.
• Identify potential interactions (combined actions) between factors.

Well-planned Experiment
• Simplicity
• Degree of precision
• Absence of systematic error
• Range of validity of conclusions
• Calculation of degree of uncertainty

Well-planned Experiment
• Simplicity: the selection of treatments and the experimental arrangement should be as simple as possible, consistent with the objectives of the experiment.
• Degree of precision: the probability should be high that the experiment will be able to measure differences with the degree of precision the experimenter desires. This implies an appropriate design and sufficient replication.
• Absence of systematic error: the experiment must be planned so that the experimental units receiving one treatment do not differ in any systematic way from those receiving another treatment, so that an unbiased estimate of each treatment effect can be obtained.
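To see why sufficient replication matters for precision, recall the usual formula for the standard error of the difference between two treatment means, each based on r replications with a common error variance σ²: SE(d) = sqrt(2σ²/r). The Python sketch below is illustrative only; it assumes equal replication and a known σ, and the value σ = 10 is hypothetical.

```python
import math


def se_difference(sigma: float, r: int) -> float:
    """Standard error of the difference between two treatment means,
    each based on r replications, assuming a common error SD sigma."""
    return math.sqrt(2 * sigma ** 2 / r)


sigma = 10.0  # hypothetical error standard deviation (assumed, not from the slides)
for r in (2, 4, 8, 16):
    # More replications per treatment -> smaller standard error of the difference
    print(r, round(se_difference(sigma, r), 2))
```

Because SE(d) shrinks with the square root of r, going from 2 to 8 replications halves it (from 10 to 5), so each further gain in precision costs proportionally more experimental units.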

Well-planned Experiment
• Range of validity of conclusions: conclusions should have as wide a range of validity as possible. Replicating an experiment in time and space increases the range of validity of the conclusions that can be drawn from it. A factorial set of treatments is another way of increasing the range of validity of an experiment: in a factorial experiment, the effects of one factor are evaluated under varying levels of a second factor.
• Calculation of degree of uncertainty: in any experiment, there is always some degree of uncertainty as to the validity of the conclusions. The experiment should be designed so that it is possible to calculate the probability of obtaining the observed results by chance alone.
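The factorial idea in the first point can be illustrated with a 2 × 2 example in which the effect of factor A is evaluated separately at each level of factor B. The cell means below are hypothetical and the factor names A and B are placeholders; the interaction is computed as half the difference between the two simple effects, the usual convention for two-level factorials.

```python
# Hypothetical cell means for a 2 x 2 factorial: factor A (a1, a2) x factor B (b1, b2).
means = {
    ("a1", "b1"): 20.0,
    ("a2", "b1"): 26.0,
    ("a1", "b2"): 22.0,
    ("a2", "b2"): 34.0,
}


def simple_effect_of_a(means, b_level):
    """Effect of changing A from a1 to a2 while B is held at b_level."""
    return means[("a2", b_level)] - means[("a1", b_level)]


effect_at_b1 = simple_effect_of_a(means, "b1")
effect_at_b2 = simple_effect_of_a(means, "b2")
# Main effect of A: average of its simple effects over the levels of B.
main_effect_a = (effect_at_b1 + effect_at_b2) / 2
# A x B interaction: half the difference between the two simple effects.
interaction_ab = (effect_at_b2 - effect_at_b1) / 2

print(effect_at_b1, effect_at_b2, main_effect_a, interaction_ab)
```

Here the effect of A is 6 at b1 but 12 at b2, so a conclusion drawn at only one level of B would have a narrower range of validity than one drawn from the factorial set.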

Steps in Designing the Experiment
• Selection of treatments (independent/input variables)
• Selection of experimental material
• Selection of experimental design
• Selection of the unit of observation and the number of replications
• Control of the effect of adjacent units on each other
• Consideration of data to be collected (output/response variables)
• Outlining the statistical analysis and summarization of results

Important Steps in Designing the Experiment
• Selection of treatments: select treatments carefully.
• Selection of experimental material: the material used should be representative of the population on which the treatment will be tested.
• Selection of experimental design: choose the simplest design that is likely to provide the required precision.
• Selection of the unit of observation and the number of replications: plot size and the number of replications should be chosen to produce the required precision of treatment estimates.

Important Steps in Designing the Experiment
• Control of the effect of adjacent units on each other: use border rows and randomize treatments.
• Consideration of data to be collected: the data collected should properly evaluate treatment effects in line with the objectives of the experiment.
• Outlining the statistical analysis and summarization of results: write out the sources of variation (SV), degrees of freedom (DF), sums of squares (SS), mean squares (MS) and the F-test.
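For a completely randomized design with a single treatment factor, the outline in the last point reduces to a one-way analysis of variance. The sketch below computes the DF, SS, MS and F columns for each source of variation; the function name `one_way_anova` and the data are hypothetical, and the F value would still have to be compared with a tabulated F with (t - 1, n - t) degrees of freedom.

```python
from statistics import mean


def one_way_anova(groups):
    """ANOVA table (SV -> (DF, SS, MS, F)) for a completely randomized design.
    groups: dict mapping treatment name -> list of responses."""
    all_values = [y for ys in groups.values() for y in ys]
    grand_mean = mean(all_values)
    n_total = len(all_values)
    t = len(groups)

    # Treatment SS: replication-weighted squared deviations of treatment means.
    ss_treat = sum(len(ys) * (mean(ys) - grand_mean) ** 2 for ys in groups.values())
    # Error SS: deviations of each observation from its own treatment mean.
    ss_error = sum((y - mean(ys)) ** 2 for ys in groups.values() for y in ys)
    ss_total = sum((y - grand_mean) ** 2 for y in all_values)

    df_treat, df_error = t - 1, n_total - t
    ms_treat = ss_treat / df_treat
    ms_error = ss_error / df_error
    return {
        "Treatment": (df_treat, ss_treat, ms_treat, ms_treat / ms_error),
        "Error": (df_error, ss_error, ms_error, None),
        "Total": (n_total - 1, ss_total, None, None),
    }


# Hypothetical responses: 3 treatments x 3 replications.
data = {"T1": [10, 12, 14], "T2": [15, 17, 19], "T3": [20, 22, 24]}
table = one_way_anova(data)
for sv, (df, ss, ms, f) in table.items():
    print(sv, df, ss, ms, f)
```

For these data SS(Treatment) = 150 and SS(Error) = 24 add up to SS(Total) = 174, and F = 75 / 4 = 18.75.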

Grading system
Grade: 0–100
• A: > 80
• B–D: 45–80 (normal distribution)
• E: < 45
Grade composition

Types of Experimental Designs
• Pre-experimental designs: one-group designs and designs that compare pre-existing groups.
• Quasi-experimental designs: experiments that have treatments, outcome measures and experimental conditions but do not use random selection and assignment to treatment conditions.
• True experimental designs: experiments that have treatments, outcome measures and experimental conditions and use random selection and assignment to treatment conditions. This is the strongest set of designs in terms of internal and external validity.

Terminology
[Figure: Input Variable, Output Variable]

Terminology
Variable: a characteristic that varies (e.g., weight, body temperature, bill length).
Treatment/input/independent variable
• Set at predetermined levels decided by the experimenter
• A condition or set of conditions applied to experimental units
• The variable that the experimenter either controls or modifies
• What you manipulate
• What you evaluate
• Can be a single factor or two or more (≥ 2) factors

Terminology
Factors
• Another name for the independent variables of an experimental design
• An explanatory variable whose effect on the response is a primary objective of the study
• A variable upon which the experimenter believes that one or more response variables may depend, and which the experimenter can control
• An explanatory variable that can take any one of two or more values. The design of the experiment will largely consist of a policy for determining how to set the factors in each experimental trial.

Terminology
Levels or classifications
• The subcategories of the independent variable used in the experimental design
• The different values of a factor
Dependent/response/output variable
• A quantitative or qualitative variable that represents the variable of interest
• The response to the different levels of the independent variables
• A characteristic of an experimental unit that is measured after treatment and analyzed to assess the effects of treatments on experimental units

Terminology
Treatment factor: a factor whose levels are chosen and controlled by the researcher to understand how one or more response variables change in response to varying levels of the factor.
Treatment design: the collection of treatments used in an experiment.
Full factorial treatment design: a treatment design in which the treatments consist of all possible combinations involving one level from each of the treatment factors.
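A full factorial treatment design can be generated mechanically as the Cartesian product of the factor levels. In this sketch the factors (nitrogen rate and variety) and their levels are hypothetical; `itertools.product` is from the Python standard library.

```python
from itertools import product

# Hypothetical treatment factors and their levels.
factors = {
    "nitrogen_kg_ha": [0, 60, 120],
    "variety": ["V1", "V2"],
}

# Full factorial treatment design: every combination of one level from each factor.
treatments = [dict(zip(factors, combo)) for combo in product(*factors.values())]

for trt in treatments:
    print(trt)
print(len(treatments))  # 3 levels x 2 levels = 6 treatments
```

The number of treatments is the product of the numbers of levels, which is why full factorials grow quickly as factors are added.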

Terminology
Experimental materials: materials used in the experiment. Requirements:
• Homogeneous/uniform
• Correctly identified
• Sensitive to the treatment
Experimental units: an individual or a group of materials to which a treatment is applied.

Terminology
Experimental unit
• The unit of the study material to which a treatment is applied
• The smallest unit of the study material sharing a common treatment
• The physical entity to which a treatment is randomly assigned and independently applied
• A person, object or some other well-defined item upon which a treatment is applied
Observational unit (sampling unit)
• The smallest unit of the study material for which responses are measured
• The unit on which a response variable is measured
There is often a one-to-one correspondence between experimental units and observational units, but that is not always true.
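When several observational units share one experimental unit, a simple and common way to respect the distinction is to summarize the observations to one value per experimental unit before comparing treatments, so that replication is counted in experimental units. In the hypothetical sketch below, each cage is the experimental unit and each animal weighed in it is an observational unit; nested (subsampling) models are an alternative not shown here.

```python
from collections import Counter
from statistics import mean

# Hypothetical data: (treatment, cage) -> weights of the animals in that cage.
# The treatment is applied to the whole cage (experimental unit);
# each animal is weighed separately (observational unit).
weights_by_cage = {
    ("T1", "cage1"): [31.0, 29.0, 30.0],
    ("T1", "cage2"): [28.0, 30.0, 32.0],
    ("T2", "cage3"): [35.0, 33.0, 34.0],
    ("T2", "cage4"): [36.0, 34.0, 35.0],
}

# Collapse observational units to one mean per experimental unit.
cage_means = {unit: mean(ws) for unit, ws in weights_by_cage.items()}

# Replication per treatment = number of cages, not number of animals.
reps = Counter(trt for trt, _cage in cage_means)

print(cage_means)
print(reps)
```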

Basic principles
• Comparison/control
• Replication
• Randomization
• Stratification (blocking)

Comparison/control
Good experiments are comparative, e.g.:
• Comparing the effect of different nitrogen dosages on rice yield
• Comparing the potential yield of cassava clones
• Comparing the effectiveness of pesticides
Ideally, the experimental group is compared to concurrent controls (rather than to historical controls).

Replication
• Applying a treatment independently to two or more experimental units
• The number of experimental units for which responses to a particular treatment are observed
Uses of replication:
• Reduce the effect of uncontrolled variation (i.e. increase precision)
• Estimate the variability in response that is not associated with treatment differences
• Improve the reliability of the conclusions drawn from the data
• Quantify uncertainty

Randomization
• Random assignment of treatments to experimental units.
• Experimental subjects (“units”) should be assigned to treatment groups at random.
At random does not mean haphazardly. One needs to explicitly randomize using:
• a computer, or
• coins, dice or cards.
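Explicit randomization with a computer can be as simple as building a list in which each treatment appears once per replication and shuffling it over the experimental units. This minimal sketch produces a completely randomized layout for 4 treatments × 4 replications; the treatment labels A to D, the seed value and the 4 × 4 grid of positions are assumptions for illustration. Fixing the seed makes the layout reproducible so that it can be recorded.

```python
import random


def randomize_crd(treatments, replications, seed=None):
    """Completely randomized design: each treatment appears `replications`
    times and the full list is shuffled over the experimental units."""
    rng = random.Random(seed)  # seeded generator so the layout can be reproduced
    layout = [t for t in treatments for _ in range(replications)]
    rng.shuffle(layout)  # random, not haphazard, assignment to positions
    return layout


layout = randomize_crd(["A", "B", "C", "D"], replications=4, seed=2024)
# Show the assignment as a hypothetical 4 x 4 grid of unit positions.
for row in range(4):
    print(layout[row * 4:(row + 1) * 4])
```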

Why randomize?
• Allows the observed responses to be regarded as a random sample from the appropriate population
• Eliminates the influence of systematic bias on the measured values
• Controls the role of chance
• Allows the later use of probability theory, and so gives a solid foundation for statistical analysis
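One way randomization underpins probability statements is the randomization (permutation) test: if the treatment has no effect, every re-assignment of the labels was equally likely, so the p-value is the proportion of re-assignments giving a difference at least as large as the one observed. The Python sketch below estimates that proportion by Monte Carlo re-shuffling; the data and the function name `randomization_test` are hypothetical.

```python
import random
from statistics import fmean


def randomization_test(control, treated, n_resamples=10_000, seed=None):
    """Two-sided randomization test for a difference in means.
    Returns (observed difference, estimated p-value)."""
    rng = random.Random(seed)
    observed = fmean(treated) - fmean(control)
    pooled = list(control) + list(treated)
    n_treated = len(treated)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # one random re-assignment of the treatment labels
        diff = fmean(pooled[:n_treated]) - fmean(pooled[n_treated:])
        # Small tolerance so that exact ties are not lost to floating-point rounding.
        if abs(diff) >= abs(observed) - 1e-9:
            extreme += 1
    return observed, extreme / n_resamples


control = [20, 22, 19, 21]  # hypothetical responses
treated = [25, 27, 24, 26]
observed, p_value = randomization_test(control, treated, seed=1)
print(observed, p_value)
```

With these data only 2 of the C(8, 4) = 70 possible splits give a difference of 5 or more in absolute value, so the estimated p-value should be close to 2/70 ≈ 0.029.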

Stratification (Blocking)
• Grouping similar experimental units together and assigning different treatments within such groups of experimental units
• A technique used to eliminate the effects of selected confounding variables when comparing treatments
• If you anticipate a difference between morning and afternoon measurements:
  • Ensure that within each period, there are equal numbers of subjects in each treatment group.
  • Take account of the difference between periods in your analysis.
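The first recommendation for the morning/afternoon example amounts to randomizing separately within each block. This sketch assumes two hypothetical treatments, A and B, each given to two subjects per period; the function name `randomize_within_blocks` is hypothetical. Taking account of the period in the analysis (the second recommendation) would mean including block as a source of variation, which is not shown.

```python
import random


def randomize_within_blocks(treatment_labels, blocks, seed=None):
    """Each block receives the same multiset of treatment labels, so every
    treatment appears equally often within each block; the order is
    shuffled independently within each block."""
    rng = random.Random(seed)
    layout = {}
    for block in blocks:
        order = list(treatment_labels)
        rng.shuffle(order)
        layout[block] = order
    return layout


# Hypothetical: two subjects per treatment in each period.
layout = randomize_within_blocks(["A", "A", "B", "B"], ["morning", "afternoon"], seed=7)
for period, order in layout.items():
    print(period, order)
```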

[Figure: Cage positions, completely randomized design (4 treatments × 4 replications)]
