The Design of the Borealis Stream Processing Engine

Presentation Transcript

The Design of the Borealis Stream Processing Engine • Brandeis University, Brown University, MIT • Magdalena Balazinska (MIT) • Nesime Tatbul (Brown)

Distributed Stream Processing • Data sources push tuples continuously • Operators process windows of tuples [Diagram: Data Sources feed operators (labeled U, S, m) running on Nodes 1-4, which deliver results to Client Apps]

Where are we today? • Data models, operators, query languages • Efficient single-site processing • Single-site resource management • [STREAM, TelegraphCQ, NiagaraCQ, Gigascope, Aurora] • Basic distributed systems • Servers [TelegraphCQ, Medusa/Aurora] • or sensor networks [TinyDB, Cougar] • Tolerance to node failures • Simple load management

Challenges • Tuple revisions • Revisions on input streams • Fault-tolerance • Dynamic & distributed query optimization • ...

Causes for Tuple Revisions • Data sources revise input streams: “On occasion, data feeds put out a faulty price [...] and send a correction within a few hours” [MarketBrowser] • Temporary overloads cause tuple drops • Temporary failures cause tuple drops [Diagram: an "Average price, 1 hour" operator with tuples (1pm,$10) (2:25,$9) (4:05,$11) (4:15,$10) (2pm,$12) (3pm,$11)]

Current Data Model • time: tuple timestamp • Tuple layout: (time,a1,...,an), where time is the header and a1,...,an are the data

New Data Model for Revisions • time: tuple timestamp • type: tuple type (insertion, deletion, replacement) • id: unique identifier of tuple on stream • Tuple layout: (time,type,id,a1,...,an), where time, type, and id are the header and a1,...,an are the data
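The revision-aware tuple layout can be pictured with a small data structure. The following is a minimal Python sketch, not Borealis code: the names `TupleType` and `StreamTuple`, the numeric timestamp, and the example values are assumptions made for illustration, as is the idea that a replacement refers to the tuple it revises by reusing its id.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class TupleType(Enum):
    """The three tuple types named on the slide."""
    INSERTION = "insertion"
    DELETION = "deletion"
    REPLACEMENT = "replacement"


@dataclass(frozen=True)
class StreamTuple:
    # Header fields: (time, type, id)
    time: float             # tuple timestamp (hours, an assumed unit)
    type: TupleType         # insertion, deletion, or replacement
    id: int                 # unique identifier of the tuple on its stream
    # Data fields: (a1, ..., an)
    data: Tuple[float, ...]


# A price quote and a later correction (illustrative values only).
# Assumption: the correction reuses the id of the tuple it revises.
original = StreamTuple(time=14.0, type=TupleType.INSERTION, id=7, data=(12.0,))
correction = StreamTuple(time=14.0, type=TupleType.REPLACEMENT, id=7, data=(11.0,))
```

Keeping `type` and `id` in the header lets an operator tell a correction from a fresh tuple without inspecting the data fields.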

Revisions: Design Options • Restart query network from a checkpoint • Let operators deal with revisions • Operators must keep their own history • (Some) streams can keep history

Revision Processing in Borealis • Closed model: revisions produce revisions [Diagram: two "Average price, 1 hour" operators with tuples (1pm,$10) (2:25,$9) (2pm,$12) (3pm,$11) and revised tuples including (2pm,$11)]

Revision Processing in Borealis • Connection points (CPs) store history • Operators pull the history they need [Diagram: Stream S passes through a CP into the Operator's input queue; the CP history runs from the oldest tuple to the most recent tuple; a revision arrives on the stream]
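The interplay of connection points and the closed revision model can be sketched in a few lines. This is an illustrative Python sketch under stated assumptions, not the Borealis design itself: `ConnectionPoint`, `pull`, `replace`, `hourly_average`, and `process_revision` are hypothetical names, tuples are plain `(id, time, price)` triples with time in hours, and the history is bounded by a fixed length.

```python
from collections import deque


class ConnectionPoint:
    """Keeps a bounded history of (id, time, price) tuples seen on a stream."""

    def __init__(self, max_history=1000):
        self.history = deque(maxlen=max_history)  # oldest tuple on the left

    def append(self, tup):
        self.history.append(tup)

    def replace(self, tuple_id, new_price):
        """Apply a replacement revision to the stored history."""
        for i, (tid, time, _price) in enumerate(self.history):
            if tid == tuple_id:
                self.history[i] = (tid, time, new_price)
                return time
        raise KeyError(tuple_id)  # the revised tuple is older than the history

    def pull(self, start, end):
        """Return the stored tuples with start <= time < end."""
        return [t for t in self.history if start <= t[1] < end]


def hourly_average(cp, hour):
    """Average price over the window [hour, hour + 1)."""
    window = cp.pull(hour, hour + 1)
    return sum(price for _id, _time, price in window) / len(window)


def process_revision(cp, tuple_id, new_price):
    """Closed model: an input revision yields an output revision."""
    time = cp.replace(tuple_id, new_price)
    hour = int(time)
    return ("replacement", hour, hourly_average(cp, hour))
```

Here the operator keeps no history of its own; it pulls only the one-hour window affected by the revision and emits a replacement for that window's average.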

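Fault-Tolerance through Replication • Goal: Tolerate node and network failures [Diagram: Nodes 1, 2, and 3 run operators (labeled U, S, m) connected by streams s1, s2, s3, and s4; replicas Node 1’, Node 2’, and Node 3’ run the same operators, with replica stream s3’]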

Reconciliation • State reconciliation alternatives • Propagate tuples as revisions • Restart query network from a checkpoint • Propagate UNDO tuple • Output stream revision alternatives • Correct individual tuples • Stream of deletions followed by insertions • Single UNDO tuple followed by insertions

Fault-Tolerance Approach • If an input stream fails, find another replica • No replica available, produce tentative tuples • Correct tentative results after failures [State diagram: STABLE moves to UPSTREAM FAILURE on missing or tentative inputs; UPSTREAM FAILURE moves to STABILIZING when the failure heals; STABILIZING moves back to UPSTREAM FAILURE if another upstream failure is in progress, or to STABLE once state is reconciled and output is corrected]
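The three states on this slide form a small state machine. The Python sketch below encodes one reading of the flattened diagram: the state names come from the slide, but the event names and the exact placement of the arrows are assumptions inferred from the order of the labels.

```python
from enum import Enum, auto


class State(Enum):
    STABLE = auto()
    UPSTREAM_FAILURE = auto()
    STABILIZING = auto()


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (State.STABLE, "missing_or_tentative_inputs"): State.UPSTREAM_FAILURE,
    (State.UPSTREAM_FAILURE, "failure_heals"): State.STABILIZING,
    (State.STABILIZING, "another_upstream_failure"): State.UPSTREAM_FAILURE,
    (State.STABILIZING, "state_reconciled"): State.STABLE,
}


def step(state, event):
    """Return the next state; events with no transition leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(events, state=State.STABLE):
    """Feed a sequence of events through the state machine."""
    for event in events:
        state = step(state, event)
    return state
```

A node that loses an input, sees it heal, and finishes reconciling ends up back in `STABLE`; a second failure during reconciliation sends it back to `UPSTREAM_FAILURE`.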

Challenges • Tuple revisions • Revisions on input streams • Fault-tolerance • Dynamic & distributed query optimization • ...

Optimization in a Distributed SPE • Goal: Optimized resource allocation • Challenges: • Wide variation in resources • High-end servers vs. tiny sensors • Multiple resources involved • CPU, memory, I/O, bandwidth, power • Dynamic environment • Changing input load and resource availability • Scalability • Query network size, number of nodes

Quality of Service • A mechanism to drive resource allocation • Aurora model • QoS functions at query end-points • Problem: need to infer QoS at upstream nodes • An alternative model • Vector of Metrics (VM) carried in tuples • Operators can change VM • A Score Function to rank tuples based on VM • Optimizers can keep and use statistics on VM

Example Application: Warfighter Physiologic Status Monitoring (WPSM) • Physiologic models report a state and a confidence for each area • Thermal: 90% • Hydration: 60% • Cognitive: 100% • Life Signs: 90% • Wound Detection: 80%

Ranking Tuples in WPSM • Tuples carry a VM: ([age, confidence], value) • Models may change confidence • Score Function: SF(VM) = VM.confidence × ADF(VM.age), where ADF is the age decay function [Diagram: sensors HRate, RRate, and Temp feed Model1 and Model2, whose outputs go to Merge]
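The score function is simple enough to write out directly. In the Python sketch below, only the formula SF(VM) = VM.confidence x ADF(VM.age) comes from the slide; the exponential shape of the age decay function, its `half_life` parameter, the time unit, and the names `VM`, `adf`, `score`, and `rank` are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class VM:
    """Vector of Metrics carried in a tuple (only the two fields used here)."""
    age: float         # time since the reading was taken (seconds, assumed unit)
    confidence: float  # in [0, 1]


def adf(age, half_life=10.0):
    """Age decay function. Exponential decay is an assumption, not from the slides."""
    return 0.5 ** (age / half_life)


def score(vm):
    """SF(VM) = VM.confidence x ADF(VM.age)."""
    return vm.confidence * adf(vm.age)


def rank(tuples):
    """Order (VM, value) pairs from highest to lowest score."""
    return sorted(tuples, key=lambda t: score(t[0]), reverse=True)
```

With this decay, a fresh reading at 90% confidence outranks a fully confident reading that is one half-life old, which is the kind of trade-off a scheduler or load shedder could act on.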

Borealis Optimizer Hierarchy • End-point Monitor(s) • Global Optimizer • Neighborhood Optimizer • Borealis Node: Local Monitor and Local Optimizer

Optimization Tactics • Priority scheduling • Modification of query plans • Commuting operators • Using alternate operator implementations • Allocation of query fragments to nodes • Load shedding

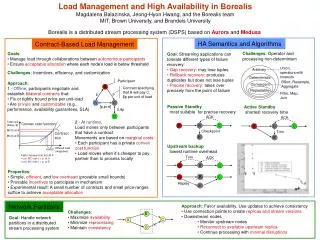

Correlation-based Load Distribution • Goal: Minimize end-to-end latency • Key ideas: • Balance load across nodes to avoid overload • Group boxes with small load correlation together • Maximize load correlation among nodes [Diagram: boxes A and B on stream S1 and boxes C and D on stream S2, placed on two nodes as a Connected Plan and as a Cut Plan; rate labels r and 2r; node load labels 2cr and 4cr, and 3cr and 3cr]
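The load labels in the figure can be reproduced with a short calculation. The Python sketch below rests on an assumed reading of the flattened diagram: every box costs c CPU units per tuple, boxes pass through every tuple, stream S1 runs at rate r while S2 runs at 2r, the Connected Plan keeps each chain on one node, and the Cut Plan splits each chain across the two nodes. The names `node_loads`, `connected_plan`, and `cut_plan` are hypothetical.

```python
def node_loads(plan, rates, cost=1.0):
    """Load per node: each box costs `cost` CPU units per tuple on its stream."""
    return [sum(cost * rates[stream] for _box, stream in boxes) for boxes in plan]


# Boxes A and B read stream S1; boxes C and D read stream S2.
A, B, C, D = ("A", "S1"), ("B", "S1"), ("C", "S2"), ("D", "S2")
connected_plan = [[A, B], [C, D]]  # each chain stays on one node
cut_plan = [[A, C], [B, D]]        # each chain is split across the two nodes

# Rates in units of r: S2 momentarily runs at twice the rate of S1.
rates = {"S1": 1.0, "S2": 2.0}
connected = node_loads(connected_plan, rates)  # loads in units of cr
cut = node_loads(cut_plan, rates)
```

Under these assumptions the Connected Plan yields loads of 2cr and 4cr, while the Cut Plan yields 3cr on each node. Boxes that share a node in the Cut Plan read different streams, so a burst on one stream is spread over both nodes and their loads rise and fall together.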

Load Shedding • Goal: Remove excess load at all nodes and links • Shedding at node A relieves its descendants • Distributed load shedding • Neighbors exchange load statistics • Parent nodes shed load on behalf of children • Uniform treatment of CPU and bandwidth problems • Load balancing or load shedding?

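Local Load Shedding • Goal: Minimize total loss • Before shedding: Node A CPU Load = 5Cr1, Cap = 4Cr1, so A must shed 20%; Node B CPU Load = 4Cr2, Cap = 2Cr2, so B must shed 50% • After A sheds locally: no overload at A; Node B CPU Load = 3.75Cr2, Cap = 2Cr2, so B must shed 47% • After B sheds locally: no overload at either node • Drop rates applied: 25% and 58% [Diagram: Node A and Node B process streams r1 and r2 for App1 and App2; box costs are labeled 4C, C, 3C, and C; a drop point is labeled r2’]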

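Distributed Load Shedding • Goal: Minimize total loss • Before shedding: Node A CPU Load = 5Cr1, Cap = 4Cr1, so A must shed 20%; Node B CPU Load = 4Cr2, Cap = 2Cr2, so B must shed 50% • Drop rates applied: 9% and 64% • After shedding: no overload at either node • Smaller total loss! [Diagram: Node A and Node B process streams r1 and r2 for App1 and App2; box costs are labeled 4C, C, 3C, and C; drop points are labeled r2’ and r2’’]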
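The percentages on the two load shedding slides can be checked with a short calculation. The Python sketch below assumes a topology that is consistent with the slide figures but is not spelled out in the transcript: Node A runs a box of cost 4C on r1 and a box of cost C on r2, Node B runs a box of cost C on r1 and a box of cost 3C on r2, both streams have the same rate, and tuples dropped at A never reach B. The function names are hypothetical.

```python
from fractions import Fraction as F

# Per-tuple CPU cost of each node's box on each stream, in units of C.
# This placement is an assumption chosen to match the slide's load figures.
COST_A = {"r1": F(4), "r2": F(1)}   # Node A load = 5C r, capacity 4C r
COST_B = {"r1": F(1), "r2": F(3)}   # Node B load = 4C r, capacity 2C r
CAP_A, CAP_B = F(4), F(2)           # rates normalized so r1 = r2 = 1


def local_shedding():
    """Each node removes only its own overload."""
    excess_a = sum(COST_A.values()) - CAP_A
    drop_r1 = excess_a / COST_A["r1"]                      # A drops r1 tuples
    load_b = COST_B["r1"] * (1 - drop_r1) + COST_B["r2"]   # B sees less r1 traffic
    drop_r2 = (load_b - CAP_B) / COST_B["r2"]              # B drops r2 tuples
    return drop_r1, drop_r2


def distributed_shedding():
    """Node A drops on both streams so that A and B end up exactly at capacity.

    Solves  4x + 1y = excess_a  and  1x + 3y = excess_b  by Cramer's rule,
    where x and y are the drop fractions applied at A on r1 and r2.
    """
    excess_a = sum(COST_A.values()) - CAP_A
    excess_b = sum(COST_B.values()) - CAP_B
    det = COST_A["r1"] * COST_B["r2"] - COST_A["r2"] * COST_B["r1"]
    x = (excess_a * COST_B["r2"] - COST_A["r2"] * excess_b) / det
    y = (COST_A["r1"] * excess_b - COST_B["r1"] * excess_a) / det
    return x, y
```

Under these assumptions, local shedding drops 1/4 of r1 and 7/12 of r2 (about 25% and 58%), while distributed shedding drops 1/11 of r1 and 7/11 of r2 (about 9% and 64%). The total loss falls from roughly 83% to roughly 73%, in line with the slide's "smaller total loss".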

Extending Optimization to Sensor Nets • Sensor proxy as an interface • Moving operators in and out of the sensor net • Adjusting sensor sampling rates • The WPSM Example: [Diagram: Sensors send data through a Proxy to Models and Merge; control messages from the optimizer flow back through the Proxy to the Sensors]

Conclusions • Next generation streaming applications require a flexible processing model • Distributed operation • Dynamic result and query modification • Dynamic and scalable optimization • Server and sensor network integration • Tolerance to node and network failures • Borealis has been iteratively designed, driven by real applications • First prototype release planned for Spring’05 • http://nms.lcs.mit.edu/projects/borealis