Letter-to-phoneme conversion

Letter-to-phoneme conversion. Sittichai Jiampojamarn sj@cs.ualberta.ca CMPUT 500 / HUCO 612 September 26, 2007. Outline. Part I Introduction to letter-phoneme conversion Part II Many-to-Many alignments and Hidden Markov Models to Letter-to-phoneme conversion., NAACL 2007 Part III


Letter-to-phoneme conversion Sittichai Jiampojamarn sj@cs.ualberta.ca CMPUT 500 / HUCO 612 September 26, 2007

Outline • Part I: Introduction to letter-to-phoneme conversion • Part II: Applying many-to-many alignments and Hidden Markov Models to letter-to-phoneme conversion (NAACL 2007) • Part III: On-going work: discriminative approaches for letter-to-phoneme conversion • Part IV: Possible term projects for CMPUT 500 / HUCO 612

The task • Converting words to their pronunciations • study -> [ s t ʌ d I ] • band -> [ b æ n d ] • phoenix -> [ f i n I k s ] • king -> [ k I ŋ ] • Words are sequences of letters. • Pronunciations are sequences of phonemes. • Syllabification and stress are ignored.

Why is it important? • Major component of speech synthesis systems • Word similarity based on pronunciation • Spelling correction (Toutanova and Moore, 2001) • Linguistic interest in the relationships between letters and phonemes • Not a trivial task, but a tractable one.

Trivial solutions? • Dictionary: look up the answer in a database • Constructing such a large lexicon takes great effort. • Cannot handle new words or misspellings. • Rule-based approaches • Work well on languages with regular spelling • Fail on languages with complex spelling • Each exceptional word needs its own rule, so the system ends up memorizing word-phoneme pairs.

John Kominek and Alan W. Black, “Learning Pronunciation Dictionaries: Language Complexity and Word Selection Strategies”, in Proceedings of HLT-NAACL 2006, June 4-9, pp. 232-239.

Learning-based approaches • Training data • Examples of words and their phonemes. • Hidden structure • band [b æ n d ] • b [b], a [æ], n [n], d [d] • abode [ə b o d] • a [ə], b [b], o [o], d [d], e [ _ ]

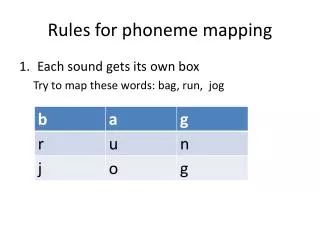

Alignments • To train a letter-to-phoneme (L2P) model, we need alignments between letters and phonemes. Ex. abode [ə b o d]: a -> [ə] b -> [b] o -> [o] d -> [d] e -> [_]

Letter-to-phoneme alignments • Previous work assumed one-to-one alignment for simplicity (Daelemans and Bosch, 1997; Black et al., 1998; Damper et al., 2005). • Expectation-Maximization (EM) algorithms are used to optimize the alignment parameters. • Matching all possible letters and phonemes iteratively until the parameters converge.

1-to-1 alignments • Initially, the alignment parameters can start from a uniform distribution or from counts of all possible letter-phoneme mappings. Ex. abode [ə b o d] P(a, ə) = 4/5 P(b, b) = 3/5 …
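
The counting-based initialization can be sketched as follows. This is a minimal sketch of one possible reading of "counting all possible letter-phoneme mappings": every letter of a word is paired with every phoneme of its pronunciation, plus a null phoneme `_`, and the counts are normalized into a joint distribution. The function name and the normalization are assumptions, and the sketch does not attempt to reproduce the figures in the slide's example.

```python
from collections import Counter

def init_alignment_params(pairs):
    """Initial P(letter, phoneme) from raw co-occurrence counts.

    pairs: iterable of (word, phoneme_list). The null phoneme "_" is an
    assumed convention, added so that silent letters have something to map to.
    """
    counts = Counter()
    for word, phonemes in pairs:
        for letter in word:
            for phoneme in list(phonemes) + ["_"]:
                counts[(letter, phoneme)] += 1
    total = sum(counts.values())
    # Normalize to a joint distribution over (letter, phoneme) pairs.
    return {pair: c / total for pair, c in counts.items()}

params = init_alignment_params([("abode", ["ə", "b", "o", "d"])])
```

EM then re-estimates these values from the alignments it finds, so the starting point only needs to be reasonable.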

1-to-1 alignments • Find the best possible alignments based on current alignment parameters. • Based on the alignments found, update the parameters.

Finding the best possible alignments • Dynamic programming: • In the style of the standard weighted minimum edit distance algorithm. • Treat the alignment parameter P(l,p) as the score of mapping letter l to phoneme p. • Find the alignment that gives the maximum total score. • Null phonemes are allowed, but null letters are not. • It is hard to incorporate null letters in the test data.
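
The dynamic program above can be sketched as follows. This is a minimal sketch, assuming the parameters are stored in a dict keyed by `(letter, phoneme)`, that `_` denotes the null phoneme, and that unseen pairs receive a small floor probability; these names and the floor value are illustrative choices, not taken from the talk.

```python
import math

def align_one_to_one(letters, phonemes, prob, floor=1e-6):
    """Highest-scoring alignment in which every letter maps to exactly one
    phoneme or to the null phoneme "_". Returns None if no alignment exists
    (more phonemes than letters)."""
    n, m = len(letters), len(phonemes)
    neg = float("-inf")
    # best[i][j]: best log-score for the first i letters and first j phonemes
    best = [[neg] * (m + 1) for _ in range(n + 1)]
    back = [[None] * (m + 1) for _ in range(n + 1)]
    best[0][0] = 0.0
    for i in range(1, n + 1):
        letter = letters[i - 1]
        for j in range(min(i, m) + 1):
            # Option 1: the letter is silent (maps to the null phoneme).
            if best[i - 1][j] > neg:
                s = best[i - 1][j] + math.log(prob.get((letter, "_"), floor))
                if s > best[i][j]:
                    best[i][j], back[i][j] = s, "null"
            # Option 2: the letter maps to phoneme j.
            if j > 0 and best[i - 1][j - 1] > neg:
                p = prob.get((letter, phonemes[j - 1]), floor)
                s = best[i - 1][j - 1] + math.log(p)
                if s > best[i][j]:
                    best[i][j], back[i][j] = s, "match"
    if best[n][m] == neg:
        return None
    # Trace back from the final cell to recover the letter-phoneme pairs.
    pairs, i, j = [], n, m
    while i > 0:
        if back[i][j] == "match":
            pairs.append((letters[i - 1], phonemes[j - 1]))
            j -= 1
        else:
            pairs.append((letters[i - 1], "_"))
        i -= 1
    return pairs[::-1]
```

Because there is no move that consumes a phoneme without a letter, null letters are excluded by construction, which matches the restriction stated above.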

Problems with 1-to-1 alignments • Double letters: two letters map to one phoneme. (e.g. ng [ŋ], sh [ʃ], ph [f])

Problems with 1-to-1 alignments • Double phonemes: one letter maps to two phonemes. (e.g. x [k s], u [j u])

Previous solutions for double phonemes • Preprocess using a fixed list of phoneme pairs. • [k s] -> [X] • [j u] -> [U] • The individual phonemes "j" and "u" are lost.
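
The preprocessing step can be sketched as follows. This is a minimal sketch, assuming pronunciations are lists of phoneme symbols; the merge table holds only the two pairs shown on the slide, and the function name is hypothetical.

```python
# Fixed list of double phonemes and their merged symbols (from the slide).
MERGES = {("k", "s"): "X", ("j", "u"): "U"}

def merge_double_phonemes(phonemes, merges=MERGES):
    """Replace each listed phoneme pair with its single merged symbol."""
    out, i = [], 0
    while i < len(phonemes):
        pair = tuple(phonemes[i:i + 2])
        if pair in merges:
            out.append(merges[pair])
            i += 2
        else:
            out.append(phonemes[i])
            i += 1
    return out

merged = merge_double_phonemes(["f", "i", "n", "I", "k", "s"])
```

After merging, phoenix has one symbol for the letter x, so a one-to-one aligner can handle it, at the cost of a hand-built list that must be maintained per language.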

Applying many-to-many alignments and Hidden Markov Models to Letter-to-Phoneme conversion Sittichai Jiampojamarn, Grzegorz Kondrak and Tarek Sherif Proceedings of the Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL-HLT 2007), Rochester, NY, April 2007, pp.372-379.

System overview • Alignment process • Prediction process

Many-to-many alignments • EM-based method. • Extended from the forward-backward training of a one-to-one stochastic transducer (Ristad and Yianilos, 1998). • Allows one or two letters to map to null, one, or two phonemes.
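
The chunk sizes allowed above can be illustrated with a small decoder. This is a minimal sketch that finds only the single best many-to-many alignment under given parameters (a Viterbi pass); it is not the forward-backward EM training itself. The parameter dict keyed by `(letter_chunk, phoneme_chunk)` tuples and the floor probability are assumptions made for illustration.

```python
import math

def align_many_to_many(letters, phonemes, prob, floor=1e-9):
    """Best alignment where each chunk pairs 1-2 letters with 0-2 phonemes."""
    n, m = len(letters), len(phonemes)
    neg = float("-inf")
    best = [[neg] * (m + 1) for _ in range(n + 1)]
    back = [[None] * (m + 1) for _ in range(n + 1)]
    best[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(m + 1):
            for dl in (1, 2):          # letters consumed by this chunk
                for dp in (0, 1, 2):   # phonemes consumed by this chunk
                    if i - dl < 0 or j - dp < 0 or best[i - dl][j - dp] == neg:
                        continue
                    key = (tuple(letters[i - dl:i]), tuple(phonemes[j - dp:j]))
                    s = best[i - dl][j - dp] + math.log(prob.get(key, floor))
                    if s > best[i][j]:
                        best[i][j], back[i][j] = s, (dl, dp)
    if best[n][m] == neg:
        return None
    # Trace back through the chosen chunk sizes.
    chunks, i, j = [], n, m
    while i > 0:
        dl, dp = back[i][j]
        chunks.append((tuple(letters[i - dl:i]), tuple(phonemes[j - dp:j])))
        i, j = i - dl, j - dp
    return chunks[::-1]
```

With suitable parameters, phoenix [ f i n I k s ] comes out as ph-[f], oe-[i], n-[n], i-[I], x-[k s], covering both the double-letter and the double-phoneme case without a hand-written merge list.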

Prediction problem • Should the prediction model generate phonemes from one or two letters? • gash [ g æ ʃ ] vs. gasholder [ g æ s h o l d ə r ]: the letters "sh" form a single unit in the first word but two separate units in the second.

Letter chunking • A bigram letter-chunking model automatically discovers double letters. Ex. longs

System overview • Alignment process • Prediction process

Phoneme prediction • Once the training examples are aligned, we need a phoneme prediction model. • “Classification task” or “sequence prediction”?

Instance-based learning • Store the training examples. • The predicted class is assigned by searching for the “most similar” training instance. • Similarity functions: • Hamming distance, Euclidean distance, etc.
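
Instance-based prediction with Hamming distance can be sketched as follows. This is a minimal sketch, assuming each instance is a letter with one letter of context on each side and `#` as padding; the window size, the padding symbol, and the unweighted distance are illustrative assumptions, not the configuration used in the talk.

```python
def hamming(a, b):
    """Number of positions at which two equal-length feature tuples differ."""
    return sum(x != y for x, y in zip(a, b))

def windows(word, size=1, pad="#"):
    """One feature tuple per letter: the letter plus `size` neighbours each side."""
    padded = pad * size + word + pad * size
    return [tuple(padded[i:i + 2 * size + 1]) for i in range(len(word))]

def predict_1nn(instance, memory):
    """Return the label of the stored example closest to `instance`."""
    _, label = min(memory, key=lambda example: hamming(instance, example[0]))
    return label

# Memory built from one aligned training word: band [ b æ n d ].
memory = list(zip(windows("band"), ["b", "æ", "n", "d"]))
guess = predict_1nn(windows("hand")[1], memory)  # the letter "a" in "hand"
```

Each letter is classified on its own here, which is why a separate sequence model is still useful afterwards.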

Basic HMMs • A basic sequence-based prediction method. • In L2P, • letters are observations • phonemes are states • Output phoneme sequences depend on both emission and transition probabilities.

Applying HMM • Use instance-based learning to produce, for each letter_i, a list of candidate phones with confidence values conf(phone_i); these act as the emission probabilities. • Use a language model over the phoneme sequences in the training data to obtain the transition probabilities P(phone_i | phone_{i-1}, …, phone_{i-n}).
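
Combining the two scores can be sketched with a small Viterbi decoder. This is a minimal sketch, assuming a bigram phoneme model only (n = 1), a start symbol `<s>`, and a floor probability for unseen transitions. All numbers in the example are invented for illustration and are not the values from the talk.

```python
import math

def viterbi(candidates, trans, start="<s>", floor=1e-6):
    """candidates: one dict {phone: confidence} per letter (or letter chunk).
    trans: dict {(previous_phone, phone): probability}.
    Returns the best phone sequence and its probability."""
    # paths[phone] = (log-score, sequence) of the best path ending in phone
    paths = {start: (0.0, [])}
    for cands in candidates:
        new_paths = {}
        for phone, conf in cands.items():
            best = None
            for prev, (score, seq) in paths.items():
                s = score + math.log(trans.get((prev, phone), floor)) + math.log(conf)
                if best is None or s > best[0]:
                    best = (s, seq + [phone])
            new_paths[phone] = best
        paths = new_paths
    score, seq = max(paths.values(), key=lambda item: item[0])
    return seq, math.exp(score)

# "buried" chunked as b-u-r-ie-d; the local classifier slightly prefers [aI].
candidates = [{"b": 1.0}, {"E": 1.0}, {"r": 1.0}, {"aI": 0.6, "I": 0.4}, {"d": 1.0}]
trans = {("<s>", "b"): 1.0, ("b", "E"): 1.0, ("E", "r"): 1.0,
         ("r", "aI"): 0.1, ("r", "I"): 0.5, ("aI", "d"): 1.0, ("I", "d"): 1.0}
sequence, probability = viterbi(candidates, trans)
```

In this toy setting the transition model overrides the locally preferred [aI] and the decoder selects [ b E r I d ].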

Visualization • buried -> [ b E r aI d ] = 2.38 × 10^-8 • buried -> [ b E r I d ] = 2.23 × 10^-6

Evaluation • Data sets • English: CMUDict (112K), Celex (65K). • Dutch: Celex (116K). • German: Celex (49K). • French: Brulex (27K). • The classifier is the IB1 algorithm as implemented in the TiMBL package (Daelemans et al., 2004). • Results are reported as word accuracy, based on 10-fold cross-validation.
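
Word accuracy counts a word as correct only when its entire phoneme sequence matches the reference. A minimal sketch, assuming predictions and references are parallel lists of phoneme lists (the function name is hypothetical):

```python
def word_accuracy(predicted, gold):
    """Fraction of words whose predicted phoneme sequence matches exactly."""
    if len(predicted) != len(gold) or not gold:
        raise ValueError("predicted and gold must be non-empty and equal in length")
    correct = sum(list(p) == list(g) for p, g in zip(predicted, gold))
    return correct / len(gold)

accuracy = word_accuracy(
    [["b", "æ", "n", "d"], ["k", "I", "n"]],
    [["b", "æ", "n", "d"], ["k", "I", "ŋ"]],
)
```

A single wrong phoneme makes the whole word count as an error, so word accuracy is a stricter measure than per-phoneme accuracy.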

Messages • Many-to-many alignments show significant improvements over traditional one-to-one alignments. • The HMM-like approach helps when a local classifier has difficulty predicting phonemes.

Criticism • Several models chained together • Alignment, chunking, prediction, and HMM. • Error propagation • Errors pass from one model to the next and are unlikely to be corrected later. • Can we combine the models and optimize them at once? Or at least allow the system to correct past errors?

On-going work Discriminative approach for letter-to-phoneme conversion

Online discriminative learning • Let x be an input word and y an output phoneme sequence. • A feature vector represents features describing x and y. • A weight vector holds one weight per feature.

Online training algorithm • Initially, • For k iterations • For all letter-phoneme sequence pairs (x,y) • update weights according to and
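
One common instance of this training loop is the perceptron update, sketched below. This is a minimal sketch under loud assumptions: weights start at zero, features are simple counts of (letter, phoneme) pairs over a one-to-one aligned sequence, prediction is a per-letter argmax, and the update adds the features of the correct output and subtracts those of the predicted output. The update rule used in the talk may differ.

```python
from collections import Counter

def features(letters, phones):
    """Assumed feature map: counts of (letter, phoneme) pairs."""
    return Counter(zip(letters, phones))

def predict(letters, weights, inventory):
    """Per-letter argmax; valid because the assumed features decompose by letter."""
    return [max(inventory, key=lambda p: weights.get((l, p), 0.0)) for l in letters]

def train(data, inventory, k=5):
    weights = {}  # assumption: all weights start at zero
    for _ in range(k):
        for letters, gold in data:
            guess = predict(letters, weights, inventory)
            if guess != list(gold):
                # Perceptron update: reward the correct output, penalize the guess.
                for f, c in features(letters, gold).items():
                    weights[f] = weights.get(f, 0.0) + c
                for f, c in features(letters, guess).items():
                    weights[f] = weights.get(f, 0.0) - c
    return weights

inventory = ["b", "æ", "n", "d"]
data = [(list("band"), ["b", "æ", "n", "d"]), (list("bad"), ["b", "æ", "d"])]
weights = train(data, inventory)
```

Because the weights are updated after every example, a mistake made early in training can be corrected by later updates.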