Towards a Synopsis Warehouse

450 likes | 604 Vues

Towards a Synopsis Warehouse. Peter J. Haas IBM Almaden Research Center San Jose, CA. Acknowledgements:. Kevin Beyer Paul Brown Rainer Gemulla (TU Dresden) Wolfgang Lehner (TU Dresden) Berthold Reinwald Yannis Sismanis. Search. Business Intelligence. Enterprise Repository. Content

Towards a Synopsis Warehouse

E N D

Presentation Transcript

Towards a Synopsis Warehouse Peter J. Haas IBM Almaden Research Center San Jose, CA

Acknowledgements: Kevin Beyer Paul Brown Rainer Gemulla (TU Dresden) Wolfgang Lehner (TU Dresden) Berthold Reinwald Yannis Sismanis

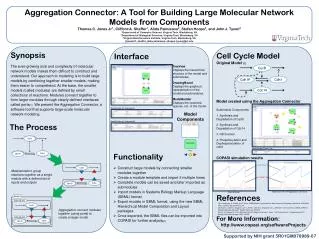

Search BusinessIntelligence Enterprise Repository Content MetadataBusiness objects Account Order Analyze, Integrate Crawl, ETL Customer ERP (SAP), CRM, WBIBPM, SCM ECM (reports, spreadsheets, Financial docs (XBRL)) Office documentsE-Mail, Product Manuals Structured Semi-Structured Unstructured Crawlable/deep Web Company Data Syndicated Data Provider Information Discovery for the Enterprise Query: “Explain the product movement, buyer behavior, maximize the ROI on my product campaigns.” Query: “The sales team is visiting company XYZ next week. What do they need to know about XYZ?” Business-Object Discovery Data Analysis &Similarity

Motivation • Challenge: Scalability • Massive amounts of data at high speed • Batches and/or streams • Structured, semi-structured, unstructured data • Want quick approximate analyses • Automated data integration and schema discovery • “Business object” identification • Quick approximate answers to queries • Data browsing/auditing • Our approach: a warehouse of synopses

Full-Scale Warehouse Of Data Partitions Synop. Synop. Synop. Warehouse of Synopses S1,1 S1,2 Sn,m merge S*,* S1-2,3-7 etc A Synopsis Warehouse

Outline • Synopsis 1: Uniform samples • Background • Creating and combining partitions • Hybrid Bernoulli and Hybrid Reservoir algorithms • Updating partitions • Stable datasets: random pairing • Growing datasets: resizing algorithms • Synopsis 2: AKMV samples • Goal: estimating the number of distinct values • Our choice of synopsis and estimator • Relation to other work • Analysis based on theory of uniform order statistics

Synopsis 1: Uniform Samples • Advantages • Flexible, used to produce other synopses • Mandatory if future use unknown a priori • Used in many statistical and mining methods • Building block for complex schemes • Ex: stratified sampling • Design goals • True uniformity (same sample size = same probability) • Bounded memory (no unpleasant surprises) • Memory efficiency (keep sample full) • Support for compressed samples • Handle common case of few distinct values • 80% of 1000 customer datasets had < 4000 distinct values

Classical Uniform Methods • Bernoulli sampling • Bern(q) independently includes each element with prob = q • Random, uncontrollable sample size • Binomially distributed, Nq on average • Easy to merge: union of two Bern(q) samples is Bern(q) • Reservoir sampling • Creates uniform sample of fixed size k • Insert first k elements into sample • Then insert ith element with prob. pi = k / i • replace random victim • Vitter optimization: Simulate coin tosses • Variants to handle large disk-resident samples • Merging more complicated than Bernoulli

Drawback of Basic Methods • Neither method is very compact • Especially when few distinct values • E.g., DS = (<A,500>,<B,300>) • Stored as (A,A,…,A,B,B,…B) - 800 chars • Concise sampling (GM 98) • Compact: purge Bern(q) sample S if too large • Bern(q’/q) subsample of S Bern(q’) sample • Not uniform (rare items under-represented)

New Sampling Methods (ICDE ’06) • Two flavors: • Hybrid reservoir (HR) • Hybrid Bernoulli (HB) • Properties • Truly uniform • Bounded footprint at all times • Not as memory efficient as Concise Sampling • Will store exact distribution if possible • Samples stored in compressed form • Merging algorithms available

Basic Ideas: Sample Creation • Phase 1 • Start by storing 100% sample compactly • Termination in Phase 1 exact distribution • Abandon Phase 1 if footprint too big • Take subsample and expand • Fall back to reservoir(HR) or Bernoulli(HB) sampling (Phase 2) • HB can revert to reservoir sampling (Phase 3) • Compress sample upon termination • If Phase 2 termination • HR: uniform sample of size k • HB: uniform, (almost) Bernoulli sample • Stay within footprint at all times • Messy details

Basic Ideas: Merging • Both samples in Phase 2 (usual case) • Bernoulli: equalize q’s and take union • Take subsample to equalize q’s • Reservoir: take subsamples and merge • Random (hypergeometric) subsample size • Corner cases • One sample in Phase 1, etc. • See ICDE ’06 paper for details

HB versus HR • Advantages: • HB samples are cheaper to merge • Disadvantages: • HR sampling controls sample size better • Need to know partition size in advance • For subsampling during sample creation • Engineering approximation required

Speedup: HB Sampling You derive “speed-up” advantages from parallelism with up to about 100 partitions.

Speedup: HR Sampling Similar results to previous slide, but merging HR samples is more complex than HB samples.

Linear Scale-Up HB Sampling HR Sampling

Updates Within a Partition • Arbitrary inserts/deletes (updates trivial) • Previous goals still hold • True uniformity • Bounded sample size • Keep sample size close to upper bound • Also: minimize/avoid base-data access

New Algorithms (VLDB ’06+) • Stable datasets: Random pairing • Generalizes reservoir/stream sampling • Handles deletions • Avoids base-data accesses • Dataset insertions paired randomly with “uncompensated deletions” • Only requires counters (cg, cb) of “good” and “bad” UD’s • Insert into sample with probability cb / (cb + cg) • Extended sample-merging algorithm • Growing datasets: Resizing • Theorem: can’t avoid base-data access • Main ideas: • Temporarily convert to Bern(q): requires base-data access • Drift up to new size (stay within new footprint at all times) • Choose q optimally to reduce overall resizing time • Approximate and Monte Carlo methods

Multiset Sampling (PODS ’07) • Bernoulli samples over multisets (w. deletions) • When boundedness not an issue • Problem: how to handle deletions (pairing?) • Idea: maintain “tracking counter” • # inserts into DS since first insertion into sample (GM98) • Can exploit tracking counter • To estimate frequencies, sums, avgs • Unbiased (except avg) and low variance • To estimate # distinct values • Maintaining tracking counter • Subsampling: new algorithm • Merging: negative result • Synopsis-warehouse subsampling and merging still OK

Synopsis 2: AKMV Synopses (SIGMOD ’07) • Goal: Estimate # distinct values • Dataset similarity (Jaccard distance) • Key detection • Data cleansing • Within warehouse framework • Must handle multiset union, intersection, difference

KMV Synopsis • Used for a base partition • Synopsis: k smallest hashed values • vs bitmaps (e.g., logarithmic counting) • Need inclusion/exclusion to handle intersection • Less accuracy, poor scaling • vs sample counting • Random size K (between k/2 and k) • vs Bellman [DJMS02] • minHash for k independent hash functions • O(k) time per arriving value, vs O(log k) • Can view as uniform sample of DV’s

The Basic Estimator • Estimator: • U(k) = kth smallest hashed value • Properties (theory of uniform order statistics) • Normalized hashed values “look like” i.i.d. uniform[0,1] RVs • Large-D scenario (simpler formulas) • Theorem: U(k) approx.= sum of k i.i.d exp(D) random variables • Analysis coincides with [Cohen97] • Can use simpler formulas to choose synopsis size

Compound Partitions • Given a multiset expression E • In terms of base partitions A1,…,An • Union, intersection, multiset difference • Augmented KMV synopsis • Augment with counters • AKMV synopses are closed under multiset operations • Modified unbiased estimator for # DVs in E • See SIGMOD ’07 for details

Experimental Comparison 0.1 0.08 Absolute Relative Error 0.06 0.04 0.02 0 SDLogLog Unbiased Sample-Counting Unbiased-baseline

For More Details • "Toward automated large scale information integration and discovery." P. Brown, P. J. Haas, J. Myllymaki, H. Pirahesh, B. Reinwald, and Y. Sismanis. In Data Management in a Connected World, T. Härder and W. Lehner, eds. Springer-Verlag, 2005. • “Techniques for warehousing of sample data”. P. G. Brown and P. J. Haas. ICDE ‘06. • “A dip in the reservoir: maintaining sample synopses of evolving datasets”. R. Gemulla, W. Lehner, and P. J. Haas. VLDB ‘06. • “Maintaining Bernoulli samples over evolving multisets”. R. Gemulla, W. Lehner, and P. J. Haas. PODS ‘07. • “On Synopses for DistinctValue Estimation Under Multiset Operations” K. Beyer, P. J. Haas, B. Reinwald, Y. Sismanis, and R. Gemulla. SIGMOD 2007.

q = 1/3 + t 1 1 1 2 2 1 2 1 2 2 1 2 1 2 1 1 / / / / / / / / / / / / / / 3 3 3 3 3 3 3 3 3 3 3 3 3 3 + t 1 1 1 2 2 2 1 2 3 3 2 + t 3 1 2 3 30% 15% 15% 7% 15% 7% 7% 4% 3 1 2 Bernoulli Sampling • Bern(q) independently includes each element with probability q • Random, uncontrollable sample size • Easy to merge Bernoulli samples: union of 2 Bern(q) samp’s = Bern(q)

1 / 3 1 / 3 1 / 3 + t 1 2 3 2 1 3 3 + t + t 1 2 1 2 2 / 4 1 / 4 1 / 4 2 / 4 1 / 4 1 / 4 2 / 4 1 / 4 1 / 4 100% + t 1 2 4 2 1 4 3 2 4 2 3 4 1 3 4 3 1 4 4 33% 33% 33% 16 % 8 % 8 % 16 % 8 % 8 % 16 % 8 % 8 % + + t t + + t t 1 1 2 2 1 1 2 2 1 / 3 1 / 3 1 / 3 + t 1 2 3 2 1 3 3 Reservoir Sampling (Example) • Sample size M = 2

Concise-Sampling Example • Dataset • D = { a, a, a, b, b, b } • Footprint • F = one <value, #> pair • Three (possible) samples of size = 3 • S1 = { a, a, a }, S2 = { b, b, b }, S3 = { a, a, b }. • S1 = {<a,3>}, S2 = {<b,3>}, S3 = {<a,2>,<b,1>}. • Three samples should have with equal likelihood • But Prob(S1) = Prob(S2) > 0 and Prob(S3) = 0 • In general: • Concise sampling under-represents ‘rare’ population elements

+a +a {<a,2>} +a {<a,3>} +b +b {<a,3>,b} {<a,3>,<b,1>} {a,<b,2>} (subsample) +b {<a,3>,<b,2>} {a,b,b} (expand) {c,b,b} (reservoir sampling) … … +d {c,b,d} +a {a,a,a} {<a,3>} (compress) Hybrid Reservoir (HR) Sampling Ex: Sample capacity = two <v,#> pairs or three values Phase 1 (Maintain exact frequency distribution) +c Phase 2 (Reservoir sampling) done

Subsampling in HB Algorithm • Goal: find q such that P{|S| > nF} = p • Solve numerically: • Approximate solution (< 3% error):

Merging HB Samples • If both samples in Phase 2 (the usual case) • Choose q as before (w.r.t. |D1 U D2|) • Convert both samples to compressed Bern(q) [Use Bern(q’/q) trick as in Concise Sampling] • If union of compressed samples fits in memory then join and exitelse use reservoir sampling (unlikely) • See paper for remaining cases

Merging a Pair of HR Samples • If at least one sample in Phase 1 • Treat Phase-1 sample as “data stream” • Run HR sampling algorithm • If both samples in Phase 2 • Set k = min(|S1|, |S2|) • Select L elements from S1 and k – L from S2 • L has hypergeometric distribution on {0,1,…,k} • Distribution depends on |D1|, |D2| • Take (compressed) reservoir subsamples of S1, S2 • Join (compressed union) and exit

Generating Realizations of L L is a random variable with probability mass function P(l) = P{ L=l } given by: for l = 0, 1, …. k-1 • Simplest implementation • Compute P recursively • Use inversion method (probe cumulative distribution at each merge) • Optimizations when |D|’s and |S|’s unchanging • Use alias methods to generate L from cached distributions in O(1) time

Naïve/Prior Approaches Algorithm Technique Comments (RS with deletions) conduct deletions, continue with smaller sample unstable Naïve use insertions to immediately refill the sample not uniform RS with resampling let sample size decrease, but occasionally recompute expensive, unstable CAR(WOR) immediately sample from base data to refill the sample stable but expensive Bernoulli sampling with purging “coin flip” sampling with deletions, purge if too large inexpensive but unstable Passive sampling developed for data streams (sliding windows only) special case of our RP algorithm tailored for multiset populations Distinct-value sampling expensive, low space efficiency in our setting Modification of concise sampling Not uniform Counting samples

A Negative Result • Theorem • Any resizing algorithm MUST access base data • Example • data set • samples of size 2 • new data set • samples of size 3 Not uniform!

Resizing: Phase 1 Conversion to Bernoulli sample • Given q, randomly determine sample size • U = Binomial(|D|,q) • Reuse S to create Bernoulli sample • Subsample if U < |S| • Else sample additional tuples (base data access) • Choice of q • small less base data accesses • large more base data accesses

Resizing: Phase 2 Run Bernoulli sampling • Include new tuples with probability q • Delete from sample as necessary • Eventually reach new sample size • Revert to reservoir sampling • Choice of q • small long drift time • large short drift time

Choosing q (Inserts Only) • Expected Phase 1 (conversion) time • Expected Phase 2 (drifting) time • Choose q to minimize E[T1] + E[T2]

Resizing Behavior • Example (dependence on base-access cost): • resize by 30% if sampling fraction drops below 9% • dependent on costs of accessing base data Low costs Moderate costs High costs immediate resizing combined solution degenerates to Bernoulli sampling

Choosing q (w. Deletes) • Simple approach (insert prob. = p > 0.5) • Expected change in partition size (Phase 2) • (p)(1)+(1-p)(-1) = 2p-1 • So scale Phase 2 cost by 1/(2p-1) • More sophisticated approach • Hitting time of Markov chain to boundary • Stochastic approximation algorithm • Modified Kiefer-Wolfowitz

Estimating the DV Count • Exact computation via sorting • Usually infeasible • Sampling-based estimation • Very hard problem (need large samples) • Probabilistic counting schemes • Single-pass, bounded memory • Several flavors (mostly bit-vector synopses) • Linear counting (ASW87) • Logarithmic counting (FM85,WVT90,AMS, DF03) • Sample counting (ASW87,Gi01, BJKST02)

Intuition • Look at spacings • Example with k = 4 and D = 7: • E[V] 1 / D so that D 1 /E[V] • Estimate D as 1 / Avg(V1,…,Vk) • I.e., as k / Sum(V1,…,Vk) • I.e., as k / u(k) • Upward bias (Jensen’s inequality) so change k to k-1