HCI Course - Final Project

Lecturer: Dr. 連震杰 • Student ID: P78971252 • Student Name: 傅夢璇 • Mail address: mhfu@ismp.csie.ncku.edu.tw • Department: Information Engineering • Laboratory: ISMP Lab • Supervisor: Dr. 郭耀煌

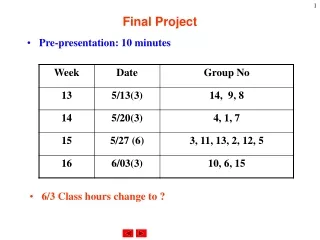

Final Project • Title: Real-time classification of evoked emotions using facial feature tracking and physiological responses • Authors: Jeremy N. Bailenson, Emmanuel D. Pontikakis, Iris B. Mauss, James J. Gross, Maria E. Jabon, Cendri A.C. Hutcherson, Clifford Nass, Oliver John • International Journal of Human-Computer Studies, Volume 66, Issue 5, May 2008, pp. 303-317 • Keywords: Facial tracking; Emotion; Computer vision

Abstract • The real-time models use videotapes of subjects' faces and physiological measurements to predict rated emotion • The data come from the real behavior of subjects watching emotional videos, not from actors posing facial expressions • The models predict both the emotion type (amusement or sadness) and its intensity level • Results showed better fits for emotion categories than for emotion intensity, for amusement ratings than for sadness ratings, and for person-specific models than for more general models

Introduction • Cameras in cell phones, webcams, and even automobiles constantly capture images of people's faces, often with the goal of using that facial information as a clue to the user's current state of mind • Many car companies are installing cameras in the dashboard with the goal of detecting angry, drowsy, or drunk drivers • The goals are to assist human-computer interfaces and to understand emotions from facial expressions

Related work • Psychologists assess facial expressions of emotion in at least three main ways (Rosenberg and Ekman, 2000) • 1st: naïve coders view images or videotapes and then make holistic judgments about the degree of emotion shown on the target faces • This approach is limited in that the coders may miss subtle facial movements, and in that the coding may be biased by idiosyncratic morphological features of various faces

Related work • 2nd: componential coding schemes, in which trained coders follow a highly regulated procedure to detect facial actions, such as the Facial Action Coding System (Ekman and Friesen, 1978) • Advantage: the richness of the resulting dataset • Disadvantage: frame-by-frame coding of the points is extremely laborious

Related work • 3rd: more direct measures of muscle movement obtained via facial electromyography (EMG), with electrodes attached to the skin of the face • This allows sensitive measurement of features, but placing the electrodes is difficult and wearing them is relatively constraining for subjects • This approach is also not helpful for coding archival footage

The approach (Part 1 - Actual facial emotion) • First, the stimuli used as input • Input: videotapes that people watch, chosen to elicit intense emotions • This gives better access to actual emotional behavior than studies that used deliberately posed faces • Automatic facial expressions appear to be more informative about underlying mental states than posed ones

The approach (Part 2 - Opposite emotions and intensity) • Second, the emotions were coded second by second on a linear scale for the oppositely valenced emotions of amusement and sadness • The learning algorithms are trained on both a binary dataset and a linear dataset spanning the full scale of emotional intensity

The approach (Part 3 - Three model types) • Hundreds of video frames from each person, rated individually for amusement and sadness, make it possible to create three model types • 1st is a "universal model", which predicts how amused any face is by using one set of subjects' faces as training data and another, independent set of subjects' faces as testing data • This model would be useful for HCI applications such as bank automated teller machines, traffic light cameras, and public computers with webcams

The approach (Part 3 - Three model types) • 2nd is an "idiosyncratic model", which predicts how amused or sad a person is by using training and testing data from the same subject for each model • This model is useful for HCI applications in which the same person repeatedly uses the same interface • For example, driving one's own car, using the same computer with a webcam, or any application with a camera in a private home

The approach (Part 3 - Three model types) • 3rd is a gender-specific model, trained and tested using only data from subjects of the same gender • This model is useful for HCI applications that target a specific gender • For example, make-up advertisements directed at female consumers, or home repair advertisements targeted at males
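
The three model types differ mainly in how the rated samples are divided into training and testing data. The sketch below illustrates that difference only; the record layout, the sample values, and the half-and-half split inside each subject are assumptions made for illustration, not details taken from the paper.

```python
from collections import defaultdict

# Hypothetical records: (subject_id, gender, feature_vector, rating).
# The layout and the values are illustrative assumptions.
records = [
    ("s1", "F", [0.1], 3), ("s1", "F", [0.2], 4),
    ("s2", "M", [0.3], 0), ("s2", "M", [0.4], 1),
    ("s3", "F", [0.5], 6), ("s3", "F", [0.6], 7),
    ("s4", "M", [0.7], 2), ("s4", "M", [0.8], 2),
]

def universal_split(rows, train_subjects):
    """Universal model: train on one set of subjects, test on a disjoint set."""
    train = [r for r in rows if r[0] in train_subjects]
    test = [r for r in rows if r[0] not in train_subjects]
    return train, test

def idiosyncratic_splits(rows):
    """Idiosyncratic model: train and test within each single subject.

    Assumption: the first half of a subject's samples trains the model
    and the second half tests it.
    """
    by_subject = defaultdict(list)
    for r in rows:
        by_subject[r[0]].append(r)
    splits = {}
    for subject_id, subject_rows in by_subject.items():
        half = len(subject_rows) // 2
        splits[subject_id] = (subject_rows[:half], subject_rows[half:])
    return splits

def gender_split(rows, gender, train_subjects):
    """Gender-specific model: a universal-style split restricted to one gender."""
    same_gender = [r for r in rows if r[1] == gender]
    return universal_split(same_gender, train_subjects)
```

For instance, `gender_split(records, "F", {"s1"})` trains on subject `s1` and tests on subject `s3`, the only other female subject in the toy data.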

The approach (Part 4 - Features) • Physiological responses • Cardiovascular activity • Electrodermal responding • Somatic activity • Facial features from a camera • Heart rate from the hands gripping the steering wheel

The approach (Part 5 - Real-time algorithm) • Computer vision algorithms detect facial features • Physiological measures are taken in real time • Applications can respond to a user's emotion to improve the interaction • For example, cars that seek to avoid accidents involving drowsy drivers, or advertisements that seek to match their content to the mood of a person walking by

The approach • Amusement and sadness, together with the physiological responses, are collected in order to sample both positive and negative emotions • Only two emotions are chosen, since increasing the number of emotions would come at the cost of reliability • The selected films induced dynamic changes in emotional state over the 9-min period, ranging from neutral to more intense emotional states, because different individuals responded to the films with different degrees of intensity

Data collection • Training data • The data came from 151 Stanford undergraduates who watched film clips pretested to elicit amusement and sadness; they were videotaped while watching, and their physiological responses were also assessed

Data collection • Laboratory session • The participants watched a 9-min film clip • The film was composed of an amusing, a neutral, a sad, and another neutral segment (each segment was approximately 2 min long) • From the larger dataset of 151 participants, 41 were randomly chosen to train and test the learning algorithms

Expert ratings of emotions • Using laboratory software, coders rated the amount of amusement and sadness displayed in each second of video • The scale was anchored at 0 (neutral) and 8 (strong laughter for amusement, strong sadness expression for sadness) • Average inter-rater reliabilities were satisfactory, with Cronbach's alphas = 0.89 (S.D. = 0.13) for amusement behavior and 0.79 (S.D. = 0.11) for sadness behavior
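
As a reminder of what the reported reliabilities measure, the sketch below computes Cronbach's alpha with the standard formula, alpha = k/(k-1) * (1 - sum of per-rater variances / variance of the summed ratings), treating each rater as an "item" and each rated second as a case. The function name and the toy ratings are hypothetical; the slides do not say how the authors computed the statistic.

```python
from statistics import variance

def cronbach_alpha(ratings_by_rater):
    """Cronbach's alpha for a list of equal-length rating lists (one per rater)."""
    k = len(ratings_by_rater)
    if k < 2:
        raise ValueError("need at least two raters")
    # Sum of each rater's own sample variance across the rated seconds.
    rater_variance_sum = sum(variance(r) for r in ratings_by_rater)
    # Sample variance of the per-second totals summed over raters.
    totals = [sum(per_second) for per_second in zip(*ratings_by_rater)]
    total_variance = variance(totals)
    return k / (k - 1) * (1 - rater_variance_sum / total_variance)

# Hypothetical ratings on the 0-8 scale from two raters over four seconds.
alpha = cronbach_alpha([[0, 1, 2, 3], [0, 1, 2, 4]])
```

Two raters who agree exactly give an alpha of 1; disagreement lowers it.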

Physiological measures • 15 physiological measures were monitored • Heart rate • Systolic blood pressure • Diastolic blood pressure • Mean arterial blood pressure • Pre-ejection period • Skin conductance level • Finger temperature • Finger pulse amplitude • Finger pulse transit time • Ear pulse transit time • Ear pulse amplitude • Composite of peripheral sympathetic activation • Composite cardiac activation • Somatic activity

System structure • The videos of the 41 participants were analyzed at a resolution of 20 frames per second • The level of amusement/sadness of every person for every second was rated from 0 (less amused/sad) to 8 (more amused/sad) • The goal is to predict, for every person and every individual second, the level of amusement or sadness
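
Because the video is tracked at 20 frames per second while the ratings are per second, the frame-level features have to be summarized once per second before they can be paired with a rating. The sketch below uses a plain mean over each block of 20 frames; that choice, and the function name, are assumptions for illustration, since the slides do not state which per-second statistics were used.

```python
FPS = 20  # frame rate stated on the slide

def per_second_means(frames, fps=FPS):
    """Average each feature over consecutive blocks of `fps` frames.

    frames: list of equal-length feature lists, one per video frame.
    A trailing partial second is dropped.
    """
    seconds = []
    for start in range(0, len(frames) - fps + 1, fps):
        block = frames[start:start + fps]
        seconds.append([sum(column) / fps for column in zip(*block)])
    return seconds
```

Each returned row can then be matched with the 0 to 8 rating for the same second.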

System structure • [Figure: system diagram showing inputs, processing, and outputs]

Measuring the facial expressions • The NEVEN Vision Facial Feature Tracker is used to measure the person's facial expression at every frame • 22 points are tracked on the face, grouped into four blocks: mouth, nose, eyes, and eyebrows

Chi-square values in amusement • Top 20 features in amusement analysis

Chi-square values in sadness • Top 20 features in sadness analysis
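
The two slides above rank features by chi-square value. As a rough illustration of how such a ranking can be produced, the sketch below computes the Pearson chi-square statistic between a discretized feature and the class labels, then keeps the top 20. The feature names and toy values are hypothetical, and how the authors discretized their features is not stated on the slides.

```python
from collections import Counter

def chi_square(feature_bins, labels):
    """Pearson chi-square statistic between a discretized feature and class labels."""
    n = len(labels)
    observed = Counter(zip(feature_bins, labels))
    bin_totals = Counter(feature_bins)
    label_totals = Counter(labels)
    stat = 0.0
    for b, b_count in bin_totals.items():
        for c, c_count in label_totals.items():
            expected = b_count * c_count / n
            # Counter returns 0 for (bin, label) pairs that never occur.
            stat += (observed[(b, c)] - expected) ** 2 / expected
    return stat

def rank_features(columns, labels, top_k=20):
    """columns: dict mapping feature name -> list of discretized values."""
    scores = {name: chi_square(values, labels) for name, values in columns.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:top_k]

# Hypothetical toy data: one feature that tracks the label and one that does not.
labels = [0, 0, 1, 1]
columns = {
    "mouth_width": ["lo", "lo", "hi", "hi"],
    "nose_x": ["lo", "hi", "lo", "hi"],
}
ranking = rank_features(columns, labels)
```

A feature whose bins line up with the labels scores high, while a feature that is independent of the labels scores zero.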

Predicting emotion intensity • Two-fold cross-validation was performed on each dataset using two non-overlapping sets of subjects • Separate tests were performed for sadness and for amusement • Three tests were run to predict the expert ratings: face video alone, physiological features alone, and both together
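
The important detail in this procedure is that the two folds contain different subjects, so a model is never tested on a person it was trained on. A minimal sketch of such a split follows; the function name, the random shuffle, and the seed are illustrative assumptions.

```python
import random

def two_fold_subject_splits(subject_ids, seed=0):
    """Yield (train_subjects, test_subjects) for two folds with disjoint subjects."""
    ids = sorted(set(subject_ids))
    random.Random(seed).shuffle(ids)
    half = len(ids) // 2
    fold_a, fold_b = set(ids[:half]), set(ids[half:])
    yield fold_b, fold_a  # train on fold B, test on fold A
    yield fold_a, fold_b  # train on fold A, test on fold B

# 41 subjects, as in the dataset described on the slides.
folds = list(two_fold_subject_splits(range(41)))
```

Every per-second sample from a subject then follows that subject into either the training or the testing side of a fold.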

Intensity predicting • The table demonstrates that predicting the intensity of amusement is easier than predicting that of sadness • The correlation coefficients of the sadness neural nets were consistently 20–40% lower than those for the amusement classifiers
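
The intensity models are compared here by correlation coefficient. Assuming this means the Pearson correlation between the predicted and the expert-rated intensity for each second, it can be computed as in the sketch below; the function name and the sample values are hypothetical.

```python
from math import sqrt

def pearson_r(predicted, actual):
    """Pearson correlation between predicted and rated intensities."""
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_a = sum(actual) / n
    covariance = sum((p - mean_p) * (a - mean_a) for p, a in zip(predicted, actual))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_a = sum((a - mean_a) ** 2 for a in actual)
    return covariance / sqrt(var_p * var_a)

# Hypothetical predictions against hypothetical 0-8 expert ratings.
r = pearson_r([0.5, 2.0, 3.5, 6.0], [0, 2, 4, 7])
```

A value near 1 means the predictions rise and fall with the expert ratings, even if their absolute scale differs.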

Emotion classification • Two classifiers are applied: a Support Vector Machine with a linear kernel, and LogitBoost with a decision stump weak classifier using 40 iterations (Freund and Schapire, 1996; Friedman et al., 2000) • Each dataset is processed using the WEKA machine learning software package (Witten and Frank, 2005) • The data are split into two non-overlapping datasets, and two-fold cross-validation is performed on all classifiers

Emotion classification • The classifiers are evaluated by precision, recall, and the F1 measure, which is defined as the harmonic mean of precision and recall • Consider a multi-class classification problem with classes Ai, i = 1, ..., M, where each class Ai has a total of Ni instances in the dataset • If the classifier correctly predicts Ci instances for Ai and predicts C'i instances to be in Ai that in fact belong to other classes (misclassifies them), then the measures are defined as precision_i = Ci / (Ci + C'i), recall_i = Ci / Ni, and F1_i = 2 · precision_i · recall_i / (precision_i + recall_i)
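
As a worked instance of these measures, the sketch below computes them for one class from the three counts named on the slide. It assumes the standard definitions: precision is the share of instances predicted as Ai that are correct, recall is the share of the Ni true instances that are found, and F1 is their harmonic mean. The function and parameter names are hypothetical.

```python
def class_metrics(correct, false_pos, total):
    """Precision, recall, and F1 for one class A_i.

    correct   = C_i  (instances of A_i predicted correctly)
    false_pos = C'_i (instances predicted as A_i that belong to other classes)
    total     = N_i  (instances of A_i in the dataset)
    """
    predicted = correct + false_pos
    precision = correct / predicted if predicted else 0.0
    recall = correct / total if total else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

# Hypothetical counts: 8 correct, 2 wrongly assigned to the class, 10 true instances.
precision, recall, f1 = class_metrics(8, 2, 10)
```

With these counts, precision and recall are both 8/10, so F1 is approximately 0.8 as well.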

Emotion classification • Discrete classification results for all-subject datasets

Experimental results – Subjects (1) • Linear classification results for the individual subjects

Experimental results – Subjects (2) • Discrete classification results for the individual subjects

Experimental results - Gender • Predicting continuous ratings within gender • The subjects were split into two non-overlapping datasets in order to perform two-fold cross validation on all classifiers

Classification Results by Gender (1) • Linear classification results for gender-specific datasets

Classification Results by Gender (2) • Discrete classification results for gender-specific datasets

Conclusion • A real-time system for emotion recognition is presented • Facial and physiological responses were collected from a relatively large number of subjects as they watched videos, in order to recognize amused or sad responses • Second-by-second ratings, by trained coders, of the intensity of expressed amusement and sadness were used to train the models

Discussions • Emotion recognition from facial expressions while watching videotapes is not strongly proven, because of limitations in the content of the videotapes • In addition, the accuracy of the devices that collect the physiological features should also be considered • The results for emotion intensity do not show a significant improvement