Parsing

Parsing SLP Chapter 13

Speech and Language Processing - Jurafsky and Martin

Outline
• Parsing with CFGs
• Bottom-up, top-down
• CKY parsing
• Mention of Earley and chart parsing

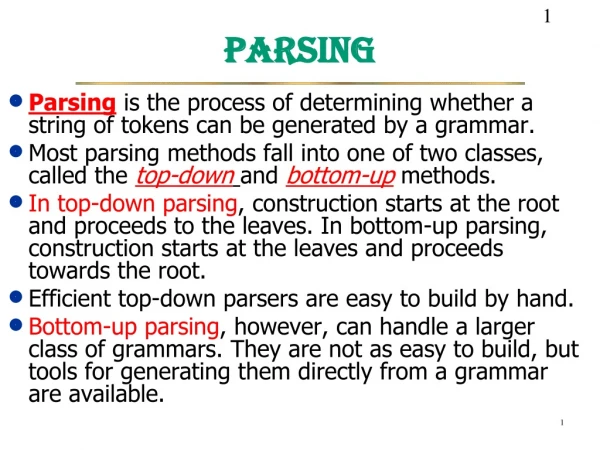

Parsing
• Parsing with CFGs refers to the task of assigning trees to input strings
• Specifically, trees that cover all and only the elements of the input and have an S at the top
• This chapter: find all possible trees
• Next chapter (14): choose the most probable one

Parsing
• Parsing involves a search

Top-Down Search
• We’re trying to find trees rooted with an S; start with the rules that give us an S.
• Then we can work our way down from there to the words.
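
The top-down idea can be sketched as a naive backtracking recognizer: start from S, expand the leftmost symbol with every applicable rule, and succeed only if the words are consumed exactly. The grammar below is a hypothetical toy grammar (not the L1 grammar from the slides), chosen to have no left recursion so that this naive search terminates; the function names are illustrative as well.

```python
# Hypothetical toy grammar; symbols that are not keys are treated as words.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["Det", "Noun"]],
    "VP": [["Verb", "NP"], ["Verb"]],
    "Det": [["the"], ["a"]],
    "Noun": [["dog"], ["cat"]],
    "Verb": [["sees"], ["sleeps"]],
}

def expand(symbols, words):
    """Return True if the symbol sequence can derive exactly the word list."""
    if not symbols:
        return not words  # success only if every word has been consumed
    first, rest = symbols[0], symbols[1:]
    if first not in GRAMMAR:
        # Terminal: it must match the next input word.
        return bool(words) and words[0] == first and expand(rest, words[1:])
    # Non-terminal: try each rule in turn, backtracking on failure.
    return any(expand(rhs + rest, words) for rhs in GRAMMAR[first])

def top_down_recognize(sentence):
    """Search for a derivation rooted in S that covers the whole sentence."""
    return expand(["S"], sentence.split())

print(top_down_recognize("the dog sees a cat"))
print(top_down_recognize("dog the sees"))
```

Note that the search proposes rules such as Det → the even when the next word is "a"; this is the "trees not consistent with the words" cost of going top-down.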

Top-Down Space

Bottom-Up Parsing
• We also want trees that cover the input words.
• Start with trees that link up with the words.
• Then work your way up from there to larger and larger trees.

Top-Down and Bottom-Up
• Top-down
  • Only searches for trees that can be S’s
  • But also suggests trees that are not consistent with any of the words
• Bottom-up
  • Only forms trees consistent with the words
  • But suggests trees that make no sense globally

Control
• Which node to try to expand next
• Which grammar rule to use to expand a node
• One approach: exhaustive search of the space of possibilities
  • Not feasible
  • Time is exponential in the number of non-terminals
  • LOTS of repeated work, as the same constituent is created over and over (shared sub-problems)

Dynamic Programming
• DP search methods fill tables with partial results and thereby
  • Avoid doing avoidable repeated work
  • Solve exponential problems in polynomial time (well, no, not really; we’ll return to this point)
  • Efficiently store ambiguous structures with shared sub-parts
• We’ll cover two approaches that roughly correspond to bottom-up and top-down approaches
  • CKY
  • Earley (we will mention this, not cover it in detail)

CKY Parsing
• Consider the rule A → B C
• If there is an A somewhere in the input, then there must be a B followed by a C in the input.
• If the A spans from i to j in the input, then there must be some k such that i < k < j
• I.e., the B splits from the C someplace.

Convert Grammar to CNF
• What if your grammar isn’t binary?
  • As in the case of the TreeBank grammar?
• Convert it to binary… any arbitrary CFG can be rewritten into Chomsky Normal Form automatically.
• The resulting grammar accepts (and rejects) the same set of strings as the original grammar.
• But the resulting derivations (trees) are different.
• We saw this in the last set of lecture notes.

Convert Grammar to CNF
• More specifically, we want our rules to be of the form
  A → B C
  or
  A → w
• That is, rules can expand to either two non-terminals or a single terminal.

Binarization Intuition
• Introduce new intermediate non-terminals into the grammar that distribute rules with length > 2 over several rules.
• So… S → A B C turns into S → X C and X → A B, where X is a symbol that doesn’t occur anywhere else in the grammar.
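
A minimal sketch of this binarization step, assuming rules are represented as (left-hand side, right-hand-side tuple) pairs. The function name `binarize` and the fresh-symbol scheme `X1`, `X2`, … are illustrative choices; the sketch simply assumes those names do not already occur in the grammar.

```python
def binarize(rules):
    """Split every rule with more than two right-hand symbols into binary rules.

    rules: list of (lhs, rhs) pairs, where rhs is a tuple of symbols.
    """
    out = []
    counter = 0
    for lhs, rhs in rules:
        rhs = tuple(rhs)
        while len(rhs) > 2:
            counter += 1
            new_sym = f"X{counter}"          # assumed unused elsewhere in the grammar
            out.append((new_sym, rhs[:2]))   # X -> A B
            rhs = (new_sym,) + rhs[2:]       # remaining rule becomes ... -> X C ...
        out.append((lhs, rhs))
    return out

print(binarize([("S", ("A", "B", "C"))]))
# [('X1', ('A', 'B')), ('S', ('X1', 'C'))]
```

Rules that are already unary or binary pass through unchanged; a rule with four right-hand symbols yields two fresh symbols, and so on.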

Converting Grammar to CNF
• Copy all conforming rules to the new grammar unchanged
• Convert terminals within rules to dummy non-terminals
• Convert unit productions
• Make all rules with non-terminals on the right binary
• In lecture: what these mean; apply to the example on the next two slides
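
The slides defer the details of these steps to lecture. As one possible reading of the "convert unit productions" step only, the sketch below replaces each chain of unit productions A → B → … with direct copies of the non-unit rules it leads to. The rule representation, the function name, and the tiny example grammar are all assumptions made for illustration.

```python
def remove_unit_productions(rules, nonterminals):
    """Eliminate rules of the form A -> B, where B is a non-terminal.

    rules: set of (lhs, rhs) pairs, with rhs a tuple of symbols.
    """
    def is_unit(rhs):
        return len(rhs) == 1 and rhs[0] in nonterminals

    # reach[A] = non-terminals reachable from A through unit productions alone
    reach = {a: {a} for a in nonterminals}
    changed = True
    while changed:
        changed = False
        for lhs, rhs in rules:
            if is_unit(rhs):
                for a in nonterminals:
                    if lhs in reach[a] and rhs[0] not in reach[a]:
                        reach[a].add(rhs[0])
                        changed = True

    # A inherits every non-unit rule of every non-terminal it can reach.
    return {
        (a, rhs)
        for a in nonterminals
        for lhs, rhs in rules
        if lhs in reach[a] and not is_unit(rhs)
    }

rules = {
    ("S", ("VP",)),            # unit production
    ("VP", ("Verb", "NP")),
    ("VP", ("Verb",)),         # unit production
    ("Verb", ("book",)),
    ("NP", ("flights",)),
}
for rule in sorted(remove_unit_productions(rules, {"S", "VP", "Verb", "NP"})):
    print(rule)
```

In this example S ends up with the rules S → Verb NP and S → book, so the language is unchanged but no rule rewrites one non-terminal as another.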

Sample L1 Grammar

CNF Conversion

CKY
• Build a table so that an A spanning from i to j in the input is placed in cell [i, j] in the table.
• E.g., a non-terminal spanning the entire string will sit in cell [0, n]
  • Hopefully an S

CKY
• If
  • there is an A spanning i, j in the input
  • A → B C is a rule in the grammar
• Then
  • there must be a B in [i, k] and a C in [k, j] for some i < k < j

CKY
• The loops fill the table a column at a time, from left to right, bottom to top.
• When we’re filling a cell, the parts needed to fill it are already in the table
  • to the left and below
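
The fill order described above can be sketched as a small CKY recognizer. The grammar here is a hypothetical toy grammar already in CNF (not the L1 grammar from the slides), and the names `BINARY`, `LEXICON`, and `cky_recognize` are illustrative.

```python
from collections import defaultdict

# Hypothetical CNF grammar: binary rules keyed by (B, C), lexical rules keyed by word.
BINARY = {("NP", "VP"): {"S"}, ("Det", "Noun"): {"NP"}, ("Verb", "NP"): {"VP"}}
LEXICON = {"the": {"Det"}, "a": {"Det"}, "dog": {"Noun"},
           "cat": {"Noun"}, "sees": {"Verb"}}

def cky_recognize(words, start="S"):
    """Return True if the start symbol ends up in cell [0, n]."""
    n = len(words)
    table = defaultdict(set)              # table[i, j]: non-terminals spanning words[i:j]
    for j in range(1, n + 1):             # columns, left to right
        table[j - 1, j] |= LEXICON.get(words[j - 1], set())
        for i in range(j - 2, -1, -1):    # rows, bottom to top
            for k in range(i + 1, j):     # split points with i < k < j
                for b in table[i, k]:     # cell to the left
                    for c in table[k, j]: # cell below
                        table[i, j] |= BINARY.get((b, c), set())
    return start in table[0, n]

print(cky_recognize("the dog sees a cat".split()))
print(cky_recognize("the dog sees".split()))
```

The three nested loops over j, i, and k are where the cubic dependence on sentence length comes from.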

CKY Algorithm

Example
• Go through full example in lecture

CKY Parsing
• Is that really a parser?
• So far, it is only a recognizer
  • Success? An S in cell [0, n]
• To turn it into a parser … see Lecture
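
The slides leave the recognizer-to-parser step to lecture. One common way to do it, sketched here as an assumption rather than as the lecture's method, is to store back-pointers in each cell recording which split point and which pair of children produced each non-terminal, then read trees off from cell [0, n]. The toy grammar and all names are hypothetical.

```python
# Hypothetical CNF grammar, as in a recognizer, but cells now keep back-pointers.
BINARY = {("NP", "VP"): ["S"], ("Det", "Noun"): ["NP"], ("Verb", "NP"): ["VP"]}
LEXICON = {"the": ["Det"], "dog": ["Noun"], "cat": ["Noun"], "sees": ["Verb"]}

def cky_parse(words, start="S"):
    """Return every tree rooted in `start` that spans the whole input."""
    n = len(words)
    back = {}  # back[i, j][A] = list of ways to build A over words[i:j]
    for j in range(1, n + 1):
        # Lexical cells point straight at the word.
        back[j - 1, j] = {a: [words[j - 1]] for a in LEXICON.get(words[j - 1], [])}
        for i in range(j - 2, -1, -1):
            cell = back[i, j] = {}
            for k in range(i + 1, j):
                for b in back[i, k]:
                    for c in back[k, j]:
                        for a in BINARY.get((b, c), []):
                            cell.setdefault(a, []).append((k, b, c))

    def trees(a, i, j):
        """Follow back-pointers to enumerate the trees for A over words[i:j]."""
        for entry in back[i, j].get(a, []):
            if isinstance(entry, str):
                yield (a, entry)
            else:
                k, b, c = entry
                for left in trees(b, i, k):
                    for right in trees(c, k, j):
                        yield (a, left, right)

    return list(trees(start, 0, n)) if n else []

for tree in cky_parse("the dog sees the cat".split()):
    print(tree)
```

The table itself stays polynomial in size; it is only the enumeration in `trees` that can blow up when a sentence is highly ambiguous.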

CKY Notes
• Since it’s bottom-up, CKY populates the table with a lot of worthless constituents.
• To avoid this we can switch to a top-down control strategy
• Or we can add some kind of filtering that blocks constituents where they cannot occur in a final analysis.

Dynamic Programming Parsing Methods
• The CKY (Cocke-Kasami-Younger) algorithm is based on bottom-up parsing and requires first normalizing the grammar.
• The Earley parser is based on top-down parsing and does not require normalizing the grammar, but is more complex.
• More generally, chart parsers retain completed phrases in a chart and can combine top-down and bottom-up search.

Conclusions
• Syntactic parse trees specify the syntactic structure of a sentence, which helps determine its meaning.
  • John ate the spaghetti with meatballs with chopsticks.
  • How did John eat the spaghetti? What did John eat?
• CFGs can be used to define the grammar of a natural language.
• Dynamic programming algorithms allow computing a single parse tree in cubic time or all parse trees in exponential time.
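
To get a feel for why enumerating all parse trees can take exponential time, it helps to count shapes alone: ignoring labels, the number of distinct binary-branching trees over n words is the Catalan number C(n-1). The short sketch below (the function name is illustrative) computes that count; a real grammar will license only some of these shapes, so this is an upper bound on tree shapes, not a count of actual parses.

```python
from math import comb

def binary_bracketings(n_words):
    """Number of distinct binary tree shapes over n_words leaves (Catalan C_{n-1})."""
    n = n_words - 1
    return comb(2 * n, n) // (n + 1)

for n_words in (2, 3, 4, 10, 20):
    print(n_words, binary_bracketings(n_words))
```

At 20 words there are already more than a billion possible shapes, which is why the chart stores shared sub-parts instead of listing trees one by one.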