Algorithms and Software for Large-Scale Nonlinear Optimization

This presentation covers algorithms and software for large-scale nonlinear optimization, based on two projects presented by Richard Waltz of Northwestern University: large-scale active-set methods, and adaptive barrier updates for interior-point methods. It first reviews the limitations of current active-set methods, namely Successive Linear Programming (SLP), Successively Linearly Constrained (SLC) methods, and Sequential Quadratic Programming (SQP), which include slow convergence and difficulty scaling up. It then outlines the SLP-EQP approach, with its strengths and open issues, and surveys strategies for updating the barrier parameter in interior-point methods.

Presentation Transcript

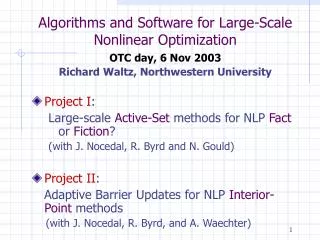

Algorithms and Software for Large-Scale Nonlinear Optimization • OTC day, 6 Nov 2003 • Richard Waltz, Northwestern University • Project I: Large-scale Active-Set methods for NLP: Fact or Fiction? (with J. Nocedal, R. Byrd, and N. Gould) • Project II: Adaptive Barrier Updates for NLP Interior-Point methods (with J. Nocedal, R. Byrd, and A. Waechter)

Current Active-Set Methods • Successive Linear Programming (SLP) • Inefficient, slow convergence • Successively Linearly Constrained (SLC) • e.g. MINOS • Difficulty scaling up • Sequential Quadratic Programming (SQP) • e.g. filterSQP, SNOPT • Very robust with fewer than a couple thousand degrees of freedom • For larger problems, QP subproblems may be too expensive

SLP-EQP Approach • Fletcher, Sainz de la Maza (1989) • Overview: 0. Given: x • Solve LP to get working set W. • Compute a step, d, by solving an equality-constrained QP using constraints in W. • Set: x_T = x + d.
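The overview above can be illustrated with a deliberately small sketch. It is not the authors' implementation: it assumes a separable quadratic objective, simple bound constraints only (so the trust-region LP has a closed-form solution and no LP solver is needed), a diagonal Hessian, and a plain ratio test in place of a merit function. The function name `slp_eqp_bounds` and the parameters `delta` and `tol` are hypothetical.

```python
def slp_eqp_bounds(target, lower, upper, x, delta=0.4, tol=1e-10, max_iter=50):
    """Sketch of an SLP-EQP style loop (assumed simplification) for
    minimizing sum((x_i - target_i)**2) subject to lower <= x <= upper."""
    n = len(x)
    for _ in range(max_iter):
        grad = [2.0 * (x[i] - target[i]) for i in range(n)]

        # LP phase: minimize grad.d subject to the bounds and |d_i| <= delta.
        # The LP is separable here, so each d_i moves against the gradient
        # until it hits a bound or the trust region. A bound reached before
        # the trust region goes into the working set W.
        working = {}
        for i in range(n):
            if grad[i] > 0.0 and x[i] - lower[i] <= delta:
                working[i] = lower[i]
            elif grad[i] < 0.0 and upper[i] - x[i] <= delta:
                working[i] = upper[i]

        # EQP phase: hold the constraints in W as equalities and take a
        # Newton step on the remaining variables (the Hessian is 2*I here).
        d = []
        for i in range(n):
            if i in working:
                d.append(working[i] - x[i])
            else:
                d.append(-grad[i] / 2.0)

        # Ratio test (assumed safeguard): shorten d so the trial point
        # x_T = x + alpha*d stays inside the bounds.
        alpha = 1.0
        for i in range(n):
            if d[i] > 0.0:
                alpha = min(alpha, (upper[i] - x[i]) / d[i])
            elif d[i] < 0.0:
                alpha = min(alpha, (lower[i] - x[i]) / d[i])

        x_trial = [min(max(x[i] + alpha * d[i], lower[i]), upper[i])
                   for i in range(n)]
        step = max(abs(x_trial[i] - x[i]) for i in range(n))
        x = x_trial
        if step < tol:
            break
    return x


solution = slp_eqp_bounds([2.0, -1.0, 0.3], [0.0] * 3, [1.0] * 3, [0.5] * 3)
```

On this toy problem the first two variables end on their bounds and the third at its unconstrained minimizer. A general SLP-EQP code would replace the closed-form LP with a real LP solve over linearized constraints and would control the step with a trust region and merit function.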

SLP-EQP • Strengths: • Only solve LP and EQP subproblems • Early results very encouraging • Competitive with SQP, and able to solve problems with more degrees of freedom • But… • Not yet competitive with interior-point methods • Difficulties in warm starting LP subproblems • How to handle degeneracy? • Theory needs more development

Adaptive barrier updates NLP • Functions twice continuously differentiable

Adaptive barrier updates Solve a sequence of barrier subproblems • Approach solution to NLP as
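For reference, one common way to write the NLP and its barrier subproblem is given below. This is the standard textbook form, assumed here rather than taken from the slides; the symbols f, h, g, the slack vector s, the count m, and the barrier parameter μ are all assumptions.

$$
\min_{x} \; f(x) \quad \text{s.t.} \quad h(x) = 0, \quad g(x) \le 0
$$

$$
\min_{x,\,s} \; f(x) - \mu \sum_{i=1}^{m} \ln s_i \quad \text{s.t.} \quad h(x) = 0, \quad g(x) + s = 0
$$

In this form, each barrier subproblem is solved for a fixed μ > 0, and the subproblem solutions approach a solution of the NLP as μ → 0.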

Adaptive barrier updates (NLP) Overview of Barrier Strategies: • Fixed decrease with barrier stop test (e.g. KNITRO) • Centrality-based strategies (e.g. LOQO) • Probing strategies (e.g. Mehrotra PC)

Adaptive barrier updates (NLP) KNITRO • Conservative rule • Initially μ = 0.1 • Decrease μ linearly • Fast linear decrease near solution • Globally convergent • Robust, but trades off some efficiency • Initial point option
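A fixed-decrease strategy with a barrier stop test can be sketched on a one-variable toy problem: minimize (x + 1)^2 subject to x >= 0, whose solution is x = 0. This is an illustrative sketch, not KNITRO's code. The starting value 0.1 for the barrier parameter comes from the slide; the reduction factor 0.2, the stop tolerance tied to the barrier parameter, the 0.995 boundary fraction, and all function names are assumptions.

```python
def solve_barrier_subproblem(x, mu, max_newton=100):
    """Newton's method on phi(x) = (x + 1)**2 - mu*log(x), keeping x > 0."""
    for _ in range(max_newton):
        grad = 2.0 * (x + 1.0) - mu / x
        if abs(grad) <= mu:        # barrier stop test (assumed tolerance: mu)
            break
        hess = 2.0 + mu / (x * x)
        step = -grad / hess
        if x + step <= 0.0:        # fraction-to-the-boundary safeguard
            step = -0.995 * x
        x += step
    return x


def barrier_method(x0=1.0, mu0=0.1, factor=0.2, mu_min=1e-8):
    """Fixed linear decrease: solve each subproblem, then mu <- factor * mu."""
    x, mu, history = x0, mu0, []
    while mu > mu_min:
        x = solve_barrier_subproblem(x, mu)   # warm start from the last x
        history.append((mu, x))
        mu *= factor
    return x, history


x_final, history = barrier_method()
```

The rule is conservative in the sense that the barrier parameter never changes until the current subproblem passes its stop test, which is what makes a global convergence argument straightforward and also what can cost iterations.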

Adaptive barrier updates (NLP) • Develop a more flexible adaptive rule • Allow increases in barrier parameter! • θ: function of: • Spread of complementarity pairs • Recent steplengths • Ease of meeting a barrier stop test • Probing step (e.g. predictor step)
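As one illustration of a rule driven by the spread of complementarity pairs, the sketch below computes a barrier parameter from slack and multiplier values in the style of a centrality-based strategy. It is not the adaptive rule proposed in the talk, whose exact form is not given here. The function name `centrality_mu` and the constants 0.1, 0.05, and 2 are assumptions chosen for illustration, and all slacks and multipliers are assumed strictly positive.

```python
def centrality_mu(s, z):
    """Choose a barrier parameter from the complementarity products s_i * z_i.

    xi is the ratio of the smallest product to the average product, so
    xi = 1 means perfectly centered and xi near 0 means a wide spread.
    """
    products = [si * zi for si, zi in zip(s, z)]
    average = sum(products) / len(products)
    xi = min(products) / average
    # Poor centrality (small xi) gives a larger sigma, hence a larger mu.
    sigma = 0.1 * min(0.05 * (1.0 - xi) / xi, 2.0) ** 3
    return sigma * average


mu_spread = centrality_mu([1.0, 1.0], [1.0, 0.01])    # widely spread pairs
mu_centered = centrality_mu([1.0, 1.0], [1.0, 0.5])   # nearly centered pairs
```

Because the new value depends on the current complementarity products rather than on the previous barrier parameter, it can be larger than the previous value, which is the kind of increase a fixed-decrease rule never allows.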

Globally Convergent Framework • Official μ for global convergence (satisfies barrier stop test) • Trial μ for flexibility