MA2213 Lecture 8

MA2213 Lecture 8 Eigenvectors

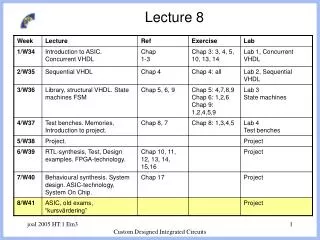

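Application of Eigenvectors (Vufoil 18, lecture 7): The Fibonacci sequence satisfies $f_{n+1} = f_n + f_{n-1}$, or equivalently $\begin{pmatrix} f_{n+1} \\ f_n \end{pmatrix} = \begin{pmatrix} 1 & 1 \\ 1 & 0 \end{pmatrix} \begin{pmatrix} f_n \\ f_{n-1} \end{pmatrix}$.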
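Here is a minimal Python sketch of this application. It assumes the usual starting values $f_0 = 0$, $f_1 = 1$ and the matrix form $F = \begin{pmatrix}1 & 1\\ 1 & 0\end{pmatrix}$ of the recurrence $f_{n+1} = f_n + f_{n-1}$. Repeated multiplication by $F$ generates the sequence, and the ratios $f_{n+1}/f_n$ approach the largest eigenvalue $(1+\sqrt{5})/2$ of $F$. The helper names are our own.

```python
import math

# Assumed matrix form of f_{n+1} = f_n + f_{n-1}:
# [f_{n+1}, f_n]^T = F [f_n, f_{n-1}]^T
F = [[1, 1],
     [1, 0]]

def mat_vec(A, x):
    """Multiply a 2x2 matrix by a 2-vector."""
    return [A[0][0] * x[0] + A[0][1] * x[1],
            A[1][0] * x[0] + A[1][1] * x[1]]

def fibonacci(n):
    """Return [f_0, ..., f_n] (f_0 = 0, f_1 = 1), generated by repeated products with F."""
    seq = [0, 1]
    state = [1, 0]                     # [f_1, f_0]
    for _ in range(n - 1):
        state = mat_vec(F, state)      # now [f_{k+1}, f_k]
        seq.append(state[0])
    return seq[: n + 1]

golden = (1 + math.sqrt(5)) / 2        # largest eigenvalue of F
fib = fibonacci(30)
ratios = [fib[k + 1] / fib[k] for k in range(1, 30)]
print(fib[:10])                        # [0, 1, 1, 2, 3, 5, 8, 13, 21, 34]
print(abs(ratios[-1] - golden))        # tiny: the ratio approaches the golden ratio
```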

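Another Biomathematics Application: Leonardo da Pisa, better known as Fibonacci, invented his famous sequence to compute the reproductive success of rabbits.* Similar sequences describe the frequencies, in males and in females, of a sex-linked gene, that is, a gene (with 2 alleles) carried on the X chromosome.** If $x_n$ and $y_n$ denote the frequencies of an allele in the males and in the females of generation $n$, then $x_{n+1} = y_n$ and $y_{n+1} = \tfrac{1}{2}(x_n + y_n)$, so $y_{n+1} = \tfrac{1}{2}(y_n + y_{n-1})$. The solution has the form $y_n = a + b\left(-\tfrac{1}{2}\right)^n$, where the constants $a$ and $b$ are determined by the initial frequencies. (*page i; **pages 10-12 in The Theory of Evolution and Dynamical Systems, J. Hofbauer and K. Sigmund, 1984.)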
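The following small simulation rests on an assumption that is standard for X-linked genes, stated here rather than taken from the slide. Males inherit their single X chromosome from their mothers, while females inherit one X from each parent. So with $x_n$ and $y_n$ the allele frequencies in males and females, $x_{n+1} = y_n$ and $y_{n+1} = (x_n + y_n)/2$. The update matrix $\begin{pmatrix}0 & 1\\ 1/2 & 1/2\end{pmatrix}$ has eigenvalues $1$ and $-1/2$. As a result, the male-female difference halves and changes sign each generation, while both frequencies approach $(x_0 + 2y_0)/3$. The starting frequencies are hypothetical.

```python
from fractions import Fraction

def next_generation(x, y):
    """One generation of the assumed X-linked model.

    x: allele frequency in males, y: allele frequency in females.
    Sons inherit their X from the mother; daughters get one X from each parent.
    """
    return y, (x + y) / 2

x, y = Fraction(1), Fraction(0)   # hypothetical start: allele present only in males
limit = (x + 2 * y) / 3           # x + 2y is unchanged by every generation
history = [(x, y)]
for _ in range(10):
    x, y = next_generation(x, y)
    history.append((x, y))

# Eigenvalue -1/2: the male-female difference halves and flips sign each generation.
diffs = [a - b for a, b in history]
print([str(d) for d in diffs[:4]])     # ['1', '-1/2', '1/4', '-1/8']
print(float(x), float(y), float(limit))
```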

Eigenvector Problem (pages 333-351): Recall that if $A$ is a square matrix, then a nonzero vector $v$ is an eigenvector corresponding to the eigenvalue $\lambda$ if $Av = \lambda v$. Eigenvectors and eigenvalues arise in biomathematics, where they describe growth and population genetics. They arise in the numerical solution of linear equations, because they determine convergence properties. They arise in physical problems, especially those that involve vibrations, in which eigenvalues are related to vibration frequencies.

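Example 7.2.1 (pages 333-334): For the matrix $A$ of this example, the eigenvalue-eigenvector pairs are $(\lambda_1, v_1)$ and $(\lambda_2, v_2)$. We observe that every (column) vector $x$ can be written as $x = c_1 v_1 + c_2 v_2$, where the scalars $c_1$ and $c_2$ are determined by $x$.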

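Example 7.2.1 (pages 333-334): Writing $x = c_1 v_1 + c_2 v_2$, and since $x \mapsto Ax$ is a linear transformation and $v_1$, $v_2$ are eigenvectors, we get $Ax = c_1 A v_1 + c_2 A v_2 = c_1 \lambda_1 v_1 + c_2 \lambda_2 v_2$. We can repeat this process to obtain $A^k x = c_1 \lambda_1^k v_1 + c_2 \lambda_2^k v_2$. Question: What happens as $k \to \infty$?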
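The computation above can be checked numerically. This Python sketch uses a hypothetical symmetric matrix $A = \begin{pmatrix}2 & 1\\ 1 & 2\end{pmatrix}$ (not the matrix of Example 7.2.1), with eigenpairs $(3, (1,1)^T)$ and $(1, (1,-1)^T)$. Once $x = c_1 v_1 + c_2 v_2$ is known, $A^k x = c_1 3^k v_1 + c_2 v_2$ needs no matrix multiplications at all.

```python
def mat_vec(A, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

A = [[2, 1],
     [1, 2]]                      # hypothetical example matrix
lam1, v1 = 3, [1, 1]              # its eigenpairs
lam2, v2 = 1, [1, -1]

def power_times(A, x, k):
    """Compute A^k x by k repeated matrix-vector products."""
    for _ in range(k):
        x = mat_vec(A, x)
    return x

def power_via_eigen(x, k):
    """Compute A^k x from the expansion x = c1*v1 + c2*v2."""
    # v1 and v2 are orthogonal with v.v = 2, so c_i = (x . v_i) / 2.
    c1 = sum(a * b for a, b in zip(x, v1)) / 2
    c2 = sum(a * b for a, b in zip(x, v2)) / 2
    return [c1 * lam1**k * p + c2 * lam2**k * q for p, q in zip(v1, v2)]

x = [5, -1]                        # here c1 = 2, c2 = 3
print(power_times(A, x, 10))       # [118101, 118095]
print(power_via_eigen(x, 10))      # [118101.0, 118095.0]
```

As $k$ grows, the $3^k$ term dominates, so $A^k x / 3^k \to c_1 v_1$.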

Example 7.2.1 (pages 333-334): General Principle: If a vector $v$ can be expressed as a linear combination of eigenvectors of a matrix $A$, then it is very easy to compute $Av$. It is possible to express every vector as a linear combination of eigenvectors of an $n$ by $n$ matrix $A$ iff either of the following equivalent conditions is satisfied: (i) there exists a basis consisting of eigenvectors of $A$; (ii) the sum of the dimensions of the eigenspaces of $A$ equals $n$. Question: Does this condition hold for $A$? Question: What special form does this matrix have?

Example 7.2.1 (pages 333-334): The characteristic polynomial of $A$ is $(\lambda - 5)^2$, so 5 is the (only) eigenvalue, and it has algebraic multiplicity 2. However, the eigenspace for the eigenvalue 5 has dimension 1, so the eigenvalue 5 is said to have geometric multiplicity 1. Question: What are the algebraic and geometric multiplicities in Example 7.2.7?
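To see the gap between the two multiplicities, here is a Python check on the hypothetical matrix $J = \begin{pmatrix}5 & 1\\ 0 & 5\end{pmatrix}$. This is an assumed example with characteristic polynomial $(\lambda - 5)^2$; the slide's own matrix is not reproduced. For a 2 by 2 matrix the characteristic polynomial is $\lambda^2 - (\operatorname{tr} J)\lambda + \det J$, and the geometric multiplicity of 5 is $2 - \operatorname{rank}(J - 5I)$.

```python
def char_poly_2x2(M):
    """Coefficients [1, -trace, det] of det(lambda*I - M) for a 2x2 matrix."""
    tr = M[0][0] + M[1][1]
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    return [1, -tr, det]

def rank_2x2(M):
    """Rank of a 2x2 matrix with exact (integer) entries."""
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    if det != 0:
        return 2
    return 1 if any(e != 0 for row in M for e in row) else 0

J = [[5, 1],
     [0, 5]]                      # hypothetical Jordan block
lam = 5
print(char_poly_2x2(J))           # [1, -10, 25], i.e. (lambda - 5)^2
shifted = [[J[i][j] - (lam if i == j else 0) for j in range(2)] for i in range(2)]
geometric = 2 - rank_2x2(shifted) # dimension of the eigenspace for lambda = 5
print("algebraic multiplicity 2, geometric multiplicity", geometric)   # ... 1
```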

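Characteristic Polynomials (pp. 335-337), Example 7.2.2 (p. 335): The eigenvalues in Example 7.2.1 are the roots of the characteristic polynomial $\det(A - \lambda I)$ of the matrix, and the corresponding eigenvectors are the nonzero solutions $v$ of $(A - \lambda I)v = 0$. Question: What is the characteristic equation for the matrix of Example 7.2.1?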
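For a 2 by 2 matrix the characteristic polynomial is $\lambda^2 - (\operatorname{tr}A)\lambda + \det A$. The eigenvalues therefore follow from the quadratic formula, and an eigenvector can be read off from one row of $(A - \lambda I)v = 0$. The Python sketch below uses a hypothetical test matrix, not the one from Example 7.2.1. It uses `cmath` so that complex eigenvalues (for example, those of a rotation) are handled too.

```python
import cmath

def eig_2x2(A):
    """Eigenvalue-eigenvector pairs of a 2x2 matrix via its characteristic polynomial
    lambda^2 - tr(A) lambda + det(A); cmath allows complex eigenvalues."""
    (a, b), (c, d) = A
    tr, det = a + d, a * d - b * c
    disc = cmath.sqrt(tr * tr - 4 * det)
    pairs = []
    for lam in ((tr + disc) / 2, (tr - disc) / 2):
        # A nonzero solution v of (A - lam I) v = 0.
        if b != 0:
            v = (b, lam - a)
        elif c != 0:
            v = (lam - d, c)
        else:                          # A is diagonal: eigenvectors are unit vectors
            v = (1, 0) if abs(lam - a) < 1e-12 else (0, 1)
        pairs.append((lam, v))
    return pairs

A = [[4, 1],
     [2, 3]]                           # hypothetical example with eigenvalues 5 and 2
for lam, v in eig_2x2(A):
    Av = [A[i][0] * v[0] + A[i][1] * v[1] for i in range(2)]
    print(lam, v, max(abs(Av[i] - lam * v[i]) for i in range(2)))   # residual 0
```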

Eigenvalues of Symmetric Matrices: The following real symmetric matrices, which we studied earlier, have real eigenvalues, and their eigenvectors corresponding to distinct eigenvalues are orthogonal. Question: What are the eigenvalues of these matrices? Question: What are the corresponding eigenvectors? Question: Compute their scalar products.

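Eigenvalues of Symmetric Matrices: Theorem 1. All eigenvalues of real symmetric matrices are real valued. Proof: For a matrix $M$ with complex (or real) entries, let $\bar{M}$ denote the matrix whose entries are the complex conjugates of the entries of $M$. Question: Prove that $M$ is real (all entries are real) iff $\bar{M} = M$. Question: Prove that $\overline{MN} = \bar{M}\,\bar{N}$. Assume that $Av = \lambda v$ with $v \neq 0$. Since $\bar{A} = A$, conjugation gives $A\bar{v} = \bar{\lambda}\bar{v}$. Using $A^T = A$, observe that $\lambda\,\bar{v}^T v = \bar{v}^T A v = (A\bar{v})^T v = \bar{\lambda}\,\bar{v}^T v$. Therefore, since $\bar{v}^T v = \sum_i |v_i|^2 > 0$, we get $\lambda = \bar{\lambda}$, so $\lambda$ is real.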

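Eigenvalues of Symmetric Matrices: Theorem 2. Eigenvectors of a real symmetric matrix that correspond to distinct eigenvalues are orthogonal. Proof: Assume that $Av_1 = \lambda_1 v_1$ and $Av_2 = \lambda_2 v_2$ with $\lambda_1 \neq \lambda_2$. Then compute $\lambda_1 v_1^T v_2 = (Av_1)^T v_2 = v_1^T A^T v_2 = v_1^T A v_2 = \lambda_2 v_1^T v_2$. Observe that $(\lambda_1 - \lambda_2)\, v_1^T v_2 = 0$, so $v_1^T v_2 = 0$.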
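Theorem 2 can be checked on the symmetric matrix of Example 7.2.3 (the MATLAB example later in this lecture). The integer eigenvectors used here are our own rescaling of the columns of U in that MATLAB output, chosen so that all arithmetic is exact.

```python
A = [[-7, 13, -16],
     [13, -10, 13],
     [-16, 13, -7]]                    # symmetric matrix of Example 7.2.3

# Eigenpairs; each eigenvector is a rescaled column of U from the MATLAB output.
pairs = [(-36, [1, -1, 1]),
         (3, [1, 2, 1]),
         (9, [1, 0, -1])]

def mat_vec(M, x):
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

# A v = lambda v holds exactly in integer arithmetic.
for lam, v in pairs:
    assert mat_vec(A, v) == [lam * vi for vi in v]

# The eigenvalues are distinct, so Theorem 2 predicts zero scalar products.
for i in range(3):
    for j in range(i + 1, 3):
        print(pairs[i][0], pairs[j][0], dot(pairs[i][1], pairs[j][1]))   # last column: 0
```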

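Orthogonal Matrices: Definition. A square matrix $Q$ is orthogonal if $Q^T Q = I$. If $Q$ is orthogonal, then $\det(Q)^2 = \det(Q^T)\det(Q) = \det(I) = 1$. Therefore either $\det Q = 1$ or $\det Q = -1$, so $Q$ is nonsingular and has an inverse $Q^{-1}$. Hence $Q^T = Q^T Q Q^{-1} = Q^{-1}$, so also $Q Q^T = I$. Examples.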
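Here is a quick numerical illustration of the definition. It uses a rotation and a reflection as hypothetical examples, which are not necessarily the examples on the slide. Both satisfy $Q^TQ = I$, and their determinants are $+1$ and $-1$ respectively. The angle is arbitrary.

```python
import math

def transpose(M):
    return [list(col) for col in zip(*M)]

def mat_mul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def is_orthogonal(Q, tol=1e-12):
    """Check Q^T Q = I up to rounding error."""
    P = mat_mul(transpose(Q), Q)
    n = len(Q)
    return all(abs(P[i][j] - (1.0 if i == j else 0.0)) <= tol
               for i in range(n) for j in range(n))

def det2(M):
    return M[0][0] * M[1][1] - M[0][1] * M[1][0]

t = 0.7                                               # arbitrary angle
rotation = [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]
reflection = [[math.cos(t), math.sin(t)], [math.sin(t), -math.cos(t)]]
for Q in (rotation, reflection):
    print(is_orthogonal(Q), round(det2(Q), 12))       # True 1.0, then True -1.0
```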

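Permutation Matrices: Definition. An $n$ by $n$ matrix $P$ is called a permutation matrix if there exists a function $\sigma : \{1, \ldots, n\} \to \{1, \ldots, n\}$ (called a permutation) that is 1-to-1 (and therefore onto) such that $P_{ij} = 1$ if $i = \sigma(j)$ and $P_{ij} = 0$ otherwise. Examples. Question: Why is every permutation matrix orthogonal?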
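The sketch below builds the permutation matrix of a permutation $\sigma$ and confirms that $P^TP = I$. It assumes the convention $P_{ij} = 1$ exactly when $i = \sigma(j)$, so column $j$ of $P$ is the unit vector $e_{\sigma(j)}$. It uses 0-based indices, as Python does. Each column contains a single 1, and because $\sigma$ is one-to-one, no two columns share a row. That is why the columns are orthonormal. The permutation itself is a hypothetical example.

```python
def permutation_matrix(sigma):
    """P[i][j] = 1 if i == sigma[j], else 0 (assumed convention, 0-based)."""
    n = len(sigma)
    assert sorted(sigma) == list(range(n)), "sigma must be a permutation"
    return [[1 if i == sigma[j] else 0 for j in range(n)] for i in range(n)]

def mat_mul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(M):
    return [list(col) for col in zip(*M)]

sigma = [2, 0, 3, 1]                 # hypothetical permutation of {0, 1, 2, 3}
P = permutation_matrix(sigma)
identity = [[int(i == j) for j in range(4)] for i in range(4)]
print(mat_mul(transpose(P), P) == identity)   # True
print(mat_mul(P, [[10], [20], [30], [40]]))   # entry j of x moves to position sigma[j]
```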

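Eigenvalues of Symmetric Matrices: Theorem 7.2.4 (pages 337-338). If $A$ is symmetric of order $n$, then there exists a set of $n$ eigenvalue-eigenvector pairs $\{\lambda_i, v^{(i)}\}$, $1 \le i \le n$, whose eigenvectors are orthonormal: $(v^{(i)})^T v^{(j)} = \delta_{ij}$. Proof: The proof uses Theorems 1 and 2 and a little linear algebra. Choose eigenvectors so that $(v^{(i)})^T v^{(j)} = \delta_{ij}$. Construct the matrices $U = [v^{(1)}, \ldots, v^{(n)}]$ and $D = \operatorname{diag}(\lambda_1, \ldots, \lambda_n)$, and observe that $U$ is orthogonal and $AU = UD$, so $U^T A U = D$, that is, $A = U D U^T$.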
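For the matrix of Example 7.2.3, the construction in the proof can be carried out directly. Normalize the mutually orthogonal eigenvectors, place them as the columns of $U$, and check that $U^TU = I$ and $U^TAU = D$. The eigenpairs are taken from the MATLAB output that follows, which we rescaled to exact integer vectors before normalizing.

```python
import math

A = [[-7, 13, -16], [13, -10, 13], [-16, 13, -7]]   # Example 7.2.3
eigvals = [-36, 3, 9]
eigvecs = [[1, -1, 1], [1, 2, 1], [1, 0, -1]]      # mutually orthogonal eigenvectors

def transpose(M):
    return [list(col) for col in zip(*M)]

def mat_mul(X, Y):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]

# Normalize so that v_i^T v_j = delta_ij, then use the vectors as the columns of U.
units = [[x / math.sqrt(sum(y * y for y in v)) for x in v] for v in eigvecs]
U = transpose(units)
UtU = mat_mul(transpose(U), U)                  # should be the identity
D = mat_mul(transpose(U), mat_mul(A, U))        # should be diag(-36, 3, 9)
for row in D:
    print([round(x, 10) for x in row])
```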

MATLAB EIG Command:

    >> help eig
     EIG    Eigenvalues and eigenvectors.
        E = EIG(X) is a vector containing the eigenvalues of a square
        matrix X.

        [V,D] = EIG(X) produces a diagonal matrix D of eigenvalues and a
        full matrix V whose columns are the corresponding eigenvectors so
        that X*V = V*D.

        [V,D] = EIG(X,'nobalance') performs the computation with balancing
        disabled, which sometimes gives more accurate results for certain
        problems with unusual scaling. If X is symmetric, EIG(X,'nobalance')
        is ignored since X is already balanced.

        E = EIG(A,B) is a vector containing the generalized eigenvalues
        of square matrices A and B.

        [V,D] = EIG(A,B) produces a diagonal matrix D of generalized
        eigenvalues and a full matrix V whose columns are the
        corresponding eigenvectors so that A*V = B*V*D.

        EIG(A,B,'chol') is the same as EIG(A,B) for symmetric A and symmetric
        positive definite B. It computes the generalized eigenvalues of A and B
        using the Cholesky factorization of B.
        EIG(A,B,'qz') ignores the symmetry of A and B and uses the QZ algorithm.
        In general, the two algorithms return the same result, however using the
        QZ algorithm may be more stable for certain problems.
        The flag is ignored when A and B are not symmetric.

        See also CONDEIG, EIGS.

MATLAB EIG Command, Example 7.2.3 (page 336):

    >> A = [-7 13 -16;13 -10 13;-16 13 -7]
    A =
        -7    13   -16
        13   -10    13
       -16    13    -7
    >> [U,D] = eig(A);
    >> U
    U =
       -0.5774    0.4082    0.7071
        0.5774    0.8165   -0.0000
       -0.5774    0.4082   -0.7071
    >> D
    D =
      -36.0000         0         0
             0    3.0000         0
             0         0    9.0000
    >> A*U
    ans =
       20.7846    1.2247    6.3640
      -20.7846    2.4495   -0.0000
       20.7846    1.2247   -6.3640
    >> U*D
    ans =
       20.7846    1.2247    6.3640
      -20.7846    2.4495   -0.0000
       20.7846    1.2247   -6.3640

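Positive Definite Symmetric Matrices: Theorem 4. A symmetric matrix $A$ is (semi) positive definite [lec4, slide 24] iff all of its eigenvalues are positive (nonnegative). Proof: Let $U$ and $D = \operatorname{diag}(\lambda_1, \ldots, \lambda_n)$ be the orthogonal and diagonal matrices on the previous page that satisfy $A = U D U^T$. Then for every $x$, $x^T A x = y^T D y = \sum_{i=1}^n \lambda_i y_i^2$, where $y = U^T x$. Since $U$ is nonsingular, $y$ ranges over all nonzero vectors as $x$ does. Therefore $A$ is (semi) positive definite iff $\sum_i \lambda_i y_i^2 > 0$ ($\ge 0$) for every $y \neq 0$. Clearly this condition holds iff $\lambda_i > 0$ ($\lambda_i \ge 0$) for every $i$.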
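Here is an illustration of Theorem 4 for 2 by 2 symmetric matrices $\begin{pmatrix}a & b\\ b & c\end{pmatrix}$, whose eigenvalues have the closed form $\frac{a+c}{2} \pm \sqrt{\left(\frac{a-c}{2}\right)^2 + b^2}$. The sketch compares this eigenvalue test with a brute-force sample of the quadratic form $x^TAx$ over unit vectors. The minimum of the quadratic form matches the smallest eigenvalue. The three test matrices are hypothetical examples.

```python
import math

def sym_eigvals_2x2(a, b, c):
    """Eigenvalues (smallest first) of the symmetric matrix [[a, b], [b, c]]."""
    mean, rad = (a + c) / 2, math.hypot((a - c) / 2, b)
    return mean - rad, mean + rad

def min_quadratic_form(a, b, c, samples=3600):
    """Smallest value of x^T A x over sampled unit vectors x = (cos t, sin t)."""
    best = math.inf
    for k in range(samples):
        t = math.pi * k / samples          # half a turn suffices: the form is even in x
        x, y = math.cos(t), math.sin(t)
        best = min(best, a * x * x + 2 * b * x * y + c * y * y)
    return best

for name, (a, b, c) in {"positive definite": (2, 1, 2),
                        "semidefinite": (1, 1, 1),
                        "indefinite": (1, 2, 1)}.items():
    lo, hi = sym_eigvals_2x2(a, b, c)
    print(name, (lo, hi), round(min_quadratic_form(a, b, c), 6))
```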

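Singular Value Decomposition: Theorem 3. If $A$ is a real $m$ by $n$ matrix, then there exist orthogonal matrices $U$ ($m$ by $m$) and $V$ ($n$ by $n$) such that $A = U S V^T$. Here $S$ is $m$ by $n$ and has the form $S_{ii} = \sigma_i$, $S_{ij} = 0$ for $i \neq j$, with $\sigma_1 \ge \sigma_2 \ge \cdots \ge 0$. Singular values = sqrt(eig($A^T A$)); that is, the singular values are the square roots of the eigenvalues of $A^T A$. Proof outline: Choose $U$ and $V$ so that $U^T A A^T U$ and $V^T A^T A V$ are diagonal. Then $S = U^T A V$ satisfies $S S^T = U^T A A^T U$ and $S^T S = V^T A^T A V$; try to finish.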

MATLAB SVD Command:

    >> help svd
     SVD    Singular value decomposition.
        [U,S,V] = SVD(X) produces a diagonal matrix S, of the same
        dimension as X and with nonnegative diagonal elements in
        decreasing order, and unitary matrices U and V so that
        X = U*S*V'.

        S = SVD(X) returns a vector containing the singular values.

        [U,S,V] = SVD(X,0) produces the "economy size"
        decomposition. If X is m-by-n with m > n, then only the
        first n columns of U are computed and S is n-by-n.

        See also SVDS, GSVD.

MATLAB SVD Command:

    >> M = [0 1; 0.5 0.5]
    M =
             0    1.0000
        0.5000    0.5000
    >> [U,S,V] = svd(M)
    U =
       -0.8507   -0.5257
       -0.5257    0.8507
    S =
        1.1441         0
             0    0.4370
    V =
       -0.2298    0.9732
       -0.9732   -0.2298
    >> U*S*V'
    ans =
        0.0000    1.0000
        0.5000    0.5000
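The relation "singular values = square roots of the eigenvalues of $M^TM$" can be checked against the MATLAB output above without calling any SVD routine. Here is a Python sketch for the same 2 by 2 matrix $M$, which uses the closed-form eigenvalues of the symmetric matrix $M^TM$.

```python
import math

M = [[0.0, 1.0],
     [0.5, 0.5]]

# Entries of the symmetric matrix M^T M = [[p, q], [q, r]].
p = M[0][0] ** 2 + M[1][0] ** 2
q = M[0][0] * M[0][1] + M[1][0] * M[1][1]
r = M[0][1] ** 2 + M[1][1] ** 2

# Eigenvalues of M^T M from its characteristic polynomial, largest first.
mean, rad = (p + r) / 2, math.hypot((p - r) / 2, q)
singular_values = [math.sqrt(mean + rad), math.sqrt(mean - rad)]
print([round(s, 4) for s in singular_values])   # [1.1441, 0.437]

# Sanity check: the product of the singular values equals |det M|.
det_M = M[0][0] * M[1][1] - M[0][1] * M[1][0]
print(singular_values[0] * singular_values[1], abs(det_M))
```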

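Square Roots: Theorem 5. A symmetric positive definite matrix $A$ has a symmetric positive definite ‘square root’. Proof: Let $U$ and $D = \operatorname{diag}(\lambda_1, \ldots, \lambda_n)$ be the orthogonal and diagonal matrices on the previous page that satisfy $A = U D U^T$; by Theorem 4, every $\lambda_i > 0$. Then construct the matrices $D^{1/2} = \operatorname{diag}(\sqrt{\lambda_1}, \ldots, \sqrt{\lambda_n})$ and $A^{1/2} = U D^{1/2} U^T$. Observe that $A^{1/2}$ is symmetric positive definite and satisfies $(A^{1/2})^2 = U D^{1/2} U^T U D^{1/2} U^T = U D U^T = A$.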
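Here is the construction from the proof, as a Python sketch restricted to 2 by 2 matrices so that the eigen-decomposition has a closed form. The helper names and the test matrix are our own, hypothetical choices. The eigenvector $(b, \lambda - a)$ solves the first row of $(A - \lambda I)v = 0$, and the square root is assembled as $U D^{1/2} U^T$.

```python
import math

def sym_eig_2x2(a, b, c):
    """Eigenvalues and orthonormal eigenvectors (columns of U) of [[a, b], [b, c]]."""
    if b == 0:
        return [a, c], [[1.0, 0.0], [0.0, 1.0]]
    mean, rad = (a + c) / 2, math.hypot((a - c) / 2, b)
    lams = [mean + rad, mean - rad]
    cols = []
    for lam in lams:
        x, y = b, lam - a              # solves the first row of (A - lam I) v = 0
        n = math.hypot(x, y)
        cols.append((x / n, y / n))
    U = [[cols[0][0], cols[1][0]], [cols[0][1], cols[1][1]]]
    return lams, U

def sqrt_spd_2x2(A):
    """Symmetric positive definite square root U D^(1/2) U^T of a 2x2 SPD matrix."""
    lams, U = sym_eig_2x2(A[0][0], A[0][1], A[1][1])
    if min(lams) <= 0:
        raise ValueError("matrix is not positive definite")
    s = [math.sqrt(lam) for lam in lams]
    return [[sum(U[i][k] * s[k] * U[j][k] for k in range(2)) for j in range(2)]
            for i in range(2)]

A = [[2.0, 1.0], [1.0, 2.0]]            # hypothetical SPD example (eigenvalues 3 and 1)
R = sqrt_spd_2x2(A)
R2 = [[sum(R[i][k] * R[k][j] for k in range(2)) for j in range(2)] for i in range(2)]
print(R)
print(R2)                               # reproduces A up to rounding
```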

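Polar Decomposition: Theorem 6. Every nonsingular matrix $A$ can be factored as $A = PQ$, where $P$ is symmetric and positive definite and $Q$ is orthogonal. Proof: Construct $A A^T$ and observe that it is symmetric and positive definite, since $x^T A A^T x = \|A^T x\|^2 > 0$ for $x \neq 0$. Let $P = (A A^T)^{1/2}$ be the symmetric positive definite matrix given by Theorem 5, which satisfies $P^2 = A A^T$, and construct $Q = P^{-1} A$. Then $Q Q^T = P^{-1} A A^T P^{-1} = P^{-1} P^2 P^{-1} = I$, so $Q$ is orthogonal, and clearly $A = PQ$.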
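The following Python sketch computes $P = (AA^T)^{1/2}$ and $Q = P^{-1}A$ for the 2 by 2 matrix $M$ of the SVD example. To keep it short, the square root is not computed by the eigenvector construction of Theorem 5. Instead it uses a swapped-in 2 by 2 shortcut based on the Cayley-Hamilton theorem: $S^{1/2} = (S + \sqrt{\det S}\, I)/\sqrt{\operatorname{tr} S + 2\sqrt{\det S}}$. This yields the same (unique) symmetric positive definite square root.

```python
import math

def sqrt_spd_2x2(S):
    """SPD square root of a 2x2 SPD matrix via the Cayley-Hamilton identity
    R = (S + sqrt(det S) I) / sqrt(tr S + 2 sqrt(det S))."""
    s = math.sqrt(S[0][0] * S[1][1] - S[0][1] * S[1][0])
    t = math.sqrt(S[0][0] + S[1][1] + 2 * s)
    return [[(S[i][j] + (s if i == j else 0.0)) / t for j in range(2)] for i in range(2)]

def mat_mul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def polar_2x2(A):
    """Factor a nonsingular 2x2 matrix as A = P Q with P SPD and Q orthogonal."""
    At = [[A[j][i] for j in range(2)] for i in range(2)]
    P = sqrt_spd_2x2(mat_mul(A, At))            # P^2 = A A^T
    det = P[0][0] * P[1][1] - P[0][1] * P[1][0]
    P_inv = [[P[1][1] / det, -P[0][1] / det],
             [-P[1][0] / det, P[0][0] / det]]
    Q = mat_mul(P_inv, A)                        # Q = P^{-1} A
    return P, Q

A = [[0.0, 1.0], [0.5, 0.5]]                    # the matrix M from the SVD example
P, Q = polar_2x2(A)
QQt = mat_mul(Q, [[Q[j][i] for j in range(2)] for i in range(2)])
print(P)
print(Q)
print(QQt)                                        # identity up to rounding
```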

Löwdin Orthonormalization (1): Per-Olov Löwdin, On the Non-Orthogonality Problem Connected with the use of Atomic Wave Functions in the Theory of Molecules and Crystals, J. Chem. Phys. 18, 367-370 (1950). http://www.quantum-chemistry-history.com/Lowdin1.htm Let $v_1, \ldots, v_n$ be vectors in an inner product space. Proof: Start with $v_1, \ldots, v_n$ (assumed to be linearly independent) and compute the Gram matrix $G$ with entries $G_{ij} = \langle v_i, v_j \rangle$. Since $G$ is symmetric and positive definite, so is $G^{-1}$. Theorem 5 therefore gives (and provides a method to compute) a matrix $S = G^{-1/2}$ that is symmetric and positive definite and satisfies $S G S = I$. Then the vectors $w_i = \sum_{j=1}^n S_{ji} v_j$, $1 \le i \le n$, are orthonormal.
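Here is a Python sketch of symmetric (Löwdin) orthonormalization for two vectors in $\mathbb{R}^3$ with the ordinary dot product. The input vectors are hypothetical. The sketch forms the Gram matrix $G$, computes $S = G^{-1/2}$ from the eigen-decomposition of $G$ (as in Theorem 5, with $\lambda_i^{-1/2}$ in place of $\lambda_i^{1/2}$), and takes $w_i = \sum_j S_{ji} v_j$. The last line performs the inner-product check.

```python
import math

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def inv_sqrt_spd_2x2(G):
    """G^(-1/2) = U D^(-1/2) U^T for a 2x2 symmetric positive definite G."""
    a, b, c = G[0][0], G[0][1], G[1][1]
    if b == 0:
        return [[1 / math.sqrt(a), 0.0], [0.0, 1 / math.sqrt(c)]]
    mean, rad = (a + c) / 2, math.hypot((a - c) / 2, b)
    lams = [mean + rad, mean - rad]
    units = []                                  # unit eigenvectors (b, lam - a) / norm
    for lam in lams:
        n = math.hypot(b, lam - a)
        units.append((b / n, (lam - a) / n))
    return [[sum(units[k][i] * units[k][j] / math.sqrt(lams[k]) for k in range(2))
             for j in range(2)] for i in range(2)]

v = [[1.0, 0.0, 0.0],
     [1.0, 1.0, 0.0]]                           # hypothetical linearly independent vectors
G = [[dot(vi, vj) for vj in v] for vi in v]     # Gram matrix
S = inv_sqrt_spd_2x2(G)
w = [[sum(S[j][i] * v[j][k] for j in range(2)) for k in range(3)] for i in range(2)]
print([[round(dot(wi, wj), 12) for wj in w] for wi in w])   # approximately the identity
```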

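The Power Method (pages 340-345): This method finds the eigenvalue $\lambda_1$ with largest absolute value of a matrix $A$ whose eigenvalues satisfy $|\lambda_1| > |\lambda_2| \ge \cdots \ge |\lambda_n|$. Step 1: Choose a vector $z^{(0)}$ with random entries. Step 2: Compute $w^{(1)} = A z^{(0)}$ and the normalized vector $z^{(1)} = w^{(1)} / \|w^{(1)}\|$. Step 3: Compute an estimate $\lambda^{(1)}$ of $\lambda_1$ from $w^{(1)}$ and $z^{(0)}$ (recall that $Av = \lambda v$ for an eigenvector $v$). Step 4: Compute $w^{(2)} = A z^{(1)}$, $z^{(2)}$ and $\lambda^{(2)}$ in the same way, and repeat. Then $\lambda^{(m)} \to \lambda_1$, and $z^{(m)}$ approaches (up to sign) an eigenvector for $\lambda_1$, with errors that decrease roughly like $|\lambda_2 / \lambda_1|^m$.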
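Here is a minimal Python sketch of the power method, applied to the matrix of Example 7.2.3 (eigenvalues $-36$, $3$, $9$, so $|\lambda_2/\lambda_1| = 1/4$). It normalizes with the Euclidean norm and estimates the eigenvalue with the Rayleigh quotient $z^TAz$ of the unit iterate. Both are common choices and are assumptions here, not necessarily the textbook's exact Steps 2-4. The seed and the iteration count are arbitrary. Because $\lambda_1 < 0$, the iterates alternate in sign, which does not affect the estimate.

```python
import math
import random

def mat_vec(A, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def power_method(A, iterations=30, seed=2213):
    """Power method with Euclidean normalization.

    Returns the eigenvalue estimates (one per step, Rayleigh quotients z^T A z of the
    unit iterates) and the final unit vector.
    """
    rng = random.Random(seed)
    z = [rng.uniform(-1.0, 1.0) for _ in A]      # Step 1: random starting vector
    estimates = []
    for _ in range(iterations):
        w = mat_vec(A, z)                         # w = A z
        norm = math.sqrt(sum(x * x for x in w))
        z = [x / norm for x in w]                 # normalize to avoid overflow/underflow
        estimates.append(sum(a * b for a, b in zip(z, mat_vec(A, z))))
    return estimates, z

A = [[-7, 13, -16], [13, -10, 13], [-16, 13, -7]]   # Example 7.2.3
estimates, z = power_method(A)
print(estimates[:5])    # approaching -36, the eigenvalue of largest absolute value
print(estimates[-1])
```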

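The Inverse Power Method: Result: If $v$ is an eigenvector of a nonsingular matrix $A$ corresponding to the eigenvalue $\lambda$, then $\lambda \neq 0$ and $v$ is an eigenvector of $A^{-1}$ corresponding to the eigenvalue $1/\lambda$. Furthermore, if $\sigma$ is not an eigenvalue of $A$, then $v$ is an eigenvector of $(A - \sigma I)^{-1}$ corresponding to the eigenvalue $1/(\lambda - \sigma)$. Definition: The inverse power method is the power method applied to the matrix $A^{-1}$. It can find the eigenvalue-eigenvector pair for the eigenvalue of smallest absolute value, provided that exactly one eigenvalue has the smallest absolute value.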
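In practice the inverse power method never forms $A^{-1}$; each step solves $Aw = z$ instead. The Python sketch below uses a hand-written Gaussian elimination with partial pivoting (`solve`, our own helper) and the matrix of Example 7.2.3, whose eigenvalue of smallest absolute value is 3. The starting vector is an arbitrary choice.

```python
import math

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [[float(a) for a in row] + [float(bi)] for row, bi in zip(A, b)]
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(M[i][k]))   # pivot row
        if M[p][k] == 0.0:
            raise ValueError("matrix is singular")
        M[k], M[p] = M[p], M[k]
        for i in range(k + 1, n):
            f = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= f * M[k][j]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def inverse_power_method(A, z, iterations=40):
    """Power method for A^{-1}; each step solves A w = z instead of forming A^{-1}."""
    for _ in range(iterations):
        w = solve(A, z)
        norm = math.sqrt(sum(x * x for x in w))
        z = [x / norm for x in w]
    Az = [sum(a * b for a, b in zip(row, z)) for row in A]
    return sum(a * b for a, b in zip(z, Az)), z    # Rayleigh quotient and unit vector

A = [[-7, 13, -16], [13, -10, 13], [-16, 13, -7]]   # eigenvalues -36, 3, 9
lam, z = inverse_power_method(A, [1.0, 1.0, 0.0])
print(lam)   # close to 3, the eigenvalue of smallest absolute value
```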

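Inverse Power Method With Shifts: This method computes the eigenvalue of $A$ closest to a given shift $\sigma$ and a corresponding eigenvector. Step 1: Apply 1 or more iterations of the power method, using the matrix $(A - \sigma I)^{-1}$, to estimate an eigenvalue-eigenvector pair $(\lambda, z)$. Step 2: Compute a better estimate of $\lambda$, for example the Rayleigh quotient $\sigma = z^T A z / z^T z$. Step 3: Apply 1 or more iterations of the power method, using the matrix $(A - \sigma I)^{-1}$ with the new $\sigma$, to estimate an eigenvalue-eigenvector pair, and iterate. Then the estimates converge to an eigenvalue-eigenvector pair with a cubic rate of convergence (for symmetric $A$ with Rayleigh quotient shifts)!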
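One common way to choose the shifts is to reset $\sigma$ to the Rayleigh quotient after every step, which is known as Rayleigh quotient iteration. Cubic convergence is the known behavior of this choice for symmetric matrices. Here is a Python sketch on the matrix of Example 7.2.3, starting from the shift $\sigma = 8$ and a hand-picked start vector; both are assumptions for illustration. If $\sigma$ lands exactly on an eigenvalue, the shifted matrix becomes singular, so the loop stops there.

```python
import math

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [[float(a) for a in row] + [float(bi)] for row, bi in zip(A, b)]
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(M[i][k]))
        if M[p][k] == 0.0:
            raise ValueError("matrix is exactly singular")
        M[k], M[p] = M[p], M[k]
        for i in range(k + 1, n):
            f = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= f * M[k][j]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def rayleigh_quotient_iteration(A, z, sigma, tol=1e-10, max_steps=20):
    """Inverse power method with the shift updated to the Rayleigh quotient each step."""
    n = len(A)
    residuals = []
    for _ in range(max_steps):
        shifted = [[A[i][j] - (sigma if i == j else 0.0) for j in range(n)] for i in range(n)]
        try:
            w = solve(shifted, z)          # one power step with (A - sigma I)^{-1}
        except ValueError:                 # sigma is an eigenvalue to working precision
            break
        norm = math.sqrt(sum(x * x for x in w))
        z = [x / norm for x in w]
        Az = [sum(a * b for a, b in zip(row, z)) for row in A]
        sigma = sum(a * b for a, b in zip(z, Az))          # Rayleigh quotient
        residuals.append(math.sqrt(sum((a - sigma * b) ** 2 for a, b in zip(Az, z))))
        if residuals[-1] < tol:
            break
    return sigma, z, residuals

A = [[-7, 13, -16], [13, -10, 13], [-16, 13, -7]]   # eigenvalues -36, 3, 9
sigma, z, residuals = rayleigh_quotient_iteration(A, [1.0, 0.5, -1.0], sigma=8.0)
print(sigma)        # close to 9, the eigenvalue nearest the initial shift 8
print(residuals)    # residual norms ||A z - sigma z|| shrink very rapidly
```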

Unitary and Hermitian Matrices: Definition. The adjoint of a matrix $A$ is the matrix $A^* = \bar{A}^T$. Example. Definition. A matrix $U$ is unitary if $U^* U = I$. Definition. A matrix $A$ is hermitian (or self-adjoint) if $A^* = A$. Definition. A hermitian matrix $A$ is (semi) positive definite if $x^* A x > 0$ ($\ge 0$) for every nonzero vector $x$. Super Theorem: All previous theorems are true for complex matrices if orthogonal is replaced by unitary, symmetric by hermitian, and the old definition of (semi) positive definite by the new one.
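Here is a small Python check of the complex analogue of Theorem 1 under the Super Theorem. A hermitian matrix has real eigenvalues, and $x^*Ax$ is real for every complex vector $x$. The matrix $H$ and the random vector are hypothetical examples. The eigenvalues are computed from the 2 by 2 characteristic polynomial with `cmath`, so any imaginary part would show up if it were present.

```python
import cmath
import random

def eigvals_2x2(A):
    """Roots of det(lambda I - A) = lambda^2 - tr(A) lambda + det(A)."""
    tr = A[0][0] + A[1][1]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    disc = cmath.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2

def adjoint(A):
    """Conjugate transpose A* of a matrix given as a list of rows."""
    return [[A[j][i].conjugate() for j in range(len(A))] for i in range(len(A[0]))]

H = [[2 + 0j, 1 - 2j],
     [1 + 2j, -1 + 0j]]                # hypothetical hermitian matrix
assert adjoint(H) == H

print(eigvals_2x2(H))                  # imaginary parts are zero

rng = random.Random(0)
x = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(2)]
Hx = [sum(h * xi for h, xi in zip(row, x)) for row in H]
quad = sum(xi.conjugate() * y for xi, y in zip(x, Hx))   # x* H x
print(quad)                            # real up to rounding, since H* = H
```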

Homework Due Tutorial 5 (Week 11, 29 Oct – 2 Nov):

1. Do Problem 1 on page 348.
2. Read Convergence of the Power Method (pages 342-346) and do Problem 16 on page 350.
3. Do Problem 19 on pages 350-351.
4. Estimate eigenvalue-eigenvector pairs of the matrix M using the power and inverse power methods; use 4 iterations and compute the errors.
5. Compute the eigenvalue-eigenvector pairs of the orthogonal matrix O.
6. Prove that the vectors defined at the bottom of slide 29 are orthonormal by computing their inner products.

Extra Fun and Adventure: We have discussed several matrix decompositions: LU, eigenvector, singular value, and polar. Find out about other matrix decompositions. How are they derived or computed? What are their applications?