File Compression Techniques


File Compression Techniques Alex Robertson

Outline • History • Lossless vs. Lossy • Basics • Huffman Coding • Getting Advanced • Lossy Explained • Limitations • Future

History, where this all started • The Problem! • 1940s • Shannon-Fano coding • Properties • Different codes have different numbers of bits. • Codes for symbols with low probabilities have more bits, and codes for symbols with high probabilities have fewer bits. • Though the codes are of different bit lengths, they can be uniquely decoded.

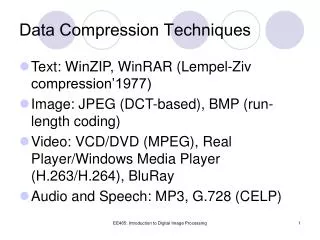

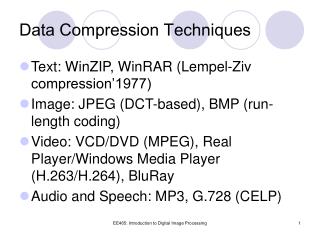

Lossless vs. Lossy • Lossless • DEFLATE • Data where every little detail is important • Lossy • JPEG • MP3 • Data where some detail can be lost without being noticed

Understanding the Basics • Properties • Different codes have different numbers of bits. • Codes for symbols with low probabilities have more bits, and codes for symbols with high probabilities have fewer bits. • Though the codes are of different bit lengths, they can be uniquely decoded. • Encode “SATA” S = 10 A = 0 T = 11
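As a minimal sketch of the "SATA" example, encoding is just a table lookup per symbol followed by concatenation. The names `CODES` and `encode` are illustrative and not from the slides.

```python
# Code table from the slide: the most frequent symbol (A) gets the shortest code.
CODES = {"S": "10", "A": "0", "T": "11"}


def encode(text, codes):
    """Concatenate the codeword of every symbol in text."""
    return "".join(codes[symbol] for symbol in text)


print(encode("SATA", CODES))  # 100110
```

The four characters take 6 bits in total: 2 + 1 + 2 + 1.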

Prefix Rule • S = 01 A = 0 T = 00 • The bits 01 0 00 0 are ambiguous with these codes. They can be read as: • SATA • SAAAA • STT • No code can be the prefix of another code. If 0 is a code, 0* (0 followed by any further bits) can't be a code.
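The ambiguity can be checked mechanically. The sketch below tests whether a code table obeys the prefix rule and lists every way a bit string can be split into codewords. The helper names `is_prefix_free` and `all_decodings` are hypothetical and not part of the presentation.

```python
AMBIGUOUS = {"S": "01", "A": "0", "T": "00"}   # table from this slide
PREFIX_FREE = {"S": "10", "A": "0", "T": "11"}  # table from the previous slide


def is_prefix_free(codes):
    """True if no codeword is a prefix of a different symbol's codeword."""
    words = list(codes.values())
    return not any(
        i != j and words[j].startswith(words[i])
        for i in range(len(words))
        for j in range(len(words))
    )


def all_decodings(bits, codes):
    """Return every symbol sequence whose codewords concatenate to bits."""
    if not bits:
        return [""]
    results = []
    for symbol, word in codes.items():
        if bits.startswith(word):
            rest = all_decodings(bits[len(word):], codes)
            results += [symbol + tail for tail in rest]
    return results


print(is_prefix_free(AMBIGUOUS))    # False
print(is_prefix_free(PREFIX_FREE))  # True
print(sorted(all_decodings("010000", AMBIGUOUS)))
```

With the ambiguous table, the list includes SATA, SAAAA and STT. With the prefix-free table, a valid bit string has exactly one decoding.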

Make a Tree • Create a Tree A = 010 B = 11 C = 00 D = 10 R = 011

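Decode • 01011011010000101001011011010 A = 010 B = 11 C = 00 D = 10 R = 011 • Decodes to ABRACADABRA, but the table violates the property: • Codes for symbols with low probabilities have more bits, and codes for symbols with high probabilities have fewer bits. • Here A, the most frequent symbol, has one of the longest codes (3 bits).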
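Because the table is prefix-free, decoding can read bits left to right and emit a symbol as soon as the buffer matches a codeword. This is a small sketch of that idea. The function name `decode` is an assumption, not something the slides define.

```python
CODES = {"A": "010", "B": "11", "C": "00", "D": "10", "R": "011"}


def decode(bits, codes):
    """Decode a bit string produced with a prefix-free code table."""
    inverse = {word: symbol for symbol, word in codes.items()}
    symbols, buffer = [], ""
    for bit in bits:
        buffer += bit
        if buffer in inverse:  # a complete codeword has been read
            symbols.append(inverse[buffer])
            buffer = ""
    if buffer:
        raise ValueError("trailing bits do not form a codeword")
    return "".join(symbols)


message = decode("01011011010000101001011011010", CODES)
print(message)  # ABRACADABRA
```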

Huffman Coding • Create a Tree • Encode “ABRACADABRA” • Determine the frequencies • The two least frequent “nodes” are located. • A parent node is created from these two nodes and is given a weight equal to the sum of the two child nodes' frequencies. • One of the child nodes is given the 0 bit and the other the 1 bit. • Repeat the above steps until only one node is left.
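The steps above can be sketched with a priority queue. This is one possible implementation, not the presenter's code. The name `huffman_codes` and the tie-breaking counter are assumptions, so the exact codewords may differ from the ones on the slides while the total length stays the same.

```python
import heapq
from collections import Counter
from itertools import count


def huffman_codes(text):
    """Build a Huffman code table (symbol -> bit string) for text."""
    tiebreak = count()  # keeps heap entries comparable when weights are equal
    heap = [(weight, next(tiebreak), symbol)
            for symbol, weight in Counter(text).items()]
    heapq.heapify(heap)
    if len(heap) == 1:  # a single distinct symbol still needs one bit
        return {heap[0][2]: "0"}
    while len(heap) > 1:
        # Locate the two least frequent nodes and merge them under a parent.
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (w1 + w2, next(tiebreak), (left, right)))
    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):  # internal node: 0 to one child, 1 to the other
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:
            codes[node] = prefix

    walk(heap[0][2], "")
    return codes


text = "ABRACADABRA"
codes = huffman_codes(text)
encoded = "".join(codes[ch] for ch in text)
print(codes, len(encoded))
```

For ABRACADABRA the encoded length is 23 bits, whichever way ties are broken.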

Does it work? • Re-encode • 01011011010000101001011011010 • 29 bits

It Works! 01011001110011110101100 = 23 bits ABRACADABRA = 11 characters × 7 bits each = 77 bits but…

It Works… With Issues. • Header includes the probability table • Not the best in certain cases • Example: ‘A’ repeated 100 times. Huffman only reduces this to 100 bits (not counting the header), because every symbol needs at least one bit.

Moving Forward • Arithmetic Coding • No specific code per symbol • The whole message is encoded as a single fractional (floating-point) number that is continuously refined as symbols are read • Example
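A minimal sketch of the arithmetic method follows. It narrows the interval [0, 1) once per symbol and outputs one number inside the final interval. Several choices here are assumptions made to keep the example exact and short: it uses `fractions.Fraction` instead of floating point, it passes the message length to the decoder, and all function names are hypothetical. Practical coders use fixed-width integer arithmetic instead.

```python
from collections import Counter
from fractions import Fraction


def build_ranges(text):
    """Give each symbol a slice of [0, 1) proportional to its frequency."""
    ranges, low = {}, Fraction(0)
    for symbol, n in sorted(Counter(text).items()):
        width = Fraction(n, len(text))
        ranges[symbol] = (low, low + width)
        low += width
    return ranges


def arithmetic_encode(text, ranges):
    """Narrow [low, high) once per symbol and return a number inside it."""
    low, high = Fraction(0), Fraction(1)
    for symbol in text:
        span = high - low
        s_low, s_high = ranges[symbol]
        low, high = low + span * s_low, low + span * s_high
    return (low + high) / 2  # any value in the final interval identifies the text


def arithmetic_decode(value, ranges, length):
    """Recover length symbols by repeatedly locating value and rescaling it."""
    symbols = []
    for _ in range(length):
        for symbol, (s_low, s_high) in ranges.items():
            if s_low <= value < s_high:
                symbols.append(symbol)
                value = (value - s_low) / (s_high - s_low)
                break
    return "".join(symbols)


text = "ABRACADABRA"
ranges = build_ranges(text)
number = arithmetic_encode(text, ranges)
print(number, arithmetic_decode(number, ranges, len(text)))
```

Frequent symbols own wider slices, so they shrink the interval less and cost fewer bits of precision in the output number.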

Dictionary Based • Introduced in the late 1970s • Uses previously seen words as a dictionary. • the quick brown fox jumped over the lazy dog • I bought a Mississippi Banana in Mississippi.
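To illustrate the dictionary idea, here is a small sketch in the style of LZ78, one of the late-1970s dictionary schemes. The slides do not say which variant they mean, so this choice is an assumption, as are the function names. Each output pair refers to a previously seen phrase plus one new character.

```python
def lz78_compress(text):
    """Return (dictionary index, next character) pairs for text."""
    dictionary = {"": 0}  # phrase -> index; index 0 is the empty phrase
    pairs, phrase = [], ""
    for ch in text:
        if phrase + ch in dictionary:
            phrase += ch  # keep extending a phrase that was seen before
        else:
            pairs.append((dictionary[phrase], ch))
            dictionary[phrase + ch] = len(dictionary)
            phrase = ""
    if phrase:  # leftover phrase that is already in the dictionary
        pairs.append((dictionary[phrase], ""))
    return pairs


def lz78_decompress(pairs):
    """Rebuild the text by replaying the same dictionary growth."""
    phrases, out = [""], []
    for index, ch in pairs:
        phrase = phrases[index] + ch
        out.append(phrase)
        phrases.append(phrase)
    return "".join(out)


sentence = "I bought a Mississippi Banana in Mississippi."
pairs = lz78_compress(sentence)
print(len(sentence), len(pairs))
print(lz78_decompress(pairs) == sentence)  # True
```

Repeated fragments such as "ssi" are emitted as references to earlier phrases, so the number of pairs is smaller than the number of characters.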

Lossy Compression • Lossy Formula • Lossless Formula • My Sound!

Mathematical Limitations • Claude E. Shannon • http://www.data-compression.com/theory.html
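One way to make the limit concrete is Shannon's entropy, which gives a lower bound on the average bits per symbol. The sketch below assumes a simple model where symbols are independent and follow their observed frequencies. The slide only names Shannon, so this framing and the name `entropy_bits` are assumptions.

```python
import math
from collections import Counter


def entropy_bits(text):
    """Entropy bound in bits for the whole text, from symbol frequencies."""
    total = len(text)
    return sum(-n * math.log2(n / total) for n in Counter(text).values())


print(round(entropy_bits("ABRACADABRA"), 2))  # 22.44
```

Under this model no symbol-by-symbol code can average fewer than about 22.44 bits for ABRACADABRA, so the 23-bit Huffman result is already close to the bound.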

Example • DEFLATE • http://en.wikipedia.org/wiki/DEFLATE
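DEFLATE can be tried directly from Python's standard `zlib` module, which wraps a DEFLATE stream in a small header and checksum. This sketch uses the 100 × 'A' input from the Huffman slide. The helper name `deflate_roundtrip` is hypothetical.

```python
import zlib


def deflate_roundtrip(data, level=9):
    """Compress data with zlib (DEFLATE) and verify it decompresses losslessly."""
    compressed = zlib.compress(data, level)
    if zlib.decompress(compressed) != data:
        raise ValueError("round trip failed")
    return compressed


data = b"A" * 100
compressed = deflate_roundtrip(data)
print(len(data), "bytes ->", len(compressed), "bytes")
```

DEFLATE combines dictionary-style matching with Huffman coding, so a long run of one character compresses far better than Huffman coding alone.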

Future • Hardware is getting better • Theories are the same

Thank You • Questions