Uncertainty

E N D

Presentation Transcript

Uncertainty ECE457 Applied Artificial Intelligence Spring 2007 Lecture #8

Outline • Uncertainty • Probability • Bayes’ Theorem • Russell & Norvig, chapter 13 ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 2

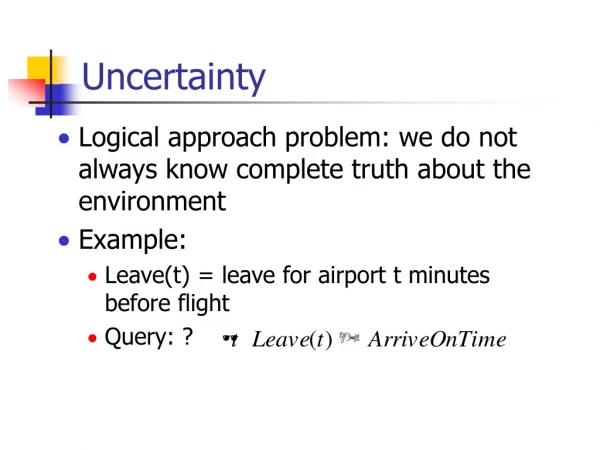

Limit of FOL • FOL works only when facts are known to be true or false • “Some purple mushrooms are poisonous” • x Purple(x) Mushroom(x) Poisonous(x) • In real life there is almost always uncertainty • “There’s a 70% chance that a purple mushroom is poisonous” • Can’t be represented as FOL sentence ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 3

Acting Under Uncertainty • So far, rational decision is to pick action with “best” outcome • Two actions • #1 leads to great outcome • #2 leads to good outcome • It’s only rational to pick #1 • Assumes outcome is 100% certain • What if outcome is not certain? • Two actions • #1 has 1% probability to lead to great outcome • #2 has 90% probability to lead to good outcome • What is the rational decision? ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 4

Acting Under Uncertainty • Maximum Expected Utility (MEU) • Pick action that leads to best outcome averaged over all possible outcomes of the action • Same principle as Expectiminimax, used to solve games of chance (see Game Playing, lecture #5) • How do we compute the MEU? • First, we need to compute the probability of each event ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 5

Types of Uncertain Variables • Boolean • Can be true or false • Warm {True, False} • Discrete • Can take a value from a limited, countable domain • Temperature {Hot, Warm, Cool, Cold} • Continuous • Can take a value from a set of real numbers • Temperature [-35, 35] • We’ll focus on discrete variables for now ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 6

Probability • Each possible value in the domain of an uncertain variable is assigned a probability • Represents how likely it is that the variable will have this value • P(Temperature=Warm) • Probability that the “Temperature” variable will have the value “Warm” • We can simply write P(Warm) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 7

Probability Axioms • P(x) [0, 1] • P(x) = 1 • x is necessarily true, or certain to occur • P(x) = 0 • x is necessarily false, or certain not to occur • P(A B) = P(A) + P(B) – P(A B) • P(A B) = 0 • A and B are said to be mutually exclusive • P(x) = 1 • If all values of x are mutually exclusive ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 8

Visualizing Probabilities • P(A) is the proportion of the event space in which A is true • Area of green circle / Total area • P(A) = 0 if the green circle doesn’t exist • P(A) = 1 if the green circle covers the entire event space Event space P(A) P(A) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 9

Visualizing Probabilities • P(A B) = P(A) + P(B) – P(A B) • Sum of area of both circles / Total area • P(A B) = 0 • There is no intersection between both regions • A and B can’t happen together: mutually exclusive P(A B) P(A) P(B) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 10

Prior (Unconditional) Probability • Probability that A is true in the absence of any other information • P(A) • Example • P(Temperature=Hot) = 0.2 • P(Temperature=Warm) = 0.6 • P(Temperature=Cool) = 0.15 • P(Temperature=Cold) = 0.05 • P(Temperature) = {0.2, 0.6, 0.15, 0.05} • This is a probability distribution ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 11

Joint Probability Distribution • Let’s add another variable • Condition {Sunny, Cloudy, Raining} • We can compute P(Temperature,Condition) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 12

Joint Probability Distribution • Given a joint probability distribution P(a,b), we can compute P(a=Ai) • P(Ai) = j P(Ai,Bj) • Assumes all events (Ai,Bj) are mutually exclusive • This is called marginalization • P(Warm) = P(Warm,Sunny) + P(Warm,Cloudy) + P(Warm,Raining)P(Warm) = 0.23 + 0.2 + 0.17P(Warm) = 0.6 ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 13

Visualizing Marginalization • P(A) = P(A,B) + P(A,C) + P(A,D) • No area of A not covered by B, C or D • B, C and D do not intersect inside A P(A,C) P(A,B) P(B) P(A) P(C) P(D) P(A,D) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 14

Posterior (Conditional) Probability • Probability that A is true given that we know that B is true • P(A|B) • Can be computed using prior and joint probability • P(A|B) = P(A,B) / P(B) • P(Warm|Cloudy) = P(Warm,Cloudy) / P(Cloudy)P(Warm|Cloudy) = 0.2 / 0.32P(Warm|Cloudy) = 0.625 ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 15

Visualizing Posterior Probability • P(A|B) = P(A,B) / P(B) • We know that B is true • We want the area of B where A is also true • We don’t care about the area P(B) P(A | B) P(A) P(B) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 16

Bayes’ Theorem • Start from previous conditional probability equation • P(A|B)P(B) = P(A,B) • P(B|A)P(A) = P(B,A) • P(A|B)P(B) = P(B|A)P(A) • P(A|B) = P(B|A)P(A) / P(B) (important!) • P(A|B): Posterior probability • P(A): Prior probability • P(B|A): Likelihood • P(B): Normalizing constant ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 17

Bayes’ Theorem • Allows us to compute P(A|B) without knowing P(A,B) • In many real-life situations, P(A|B) cannot be measured directly, but P(B|A) is available • Bayes’ Theorem underlies all modern probabilistic AI systems ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 18

Visualizing Bayes’ Theorem • P(A|B) = P(B|A)P(A) / P(B) • We know the portion of the event space where A is true, and that where B is true • We know the portion of A where B is also true • We want the portion of B where A is also true P(B|A) P(B) P(A) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 19

Bayes’ Theorem Example #1 • We want to design a classifier (for email spam) • Compute the probability that an item belongs to class C (spam) given that it exhibits feature F (the word “Viagra”) • We know that • 20% of items in the world belong to class C • 90% of items in class C exhibit feature F • 40% of items in the world exhibit feature F • P(C|F) = P(F|C) * P(C) / P(F)P(C|F) = 0.9 * 0.2 / 0.4P(C|F) = 0.45 ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 20

Bayes’ Theorem Example #2 • A drug test returns “positive” if drugs are detected in an athlete’s system, but it can make mistakes • If an athlete took drugs, 99% chance of + • If an athlete didn’t take drugs, 10% chance of + • 5% of athletes take drugs • What’s the probability that an athlete who tested positive really does take drugs? • P(drug|+) = P(+|drug) * P(drug) / P(+) P(+) = P(+|drug)P(drug) + P(+|nodrug)P(nodrug)P(+) = 0.99 * 0.05 + 0.1*0.95 = 0.1445 P(drug|+) = 0.99 * 0.05 / 0.1445 = 0.3426 ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 21

Bayes’ Theorem • We computed the normalizing constant using marginalization! • P(B) = i P(B|Ai)P(Ai) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 22

Chain Rule • Recall that P(A,B) = P(A|B)P(B) • Can be extended to multiple variables • Extend to three variables • P(A,B,C) = P(A|B,C)P(B,C)P(A,B,C) = P(A|B,C)P(B|C)P(C) • General form • P(A1,A2,…,An) = P(A1|A2,…,An)P(A2|A3,…,An)…P(An-1|An)P(An) • Compute full joint probability distribution • Simple if variables conditionally independent ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 23

Visualizing Chain Rule • P(A,B,C) = P(A|B,C)P(B|C)P(C) • We want the proportion of the event space where A, B and C are true • Proportion of B,C where A is also true *proportion of C where B is also true *proportion of the event space where C is true P(A,B,C) P(B|C) P(C) P(B) P(A|B,C) P(A) P(B,C) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 24

Independence • Two variables are independent if knowledge of one does not affect the probability of the other • P(A|B) = P(A) • P(B|A) = P(B) • P(A B) = P(A)P(B) • Impact on chain rule • P(A1,A2,…,An) = P(A1)P(A2)…P(An) P(A1,A2,…,An) = i=1n P(Ai) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 25

Conditional Independence • Independence is hard to satisfy • Two variables are conditionally independent given a third if knowledge of one does not affect the probability of the other if the value of the third is known • P(A|B,C) = P(A|C) • P(B|A,C) = P(B|C) • Impact on chain rule • P(A1,A2,…,An) = P(A1|An)P(A2|An)…P(An-1|An)P(An) P(A1,A2,…,An) = P(An) i=1n-1 P(Ai|An) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 26

Bayes’ Theorem Example #3 • We want to design a classifier • Compute the probability that an item belongs to class C given that it exhibits features F1 to Fn • We know • % of items in the world that belong to class C • % of items in class C that exhibit feature Fi • % of items in the world exhibit features F1 to Fn • P(C|F1,…,Fn) = P(F1,…,Fn|C)*P(C)/P(F1,…,Fn) P(F1,…,Fn|C) * P(C) = P(C,F1,…,Fn) by chain rule P(C,F1,…,Fn) = P(C) iP(Fi|C) assuming features are conditionally independent given the class P(C|F1,…,Fn) = P(C) iP(Fi|C) / P(F1,…,Fn) ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 27

Bayes’ Theorem Example #3 • P(F1,…,Fn) • Independent of class C • In multi-class problems, it makes no difference! • P(C|F1,…,Fn) = P(C) iP(Fi|C) • This is called the Naïve Bayes Classifier • “Naïve” because it assumes conditional independence of Fi given C whether it’s actually true or not • Often used in practice in cases where Fi are not conditionally independent given C, with very good results ECE457 Applied Artificial Intelligence R. Khoury (2007) Page 28