Instruments

NTR 629 - Week 5. Instruments. What is an Instrument? The device the researcher uses to collect data is called an instrument. Data refers to the information researchers obtain on the subjects of their research. Demographic information or scores from a test are examples of data collected.

Presentation Transcript

NTR 629 - Week 5 Instruments

What is an Instrument? • The device the researcher uses to collect data is called an instrument. • Data refers to the information researchers obtain on the subjects of their research. • Demographic information or scores from a test are examples of data collected. • First have clear research hypothesis/questions • Four Step Instrument Design Process: • 1. Conceptualization • Who (target audience) • What • 2. Construction • Writing test questions • Validity, reliability, objectivity, usability • 3. Pre-testing • 4. Administration • Steps often overlap

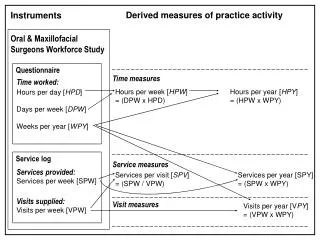

Types of Instruments • Classified based on who provides the information: • Subjects: Self-report data • Questionnaire/Survey • Test • Directly or indirectly: from the subjects of the study • Interview • From informants (people who are knowledgeable about the subjects and provide this information) • Types of Researcher-completed instruments • Rating scales • Interview schedules • Tally sheets • Flowcharts • Performance checklists • Anecdotal records • Time-and-motion logs • Observation forms

Conceptualization of “Who” • WHO are the potential respondents? Define: • Age, gender, race, location, health status, occupation, socioeconomic status, educational level, languages spoken, motivation level • How many subjects, and what sampling procedure (including: are they representative of the population?) • Access to phone/mail/email/fax as appropriate • Experimental and control group?

Conceptualization of “What” • Survey should foster meeting the research objectives • Be clear as to which variables are to be assessed and how. • Align questions with the hypothesis or research question • What do we NEED to know? • Attitudes? Knowledge gain? • Consider RELEVANCE. • Consider cost.

Review Existing Instruments • Review questions from existing instruments • From research articles (or obtain actual instrument) • Using some existing questions reported in the literature provides comparative data • Review existing instruments • Reliability, validity, target audience • Consider adaptation of questions • Format, content (e.g., variable)

Usability of Existing Instruments • Usability is an important consideration in choosing or designing an instrument • How easy will the instrument actually be to use? • Questions that assess usability include: • How long will it take to administer? • Are the directions clear? • How easy is it to score? • Do equivalent forms exist? • Have any problems been reported by others who used it? • Getting satisfactory answers can save a researcher a lot of time and energy.

General Construction Principles • Instrument Format • ID number or participant name • Descriptive title (not “questionnaire”) • Group items by content, providing subtitles as needed for each group • Within groupings, place items with the same format together • Between groupings, provide visual distinction • Allow some “white space” • Question Order • Have logic to the order • Sensitive/difficult items at end • Important items NOT last • Clear question-numbering system • Provide Directions • Clear, concise directions for every question • Response area close to question • “OVER” if 2-sided form, or print 1-sided only • Clearly indicate return method

Questionnaires • Types of questionnaires: • Personality • Measure stable behavior patterns http://www.unl.edu/buros • Attitude Tests • Measure feelings, opinions • Thurstone Scale • “Equal intervals” • Likert Scale • Often 5-point scale • Evaluation

Questionnaire Construction • Directions! • Reasonable length! • Relevant! • Clear, professional, straightforward, easy to understand, good format, structured questions. • Cover letter. • Informed consent. • Reverse score unfavorable items. • Are “undecided” responses acceptable? Should the scale be even- or odd-numbered? • If attitude scale, include statements clearly reflecting the spectrum of possible attitudes. • Review references on survey construction by SPSS and SurveyMonkey.
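Reverse scoring of unfavorable items can be illustrated with a short sketch. It assumes a 5-point Likert scale coded 1 to 5 and a hypothetical set of item names (`q1` to `q3`); neither comes from the slides. A reversed score is the scale minimum plus the scale maximum minus the original score, so 1 becomes 5, 2 becomes 4, and so on.

```python
def reverse_score(score, scale_min=1, scale_max=5):
    """Flip a score on a scale_min..scale_max scale (assumed 1-5 here)."""
    if not scale_min <= score <= scale_max:
        raise ValueError(f"score {score} is outside the scale")
    return scale_min + scale_max - score


# Hypothetical responses from one participant; q2 is an unfavorably
# worded statement, so agreeing with it signals a negative attitude.
responses = {"q1": 4, "q2": 2, "q3": 5}
unfavorable_items = {"q2"}

# Reverse only the unfavorable items, leave the rest unchanged.
scored = {
    item: reverse_score(value) if item in unfavorable_items else value
    for item, value in responses.items()
}
total = sum(scored.values())
print(scored, total)
```

After reversal, a higher total consistently means a more favorable attitude across all items.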

Purposes of Achievement Tests • Achievement Tests measure knowledge • Standardized vs. teacher/researcher made tests • Norm-referenced vs. criterion-referenced tests • Purposes: • Determine outcome(s) of experiment • Which training/education program is more effective? • Diagnostic and screening tools • e.g., reading level or developmental diagnostic tests • May be used to correlate with outcome(s) • Placement • e.g., A.P. test to earn credit for course/placement level • Selection • e.g., MAT, GRE, skills test • Evaluate/test program outcome(s)

Comparison of Types of Tests • Norm-Referenced vs. Criterion-Referenced • The group used to determine derived scores is called the norm group, and the instruments that provide such scores are referred to as norm-referenced instruments. • All derived scores give meaning to individual scores by comparing them to the scores of a group. • An alternative to norm-referenced achievement or performance instruments is a criterion-referenced test. • This is based on a specific goal or target (criterion) for each learner to achieve. • The difference between the two is that criterion-referenced tests focus more directly on instruction.
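The contrast between the two interpretations can be sketched as follows. The norm-group scores, the raw score, and the cut score of 85 are made up for illustration, and the percentile rank here uses one common definition (percentage of the norm group scoring below the raw score); other definitions exist.

```python
from statistics import mean, pstdev


def z_score(raw, norm_scores):
    """Norm-referenced: distance from the norm-group mean in SD units."""
    return (raw - mean(norm_scores)) / pstdev(norm_scores)


def percentile_rank(raw, norm_scores):
    """Norm-referenced: percent of the norm group scoring below raw."""
    below = sum(score < raw for score in norm_scores)
    return 100 * below / len(norm_scores)


def meets_criterion(raw, cut_score):
    """Criterion-referenced: compare against a fixed target, not a group."""
    return raw >= cut_score


# Hypothetical norm group and one learner's raw score.
norm_group = [55, 60, 65, 70, 75, 80, 85, 90, 95, 100]
raw = 80

print(round(z_score(raw, norm_group), 2))
print(percentile_rank(raw, norm_group))
print(meets_criterion(raw, cut_score=85))
```

The same raw score can look average relative to the norm group and still fall short of the criterion, which is why the two kinds of test answer different questions.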

Test Construction • Determine most appropriate item format • Match to learning outcomes • Avoid unintended clues • Appropriate reading level • Avoid bias • No double questions or double negatives • Set aside before final review • Rubrics for performance-based assessment • “Anatomy” of multiple choice question • Plausible distracters • Test Item Formats • Questions used in a subject-completed instrument are classified as either selection or supply items. • Examples of selection items: • True-false items • Matching items • Multiple choice items • Interpretive exercises • Examples of supply items: • Short answer items • Essay questions

Interviews • Types of Interviews • Unstructured • “Client centered” approach gives subject freedom to express self. Usually the topic is highly personal. Greater potential for bias or errors. • Semistructured • Built around a core of structured questions. Opportunity to probe for clarifications. • Structured • Well-defined structure resembling format of objective questionnaire – allowing for elaboration and clarification within narrow limits.

Interviewing Pros and Cons • Especially useful to explore an area in which insufficient information exists • Advantages: • Permits greater depth • Permits probing for more completeness of data • Effectiveness of communication checked • Disadvantages: • Costly and time-consuming • Inconvenient • Potentially overeager respondents

Interviewing Techniques • Quiet place • Dress appropriately • Rapport – warm up subject with conversation • Informed consent • Stay on task • Be direct • May need to rephrase a question • Tell them how valuable their participation is! • Thank the interviewee. • Take notes • Tape record (with permission) • Determine coding method • Confidence and mastery increase with practice!

Recording Observations • Duration: length of time of specific behavior • Frequency: how often specific behavior occurs • Interval (or time sampling): observe at specific time for specific length of time, regardless of activity occurring at that time • Continuous: all behavior is recorded, without singling out any specific behavior • Still need to ensure confidentiality. • May need to have consent. • Many instruments require the cooperation of the respondent in one way or another. An intrusion into an ongoing activity could provoke a negative reaction from the respondent. • Observations are valuable as supplements to the use of interviews and questionnaires, often providing a useful way to corroborate what more traditional data sources reveal. • Be non-obtrusive. Try to observe from afar.
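The duration, frequency, and interval methods can be compared on the same data with a small sketch. The episode log, the 300-second session, and the 15-second observation window every 60 seconds are all hypothetical values chosen for illustration.

```python
# Hypothetical log: (start, end) of each episode of the target behavior,
# in seconds from the start of a 300-second observation session.
episodes = [(12, 30), (95, 110), (200, 245)]

# Frequency: how often the behavior occurred.
frequency = len(episodes)

# Duration: total time spent in the behavior.
duration = sum(end - start for start, end in episodes)


def interval_record(episodes, session_length, every, window):
    """Interval (time) sampling: observe for `window` seconds starting
    every `every` seconds, noting whether the behavior was seen at all."""
    results = []
    for t in range(0, session_length, every):
        # An episode is seen if it overlaps the window [t, t + window).
        seen = any(start < t + window and end > t for start, end in episodes)
        results.append(seen)
    return results


samples = interval_record(episodes, session_length=300, every=60, window=15)
print(frequency, duration, samples)
```

Interval sampling misses the second episode entirely here, which shows how the choice of recording method shapes the data obtained.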

Before Pilot • Reviews by: • Subject matter experts • Design experts • Wording of questions • Structure of questions • Response alternatives • Order of questions • Instructions • Navigational rules • Reviews by persons typical of response population • Use “Think-aloud” method • Use tape recorder

Field Pre-test or Pilot Test • Pilot Sample (subjects) • Smaller number – perhaps only 15 subjects • Representative of inclusion/exclusion criteria (e.g., demographics) • Use to examine: • Readability and language use • Willingness to complete all questions • Time estimate for completion • Suggestions for improvement (adjustments to response choices or questions) • Procedures for distribution/administration, education, measurement, coding, etc. • Reliability (statistics) with 20-25 people (e.g., item analysis, Cronbach's alpha)
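As a rough illustration of the reliability check, the sketch below computes Cronbach's alpha with the standard formula: alpha = k/(k-1) × (1 − sum of item variances / variance of total scores), where k is the number of items. The pilot data are invented and deliberately tiny (six respondents, four items) to keep the example readable; a real pilot would use the 20-25 people suggested above.

```python
from statistics import variance


def cronbach_alpha(rows):
    """rows: one list of item scores per respondent (same items, same order)."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least 2 items and 2 respondents")
    # Sample variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*rows)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical pilot responses on four 5-point items.
pilot = [
    [4, 5, 4, 4],
    [3, 3, 2, 3],
    [5, 5, 4, 5],
    [2, 2, 3, 2],
    [4, 4, 4, 5],
    [1, 2, 1, 2],
]
alpha = cronbach_alpha(pilot)
print(round(alpha, 2))
```

Values closer to 1 indicate that the items vary together; what counts as acceptable depends on the purpose of the instrument.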

Step 4: Administration • Mode of Questionnaire Administration and Receiving Responses

Methods of Data Collection • Mailing paper questionnaires • Web-based surveys • Disk by mail • Telephone interview • Personal interview • Computer assisted telephone interview • Interactive voice response on phone • Personal interview • Face-to-face • Computer assisted • Audio computer assisted self-interviewing • (and observation methods – discussed already)

Key Questions • Questions arise regarding the procedures and conditions under which the instruments will be administered: • Where will the data be collected? • When will the data be collected? • How often are the data to be collected? • Who is to collect the data? • The most highly regarded types of instruments can provide useless data if administered incorrectly, by someone disliked by respondents, under noisy, inhospitable conditions, or when subjects are exhausted.

Survey Response Rate • Response rate = C/E x 100 • C = completed (include all returns, such as partials) • E = number sampled (could subtract those ineligible and nonreachable) • Reality of Response Rate • Surveys 30-50% and in-person interviews 70+% • BIAS if lower • Interpreting response rate • 90% - Considered reliable • 75-90% - Usually yield reliable results • 50-74% - Interpret with scrutiny, but “adequate” • <50% - Caution when interpreting
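The response rate formula and the interpretation bands can be worked through in a short sketch. The counts are made up, and the band boundaries are an assumption: the slide lists "90%" and "75-90%" without saying which band a rate of exactly 90 or a rate between 74 and 75 belongs to, so the code treats each band as "at or above" its lower bound.

```python
def response_rate(completed, sampled, excluded=0):
    """Response rate = C / E x 100, where E may subtract ineligible
    and nonreachable cases from the number sampled."""
    eligible = sampled - excluded
    if eligible <= 0:
        raise ValueError("number sampled must exceed the number excluded")
    return completed / eligible * 100


def interpret(rate):
    """Map a rate (percent) to the guideline bands; cutoffs are assumed."""
    if rate >= 90:
        return "considered reliable"
    if rate >= 75:
        return "usually yields reliable results"
    if rate >= 50:
        return "adequate, interpret with scrutiny"
    return "interpret with caution"


# Hypothetical mail survey: 220 sampled, 20 ineligible or nonreachable,
# 132 returned (partials included).
rate = response_rate(completed=132, sampled=220, excluded=20)
print(f"{rate:.1f}% -> {interpret(rate)}")
```

Whether to subtract ineligible and nonreachable cases from E changes the result, so the choice should be reported alongside the rate.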

Major Types of Nonresponse • Failure to deliver the survey request • Contactability • Access impediments • No computer • No telephone • Inability to provide the requested data • Poor health • Low literacy • Mentally incapable • Refusals • Frequency of experience • Level of public knowledge • Use of incentives • Persistence at contact • Nature of request • Opportunity cost • Social isolation • Topic interest • Oversurveying • Leverage-salience theory (value attributes differently)

Increase Survey Response Rate • Inclusion of stamped (1st class) return envelope • Delivery by overnight courier • Pre-notification • Persuasion letters • Mention of positively perceived sponsor • Use of letterhead • Hand signed • Use of incentives (all receive or raffle) • Good design of tool • Household/interviewer “match” • Respondent “rules” (decide who answers) • Number and timing of attempts • Follow-Up (2-3 times) • Postcard vs. resending the questionnaire • Questionnaire better but more costly • Phone calls • Follow-Up letters