Hybrid Systems for Continuous Speech Recognition


Explore the implementation of a hybrid hierarchical decoder using Support Vector Machines in speech recognition systems, outperforming traditional HMMs. Details statistical models and modern training frameworks.



Hybrid Systems for Continuous Speech Recognition. Issac Alphonso, alphonso@isip.msstate.edu, Institute for Signal and Information Processing, Mississippi State University

Abstract: Statistical techniques based on hidden Markov models (HMMs) with Gaussian emission densities have dominated the signal processing and pattern recognition literature for the past 20 years. However, HMMs suffer from an inability to learn discriminative information and are prone to over-fitting and over-parameterization. Recent work in machine learning has focused on models, such as the support vector machine (SVM), that automatically control generalization and parameterization as part of the overall optimization process. SVMs have been shown to provide significant improvements in performance on small pattern recognition tasks compared to a number of conventional approaches. In this presentation, I will describe some of the work that I have done in implementing a kernel-based speech recognition system (this is based on work done by Aravind Ganapathiraju). I will then describe our work in using kernel-based machines as acoustic models in large vocabulary speech recognition systems. Finally, I will show that SVMs perform better than Gaussian mixture-based HMMs in open-loop recognition.

Bio: Issac Alphonso is an M.S. graduate of the Department of Electrical and Computer Engineering at Mississippi State University (MSU), where he worked under the supervision of Dr. Joe Picone. He has been a member of the Institute for Signal and Information Processing (ISIP) at MSU since 1997. Mr. Alphonso's work as a graduate student has focused on new acoustic modeling techniques for continuous speech recognition systems. His most recent work is the implementation of a hybrid hierarchical decoder that employs kernel-based techniques such as support vector machines, which replace the underlying Gaussian distributions in hidden Markov models. His thesis examines a new network training framework that reduces the complexity of the training process while retaining the robustness of the expectation-maximization-based supervised training framework.

Outline • What we do and how we fit in the big picture • The acoustic modeling problem for speech • Structural risk minimization • Support vector classifiers • Coupling vector machines to ASR systems • Proof of concept and experiments

Technology • Focus: speech recognition • First public-domain LVCSR system • Goal: accelerate research • Extensible, modular (C++, Java) • Easy to use (docs, tutorials, toolkits) • Benefit: technology • Standard benchmarks

Approach • ASR: HTK, SPHINX, CSLU • Research: Matlab, Octave, Python • ISIP: IFCs, Java apps, toolkits • Research: rapid prototyping, “fair” evaluations, ease of use, lightweight programming • Efficiency: memory, hyper-real-time training, parallel processing, data intensive

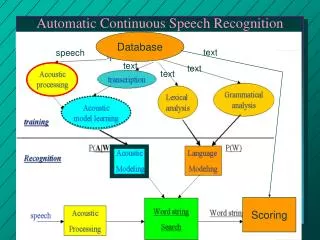

ASR Problem • The front-end maintains information important for modeling in a reduced parameter set • The language model typically predicts a small set of next words based on knowledge of a finite number of previous words (N-grams) • The search engine uses knowledge sources and models to choose among competing hypotheses

Acoustic Confusability • Requires reasoning under uncertainty! • Regions of overlap represent classification error • Reduce overlap by introducing acoustic and linguistic context • Figure: comparison of “aa” in “lOck” and “iy” in “bEAt” for SWB

Acoustic Modeling - HMMs • HMMs model temporal variation in the transition probabilities of the state machine • GMM emission densities are used to account for variations in speaker, accent, and pronunciation • Sharing model parameters is a common strategy to reduce complexity • Figure labels: words THREE, TWO, FIVE, EIGHT; states s0, s1, s2, s3, s4
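
As a rough illustration of how transition probabilities and emission densities combine into a score for an utterance, the forward algorithm can be sketched as follows. The two-state left-to-right topology and every number below are invented for the example and are not taken from the ISIP system; in a real system the per-frame emission values would come from the GMM (or other state model) rather than a hand-written table.

```python
def forward_likelihood(initial, transitions, emissions):
    """Return P(observations | model) using the forward algorithm.

    initial[i]        : probability of starting in state i
    transitions[i][j] : probability of moving from state i to state j
    emissions[t][j]   : likelihood of frame t under state j
    """
    num_states = len(initial)
    # alpha[j]: probability of the frames seen so far, ending in state j.
    alpha = [initial[j] * emissions[0][j] for j in range(num_states)]
    for frame in emissions[1:]:
        alpha = [
            sum(alpha[i] * transitions[i][j] for i in range(num_states)) * frame[j]
            for j in range(num_states)
        ]
    return sum(alpha)


# Hypothetical two-state left-to-right model and three frames of emission values.
initial = [1.0, 0.0]
transitions = [[0.6, 0.4], [0.0, 1.0]]
emissions = [[0.5, 0.1], [0.4, 0.3], [0.1, 0.6]]
likelihood = forward_likelihood(initial, transitions, emissions)
```

Production decoders work with log probabilities to avoid underflow on long utterances; plain probabilities are used here only to keep the recursion easy to read.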

Hierarchical Search • Each node in the hierarchy can dynamically expand to explore sub-networks at the next level. • HMMs are employed at the lowest level of the search hierarchy. • Word networks can generalize to unseen pronunciation variants in the data.

Statistical Models • Each state in the HMM is associated with a statistical model (except the non-emitting start and stop states). • The statistical model can implement any pdf that follows a defined interface contract. • The statistical model can transparently take the form of a GMM or SVM.
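
One way to picture the interface contract is an abstract base class that every state model implements, so the decoder never needs to know which kind of model it is scoring. The sketch below is an assumption made for illustration: the class names, the `log_score` method, and the single-Gaussian stand-in for a GMM are all hypothetical and do not reproduce the ISIP C++ classes.

```python
import math
from abc import ABC, abstractmethod


class StatisticalModel(ABC):
    """Hypothetical contract: every state model maps a feature vector to a log score."""

    @abstractmethod
    def log_score(self, features):
        ...


class DiagonalGaussian(StatisticalModel):
    """A single diagonal-covariance Gaussian, standing in for a full GMM."""

    def __init__(self, means, variances):
        self.means = means
        self.variances = variances

    def log_score(self, features):
        total = 0.0
        for x, mean, var in zip(features, self.means, self.variances):
            total += -0.5 * (math.log(2.0 * math.pi * var) + (x - mean) ** 2 / var)
        return total


class LinearSvmModel(StatisticalModel):
    """A linear SVM whose distance is mapped to a posterior by a sigmoid."""

    def __init__(self, weights, bias, sigmoid_a, sigmoid_b):
        self.weights = weights
        self.bias = bias
        self.sigmoid_a = sigmoid_a
        self.sigmoid_b = sigmoid_b

    def log_score(self, features):
        distance = sum(w * x for w, x in zip(self.weights, features)) + self.bias
        posterior = 1.0 / (1.0 + math.exp(self.sigmoid_a * distance + self.sigmoid_b))
        return math.log(posterior)


def score_state(model, features):
    """The decoder only sees the interface, not the concrete model type."""
    return model.log_score(features)


gaussian_score = score_state(DiagonalGaussian([0.0], [1.0]), [0.0])
svm_score = score_state(LinearSvmModel([1.0], 0.0, -1.0, 0.0), [0.0])
```

The design choice being illustrated is that swapping a GMM for an SVM changes only which object is attached to the state, not the search code that calls it.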

Maximum Likelihood Training • Data-driven modeling supervised only from a word-level transcription • Approach: maximum likelihood estimation • The EM algorithm is used to improve our estimates: • Guaranteed convergence to local maximum • No guard against overfitting! • Computationally efficient training algorithms (Forward-Backward) have been crucial • Decision trees are used to optimize sharing parameters, minimize system complexity and integrate additional linguistic knowledge
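
To make the EM bullet concrete, here is a minimal sketch of EM for a one-dimensional, two-component Gaussian mixture. It is not the Forward-Backward training used for HMMs; it only illustrates the same principle that each iteration does not decrease the data likelihood. The data, the starting parameters, and the variance floor are assumptions chosen for the example.

```python
import math


def gaussian_pdf(x, mean, var):
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def em_step(data, weights, means, variances):
    """Run one EM iteration for a 1-D Gaussian mixture.

    Returns the updated parameters and the log-likelihood of the data
    under the parameters that were passed in.
    """
    num_components = len(weights)
    responsibilities = []
    log_likelihood = 0.0
    # E-step: posterior probability of each component for each sample.
    for x in data:
        joint = [weights[k] * gaussian_pdf(x, means[k], variances[k])
                 for k in range(num_components)]
        total = sum(joint)
        log_likelihood += math.log(total)
        responsibilities.append([j / total for j in joint])
    # M-step: re-estimate parameters from the soft assignments.
    new_weights, new_means, new_variances = [], [], []
    for k in range(num_components):
        occupancy = sum(r[k] for r in responsibilities)
        mean = sum(r[k] * x for r, x in zip(responsibilities, data)) / occupancy
        var = sum(r[k] * (x - mean) ** 2 for r, x in zip(responsibilities, data)) / occupancy
        new_weights.append(occupancy / len(data))
        new_means.append(mean)
        new_variances.append(max(var, 1e-3))  # assumed variance floor, not from the slides
    return new_weights, new_means, new_variances, log_likelihood


# Hypothetical data drawn around -2 and +2, with deliberately poor starting means.
data = [-2.1, -1.9, -2.3, -1.7, 1.8, 2.2, 2.0, 1.6]
weights, means, variances = [0.5, 0.5], [-1.0, 1.0], [1.0, 1.0]
history = []
for _ in range(20):
    weights, means, variances, log_likelihood = em_step(data, weights, means, variances)
    history.append(log_likelihood)
```

The `history` list lets you check that the log-likelihood never decreases, which is the convergence property the slide refers to. Nothing in the update limits model complexity, which is the overfitting concern raised on the same slide.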

Drawbacks of Current Approach • ML convergence does not translate to optimal classification • Error from incorrect modeling assumptions • Finding the optimal decision boundary requires only one parameter!

Drawbacks of Current Approach • Data not separable by a hyperplane: a nonlinear classifier is needed • Gaussian MLE models tend toward the center of mass: overtraining leads to poor generalization

Structural Risk Minimization • The VC dimension is a measure of the complexity of the learning machine • A higher VC dimension gives a looser bound on the actual risk, thus penalizing a more complex model (Vapnik) • Expected risk: cannot be computed directly because it is not possible to estimate P(x,y) • Empirical risk: related to the expected risk by a bound that depends on the VC dimension, h • Approach: choose the machine that gives the least upper bound on the actual risk • Figure: expected risk, bound on the expected risk, VC confidence, and empirical risk plotted against VC dimension, with the optimum marked
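
The equations on this slide did not survive in the transcript. For orientation, the standard forms given in the Vapnik and Burges references are reproduced below. The notation is taken from Burges' tutorial and is an assumption about what the slide showed: $f(\mathbf{x},\alpha)$ is a classifier with parameters $\alpha$, labels are $y \in \{-1,+1\}$, $l$ is the number of training samples, $h$ is the VC dimension, and the bound holds with probability $1-\eta$.

```latex
\begin{align}
  R(\alpha) &= \int \tfrac{1}{2}\,\bigl|\,y - f(\mathbf{x},\alpha)\bigr| \; dP(\mathbf{x},y)
    && \text{(expected risk)} \\
  R_{\mathrm{emp}}(\alpha) &= \frac{1}{2l}\sum_{i=1}^{l} \bigl|\,y_i - f(\mathbf{x}_i,\alpha)\bigr|
    && \text{(empirical risk)} \\
  R(\alpha) &\le R_{\mathrm{emp}}(\alpha)
    + \sqrt{\frac{h\bigl(\log(2l/h) + 1\bigr) - \log(\eta/4)}{l}}
    && \text{(VC bound)}
\end{align}
```

The square-root term is the VC confidence. It grows with $h$, which is why a more complex machine can have a worse bound even when its empirical risk is lower.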

Support Vector Machines • Hyperplanes C0-C2 achieve zero empirical risk; C0 generalizes optimally • The data points that define the boundary are called support vectors • Optimization (separable data): quadratic optimization of a Lagrange functional, subject to the hyperplane constraints, minimizes the risk criterion (maximizes the margin) • Only a small portion of the training points become support vectors • Figure: class 1 and class 2 separated by candidate hyperplanes C0, C1 and C2, with margin hyperplanes H1 and H2, the normal vector w, the origin, and C0 marked as the optimal classifier
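
The hyperplane, constraint, and classifier equations are missing from the transcript. The textbook formulation for separable data, as given in the Burges tutorial, is sketched below. The symbols are assumptions about the slide's notation: $\mathbf{w}$ is the hyperplane normal, $b$ the offset, $(\mathbf{x}_i, y_i)$ the training pairs with $y_i \in \{-1,+1\}$, and $\alpha_i \ge 0$ the Lagrange multipliers.

```latex
\begin{align}
  \text{Hyperplane:} \quad & \mathbf{w}\cdot\mathbf{x} + b = 0 \\
  \text{Constraints:} \quad & y_i\,(\mathbf{w}\cdot\mathbf{x}_i + b) - 1 \ge 0 \quad \forall i \\
  \text{Objective:} \quad & \min_{\mathbf{w},\,b}\; \tfrac{1}{2}\,\lVert\mathbf{w}\rVert^{2} \\
  \text{Lagrangian:} \quad & L_P = \tfrac{1}{2}\,\lVert\mathbf{w}\rVert^{2}
      - \sum_{i} \alpha_i\, y_i\,(\mathbf{w}\cdot\mathbf{x}_i + b) + \sum_{i} \alpha_i \\
  \text{Final classifier:} \quad & f(\mathbf{x}) = \sum_{i} \alpha_i\, y_i\,(\mathbf{x}_i\cdot\mathbf{x}) + b
\end{align}
```

The margin between H1 and H2 is $2/\lVert\mathbf{w}\rVert$, so minimizing $\lVert\mathbf{w}\rVert^{2}$ maximizes it. Only training points with $\alpha_i > 0$ contribute to $f(\mathbf{x})$; these are the support vectors.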

SVMs as Nonlinear Classifiers • Data for practical applications is typically not separable using a hyperplane in the original input feature space • Transform the data to a higher dimension where a hyperplane classifier is sufficient to model the decision surface • Kernels are used for this transformation • Final classifier: a weighted sum of kernel evaluations between the input and the support vectors
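
A minimal sketch of a kernel classifier of the form f(x) = Σ αᵢ yᵢ K(xᵢ, x) + b is shown below, using an RBF kernel on the XOR pattern, which no single hyperplane in the input space can separate. The support vectors, multipliers, bias, and `gamma` are set by hand for illustration rather than obtained by training, and the choice of RBF kernel is an assumption, not a statement about the kernel used in the experiments.

```python
import math


def rbf_kernel(u, v, gamma):
    """K(u, v) = exp(-gamma * ||u - v||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-gamma * squared_distance)


def svm_decision(x, support_vectors, labels, alphas, bias, gamma):
    """Evaluate f(x) = sum_i alpha_i * y_i * K(x_i, x) + b."""
    return bias + sum(
        alpha * label * rbf_kernel(sv, x, gamma)
        for sv, label, alpha in zip(support_vectors, labels, alphas)
    )


# Hand-set XOR example: opposite corners share a class.
support_vectors = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0), (1.0, 0.0)]
labels = [+1, +1, -1, -1]
alphas = [1.0, 1.0, 1.0, 1.0]  # illustrative values, not the result of training
bias = 0.0
gamma = 1.0

decisions = [svm_decision(x, support_vectors, labels, alphas, bias, gamma)
             for x in support_vectors]
predicted = [1 if d > 0 else -1 for d in decisions]
```

The sign of the decision value gives the class, and its magnitude is the distance-like score that the later slides convert to a posterior.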

Experimental Progression • Proof of concept on speech classification using the Deterding vowel corpus • Coupling the SVM classifier to ASR system • Results on the OGI Alphadigits corpus

Vowel Classification • Deterding Vowel Data: 11 vowels spoken in “h*d” context; 10 log area parameters; 528 train, 462 test

Coupling to ASR • Data size: 30 million frames of data in the training set; solution: segmental phone models • Source for segmental data: use the HMM system in a bootstrap procedure; could also build a segment-based decoder • Probabilistic decoder coupling: SVMs with a sigmoid-fit posterior • Figure: a phone segment of k frames (example phones hh, aw, aa, r, y, uw) split into region 1 (0.3*k frames), region 2 (0.4*k frames) and region 3 (0.3*k frames), with the mean taken over each region
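
The segmental representation on this slide, with a k-frame segment split 0.3/0.4/0.3 and averaged per region, can be sketched as follows. The rounding rule for region boundaries and the minimum segment length are assumptions made for the example; the slide does not say how fractional frame counts were handled.

```python
def segmental_features(frames, proportions=(0.3, 0.4, 0.3)):
    """Map a variable-length segment to a fixed-length vector of region means.

    frames      : list of per-frame feature vectors (all the same dimension)
    proportions : fraction of the segment assigned to each region
    """
    num_frames = len(frames)
    if num_frames < len(proportions):
        raise ValueError("segment is too short to fill every region")
    # Region boundaries, rounded to whole frames (assumed rounding rule).
    boundaries = [0]
    cumulative = 0.0
    for proportion in proportions[:-1]:
        cumulative += proportion
        boundaries.append(round(cumulative * num_frames))
    boundaries.append(num_frames)

    dimension = len(frames[0])
    features = []
    for start, end in zip(boundaries, boundaries[1:]):
        region = frames[start:end]
        # Mean of each feature dimension over the frames in this region.
        features.extend(
            sum(frame[d] for frame in region) / len(region) for d in range(dimension)
        )
    return features


# Hypothetical 10-frame segment with one feature per frame: 0.0, 1.0, ..., 9.0.
segment = [[float(t)] for t in range(10)]
vector = segmental_features(segment)
```

Every segment, whatever its duration, comes out as a vector of three times the frame dimension, which is what allows a fixed-input classifier such as an SVM to be applied to phone segments.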

Coupling to ASR System • Figure: block diagram with the blocks HMM Recognition, Segmental Converter and Hybrid Decoder; the labeled signals are Features (Mel-Cepstra), Segment Information, N-best List, Segmental Features and Hypothesis

N-Best Rescoring • A word-internal N-gram decoder is used to generate the N-best word-graphs. • The word-graphs come with HMM and LM scores, which are used in the rescoring process. • The SVM score, which is computed during rescoring, is used as an additional knowledge source.
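
The rescoring idea can be sketched as a weighted sum of log scores per hypothesis followed by a re-sort. The dictionary field names, the weights, and all score values below are hypothetical; the slides do not state how the three scores were weighted.

```python
def rescore_nbest(hypotheses, lm_weight=1.0, svm_weight=1.0):
    """Return the hypotheses sorted by combined log score, best first."""

    def combined_score(hypothesis):
        return (
            hypothesis["hmm_score"]
            + lm_weight * hypothesis["lm_score"]
            + svm_weight * hypothesis["svm_score"]
        )

    return sorted(hypotheses, key=combined_score, reverse=True)


# Hypothetical 2-best list: the HMM and LM scores alone slightly favor "A 1 5".
nbest = [
    {"words": "A 1 5", "hmm_score": -119.0, "lm_score": -8.5, "svm_score": -20.0},
    {"words": "A 1 9", "hmm_score": -120.0, "lm_score": -8.0, "svm_score": -15.0},
]
without_svm = rescore_nbest(nbest, svm_weight=0.0)
with_svm = rescore_nbest(nbest)
```

In this made-up example the SVM score reverses the ranking, which is the role of an additional knowledge source: it can only reorder hypotheses the HMM decoder already proposed, so the correct answer has to be somewhere in the N-best list.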

Alphadigit Recognition • OGI Alphadigits: continuous, telephone-bandwidth letters and numbers (“A19B4E”) • 3329 utterances using 10-best lists generated by the HMM decoder • SVMs require a sigmoid posterior estimate to produce likelihoods; the sigmoid parameters are estimated from a large held-out set
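
A sigmoid posterior of the kind described by Platt maps an SVM distance f to p = 1 / (1 + exp(A·f + B)), with A and B fitted on held-out data. The sketch below fits them by plain gradient descent on cross-entropy, which is a simplification swapped in for readability: Platt's procedure uses a different optimizer and smoothed targets. The held-out distances and labels are invented.

```python
import math


def sigmoid_posterior(distance, a, b):
    """Posterior estimate p(class | distance) = 1 / (1 + exp(a * distance + b))."""
    return 1.0 / (1.0 + math.exp(a * distance + b))


def fit_sigmoid(distances, targets, learning_rate=0.1, iterations=2000):
    """Fit a and b by gradient descent on cross-entropy.

    targets are 1 for in-class samples and 0 for out-of-class samples.
    """
    a, b = 0.0, 0.0
    count = len(distances)
    for _ in range(iterations):
        grad_a = 0.0
        grad_b = 0.0
        for distance, target in zip(distances, targets):
            p = sigmoid_posterior(distance, a, b)
            # For this parameterization, d(loss)/d(a*distance + b) = target - p.
            grad_a += (target - p) * distance
            grad_b += target - p
        a -= learning_rate * grad_a / count
        b -= learning_rate * grad_b / count
    return a, b


# Hypothetical held-out SVM distances with some class overlap near zero.
held_out_distances = [-2.0, -1.5, -0.5, 0.2, -0.1, 0.4, 1.0, 2.0]
held_out_targets = [0, 0, 0, 0, 1, 1, 1, 1]
a, b = fit_sigmoid(held_out_distances, held_out_targets)
confident = sigmoid_posterior(2.0, a, b)
doubtful = sigmoid_posterior(-2.0, a, b)
```

A fitted `a` is negative when larger distances indicate the in-class side, so the posterior rises with distance. Using a held-out set rather than the SVM training set matters because distances on the training data are biased toward the margin.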

SVM Alphadigit Recognition • HMM system is cross-word state-tied triphones with 16 mixtures of Gaussian models • SVM system has monophone models with segmental features • System combination experiment yields another 1% reduction in error

Summary • We are the first speech group to apply kernel machines to the acoustic modeling problem • Performance exceeds that of HMM/GMM system, with a bit of HMM interaction • Algorithms for increased data sizes are key

Acknowledgments • Collaborators: Naveen Parihar and Joe Picone at Mississippi State • Consultants: Aravind Ganapathiraju (Conversay) and Jonathan Hamaker (Microsoft)

References • A. Ganapathiraju, “Support Vector Machines for Speech Recognition,” Ph.D. Dissertation, Department of Electrical and Computer Engineering, Mississippi State University, January 2002. • J. Platt, “Fast Training of Support Vector Machines using Sequential Minimal Optimization,” Advances in Kernel Methods, MIT Press, 1998. • V.N. Vapnik, “Statistical Learning Theory,” John Wiley, New York, NY, USA, 1998. • C.J.C. Burges, “A Tutorial on Support Vector Machines for Pattern Recognition,” AT&T Bell Laboratories, November 1999.

Accomplishments • Developed a set of Java-based graphical tools used to demonstrate fundamental concepts in signal processing and speech recognition: http://www.isip.msstate.edu/projects/speech/software/demonstrations/applets/ • Developed a set of Tcl/Tk-based graphical tools used to transcribe, segment and analyze speech recognition databases: http://www.isip.msstate.edu/projects/speech/software/legacy/ • Developed a generalized network-based speech recognition trainer, which is part of my master's thesis work. • Developed a hybrid HMM/SVM system used to rescore N-best word-graphs, based on work by Aravind Ganapathiraju. • Worked as part of a team to design and implement a public-domain HMM-based speech recognition system: http://www.isip.msstate.edu/projects/speech/software/