Network Training for Continuous Speech Recognition

This thesis introduces a new network training paradigm to simplify speech recognition training while maintaining robustness. Learn about the theoretical framework, experiments, and motivations behind this innovative approach.


Presentation Transcript

Network Training for Continuous Speech Recognition • Author: Issac John Alphonso Inst. for Signal and Info. Processing Dept. Electrical and Computer Eng. Mississippi State University • Contact Information: Box 0452 Mississippi State University Mississippi State, Mississippi 39762 Tel: 662-325-8335 Fax: 662-325-2298 Email: alphonso@isip.msstate.edu • URL: isip.msstate.edu/publications/books/msstate_theses/2003/network_training/

INTRODUCTION ABSTRACT A traditional trainer uses an expectation maximization (EM) based supervised training framework to estimate the parameters of a speech recognition system. EM-based parameter estimation for speech recognition is performed using several complicated stages of iterative re-estimation. These stages are prone to human error. This thesis describes a new network training paradigm that reduces the complexity of the training process, while retaining the robustness of the EM-based supervised training framework. The network trainer can achieve comparable recognition performance to a traditional trainer while alleviating the need for complicated systems and training recipes for speech recognition systems.

INTRODUCTION ORGANIZATION • Motivation: Why do we need a new training paradigm? • Theoretical Background: Review of the EM-based supervised training framework. • Network Training: The differences between network training and traditional training. • Experiments: Verification of the approach using industry-standard databases (e.g., TIDigits, Alphadigits and Resource Management). • Conclusion & Future Work

INTRODUCTION MOTIVATION • A traditional trainer uses an EM-based framework to estimate the parameters of a speech recognition system. • EM-based parameter estimation is performed in several complicated stages which are prone to human error. • A network trainer reduces the complexity of the training process by employing a soft decision criterion. • A network trainer achieves comparable performance and retains the robustness of the EM-based framework.

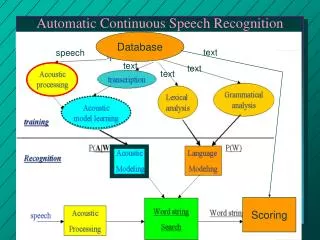

THEORETICAL BACKGROUND COMMUNICATION THEORETIC APPROACH [Figure: a message source followed by a linguistic channel, an articulatory channel and an acoustic channel; the observables are the message, words, sounds and features.] • Maximum likelihood formulation for speech recognition: P(W|A) = P(A|W) P(W) / P(A) • Objective: minimize the word error rate. • Approach: maximize P(W|A) during training. • Components: P(A|W): acoustic model (HMMs/GMMs); P(W): language model (statistical, FSNs, etc.)
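The decomposition P(W|A) = P(A|W) P(W) / P(A) can be illustrated with a minimal sketch. Because P(A) does not depend on W, a decoder only needs to compare log P(A|W) + log P(W) across hypotheses. The candidate words and all scores below are made-up values for illustration, not results from the thesis.

```python
import math

def decode(acoustic_loglik, language_prior):
    """Pick the hypothesis W that maximizes log P(A|W) + log P(W)."""
    # P(A) is identical for every hypothesis, so it is dropped from the argmax.
    scores = {
        w: acoustic_loglik[w] + math.log(language_prior[w])
        for w in acoustic_loglik
    }
    best = max(scores, key=scores.get)
    return best, scores

# Hypothetical acoustic log likelihoods log P(A|W) for one utterance.
acoustic_loglik = {"have": -12.0, "half": -11.5, "has": -14.0}
# Hypothetical language model probabilities P(W).
language_prior = {"have": 0.6, "half": 0.1, "has": 0.3}

best, scores = decode(acoustic_loglik, language_prior)
print(best)
```

In this toy case the language model overrides a slightly better acoustic score for "half", which is the kind of interaction between the two components that the formulation describes.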

THEORETICAL BACKGROUND MAXIMUM LIKELIHOOD • The approach treats the parameters of the model as fixed quantities whose values need to be estimated. • The model parameters are estimated by maximizing the log likelihood of observing the training data: $\log[P(O \mid \lambda)] = \sum_{t=1}^{T} \log[P(o_t \mid \lambda)]$ • The estimation of the parameters is computationally tractable due to the availability of efficient algorithms.
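As a minimal sketch of maximum likelihood estimation, the example below treats the model as a single one-dimensional Gaussian and assumes the observations are independent, so the log likelihood of the data is the sum of per-observation log likelihoods. The Gaussian model and the data values are assumptions chosen for illustration; a real recognizer estimates HMM/GMM parameters instead.

```python
import math

def gaussian_logpdf(x, mean, var):
    """Log density of a one-dimensional Gaussian."""
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)

def log_likelihood(obs, mean, var):
    """Sum of per-observation log likelihoods (independence assumed)."""
    return sum(gaussian_logpdf(o, mean, var) for o in obs)

def ml_estimate(obs):
    """Closed-form maximum likelihood estimates of mean and variance."""
    mean = sum(obs) / len(obs)
    var = sum((o - mean) ** 2 for o in obs) / len(obs)
    return mean, var

# Hypothetical training observations.
observations = [1.0, 2.0, 3.0, 4.0]
mean, var = ml_estimate(observations)
print(mean, var, log_likelihood(observations, mean, var))
```

Any other choice of mean or variance gives a lower log likelihood on the same data, which is what makes these values the maximum likelihood estimates.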

THEORETICAL BACKGROUND EXPECTATION MAXIMIZATION • A general framework that can be used to determine the maximum likelihood estimates of the model parameters. • The algorithm iteratively increases the likelihood of the model by maximizing Baum’s auxiliary function, where $\lambda'$ is the current estimate: $Q(\lambda, \lambda') = \sum_{q} P(O, q \mid \lambda') \log P(O, q \mid \lambda)$ • The expectation maximization algorithm is guaranteed never to decrease the likelihood from one iteration to the next, and it converges to a local maximum of the likelihood.
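The iterative behaviour of expectation maximization can be sketched on a model much simpler than an HMM. The example below assumes a two-component Gaussian mixture with unit variances and re-estimates only the weights and means; the data and starting values are invented for illustration. The E-step computes soft assignments (posteriors) and the M-step re-estimates the parameters from them.

```python
import math

def normal_pdf(x, mean):
    """Density of a unit-variance Gaussian."""
    return math.exp(-0.5 * (x - mean) ** 2) / math.sqrt(2 * math.pi)

def log_likelihood(data, weights, means):
    return sum(
        math.log(sum(w * normal_pdf(x, m) for w, m in zip(weights, means)))
        for x in data
    )

def em_step(data, weights, means):
    # E-step: posterior probability of each component for each data point.
    resp = []
    for x in data:
        joint = [w * normal_pdf(x, m) for w, m in zip(weights, means)]
        total = sum(joint)
        resp.append([j / total for j in joint])
    # M-step: re-estimate weights and means from the soft counts.
    k_range = range(len(means))
    counts = [sum(r[k] for r in resp) for k in k_range]
    new_weights = [c / len(data) for c in counts]
    new_means = [
        sum(r[k] * x for r, x in zip(resp, data)) / counts[k] for k in k_range
    ]
    return new_weights, new_means

# Hypothetical data drawn around two clusters.
data = [-2.1, -1.9, -2.4, -1.6, 1.8, 2.2, 2.0, 2.5]
weights, means = [0.5, 0.5], [-0.5, 0.5]
history = [log_likelihood(data, weights, means)]
for _ in range(10):
    weights, means = em_step(data, weights, means)
    history.append(log_likelihood(data, weights, means))
print(means, history[0], history[-1])
```

The recorded log likelihoods in `history` never decrease, which is the monotonicity property of EM; the final estimate depends on the starting point.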

THEORETICAL BACKGROUND HIDDEN MARKOV MODELS • A random process that consists of a set of states and their corresponding transition probabilities: • The prior probabilities: $\pi_j = P(t = 0, \text{state} = j)$ • The state transition probabilities: $a_{ij} = P(\text{time} = t + 1, \text{state} = j \mid \text{time} = t, \text{state} = i)$ • The state emission probabilities: $b_j(O_t) = P(O_t \mid \text{time} = t, \text{state} = j)$
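The three sets of HMM parameters (prior, transition and emission probabilities) are enough to compute the likelihood of an observation sequence with the forward algorithm. The sketch below assumes discrete emissions and a two-state left-to-right model; the parameter values and the symbols "x" and "y" are hypothetical.

```python
def forward_likelihood(pi, a, b, observations):
    """Return P(O | model) using the forward algorithm.

    pi[j]    : prior probability of starting in state j
    a[i][j]  : probability of moving from state i to state j
    b[j][o]  : probability of emitting symbol o in state j
    """
    n = len(pi)
    # Initialization: start in state j and emit the first observation.
    alpha = [pi[j] * b[j][observations[0]] for j in range(n)]
    # Induction: sum over predecessor states, then emit the next observation.
    for o in observations[1:]:
        alpha = [
            sum(alpha[i] * a[i][j] for i in range(n)) * b[j][o]
            for j in range(n)
        ]
    # Termination: sum over all final states.
    return sum(alpha)

# Hypothetical two-state left-to-right model.
pi = [1.0, 0.0]
a = [[0.7, 0.3], [0.0, 1.0]]
b = [{"x": 0.9, "y": 0.1}, {"x": 0.2, "y": 0.8}]

print(forward_likelihood(pi, a, b, ["x", "y"]))
```

Summing the likelihood over every possible observation sequence of a fixed length gives 1, which is a quick sanity check on the parameters.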

NETWORK TRAINER TRAINING RECIPE [Figure: training stages: Flat Start, CI Training (context-independent), State Tying, CD Training (context-dependent).] • The flat start stage segments the acoustic signal and seeds the speech and non-speech models. • The context-independent stage inserts an optional silence model between words. • The state-tying stage clusters the model parameters via linguistic rules to compensate for sparse training data. • The context-dependent stage is similar to the context-independent stage (words are modeled using context).

NETWORK TRAINER TRANSCRIPTIONS Traditional Trainer: sil hh ae v sil Network Trainer: SILENCE HAVE SILENCE • The network trainer uses word-level transcriptions, which do not impose restrictions on the word pronunciation. • The traditional trainer uses phone-level transcriptions, which use the canonical pronunciation of the word. • Using orthographic transcriptions removes the need for directly dealing with phonetic contexts during training.
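The difference between the two transcription levels can be sketched as a lexicon lookup: a word-level transcription is expanded into every phone sequence the lexicon allows, rather than being fixed to one canonical sequence in advance. The lexicon below is hypothetical, and the second pronunciation of HAVE is an invented variant included only to show the expansion.

```python
from itertools import product

# Hypothetical lexicon: each word maps to one or more pronunciations.
LEXICON = {
    "SILENCE": [["sil"]],
    "HAVE": [["hh", "ae", "v"], ["hh", "ax", "v"]],  # second entry is illustrative
}

def expand(words, lexicon):
    """Return every phone sequence consistent with a word-level transcription."""
    choices = [lexicon[w] for w in words]
    return [
        [phone for pron in combo for phone in pron]
        for combo in product(*choices)
    ]

paths = expand(["SILENCE", "HAVE", "SILENCE"], LEXICON)
for path in paths:
    print(" ".join(path))
```

A trainer working from the word-level form can weigh all of these paths during re-estimation, whereas a phone-level transcription commits to one of them.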

NETWORK TRAINER SILENCE MODELS [Figure: multi-path and single-path silence model topologies.] • The multi-path silence model is used between words. • The single-path silence model is used at utterance ends.

NETWORK TRAINER DURATION MODELING [Figure: silence handling in the network trainer and in the traditional trainer.] • The network trainer uses a silence word, which removes the need to insert silence into the phonetic pronunciation. • The traditional trainer deals with silence between words by explicitly specifying it in the phonetic pronunciation.

NETWORK TRAINER PRONUNCIATION MODELING [Figure: pronunciation modeling in the network trainer and in the traditional trainer.] • A pronunciation network precludes the need to use a single canonical pronunciation for each word. • The pronunciation network has the added advantage of being able to generalize to unseen pronunciations.

NETWORK TRAINER OPTIONAL SILENCE MODELING • The network trainer uses a fixed silence at utterance bounds and an optional silence between words. • We use a fixed silence at utterance bounds to avoid an underestimated silence model.
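A minimal sketch of this silence policy: given a word-level transcription, the allowed paths always begin and end with silence, and each gap between adjacent words may or may not contain silence. The function name and the SILENCE token are illustrative choices, and a real trainer would represent these alternatives as a network rather than enumerating them.

```python
from itertools import product

def silence_paths(words, silence="SILENCE"):
    """Enumerate word sequences with fixed silence at both utterance bounds
    and optional silence in each gap between adjacent words."""
    paths = []
    n_gaps = max(len(words) - 1, 0)
    for gaps in product([False, True], repeat=n_gaps):
        path = [silence]  # fixed silence at the start of the utterance
        for i, word in enumerate(words):
            path.append(word)
            if i < n_gaps and gaps[i]:
                path.append(silence)  # optional silence between words
        path.append(silence)  # fixed silence at the end of the utterance
        paths.append(path)
    return paths

for path in silence_paths(["ONE", "TWO"]):
    print(" ".join(path))
```

An utterance with n words yields 2^(n-1) alternatives, which is why a compact network representation is preferable to explicit enumeration for long utterances.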

NETWORK TRAINER SILENCE DURATION MODELING • Network training uses a single-path silence at utterance bounds and a multi-path silence between words. • We use a single-path silence at utterance bounds to avoid uncertainty in modeling silence.

EXPERIMENTS SPEECH DATABASES [Figure: word error rate (0% to 40%) versus level of difficulty for the tasks Command and Control, Digits, Letters and Numbers, Continuous Digits, Read Speech, Broadcast News and Conversational Speech.]

EXPERIMENTS TIDIGITS DATABASE • Collected by Texas Instruments in 1983 to establish a common baseline for connected digit recognition tasks. • Includes digits from ‘zero’ through ‘nine’ and ‘oh’ (an alternative pronunciation for ‘zero’). • The corpus consists of 326 speakers (111 men, 114 women and 101 children).

EXPERIMENTS TIDIGITS: WER COMPARISON • The network trainer achieves comparable performance to the traditional trainer. • The network trainer converges in word error rate to the traditional trainer.
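Word error rate, the metric used in these comparisons, is conventionally computed as the minimum number of word substitutions, deletions and insertions needed to turn the hypothesis into the reference, divided by the number of reference words. The sketch below is a generic edit-distance implementation, not the scoring tool used in the thesis, and the example strings are invented.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the reference length.

    Assumes the reference contains at least one word.
    """
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(
                prev[j] + 1,             # deletion of a reference word
                cur[j - 1] + 1,          # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution or match
            ))
        prev = cur
    return prev[-1] / len(ref)

print(word_error_rate("one two three four", "one too three"))
```

In the example, one substitution and one deletion against four reference words give a word error rate of 0.5.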

EXPERIMENTS TIDIGITS: LIKELIHOOD COMPARISON [Figure: average log likelihood versus iterations; dashed line: Network Trainer, solid line: Traditional Trainer.]

EXPERIMENTS ALPHADIGITS (AD) DATABASE • Collected by the Oregon Graduate Institute (OGI) using the CSLU T1 data collection system. • Includes letters (‘a’ through ‘z’) and numbers (‘zero’ through ‘nine’ and ‘oh’). • The database consists of 2,983 speakers (1,419 men, 1,533 women and 30 children).

EXPERIMENTS AD: WER COMPARISON • The network trainer achieves comparable performance to the traditional trainer. • The network trainer converges in word error rate to the traditional trainer.

EXPERIMENTS AD: LIKELIHOOD COMPARISON [Figure: average log likelihood versus iterations; dashed line: Network Trainer, solid line: Traditional Trainer.]

EXPERIMENTS RESOURCE MANAGEMENT (RM) DATABASE • Collected by the Defense Advanced Research Projects Agency (DARPA). • Includes a collection of spoken sentences pertaining to a naval RM task. • The database consists of 80 speakers, each reading two ‘dialect’ sentences plus 40 sentences from the RM text corpus.

EXPERIMENTS RM: WER COMPARISON • The network trainer achieves comparable performance to the traditional trainer. • It is important to note that the 1.8% degradation in performance is not significant (MAPSSWE test).

EXPERIMENTS RM: LIKELIHOOD COMPARISON [Figure: average log likelihood versus iterations; dashed line: Network Trainer, solid line: Traditional Trainer.]

CONCLUSIONS SUMMARY • Explored the effectiveness of a novel training recipe in the re-estimation process for speech processing. • Analyzed performance on three databases. • For TIDigits, at 7.6% WER, the performance of the network trainer was better by about 0.1%. • For OGI Alphadigits, at 35.3% WER, the performance of the network trainer was better by about 2.7%. • For Resource Management, at 27.5% WER, the performance degraded by about 1.8% (not significant).

CONCLUSIONS FUTURE WORK • The results presented use single-mixture context-dependent models for training and recognition. • An efficient tree-based decoder is currently under development and context-dependent results are planned. • The databases presented all use single pronunciations for each word in the lexicon. • The ability to run large databases like Switchboard, which has multiple pronunciations, requires a tree-based decoder.

APPENDIX PROGRAM OF STUDY

APPENDIX ACKNOWLEDGEMENTS • I would like to thank Dr. Joe Picone for his mentoring and guidance throughout my graduate program. • I would also like to thank Jon Hamaker for his valuable suggestions throughout my thesis. • Finally, I would like to thank my co-workers at the Institute for Signal and Information Processing (ISIP) for all their help.
