Innovative Methods for Learning Classifier Combinations: Exploring Federated and Global Fusion

This paper discusses several local fusion methods and presents a novel approach, termed "federated fusion", for multi-topic problems. We compare it empirically against the global fusion approach described previously [Bartell et al. 1994]. Our experiments on the Reuters Corpus (RCV1-v2) combine the scores of individual classifiers under each scheme. Federated fusion yields higher average utility, whereas global fusion performs better on a larger number of topics. The difference appears to be related to the number of relevant documents per topic, with federated fusion doing better on topics that have few relevant documents. Ultimately, the choice between methods depends on user objectives and topic characteristics.



Presentation Transcript

Methods for Learning Classifier Combinations: No Clear Winner
Dmitriy Fradkin, Paul Kantor
DIMACS, Rutgers University
Dmitriy Fradkin, ACM SAC'2005

[Figure: for each training topic (Topic 1, Topic 2, …), Systems 1 and 2 are combined by local fusion; for new topics (?), the combination rule comes from federated or global fusion.]

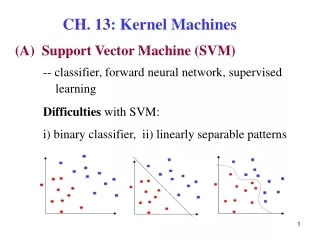

Overview
• Discuss local fusion methods
• Describe a new fusion approach for multi-topic problems, which we call “federated”
• Compare it empirically to the global approach previously described in [Bartell et al. 1994]
• Interpret the results

Related Work in IR
• [Bartell et al. 1994]: global fusion of systems
• [Hull et al. 1996]: local fusion methods for document filtering (averaging, linear and logistic regression, grid search)
• [Lam and Lai 2001] used category-specific features to model the error rate, then picked the single best system for each category
• [Bennett et al. 2002] used “reliability indicators” together with scores as input to a metaclassifier

Combination of Classifiers
Relevance judgment: [equation not preserved]
Decision rule: [equation not preserved]
The problem of fusion can be formulated as the problem of finding a way to combine several decision rules.

Linear Combinations
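The slide's formulas were not preserved. As an illustration only, the sketch below assumes the common form of a linear combination: each system i gives a document d a score s_i(d), the fused score is the weighted sum of those scores, and the document is submitted as relevant when that sum exceeds a threshold. The function names and example numbers are hypothetical.

```python
from typing import Sequence


def fused_score(scores: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted sum of per-system scores s_i(d) with weights w_i (assumed form)."""
    if len(scores) != len(weights):
        raise ValueError("need exactly one weight per system")
    return sum(w * s for w, s in zip(weights, scores))


def decide(scores: Sequence[float], weights: Sequence[float], threshold: float) -> bool:
    """Submit the document as relevant iff the fused score exceeds the threshold."""
    return fused_score(scores, weights) > threshold


# Two hypothetical systems score one document: 0.6*0.8 + 0.4*0.3 = 0.6 > 0.5
print(decide([0.8, 0.3], [0.6, 0.4], threshold=0.5))
```

Because the decision depends on the weights only through a dot product, any fusion method that ends in such a rule differs from another only in how it picks the weights and the threshold.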

Input to Local Fusion

Local Fusion Methods
• A new fusion method: [equation not preserved]
• Other methods: [equations not preserved]

Local Fusion Methods (cont.)
Since log is a monotone function, the underlying decision rule is linear.

Threshold Tuning
• Once a vector of parameters is found for a local rule, we compute the fusion score on the training set and find the threshold that maximizes a particular utility measure: [equation not preserved]
• Different parameter vectors lead to different scores, and therefore to different decisions.
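The tuning step can be sketched as a single pass over the training documents sorted by fusion score. Each distinct score is tried as a cutoff, and the cutoff with the best utility is kept. The slide does not preserve which utility is maximized; this sketch assumes the linear utility 2*D+ - D- (D+ and D- are the submitted positive and negative documents). The function names are hypothetical.

```python
from typing import Callable, Sequence, Tuple


def linear_utility(d_pos: int, d_neg: int) -> float:
    # Assumed utility: +2 per submitted relevant doc, -1 per submitted non-relevant doc
    return 2 * d_pos - d_neg


def tune_threshold(
    scores: Sequence[float],
    labels: Sequence[int],
    utility: Callable[[int, int], float] = linear_utility,
) -> Tuple[float, float]:
    """Return (threshold, utility) maximizing utility when docs with score >= threshold are submitted."""
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    best_t, best_u = float("inf"), utility(0, 0)  # baseline: submit nothing
    d_pos = d_neg = 0
    for i, (s, y) in enumerate(ranked):
        if y:
            d_pos += 1
        else:
            d_neg += 1
        # Only cut between distinct score values, so tied documents stay together
        if i + 1 < len(ranked) and ranked[i + 1][0] == s:
            continue
        u = utility(d_pos, d_neg)
        if u > best_u:
            best_t, best_u = s, u
    return best_t, best_u


print(tune_threshold([0.9, 0.8, 0.7, 0.4, 0.2], [1, 1, 0, 1, 0]))
```

Here the best cutoff is 0.4. Submitting the top four documents gives 3 relevant and 1 non-relevant, for a utility of 2*3 - 1 = 5.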

Global Fusion
When there are many topics:
• Combine all document-query relevance judgments and the corresponding scores (as if they came from a single query)
• Compute a local fusion rule on the pooled data
When data for a new training topic becomes available, we can either:
• solve the problem from scratch, or
• continue using the same rule.
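Pooling is the key step in global fusion. The slides do not say which local rule is then fitted. This sketch assumes ordinary least-squares linear regression of the relevance labels on the system scores, which is one of the local methods listed for [Hull et al. 1996]. The helper names and the per-topic data layout are hypothetical. A pooled matrix that is singular (for example, a system whose scores are constant) would make the elimination divide by zero, and a practical version would add regularization.

```python
from typing import Dict, List, Sequence, Tuple

Example = Tuple[Sequence[float], int]  # (per-system scores, relevance label 0/1)


def fit_linear_rule(data: List[Example]) -> List[float]:
    """Least-squares weights (intercept first) regressing labels on system scores."""
    X = [[1.0, *scores] for scores, _ in data]
    y = [float(label) for _, label in data]
    d = len(X[0])
    # Normal equations A w = b, with A = X^T X and b = X^T y
    A = [[sum(r[i] * r[j] for r in X) for j in range(d)] for i in range(d)]
    b = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(d)]
    # Gaussian elimination with partial pivoting
    for c in range(d):
        p = max(range(c, d), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        b[c], b[p] = b[p], b[c]
        for r in range(c + 1, d):
            f = A[r][c] / A[c][c]
            for k in range(c, d):
                A[r][k] -= f * A[c][k]
            b[r] -= f * b[c]
    # Back substitution
    w = [0.0] * d
    for r in reversed(range(d)):
        w[r] = (b[r] - sum(A[r][k] * w[k] for k in range(r + 1, d))) / A[r][r]
    return w


def global_fusion(per_topic: Dict[str, List[Example]]) -> List[float]:
    """Pool judgments from every training topic as if they came from one query, then fit one rule."""
    pooled = [ex for examples in per_topic.values() for ex in examples]
    return fit_linear_rule(pooled)
```

The fitted weights still need a threshold, tuned on the pooled training scores as on the Threshold Tuning slide. The "solve from scratch" option corresponds to calling `global_fusion` again with the new topic added to the pool.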

Input to Global Fusion

Question:
• Suppose we know local fusion rules on a set of queries.
• Can we exploit this knowledge on other queries?
• Can we come up with a scheme that can easily incorporate new training queries?

Federated Fusion
New training topics are easy to incorporate!
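The federated combination formula did not survive in the transcript. One reading consistent with the previous slide's question and with "new training topics are easy to incorporate" is this: learn a local fusion rule independently for each training topic, then combine those rules. This sketch assumes the combination is a plain average of the per-topic parameter vectors. The averaging and the `FederatedRule` class are hypothetical and are not taken from the slides.

```python
from typing import List, Sequence


class FederatedRule:
    """Hypothetical federated combiner: running average of per-topic local-fusion weight vectors."""

    def __init__(self, dim: int) -> None:
        self.total = [0.0] * dim
        self.n_topics = 0

    def add_topic(self, local_weights: Sequence[float]) -> None:
        # Incorporating a new training topic costs O(dim); earlier topics are never refit.
        if len(local_weights) != len(self.total):
            raise ValueError("dimension mismatch")
        self.total = [t + w for t, w in zip(self.total, local_weights)]
        self.n_topics += 1

    def weights(self) -> List[float]:
        """Averaged weight vector to apply to new topics."""
        if self.n_topics == 0:
            raise ValueError("no topics added yet")
        return [t / self.n_topics for t in self.total]


rule = FederatedRule(dim=2)
rule.add_topic([1.0, 3.0])  # local rule learned on one training topic
rule.add_topic([3.0, 1.0])  # local rule learned on another
print(rule.weights())
```

Under this assumption, adding a topic only updates a running sum. Global fusion, in contrast, has to refit on the enlarged pool (or keep the stale rule). This matches the slides' remark that federated fusion is computationally more efficient.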

Experimental Evaluation
• Reuters Corpus Volume 1, version 2 (RCV1-v2)
• 99 topics
• Completely judged
• ~23K documents (as in [Lewis et al. 2004]) used to train the individual systems
• 4060 documents selected (from ~800K) to construct fusion rules
• 9-fold cross-validation over topics
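Cross-validation "over topics" means the folds partition topics rather than documents. Fusion rules are learned on some topics and evaluated on topics they have never seen. With 99 topics and 9 folds, each fold holds out 11 topics and trains on the remaining 88. A minimal sketch follows. The random shuffle, the seed, and the topic identifiers are assumptions, since the slides do not describe how topics were assigned to folds.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def topic_folds(
    topics: Sequence[str], k: int = 9, seed: int = 0
) -> Iterator[Tuple[List[str], List[str]]]:
    """Yield (train_topics, test_topics) pairs; every topic is held out exactly once."""
    shuffled = list(topics)
    random.Random(seed).shuffle(shuffled)  # assumed random assignment of topics to folds
    folds = [shuffled[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [t for j, fold in enumerate(folds) if j != i for t in fold]
        yield train, test


topics = [f"T{i}" for i in range(99)]  # hypothetical topic identifiers
for train, test in topic_folds(topics):
    print(len(train), len(test))  # 88 11 for every fold
```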

Utility Measures
T+: all positive documents; D+: submitted positive documents; D-: submitted negative documents
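The measure definitions on this slide were lost. This sketch assumes the measure is the TREC-11 scaled utility T11SU that the Results slide reports, as defined for the TREC 2002 filtering track and used with RCV1-v2 by [Lewis et al. 2004]. In terms of the symbols above: T11U = 2*D+ - D-, normalized by the maximum utility 2*T+, clipped below at MinNU = -0.5, and rescaled to [0, 1]. The function name is hypothetical.

```python
def t11su(d_pos: int, d_neg: int, t_pos: int, min_nu: float = -0.5) -> float:
    """Scaled TREC-11 utility for one topic (assumed definition).

    d_pos = D+ (submitted positives), d_neg = D- (submitted negatives),
    t_pos = T+ (all positive documents for the topic).
    """
    if t_pos <= 0:
        raise ValueError("T11SU is undefined for a topic with no positive documents")
    t11u = 2 * d_pos - d_neg          # raw linear utility
    t11nu = t11u / (2 * t_pos)        # normalized by the best achievable utility
    return (max(t11nu, min_nu) - min_nu) / (1 - min_nu)


print(t11su(10, 0, 10))   # perfect filtering of 10 positives
print(t11su(0, 0, 10))    # submitting nothing
```

Perfect filtering scores 1.0, and submitting nothing scores 1/3. Because of the clipping, a topic can never score below 0, however many negative documents are submitted.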

Term Representation
[equation not preserved], where f’(t,d) is the number of times term t occurs in document d.
IDF weighting: let i’(t) be the number of documents in the training set T that contain term t. Then: [equation not preserved]
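The weighting formulas were not preserved. The notation f’(t,d) and i’(t) matches the "ltc" scheme that [Lewis et al. 2004] describe for RCV1-v2. This sketch therefore assumes log term frequency f(t,d) = 1 + ln f’(t,d) (0 when the term is absent), IDF i(t) = ln(|T| / i’(t)), their product as the raw weight, and cosine normalization. Tokenization, the handling of terms unseen in T, and the function names are hypothetical choices.

```python
import math
from collections import Counter
from typing import Dict, Sequence


def idf(training_docs: Sequence[Sequence[str]]) -> Dict[str, float]:
    """i(t) = ln(|T| / i'(t)), where i'(t) counts training documents containing t."""
    df = Counter(t for doc in training_docs for t in set(doc))
    n = len(training_docs)
    return {t: math.log(n / c) for t, c in df.items()}


def ltc_vector(doc: Sequence[str], idf_table: Dict[str, float]) -> Dict[str, float]:
    """(1 + ln f'(t,d)) * i(t), cosine-normalized; terms unseen in T are dropped."""
    tf = Counter(doc)  # f'(t, d) for each term present in d
    w = {t: (1 + math.log(f)) * idf_table[t] for t, f in tf.items() if t in idf_table}
    norm = math.sqrt(sum(v * v for v in w.values()))
    return {t: v / norm for t, v in w.items()} if norm > 0 else w


table = idf([["a", "b"], ["a", "c"]])  # "a" occurs in every training doc, so i(a) = 0
print(ltc_vector(["a", "b"], table))
```

A term that occurs in every training document gets zero weight. In the example, the whole unit-length vector therefore falls on "b".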

Individual Classifiers
• Bayesian Binary Regression (BBR) [Genkin et al. 2004]
• kNN, k = 384 (k was chosen on the basis of prior experiments)
• Rocchio classifier
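Of the three systems, Rocchio is the simplest to illustrate. A topic prototype is built from the centroid of its positive training vectors, minus a down-weighted centroid of its negative ones, and a document is scored by its dot product with that prototype. The slides give no Rocchio parameters, so the `beta` and `gamma` defaults below are hypothetical, as are the function names. Documents are sparse term-weight dictionaries, for example cosine-normalized vectors.

```python
from collections import defaultdict
from typing import Dict, Sequence

Vec = Dict[str, float]


def centroid(vectors: Sequence[Vec]) -> Vec:
    """Component-wise mean of sparse vectors (empty input gives an empty vector)."""
    acc: Dict[str, float] = defaultdict(float)
    for v in vectors:
        for t, x in v.items():
            acc[t] += x
    return {t: x / len(vectors) for t, x in acc.items()} if vectors else {}


def rocchio_prototype(
    pos: Sequence[Vec], neg: Sequence[Vec], beta: float = 1.0, gamma: float = 0.25
) -> Vec:
    """beta * centroid(positives) - gamma * centroid(negatives); beta, gamma are assumed values."""
    proto: Dict[str, float] = defaultdict(float)
    for t, x in centroid(pos).items():
        proto[t] += beta * x
    for t, x in centroid(neg).items():
        proto[t] -= gamma * x
    return dict(proto)


def rocchio_score(proto: Vec, doc: Vec) -> float:
    """Dot product of the prototype with a document vector."""
    return sum(w * doc.get(t, 0.0) for t, w in proto.items())


proto = rocchio_prototype([{"a": 1.0}], [{"b": 1.0}])
print(rocchio_score(proto, {"a": 1.0}), rocchio_score(proto, {"b": 1.0}))
```

Scores like these, together with those from BBR and kNN, are the per-system inputs s_i(d) that the fusion rules combine.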

Results
Average T11SU measure across the 99 topics of RCV1

Conclusions
• The centroid method performs best with federated fusion
• Federated fusion gives higher average utility, but global fusion performs better on a greater number of topics.
• This seems to be related to the number of relevant documents for individual topics (federated fusion is better for topics with few relevant documents).
• No clear winner: the choice of method depends on the user’s objectives
• However, federated fusion is computationally more efficient
• Topic properties have to be considered when choosing a combination method

Acknowledgments
• KD-D group via NSF grant EIA-0087022
• Members of the DIMACS MMS project: Fred Roberts (PI), Andrei Anghelescu, Alex Genkin, Dave Lewis, David Madigan, Vladimir Menkov
• Kwong Bor Ng
• Anonymous reviewers