Differential Privacy: Case Studies

Denny Lee (dennyl@microsoft.com), Microsoft SQLCAT Team | Best Practices

Case Studies
• Quantitative Case Study:
  • Windows Live / MSN Web Analytics data
• Qualitative Case Study:
  • Clinical Physicians' Perspective
• Future Study:
  • OHSU/CORI data set, to apply differential privacy in a healthcare setting

Sanitization Concept
• Mask individuals within the data by creating a sanitization point between the user interface and the data.
• The magnitude of the noise is given by the theorem. If many queries f₁, f₂, … are to be made, noise proportional to Σᵢ Δfᵢ suffices. For many query sequences, we can often use less noise than Σᵢ Δfᵢ.
• Note that ΔHistogram = 1, independent of the number of cells.
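The noise calibration described above can be sketched in a few lines. This is only an illustration under stated assumptions: the slide does not name the theorem, so the sketch assumes Laplace noise with scale (Σᵢ Δfᵢ) / ε, and the privacy parameter `epsilon`, the function names, and the sample counts are all hypothetical.

```python
import random


def laplace_noise(scale, rng):
    """Draw one Laplace(0, scale) sample as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noise_scale(sensitivities, epsilon):
    """Noise scale for a sequence of queries: (sum of the Δf_i) / epsilon.

    `epsilon` is an assumed privacy parameter; it does not appear in the slides.
    """
    return sum(sensitivities) / epsilon


def noisy_histogram(counts, epsilon, rng):
    """Add noise to every cell of a histogram.

    The whole histogram has sensitivity 1 no matter how many cells it has,
    so each cell receives noise of scale 1 / epsilon.
    """
    scale = noise_scale([1.0], epsilon)
    return [c + laplace_noise(scale, rng) for c in counts]


rng = random.Random(0)
# Three separate counting queries, each with sensitivity 1.
print(noise_scale([1.0, 1.0, 1.0], epsilon=0.5))
# One histogram with three cells needs only the noise of a single query.
print(noisy_histogram([1200, 850, 430], epsilon=0.5, rng=rng))
```

The contrast between the two calls is the point of the "ΔHistogram = 1" remark: asking three independent questions triples the noise scale, while one histogram with three cells does not.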

Generating the noise
• To generate the noise, a pseudo-random number generator creates a stream of numbers, e.g.:
• The resulting translation of this stream is:
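The example values from the original slide are not part of this transcript. As a stand-in, the sketch below shows one plausible way such a translation could work, assuming the stream consists of uniform numbers in (0, 1) and the target is Laplace noise obtained through the inverse CDF. The seed, the scale, and the function name are illustrative assumptions, not the presenter's implementation.

```python
import math
import random


def uniform_to_laplace(u, scale):
    """Translate a uniform value u in (0, 1) into Laplace(0, scale) noise.

    Uses the inverse CDF: x = -scale * sgn(u - 0.5) * ln(1 - 2 * |u - 0.5|).
    """
    if not 0.0 < u < 1.0:
        raise ValueError("u must lie strictly between 0 and 1")
    centered = u - 0.5
    sign = 1.0 if centered >= 0 else -1.0
    return -scale * sign * math.log(1.0 - 2.0 * abs(centered))


rng = random.Random(2024)  # fixed seed only so the sketch is repeatable
# random() can in principle return exactly 0.0, so such draws are skipped.
stream = [u for u in (rng.random() for _ in range(5)) if u > 0.0]
noise = [uniform_to_laplace(u, scale=2.0) for u in stream]

for u, n in zip(stream, noise):
    print(f"uniform {u:.4f} -> noise {n:+.3f}")
```

Python's default generator is not cryptographically secure; a real deployment would likely want a stronger source such as `random.SystemRandom`.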

Adding noise
• The stream of numbers above is applied to the result set.
• While masking the individuals, it still allows accurate percentages and trending.
• Provided the noise magnitude is small (i.e., the error is small), the numbers themselves are accurate within an acceptable margin.
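A small sketch can make the claim about percentages concrete. The service names come from later slides, but the counts below are made up for illustration, and the Laplace noise with scale 2 is an assumption rather than the setting used in the case study.

```python
import random


def laplace_noise(scale, rng):
    """Draw one Laplace(0, scale) sample as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def percentages(values):
    """Express each value as a percentage of the total."""
    total = sum(values)
    return [100.0 * v / total for v in values]


rng = random.Random(42)
# Invented result set: users per service (not real Windows Live figures).
true_counts = {"Messenger": 48200, "Mail": 35100, "Search": 16700}
noisy_counts = {name: count + laplace_noise(2.0, rng)
                for name, count in true_counts.items()}

true_pct = percentages(list(true_counts.values()))
noisy_pct = percentages(list(noisy_counts.values()))

for name, t, n in zip(true_counts, true_pct, noisy_pct):
    print(f"{name:<10} true {t:6.3f}%   noisy {n:6.3f}%")
```

With counts in the tens of thousands and noise of a few units, the percentages agree to several decimal places, which is why trends survive the sanitization.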

Windows Live User Data
• Our initial case study is based on Windows Live user data:
  • 550 million Passport users
  • Passport holds self-reported data from web site visitors: gender, birth date, occupation, country, zip code, etc.
  • Web data includes: IP address, pages viewed, page view duration, browser, operating system, etc.
• Two groups were created for this case study to assess the acceptability and applicability of differential privacy within the WL reporting context:
  • WL Sampled Users Web Analytics
  • Customer Churn Analytics

Windows Live Example Report
• The report below shows the effect of the noise on the data.

Sampled Users Web Analytics Group
• A new solution built on top of an existing Windows Live web analytics solution to provide a sample specific to Passport users.
• Built on top of an OLAP database to allow analysts to view the data from multiple dimensions.
• Also built to showcase the privacy-preserving histogram for various teams, including Channels, Search, and Money.

Web Analytics Group Feedback
• Feedback was negative because customers could not accept any amount of error.
• For over two years, this group had been using reporting systems that had perceived accuracy issues.
• They were adamant that all of the totals must match; the difference shown on the right was not acceptable, even though this data was not used for financial reconciliation.

Customer Churn Analysis Group
• This reporting solution provided an OLAP cube, based on an existing targeted marketing system, to allow analysts to understand how services (Messenger, Mail, Search, Spaces, etc.) are being used.
• A key difference between the groups is that this group did not have access to any reporting (though it had been requested for many months).
• Within a few weeks of their initial request, CCA customers received a working beta with which they could interact, validate the data, and provide feedback on its precision and accuracy.

Discussion
• The collaborative effort led to the customer trusting the data, a key difference in comparison to the first group.
• Because of this trust, the small amount of error introduced into the system to ensure customer privacy was well within a tolerable error margin.
• The CCA group works in direct marketing and hence had to deal more regularly with customer privacy.

An important component to the acceptance of privacy algorithms is the users’ trust of the data.

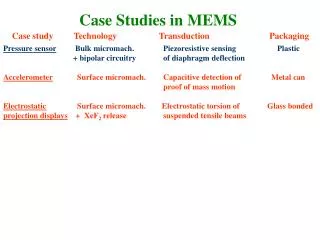

Clinical Researchers' Perceptions
• A pilot qualitative study on the perceptions of clinical researchers was recently completed.
• It noted six themes in three categories:
  • Unaffected Statistics
  • Understanding the privacy algorithms
  • Can get back to the original data
  • Understanding the purpose of the privacy algorithms
  • Management ROI
  • Protecting Patient Privacy

Unaffected Statistics
• The most important point: there is no point in applying privacy if we get faulty statistics.
• The primary concern is that healthcare studies involve smaller numbers of patients than other studies.
• We plan to provide a healthcare template for the use of these algorithms in the near future.
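The concern about small studies follows from simple arithmetic: the noise does not shrink when the cohort does. The sketch below assumes Laplace noise, whose expected absolute value equals its scale, and uses hypothetical cohort sizes and a hypothetical scale of 2.

```python
def expected_relative_error(true_count, scale):
    """Expected |noise| divided by the true count.

    For Laplace(0, scale) noise the expected absolute value is `scale`,
    so the relative error is scale / true_count.
    """
    return scale / true_count


# Hypothetical cohort sizes, from a small clinical study to web-scale data.
for cohort in (25, 250, 25000):
    error = expected_relative_error(cohort, scale=2.0)
    print(f"{cohort:>6} patients: about {error:.2%} expected relative error")
```

The same noise that is negligible for a web analytics report can be a noticeable fraction of a count drawn from a few dozen patients.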

Understanding the privacy algorithms
• In these slides, we have described the mathematics behind these algorithms only briefly.
• Most clinical researchers are willing to accept the science behind them without necessarily understanding it.
• While this is good, it poses the risk that someone will implement the algorithms without understanding them, and thereby incorrectly claim to guarantee the privacy of patients.

Can get back to the original data
• It is very important to be able to get back to the original data set if required.
• Many existing privacy algorithms perturb the data itself, so while they guarantee the privacy of an individual, it is impossible to get back to that individual.
• Healthcare research always requires the ability to get back to the original data, to potentially inform patients of new outcomes.
• The privacy-preserving data analysis approach described here preserves this ability.
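One way to picture why the original data stays recoverable is that noise is added to query answers at the sanitization point, not to the stored records. The class below is a hypothetical sketch of that idea: `SanitizedView`, its parameters, and the sample records are invented for illustration and assume Laplace noise.

```python
import copy
import random


class SanitizedView:
    """Hypothetical sanitization point between analysts and the raw records.

    Count queries are answered with added noise; the records are only read.
    """

    def __init__(self, records, scale, seed=None):
        self._records = records
        self._scale = scale
        self._rng = random.Random(seed)

    def count(self, predicate):
        """Return a noisy count of the records that match `predicate`."""
        true_count = sum(1 for record in self._records if predicate(record))
        rate = 1.0 / self._scale
        noise = self._rng.expovariate(rate) - self._rng.expovariate(rate)
        return true_count + noise


# Invented patient records, for illustration only.
patients = [
    {"id": 1, "age": 64, "flagged": True},
    {"id": 2, "age": 51, "flagged": False},
    {"id": 3, "age": 70, "flagged": True},
]
snapshot = copy.deepcopy(patients)

view = SanitizedView(patients, scale=2.0, seed=7)
print(view.count(lambda r: r["flagged"]))  # analysts see a noisy answer
print(patients == snapshot)                # stored data is unchanged
```

Authorized staff who need to contact a patient would go to the underlying store directly, subject to whatever access controls apply.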

Understanding the purpose of the privacy algorithms
• Most educated healthcare professionals understand the issues, and case studies such as the Governor Weld case make them more apparent.
• We will still want to provide well-worded text and/or confidence intervals below any chart or report that has privacy algorithms applied.
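A confidence interval for a noisy figure can be derived directly from the noise distribution. The sketch below assumes Laplace noise with a known scale, for which P(|noise| > t) = exp(-t / scale); the function names and the wording of the caption are illustrative suggestions only.

```python
import math


def laplace_halfwidth(scale, confidence=0.95):
    """Half-width t such that |noise| <= t with the given confidence.

    Solves exp(-t / scale) = 1 - confidence for t.
    """
    return scale * math.log(1.0 / (1.0 - confidence))


def report_caption(noisy_value, scale, confidence=0.95):
    """Build a short caption to place below a chart or report cell."""
    halfwidth = laplace_halfwidth(scale, confidence)
    return (f"{noisy_value:,.0f} (within ±{halfwidth:.1f} of the true value "
            f"at {confidence:.0%} confidence; noise added to protect privacy)")


print(report_caption(48203.4, scale=2.0))
```

Printing the interval next to the number tells the reader both that noise was added and how little it matters for the figure at hand.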

Management ROI
• We should be limiting the number of users who need access to the full data. So is there a good return on investment in providing this extra step if you can securely authorize the right people to access the data?
• This is where standards from the IRB, privacy and security steering committees, and the government get involved.
• Most importantly: the ability to share data.

Protecting Patient Privacy
• For us to be able to analyze and mine medical data so that we can help patients as well as lower the costs of healthcare, we must first ensure patient privacy.

Future Collaboration
• As noted above, we are currently working with OHSU to build a template for the application of these privacy algorithms to healthcare.
• For more information, or if you are interested in participating in future application research, please email Denny Lee at dennyl@microsoft.com.

Thanks
• Thanks to Sally Allwardt for helping implement the privacy-preserving histogram algorithm used in this case study.
• Thanks to Kristina Behr, Lead Marketing Manager, for all of her help and feedback with this case study.

Practical Privacy: The SuLQ Framework
• Reference paper: “Practical Privacy: The SuLQ Framework”
• Conceptually, this application of privacy can be applied to:
  • Principal component analysis
  • k-means clustering
  • ID3 algorithm
  • Perceptron algorithm
  • Apparently, all algorithms in the statistical queries learning model
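To give a feel for how an algorithm such as k-means can run on noisy answers alone, the sketch below performs one cluster update using only a noisy count and noisy coordinate sums per cluster. It is a simplified illustration, not the procedure from the paper: the Gaussian noise level `sigma`, the assumption that points lie in the unit square, the rule for keeping an old center, and the synthetic data are all assumptions made here.

```python
import random


def noisy_kmeans_step(points, centers, sigma, rng):
    """One k-means update that only uses noisy counts and noisy sums."""
    dim = len(centers[0])
    counts = [0.0] * len(centers)
    sums = [[0.0] * dim for _ in centers]

    for p in points:
        # Assign the point to its nearest center (squared Euclidean distance).
        nearest = min(
            range(len(centers)),
            key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centers[c])),
        )
        counts[nearest] += 1
        for d in range(dim):
            sums[nearest][d] += p[d]

    new_centers = []
    for j, old_center in enumerate(centers):
        noisy_count = counts[j] + rng.gauss(0.0, sigma)
        if noisy_count < 1.0:
            # Too few points for a meaningful average: keep the old center.
            new_centers.append(list(old_center))
            continue
        new_centers.append(
            [(sums[j][d] + rng.gauss(0.0, sigma)) / noisy_count
             for d in range(dim)]
        )
    return new_centers


rng = random.Random(1)
# Synthetic data: two groups of points inside the unit square.
points = [(rng.uniform(0.1, 0.3), rng.uniform(0.1, 0.3)) for _ in range(500)]
points += [(rng.uniform(0.7, 0.9), rng.uniform(0.7, 0.9)) for _ in range(500)]

centers = noisy_kmeans_step(points, [[0.3, 0.3], [0.7, 0.7]], sigma=2.0, rng=rng)
print(centers)
```

Because each cluster holds hundreds of points, noise of a few units in the sums and counts moves the centers only slightly, which mirrors the observation from the case study that small noise leaves aggregate results usable.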