Memory Hierarchy

Explore the evolution of memory systems through the memory hierarchy, virtual memory, cache memory, and address translation in computer architecture from 1980 to 2000. Learn the reasons for caring about memory hierarchy and how processor-memory performance gaps impact system design for efficient data processing.

Memory Hierarchy • Memory Hierarchy • Reasons • Virtual Memory • Cache Memory • Translation Lookaside Buffer • Address translation • Demand paging

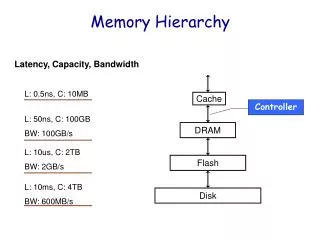

Why Care About the Memory Hierarchy? • Processor-DRAM Memory Gap (latency) • [Figure: performance (log scale, 1 to 1000) versus time, 1980 to 2000] • µProc (CPU, “Moore’s Law”): 60%/yr. (2X/1.5 yr) • DRAM: 9%/yr. (2X/10 yrs) • Processor-Memory Performance Gap: grows 50% / year

DRAMs over Time: DRAM Generation (from Kazuhiro Sakashita, Mitsubishi)

| 1st Gen. Sample | Memory Size | Die Size (mm2) | Memory Area (mm2) | Memory Cell Area (µm2) |
|---|---|---|---|---|
| ‘84 | 1 Mb | 55 | 30 | 28.84 |
| ‘87 | 4 Mb | 85 | 47 | 11.1 |
| ‘90 | 16 Mb | 130 | 72 | 4.26 |
| ‘93 | 64 Mb | 200 | 110 | 1.64 |
| ‘96 | 256 Mb | 300 | 165 | 0.61 |
| ‘99 | 1 Gb | 450 | 250 | 0.23 |

Recap: • Two Different Types of Locality: • Temporal Locality (Locality in Time): If an item is referenced, it will tend to be referenced again soon. • Spatial Locality (Locality in Space): If an item is referenced, items whose addresses are close by tend to be referenced soon. • By taking advantage of the principle of locality: • Present the user with as much memory as is available in the cheapest technology. • Provide access at the speed offered by the fastest technology. • DRAM is slow but cheap and dense: • Good choice for presenting the user with a BIG memory system • SRAM is fast but expensive and not very dense: • Good choice for providing the user FAST access time.

Memory Hierarchy of a Modern Computer • By taking advantage of the principle of locality: • Present the user with as much memory as is available in the cheapest technology. • Provide access at the speed offered by the fastest technology. • Levels, from fastest to largest (speed, size in bytes): • Processor (Control, Datapath) Registers: 1ns, 100 • On-Chip Cache: Xns, 64K • Second Level Cache (SRAM): 10ns, K..M • Main Memory (DRAM): 100ns, M • Secondary Storage (Disk): 10 ms, G • Tertiary Storage (Disk/Tape): 10 sec, T

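Levels of the Memory Hierarchy • Levels: Registers, Cache, Memory, Disk, Tape (upper levels are faster, lower levels are larger) • Staging/transfer unit between adjacent levels: • Registers and Cache: Instr. Operands, 1-8 bytes, staged by the prog • Cache and Memory: Blocks, 8-128 bytes, staged by the cache cntl • Memory and Disk: Pages, 512-4K bytes, staged by the OS • Disk and Tape: Files, Mbytes, staged by the user/operator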

The Art of Memory System Design • Optimize the memory system organization to minimize the average memory access time for typical workloads • [Figure: workload or benchmark programs run on the Processor, which sends a reference stream to the cache ($) and memory (MEM)] • Reference stream: <op,addr>, <op,addr>, <op,addr>, <op,addr>, . . . • op: i-fetch, read, write

Virtual Memory System Design • [Figure labels: reg, cache, mem, disk; pages, frame] • Block size: the size of the information blocks that are transferred from secondary to main storage (M) • Replacement policy: when a block of information is brought into M and M is full, some region of M must be released to make room for the new block • Placement policy: which region of M is to hold the new block • Demand load policy: a missing item is fetched from secondary memory only on the occurrence of a fault • Paging Organization: virtual and physical address spaces are partitioned into blocks of equal size, called pages and page frames

Address Map • V = {0, 1, . . . , n - 1}: virtual address space • M = {0, 1, . . . , m - 1}: physical address space, n > m • MAP: V --> M U {0}: address mapping function • MAP(a) = a' if data at virtual address a is present at physical address a' and a' in M • MAP(a) = 0 if data at virtual address a is not present in M (missing item fault) • [Figure: the Processor issues address a in Name Space V; the Addr Trans Mechanism either produces physical address a' for Main Memory or raises a missing item fault; the fault handler brings the item from Secondary Memory into Main Memory (the OS performs this transfer)]

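Paging Organization • The 1K page is the unit of mapping, and also the unit of transfer from virtual to physical memory • Physical Memory: frames 0 to 7, 1K each, at P.A. 0, 1024, ..., 7168 • Virtual Memory: pages 0 to 31, 1K each, at V.A. 0, 1024, ..., 31744 • Address Mapping: the VA is split into a page no. and a 10-bit disp • The page no. is an index into the page table, which is located in physical memory and found through the Page Table Base Reg • Each page table entry holds V, Access Rights and PA • The PA from the table and the disp are combined (+) into the physical memory address; actually, concatenation is more likely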
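The mapping on this slide can be sketched in a few lines of Python. This is only an illustration under assumptions: the dictionary layout of `page_table` and the frame numbers are made up for the example, while the 1K page size and 10-bit disp come from the slide.

```python
PAGE_BITS = 10                 # 1K pages, so the disp is 10 bits wide
PAGE_SIZE = 1 << PAGE_BITS

def translate(va, page_table):
    """Return the physical address for va, or None on a missing-item fault.

    page_table maps page no. -> (valid, frame no.); this layout is an
    assumption made for the sketch, not the format used on the slides.
    """
    page_no = va >> PAGE_BITS             # index into the page table
    disp = va & (PAGE_SIZE - 1)           # displacement within the page
    valid, frame = page_table.get(page_no, (False, 0))
    if not valid:
        return None                       # fault: the OS must fetch the page
    return (frame << PAGE_BITS) | disp    # concatenation rather than addition

# Hypothetical contents: virtual page 31 sits in frame 7, page 1 in frame 0.
page_table = {31: (True, 7), 1: (True, 0), 2: (False, 0)}
print(translate(31744 + 5, page_table))   # 7173 (frame 7 starts at 7168)
print(translate(1024 + 100, page_table))  # 100
print(translate(2048, page_table))        # None
```

Because frames are aligned to the page size, shifting and OR-ing the frame number with the disp gives the same result as adding a frame base address, which is why concatenation is the likelier hardware choice.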

Address Mapping • [Figure: the MIPS PIPELINE (Instr, Data) with CP0 issues 32-bit Virtual Addresses that are mapped to 24-bit Physical Addresses] • User Memory: user process 2 running • Kernel Memory: Page Table 1, Page Table 2, ..., Page Table n • Here we need page table 2 for address mapping

Translation Lookaside Buffer (TLB) • On TLB hit, the 32-bit virtual address is translated into a 24-bit physical address by hardware • We never call the Kernel! • [Figure: MIPS PIPELINE with the TLB in CP0; TLB entries hold the Virtual Address, D and R bits and Physical Addr [23:10]; User Memory; Kernel Memory holds Page Table 1, Page Table 2, ..., Page Table n]

So Far, NO GOOD • MIPS pipe (IM, DE, EX, DM) is clocked at 50 MHz: critical path 20 ns • TLB: 5ns • RAM: 60 ns • But RAM needs 3 cycles to read/write • STALLS the pipe • [Figure: CP0 with TLB, 24-bit Physical Address to RAM; Kernel Memory holds Page Table 1, Page Table 2, ..., Page Table n]

Let’s put in a Cache • MIPS pipe (IM, DE, EX, DM) is clocked at 50 MHz: critical path 20 ns • TLB: 5ns • Cache: 15ns • RAM: 60 ns • A cache Hit never STALLS the pipe • [Figure: CP0 with TLB, followed by the Cache in front of RAM; Kernel Memory holds Page Table 1, Page Table 2, ..., Page Table n]

Fully Associative Cache • 24-bit PA • Tag: PA[23:2] • Byte within the Data Word: PA[1:0] • Check all 2^16 Cache lines • Cache Hit if PA[23:2]=TAG • Size: 2^16 * 4 = 256kb

Fully Associative Cache • Very good hit ratio (nr hits/nr accesses) But! • Too expensive checking all 2^16 Cache lines concurrently • A comparator for each line! A lot of hardware

Direct Mapped Cache • 24-bit PA • Tag: PA[23:18] • Index: PA[17:2] selects ONE cache line • Byte within the Data Word: PA[1:0] • Cache Hit if PA[23:18]=TAG • Only 1 line is checked • Size: 2^16 * 4 = 256kb

Direct Mapped Cache • Not so good hit ratio • Each line can hold only certain addresses, less freedom But! • Much cheaper to implement, only one line checked • Only one comparator

Set Associative Cache • 24-bit PA • Tag: PA[23:18-z] • Index: PA[17-z:2] selects ONE set of lines, size 2^z • Byte within the Data Word: PA[1:0] • Cache Hit if PA[23:18-z]=TAG in the set • 2^z lines are checked • Size: 2^16 * 4 = 256kb

Set Associative Cache • Quite good hit ratio • The number (set) of different addresses for each line is greater than that of a directly mapped cache • The larger z, the better the hit ratio, but the more expensive the cache • 2^z comparators • Cost-performance tradeoff
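The three organizations differ only in z, which a small model makes concrete. The sketch below assumes the slides' geometry (24-bit PA, 2^16 one-word lines); the class name and the oldest-first eviction are assumptions for illustration, since the slides discuss replacement separately.

```python
class SetAssociativeCache:
    """2**16 one-word lines grouped into sets of 2**z lines (24-bit PA).

    z = 0 behaves as a direct mapped cache, z = 16 as a fully associative one.
    """

    def __init__(self, z):
        self.z = z
        self.index_bits = 16 - z                  # PA[17-z:2] selects the set
        self.sets = [[] for _ in range(1 << self.index_bits)]

    def access(self, pa):
        """Return True on a hit; on a miss, load the line and return False."""
        word = pa >> 2                            # drop byte select PA[1:0]
        index = word & ((1 << self.index_bits) - 1)
        tag = word >> self.index_bits             # PA[23:18-z]
        lines = self.sets[index]
        if tag in lines:                          # 2**z comparators in hardware
            return True
        if len(lines) == (1 << self.z):
            lines.pop(0)                          # evict oldest (assumed policy)
        lines.append(tag)
        return False

def hits(z, trace):
    cache = SetAssociativeCache(z)
    return sum(cache.access(pa) for pa in trace)

# Two addresses that differ only in PA[18], accessed alternately.
trace = [0x000100, 0x040100] * 2
print(hits(0, trace))   # 0: direct mapped, both map to the same line
print(hits(1, trace))   # 2: a 2-line set holds both
```

With z = 0 the two addresses keep evicting each other, while a set of just two lines removes the conflict, which is the cost-performance tradeoff the slide describes.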

Cache Miss • A Cache Miss should be handled by the hardware • If handled by the OS it would be very slow (>>60 ns) • On a Cache Miss • Stall the pipe • Read in new data to cache • Release the pipe, now we get a Cache Hit

A Summary on Sources of Cache Misses • Compulsory (cold start or process migration, first reference): first access to a block • “Cold” fact of life: not a whole lot you can do about it • Note: If you are going to run “billions” of instructions, Compulsory Misses are insignificant • Conflict (collision): • Multiple memory locations mapped to the same cache location • Solution 1: increase cache size • Solution 2: increase associativity • Capacity: • Cache cannot contain all blocks accessed by the program • Solution: increase cache size • Invalidation: another process (e.g., I/O) updates memory

Example: 1 KB Direct Mapped Cache with 32 B Blocks • For a 2^N byte cache: • The uppermost (32 - N) bits are always the Cache Tag • The lowest M bits are the Byte Select (Block Size = 2^M) • Address fields: Cache Tag = bits 31 to 10 (example: 0x50), Cache Index = bits 9 to 5 (ex: 0x01), Byte Select = bits 4 to 0 (ex: 0x00) • The Cache Tag and the Valid Bit are stored as part of the cache “state” • Cache Data: 32 lines (index 0 to 31) of 32 bytes each, holding Byte 0 to Byte 31, Byte 32 to Byte 63, ..., Byte 992 to Byte 1023; in the example, line 1 stores tag 0x50
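As a worked instance of the field split, the following sketch decomposes an address for a cache of 2^N bytes with 2^M-byte blocks. The function name and the way the example address is assembled are illustrative choices; the field values 0x50, 0x01 and 0x00 are the ones on the slide.

```python
def split_address(addr, cache_bytes=1024, block_bytes=32):
    """Split addr into (tag, index, byte select) for a direct mapped cache."""
    n = cache_bytes.bit_length() - 1    # cache size = 2**n bytes
    m = block_bytes.bit_length() - 1    # block size = 2**m bytes
    byte_select = addr & (block_bytes - 1)          # lowest m bits
    index = (addr >> m) & ((1 << (n - m)) - 1)      # next n - m bits
    tag = addr >> n                                 # uppermost 32 - n bits
    return tag, index, byte_select

# Build the slide's example: tag 0x50, index 0x01, byte select 0x00.
addr = (0x50 << 10) | (0x01 << 5) | 0x00
print(hex(addr), split_address(addr))   # 0x14020 (80, 1, 0)
```

For the 1 KB cache with 32 B blocks this gives N = 10 and M = 5, so the index is 5 bits wide and selects one of the 32 lines.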

Block Size Tradeoff • In general, a larger block size takes advantage of spatial locality BUT: • Larger block size means larger miss penalty: it takes longer to fill up the block • If block size is too big relative to cache size, miss rate will go up: too few cache blocks • In general, Average Access Time: TimeAv = Hit Time x (1 - Miss Rate) + Miss Penalty x Miss Rate • [Figure, three plots against Block Size: Miss Penalty grows with block size; Miss Rate first falls (exploits spatial locality), then rises (fewer blocks: compromises temporal locality); Average Access Time first falls, then rises (increased miss penalty & miss rate)]
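The average access time formula on this slide is easy to evaluate directly. In the sketch below the 20 ns hit time and 60 ns miss penalty echo the cycle time and RAM latency used elsewhere in the deck, and the miss rates are purely hypothetical.

```python
def average_access_time(hit_time, miss_rate, miss_penalty):
    """TimeAv = Hit Time x (1 - Miss Rate) + Miss Penalty x Miss Rate."""
    return hit_time * (1 - miss_rate) + miss_penalty * miss_rate

# Assumed numbers: 20 ns hit time, 60 ns miss penalty, hypothetical miss rates.
for miss_rate in (0.01, 0.05, 0.20):
    t = average_access_time(20, miss_rate, 60)
    print(f"miss rate {miss_rate:.2f}: {t:.1f} ns")
```

A larger block moves both inputs at once: the miss penalty rises, and the miss rate falls only until the cache holds too few blocks, which is why the average access time curve has a minimum.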

Extreme Example: single big line • [Figure: one cache entry with Valid Bit, Cache Tag and Cache Data (Byte 3, Byte 2, Byte 1, Byte 0)] • Cache Size = 4 bytes, Block Size = 4 bytes • Only ONE entry in the cache • If an item is accessed, it is likely that it will be accessed again soon • But it is unlikely that it will be accessed again immediately!!! • The next access will likely be a miss again • The cache continually loads data but discards it (forces it out) before it is used again • Worst nightmare of a cache designer: Ping Pong Effect • Conflict Misses are misses caused by: • Different memory locations mapped to the same cache index • Solution 1: make the cache size bigger • Solution 2: Multiple entries for the same Cache Index
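The ping pong effect shows up immediately in a toy model of the one-entry cache. This is a minimal sketch; the function name and the address traces are hypothetical.

```python
def miss_count(trace, block_bytes=4):
    """Count misses in a cache that holds a single block."""
    cached_block = None          # None models a cleared valid bit
    misses = 0
    for addr in trace:
        block = addr // block_bytes
        if block != cached_block:
            misses += 1          # load the new block, forcing out the old one
            cached_block = block
    return misses

print(miss_count([0, 64] * 4))       # 8: two items alternate, every access misses
print(miss_count([0, 1, 2, 3] * 2))  # 1: all accesses fall in the same block
```

The first trace reuses both items soon, yet never hits, because each access forces out the block the next access needs.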

Hierarchy • Small, fast and expensive VS slow, big and inexpensive • [Figure: Cache 256kb (I, D), RAM 16 Mb, HD 2 Gb] • The Cache contains copies • What if copies are changed? INCONSISTENCY!

Cache Miss, Write Through/Back • To avoid INCONSISTENCY we can use: • Write Through • Always write data to RAM • Not so good performance (write 60ns) • Therefore, WT is always combined with write buffers so that the processor does not wait for lower level memory • Write Back • Write data to memory only when the cache line is replaced • We need a Dirty bit (D) for each cache line • D-bit set by hardware on write operation • Much better performance, but more complex hardware
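The difference between the two policies can be seen by counting RAM writes for the same stream of stores. The sketch below assumes a tiny direct mapped cache of one-word lines that allocates a line on a store miss; the function name, the cache size and the trace are hypothetical.

```python
def ram_writes(stores, policy, num_lines=4):
    """Count RAM writes for a trace of word addresses that are all stores."""
    lines = [None] * num_lines          # each entry: [tag, dirty] or None
    writes = 0
    for addr in stores:
        index, tag = addr % num_lines, addr // num_lines
        line = lines[index]
        if line is None or line[0] != tag:
            if line is not None and line[1]:
                writes += 1             # write back the dirty line being replaced
            line = lines[index] = [tag, False]   # new line starts with D clear
        if policy == "write-through":
            writes += 1                 # every store also goes to RAM
        else:
            line[1] = True              # write-back: only set the D bit
    return writes

trace = [0, 0, 0, 0, 4, 4]              # addresses 0 and 4 share line 0
print(ram_writes(trace, "write-through"))  # 6
print(ram_writes(trace, "write-back"))     # 1
```

Under write-back the four stores to address 0 cost a single RAM write, made when the line is replaced; the last line is still dirty in the cache when the trace ends.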

Write Buffer for Write Through • [Figure: Processor, Cache, Write Buffer, DRAM] • A Write Buffer is needed between the Cache and Memory • Processor: writes data into the cache and the write buffer • Memory controller: writes the contents of the buffer to memory • Write buffer is just a FIFO: • Typical number of entries: 4 • Works fine if: Store frequency (w.r.t. time) << 1 / DRAM write cycle • Memory system designer’s nightmare: • Store frequency (w.r.t. time) -> 1 / DRAM write cycle • Write buffer saturation

Write Buffer Saturation • [Figure: Processor, Cache, L2 Cache, Write Buffer, DRAM] • Store frequency (w.r.t. time) -> 1 / DRAM write cycle • If this condition exists for a long period of time (CPU cycle time too quick and/or too many store instructions in a row): • Store buffer will overflow no matter how big you make it • This happens when the CPU Cycle Time <= DRAM Write Cycle Time • Solutions for write buffer saturation: • Use a write back cache • Install a second level (L2) cache
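Saturation can be reproduced with a small queueing model of the FIFO. This is a sketch under simplifying assumptions: time is counted in CPU cycles, the processor issues one store every `store_interval` cycles, and DRAM retires one buffered write every `dram_write_cycle` cycles; all names and numbers are hypothetical.

```python
from collections import deque

def stall_cycles(num_stores, store_interval, dram_write_cycle, depth=4):
    """Cycles the processor waits because the FIFO write buffer is full."""
    buffer = deque()       # completion times of writes still in the FIFO
    now = 0                # processor time in cycles
    dram_free_at = 0       # time at which DRAM can start the next write
    stalled = 0
    for _ in range(num_stores):
        while buffer and buffer[0] <= now:
            buffer.popleft()                 # DRAM has finished these writes
        if len(buffer) == depth:
            stalled += buffer[0] - now       # wait for the oldest write
            now = buffer.popleft()
        dram_free_at = max(now, dram_free_at) + dram_write_cycle
        buffer.append(dram_free_at)
        now += store_interval
    return stalled

print(stall_cycles(100, store_interval=10, dram_write_cycle=3))  # 0
print(stall_cycles(100, store_interval=1, dram_write_cycle=3) > 0)   # True
print(stall_cycles(1000, store_interval=1, dram_write_cycle=3, depth=64) > 0)  # True
```

When stores arrive faster than DRAM can retire them, a deeper buffer only postpones the first stall, which matches the slide's point that the buffer overflows no matter how big it is made.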

Replacement Strategy in Hardware • A Direct mapped cache selects ONE cache line • No replacement strategy • A Set/Fully Associative Cache selects a set of lines, so we need a strategy to select one Cache line • Random, Round Robin • Not so good, spoils the idea with Associative Cache • Least Recently Used (move to top strategy) • Good, but complex and costly for large z • We could use an approximation (heuristic) • Not Recently Used (replace if not used for a certain time)
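The "move to top" formulation of Least Recently Used can be sketched with an ordered list per set. The class name and the letter tags are hypothetical; real hardware would track the ordering with extra state bits per set rather than a list.

```python
class LRUSet:
    """One cache set with LRU replacement, kept as a move-to-top list."""

    def __init__(self, ways):
        self.ways = ways
        self.tags = []               # index 0 is the most recently used tag

    def access(self, tag):
        """Return True on a hit; on a miss, replace the LRU line."""
        hit = tag in self.tags
        if hit:
            self.tags.remove(tag)
        elif len(self.tags) == self.ways:
            self.tags.pop()          # bottom of the list is least recently used
        self.tags.insert(0, tag)     # move to top
        return hit

s = LRUSet(ways=2)
print([s.access(t) for t in "ABACB"])   # [False, False, True, False, False]
print(s.tags)                           # ['B', 'C']
```

The list operations touch every way of the set on each access, which hints at why exact LRU becomes complex and costly as the set size grows.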

Sequential RAM Access • Accessing sequential words from RAM is faster than accessing RAM randomly • Only lower address bits will change • How could we exploit this? • Let each Cache Line hold an Array of Data words • Give the Base address and array size • “Burst Read” the array from RAM to Cache • “Burst Write” the array from Cache to RAM

System Startup, RESET • Random Cache Contents • We might read incorrect values from the Cache • We need to know if the contents are valid: a V-bit for each cache line • Let the hardware clear all V-bits on RESET • Set the V-bit and clear the D-bit for the line copied from RAM to Cache

Final Cache Model • 24-bit PA • Tag: PA[23:18-z] • Index: PA[17-z:2+j] selects ONE set of lines, size 2^z • Offset within the line's Data Words: PA[1+j:0] • Each line holds V, D, Tag and Data Words • Cache Hit if (PA[23:18-z]=TAG) and V in set • Set D bit if Write • 2^z lines are checked

TLBs • A way to speed up translation is to use a special cache of recently used page table entries -- this has many names, but the most frequently used is Translation Lookaside Buffer or TLB • TLB entry fields: Virtual Address, Physical Address, Dirty, Ref, Valid, Access • TLB access time is comparable to cache access time (much less than main memory access time)

Virtual Address and a Cache • [Figure: the CPU sends the VA to Translation, which sends the PA to the Cache; on a hit, data returns to the CPU; on a miss, the access goes to Main Memory] • It takes an extra memory access to translate VA to PA • This makes cache access very expensive, and this is the "innermost loop" that you want to go as fast as possible • ASIDE: Why access cache with PA at all? VA caches have a problem! • Synonym / alias problem: two different virtual addresses map to the same physical address => two different cache entries holding data for the same physical address! • For update: must update all cache entries with the same physical address or memory becomes inconsistent • Determining this requires significant hardware, essentially an associative lookup on the physical address tags to see if you have multiple hits; or • Software enforced alias boundary: same lsb of VA & PA > cache size

Translation Look-Aside Buffers • Just like any other cache, the TLB can be organized as fully associative, set associative, or direct mapped • TLBs are usually small, typically not more than 128 - 256 entries even on high end machines. This permits fully associative lookup on these machines. • Most mid-range machines use small n-way set associative organizations. • [Figure: Translation with a TLB: the CPU sends the VA to the TLB Lookup; on a TLB hit the PA goes to the Cache, on a TLB miss the VA goes to Translation; a cache hit returns data to the CPU, a cache miss goes to Main Memory; relative times marked in the figure: t, 20 t, 1/2 t]

Reducing Translation Time • Machines with TLBs go one step further to reduce cycles/cache access • They overlap the cache access with the TLB access • This works because the high order bits of the VA are used to look in the TLB, while the low order bits are used as the index into the cache

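Overlapped Cache & TLB Access • [Figure: the VA is split into a 20-bit page # and a 12-bit disp; the page # goes to the TLB (assoc lookup), which delivers PA and Hit/Miss; 10 bits of the disp index a Cache of 1 K entries of 4 bytes (the low 2 bits are 00), which delivers PA tag, Data and Hit/Miss; the two PAs are compared (=); the figure also carries the label 32] • IF cache hit AND (cache tag = PA) THEN deliver data to CPU • ELSE IF [cache miss OR (cache tag ≠ PA)] AND TLB hit THEN access memory with the PA from the TLB • ELSE do standard VA translation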

Problems With Overlapped TLB Access • Overlapped access only works as long as the address bits used to index into the cache do not change as the result of VA translation • This usually limits things to small caches, large page sizes, or high n-way set associative caches if you want a large cache • Example: suppose everything is the same except that the cache is increased to 8 K bytes instead of 4 K: • [Figure: the cache index grows to 11 bits (plus the 2 bits 00) and now overlaps the 20-bit virt page # above the 12-bit disp] • This bit is changed by VA translation, but is needed for cache lookup • Solutions: go to 8K byte page sizes; go to 2 way set associative cache; or SW guarantee VA[13]=PA[13] • [Figure: 2 way set assoc cache: two ways of 1K entries, 10-bit index, 4 bytes per entry]
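The restriction on this slide reduces to a one-line check: the bytes covered by one way of the cache must fit inside one page, so that every index bit lies in the untranslated disp. The function below is a sketch with a hypothetical name, and the numbers are the slide's 4 K and 8 K examples.

```python
def overlap_ok(cache_bytes, ways, page_bytes):
    """True if all cache index bits fall inside the page displacement."""
    return cache_bytes // ways <= page_bytes

print(overlap_ok(4096, 1, 4096))   # True: the original 4 K cache, 4 K pages
print(overlap_ok(8192, 1, 4096))   # False: one index bit is now translated
print(overlap_ok(8192, 2, 4096))   # True: 2 way set associative
print(overlap_ok(8192, 1, 8192))   # True: 8 K pages
```

The two hardware solutions on the slide correspond to the two ways of making the check pass: raising the associativity or raising the page size. The software guarantee instead ensures that the one translated index bit does not change.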

Startup a User process • Allocate Stack pages, Make a Page Table • Set Instruction (I), Global Data (D) and Stack pages (S) • Clear Resident (R) and Dirty (D) bits • Clear V-bits in TLB • Place pages on Hard Disk • [Figure: the Page Table in Kernel Memory lists pages of type I, ..., D and S, each with D = 0 and R = 0; all V bits in the TLB are 0]

Demand Paging • IM Stage: We get a TLB Miss and Page Fault (page 0 not resident) • Page Table (Kernel memory) holds HD address for page 0 (P0) • Read page to RAM page X, Update PA[23:10] in Page Table • Update TLB, set V, clear D, Page #, PA[23:10] • Restart failing instruction: TLB hit! • [Figure: RAM holds Page 0 (I) at address XX…X00..0; TLB columns are V, 22-bit Page #, D, Physical Addr PA[23:10]; the first TLB entry is V = 1, Page # = 00…0, D = 0, PA = XX…X (type I), the other entries have V = 0; P0 resides on the HD]

Demand Paging • DM Stage: We get a TLB Miss and Page Fault (page 3 not resident) • Page Table (Kernel memory) holds HD address for page 3 (P3) • Read page to RAM page Y, Update PA[23:10] in Page Table • Update TLB, set V, clear D, Page #, PA[23:10] • Restart failing instruction: TLB hit! • [Figure: RAM holds Page 0 (I) and, at address YY…Y00..0, Page 3 (D); TLB columns are V, 22-bit Page #, D, Physical Addr PA[23:10]; TLB entries are V = 1, Page # = 00…0, D = 0, PA = XX…X (type I) and V = 1, Page # = 00…11, D = 0, PA = YY…Y (type D), the other entries have V = 0; P0, P1, P2, P3, ... reside on the HD]
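The two demand paging slides follow the same sequence, which can be sketched as a single function. The dictionary layouts, the frame numbers standing in for RAM pages X and Y, and the event log are assumptions made for illustration; the disk read itself is not modeled.

```python
PAGE_BITS = 10                                    # 1K pages, as on the slides

def access(va, tlb, page_table, free_frames, log):
    """Translate va, loading the page on a fault, and return the PA."""
    page, disp = va >> PAGE_BITS, va & ((1 << PAGE_BITS) - 1)
    if page not in tlb:                           # TLB miss
        entry = page_table[page]
        if not entry["resident"]:                 # page fault
            entry["frame"] = free_frames.pop(0)   # read page from HD into RAM
            entry["resident"] = True              # update the Page Table
            log.append(("page fault", page))
        tlb[page] = entry["frame"]                # update TLB: set V, clear D
        log.append(("tlb miss", page))
        # the failing instruction is restarted and now hits in the TLB
    return (tlb[page] << PAGE_BITS) | disp

page_table = {p: {"resident": False, "frame": None} for p in range(4)}
tlb, log = {}, []
free_frames = [5, 9]          # hypothetical RAM pages X = 5 and Y = 9

print(access(0x0000, tlb, page_table, free_frames, log))        # 5120
print(access(0x0004, tlb, page_table, free_frames, log))        # 5124
print(access(3 * 1024 + 8, tlb, page_table, free_frames, log))  # 9224
print(log)
```

Only the first touch of each page reaches the page table; the second access to page 0 is translated from the TLB alone and adds nothing to the log.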

Spatial and Temporal Locality • Spatial Locality • Now the TLB holds the page translation; 1024 bytes, 256 instructions • The next instruction (PC+4) will cause a TLB Hit • Access a data array, e.g., 0($t0), 4($t0) etc. • Temporal Locality • TLB holds translation • Branch within the same page, access the same instruction address • Access the array again, e.g., 0($t0), 4($t0) etc. • THIS IS THE ONLY REASON A SMALL TLB WORKS

Replacement Strategy • If the TLB is full, the OS selects the TLB line to replace • Any line will do; they are all the same and are checked concurrently • Strategy to select one • Random • Not so good • Round Robin • Not so good, about the same as random • Least Recently Used (move to top strategy) • Much better (the best we can do without knowing or predicting page access). Based on temporal locality

Hierarchy • Small, fast and expensive VS slow, big and inexpensive • [Figure: TLB 64 Lines; RAM 256Mb >> 64, with the Page Table in Kernel Memory; HD 32 Gb] • TLB/RAM contains copies • What if copies are changed? INCONSISTENCY!

Inconsistency • Replace a TLB entry, caused by TLB Miss • If the old TLB entry is dirty (D-bit), we update the Page Table (Kernel memory) • Replace a page in RAM (swapping), caused by Page Fault • If the old Page is in the TLB • Check the old page's TLB D-bit; if Dirty, write the page to HD • Clear TLB V-bit and Page Table R-bit (now not resident) • If the old Page is not in the TLB • Check the old page's Page Table D-bit; if Dirty, write the page to HD • Clear Page Table R-bit (page not resident any more)

Current Working Set • If RAM is full, the OS selects a page to replace on a Page Fault • OBS! The RAM is shared by many User processes • Least Recently Used (move to top strategy) • Much better (the best we can do without knowing or predicting page access) • Swapping is VERY expensive (maybe > 100 ms) • Why not try harder to keep the pages needed (the working set) in RAM, using advanced memory paging algorithms? • Current working set of process P: {p0, p3, ...}, the set of pages used during the interval t ending at t now • [Figure: time axis with the interval t ending at t now]
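The working set definition on this slide can be stated as a short function. This is a sketch only: the reference string, the use of list positions as time stamps, and the parameter names `now` and `window` are assumptions made for the example.

```python
def working_set(page_refs, now, window):
    """Pages referenced in the interval (now - window, now].

    page_refs[t] is the page referenced at time t (an assumed trace format).
    """
    return {page for t, page in enumerate(page_refs)
            if now - window < t <= now}

refs = [0, 3, 0, 3, 1, 0, 3, 3]       # hypothetical page reference string
print(sorted(working_set(refs, now=7, window=4)))   # [0, 1, 3]
print(sorted(working_set(refs, now=7, window=2)))   # [3]
print(sorted(working_set(refs, now=3, window=4)))   # [0, 3]
```

A paging algorithm built on this idea would try to keep exactly these pages resident, instead of choosing a victim from all of RAM.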

Thrashing • [Figure: Probability of Page Fault (0 to 1) plotted against the fragment of the working set that is not resident (0 to 1)] • Thrashing: No useful work done! • This we want to avoid

Summary: Cache, TLB, Virtual Memory • Caches, TLBs and Virtual Memory can all be understood by examining how they deal with 4 questions: • Where can a block be placed? • How is a block found? • What block is replaced on a miss? • How are writes handled? • Page tables map virtual addresses to physical addresses • TLBs are important for fast translation • TLB misses are significant in processor performance: • (most systems can’t access all of 2nd level cache without TLB misses!)