Advanced Pass Selection Strategy in Multi-Agent Robotic Soccer Using TPOT-RL

This work explores pass selection in simulated robotic soccer using TPOT-RL (team-partitioned, opaque-transition reinforcement learning). A decision tree (DT) trained off-line in artificial situations estimates each potential receiver's confidence of success. TPOT-RL uses that estimate as an action-dependent feature and learns on-line, in real games against an opponent, which pass best serves the team's long-term success. TPOT-RL learners cope with a large state space, limited training, delayed reward, and the non-stationarity of a multi-agent environment.

Presentation Transcript

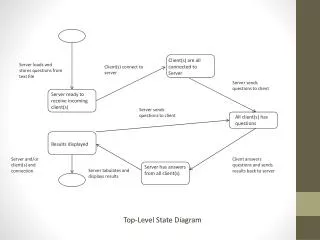

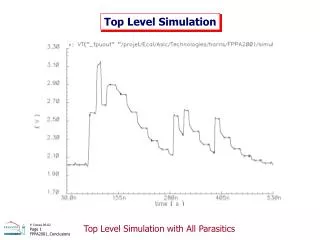

Top-level learning: pass selection using TPOT-RL

DT receiver choice function • DT is trained off-line in artificial situations • DT is used in a heuristic, hand-coded function that limits the potential receivers to those at least as close to the opponent's goal as the passer • always passes to the potential receiver with the highest confidence of success (max(passer, receiver))

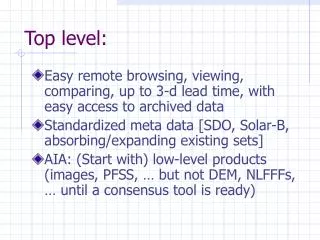

Requirements in "reality" • the best pass may be to a receiver farther away from the goal than the passer • the receiver that is most likely to successfully receive the pass may not be the one that will subsequently act most favorably for the team

Pass Selection - a team behavior • learn how to act strategically as part of a team • requires understanding of long-term effects of local decisions • given the behaviors and abilities of teammates and opponents • measured by the team's long-term success in a real game • -> must be trained on-line against an opponent

ML algorithm characteristics for pass selection • On-line • Capable of dealing with a large state space despite limited training • Capable of learning based on long-term, delayed reward • Capable of dealing with shifting concepts • Works in a team-partitioned scenario • Capable of dealing with opaque transitions

TPOT-RL succeeds by: • Partitioning the value function among multiple agents • Training agents simultaneously with a gradually decreasing exploration rate • Using action-dependent features to aggressively generalize the state space • Gathering long-term, discounted reward directly from the environment

TPOT-RL: policy mapping (S -> A) • State generalization • Value function learning • Action selection

State generalization I • Mapping state space to feature vector: f : S -> V • Using action-dependent feature function: e : S × A -> U • Partitioning state space among agents: P : S -> M

State generalization II • |M| >= m, where m is the number of agents in the team • A = {a_0, ..., a_(n-1)} • f(s) = <e(s, a_0), ..., e(s, a_(n-1)), P(s)> • V = U^|A| × M
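As a minimal sketch of the feature-vector construction above, f(s) can be built as a tuple of one action-dependent feature per action, followed by the partition of the state. The helper names `toy_e` and `toy_P` and the dictionary-based state are hypothetical stand-ins, not part of the original slides.

```python
from typing import Any, Callable, Sequence, Tuple


def feature_vector(
    state: Any,
    actions: Sequence[Any],
    e: Callable[[Any, Any], Any],
    P: Callable[[Any], Any],
) -> Tuple[Any, ...]:
    """Build f(s) = <e(s, a_0), ..., e(s, a_(n-1)), P(s)>."""
    return tuple(e(state, a) for a in actions) + (P(state),)


# Hypothetical toy definitions, for illustration only.
ACTIONS = tuple(range(8))


def toy_e(state: dict, action: int) -> str:
    # Stand-in for the action-dependent feature function e : S x A -> U.
    return "Success" if action in state["open_receivers"] else "Failure"


def toy_P(state: dict) -> int:
    # Stand-in for the partition function P : S -> M.
    return state["position"]


toy_state = {"open_receivers": {1, 4}, "position": 7}
v = feature_vector(toy_state, ACTIONS, toy_e, toy_P)
```

The resulting tuple has |A| + 1 components, which matches V = U^|A| × M.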

Value function learning I • Value function Q(f(s), a_i), with Q : V × A -> ℝ • Depends on e(s, a_i), independent of e(s, a_j) for j ≠ i • Q-table has |U|^1 * |M| * |A| entries

Value function learning II • f(s) = v • Q(v, a) = Q(v, a) + α * (r − Q(v, a)) • r is derived from observable environmental characteristics • Reward function R : S^tlim -> ℝ • Range of R is [−Qmax, Qmax] • Keep track of the action taken a_i and the feature vector v at that time

Action selection • Exploration vs. exploitation • Reduce number of free variables with an action filter • W ⊆ U: if e(s, a) ∉ W -> a shouldn't be a potential action in s • B(s) = {a ∈ A | e(s, a) ∈ W} • What if B(s) = {} (possible when W ⊂ U)?
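The action filter can be sketched as a simple list comprehension. This is an illustrative sketch only: `toy_e` and the state layout are hypothetical, and the empty-set case is returned to the caller as an empty list because the slide leaves its handling open.

```python
from typing import Any, Callable, Collection, List, Sequence


def filter_actions(
    state: Any,
    actions: Sequence[Any],
    e: Callable[[Any, Any], Any],
    W: Collection[Any],
) -> List[Any]:
    """Return B(s): the actions whose feature value e(s, a) lies in W."""
    return [a for a in actions if e(state, a) in W]


# Hypothetical feature function, for illustration only.
def toy_e(state: dict, action: int) -> str:
    return "Success" if action in state["open_receivers"] else "Failure"


allowed = filter_actions({"open_receivers": {2, 5}}, range(8), toy_e, {"Success"})
nothing = filter_actions({"open_receivers": set()}, range(8), toy_e, {"Success"})
```

Here `allowed` keeps only the two actions predicted to succeed, while `nothing` is empty and needs a fallback chosen by the caller.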

TPOT-RL applied to simulated robotic soccer • 8 possible actions in A (see action space) • Extend definition of (Section 6) • Input for L3 is DT from L2 to define e

State generalization using a learned feature I • M = team's set of positions (|M| = 11) • P(s) = player's current position • Define e using DT (C = 0.734) • W = {Success}

State generalization using a learned feature II • |U| = 2 • V = U^8 × {PlayerPositions}, so |V| = |U|^|A| * |M| = 2^8 * 11 • Total number of Q-values: |U| * |M| * |A| = 2 * 11 * 8 • With action filtering (W): each agent learns |W| * |A| = 8 Q-values • 10 training examples per 10-minute game
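The sizes quoted above can be checked with a few lines of arithmetic. The constant names are chosen here for illustration, and |W| = 1 follows from W = {Success}.

```python
U_SIZE = 2   # |U|: the DT feature takes two values
A_SIZE = 8   # |A|: eight possible actions
M_SIZE = 11  # |M|: eleven player positions
W_SIZE = 1   # |W|: only "Success" passes the action filter

# Number of distinct feature vectors: |U|^|A| * |M|
feature_vector_count = U_SIZE ** A_SIZE * M_SIZE

# Q-values over the whole team: |U| * |M| * |A|
team_q_value_count = U_SIZE * M_SIZE * A_SIZE

# Q-values each agent learns once filtering is applied: |W| * |A|
agent_q_value_count = W_SIZE * A_SIZE

print(feature_vector_count, team_q_value_count, agent_q_value_count)
# prints: 2816 176 8
```

The gap between 2816 feature vectors and 176 Q-values comes from Q depending only on e(s, a_i) for the chosen action rather than on the full vector.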

Value function learning via intermediate reinforcement I • Rg: if a goal is scored, r = Qmax / t, with t ≤ tlim • Ri: note t and x_t; one of 3 conditions fixes the reward • Ball goes out of bounds at t+t0 (t0 < tlim) • Ball returns to agent at t+tr (tr < tlim) • Ball still in bounds at t+tlim

Ri: Case 1 • Reward r is based on value r0 • tlim = 30 seconds (300 sim. cycles) • Qmax = 100 • = 10

Ri: Cases 2 & 3 • r based on average x-position of ball • xog = x-coordinate of opponent goal • xlg = x-coordinate of learner's goal

Value function learning via intermediate reinforcement II • After taking a_i and receiving r, update Q: Q(e(s, a_i), a_i) = (1 − α) * Q(e(s, a_i), a_i) + α * r • α = 0.02
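A minimal sketch of this update follows, assuming the symbol missing from the slide text is a learning rate (called `alpha` here) that weights the new reward against the old estimate. The dictionary-backed Q-table and the function name are illustrative choices, not the original implementation.

```python
from collections import defaultdict
from typing import DefaultDict, Hashable, Tuple

ALPHA = 0.02  # value quoted on the slide, assumed to be the learning rate

QTable = DefaultDict[Tuple[Hashable, Hashable], float]


def update_q(q: QTable, feature: Hashable, action: Hashable,
             reward: float, alpha: float = ALPHA) -> float:
    """Apply Q <- (1 - alpha) * Q + alpha * r for one (feature, action) pair."""
    key = (feature, action)
    q[key] = (1.0 - alpha) * q[key] + alpha * reward
    return q[key]


q_table: QTable = defaultdict(float)  # every entry starts at 0
first = update_q(q_table, "Success", 3, 100.0)
second = update_q(q_table, "Success", 3, 100.0)
```

With such a small step size, a single reward of 100 moves an empty entry to about 2, and a second identical reward moves it to about 3.96, so estimates change slowly.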

Action selection for multiagent training • Multiple agents are learning concurrently -> the domain is non-stationary • To deal with this: • Each agent stays in the same state partition throughout training • Exploration rate is very high at first, then gradually decreases

State partitioning • Distribute training into |M| partitions, each with a lookup table of size |A| * |U| • After training, each agent can be given the trained policy for all partitions

Exploration rate • Early exploitation runs the risk of ignoring the best possible actions • When in state s, choose: • the action with the highest Q-value with probability p (a_i such that ∀j, Q(f(s), a_i) ≥ Q(f(s), a_j)) • a random action with probability (1 − p) • p increases gradually from 0 to 0.99
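The selection rule can be sketched as follows. The function name, the dictionary of Q-values, and the random tie-breaking among equally good actions are assumptions made for this illustration.

```python
import random
from typing import Dict, Hashable


def select_action(q_values: Dict[Hashable, float], p: float,
                  rng: random.Random) -> Hashable:
    """Pick the highest-valued action with probability p, else a random one."""
    if rng.random() < p:
        best = max(q_values.values())
        # Ties are broken at random (an assumption of this sketch).
        return rng.choice([a for a, q in q_values.items() if q == best])
    return rng.choice(list(q_values))


toy_q = {"pass_left": 1.0, "pass_right": 5.0, "shoot": 2.0}
rng = random.Random(0)
greedy_picks = [select_action(toy_q, 1.0, rng) for _ in range(50)]
random_pick = select_action(toy_q, 0.0, rng)
```

With p = 1.0 every pick is the greedy action, and with p = 0.0 the pick is uniform over all actions.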

Results I • Agents start out acting randomly with empty Q-tables • ∀ v ∈ V, a ∈ A: Q(v, a) = 0 • Probability of acting randomly decreases linearly over periods of 40 games • to 0.5 in game 40 • to 0.1 in game 80 • to 0.01 in game 120 • Learning agents use Ri
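One way to read this schedule is as a piecewise-linear function of the game number. The sketch below assumes the probability starts at 1.0 in game 0 and stays at 0.01 after game 120; neither endpoint behavior is stated explicitly on the slide.

```python
# (game number, probability of acting randomly); the first entry is an assumption.
BREAKPOINTS = [(0, 1.0), (40, 0.5), (80, 0.1), (120, 0.01)]


def random_action_probability(game: int) -> float:
    """Linearly interpolate the random-action probability between breakpoints."""
    if game <= BREAKPOINTS[0][0]:
        return BREAKPOINTS[0][1]
    for (g0, p0), (g1, p1) in zip(BREAKPOINTS, BREAKPOINTS[1:]):
        if game <= g1:
            fraction = (game - g0) / (g1 - g0)
            return p0 + fraction * (p1 - p0)
    # Assumed: hold the final value after the last breakpoint.
    return BREAKPOINTS[-1][1]


schedule = {g: random_action_probability(g) for g in (0, 20, 40, 80, 120, 160)}
```

Under these assumptions, game 20 falls halfway between 1.0 and 0.5, giving 0.75.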

Results II: Statistics • 160 10-minute games • |U| = 1 • Each agent • gets 1490 action-reinforcement pairs • -> reinforcement 9.3 times per game • tried each action 186.3 times • -> each action only about once per game
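The per-game figures follow from the totals by simple division, as this short check shows. The variable names are illustrative, and 8 is the number of actions in A stated earlier in the slides.

```python
PAIRS_PER_AGENT = 1490  # action-reinforcement pairs per agent
GAMES = 160             # 10-minute training games
ACTIONS = 8             # |A|

reinforcements_per_game = PAIRS_PER_AGENT / GAMES    # about 9.3
tries_per_action = PAIRS_PER_AGENT / ACTIONS         # about 186
tries_per_action_per_game = tries_per_action / GAMES # a little over 1
```

Each action is therefore tried only slightly more than once per game, which is why the slides stress learning from very limited training data.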

Results IV: Statistics • Action predicted to succeed vs. selected • 3 of 8 "attack" actions (37.5%): 6437 / 9967 = 64.6% • Action filtering • 39.6% of action options were filtered out • 10400 action opportunities with B(s) ≠ {}

Domain characteristics for TPOT-RL: • There are multiple agents organized in a team. • There are opaque state transitions. • There are too many states and/or not enough training examples for traditional RL. • The target concept is non-stationary. • There is long-range reward available. • There are action-dependent features available.

Examples for such domains: • Simulated robotic soccer • Network packet-routing • Information networks • Distributed logistics