Chapter 5 Transformations and Weighting to Correct Model Inadequacies




Ray-Bing Chen, Institute of Statistics, National University of Kaohsiung

5.1 Introduction • Recall several implicit assumptions: • The model errors have mean zero and constant variance and are uncorrelated. • The model errors have a normal distribution • The form of the model, including the specification of the regressors, is correct. • Plots of residuals are very powerful methods for detecting violations of these basic regression assumptions.

In this chapter, we focus on methods and procedures for building regression models when some of the above assumptions are violated. • We place considerable emphasis on data transformation. • The method of weighted least squares is also useful for building regression models when some of the underlying assumptions are violated.

5.2 Variance-stabilizing Transformations • The assumption of constant variance is a basic requirement of regression analysis. • A common reason for the violation of this assumption is that the response variable y follows a probability distribution in which the variance is functionally related to the mean. For example, a Poisson random variable has variance equal to its mean.

Several commonly used variance-stabilizing transformations are listed in Table 5.1.

The strength of a transformation depends on the amount of curvature that it induces. • Sometimes we can use prior experience or theoretical considerations to guide us in selecting an appropriate transformation. • In other cases the appropriate transformation must be selected empirically. • If we do not correct the non-constant error variance problem, then the least-squares estimators will still be unbiased, but they will no longer have the minimum variance property. That is, the regression coefficients will have larger standard errors than necessary.
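The Poisson case mentioned above can be checked numerically. The following is a minimal sketch on simulated data (the means, sample size, and seed are arbitrary choices made here, not values from the slides): the variance of y grows with the mean, while the variance of the square root of y stays roughly constant.

```python
import numpy as np

rng = np.random.default_rng(0)
means = [5, 20, 80]          # arbitrary Poisson means for illustration

raw_var = []
sqrt_var = []
for mu in means:
    y = rng.poisson(mu, size=100_000)
    raw_var.append(y.var())             # grows with the mean
    sqrt_var.append(np.sqrt(y).var())   # stays roughly constant

for mu, v, s in zip(means, raw_var, sqrt_var):
    print(f"mean={mu:3d}  Var(y)={v:8.2f}  Var(sqrt(y))={s:.3f}")
```

The same check can be repeated with other candidate transformations to see empirically which one flattens the variance for a given response.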

When the response variable has been reexpressed, the predicted values are in the transformed scale. • The predicted values usually must be converted back to the original units • Confidence or prediction intervals can be converted by applying the inverse transformation to their endpoints. Example 5.1 The Electric Utility Data: • Develop a model relating peak hour demand (y) to total energy usage during the month (x). • Data (Table 5.2): 53 residential customers for the month of August 1979 • Figure 5.1: the scatter plot of the data

A simple linear regression model is assumed: • ANOVA is shown in Table 5.3 • A plot of the R-student residuals vs. the fitted values is shown in Figure 5.2 • From Figure 5.2, the residuals form an outward-opening funnel, indicating that the error variance increases as energy consumption increases.

Suggest the square-root transformation $y^* = \sqrt{y}$ and regress $y^*$ on x • The R-student values from this least-squares fit are plotted against the new fitted values in Figure 5.3 • From Figure 5.3, the variance now appears to be stable.

5.3 Transformations to Linearize the Model • Regression analysis usually starts from the assumption of a linear relationship between y and the regressors. • Nonlinearity may be detected via: lack-of-fit test, scatter diagram, the matrix of scatter plots, or residual plots such as the partial regression plot. • Some nonlinear models are called intrinsically or transformably linear if the corresponding nonlinear function can be linearized by using a suitable transformation.

For example: Example 5.2 The Windmill Data • A research engineer is investigating the use of a windmill to generate electricity. He collected data on the DC output (y) and the corresponding wind velocity (x). • The data are listed in Table 5.5. Figure 5.5 is the scatter diagram.

From Figure 5.5, the relationship between y and x may be nonlinear. • Assume the simple linear regression model $y = \beta_0 + \beta_1 x + \varepsilon$; the summary statistics for this model are $R^2 = 0.8745$, $MS_{Res} = 0.0557$ and $F_0 = 160.26$ • A plot of the residuals versus the fitted values is shown in Figure 5.6. • From this plot, clearly some other model form should be considered.

To account for this curvature, the new model is assumed to be $y = \beta_0 + \beta_1 (1/x) + \varepsilon$ • Figure 5.7 is a scatter diagram with the transformed variable $x' = 1/x$. • The new fitted regression model is obtained by regressing y on $x'$ • The summary statistics are $R^2 = 0.9800$, $MS_{Res} = 0.0089$ and $F_0 = 1128.43$ • Figure 5.8: R-student residuals from the transformed model vs. the fitted values. • Figure 5.9: The normal probability plot (heavy tails)
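The effect of the reciprocal transformation can be illustrated on simulated data. This is a sketch only: the data below are synthetic (a hypothetical response that levels off as x grows), not the windmill data of Table 5.5, and the helper name `r_squared` is introduced here for illustration.

```python
import numpy as np

def r_squared(x, y):
    """R^2 of the simple linear regression of y on x."""
    X = np.column_stack([np.ones_like(x), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return 1.0 - resid @ resid / np.sum((y - y.mean()) ** 2)

rng = np.random.default_rng(1)
x = rng.uniform(2.5, 10.0, size=50)               # hypothetical velocities
y = 3.0 - 7.0 / x + rng.normal(0, 0.1, size=50)   # hypothetical output

r2_linear = r_squared(x, y)        # straight line in x
r2_recip = r_squared(1.0 / x, y)   # straight line in x' = 1/x

print(f"R^2 using x:   {r2_linear:.4f}")
print(f"R^2 using 1/x: {r2_recip:.4f}")
```

Because the response is unchanged and only the regressor is transformed, the two R^2 values are directly comparable here.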

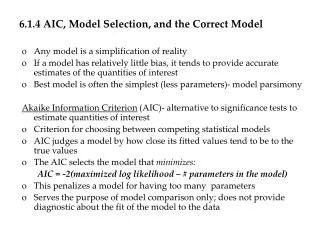

5.4 Analysis Methods for Selecting a Transformation • While in many instances transformations are selected empirically, more formal, objective techniques can be applied to help specify an appropriate transformation. 5.4.1 Transformation on y: The Box-Cox Method • Want to transform y to correct nonnormality and/or nonconstant variance. • Power transformation: $y^{\lambda}$

Box and Cox (1964) show how the parameters of the regression model and $\lambda$ can be estimated simultaneously using the method of maximum likelihood. • Use $y^{(\lambda)} = \dfrac{y^{\lambda} - 1}{\lambda \dot{y}^{\lambda - 1}}$ for $\lambda \neq 0$ and $y^{(\lambda)} = \dot{y} \ln y$ for $\lambda = 0$, where $\dot{y} = \exp\left[\frac{1}{n}\sum_{i=1}^{n} \ln y_i\right]$ is the geometric mean of the observations, and fit the model $y^{(\lambda)} = X\beta + \varepsilon$ • The divisor $\dot{y}^{\lambda - 1}$ is related to the Jacobian of the transformation converting the response variable $y$ into $y^{(\lambda)}$

Computation Procedure: • Choose $\lambda$ to minimize $SS_{Res}(\lambda)$ • Use 10-20 values of $\lambda$ to compute $SS_{Res}(\lambda)$. Then plot $SS_{Res}(\lambda)$ vs. $\lambda$. Finally read the value of $\lambda$ that minimizes $SS_{Res}(\lambda)$ from the graph. • A second iteration can be performed using a finer mesh of values if desired. • Cannot select $\lambda$ by directly comparing residual sums of squares from the regressions of $y^{\lambda}$ on x, because each $\lambda$ puts the response on a different scale. • Once $\lambda$ is selected, the analyst is free to fit the model using $y^{\lambda}$ ($\lambda \neq 0$) or $\ln y$ ($\lambda = 0$).
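The computation procedure can be sketched as a grid search. This is an illustrative sketch on simulated data, assuming the usual geometric-mean-scaled form of the Box-Cox transformation; the function names `boxcox_scaled` and `ss_res`, the grid, and the data are choices made here, not part of the slides.

```python
import numpy as np

def boxcox_scaled(y, lam):
    """Power transformation scaled by the geometric mean of y (y > 0)."""
    gm = np.exp(np.mean(np.log(y)))            # geometric mean
    if abs(lam) < 1e-12:
        return gm * np.log(y)                  # limiting case lambda = 0
    return (y ** lam - 1.0) / (lam * gm ** (lam - 1.0))

def ss_res(X, y, lam):
    """Residual sum of squares from regressing the transformed y on X."""
    z = boxcox_scaled(y, lam)
    beta, *_ = np.linalg.lstsq(X, z, rcond=None)
    r = z - X @ beta
    return float(r @ r)

rng = np.random.default_rng(2)
x = rng.uniform(1.0, 10.0, size=200)
# Simulated so that sqrt(y) is linear in x with constant variance,
# which means a lambda near 0.5 should be favoured.
y = (2.0 + 1.5 * x + rng.normal(0, 0.5, size=200)) ** 2
X = np.column_stack([np.ones_like(x), x])

grid = np.linspace(-2.0, 2.0, 17)              # 17 candidate values
ss = np.array([ss_res(X, y, lam) for lam in grid])
lam_hat = grid[np.argmin(ss)]

for lam, s in zip(grid, ss):
    print(f"lambda={lam:5.2f}  SS_Res={s:12.3f}")
print("minimizing lambda on this grid:", lam_hat)
```

The scaling by the geometric mean is what makes the residual sums of squares comparable across values of lambda; without it the comparison in the loop would be meaningless.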

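An Approximate Confidence Interval for $\lambda$ • The C.I. can be useful in selecting the final value for $\lambda$. • For example: if 0.596 is the minimizing value of $SS_{Res}(\lambda)$ but 0.5 is in the C.I., then we would prefer to choose $\lambda = 0.5$. If 1 is in the C.I., then no transformation may be necessary. • Maximize $L(\lambda) = -\frac{n}{2} \ln[SS_{Res}(\lambda)]$ • An approximate $100(1-\alpha)\%$ C.I. for $\lambda$ is the set of values satisfying $L(\hat{\lambda}) - L(\lambda) \le \frac{1}{2}\chi^2_{\alpha,1}$

Let $SS^* = SS_{Res}(\hat{\lambda})\, e^{\chi^2_{\alpha,1}/n}$; the C.I. consists of the values of $\lambda$ with $SS_{Res}(\lambda) \le SS^*$ • $e^{\chi^2_{\alpha,1}/n}$ can be approximated by $1 + z^2_{\alpha/2}/\nu$ or $1 + t^2_{\alpha/2,\nu}/\nu$, where $\nu$ is the number of residual degrees of freedom. • This is based on • $\exp(x) = 1 + x + x^2/2! + \dots \approx 1 + x$ and $\chi^2_1 = z^2 \approx t^2_{\nu}$

Example 5.3 The Electric Utility Data • Use the Box-Cox procedure to select a variance-stabilizing transformation. • The values of $SS_{Res}(\lambda)$ for various values of $\lambda$ are shown in Table 5.7 • A graph of the residual sum of squares vs. $\lambda$ is shown in Figure 5.10. • The optimal value is $\lambda = 0.5$ • Find an approximate 95% C.I. The critical sum of squares $SS^*$ is 104.23. Then the C.I. is [0.26, 0.80].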
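The link between the likelihood-based interval and a critical sum of squares can be written out in a few steps. This is a sketch assuming the usual form of the maximized log-likelihood for the Box-Cox model, $L(\lambda) = -\frac{n}{2}\ln[SS_{Res}(\lambda)]$, with $\hat{\lambda}$ denoting the minimizer of $SS_{Res}(\lambda)$:

```latex
\begin{aligned}
L(\hat{\lambda}) - L(\lambda) &\le \tfrac{1}{2}\,\chi^2_{\alpha,1} \\
\tfrac{n}{2}\left[\ln SS_{Res}(\lambda) - \ln SS_{Res}(\hat{\lambda})\right] &\le \tfrac{1}{2}\,\chi^2_{\alpha,1} \\
\ln\frac{SS_{Res}(\lambda)}{SS_{Res}(\hat{\lambda})} &\le \frac{\chi^2_{\alpha,1}}{n} \\
SS_{Res}(\lambda) &\le SS_{Res}(\hat{\lambda})\, e^{\chi^2_{\alpha,1}/n} = SS^*
\end{aligned}
```

So the interval can be read from the plot of $SS_{Res}(\lambda)$ against $\lambda$ by drawing a horizontal line at height $SS^*$ and taking the values of $\lambda$ where the curve lies below it.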

5.4.2 Transformation on the Regressor Variables • Suppose that the relationship between y and one or more of the regressor variables is nonlinear but that the usual assumptions of normally and independently distributed responses with constant variance are at least approximately satisfied. • Select an appropriate transformation on the regressor variables so that the relationship between y and the transformed regressor is as simple as possible. • Box and Tidwell (1962) describe an analytical procedure for determining the form of the transformation on x.

Box and Tidwell (1962) note that this procedure usually converges quite rapidly and often the first-stage result $\alpha_1$ is a satisfactory estimate of $\alpha$. • Convergence problems may be encountered in cases where the error standard deviation is large or when the range of the regressor is very small compared to its mean. Example 5.4 The Windmill Data • Figure 5.5 suggests that the relationship between y and x is not a straight line!
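The steps of the procedure are not reproduced in this transcript, so the following is only a sketch of one common way to write the iteration, under the assumption that the model is $y = \beta_0 + \beta_1 x^{\alpha} + \varepsilon$ with $x > 0$. It uses the first-order expansion $x^{\alpha} \approx x^{\alpha_0} + (\alpha - \alpha_0)\, x^{\alpha_0} \ln x$: the coefficient $\gamma$ on the constructed variable $x^{\alpha_0} \ln x$ estimates $\beta_1(\alpha - \alpha_0)$, which gives the update $\alpha_0 + \gamma/b_1$. The data are simulated, and the function names are hypothetical.

```python
import numpy as np

def ols(X, y):
    """Least-squares coefficients for design matrix X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

def box_tidwell(x, y, n_iter=5):
    """Iteratively estimate alpha in y = b0 + b1 * x**alpha + error."""
    alpha = 1.0                                  # start from no transformation
    one = np.ones_like(x)
    log_x = np.log(x)
    for _ in range(n_iter):
        xa = x ** alpha                          # current transformed regressor
        b1 = ols(np.column_stack([one, xa]), y)[1]
        # coefficient on the constructed variable x**alpha * ln(x)
        gamma = ols(np.column_stack([one, xa, xa * log_x]), y)[2]
        alpha = alpha + gamma / b1               # first-order update
    return alpha

rng = np.random.default_rng(3)
x = rng.uniform(2.5, 10.0, size=50)                # hypothetical regressor
y = 3.0 - 7.0 / x + rng.normal(0, 0.05, size=50)   # true alpha is -1

alpha_hat = box_tidwell(x, y)
print("estimated alpha:", round(alpha_hat, 3))
```

In practice the estimate is usually rounded to a convenient value (such as -1 for a reciprocal) before refitting, and the convergence caveats noted above apply.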

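5.5 Generalized and Weighted Least Squares • Linear regression models with nonconstant error variance can also be fitted by the method of weighted least squares. • Choose weights $w_i \propto 1/\mathrm{Var}(\varepsilon_i)$ • For simple linear regression, the weighted least-squares function is $S(\beta_0, \beta_1) = \sum_{i=1}^{n} w_i (y_i - \beta_0 - \beta_1 x_i)^2$ • The normal equations: $\hat{\beta}_0 \sum w_i + \hat{\beta}_1 \sum w_i x_i = \sum w_i y_i$ and $\hat{\beta}_0 \sum w_i x_i + \hat{\beta}_1 \sum w_i x_i^2 = \sum w_i x_i y_i$

5.5.1 Generalized Least Squares • Model: $y = X\beta + \varepsilon$ • For $\varepsilon$, assume that $E(\varepsilon) = 0$ and $\mathrm{Var}(\varepsilon) = \sigma^2 V$ • Since $\sigma^2 V$ is a covariance matrix, V must be nonsingular and positive definite. So there exists a matrix K such that $V = K'K$. K is also called the square root of V. • New model: $z = B\beta + g$ • For this new model, $z = K^{-1} y$, $B = K^{-1} X$ and $g = K^{-1}\varepsilon$ when K is symmetric, or, for a general factorization $V = K'K$, $z = (K')^{-1} y$, $B = (K')^{-1} X$ and $g = (K')^{-1}\varepsilon$

$E(g) = 0$, $\mathrm{Var}(g) = \sigma^2 I$ • The least-squares function: $S(\beta) = g'g = (y - X\beta)' V^{-1} (y - X\beta)$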
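The equivalence between transforming the data and minimizing $S(\beta)$ directly can be checked numerically. This is a sketch on simulated data: the correlation structure chosen for V is hypothetical, the Cholesky factor is used as one convenient choice of K, and the closed form $(X'V^{-1}X)^{-1}X'V^{-1}y$ is the standard minimizer of $S(\beta)$.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 40
x = np.linspace(1.0, 10.0, n)
X = np.column_stack([np.ones(n), x])

# Hypothetical known V: correlation decaying as 0.6**|i - j|
idx = np.arange(n)
V = 0.6 ** np.abs(idx[:, None] - idx[None, :])

L = np.linalg.cholesky(V)            # V = L L', so K = L' gives V = K'K
eps = L @ rng.normal(size=n)         # errors with covariance V
y = X @ np.array([2.0, 0.5]) + eps

# Route 1: transform the data, then ordinary least squares on (B, z)
z = np.linalg.solve(L, y)            # z = (K')^{-1} y
B = np.linalg.solve(L, X)            # B = (K')^{-1} X
beta_transformed, *_ = np.linalg.lstsq(B, z, rcond=None)

# Route 2: closed form (X' V^{-1} X)^{-1} X' V^{-1} y
Vinv_X = np.linalg.solve(V, X)
beta_gls = np.linalg.solve(X.T @ Vinv_X, Vinv_X.T @ y)

print(beta_transformed, beta_gls)
```

Solving linear systems with `np.linalg.solve` instead of forming explicit inverses is a numerical convenience, not a requirement of the method.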

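5.5.2 Weighted Least Squares • Assume that the errors are uncorrelated but have unequal variances, so that V is a diagonal matrix • The estimation procedure is usually called weighted least squares. • $W = V^{-1}$ is also a diagonal matrix with diagonal elements (weights) $w_1, \dots, w_n$

The normal equations: $(X'WX)\hat{\beta} = X'Wy$ • The weighted least-squares estimator: $\hat{\beta} = (X'WX)^{-1} X'Wy$ • Transformed set of data: $B = W^{1/2} X$ and $z = W^{1/2} y$, i.e. each row of X and each element of y is multiplied by $\sqrt{w_i}$; ordinary least squares on (B, z) gives the same estimator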
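Both routes to the weighted least-squares estimator can be sketched in a few lines. The data are simulated under the assumption that the error variance is proportional to x, so that the weights are 1/x; that choice is for illustration only.

```python
import numpy as np

rng = np.random.default_rng(5)
n = 60
x = rng.uniform(1.0, 10.0, size=n)
X = np.column_stack([np.ones(n), x])
# Hypothetical setting: Var(eps_i) = sigma^2 * x_i, so w_i = 1 / x_i
y = 1.0 + 2.0 * x + rng.normal(size=n) * np.sqrt(x)
w = 1.0 / x

# Route 1: solve the normal equations (X' W X) beta = X' W y
XtW = X.T * w                        # same as X.T @ np.diag(w)
beta_wls = np.linalg.solve(XtW @ X, XtW @ y)

# Route 2: ordinary least squares on data scaled by sqrt(w_i)
sw = np.sqrt(w)
beta_scaled, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)

print(beta_wls, beta_scaled)
```

The scaled-data route is often preferred in practice because it lets any ordinary least-squares routine be reused unchanged.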

5.5.3 Some Practical Issues • To use weighted least squares, the weights $w_i$ must be known! • Sometimes prior knowledge or experience or information from a theoretical model can be used to determine the weights. • Alternatively, residual analysis may indicate that the variance of the errors is a function of one of the regressors, say $\mathrm{Var}(\varepsilon_i) = \sigma^2 x_{ij}$, i.e. $w_i = 1/x_{ij}$ • In some cases $y_i$ is actually an average of $n_i$ observations at $x_i$, and if all original observations have constant variance $\sigma^2$, then $\mathrm{Var}(y_i) = \mathrm{Var}(\varepsilon_i) = \sigma^2/n_i$, i.e. $w_i = n_i$

Another possibility: weights inversely proportional to the variances of the measurement error. • Several iterations may be needed: guess at the weights => perform the analysis => reestimate the weights • When $\mathrm{Var}(\varepsilon) = \sigma^2 V$ and $V \neq I$, the ordinary least-squares estimator $\hat{\beta} = (X'X)^{-1}X'y$ is still unbiased. • The corresponding covariance matrix is $\mathrm{Var}(\hat{\beta}) = \sigma^2 (X'X)^{-1} X'VX (X'X)^{-1}$ • This estimator is no longer a minimum variance estimator, because the covariance matrix of the generalized least-squares estimator gives smaller variances for the regression coefficients.
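The loss of efficiency from using ordinary least squares when $V \neq I$ can be seen by comparing the two covariance matrices directly. This sketch assumes a hypothetical diagonal V with error standard deviation proportional to x, and uses the standard expressions $\sigma^2 (X'X)^{-1}X'VX(X'X)^{-1}$ for ordinary least squares and $\sigma^2 (X'V^{-1}X)^{-1}$ for generalized least squares.

```python
import numpy as np

n = 30
x = np.linspace(1.0, 10.0, n)
X = np.column_stack([np.ones(n), x])
V = np.diag(x ** 2)                  # hypothetical: error s.d. proportional to x
sigma2 = 1.0

XtX_inv = np.linalg.inv(X.T @ X)
cov_ols = sigma2 * XtX_inv @ X.T @ V @ X @ XtX_inv
cov_gls = sigma2 * np.linalg.inv(X.T @ np.linalg.inv(V) @ X)

# The difference should be positive semidefinite (eigenvalues >= 0)
diff_eigs = np.linalg.eigvalsh(cov_ols - cov_gls)

print("OLS variances:", np.diag(cov_ols))
print("GLS variances:", np.diag(cov_gls))
print("eigenvalues of difference:", diff_eigs)
```

No data are simulated here because both covariance matrices depend only on X, V, and the error variance.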

Example 5.5 Weighted Least Squares • 30 restaurants: the average monthly food sales (y) vs. the annual advertising expenses (x) (Table 5.9) • Fit the model by ordinary least squares • Figure 5.11: the residuals vs. the fitted values. • This figure indicates a violation of the constant-variance assumption. • Treat near-neighbors (observations with nearly equal x) as repeat points to estimate how the variance of y changes with x.

Take the weights to be the reciprocals of the fitted values from the above equations. • Fit the model by weighted least squares • Plot the weighted residuals ($w_i^{1/2} e_i$) vs. the weighted fitted values ($w_i^{1/2} \hat{y}_i$). See Figure 5.12 • With several regressors, it is not easy to identify the near-neighbors. • Always check whether the weighting procedure is reasonable!
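One way to carry out a near-neighbor weighting scheme of this kind is sketched below. Everything here is an assumption made for illustration: the data are simulated (not Table 5.9), clusters are formed by rounding x, the cluster variances are modeled as a straight line in the cluster mean of x, and a floor is applied so that no fitted variance is nonpositive.

```python
import numpy as np

rng = np.random.default_rng(6)
levels = np.repeat([3.0, 6.0, 9.0, 12.0, 15.0, 18.0], 5)
x = levels + rng.uniform(-0.1, 0.1, size=levels.size)    # near-repeat x values
y = 50.0 + 8.0 * x + rng.normal(size=x.size) * 0.8 * x   # s.d. grows with x

# 1. Group near-neighbors (here: points that round to the same level)
labels = np.round(x / 3.0).astype(int)
keys = np.unique(labels)
x_bar = np.array([x[labels == k].mean() for k in keys])
s2 = np.array([y[labels == k].var(ddof=1) for k in keys])

# 2. Regress the cluster variances on the cluster means of x
A = np.column_stack([np.ones_like(x_bar), x_bar])
coef, *_ = np.linalg.lstsq(A, s2, rcond=None)

# 3. Weights are reciprocals of the fitted variances
X = np.column_stack([np.ones_like(x), x])
var_hat = np.maximum(X @ coef, s2.min())   # floor: a guard added in this sketch
w = 1.0 / var_hat

# 4. Weighted least squares, plus the quantities for the diagnostic plot
sw = np.sqrt(w)
beta_wls, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
weighted_resid = sw * (y - X @ beta_wls)
weighted_fitted = sw * (X @ beta_wls)

print("WLS coefficients:", beta_wls)
```

Plotting `weighted_resid` against `weighted_fitted` gives the kind of check described above; if a funnel shape remains, the variance model behind the weights should be revisited.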