Time dilation in RAMP



Presentation Transcript

Time dilation in RAMP
Zhangxi Tan and David Patterson
Computer Science Division, UC Berkeley

A time machine
• Using RAMP as a datacenter simulator
• Vary DC configurations: processors, disks, network, etc.
• Evaluate different system implementations: MapReduce with a 10 Gbps, 2 ms delay interconnect or a 100 Gbps, 80 ms delay interconnect
• Explore and predict what happens if you upgrade the hardware in your cluster: more powerful CPUs, faster/larger disks
• Try things in the future!
RAMP inside

The problems
• Emulate many fast computers in FPGAs
• What are the problems?
  • First comment, half a year ago at the RadLab retreat: 100 MHz is too slow and can’t reflect a GHz machine
  • Targets are becoming more and more complex
  • Implement them in FPGAs; cycle accuracy is desired
  • How many cores can we put in an FPGA? (Original vision: 16-24 cores per chip. Now: 1 Leon on a V2P30, 2-3 on a V2P70.)

Methodologies
• RDL
  • Target cycle, host cycle, start, stop, channel model…
  • Transfer data between units with extra start/stop control
  • Replace the original transfer logic with RDL
  • Control the target clock: if there is no data, still send something to keep the target time “running”
  • A bad control logic implementation may cause deadlock
  • RDLize units (build channels and units) if you want them to talk to each other
  • Compared to porting apps for MicroBlaze?
  • Is RDLizing obvious and simple??
  • Model: event driven or clock driven?
• Time dilation
  • Remove target cycle control
  • Is stepping every clock cycle the way to debug a 1000-node system?
  • Use a standard data transfer interface
  • Rescale everything to a “virtual wall clock” and “slow down” events accordingly
  • Events: timer interrupts, data sent/received, etc.

Basic idea
• “Slow down” the passage of time to make the target appear faster
• 10 ms wall clock time = 2 ms target time
  • Network: shorter time to send a packet -> bandwidth increases, latency decreases
  • Disk: shorter time to read/write
  • CPU: shorter time to do computation
• The virtual wall clock is the time coordinate in the target; the implementation only controls event intervals
[Figure: timelines of a 10 ms wall clock event interval, perceived as 10 ms without time dilation and as 2 ms on the virtual wall clock with time dilation]

Real world examples
• Sending data at the same rate with the same logic
Network
• Sending 100 Mb of data between two events
• Real: 1 sec between events, perceived BW: 100 Mbps
• Time dilation: 100 ms perceived between events, perceived BW: 1 Gbps
CPU and OS
• The OS updates its timer (jiffies) every 10 ms, on each timer interrupt
• Reprogram the timer to slow the interrupt down
• No OS modifications
• No HW changes
• Speeds up the processor by 5x
• Timer interrupt before time dilation: every 10 ms
• Timer interrupt after time dilation: every 50 ms in wall clock time, perceived as 10 ms in the target
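The arithmetic behind the two examples above can be sketched in a few lines of Python. This is only an illustration of the rescaling, not code from the RAMP project; the function names and the `tdf` parameter (the ratio of wall clock time to target time) are assumptions introduced here.

```python
# Hypothetical helpers illustrating the slide's arithmetic; not RAMP code.

def perceived_interval_s(wall_clock_s: float, tdf: float) -> float:
    """Interval the target perceives for a given wall clock interval."""
    return wall_clock_s / tdf


def perceived_bandwidth_bps(bits: float, wall_clock_s: float, tdf: float) -> float:
    """Bandwidth the target perceives when `bits` take `wall_clock_s` to send."""
    return bits * tdf / wall_clock_s


def host_timer_period_s(target_period_s: float, tdf: float) -> float:
    """Wall clock timer period needed so the target perceives `target_period_s`."""
    return target_period_s * tdf


if __name__ == "__main__":
    # Network: 100 Mb sent in 1 s of wall clock time, dilated by 10x.
    print(perceived_bandwidth_bps(100e6, 1.0, tdf=10))
    # CPU and OS: a 10 ms jiffy with a 5x dilation factor.
    print(host_timer_period_s(0.010, tdf=5))
```

With a factor of 1 the helpers return the undilated values, which matches the "Real" row of the network example.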

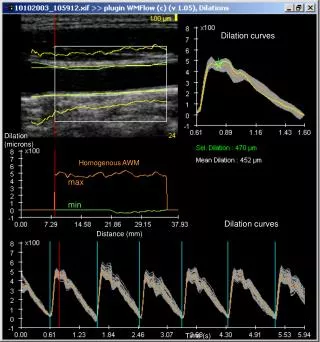

Experiments
• HW emulator (FPGA): 32-bit Leon3 at 50 MHz with 90 MHz DDR memory, 8K L1 cache (4K instruction and 4K data)
• Target system: Linux 2.6 kernel, Leon @ 50 MHz / 250 MHz / 500 MHz / 1 GHz / 2 GHz
• Run the Dhrystone benchmark
• Tomorrow: HW/SW co-simulation example
• Concept: Time Dilation Factor = wall clock time / emulated clock time
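As a rough illustration of the Time Dilation Factor for this setup, the sketch below assumes the 50 MHz host executes one target cycle per host cycle, so the factor reduces to target frequency divided by host frequency. That one-to-one assumption and the names used are not stated on the slide.

```python
# Illustrative only: assumes one target cycle is emulated per host cycle.
HOST_HZ = 50_000_000  # Leon3 on the FPGA, from the slide

# Target clock rates listed on the slide.
TARGET_HZ = [50_000_000, 250_000_000, 500_000_000, 1_000_000_000, 2_000_000_000]


def time_dilation_factor(target_hz: int, host_hz: int = HOST_HZ) -> float:
    """Wall clock time per cycle divided by emulated (target) time per cycle."""
    wall_clock_per_cycle = 1.0 / host_hz
    emulated_per_cycle = 1.0 / target_hz
    return wall_clock_per_cycle / emulated_per_cycle


if __name__ == "__main__":
    for hz in TARGET_HZ:
        print(f"{hz / 1e6:.0f} MHz target -> TDF {time_dilation_factor(hz):.0f}")
```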

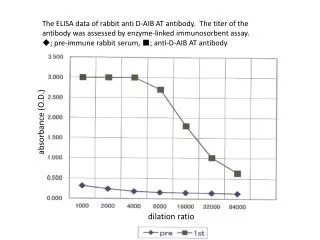

Dhrystone result (w/o memory TD)
• How close to a 3 GHz x86 (~8000 Dhrystone MIPS)?
• Memory, cache, CPI

Problems
• Similar to time dilation in VMs
  • To Infinity and Beyond: Time-Warped Network Emulation, NSDI 06
• Everything is scaled linearly, including memory!
• VMs are lucky: networking code fits in cache easily
• RAMP has more knobs to tweak
• Solution: slow down the memory and redo the experiment

Dhrystone w. memory TD
• Keep the memory access latency constant
  • 90 MHz DDR DRAM with 200 ns latency for all targets (50 MHz to 2 GHz)
  • Latency is pessimistic, but reflects the trend
• RAMP Blue result + time dilation vs. real system?
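To see why a constant memory latency matters more as the target clock rises, the following sketch converts the 200 ns figure into target CPU cycles at each target frequency. It assumes the 200 ns is measured in target time; the helper name is made up for illustration.

```python
# Illustrative only: assumes the 200 ns latency is expressed in target time.
MEMORY_LATENCY_NS = 200

# Target clock rates from the experiment, in MHz.
TARGET_MHZ = [50, 250, 500, 1000, 2000]


def latency_in_target_cycles(latency_ns: int, target_mhz: int) -> int:
    """Number of target CPU cycles that elapse during one memory access."""
    # cycles = latency [ns] * frequency [cycles per microsecond] / 1000 [ns per microsecond]
    return latency_ns * target_mhz // 1000


if __name__ == "__main__":
    for mhz in TARGET_MHZ:
        cycles = latency_in_target_cycles(MEMORY_LATENCY_NS, mhz)
        print(f"{mhz} MHz target: {cycles} cycles per memory access")
```

The cycle count grows in proportion to the target clock, so memory stalls take a larger share of execution time at higher target frequencies.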

Limitation of naïve time dilation
• Fixed CPI (memory/CPU) model
• Next step
  • Variable time dilation factor: distribution and state (statistical model)
  • Emulate OOO with time dilation: peek at each instruction and dilate it
  • Going deterministic? No, I’ll go statistical
Proposed model
• No extra control between units
• Reprogram the Time Dilation Counter (TDC) in each unit to get different target configurations
[Figure: proposed model, a unit with a time dilation counter]