Deep belief nets experiments and some ideas.


This work presents a comprehensive exploration of Deep Belief Networks (DBNs) through a series of experiments, focusing on image databases and temporal sequence prediction. The research utilizes techniques like the bag of words model with SIFT for feature extraction and investigates the effectiveness of pre-training on larger datasets. By comparing DBNs to traditional methods like support vector machines, the study delves into error rates, learning representations, and deeper learning dynamics. Additionally, it touches on unsupervised learning, predictive capabilities, and the need for hidden units in supervised learning contexts.


Presentation Transcript

Deep belief nets experiments and some ideas. Karol Gregor NYU/Caltech

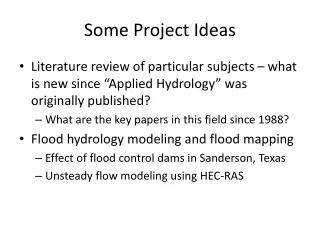

Outline: DBN; image database experiments; temporal sequences

Deep belief network: Input → H1 → H2 → H3 → Labels, with backprop

Preprocessing – Bag of words of SIFT. With: Greg Griffin (Caltech). Images → Features (using SIFT) → Group them (e.g. K-means) → Bag of words

| | Image1 | Image2 |
|---|---|---|
| Word1 | 23 | 11 |
| Word2 | 12 | 55 |
| Word3 | 92 | 33 |
| … | … | … |
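The bag-of-words step can be sketched as follows. This is a minimal illustration, assuming a codebook of cluster centres has already been obtained (e.g. by K-means) and that each descriptor is assigned to its nearest centre by Euclidean distance. The function names and the toy 2-D data are hypothetical; real SIFT descriptors are 128-dimensional.

```python
from collections import Counter

def nearest_word(descriptor, codebook):
    """Index of the codebook centre closest to the descriptor (squared Euclidean distance)."""
    def sq_dist(center):
        return sum((d - c) ** 2 for d, c in zip(descriptor, center))
    return min(range(len(codebook)), key=lambda k: sq_dist(codebook[k]))

def bag_of_words(descriptors, codebook):
    """Histogram of visual-word counts for one image."""
    counts = Counter(nearest_word(d, codebook) for d in descriptors)
    return [counts.get(k, 0) for k in range(len(codebook))]

# Toy data (assumption): three "words" and four descriptors from one image.
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
image1 = [(0.1, 0.0), (0.9, 0.1), (0.0, 0.8), (0.1, 0.9)]
histogram = bag_of_words(image1, codebook)  # one column of the table above
```

Each image yields one count vector of fixed length, regardless of how many descriptors were extracted from it.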

- Pre-training on larger dataset
- Comparison to SVM, SPM

Compatibility between databases. Pretraining: Corel database. Supervised training: 15 Scenes database.

Simple prediction: Y at time t is predicted through weights W from X at times t-1, t-2, t-3. Supervised learning.
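A minimal sketch of this kind of predictor, assuming a plain linear map from the three previous frames and training by stochastic gradient descent on squared error. The slides specify neither choice, and the scalar sine-wave "frames" are a hypothetical stand-in for real data.

```python
import math

def predict(weights, history):
    """Linear prediction of frame t from frames t-1, t-2, t-3."""
    return sum(w * x for w, x in zip(weights, history))

def train(sequence, lags=3, learning_rate=0.1, epochs=20):
    """Fit the weights by stochastic gradient descent on squared prediction error."""
    weights = [0.0] * lags
    for _ in range(epochs):
        for t in range(lags, len(sequence)):
            history = [sequence[t - k] for k in range(1, lags + 1)]
            error = sequence[t] - predict(weights, history)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, history)]
    return weights

# Toy sequence (assumption): a sampled sine wave, which a linear predictor can fit.
sequence = [math.sin(0.5 * t) for t in range(300)]
weights = train(sequence)

# Predict the next, unseen sample and compare with the true value.
history = [sequence[-1], sequence[-2], sequence[-3]]
next_value = predict(weights, history)
prediction_error = abs(next_value - math.sin(0.5 * 300))
```

The targets are simply the next frame, so training is supervised even though no labels are needed.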

With hidden units (need them for several reasons). Diagram: hidden units G (t-1, t-2, t-3) and H (t); X (t-1, t-2, t-3) and Y (t). Memisevic, R. F. and Hinton, G. E., Unsupervised Learning of Image Transformations. CVPR-07

Example: pred_xyh_orig.m

Additions. Diagram: G and X at t-1; H and Y at t.
- Sparsity: When inferring the H the first time, keep only the largest n units on
- Slow H change: After inferring the H the first time, take H=(G+H)/2

Examples: pred_xyh.m, present_line.m, present_cross.m
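The two additions can be sketched as small helper functions. This assumes that G is the hidden state from the previous time step (as the diagram labels suggest) and that "keeping a unit on" means keeping its activation while zeroing the rest. Both readings, and the function names, are assumptions rather than details confirmed by the slides.

```python
def keep_largest(activations, n):
    """Sparsity: zero every unit except the n with the largest activation."""
    order = sorted(range(len(activations)),
                   key=lambda i: activations[i], reverse=True)
    kept = set(order[:max(n, 0)])
    return [a if i in kept else 0.0 for i, a in enumerate(activations)]

def slow_update(previous, inferred):
    """Slow H change: average the previous state G with the newly inferred H."""
    return [(g + h) / 2 for g, h in zip(previous, inferred)]

# Toy values (assumption): four hidden units.
g = [0.0, 0.5, 0.5, 0.1]              # hidden state at t-1
h_inferred = [0.2, 0.9, 0.1, 0.7]     # first inference of H at t
h_sparse = keep_largest(h_inferred, n=2)
h_new = slow_update(g, h_sparse)
```

Averaging with G limits how far H can move in one step, which is one simple way to make the hidden state change slowly.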

Hippocampus; Cortex + Thalamus; Muscles (through sub-cortical structures); Senses, e.g. eye (through retina, LGN). See e.g. Jeff Hawkins: On Intelligence

Cortical patch: Complex structure (not a single-layer RBM). From Alex Thomson and Peter Bannister (see numenta.com)

1) Prediction A B C D E F G H J K L E F H

2) Explicit representations for sequences VISIONRESEARCH time

3) Invariance discovery e.g. complex cell time

4) Sequences of variable length VISIONRESEARCH time

5) Long sequences Layer1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 ? ? 2 2 2 2 2 2 2 2 2 2 Layer2 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 1 1 2 3 5 8 13 21 34 55 89 144

6) Multilayer - Inferred only after some time VISIONRESEARCH time

8) Variable speed - Can fit a knob with small speed range

Hippocampus; Cortex + Thalamus; Muscles (through sub-cortical structures); Senses, e.g. eye (through retina, LGN)

Hippocampus; Cortex + Thalamus; Muscles (through sub-cortical structures); Senses, e.g. eye (through retina, LGN). In addition: • Top-down attention • Bottom-up attention • Imagination • Working memory • Rewards

Training data • Videos • Of the real world • Simplified: Cartoons (Simpsons) • A robot in an environment • Problem: Hard to grasp objects • Artificial environment with 3D objects that are easy to manipulate (e.g. Grand Theft Auto IV with objects)