NTEN Evaluation Session MIT OpenCourseware

NTEN Evaluation Session MIT OpenCourseware. November 6, 2003. What is MIT OpenCourseware (OCW)? The public face of OCW, and OCW in its own words. The BIG THREE (evaluation questions): What to measure? How to measure? How to apply results?

Presentation Transcript

NTEN Evaluation Session MIT OpenCourseware November 6, 2003

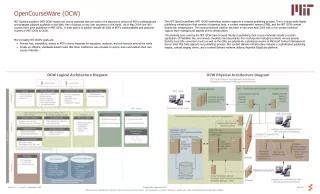

What is MIT OpenCourseware (OCW)? The public face of OCW…

What is MIT OpenCourseware? OCW, in its own words…

The BIG THREE (evaluation questions)

What to measure? How to measure? How to apply results?

Evaluation explores how Web pages meet (or fail to meet) their goals.

• Do we know what people are doing on-line?
• Do user behaviors support “business” goals?
• What usage data is most relevant?
• How much data is too much data?
• How can we derive a coherent picture from an ad hoc collection of methods and tools?
• How can we coordinate different groups responsible for different parts of the measurement puzzle?
• What is synthesis? Who “owns” the findings?
• Which comes first: strategic decisions, solution changes, or further research?
• Who is accountable for impact?

The OCW Evaluation

We structured the evaluation around OCW’s missions and goals.

[Diagram: Making Measurement Matter. MIT OCW Objectives; What to measure? How to measure? How to apply results?]

• What to Measure
  • Basic profile information
  • A scenario-based theory of action
  • A view of access, use, and impact
  • Complement definitions of goals and success indicators with key questions and hypotheses
• How to Measure
  • Apply an integrated toolkit of approaches and methods, including
    • Web Analytics
    • Multiple on-line surveys
    • Interviews
• Applying results
  • Understand the decision support needs of the people who rely on measurement-based insights
  • Ensure that the evaluation enables key users to answer critical questions and guide decision making
  • Develop and refine an effective evaluation infrastructure (people, processes, and tools)

The research methodology

Making research actionable.

[Diagram labels: Goals, Indicators, On-line survey]

Phases of Action | 2003

Chunking tasks over time helps tame the beast.

Phases:
• Conduct integrated baseline evaluation
• Disseminate, evaluate, and evolve
• Refine eval framework
• Prepare for evaluations

Tasks:
• Draft scenarios
• Develop indicators
• Establish decision support matrix
• Set reporting needs
• Select technologies
• Draft research instruments
• Establish sampling criteria and screeners
• Begin recruiting
• Pilot test
• Data gathering
• Analysis
• Initial reporting
• Targeted reporting
• Inquiry response
• Promotions?

Report from the trenches

complex goals + complex organizations = complex evaluations

• Multiple overlapping goals can make for complex indicators…
• …and complex research instruments
• Priorities matter
• Hypotheses help
• Applying multiple techniques helps to minimize data gaps
• Mixing qualitative and quantitative techniques delivers data and tests assumptions
• Coordination is not easy…
• Decision support is a critical touchstone at all stages