Structurally Discriminative Graphical Models for ASR


Structurally Discriminative Graphical Models for ASR. The Graphical Models Team WS’2001. The Graphical Models Team. Geoff Zweig Kirk Jackson Peng Xu Eva Holtz Eric Sandness Bill Byrne. Jeff A. Bilmes Thomas Richardson Karen Livescu Karim Filali Jerry Torres Yigal Brandman.


Presentation Transcript

Structurally Discriminative Graphical Models for ASR The Graphical Models Team WS’2001

The Graphical Models Team • Geoff Zweig • Kirk Jackson • Peng Xu • Eva Holtz • Eric Sandness • Bill Byrne • Jeff A. Bilmes • Thomas Richardson • Karen Livescu • Karim Filali • Jerry Torres • Yigal Brandman

GM: Presentation Outline • Part I: Main Presentations (1.5 hours) • Graphical Models for ASR • Discriminative Structure Learning • Explicit GM-Structures for ASR • The Graphical Model Toolkit (for ASR) • Corpora Description & Baselines • Structure Learning • Visualization of bivariate MI • Improved Results Using Structure Learning • Analysis of Learned Structures • Future Work & Conclusions • Part II: Student Presentations & Discussion • Undergraduate Student Presentations (5 minutes each) • Graduate Student Presentations (10 minutes each) • Floor open for discussion (20 minutes)

Accomplishments • Designed and built brand-new Graphical Model based toolkit for ASR and other large-task time-series modeling. • Stress-tested the toolkit on three speech corpora: Aurora, IBM Audio-Visual, and SPINE • Evaluated the toolkit using different features • Began improving WER results with discriminative structure learning • Structure induction algorithm provides insight into the models and feature extraction procedures used in ASR

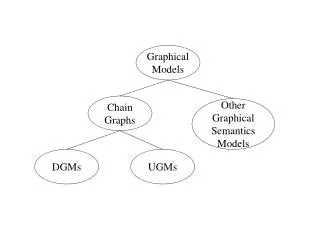

Graphical Models (GMs) • GMs provide: • A formal and abstract language with which to accurately describe families of probabilistic models and their conditional independence properties. • A set of algorithms that provide efficient probabilistic inference and statistical decision making.

Why GMs for ASR • Quickly communicate new ideas. • Rapidly specify new models for ASR (but with the right & computationally efficient tools). • Graph structure learning lets data tell us more than just parameters of model • [Novel] acoustic features better modeled with customized graph structure • Structural Discriminability: improve recognition while concentrating modeling power on what is important (i.e., that which helps ASR word error). • Resulting Structures can increase knowledge about speech and language • An HMM is only one instance of a GM

An HMM is a Graphical Model • [Figure: Hidden Markov Model with hidden states Q1, Q2, Q3, Q4 and observations X1, X2, X3, X4] • But HMMs are only one example within the space of Graphical Models.

Features, HMMs, and MFCCs • [Figure: "The HMM Hole", with the labels "MFCCs" and "Novel Features"]

Features and Structure • [Figure: "The structurally discriminative data-driven self-adjusting GM Hole", with the labels "Learned GMs" and "Novel Features"]

The Bottom Line • ASR/HMM technology occupies only a small portion of GM space. • [Figure labels: "HMM/ASR", "GMs"]

Discriminatively Structured Graphical Models • Overall goal: model parsimony, i.e., algorithmically efficient and accurate, software efficient, small memory footprint, low-power, noise robust, etc. • achieve same or better performance with same or fewer parameters. • To increase parsimony in a classification task (such as ASR), graphical models should represent only the “unique” dependencies of each class, and not those “common” dependencies across all classes that do not help classification.

Structural Discriminability • Object generation process: [Figure: two graphs over V1, V2, V3, V4] • Object recognition process: remove non-distinct dependencies that are found to be superfluous for classification. [Figure: two reduced graphs over V1, V2, V3, V4]

Information-theoretic approach towards discriminative structures • Discriminative Conditional Mutual Information is used to determine edges in the graphs

The EAR Measure • EAR: Explaining Away Residual • A way of judging the discriminative quality of Z as a parent of X in the context of Q. • Marginally Independent, Conditionally Dependent • Intractable to optimize • A goal of the workshop: evaluate approximations to the EAR measure • [Figure: graph over Z, X, and Q]

Hidden Variable Structures for Training and Decoding • HMM paths and GM assignments • Simple Training Structure • Bigram Decoding Structure • Training with Optional Silence • Some Extensions

Paths and Assignments • [Figure: an HMM and the corresponding HMM grid, each labeled with transition probabilities and emission probabilities; phone labels D, IH, JH, T; state numbers 1 to 7]

The Simplest Training Structure • [Figure: per-frame variables End-of-Utterance Observation, Position, Transition, Phone, Observation; example assignment: Position = 1 2 2 3 4 5, Transition = 1 0 1 1 1, Phone = D IH IH JH IH T] • Each BN assignment = path through HMM • Sum over BN assignments = sum over HMM paths • Max over BN assignments = max over HMM paths
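The equivalence between sums (or maxima) over assignments and over HMM paths can be checked numerically on a toy model. The sketch below is only an illustration: the 3-state HMM, its probabilities, and the observation sequence are all hypothetical and not taken from the workshop. It enumerates every state assignment explicitly and compares the result with the usual forward and Viterbi recursions.

```python
import itertools

# Hypothetical toy HMM (not from the slides): 3 states, 2 observation symbols.
pi = [0.6, 0.3, 0.1]                      # pi[i]   = p(state_0 = i)
A = [[0.7, 0.2, 0.1],
     [0.1, 0.8, 0.1],
     [0.2, 0.3, 0.5]]                     # A[i][j] = p(state_t = j | state_{t-1} = i)
B = [[0.9, 0.1],
     [0.4, 0.6],
     [0.2, 0.8]]                          # B[i][o] = p(obs = o | state = i)
obs = [0, 1, 1, 0]
N = len(pi)

def path_prob(path):
    """Joint probability of one full state assignment together with obs."""
    p = pi[path[0]] * B[path[0]][obs[0]]
    for t in range(1, len(obs)):
        p *= A[path[t - 1]][path[t]] * B[path[t]][obs[t]]
    return p

# Brute force: every assignment of the hidden variables is one HMM path.
all_paths = list(itertools.product(range(N), repeat=len(obs)))
brute_sum = sum(path_prob(p) for p in all_paths)
brute_max = max(path_prob(p) for p in all_paths)

def forward_sum():
    """Forward recursion: sums over all paths without enumerating them."""
    alpha = [pi[j] * B[j][obs[0]] for j in range(N)]
    for o in obs[1:]:
        alpha = [sum(alpha[i] * A[i][j] for i in range(N)) * B[j][o]
                 for j in range(N)]
    return sum(alpha)

def viterbi_max():
    """Viterbi recursion: maximizes over all paths without enumerating them."""
    delta = [pi[j] * B[j][obs[0]] for j in range(N)]
    for o in obs[1:]:
        delta = [max(delta[i] * A[i][j] for i in range(N)) * B[j][o]
                 for j in range(N)]
    return max(delta)

print(abs(brute_sum - forward_sum()) < 1e-12)   # the two sums agree
print(abs(brute_max - viterbi_max()) < 1e-12)   # the two maxima agree
```

The brute-force enumeration grows exponentially with the number of frames, which is why the recursions are used in practice.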

Decoding with a Bigram LM • [Figure: variables End-of-utterance = 1, Word, Word Transition, Word Position, Phone Transition, Phone, Feature]

Training - Optional Silence • [Figure: variables Skip-Sil, End-of-Utterance = 1, Pos. in Utterance, Word, Word Transition, Word Position, Phone Transition, Phone, Feature]

Articulatory Networks • [Figure: variables End-of-Utterance Observation, Position, Transition, Phone, Articulators, Observation]

Noise Clustering Network • [Figure: variables End-of-Utterance Observation, Position, Transition, Phone, Noise Condition C, Observation]

GMTK: New Dynamic Graphical Model Toolkit • Easy to specify the graph structure, implementation, and parameters • Designed both for large scale dynamic problems such as speech recognition and for other time series data. • Alpha-version of toolkit used for WS01 • Long-term goal: public-domain open source high-performance toolkit.

An HMM can be described with GMTK • [Figure: HMM with hidden states Q1, Q2, Q3, Q4 and observations X1, X2, X3, X4]

GMTK Structure file for HMMs

    frame : 0 {
        variable : state {
            type : discrete hidden cardinality 4000;
            switchingparents : nil;
            conditionalparents : nil using MDCPT("pi");
        }
        variable : observation {
            type : continuous observed 0:39;
            switchingparents : nil;
            conditionalparents : state(0) using mixGaussian mapping("state2obs");
        }
    }
    frame : 1 {
        variable : state {
            type : discrete hidden cardinality 4000;
            switchingparents : nil;
            conditionalparents : state(-1) using MDCPT("transitions");
        }
        variable : observation {
            type : continuous observed 0:39;
            switchingparents : nil;
            conditionalparents : state(0) using mixGaussian mapping("state2obs");
        }
    }

Switching Parents • [Figure: variables S, M1, F1, M2, F2, and C, shown for the cases S=0 and S=1]

GMTK Switching Structure • [Figure: variables S, M1, F1, M2, F2, and C]

    variable : S {
        type : discrete hidden cardinality 2;
        switchingparents : nil;
        conditionalparents : nil using MDCPT("pi");
    }
    variable : M1 {...}
    variable : F1 {...}
    variable : M2 {...}
    variable : F2 {...}
    variable : C {
        type : discrete hidden cardinality 30;
        switchingparents : S(0) using mapping("S-mapping");
        conditionalparents : M1(0),F1(0) using MDCPT("M1F1")
                           | M2(0),F2(0) using MDCPT("M2F2");
    }
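As a rough illustration of what the switching structure above expresses, the following Python sketch evaluates p(C | ...) by letting the value of S select which parent set and which table is consulted. It is not GMTK code. The binary cardinalities, the table entries, and the assumption that the "S-mapping" sends S=0 to the first parent set and S=1 to the second are all hypothetical simplifications made for this example.

```python
# Hypothetical tables standing in for MDCPT("M1F1") and MDCPT("M2F2").
# All variables are binary here for brevity (the slide uses cardinality 30 for C).
CPT_M1F1 = {          # p(C | M1, F1), keyed by (m1, f1); list index is the value of C
    (0, 0): [0.9, 0.1],
    (0, 1): [0.6, 0.4],
    (1, 0): [0.3, 0.7],
    (1, 1): [0.1, 0.9],
}
CPT_M2F2 = {          # p(C | M2, F2), keyed by (m2, f2)
    (0, 0): [0.5, 0.5],
    (0, 1): [0.2, 0.8],
    (1, 0): [0.8, 0.2],
    (1, 1): [0.4, 0.6],
}

def prob_c(c, s, m1, f1, m2, f2):
    """p(C = c | S = s, M1, F1, M2, F2) with S acting as a switching parent.

    Assumed mapping: S = 0 selects parents (M1, F1); S = 1 selects (M2, F2).
    """
    if s == 0:
        return CPT_M1F1[(m1, f1)][c]
    return CPT_M2F2[(m2, f2)][c]

# With S = 0 the values of M2 and F2 have no effect on C:
print(prob_c(1, 0, 0, 1, 0, 0) == prob_c(1, 0, 0, 1, 1, 1))
# With S = 1 the values of M1 and F1 have no effect on C:
print(prob_c(1, 1, 0, 0, 1, 1) == prob_c(1, 1, 1, 1, 1, 1))
```

The point of the construction is that the set of parents of C is itself a function of another variable, so C needs one small table per switching value rather than a single table over all five parents.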

Summary: Toolkit Features • EM/GEM training algorithms • Linear/Non-linear Dependencies on observations • Arbitrary parameter sharing • Gaussian Vanishing/Splitting • Decision-Tree-Based implementations of dependencies • EM Training & Decoding • Sampling • Logspace Exact Inference – Memory O(logT) • Switching Parent Functionality

Corpora • Aurora 2.0 • Noisy continuous digits recognition • 4 hours training data, 2 hours test data in 70 Noise Types/SNR conditions • MFCC + Delta + Double-Delta • SPINE • Noisy defense-related utterances • 10,254 training, 1,331 test Utterances • OGI Neural Net Features • WS-2000 AV Data • 35 hours training data, 2.5 hours test data • Simultaneous audio and visual streams • MFCC + 9-Frame LDA + MLLT

Aurora Benchmark Accuracy GMTK Emulating HMM

Relative Improvements GMTK Emulating HMM

AM-FM Feature Results GMTK Emulating HMM

SPINE Noise Clustering • 24k, 18k, and 36k Gaussians for 0, 3, 6 clusters • Flat start training; with Byrne Gaussians, 33.5%

Baseline model structure • [Figure: states Q0, Q1, ..., Qt-1, Qt, each emitting a feature vector X0, X1, ..., Xt-1, Xt] • Implies: $X_t \perp\!\!\!\perp X_0, \dots, X_{t-1} \mid Q_t$

Structure Learning • [Figure: states Q0, Q1, ..., Qt-1, Qt and feature vectors X0, X1, ..., Xt-1, Xt, with one feature $X_{ti}$ highlighted] • Use observed data to decide which edges to add as parents for a given feature $X_{ti}$

EAR Measure (Bilmes 1998) • [Figure: $Q_t$ and $X_{ti}$] • Goal: find a set of parents $\mathrm{pa}(X_{ti})$ which maximizes $E[\log p(Q \mid X_{ti}, \mathrm{pa}(X_{ti}))]$ • equivalently maximizing $I(Q; X_{ti} \mid \mathrm{pa}(X_{ti}))$ • equivalently maximizing the EAR measure: $\mathrm{EAR}[\mathrm{pa}(X_{ti})] = I(\mathrm{pa}(X_{ti}); X_{ti} \mid Q) - I(\mathrm{pa}(X_{ti}); X_{ti})$ • Discriminative performance will improve only if $\mathrm{EAR}[\mathrm{pa}(X_{ti})] > 0$
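The step from the conditional mutual information to the EAR measure is not spelled out on the slides. One way to see it, writing $X$ for $X_{ti}$ and $Z$ for $\mathrm{pa}(X_{ti})$ (shorthand introduced here only for readability), is to expand $I(X; Z, Q)$ with the chain rule for mutual information in both orders:

```latex
\begin{align*}
I(X; Z, Q) &= I(X; Z) + I(X; Q \mid Z) \\
           &= I(X; Q) + I(X; Z \mid Q) \\
\Rightarrow\quad I(X; Q \mid Z) &= I(X; Q)
   + \underbrace{I(X; Z \mid Q) - I(X; Z)}_{\mathrm{EAR}[Z]}
\end{align*}
```

Since $I(X; Q)$ does not depend on the choice of $Z$, the parent set that maximizes $I(Q; X \mid Z)$ is the same one that maximizes $\mathrm{EAR}[Z]$, and a parent set increases $I(Q; X \mid Z)$ above $I(X; Q)$ only when its EAR value is positive.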

Structure Learning • Criteria for choosing parents for each feature: $I(X;Z)$, $I(X;Z \mid Q)$, and $I(X;Z \mid Q) - I(X;Z)$ • The EAR measure, $I(X;Z \mid Q) - I(X;Z)$, is referred to as 'dlinks'
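For small discrete variables, the three quantities above can be computed directly from a joint probability table. The sketch below is a minimal illustration under that assumption; it is not the workshop's estimator, and the helper names and the toy distribution (X = Q XOR Z with Q and Z independent fair bits) are made up for the example. The toy case was chosen because Z and X are marginally independent but fully dependent given Q.

```python
import math
from collections import defaultdict

def marginal(joint, keep):
    """Marginalize a joint table {outcome tuple: prob} onto the positions in keep."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[tuple(outcome[i] for i in keep)] += p
    return out

def mutual_information(joint, a, b, cond=()):
    """I(A; B | C) in bits. a, b, cond are tuples of positions in the outcome tuple."""
    p_abc = marginal(joint, a + b + cond)
    p_ac = marginal(joint, a + cond)
    p_bc = marginal(joint, b + cond)
    p_c = marginal(joint, cond)          # equals {(): 1.0} when cond is empty
    na, nb = len(a), len(b)
    total = 0.0
    for key, p in p_abc.items():
        if p == 0.0:
            continue
        ka, kb, kc = key[:na], key[na:na + nb], key[na + nb:]
        total += p * math.log2(p * p_c[kc] / (p_ac[ka + kc] * p_bc[kb + kc]))
    return total

def ear(joint, x, z, q):
    """EAR = I(X; Z | Q) - I(X; Z) for the given variable positions."""
    return mutual_information(joint, x, z, q) - mutual_information(joint, x, z)

# Toy joint over outcomes (q, x, z): Q and Z are independent fair bits, X = Q XOR Z.
Q, X, Z = (0,), (1,), (2,)
toy = {(q, q ^ z, z): 0.25 for q in (0, 1) for z in (0, 1)}

print(mutual_information(toy, X, Z))      # I(X;Z)   = 0 bits
print(mutual_information(toy, X, Z, Q))   # I(X;Z|Q) = 1 bit
print(ear(toy, X, Z, Q))                  # EAR      = 1 bit
```

A candidate ranked only by $I(X;Z)$ would score zero here, while the EAR measure gives it the maximum possible score for binary variables.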

Finding the optimal structure is hard • The EAR measure cannot be decomposed: e.g., for $X_{ti}$ it is possible to have $\mathrm{EAR}(\{Z_1, Z_2\}) \gg 0$ while $\mathrm{EAR}(\{Z_1\}) < 0$ and $\mathrm{EAR}(\{Z_2\}) < 0$ • No. of possible sets of parents for each $X_{ti}$: $2^{(\#\text{ of features}) \times (\text{max lag for parent})}$
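To give a feel for how quickly the number of candidate parent sets grows, the short sketch below counts them for hypothetical feature counts and lags (the numbers are illustrative and not the workshop's settings). It assumes each candidate parent is a (feature index, lag) pair with lags running from 1 to the maximum lag.

```python
import itertools

def candidate_parents(num_features, max_lag):
    """All (feature index, lag) pairs that could serve as a parent (assumed lags 1..max_lag)."""
    return [(j, lag) for j in range(num_features) for lag in range(1, max_lag + 1)]

def num_parent_sets(num_features, max_lag):
    """Number of subsets of the candidate parents: 2^(features * max lag)."""
    return 2 ** (num_features * max_lag)

def enumerate_parent_sets(candidates):
    """Yield every subset of the candidates, from the empty set upward."""
    for size in range(len(candidates) + 1):
        yield from itertools.combinations(candidates, size)

# Small case: explicit enumeration agrees with the closed form.
small = candidate_parents(3, 2)
count_small = sum(1 for _ in enumerate_parent_sets(small))
print(count_small, num_parent_sets(3, 2))

# Larger hypothetical case: far too many sets to enumerate.
print(num_parent_sets(40, 10) > 10 ** 100)
```

Because the EAR measure does not decompose over individual parents, no subset can be ruled out from the scores of its members alone, so an exact search would have to consider all of these sets.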

Finding the optimal structure is hard (continued) • Evaluating the EAR measure is computationally intensive. • During the short time of the workshop we restricted ourselves to evaluating $\mathrm{EAR}(\{Z_i\})$ for parent sets of size 1.

Approximation of the EAR criterion • We approximated $\mathrm{EAR}(\{Z_1, \dots, Z_k\})$ with $\mathrm{EAR}(\{Z_1\}) + \dots + \mathrm{EAR}(\{Z_k\})$ • This is a crude heuristic, which gave reasonable performance for k = 2.
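Under this additive approximation, choosing a parent set reduces to ranking candidates by their single-parent scores. The sketch below shows one way that selection could look. The function names, the candidate labels, and the scores are hypothetical, and the rule of discarding non-positive scores is an assumption motivated by the requirement that EAR be positive for discriminative performance to improve.

```python
def approx_ear(parent_set, single_ear):
    """Additive approximation: sum of the single-parent EAR scores."""
    return sum(single_ear[z] for z in parent_set)

def best_parent_set(single_ear, k):
    """Top-k candidates by single-parent EAR, keeping only positive scores.

    Under the additive approximation this maximizes approx_ear over all
    parent sets of size at most k.
    """
    ranked = sorted(single_ear, key=single_ear.get, reverse=True)
    return [z for z in ranked[:k] if single_ear[z] > 0]

# Hypothetical single-parent EAR scores for four candidate parents of one feature.
scores = {"Z_a": 0.30, "Z_b": -0.10, "Z_c": 0.05, "Z_d": 0.20}

chosen = best_parent_set(scores, k=2)
print(chosen)
print(approx_ear(chosen, scores))
```

As the earlier non-decomposability example shows, this ranking can miss parent sets whose members score poorly alone but well together, which is why the slides describe it as a crude heuristic.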