Floating Point (FLP) Representation

This article explains floating point representation, focusing on IEEE Standard 754. We discuss the structure of floating point values: normalization, mantissa, exponent, and sign. You'll learn how values are computed and represented in the single and double precision formats, how the exponent is stored with a bias, and when overflow and underflow occur. We also cover how the width of the fraction field determines precision, and give practical examples of converting decimal values into normalized binary form.


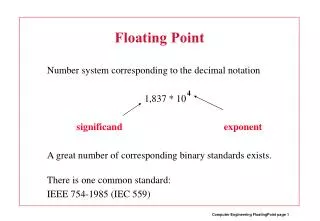

Floating Point (FLP) Representation
A floating point value is written as f = m*r**e, where:
• m – mantissa (the fractional part)
• r – base or radix, usually r = 2
• e – exponent

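Normalization
• The values below are binary fractions. A mantissa is normalized when the first bit after the binary point is 1.
• Normalized value: 0.1011
• Unnormalized value: 0.001011
• Normalization: 0.001011 = 0.001011*(2**2)*(2**-2) = 0.1011*2**-2
• Range of a normalized mantissa: 0.5 <= m < 1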
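Python's standard `math.frexp` happens to use the same convention as this slide: it returns (m, e) with x = m*2**e and 0.5 <= |m| < 1. The sketch below uses it to normalize the slide's value 0.001011 (binary), which is 11/64 = 0.171875 in decimal. The helper `binary_fraction` is a hypothetical name introduced only to print the mantissa bits.

```python
import math

def binary_fraction(m: float, bits: int) -> str:
    """Render 0 <= m < 1 as '0.' followed by `bits` binary digits (truncated)."""
    digits = []
    for _ in range(bits):
        m *= 2              # shift the next bit in front of the binary point
        bit = int(m)
        digits.append(str(bit))
        m -= bit
    return "0." + "".join(digits)

x = 0.171875                       # 0.001011 in binary (unnormalized)
print(binary_fraction(x, 6))       # 0.001011
m, e = math.frexp(x)               # x == m * 2**e with 0.5 <= m < 1
print(binary_fraction(m, 4), e)    # 0.1011 -2
```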

FLP Format
• Fields: sign | exponent | mantissa
• Sign bit: 0 for +, 1 for -
• Biased exponent: assume the exponent has q bits, so the true exponent satisfies -2**(q-1) <= e <= 2**(q-1)-1
• Adding the bias 2**(q-1) to all sides gives 0 <= eb <= 2**q - 1
• e – true exponent; eb – biased exponent (eb = e + 2**(q-1)), which is never negative
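A small sketch of the bias arithmetic, assuming the slide's convention bias = 2**(q-1). (The IEEE formats later in the article use a slightly different bias, such as 127 for an 8-bit exponent.) The function name `exponent_ranges` is illustrative only.

```python
def exponent_ranges(q: int) -> dict:
    """True and biased exponent ranges for a q-bit exponent with bias 2**(q-1)."""
    bias = 2 ** (q - 1)
    e_min, e_max = -(2 ** (q - 1)), 2 ** (q - 1) - 1
    return {
        "bias": bias,
        "true": (e_min, e_max),
        # adding the bias to every side of the inequality
        "biased": (e_min + bias, e_max + bias),
    }

print(exponent_ranges(10))
# {'bias': 512, 'true': (-512, 511), 'biased': (0, 1023)}
```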

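Example
• f = -0.5078125*2**-2
• Assume a 32-bit format: sign – 1 bit, exponent – 10 bits (q = 10), mantissa – 21 bits
• q-1 = 9, so the bias is b = 2**9 = 512
• e = -2, so eb = e + b = -2 + 512 = 510 = 0111111110 in 10 bits
• Mantissa: 0.5 = 0.1 and 0.0078125 = 2**-7 in binary, so 0.5078125 = 0.1000001 (in this format the leading 1 is stored)
• f representation (sign, exponent, mantissa): 1 0111111110 10000010………0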
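The encoding steps above can be sketched in Python for the slide's hypothetical 1/10/21-bit format. Assumptions: `encode_flp32` is an illustrative name, the leading 1 of the mantissa is stored explicitly (no hidden bit), and extra mantissa bits are truncated rather than rounded.

```python
import math

def encode_flp32(f: float, q: int = 10, mant_bits: int = 21) -> str:
    """Encode f as 'sign biased_exponent mantissa' in the slide's 32-bit format."""
    if f == 0:
        raise ValueError("zero has no normalized representation in this sketch")
    sign = 1 if f < 0 else 0
    m, e = math.frexp(abs(f))          # abs(f) == m * 2**e, 0.5 <= m < 1
    eb = e + 2 ** (q - 1)              # biased exponent, bias = 2**(q-1)
    if not 0 <= eb <= 2 ** q - 1:
        raise ValueError("exponent out of range: overflow or underflow")
    mant = int(m * 2 ** mant_bits)     # first mant_bits bits after the binary point (truncated)
    return f"{sign} {eb:0{q}b} {mant:0{mant_bits}b}"

print(encode_flp32(-0.5078125 * 2 ** -2))
# 1 0111111110 100000100000000000000
```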

Range of representation
• In fixed point, the largest number representable in 32 bits is 2**31-1, approximately 2*10**9
• In the previous 32-bit format, the largest number representable is (1-2**-21)*2**511, on the order of 10**153 (about 6.7*10**153)
• The smallest positive normalized number is 0.5*2**-512
• If a result's magnitude is above the largest, we have an overflow; if a nonzero magnitude is below the smallest, we have an underflow.
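The range figures can be checked numerically. This sketch assumes the same hypothetical 1/10/21-bit format; since its limits fall well inside Python's double range, ordinary floats can hold them. `classify` is an illustrative helper that checks magnitude only, not precision.

```python
import math

Q, MANT_BITS = 10, 21          # the slide's hypothetical 1/10/21-bit format
E_MAX = 2 ** (Q - 1) - 1       # 511
E_MIN = -(2 ** (Q - 1))        # -512

largest = (1 - 2 ** -MANT_BITS) * 2.0 ** E_MAX
smallest = 0.5 * 2.0 ** E_MIN  # smallest positive normalized value
fixed_max = 2 ** 31 - 1        # largest 32-bit signed fixed-point integer

def classify(x: float) -> str:
    """Report whether |x| falls outside this format's normalized range."""
    a = abs(x)
    if a > largest:
        return "overflow"
    if 0 < a < smallest:
        return "underflow"
    return "representable range"   # zero is treated as a special case here

print(f"{fixed_max:.3e}  {largest:.3e}  {smallest:.3e}")
print(classify(1e160), classify(1e-160), classify(1.0))
```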

IEEE FLP Standard 754-1985
• Single precision: 32 bits
• Double precision: 64 bits
Single precision:
• f = +-1.M*2**(E’-127), where M is the fraction and E’ is the biased exponent; bias = 127
• Format: sign – 1 bit, exponent – 8 bits, fraction – 23 bits
• True exponent E = E’ - 127
• For normalized values 0 < E’ < 255, so -126 <= E <= 127; E’ = 0 and E’ = 255 are reserved for special values (zero and denormalized numbers, infinity and NaN)
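The single precision formula can be applied directly to a 32-bit pattern. This is a sketch that handles normalized values only; `decode_single` and `float32_bits` are hypothetical helper names, and the bit pattern is obtained with Python's standard `struct` module (`'>f'` packs a big-endian IEEE single).

```python
import struct

def float32_bits(x: float) -> int:
    """Bit pattern of x rounded to IEEE single precision."""
    return struct.unpack(">I", struct.pack(">f", x))[0]

def decode_single(bits: int) -> float:
    """Apply f = (-1)**s * 1.M * 2**(E'-127) to a 32-bit pattern (normalized only)."""
    s = bits >> 31
    e_biased = (bits >> 23) & 0xFF
    frac = bits & 0x7FFFFF                     # the 23 stored bits of M
    if not 0 < e_biased < 255:
        raise ValueError("E' = 0 or 255: special value, not handled in this sketch")
    significand = 1 + frac / 2 ** 23           # restore the hidden leading 1
    return (-1) ** s * significand * 2.0 ** (e_biased - 127)

bits = float32_bits(-0.15625)                  # -1.01 (binary) * 2**-3
print(hex(bits), decode_single(bits))          # 0xbe200000 -0.15625
```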

Normalized single precision
• Normalized form: 1.xxxxxx
• The 1 before the binary point is not stored, but is assumed to exist (the hidden bit).
• Example: convert 5.25 to single precision representation.
• 5.25 = 101.01 in binary, which is not normalized.
• Normalized: 1.0101*2**2
• True exponent E = 2
• Biased exponent E’ = E + 127 = 129 = 10000001, thus:
• 0 10000001 01010…………0 (0x40A80000 in hexadecimal)
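The 5.25 conversion can be cross-checked with Python's standard `struct` module, which packs a value into IEEE single precision. `single_fields` is a hypothetical helper name; it splits the packed bits into sign, biased exponent E’, and the 23 stored fraction bits.

```python
import struct

def single_fields(x: float) -> tuple[int, int, int]:
    """Return (sign, biased exponent E', 23-bit fraction M) of x in IEEE single precision."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    return bits >> 31, (bits >> 23) & 0xFF, bits & 0x7FFFFF

s, e_biased, frac = single_fields(5.25)
print(s, f"{e_biased:08b}", f"{frac:023b}")
# 0 10000001 01010000000000000000000
print("true exponent E =", e_biased - 127)    # true exponent E = 2
```

The hidden 1 does not appear in `frac`: only the bits after the binary point (0101...) are stored.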

Double precision
• Value represented: +-1.M*2**(E’-1023)
• Format: sign – 1 bit, exponent – 11 bits, fraction – 52 bits
• Bias = 1023
• Maximal number represented in single precision: approximately 3.4*10**38
• In double precision: approximately 1.8*10**308
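Python's `float` is an IEEE double, so the same field split works with `struct` format `'>d'`. The sketch also computes both maximal values from the formula 1.M*2**(E’-bias) with all fraction bits set and the largest normal E’; `double_fields` is a hypothetical helper name.

```python
import struct
import sys

def double_fields(x: float) -> tuple[int, int, int]:
    """Return (sign, biased exponent E' (11 bits), 52-bit fraction M) of a Python float."""
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))
    return bits >> 63, (bits >> 52) & 0x7FF, bits & ((1 << 52) - 1)

# Largest finite values: 1.11...1 (binary) times 2**(largest true exponent)
max_single = (2 - 2 ** -23) * 2.0 ** 127       # E' = 254, E = 127
max_double = (2 - 2 ** -52) * 2.0 ** 1023      # E' = 2046, E = 1023

s, e_biased, frac = double_fields(5.25)
print(e_biased, e_biased - 1023)               # 1025 2
print(f"{max_single:.3e}")                     # 3.403e+38
print(max_double == sys.float_info.max)        # True
```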

Precision
• For a fixed word size, enlarging the exponent field increases the range, but the fraction field shrinks, decreasing the precision.
• Suppose we want a precision of n decimal digits. How many bits x do we need in the fraction?
• 2**x = 10**n; taking the decimal log of both sides: x*log2 = n, so x = n/log2 = n/0.301
• For n = 7 we need 7/0.301 = 23.3, i.e. 24 bits.
• Single precision achieves this: M has 23 bits, plus the leading 1 that is not stored but exists, giving 24 significant bits.
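The relation x = n/log2 can be evaluated in both directions. A minimal sketch; the function names are illustrative, and the bit counts used below include the hidden leading 1 (24 for single, 53 for double).

```python
import math

def fraction_bits_needed(n_digits: int) -> int:
    """Bits x needed so that 2**x >= 10**n, i.e. x = n / log10(2) rounded up."""
    return math.ceil(n_digits / math.log10(2))

def decimal_digits(significand_bits: int) -> float:
    """Inverse direction: n = x * log10(2) decimal digits for x significand bits."""
    return significand_bits * math.log10(2)

print(fraction_bits_needed(7))          # 24
print(round(decimal_digits(24), 2))     # 7.22  (single: 23 stored bits + hidden 1)
print(round(decimal_digits(53), 2))     # 15.95 (double: 52 stored bits + hidden 1)
```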

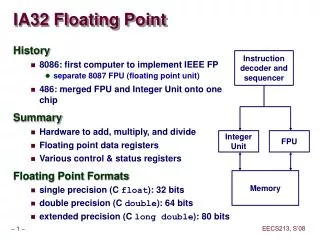

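Extended Precision (80 bits)
• Its exact layout is not fixed by the IEEE standard (IEEE 754 only sets minimum requirements for extended formats); it is used primarily in Intel (x87) processors.
• Exponent: 15 bits
• Fraction: 64 bits (here the leading 1 is stored explicitly rather than hidden)
• This is why the FLP registers in Intel processors are 80 bits wide and not 64.
• Its precision is about 19 decimal digits.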
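Python has no portable 80-bit float type, so this sketch decodes an 80-bit pattern exactly with `fractions.Fraction`. It assumes the usual x87 layout: sign (1 bit) | exponent (15 bits, bias 16383) | 64-bit significand whose leading 1 is stored explicitly. `decode_x87_extended` is a hypothetical name, and only normalized values are handled.

```python
import math
from fractions import Fraction

def decode_x87_extended(bits: int) -> Fraction:
    """Exact value of an 80-bit extended pattern (normalized values only)."""
    sign = bits >> 79
    e_biased = (bits >> 64) & 0x7FFF
    significand = bits & ((1 << 64) - 1)       # 64 bits, explicit leading 1 at bit 63
    if not (0 < e_biased < 0x7FFF) or not (significand >> 63):
        raise ValueError("only normalized values are handled in this sketch")
    value = Fraction(significand, 1 << 63) * Fraction(2) ** (e_biased - 16383)
    return -value if sign else value

# 5.25 = 1.0101 (binary) * 2**2: E' = 2 + 16383 = 0x4001, significand 10101000...0
bits_525 = (0x4001 << 64) | 0xA800000000000000
print(decode_x87_extended(bits_525))           # 21/4
print(round(64 * math.log10(2), 2))            # 19.27 decimal digits
```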

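FLP Computation
• Given 2 FLP values: X = Xm*2**Xe; Y = Ym*2**Ye; Xe <= Ye
• X+-Y = (Xm*2**(Xe-Ye) +- Ym)*2**Ye (the mantissa with the smaller exponent is shifted right to align the exponents)
• X*Y = (Xm*Ym)*2**(Xe+Ye)
• X/Y = (Xm/Ym)*2**(Xe-Ye)
• In each case the resulting mantissa may need to be renormalized, adjusting the exponent accordingly.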
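The four rules can be simulated on (mantissa, exponent) pairs. This is a sketch under stated assumptions: the mantissa arithmetic itself uses Python floats, so it does not model rounding to a fixed mantissa width, and all function names are illustrative. Renormalization to 0.5 <= |m| < 1 uses `math.frexp`.

```python
import math

def normalize(m: float, e: int) -> tuple[float, int]:
    """Renormalize so that 0.5 <= |m| < 1 (zero becomes (0.0, 0))."""
    if m == 0:
        return 0.0, 0
    m2, shift = math.frexp(m)
    return m2, e + shift

def flp_add(x, y):
    """X + Y: align to the larger exponent, add mantissas, renormalize."""
    (xm, xe), (ym, ye) = sorted([x, y], key=lambda p: p[1])   # ensure xe <= ye
    aligned = math.ldexp(xm, xe - ye)      # Xm * 2**(Xe - Ye): shift right
    return normalize(aligned + ym, ye)

def flp_sub(x, y):
    return flp_add(x, (-y[0], y[1]))

def flp_mul(x, y):
    return normalize(x[0] * y[0], x[1] + y[1])

def flp_div(x, y):
    return normalize(x[0] / y[0], x[1] - y[1])

def value(p):
    return math.ldexp(p[0], p[1])          # m * 2**e

X = (0.75, 3)   # 6.0
Y = (0.5, 5)    # 16.0
print(value(flp_add(X, Y)), value(flp_mul(X, Y)), value(flp_div(X, Y)))
# 22.0 96.0 0.375
```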