Decision Analysis: Navigating Risk and Uncertainty in Decision-Making

This presentation explores the fundamentals of decision analysis, including the concepts of risk and uncertainty, expected utility theory, and various decision-making strategies. Key topics such as the St. Petersburg game, Ellsberg Paradox, and decision criteria like maximin, maximax, and minimax regret are discussed. The influence of junk science and the precautionary principle on decisions is examined. Additionally, approaches to model uncertainties, probabilities under imprecision, and rationality in decision-making are highlighted, providing tools to enhance informed decision-making.

Decision Analysis Scott Ferson, scott@ramas.com 25 September 2007, Stony Brook University, MAR 550, Challenger 165

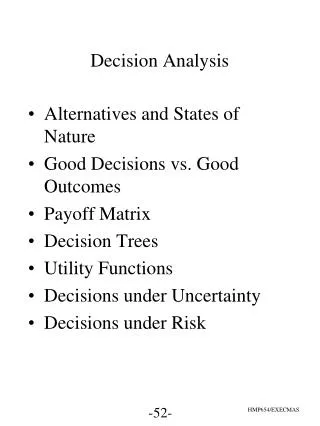

Outline • Risk and uncertainty • Expected utility decisions • St. Petersburg game, Ellsberg Paradox • Decisions under uncertainty • Maximin, maximax, Hurwicz, minimax regret, etc. • Junk science and the precautionary principle • Decisions under ranked probabilities • Extreme expected payoffs • Decisions under imprecision • E-admissibility, maximality, Γ-maximin, Γ-maximax, etc. • Synopsis and conclusions

Decision theory • Formal process for evaluating possible actions and making decisions • Statistical decision theory is decision theory using statistical information • Knight (1921) • Decision under risk (probabilities known) • Decision under uncertainty (probabilities not known)

Discrete decision problem • Actions Ai (strategies, decisions, choices) • Scenarios Sj • Payoffs Xij for action Ai in scenario Sj • Probability Pj (if known) of scenario Sj • Decision criterion

| | S1 | S2 | S3 | … |
|---|---|---|---|---|
| A1 | X11 | X12 | X13 | … |
| A2 | X21 | X22 | X23 | … |
| A3 | X31 | X32 | X33 | … |
| ⋮ | ⋮ | ⋮ | ⋮ | |
| Probability | P1 | P2 | P3 | … |

Decisions under risk • If you make many similar decisions, then you’ll perform best in the long run using “expected utility” (EU) as the decision rule • EU = maximize expected utility (Pascal 1670) • Pick the action Ai so that \( \sum_j P_j X_{ij} \) is biggest

Example

| | Scenario 1 | Scenario 2 | Scenario 3 | Scenario 4 |
|---|---|---|---|---|
| Action A | 10 | 5 | 15 | 5 |
| Action B | 20 | 10 | 0 | 5 |
| Action C | 10 | 10 | 20 | 15 |
| Action D | 0 | 5 | 60 | 25 |
| Probability | .5 | .25 | .15 | .1 |

• Action A: 10*.5 + 5*.25 + 15*.15 + 5*.1 = 9
• Action B: 20*.5 + 10*.25 + 0*.15 + 5*.1 = 13
• Action C: 10*.5 + 10*.25 + 20*.15 + 15*.1 = 12
• Action D: 0*.5 + 5*.25 + 60*.15 + 25*.1 = 12.75

Maximizing expected utility prefers action B
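The arithmetic on this slide can be checked with a few lines of Python. This is only a sketch: the names `payoffs`, `probabilities` and `expected_utility` are illustrative and do not come from the presentation.

```python
# Payoff matrix from the example slide: one row per action, one column per scenario.
payoffs = {
    "A": [10, 5, 15, 5],
    "B": [20, 10, 0, 5],
    "C": [10, 10, 20, 15],
    "D": [0, 5, 60, 25],
}
probabilities = [0.5, 0.25, 0.15, 0.1]  # scenario probabilities from the slide


def expected_utility(row, probs):
    """Probability-weighted sum of one action's payoffs."""
    return sum(p * x for p, x in zip(probs, row))


eu = {action: expected_utility(row, probabilities) for action, row in payoffs.items()}
best_action = max(eu, key=eu.get)  # action with the largest expected utility
print(eu, best_action)
```

Changing `probabilities` shows how sensitive the preferred action is to the assumed scenario probabilities.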

Strategizing possible actions • Office printing: repair old printer; buy new HP printer; buy new Lexmark printer; outsource print jobs • Protecting Australian marine resources: undertake treaty to define marine reserve; pay Australian fishing vessels not to exploit; pay all fishing vessels not to exploit; repel encroachments militarily; support further research; do nothing

Scenario development • Office printing: printing needs stay about the same/decline/explode; paper/ink/drum costs vary; printer fails out of warranty • Protecting Australian marine resources: fishing varies in response to ‘healthy diet’ ads/mercury scare; poaching increases/decreases; coastal fishing farms flourish/are decimated by viral disease; new longline fisheries adversely affect wild fish populations; international opinion fosters environmental cooperation; Chinese/Taiwanese tensions increase in areas near reserve

How do we get the probabilities? • Modeling, risk assessment, or prediction • Subjective assessment • Asserting A means you’ll pay $1 if not A • If the probability of A is P, then a Bayesian agrees to assert A for a fee of $(1-P), and to assert not-A for a fee of $P • Different people will have different Ps for a given A

Rationality • Your probabilities must make sense • Coherent if your bets don’t expose you to sure loss (a guaranteed loss no matter what the actual outcome is) • Probabilities larger than one are incoherent • Dutch books are incoherent • Let P(X) denote the price of a promise to pay $1 if X • Setting P(Hillary OR Obama) to something other than the sum P(Hillary) + P(Obama) is a Dutch book • If P(Hillary OR Obama) is smaller than the sum, someone could make a sure profit by buying it from you and selling you the other two
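The sure loss in the last bullet can be made concrete with a small sketch. The prices below (50¢, 40¢ and 80¢) are invented for illustration and are not from the presentation; the two events are assumed to be mutually exclusive, and amounts are in cents to keep the arithmetic exact.

```python
PAYOUT = 100  # each promise pays $1 (100 cents) if its event happens


def your_net_cents(outcome, price_h, price_o, price_h_or_o):
    """Your net result when an opponent buys 'Hillary OR Obama' from you
    and sells you the two separate promises, all at your own prices."""
    # You sold the promise on "Hillary OR Obama": collect its price, pay out if it occurs.
    net = price_h_or_o - (PAYOUT if outcome in ("Hillary", "Obama") else 0)
    # You bought the promise on "Hillary": pay its price, collect if she wins.
    net += -price_h + (PAYOUT if outcome == "Hillary" else 0)
    # You bought the promise on "Obama": pay its price, collect if he wins.
    net += -price_o + (PAYOUT if outcome == "Obama" else 0)
    return net


# Hypothetical incoherent prices: 80 is smaller than 50 + 40.
nets = {o: your_net_cents(o, 50, 40, 80) for o in ("Hillary", "Obama", "neither")}
print(nets)  # the same loss in every outcome
```

With coherent prices (for example 50, 40 and 90) the same trades net to zero in every outcome, so no sure profit is available to the opponent.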

St. Petersburg game • Pot starts at 1¢ • Pot doubles with every coin toss • Coin tossed until “tail” appears • You win whatever’s in the pot • What would you pay to play?

| First tail | Winnings ($) |
|---|---|
| 1 | 0.01 |
| 2 | 0.02 |
| 3 | 0.04 |
| 4 | 0.08 |
| 5 | 0.16 |
| 6 | 0.32 |
| 7 | 0.64 |
| 8 | 1.28 |
| 9 | 2.56 |
| 10 | 5.12 |
| 11 | 10.24 |
| 12 | 20.48 |
| 13 | 40.96 |
| 14 | 81.92 |
| 15 | 163.84 |
| … | … |
| 28 | 1,342,177.28 |
| 29 | 2,684,354.56 |
| 30 | 5,368,709.12 |
| … | … |

Bernoulli boys (early 1700’s): D. Bernoulli believed that the notion of utility was sufficient to solve this paradox.
The winnings column was generated with: for i = 1 to 100 do say i, tab, tab, 2^(i-1)/100 (the value for i = 100 is 6.3382530011e+27).

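What’s a fair price? • The expected winnings would be a fair price • The chance of ending the game on the kth toss (i.e., the chance of getting k−1 heads in a row followed by a tail) is \( 1/2^k \) • If the game ends on the kth toss, the winnings would be \( 2^{k-1} \) cents • The expected winnings are therefore \( \sum_{k=1}^{\infty} 2^{k-1}/2^k = \tfrac{1}{2} + \tfrac{1}{2} + \cdots = \infty \) cents • So you should be willing to pay any price to play this game of chance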

St. Petersburg paradox • The paradox is that nobody’s gonna pay more than a few cents to play • To see why, and for a good time, call http://www.mathematik.com/Petersburg/Petersburg.html • No “solution” really resolves the paradox: bankrolls are actually finite; can’t buy what’s not sold; diminishing marginal utility of money
If the game is truncated, then a related paradox emerges that we might call the “Powerball paradox”, in which people grossly overpay (relative to the EU) to play the game. Consider the expected winnings of the St. Petersburg game if the coin tossing is limited to 35 tosses. The maximum possible winnings (i.e., 35 heads) would be about 172 million dollars. The EU for such a game would be less than 20 cents, yet a lot of people would be willing to throw away substantially more than this, perhaps a dollar or two, for even a remote chance at 172 million. The reason is that people overweight low-probability events (Kahneman and Tversky).
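The "less than 20 cents" figure for the truncated game can be reproduced with a short sketch. It assumes the payoff rule from the earlier slides (ending on toss k has probability 1/2^k and pays 2^(k−1) cents) and, as a simplifying assumption, ignores any payout when no tail appears within the limit. The function name is illustrative.

```python
from fractions import Fraction


def expected_winnings_cents(max_tosses):
    """Expected winnings in cents when the game may end on tosses 1..max_tosses.

    Ending on toss k has probability 1/2**k and pays 2**(k-1) cents,
    so each possible ending toss contributes exactly half a cent.
    """
    return sum(Fraction(1, 2**k) * 2 ** (k - 1) for k in range(1, max_tosses + 1))


print(float(expected_winnings_cents(35)))   # expectation for the 35-toss game, in cents
print(float(expected_winnings_cents(100)))  # grows by only half a cent per extra toss
```

Because each additional toss adds only half a cent, the expectation diverges very slowly, which is one way to see why nobody pays much to play.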

Utilities • Payoffs needn’t be in terms of money • Probably shouldn't be if the marginal value of different amounts varies widely • Compare $10 for a child versus Bill Gates • A small profit may be a lot more valuable than the amount of money it takes to cover a small loss • Use utilities in matrix instead of dollars

Risk aversion • EITHER get $50 • OR get $100 if a ball drawn at random from an urn with half red and half blue balls is red • Which prize do you want? • EU is the same, but most people take the sure $50
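Measured in dollars, both options have the same expectation of $50. One common way to represent a preference for the sure thing, in the spirit of the Utilities slide, is a concave utility function. The square-root utility below is purely an assumption for illustration; the presentation does not specify any utility function.

```python
import math


def utility(dollars):
    """Hypothetical concave utility of money (square root), for illustration only."""
    return math.sqrt(dollars)


expected_dollars_sure = 50
expected_dollars_gamble = 0.5 * 100 + 0.5 * 0  # same dollar expectation

utility_sure = utility(50)
utility_gamble = 0.5 * utility(100) + 0.5 * utility(0)  # expected utility of the gamble

print(expected_dollars_sure, expected_dollars_gamble)
print(utility_sure, utility_gamble)  # the sure $50 scores higher under this utility
```

Any strictly concave utility gives the same ordering; the size of the gap depends on how sharply marginal utility diminishes.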

Ellsberg Paradox • Balls can be red, black or yellow (probs are R, B, Y) • A well-mixed urn has 30 red balls and 60 other balls • Don’t know how many are black, how many are yellow

| Gamble | Payoff |
|---|---|
| A | Get $100 if draw red |
| B | Get $100 if draw black |
| C | Get $100 if red or yellow |
| D | Get $100 if black or yellow |

Preferring A to B implies R > B; preferring D to C implies R + Y < B + Y

Persistent paradox • Most people prefer A to B (so are saying R > B) but also prefer D to C (saying R < B) • Doesn’t depend on your utility function • Payoff size is irrelevant • Not related to risk aversion • Evidence for ambiguity aversion • Can’t be accounted for by EU
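The inconsistency can be checked by brute force. The sketch below tries every possible number of black balls among the 60 non-red balls and asks whether any composition makes both popular preferences agree with expected utility. Since the prize is the same in all four gambles, comparing winning counts is enough. Variable names are illustrative.

```python
RED = 30      # known number of red balls
OTHERS = 60   # black plus yellow, in unknown proportion

consistent_compositions = []
for black in range(OTHERS + 1):
    yellow = OTHERS - black
    prefers_a_to_b = RED > black                    # A (red) beats B (black)
    prefers_d_to_c = black + yellow > RED + yellow  # D (black or yellow) beats C (red or yellow)
    if prefers_a_to_b and prefers_d_to_c:
        consistent_compositions.append(black)

print(consistent_compositions)  # compositions that rationalize both preferences
```

A belief spread over several compositions is an average of these cases, so it cannot satisfy both inequalities either.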

Ambiguity aversion • Balls can be either red or blue • Two urns, both with 36 balls • Get $100 if a randomly drawn ball is red • Which urn do you wanna draw from?

Assumptions • Discrete decisions • Closed world \( \sum_j P_j = 1 \) • Analyst can come up with Ai, Sj, Xij, Pj • Ai and Sj are few in number • Xij are unidimensional • Ai not rewarded/punished beyond payoff • Picking Ai doesn’t influence scenarios • Uncertainty about Xij is negligible

Why not use EU? • Clearly doesn’t describe how people act • Needs a lot of information to use • Unsuitable for important unique decisions • Inappropriate if gambler’s ruin is possible • Sometimes Pj are inconsistent • Getting even subjective Pj can be difficult

Decisions without probability • Pareto (some action dominates in all scenarios) • Maximin (largest minimum payoff) • Maximax (largest maximum payoff) • Hurwicz (largest average of min and max payoffs) • Minimax regret (smallest of maximum regret) • Bayes-Laplace (max EU assuming equiprobable scenarios)

Maximin • Cautious decision maker • Select Ai that gives largest minimum payoff (across Sj) • Important if “gambler’s ruin” is possible (e.g. extinction) • Chooses action C

| Action Ai \ Scenario Sj | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| A | 10 | 5 | 15 | 5 |
| B | 20 | 10 | 0 | 5 |
| C | 10 | 10 | 20 | 15 |
| D | 0 | 5 | 60 | 25 |
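As a minimal sketch, the maximin rule on this payoff table takes two lines of Python. The dictionary layout and variable names are illustrative choices, not part of the presentation.

```python
# Payoffs from the slide: one row per action, one column per scenario.
payoffs = {
    "A": [10, 5, 15, 5],
    "B": [20, 10, 0, 5],
    "C": [10, 10, 20, 15],
    "D": [0, 5, 60, 25],
}

# Worst payoff each action can produce, then the action whose worst case is best.
worst_case = {action: min(row) for action, row in payoffs.items()}
maximin_action = max(worst_case, key=worst_case.get)
print(worst_case, maximin_action)
```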

Maximax • Optimistic decision maker • Loss-tolerant decision maker • Examine max payoffs across Sj • Select Ai with the largest of these • Prefers action D

| Action Ai \ Scenario Sj | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| A | 10 | 5 | 15 | 5 |
| B | 20 | 10 | 0 | 5 |
| C | 10 | 10 | 20 | 15 |
| D | 0 | 5 | 60 | 25 |

Hurwicz • Compromise of maximin and maximax • Index of pessimism h where \( 0 \le h \le 1 \) • Average min and max payoffs weighted by h and (1−h) respectively • Select Ai with highest average • If h=1, it’s maximin • If h=0, it’s maximax • Favors D if h=0.5

| Action Ai \ Scenario Sj | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| A | 10 | 5 | 15 | 5 |
| B | 20 | 10 | 0 | 5 |
| C | 10 | 10 | 20 | 15 |
| D | 0 | 5 | 60 | 25 |
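A short sketch of the Hurwicz rule, using the weighting described on the slide (h on the minimum, 1−h on the maximum). The function names are illustrative. Setting h to 1 or 0 recovers maximin and maximax respectively.

```python
payoffs = {
    "A": [10, 5, 15, 5],
    "B": [20, 10, 0, 5],
    "C": [10, 10, 20, 15],
    "D": [0, 5, 60, 25],
}


def hurwicz_scores(payoffs, h):
    """Weighted average of each action's worst and best payoff; h is the index of pessimism."""
    return {a: h * min(row) + (1 - h) * max(row) for a, row in payoffs.items()}


def hurwicz_choice(payoffs, h):
    """Action with the highest Hurwicz score (the first one found if several tie)."""
    scores = hurwicz_scores(payoffs, h)
    return max(scores, key=scores.get)


for h in (0.0, 0.5, 1.0):
    print(h, hurwicz_scores(payoffs, h), hurwicz_choice(payoffs, h))
```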

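Minimax regret • Several competing decision makers • Regret \( R_{ij} = \max_i X_{ij} - X_{ij} \), i.e., (max payoff under Sj) minus Xij • Replace Xij with regret Rij • Select Ai with smallest max regret • Favors action D

Payoff

| Action | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| A | 10 | 5 | 15 | 5 |
| B | 20 | 10 | 0 | 5 |
| C | 10 | 10 | 20 | 15 |
| D | 0 | 5 | 60 | 25 |
| minuends | (20) | (10) | (60) | (25) |

Regret

| Action | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| A | 10 | 5 | 45 | 20 |
| B | 0 | 0 | 60 | 20 |
| C | 10 | 0 | 40 | 10 |
| D | 20 | 5 | 0 | 0 |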
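The regret table can be derived mechanically from the payoff table. In this sketch (variable names are illustrative), the column maxima play the role of the minuends on the slide.

```python
payoffs = {
    "A": [10, 5, 15, 5],
    "B": [20, 10, 0, 5],
    "C": [10, 10, 20, 15],
    "D": [0, 5, 60, 25],
}

# Best achievable payoff in each scenario (the "minuends").
column_max = [max(col) for col in zip(*payoffs.values())]

# Regret: how far each action falls short of the best payoff in each scenario.
regret = {a: [m - x for m, x in zip(column_max, row)] for a, row in payoffs.items()}

max_regret = {a: max(r) for a, r in regret.items()}
minimax_regret_action = min(max_regret, key=max_regret.get)
print(column_max, regret, minimax_regret_action)
```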

Bayes-Laplace • Assume all scenarios are equally likely • Use maximum expected value • Chris Rock’s lottery investments • Prefers action D
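With equally likely scenarios, expected value reduces to the plain average of each row. A minimal sketch on the same payoff table used for the other criteria (names are illustrative):

```python
from statistics import mean

payoffs = {
    "A": [10, 5, 15, 5],
    "B": [20, 10, 0, 5],
    "C": [10, 10, 20, 15],
    "D": [0, 5, 60, 25],
}

# Equal scenario weights make the expected payoff the simple row average.
average_payoff = {a: mean(row) for a, row in payoffs.items()}
bayes_laplace_action = max(average_payoff, key=average_payoff.get)
print(average_payoff, bayes_laplace_action)
```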

Pareto • Choose an action if it can’t lose • Select Ai if its payoff is always biggest (across Sj) • Chooses action B

| Action Ai \ Scenario Sj | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| A | 10 | 5 | 5 | 5 |
| B | 20 | 15 | 30 | 25 |
| C | 10 | 10 | 20 | 15 |
| D | 0 | 5 | 20 | 25 |
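A dominance check for this slide's table might look like the sketch below. It uses weak dominance (at least as good in every scenario and strictly better in at least one), which is an assumption about how ties should be treated: B and D tie in scenario 4.

```python
# Payoffs from the Pareto slide (note: different numbers from the earlier slides).
payoffs = {
    "A": [10, 5, 5, 5],
    "B": [20, 15, 30, 25],
    "C": [10, 10, 20, 15],
    "D": [0, 5, 20, 25],
}


def dominates(row_a, row_b):
    """True if row_a is never worse than row_b and is strictly better somewhere."""
    pairs = list(zip(row_a, row_b))
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)


# Actions that dominate every other action.
dominant = [
    a for a in payoffs
    if all(dominates(payoffs[a], payoffs[b]) for b in payoffs if b != a)
]
print(dominant)
```

In the payoff table used on the other slides no action dominates all the others, which is why the remaining criteria are needed.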

Why not • Complete lack of knowledge of Pj is rare • Except for Bayes-Laplace, the criteria depend non-robustly on extreme payoffs • Intermediate payoffs may be more likely than extremes (especially when extremes don’t differ much)

Junk science (sensu Milloy) • “Faulty scientific data and analysis used to further a special agenda” • Myths and misinformation from scientists, regulators, attorneys, media, and activists seeking money, fame or social change that create alarm about pesticides, global warming, second-hand smoke, radiation, etc. • Surely not all science is sound: small sample sizes; wishful thinking; overreaching conclusions; sensationalized reporting • But Milloy has a very narrow definition of science • “The scientific method must be followed or you will soon find yourself heading into the junk science briar patch. … The scientific method [is] just the simple and common process of trial and error. A hypothesis is tested until it is credible enough to be labeled a ‘theory’…. Anecdotes aren’t data. … Statistics aren’t science.” (http://www.junkscience.com/JSJ_Course/jsjudocourse/1.html)
Steven Milloy is a Fox News columnist whose website junkscience.com was hosted by the Cato Institute until revelations that he had accepted money from Exxon while criticizing global warming research and from the Tobacco Institute while criticizing the evidence about adverse health effects of second-hand smoke.

Science is more • Hypothesis testing • Objectivity and repeatability • Specification and clarity • Coherence into theories • Promulgation of results • Full disclosure (biases, uncertainties) • Deduction and argumentation

Classical hypothesis testing • Alpha: probability of Type I error, i.e., accepting a false statement; strictly controlled, usually at 0.05 level, so false statements don’t easily enter the scientific canon • Beta: probability of Type II error, i.e., rejecting a true statement; one minus the power of the test; traditionally left completely uncontrolled

Decision making • Balances the two kinds of error • Weights each kind of error with its cost • Not anti-scientific, but it has a much broader perspective than simple hypothesis testing (Milloy’s criticisms would have merit if he discussed the power of tests that don’t show significance)
One might expect Milloy, who studied law, to be familiar with this idea from jurisprudence, where a Type I error (an innocent person is convicted and the guilty person escapes justice) is considered much worse than the Type II error (acquitting the guilty person).

Why is a balance needed? • Consider arriving at the train station 5 min before, or 5 min after, your train leaves • Error of identical magnitudes • Grossly different costs • Decision theory = scientific way to make optimal decisions given risks and costs

Statistics in the decision context • Estimation and inference problems can be reexpressed as decision problems • Costs are determined by the use that will be made of the statistic or inference • The question isn’t just “whether” anymore, it’s “what are we gonna do” Wald (1939)

It’s not your father’s statistics • Classical statistics addresses the use of sample information to make inferences which are, for the most part, made without regard to the use to which they’ll be put • Modern (Bayesian) statistics combines sample information, plus knowledge of the possible consequences of decisions, and prior information, in order to make the best decision

Policy is not science • Policy making may be sound even if it does not derive specifically from application of the scientific method • The precautionary principle is a non-quantitative way of acknowledging the differences in costs of the two kinds of errors

Precautionary principle (PP) • “Better safe than sorry” • Has entered the general discourse, international treaties and conventions • Some managers have asked how to “defend against” the precautionary principle (!) • Must it mean no risks, no progress?

Two essential elements • Uncertainty • Without uncertainty, what’s at stake would be clear and negotiation and trades could resolve disputes. • High costs or irreversible effects • Without high costs, there’d be no debate. It is these costs that justify shifting the burden of proof.

Proposed guidelines for using PP (Science 12 May 2000) • Transparency • Proportionality • Non-discrimination • Consistency • Explicit examination of the costs and benefits of action or inaction • Review of scientific developments

But consistency is not essential • Managers shouldn’t be bound by prior risky decisions • “Irrational” risk choices very common • e.g., driving cars versus pollutant risks • Different risks are tolerated differently • control • scale • fairness

Take-home messages • Guidelines (a lumper’s version) • Be explicit about your decisions • Revisit the question with more data • Quantitative risk assessments can overrule PP • Balancing errors and their costs is essential for sound decisions

Intermediate approach • Knight’s division is awkward • Rare to know nothing about probabilities • But also rare to know them all precisely • Like to have some hybrid approach

Kmietowicz and Pearman (1981) • Criteria based on extreme expected payoffs • Can be computed if probabilities can be ranked • Arrange scenarios so \( P_j \ge P_{j+1} \) • Extremize partial averages of payoffs, e.g. \( \max_j \left( \sum_{k=1}^{j} X_{ik} / j \right) \)

Difficult example • Neither action dominates the other • Min and max are the same so maximin, maximax and Hurwicz cannot distinguish • Minimax regret and Bayes-Laplace favor A

| | Scenario 1 | Scenario 2 | Scenario 3 | Scenario 4 |
|---|---|---|---|---|
| Action A | 7.5 | -5 | 15 | 9 |
| Action B | 5.5 | 9 | -5 | 15 |

If probabilities are ranked • Maximin chooses B since 3.17 > 1.25 • Maximax slightly prefers A because 7.5 > 7.25 • Hurwicz favors B except in strong optimism • Minimax regret still favors A somewhat (7.33 > 7)

| | Scenario 1 (most likely) | Scenario 2 | Scenario 3 | Scenario 4 (least likely) |
|---|---|---|---|---|
| Action A | 7.5 | -5 | 15 | 9 |
| Action B | 5.5 | 9 | -5 | 15 |
| Partial averages, A | 7.5 | 1.25 | 5.83 | 6.62 |
| Partial averages, B | 5.5 | 7.25 | 3.17 | 6.12 |
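The partial averages and the resulting choices can be reproduced with a short sketch. It assumes the scenarios are already ordered from most to least likely, as on the slide. The helper `partial_averages` and the variable names are illustrative.

```python
from itertools import accumulate

# Payoffs with scenarios ordered from most likely (first) to least likely (last).
payoffs = {"A": [7.5, -5, 15, 9], "B": [5.5, 9, -5, 15]}


def partial_averages(values):
    """Average of the first j values, for j = 1..len(values)."""
    return [total / j for j, total in enumerate(accumulate(values), start=1)]


# Regret in each scenario: best payoff in that scenario minus the action's payoff.
column_max = [max(col) for col in zip(*payoffs.values())]
regrets = {a: [m - x for m, x in zip(column_max, row)] for a, row in payoffs.items()}

min_expected = {a: min(partial_averages(row)) for a, row in payoffs.items()}
max_expected = {a: max(partial_averages(row)) for a, row in payoffs.items()}
max_expected_regret = {a: max(partial_averages(r)) for a, r in regrets.items()}

print(max(min_expected, key=min_expected.get))                # maximin expected payoff
print(max(max_expected, key=max_expected.get))                # maximax expected payoff
print(min(max_expected_regret, key=max_expected_regret.get))  # minimax expected regret
```

The extremes are taken over partial averages because, when only the ranking of the probabilities is known, the smallest and largest possible expected payoffs occur at distributions that spread probability equally over the first j scenarios.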

Sometimes appropriate • Uses available information more fully than criteria based on limiting payoffs • Better than decisions under uncertainty if • several decisions are made (even if actions, scenarios, payoffs and rankings change) • number of scenarios is large (because standard methods ignore intermediate payoffs)

Extreme expected payoffs • Focusing on maximin expected payoff is more conservative than traditional maximin • Focusing on maximax expected payoff is more optimistic than the old maximax • Focusing on minimax expected regret will have less regret than using minimax regret