Solving Linear Systems (Numerical Recipes, Chap 2)

370 likes | 896 Vues

Solving Linear Systems (Numerical Recipes, Chap 2). Jehee Lee Seoul National University. A System of Linear Equations. Consider a matrix equation There are many different methods for solving this problem We will not discuss how to solve it precisely

Solving Linear Systems (Numerical Recipes, Chap 2)

E N D

Presentation Transcript

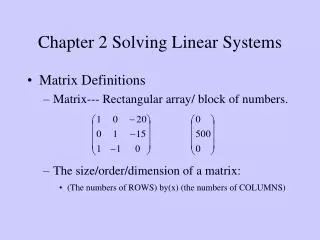

Solving Linear Systems(Numerical Recipes, Chap 2) Jehee Lee Seoul National University

A System of Linear Equations • Consider a matrix equation • There are many different methods for solving this problem • We will not discuss how to solve it precisely • We will discuss which method to choose for a given problem

What to Consider • Size • Most PCs are now sold with a memory of 1GB to 4GB • The 1GB memory can accommodate only a single 10,000x10,000 matrix • 10,000*10,000*8(byte) = 800(MB) • Computing the inverse of A requires additional 800 MB • In real applications, we have to deal with much bigger matrices

What to Consider • Sparse vs. Dense • Many of linear systems have a matrix A in which almost all the elements are zero • Both the representation matrix and the solution method are affected • Sparse matrix representation by linked lists • There exist special algorithms designated to sparse matrices • Matrix multiplication, linear system solvers

What to Consider • Special properties • Symmetric • Tridiagonal • Banded • Positive definite

What to Consider • Singularity (det A=0) • Homogeneous systems • There exist non-zero solutions • Non-homogeneous systems • Multiple solutions, or • No solution

What to Consider • Underdetermined • Effectively fewer equations than unknowns • A square singular matrix • # of rows < # of columns • Overdetermined • More equations than unknowns • # of rows > # of columns

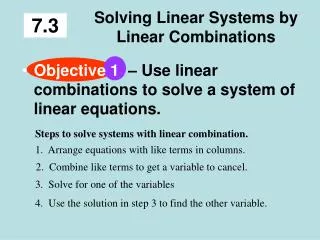

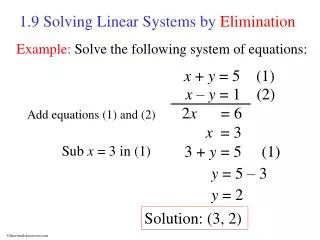

Solution Methods • Direct Methods • Terminate within a finite number of steps • End with the exact solution • provided that all arithmetic operations are exact • Ex) Gauss elimination • Iterative Methods • Produce a sequence of approximations which hopefully converge to the solution • Commonly used with large sparse systems

Gauss Elimination • Reduce a matrix A to a triangular matrix • Back substitution

Gauss Elimination • Reduce a matrix A to a triangular matrix • Back substitution

Gauss Elimination • Reduce a matrix A to a triangular matrix • Back substitution

Gauss Elimination • Reduce a matrix A to a triangular matrix • Back substitution

Gauss Elimination • Reduce a matrix A to a triangular matrix • Back substitution • O(N^3) computation • All right-hand sides must be known in advance

What does the right-hand sides mean ? • For example, interpolation with a polynomial of degree two

LU Decomposition • Decompose a matrix A as a product of lower and upper triangular matrices

LU Decomposition • Forward and Backward substitution

LU Decomposition • It is straight forward to find the determinant and the inverse of A • LU decomposition is very efficient with tridiagonal and band diagonal systems • LU decomposition and forward/backward substitution takes O(k²N) operations, where k is the band width

Cholesky Decomposition • Suppose a matrix A is • Symmetric • Positive definite • We can find • Extremely stable numerically

QR Decomposition • Decompose A such that • R is upper triangular • Q is orthogonal • Generally, QR decomposition is slower than LU decomposition • But, QR decomposition is useful for solving • The least squares problem, and • A series of problems where A varies according to

Jacobi Iteration • Decompose a matrix A into three matrices • Fixed-point iteration • It converges if A is strictly diagonally dominant Upper triangular with zero diagonal Lower triangular with zero diagonal Identity matrix

Gauss-Seidel Iteration • Make use of “up-to-date” information • It converges if A is strictly diagonally dominant • Usually faster than Jacobi iteration. • There exist systems for which Jacobi converges, yet Gauss-Seidel doesn’t

Conjugate Gradient Method • Function minimization • If A is symmetric and positive definite, it converges in N steps (it is a direct method) • Otherwise, it is used as an iterative method • Well suited for large sparse systems

Singular Value Decomposition • Decompose a matrix A with M rows and N columns (M>=N) Diagonal Orthogonal Column Orthogonal

SVD for a Square Matrix • If A is non-singular • If A is singular • Some of singular values are zero

Linear Transform • A nonsingular matrix A

Null Space and Range • Consider as a linear mapping from the vector space x to the vector space b • The range of A is the subspace of b that can be “reached” by A • The null space of A is some subspace of x that is mapped to zero • The rank of A = the dimension of the range of A • The nullity of A = the dimension of the null space of A Rank + Nullity = N

Null Space and Range • A singular matrix A (nullity>1)

Null Space and Range • The columns of U whose corresponding singular values are nonzero are an orthonormal set of basis vectors of the range • The columns of V whose corresponding singular values are zero are an orthonormal set of basis vectors of the null space

SVD for Underdetermined Problem • If A is singular and b is in the range, the linear system has multiple solutions • We might want to pick the one with the smallest length • Replace by zero if

SVD for Overdetermined Problems • If A is singular and b is not in the range, the linear system has no solution • We can get the least squares solution that minimize the residual • Replace by zero if

SVD Summary • SVD is very robust when A is singular or near-singular • Underdetermined and overdetermined systems can be handled uniformly • But, SVD is not an efficient solution

(Moore-Penrose) Pseudoinverse • Overdetermined systems • Underdetermined systems

(Moore-Penrose) Pseudoinverse • Sometimes, is singular or near-singular • SVD is a powerful tool, but slow for computing pseudoinverse • QR decomposition is standard in libraries Rotation Back substitution

Summary • The structure of linear systems is well-understood. • If you can formulate your problem as a linear system, you can easily anticipate the performance and stability of the solution