SciDAC Software Introduction

DOE Workshop for Industry Software Developers, March 31, 2011. SciDAC Software Introduction. Osni Marques, Lawrence Berkeley National Laboratory, OAMarques@lbl.gov. Scientific Discovery through Advanced Computing (SciDAC).

Presentation Transcript

DOE Workshop for Industry Software Developers, March 31, 2011
SciDAC Software Introduction
Osni Marques, Lawrence Berkeley National Laboratory, OAMarques@lbl.gov

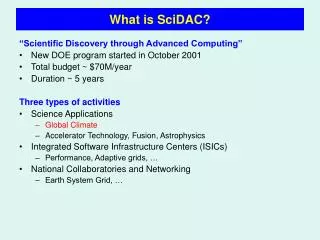

Scientific Discovery through Advanced Computing (SciDAC)
• Create comprehensive scientific computing software infrastructure to enable scientific discovery in the physical, biological, and environmental sciences at the petascale
• Develop a new generation of data management and knowledge discovery tools for large data sets (obtained from users and simulations)
• 35 projects: 9 centers, 4 institutes, 19 efforts in 12 application areas (Astrophysics, Accelerator Science, Climate, Biology, Fusion, Petabyte data, Materials & Chemistry, Nuclear physics, High Energy physics, QCD, Turbulence, Groundwater)

Centers for Enabling Technologies and Institutes
• Centers: interconnected multidisciplinary teams that are coordinated with scientific applications; development of a comprehensive, integrated, scalable, and robust high performance software environment (e.g. algorithms, operating systems, tools for data management and visualization of petabyte-scale scientific data sets) to enable the effective use of terascale and petascale resources
• Institutes: university-led centers of excellence intended to increase the presence of the SciDAC program in the academic community and to complement the efforts of the Centers

Software Stack: SciDAC Centers and Institutes
[Figure: software stack with layers labeled APPLICATIONS, GENERAL PURPOSE TOOLS, SUPPORT TOOLS AND UTILITIES, and HARDWARE]

Applied Mathematics
• Towards Optimal Petascale Simulation (TOPS, http://www.scalablesolvers.org) Center: development of scalable solver software (linear systems and eigenvalue problems)
• Applied Partial Differential Equations Center (APDEC, https://hpcrd.lbl.gov/APDEC): algorithms and software components for the solution of PDEs in complex multicomponent physical systems
• Combinatorial Scientific Computing and Petascale Simulations (CSCAPES, http://www.cscapes.org) Institute: development and deployment of algorithms and software tools for tackling combinatorial problems in scientific computing
• Interoperable Technologies for Advanced Petascale Simulations (ITAPS, http://www.itaps-scidac.org) Center: interoperable and interchangeable mesh, geometry, and field manipulation services

Applied Mathematics: software (partial list)
• PETSc: solution of PDEs that require the solution of large-scale, sparse linear and nonlinear systems of equations
• Hypre: iterative solution of large sparse linear systems of equations using scalable preconditioners
• Trilinos: algorithms and enabling technologies within an object-oriented software framework for the solution of large-scale, complex multi-physics problems
• TAO: solution of large-scale nonlinear optimization problems
• SuperLU: direct solution of large, sparse, nonsymmetric systems of linear equations
• Zoltan: parallel partitioning, load balancing, and data management services
• Chombo and Boxlib: C++ class libraries (with Fortran interfaces and computational kernels) for parallel calculations over block-structured, adaptively refined grids

Example of usage: hypre problem domain oriented

Example of usage: SuperLU
• SuperLU solves Ax=b through a factorization A=L*U (with appropriate manipulations of A for reduced storage, performance, and stability)
• Because of its performance advantage and ease of use, it has a large user base worldwide, with nearly 10,000 downloads in FY10
• It has been used in a variety of commercial applications, including Walt Disney Feature Animation, airplane design (e.g. Boeing), the oil industry (e.g. Chevron), circuit simulation in the semiconductor industry, earthquake simulation and prediction, economic modeling, design of novel materials, and the study of alternative energy sources
• It has been adopted in many academic and laboratory high performance computing libraries and simulation codes (e.g. PETSc, Hypre, FEAP, M3D-C1, Omega3P, OpenSees, NIKE, NIMROD, PHOENIX, and Trilinos)
• It has been adopted in many computer vendors' mathematical libraries and commercial software (e.g. Cray's LibSci, HP's MathLib, IMSL, NAG, Optima Numerics, and SciPy)
(courtesy of Sherry Li)
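
The factor-then-solve idea behind Ax=b with A=L*U can be sketched in a few lines. This is only a small dense illustration with partial pivoting, written for this transcript; it is not SuperLU's sparse algorithm or its API, and the function names `lu_factor` and `lu_solve` are hypothetical.

```python
def lu_factor(a):
    """Factor a square matrix so that P*A = L*U (dense, partial pivoting).

    Returns (lu, perm): lu holds U on and above the diagonal and the
    multipliers of the unit lower-triangular L below it; perm[i] is the
    original index of the row that ended up in position i.
    """
    n = len(a)
    lu = [row[:] for row in a]  # work on a copy
    perm = list(range(n))
    for k in range(n):
        # Partial pivoting: bring the largest entry of column k to the diagonal.
        p = max(range(k, n), key=lambda i: abs(lu[i][k]))
        if lu[p][k] == 0.0:
            raise ValueError("matrix is singular")
        if p != k:
            lu[k], lu[p] = lu[p], lu[k]
            perm[k], perm[p] = perm[p], perm[k]
        # Eliminate column k below the diagonal, storing the multipliers.
        for i in range(k + 1, n):
            lu[i][k] /= lu[k][k]
            for j in range(k + 1, n):
                lu[i][j] -= lu[i][k] * lu[k][j]
    return lu, perm


def lu_solve(lu, perm, b):
    """Solve A x = b using the factors returned by lu_factor."""
    n = len(lu)
    # Forward substitution: L y = P b.
    y = [0.0] * n
    for i in range(n):
        y[i] = b[perm[i]] - sum(lu[i][j] * y[j] for j in range(i))
    # Back substitution: U x = y.
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(lu[i][j] * x[j] for j in range(i + 1, n))) / lu[i][i]
    return x


# A small nonsymmetric system whose exact solution is [1, 2, 3].
A = [[0.0, 2.0, 1.0],
     [1.0, 1.0, 0.0],
     [3.0, 0.0, 1.0]]
lu, perm = lu_factor(A)
x = lu_solve(lu, perm, [7.0, 3.0, 6.0])
print(x)
```

Once the factors are computed they can be reused for any number of right-hand sides, which is the main practical benefit of a direct solver. For real sparse problems one would call SuperLU itself, for instance through SciPy's `scipy.sparse.linalg.splu`, which wraps it.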

Computer Science and Visualization (1/2)
• Center for Scalable Application Development Software (CScADS, http://CScADS.rice.edu): development of systems software, libraries, compilers, and tools for leadership computing platforms
• The Performance Evaluation Research Institute (PERI, http://www.peri-scidac.org): development of models to accurately predict code performance; development of tools for performance monitoring, modeling and optimization; development of technology for automatic performance tuning
• Scientific Data Management (SDM, https://sdm.lbl.gov): development of advanced data management technologies for storage efficient access, data mining and analysis and scientific process automation (workflows)
• Petascale Data Storage Institute (PDSI, http://www.pdsi-scidac.org): development of high performance storage solutions (e.g. capacity, performance, concurrency, reliability) for large scale computer simulations

Computer Science and Visualization (2/2)
• Institute for Ultrascale Visualization (IUSV, http://iusv.ucdavis.edu): development and promotion of cutting edge visualization technologies for the analysis and evaluation of the vast amount of complex data produced by large scientific simulation applications
• Visualization and Analytics Center for Enabling Technology (VACET, http://www.vacet.org): leveraging of scientific visualization and analytics software technology as an enabling technology for increasing scientific productivity and insight, focusing on the challenges posed by vast collections of complex data
• Center for Technology for Advanced Scientific Component Software (TASCS, http://tascs-scidac.org): development of a common component architecture (CCA) designed for high-performance scientific computing with support for parallel and distributed computing, mixed language programming and data types

Computer Science and Visualization: software (partial list)
• ZeptoOS: operating systems and run-time systems for petascale computers
• PLASMA: parallel linear algebra for multicore architectures
• DynInst: binary rewriter, interfaces for runtime code generation
• VisIt: scalable techniques for the visualization of large data sets, feature detection, analysis and tracking
• ParaView: open‐source, scalable, multiplatform visualization tool
• CUDPP and DCGN: libraries for general-purpose computing on GPUs
• FastBit: compressed bitmap indexing technology to accelerate analysis of very large datasets and to perform query-driven visualization
• VisTrails: workflow system for data exploration and visualization
• HPCToolkit: tools for node-based performance analysis
• Jumpshot: visualization tools for analysis of performance of MPI programs
• LoopTool: tool for source-to-source transformations to improve data reuse

Example of usage: IUSV technologies
[Figure: close‐up view of turbulent flame surfaces]
Following the natural coordinate system of a flame, the level‐set distance function‐based adaptive data reduction algorithms enable us to zoom in and see, for the first time, the interaction of small turbulent eddies with the preheat layer of a turbulent flame, a region that was previously obscured by the multiscale nature of turbulence. (Jackie Chen, SNL)

Example of usage: VACET technologies
• New visualization infrastructure for icosahedral grids
• Infrastructure for the visualization and analysis of ensemble runs of new global cloud models
(courtesy of the VACET Team)

Example of usage: FastBit
Searching for regions that satisfy particular criteria is a challenge, but FastBit efficiently finds regions of interest:
• Cell identification: identify all cells that satisfy user-specified conditions, for example “600 < Temperature < 700 AND HO2 concentration > 10^-7”
• Region growing: connect neighboring cells into regions
• Region tracking: track the evolution of the features through time
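
The first two steps above can be sketched with a toy bitmap index. This is a minimal illustration under stated assumptions, not FastBit's implementation or API: bitmaps are plain uncompressed Python integers (FastBit compresses them), the bin edges and the 3x4 grid of sample values are invented, and all function names are hypothetical.

```python
import bisect
from collections import deque


def build_index(values, edges):
    """One bitmap per bin; bit i is set when values[i] falls in that bin.

    Bin k holds edges[k-1] <= v < edges[k]; the first and last bins are open-ended.
    """
    bitmaps = [0] * (len(edges) + 1)
    for cell, v in enumerate(values):
        bitmaps[bisect.bisect_right(edges, v)] |= 1 << cell
    return bitmaps


def set_bits(bitmap):
    """Yield the positions of the set bits in ascending order."""
    cell = 0
    while bitmap:
        if bitmap & 1:
            yield cell
        bitmap >>= 1
        cell += 1


def query_range(bitmaps, edges, values, lo, hi):
    """Bitmap of cells with lo < value < hi."""
    hits = 0
    for k, bm in enumerate(bitmaps):
        bin_lo = edges[k - 1] if k > 0 else float("-inf")
        bin_hi = edges[k] if k < len(edges) else float("inf")
        if bin_hi <= lo or bin_lo >= hi:
            continue  # bin lies entirely outside the query range
        if lo < bin_lo and bin_hi <= hi:
            hits |= bm  # bin lies entirely inside: no raw data needed
        else:
            # Boundary bin: check the raw values of the candidate cells.
            for cell in set_bits(bm):
                if lo < values[cell] < hi:
                    hits |= 1 << cell
    return hits


def grow_regions(hits, nrows, ncols):
    """Group hit cells (row-major numbering) into 4-connected regions."""
    seen, regions = 0, []
    for start in set_bits(hits):
        if (seen >> start) & 1:
            continue
        seen |= 1 << start
        region, queue = [], deque([start])
        while queue:
            cell = queue.popleft()
            region.append(cell)
            r, c = divmod(cell, ncols)
            for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                if 0 <= nr < nrows and 0 <= nc < ncols:
                    n = nr * ncols + nc
                    if (hits >> n) & 1 and not (seen >> n) & 1:
                        seen |= 1 << n
                        queue.append(n)
        regions.append(sorted(region))
    return regions


# Invented sample data on a 3x4 grid, stored row by row.
temperature = [650, 660, 500, 610,
               640, 720, 500, 690,
               500, 500, 500, 650]
ho2 = [2e-7, 3e-7, 1e-6, 5e-7,
       5e-8, 2e-7, 1e-6, 2e-7,
       1e-6, 1e-6, 1e-6, 1e-8]
t_edges, h_edges = [500, 600, 700, 800], [1e-8, 1e-7, 1e-6]
t_index, h_index = build_index(temperature, t_edges), build_index(ho2, h_edges)

# "600 < Temperature < 700 AND HO2 concentration > 10^-7" as one bitwise AND.
hits = (query_range(t_index, t_edges, temperature, 600, 700)
        & query_range(h_index, h_edges, ho2, 1e-7, float("inf")))
regions = grow_regions(hits, nrows=3, ncols=4)
print(list(set_bits(hits)), regions)  # [0, 1, 3, 7] [[0, 1], [3, 7]]
```

The point of the design is that each condition is answered mostly from precomputed bitmaps, and combining conditions costs only a bitwise AND. Region tracking could then compare the regions found at successive time steps, which this sketch does not attempt.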

Code performance (questions for application developers)
• How does performance vary with different compilers?
• Is poor performance correlated with certain OS features?
• Has a recent change caused unanticipated performance?
• How does performance vary with MPI variants?
• Why is one application version faster than another?
• What is the reason for the observed scaling behavior?
• Did two runs exhibit similar performance?
• How are performance data related to application events?
• Which machines will run my code the fastest and why?
• Which benchmarks predict my code performance best?
⁞
(courtesy of S. Shende)

Example of usage: HPCToolkit and LoopTool
[Figure: opportunities for performance improvement in S3D (DNS of turbulent combustion)]
(courtesy of the CScADS Team)

Collaboratories
• Earth Systems Grid Center for Enabling Technologies (ESG-CET, http://esg-pcmdi.llnl.gov): infrastructure to provide climate researchers with access to data, models, analysis tools, and computational resources for studying climate simulation datasets
• Center for Enabling Distributed Petascale Science (CEDPS, http://www.cedps-scidac.org): tools for moving large quantities of data reliably, efficiently, and transparently among institutions connected by high-speed networks

Delivering the Science: Highlighting Scientific Discovery and the Role of High End Computing