Item Development

Item Development. Presented to: Majmaah University. Neil Wilkinson. Creating better test items: the FACTS of good test items. Right expertise: subject matter expertise ensures the test is valid; content development expertise ensures the test is well crafted.

Item Development. Presented to: Majmaah University. Neil Wilkinson.

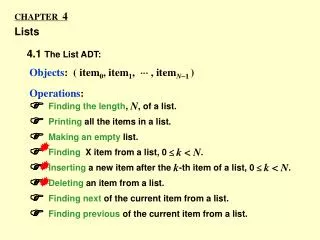

Right Expertise • Subject matter expertise ensures the test is valid. • Content development expertise ensures the test is well crafted. • Psychometric expertise ensures the test is reliable and fair.

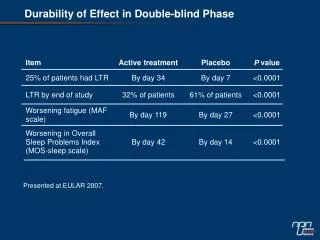

Item performance analysis and feedback. Why analyze items? • The statistical behavior of “bad” items is fundamentally different from that of “good” items. • Analysis provides quality control by indicating which items content experts should review. • Feedback on how items are performing can be used in future item writing efforts, providing continuous quality improvement in the item writing process.
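As a rough sketch of what such an analysis produces, the Python below computes two classical item statistics from a 0/1 scored response matrix: the p-value (the proportion of candidates answering correctly) and a corrected point-biserial discrimination (the correlation between the item score and the total score on the remaining items). The function names and the review thresholds (p-value outside 0.30 to 0.90, discrimination below 0.20) are illustrative assumptions, not values given in the presentation.

```python
from statistics import mean, pstdev


def point_biserial(item, rest):
    """Pearson correlation between 0/1 item scores and rest-of-test scores."""
    sx, sy = pstdev(item), pstdev(rest)
    if sx == 0 or sy == 0:
        return 0.0  # undefined if every candidate got the item right (or wrong)
    mx, my = mean(item), mean(rest)
    cov = mean((x - mx) * (y - my) for x, y in zip(item, rest))
    return cov / (sx * sy)


def item_statistics(responses):
    """responses: one list of 0/1 item scores per candidate."""
    totals = [sum(row) for row in responses]
    stats = []
    for j in range(len(responses[0])):
        item = [row[j] for row in responses]
        rest = [t - x for t, x in zip(totals, item)]  # total score excluding item j
        stats.append({"item": j, "p_value": mean(item), "r_pb": point_biserial(item, rest)})
    return stats


def flag_for_review(stats, p_min=0.30, p_max=0.90, r_min=0.20):
    """Hypothetical thresholds: flag items that are too hard, too easy, or discriminate poorly."""
    return [s["item"] for s in stats
            if not (p_min <= s["p_value"] <= p_max) or s["r_pb"] < r_min]


# Toy data: 4 candidates x 4 items (real analyses need far larger samples).
responses = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 0, 1],
]
stats = item_statistics(responses)
print(flag_for_review(stats))  # [1, 2, 3]
```

Item 3 here is answered correctly mainly by low scorers, so its discrimination is negative, which is exactly the kind of item content experts would be asked to review.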

Distractor analysis • Look at the discrimination for each distractor: high-scoring candidates should select the correct option. • Look at the p-value for each distractor: low-scoring candidates should select from among the distractors at random.
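A minimal sketch of distractor analysis, assuming each candidate's chosen option and total test score are available. For every option it reports the share of all candidates choosing it (the option's p-value) and an upper-minus-lower discrimination index: the share of the top-scoring group choosing it minus the share of the bottom-scoring group. The 27% group size is a conventional choice and, like the function name, an assumption here. The key should show positive discrimination; a distractor should show zero or negative discrimination.

```python
from collections import Counter


def distractor_analysis(choices, totals, options="ABCD", group_frac=0.27):
    """choices[i]: option chosen by candidate i; totals[i]: candidate i's total score."""
    n = len(choices)
    k = max(1, round(n * group_frac))  # size of the upper and lower groups
    ranked = sorted(range(n), key=lambda i: totals[i])  # lowest scorers first
    lower, upper = ranked[:k], ranked[-k:]
    overall = Counter(choices)
    up = Counter(choices[i] for i in upper)
    low = Counter(choices[i] for i in lower)
    return {
        opt: {
            "p_value": overall[opt] / n,
            "discrimination": (up[opt] - low[opt]) / k,
        }
        for opt in options
    }


# Toy data for one item whose key is "C".
choices = ["C", "C", "C", "A", "C", "B", "A", "D", "B", "A"]
totals = [9, 8, 8, 7, 6, 5, 4, 3, 2, 1]
for opt, row in distractor_analysis(choices, totals).items():
    print(opt, row)
```

Ties in total score at the group boundaries are broken by input order in this sketch; a fuller implementation might handle them differently.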

Feedback into the item development process • Sharing performance statistics with the authors of individual items may be problematic because of the time between authoring and use, but item performance statistics can still inform the item development process. • Share and discuss item performance and the reasons for poorly performing items. • Show examples of good, average, and poorly performing items.

Efficient Tools • Item Bank: a repository of items with their associated metadata and historical record.

Efficient Tools • Item Bank – Data • The item itself • Item author • Item type • Content area reference from test blueprint • Cognitive level • Revision history • Item status • Performance statistics
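One way to picture an item bank record holding the data listed above is a small Python data class. This is a sketch under assumptions: the field names, the status values, and the shape of the revision history are hypothetical, not taken from any particular item banking product.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class ItemStatus(Enum):  # hypothetical workflow states
    DRAFT = "draft"
    IN_REVIEW = "in review"
    ACTIVE = "active"
    RETIRED = "retired"


@dataclass
class Revision:
    when: date
    editor: str
    note: str


@dataclass
class ItemRecord:
    item_id: str
    content: str          # the item itself (stem, options, key)
    author: str
    item_type: str        # e.g. "multiple-choice"
    blueprint_ref: str    # content area reference from the test blueprint
    cognitive_level: str  # e.g. "recall" or "application"
    status: ItemStatus = ItemStatus.DRAFT
    revisions: list = field(default_factory=list)  # Revision objects, oldest first
    stats: dict = field(default_factory=dict)      # performance statistics, e.g. {"p_value": 0.62}

    def revise(self, when, editor, note, new_content=None):
        """Record a revision, optionally replacing the item text."""
        if new_content is not None:
            self.content = new_content
        self.revisions.append(Revision(when, editor, note))


item = ItemRecord("Q-0001", "Which city has been awarded the 2016 Summer Olympics? ...",
                  "A. Author", "multiple-choice", "2.1", "recall")
item.revise(date(2024, 3, 1), "Editor B", "Style edit")
item.status = ItemStatus.IN_REVIEW
```

Keeping the revision history as an append-only list means the bank retains the historical record rather than overwriting it.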

Efficient Tools • Item Bank – Features • Item authoring: remote item authoring; support of multiple item types • Item banking: search capabilities; import/export capabilities; batch editing capabilities • Test construction: test assembly; export features • Ancillary features: security and access; workflow management; project tracking
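The search, batch editing, and export capabilities can be sketched over a plain list of dictionaries. The metadata keys (`blueprint_ref`, `status`, and so on) and the JSON export format are assumptions for illustration; real item banking systems define their own schemas and interchange formats.

```python
import json


def search(bank, /, **criteria):
    """Return the items whose metadata match every given field exactly."""
    return [item for item in bank if all(item.get(k) == v for k, v in criteria.items())]


def batch_edit(bank, updates, /, **criteria):
    """Apply the same metadata updates to every matching item; return the number changed."""
    matches = search(bank, **criteria)
    for item in matches:
        item.update(updates)
    return len(matches)


def export_json(items):
    """Serialize items for exchange with another system (format is hypothetical)."""
    return json.dumps(items, indent=2, sort_keys=True)


# Hypothetical metadata keys and values.
bank = [
    {"id": "Q-0001", "blueprint_ref": "2.1", "status": "active", "author": "A"},
    {"id": "Q-0002", "blueprint_ref": "2.1", "status": "active", "author": "B"},
    {"id": "Q-0003", "blueprint_ref": "3.4", "status": "draft", "author": "A"},
]
changed = batch_edit(bank, {"status": "retired"}, blueprint_ref="2.1")
print(changed, [i["id"] for i in search(bank, status="retired")])  # 2 ['Q-0001', 'Q-0002']
print(export_json(search(bank, author="A")))
```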

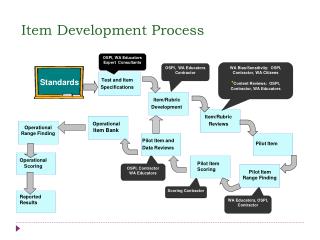

Effective Item Development • Guidelines for an effective item development process • An effective item development process begins well before the first test item is written, with the development of test blueprints.
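Once a blueprint exists, its content weights determine how many items each area needs on a form. A minimal sketch, assuming the blueprint is expressed as relative weights per content area; it uses largest-remainder rounding (a swapped-in choice, not something the presentation specifies) so that the per-area counts always add up to the test length. The area names are hypothetical.

```python
import math


def allocate_items(blueprint, test_length):
    """blueprint: {content_area: weight}; weights are relative and need not sum to 1."""
    total_weight = sum(blueprint.values())
    quotas = {area: test_length * w / total_weight for area, w in blueprint.items()}
    counts = {area: math.floor(q) for area, q in quotas.items()}
    shortfall = test_length - sum(counts.values())
    # Hand the leftover items to the areas with the largest fractional remainders.
    by_remainder = sorted(quotas, key=lambda a: quotas[a] - counts[a], reverse=True)
    for area in by_remainder[:shortfall]:
        counts[area] += 1
    return counts


print(allocate_items({"Area A": 50, "Area B": 30, "Area C": 20}, 33))
# {'Area A': 16, 'Area B': 10, 'Area C': 7}
```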

Effective Item Development • Guidelines for an effective item development process • Carefully consider the associated information that is required and stored for each item.

Effective Item Development • Guidelines for an effective item development process • Recognize that item development requires more than subject matter expertise.

Effective Item Development • Guidelines for an effective item development process • Item authors require training in item writing.

Effective Item Development • Guidelines for an effective item development process • Item development requires more than authoring; sound review processes are also essential.

Effective Item Development • Guidelines for an effective item development process • Style and grammar guidelines and review processes are necessary to ensure items are testing what they are meant to test.

Effective Item Development • Guidelines for an effective item development process • Use item performance analysis to guide further review of the items and to provide feedback to item authors.

What Makes a Content-Appropriate Item • Reflects specific content • Able to be classified on the test blueprint • Does not contain trivial content • Independent of other items • Not a trick question • Vocabulary suitable for the candidate population • Not opinion-based. Source: Haladyna, T.M. (1996). Writing Test Items to Evaluate Higher Order Thinking. Boston, USA: Allyn & Bacon.

Anatomy of a Multiple-Choice Item • Stem: Which city has been awarded the 2016 Summer Olympics? • Answer options: Chicago, Madrid, Rio de Janeiro, Tokyo • Key: Rio de Janeiro • Distractors: Chicago, Madrid, Tokyo
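The same anatomy can be expressed as a small data structure in which the distractors are derived from the answer options and the key rather than stored separately. A sketch in Python; the class name and the two validity checks are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MultipleChoiceItem:
    stem: str
    options: tuple  # all answer options, in display order
    key: str        # the correct answer option

    def __post_init__(self):
        if len(set(self.options)) != len(self.options):
            raise ValueError("answer options must be distinct")
        if self.key not in self.options:
            raise ValueError("the key must be one of the answer options")

    @property
    def distractors(self):
        """Every answer option other than the key."""
        return tuple(o for o in self.options if o != self.key)


item = MultipleChoiceItem(
    stem="Which city has been awarded the 2016 Summer Olympics?",
    options=("Chicago", "Madrid", "Rio de Janeiro", "Tokyo"),
    key="Rio de Janeiro",
)
print(item.distractors)  # ('Chicago', 'Madrid', 'Tokyo')
```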

Not Opinion-Based • Avoid the use of opinion-based material in items. • Poor Stem: Which is the best football team in the world? a. Barcelona b. Manchester United c. Bayern Munich d. Atlético Madrid • Better Stem: Which football team won the European championship in 1999?

Guidelines for Writing the Stem • Place most of the phrasing in the stem. • Poor Stem: Type II diabetes is a. also called juvenile-onset. b. characterised by insulin dependency. c. primarily seen in adults over 40. d. often managed by drug therapy. • Better Stem: What is the most frequent age of onset for Type II diabetes?

Guidelines for Writing the Stem • Avoid excessive, unnecessary wording. • Poor Stem: Certified individuals are required to undertake continuing education. What is the minimum number of hours required in a two-year period? a. 10 b. 15 c. 20 d. 25 • Better Stem: How many hours of continuing education must a certified individual take in a two-year period?

Guidelines for Writing the Stem • Clearly describe the problem or what is being asked of the candidate. • Poor Stem: Continuing education is a. required every two years. b. important to life-long learning. c. an optional activity. d. undertaken at certified centers. • Better Stem: How many hours of continuing education is a certified individual required to undertake in a 5-year cycle?

Guidelines for the Answer Options • Maintain similarity in the answer options. • Keep the answer options relatively equal in length. • Keep all answer options grammatically consistent with the stem and parallel in structure. • Avoid cueing the right answer. • Avoid words such as “always” and “never”. • Avoid repetitive wording in the answer options. • Vary the location of the key. • Place answer options in logical order.
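Some of these guidelines can be partly checked automatically before human review. The sketch below flags widely varying option lengths, the absolute terms “always” and “never”, options that all begin with the same word (a crude signal of repetitive wording that could move into the stem), and numeric options that are not in ascending order; it also tallies key positions across a form so their spread can be inspected. The heuristics, function names, and the 1.5 length ratio are assumptions, and such checks supplement rather than replace editorial review.

```python
import re
from collections import Counter

ABSOLUTE_TERMS = {"always", "never"}


def option_warnings(options, max_length_ratio=1.5):
    """Heuristic checks on one item's answer options; returns a list of warnings."""
    warnings = []
    lengths = [len(o) for o in options]
    if max(lengths) > max_length_ratio * min(lengths):
        warnings.append("option lengths vary widely")
    for o in options:
        if ABSOLUTE_TERMS & set(re.findall(r"[a-z]+", o.lower())):
            warnings.append(f"absolute term in option {o!r}")
    first_words = {o.split()[0].lower() for o in options if o.split()}
    if len(options) > 1 and len(first_words) == 1:
        warnings.append("all options start with the same word; consider moving it into the stem")
    if all(re.fullmatch(r"-?\d+(\.\d+)?", o.strip()) for o in options):
        numbers = [float(o) for o in options]
        if numbers != sorted(numbers):
            warnings.append("numeric options are not in ascending order")
    return warnings


def key_positions(key_indices):
    """Tally where the key sits across the items of a form (0 = first option)."""
    return Counter(key_indices)


print(option_warnings(["25", "10", "20", "15"]))
print(option_warnings(["is always required", "is never required", "is optional", "is advised"]))
print(key_positions([2, 0, 3, 1, 2, 0]))
```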

Number of Answer Options • Four is most common. • Recent research indicates that three may be sufficient if the two distractors are strong and plausible. • Items rarely have three functional distractors. • “Using more options does little to improve item and test score statistics and typically results in implausible distractors.”* *Rodriguez, M.C. (2005). Three options are optimal for multiple-choice items: A meta-analysis of 80 years of research. Educational Measurement: Issues and Practice, 24(2), 3-13 (p. 11).
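Whether a distractor is “functional” is usually judged from response data. A minimal sketch, assuming a distractor counts as functional when at least 5% of candidates choose it; that cutoff and the function name are adjustable assumptions here, not figures from the presentation.

```python
from collections import Counter


def functional_distractors(choices, key, options="ABCD", min_share=0.05):
    """Return the distractors chosen by at least min_share of candidates (cutoff is an assumption)."""
    counts = Counter(choices)
    n = len(choices)
    return [o for o in options if o != key and counts[o] / n >= min_share]


choices = ["A"] * 60 + ["B"] * 25 + ["C"] * 12 + ["D"] * 3  # toy data; key is "A"
print(functional_distractors(choices, key="A"))  # ['B', 'C']
```

Here only two distractors are functional, so a three-option version of the item, without option D, would be worth considering.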

Ten Steps to an Effective Item Writing Process • Use item bank inventories to direct item writing activities.
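An item bank inventory can direct writing effort by comparing how many usable items each blueprint area has against a target. A sketch under assumptions: items are reduced to (blueprint reference, status) pairs, only “active” items count as usable, and the per-area targets are hypothetical numbers a program would set for itself.

```python
from collections import Counter


def writing_targets(bank, targets):
    """bank: (blueprint_ref, status) pairs; targets: desired number of active items per area.
    Returns the number of new items to commission for each area that falls short."""
    active = Counter(ref for ref, status in bank if status == "active")
    return {area: want - active[area] for area, want in targets.items() if want > active[area]}


# Hypothetical inventory and targets.
bank = ([("1.1", "active")] * 8 + [("1.2", "active")] * 3
        + [("1.2", "retired")] * 4 + [("2.1", "draft")] * 2)
print(writing_targets(bank, {"1.1": 6, "1.2": 10, "2.1": 5}))  # {'1.2': 7, '2.1': 5}
```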

Ten Steps to an Effective Item Writing Process • Provide a training program for item writers.

Ten Steps to an Effective Item Writing Process • Develop a style guide.

Ten Steps to an Effective Item Writing Process • Require all items to be classified according to the test blueprint.

Ten Steps to an Effective Item Writing Process • Ensure all items are validated with current references and that the validations are recorded.
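Recording each validation makes it possible to check both coverage and currency. A minimal sketch, assuming a validation record holds the item ID, the reference used, the validator, and the date; the record layout, the function names, and the three-year age limit are hypothetical policy choices.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Validation:
    item_id: str
    reference: str       # the current reference the item was checked against
    validated_by: str
    validated_on: date


def unvalidated(item_ids, validations):
    """Items with no recorded validation at all."""
    return set(item_ids) - {v.item_id for v in validations}


def stale(validations, today, max_age_days=3 * 365):
    """Item IDs whose most recent validation is older than max_age_days (hypothetical policy)."""
    latest = {}
    for v in validations:
        if v.item_id not in latest or v.validated_on > latest[v.item_id]:
            latest[v.item_id] = v.validated_on
    return sorted(i for i, d in latest.items() if (today - d).days > max_age_days)


records = [
    Validation("Q-0001", "Reference A", "SME 1", date(2019, 1, 10)),
    Validation("Q-0001", "Reference B", "SME 2", date(2024, 2, 1)),
    Validation("Q-0002", "Reference A", "SME 1", date(2018, 6, 1)),
]
print(unvalidated(["Q-0001", "Q-0002", "Q-0003"], records))  # {'Q-0003'}
print(stale(records, today=date(2025, 1, 1)))                 # ['Q-0002']
```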

Ten Steps to an Effective Item Writing Process • Editorially review all items for grammar and style.

Ten Steps to an Effective Item Writing Process • Include an item review process by Subject Matter Experts (SMEs).

Ten Steps to an Effective Item Writing Process • Share acceptance criteria with item writers and item reviewers.

Ten Steps to an Effective Item Writing Process • Use statistical analysis of items to guide further review.

Ten Steps to an Effective Item Writing Process • Provide feedback to item writers.