Distributed Adaptive Multi-Criteria Load Balancing


This study analyzes and simulates an end-to-end distributed adaptive multi-criteria load balancing system to enhance network performance, decrease costs, and improve traffic management. The methodology involves load balancing on critical links, multi-path routing, and multiple criteria routing for efficient traffic distribution. Simulations on a large-scale network are conducted to evaluate network and user-level performance metrics.


Distributed adaptive multi-criteria load balancing: analysis and end-to-end simulation
INFOCOM 2006, Barcelona, April 25-27, 2006
S. RANDRIAMASY (ALCATEL R&I), L. FOURNIE and D. HONG (N2NSOFT)

Context
• Goal
  • Improve best-effort traffic by absorbing local, temporary traffic increases
  • Increase network operation performance at both the core network and end-user levels
  • Decrease network cost by downsizing link dimensioning
• Methodology
  • Load balancing triggered on “critical” links (overloaded or prohibited), based on multi-path and multi-objective routing
  • On-line and distributed on IP routers, associated with OSPF-TE
  • LB-aware link capacity allocation

On-line load-sensitive multi-path routing
[Figure: example topology with routers R1 to R8]
• Default routing = shortest path
  • Requires synchronized flooding of link states
• Triggering: at the source I of any link (I, J) with load > ThLoad
  • Until the target traffic repartition is reached or load < ThLoad
  • Progressive shifting onto alternative paths
  • For any destination to which J is a next hop
• Routing stays in multi-path mode
  • As long as load < ThLoad (and has not yet dropped below ThLoadBack)
• Stopping: when load < ThLoadBack << ThLoad
  • Routing progressively reverts from multi-path to the default single path
• DMLB is triggered before congestion: ThLoad in [80%, 90%]
• Can also be triggered and stopped on operator request
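The trigger/stop behaviour described on this slide amounts to a hysteresis between the two thresholds. A minimal sketch follows, assuming a trigger threshold of 85% (inside the quoted range) and a purely hypothetical ThLoadBack of 50%; the class name, the action labels and the instantaneous mode switch are illustrative simplifications, since the slides describe the shifting and the reversal as progressive.

```python
from dataclasses import dataclass


@dataclass
class LinkLoadBalancer:
    """Hysteresis between single-path and multi-path mode for one link."""
    th_load: float = 0.85       # assumed value inside the [80%, 90%] range
    th_load_back: float = 0.50  # hypothetical value, well below th_load
    multipath: bool = False

    def update(self, load: float) -> str:
        """Return the action for one load measurement (0.0 to 1.0)."""
        if load > self.th_load:
            self.multipath = True
            return "shift"    # move more traffic onto alternative paths
        if self.multipath and load < self.th_load_back:
            self.multipath = False
            return "revert"   # start going back to the default single path
        # Between the two thresholds the current mode is kept as it is.
        return "hold" if self.multipath else "default"
```

Feeding the loads 0.6, 0.9, 0.7, 0.4, 0.6 into one instance gives default, shift, hold, revert, default: the link stays in multi-path mode at 0.7 even though that is below ThLoad, and only leaves it once the load falls under ThLoadBack.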

Routing with Multiple Criteria (RMC)
• Path cost = vector Z = (z1, z2, z3, z4)
  • Available bandwidth (MAX-MIN)
  • Number of hops (MIN)
  • Transit delay (MIN)
  • Administrative cost (MIN)
• Step 1: extraction of all Pareto-optimal paths
• Step 2: path rating w.r.t. distance to the ideal path
• Step 3: path ranking and selection of the K best ones
• Output of RMC for each destination: set of K efficient paths = input to multi-path routing
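The three RMC steps can be sketched on a handful of candidate paths. The slides do not say how the distance to the ideal path is measured, so the normalised Euclidean distance below is an assumption, as are the function names and the sample path vectors.

```python
import math

# Each path cost is Z = (bandwidth, hops, delay, admin_cost).
# Bandwidth is maximised; the other three criteria are minimised.


def _as_min(z):
    """Negate bandwidth so that every criterion is 'smaller is better'."""
    return (-z[0], z[1], z[2], z[3])


def dominates(a, b):
    """True if a is no worse than b everywhere and strictly better somewhere."""
    a, b = _as_min(a), _as_min(b)
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_optimal(paths):
    """Step 1: keep the paths that no other path dominates."""
    return {n: z for n, z in paths.items()
            if not any(dominates(o, z) for m, o in paths.items() if m != n)}


def k_best(paths, k):
    """Steps 2 and 3: rate by distance to the ideal point, keep the k closest."""
    front = pareto_optimal(paths)
    vecs = [_as_min(z) for z in front.values()]
    lo = [min(c) for c in zip(*vecs)]   # ideal value per criterion
    hi = [max(c) for c in zip(*vecs)]   # worst value per criterion

    def distance(z):
        # Assumed metric: Euclidean distance after scaling each axis to [0, 1].
        return math.sqrt(sum(((v - l) / (h - l)) ** 2 if h > l else 0.0
                             for v, l, h in zip(_as_min(z), lo, hi)))

    return sorted(front, key=lambda n: distance(front[n]))[:k]


# Hypothetical candidate paths towards one destination.
paths = {
    "P1": (100, 3, 20, 5),
    "P2": (80, 2, 15, 4),
    "P3": (60, 4, 30, 6),   # dominated by P1 on all four criteria
    "P4": (120, 5, 40, 8),
}
print(k_best(paths, 2))  # ['P1', 'P2']
```

P3 is dropped in step 1, and P4 ranks last because it is the worst path on three of the four criteria despite its bandwidth.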

Unequal flow distribution & progressive shifting
[Figure: the hash H(S, D, pS, pD, pID) of the packet IP header is mapped onto 100 flow bins shared among three next hops: NH1 with Cost(P1) = 80 and TTS1 = 20%, NH2 with Cost(P2) = 60 and TTS2 = 27%, NH3 with Cost(P3) = 30 and TTS3 = 53%. TBM flow bins are picked at random per iteration.]
• Hashing on flow attributes: IP source and destination, source and destination port, protocol ID
• Flows are mapped into M “flow bins” (default = 100)
• Repartition of bins among paths: “Target Traffic Share” (TTS) reflecting their cost
• Every 10 s, TBM flow bins (5 or 10) are shifted away from the overloaded path
• Random picking of deviated bins: equity between flows
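The figure's numbers are consistent with target shares that are inversely proportional to path cost (costs 80, 60 and 30 give about 20%, 27% and 53%), although the slides only say that the shares reflect the cost. The sketch below rests on that assumption; CRC32 stands in for the unspecified hash H, and the function names and the rule for choosing the receiving next hop are hypothetical.

```python
import random
import zlib

M_BINS = 100  # default number of flow bins given in the slides


def flow_bin(src, dst, sport, dport, proto, m=M_BINS):
    """Hash the flow attributes into a bin; CRC32 is a stand-in for H."""
    key = f"{src}|{dst}|{sport}|{dport}|{proto}".encode()
    return zlib.crc32(key) % m


def target_shares(costs):
    """Target traffic share per next hop, assumed proportional to 1/cost."""
    inv = {nh: 1.0 / c for nh, c in costs.items()}
    total = sum(inv.values())
    return {nh: w / total for nh, w in inv.items()}


def shift_bins(assignment, overloaded, shares, tbm=5, rng=random):
    """One iteration: move up to tbm random bins off the overloaded next hop."""
    m = len(assignment)

    def count(nh):
        return sum(1 for v in assignment.values() if v == nh)

    # Stop shifting once the overloaded next hop is down to its target share.
    excess = count(overloaded) - round(shares[overloaded] * m)
    mine = [b for b, nh in assignment.items() if nh == overloaded]
    others = [nh for nh in shares if nh != overloaded]
    for b in rng.sample(mine, max(0, min(tbm, excess))):
        # Hypothetical rule: give the bin to the next hop furthest below target.
        assignment[b] = max(others, key=lambda nh: shares[nh] * m - count(nh))
    return assignment


shares = target_shares({"NH1": 80, "NH2": 60, "NH3": 30})
assignment = {b: "NH3" for b in range(M_BINS)}  # everything on the default path
for _ in range(10):                             # ten iterations, e.g. 10 s apart
    shift_bins(assignment, "NH3", shares, tbm=5)
```

Starting with all 100 bins on NH3, ten iterations with TBM = 5 leave 20 bins on NH1, 27 on NH2 and 53 on NH3. Because whole bins are moved, all packets of a flow keep following the same path between two iterations.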

Multiple distributed multi-path routing decisions
[Figure: topology with routers R1 to R8. Incoherent routing decisions: S chooses path S-A-D, whereas A prefers path A-B-D.]
• Routers can have different views of the network state
• Several routers may compete to route the same flows differently
• Ambiguity on R3 towards R5
  • R1 advises R4 as the next hop
  • R7 advises R8 as the next hop

Coherency of multi-path routing decisions
[Figure: routers B, F and C with flow-bin ranges [71, 100], [71, 85] and [85, 100], illustrating a loop]
• Downstream multi-path routing (MPR) advertisement
  • A router R in DMLB mode sends its decisions to the downstream routers
  • MPR decisions include: sender ID, path ID, set of concerned bins (flow-bin-centered routing decision)
• MPR decisions are re-ordered and processed after the arrival time slot
  • MPR advertisements may arrive out of order w.r.t. their emission date
• Prioritizing of multiple MPR decisions on a flow bin
  • Ranking rule that is global over the routers
  • No ambiguity
  • No loop between downstream MPR routers
• 3D routing, 2D forwarding
  • Forwarding decision depends on: D, hash value
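How a downstream router might reconcile competing MPR decisions can be sketched as follows. The slides only state that the ranking rule is global over the routers, so the rule used here (the lowest sender ID wins) is purely an assumption for illustration, as are the `dest`, `next_hop` and `emitted_at` fields and the function name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MprDecision:
    sender_id: int     # router that took the decision
    path_id: int       # path chosen for the concerned bins
    bins: frozenset    # flow bins concerned by the decision
    dest: str          # hypothetical field: destination the decision applies to
    next_hop: str      # hypothetical field: next hop advised to this router
    emitted_at: float  # hypothetical field: emission date used for re-ordering


def build_forwarding(decisions):
    """Return {(dest, bin): next_hop} with one winning decision per flow bin."""
    table, owner = {}, {}
    # Re-order by emission date, since advertisements may arrive out of order.
    for d in sorted(decisions, key=lambda d: d.emitted_at):
        for b in d.bins:
            key = (d.dest, b)
            # Assumed global ranking rule: the lowest sender ID wins everywhere.
            if key not in owner or d.sender_id <= owner[key]:
                owner[key] = d.sender_id
                table[key] = d.next_hop
    return table


# R1 and R7 give conflicting advice for overlapping bins towards R5.
from_r1 = MprDecision(1, 10, frozenset(range(71, 101)), "R5", "R4", 2.0)
from_r7 = MprDecision(7, 20, frozenset(range(71, 86)), "R5", "R8", 1.0)
table = build_forwarding([from_r1, from_r7])
```

Because every router applies the same rule to the same set of decisions, the result does not depend on arrival order: here all bins 71 to 100 towards R5 end up on next hop R4. The table is keyed by destination and bin only, which matches the statement that the forwarding decision depends on D and the hash value.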

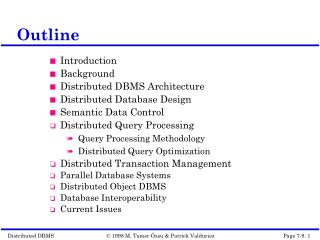

Simulations on European GEANT (2001 topology)
• Large-scale test network: 20 core nodes, 27 links
  • Different link capacities
  • Up to 300,000 terminal nodes (ADSL line, modem, server, LAN)
  • 900,000 flows
  • 100,000 to 3,000,000 parallel sessions
  • 100 to 1000 Tbits transported
• Simulation time: [1000, 7000] s
• Applications: HTTP, P2P, FTP
• Network-level evaluation: network capacity, packet loss, link load balance, optimal congestion threshold/goodput
• User-level evaluation: goodput per application, TCP fairness, RTT, packet loss

Packet losses
• Bit Error Rate (BER): terminal noise, reference for the acceptable loss level
• Core losses > BER
• 28 Gb vs. 43 Gb: +53% supported demand
• Terminal overflow negligible

LB-aware link dimensioning 1/2
• C = mean rate + reserve
  • reserve: absorbs traffic variations and bursts
• When DMLB is available: C*ThLoad = maximum non-critical link load
• Outgoing links mutually absorb their link overload
  • C*ThLoad = m + reserve_MP, with reserve_MP < reserve_SP
• MP routing allocates capacity for outgoing links as if their traffic/demands were aggregated on one single link

LB-aware link dimensioning 2/2
• C(Γ+(E)) = Σ_{L outgoing E} C(L)*ThLoad = F(D(M_agg, S_agg))
• Distribution on links: e.g. proportional to D(L)/D(E)
• Generic model
• Numerical example: M, S same for all demands
  • Guerin model: F(D(M, S)) = M + αS
  • α depends on the overflow probability Pe = 10^-7
  • Traffic mix of German network
  • M = 1 Gbit/s, S = 0.485 Gbit/s, α = 5.916
  • SP-based capacity outgoing E: C_SP(E) = 9.926 Gbit/s
  • MP-based capacity outgoing E: C_MP(E)*ThLoad = 8.855 Gbit/s
  • Gain = 10.8%
  • If 3 demands are shared on 3 links: C_SP(E) = 11.8 Gbit/s, Gain = 25%
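The arithmetic behind the quoted gain can be checked with a few lines. This sketch only evaluates the Guerin formula for a single demand and the relative difference between the two capacities quoted on the slide. It does not try to rebuild those capacities, since the number of demands and links behind them is not given; the function names are illustrative, and `alpha` is the multiplier of S in the model.

```python
def guerin_capacity(mean, std, alpha):
    """Capacity for a demand under the model F(D(M, S)) = M + alpha * S."""
    return mean + alpha * std


def relative_gain(c_sp, c_mp):
    """Fraction of capacity saved by MP-based versus SP-based dimensioning."""
    return (c_sp - c_mp) / c_sp


# Values quoted on the slide, in Gbit/s.
single = guerin_capacity(1.0, 0.485, 5.916)  # one demand on its own link
gain = relative_gain(9.926, 8.855)           # SP-based vs MP-based capacity

print(f"single demand: {single:.3f} Gbit/s")  # single demand: 3.869 Gbit/s
print(f"gain: {gain:.1%}")                    # gain: 10.8%
```

The second print reproduces the 10.8% gain stated on the slide from the two capacities it quotes.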

Conclusion
• Load-sensitive Distributed Multi-Criteria Load Balancing
  • Used “on top” of default standard single/shortest path routing
  • Studied for pure IP; an MPLS implementation is easier
• Enables the network to
  • Balance traffic better
  • Prevent link congestion
  • Route more traffic
  • Allocate less link capacity
• Extensions
  • Generalized “LB-aware” link dimensioning
  • Mobile core networks
  • Contribution to network resiliency after link breakdown