Real Parallel Computers

This overview traces the evolution of parallel computers from the 1970s to the 2010s: vector computers, massively parallel processors (MPPs), clusters built from standard PCs, and finally multi-cores, GPUs, and cloud data centers. It explains how communication performance once separated clusters from supercomputers and how modern networks closed that gap. It then describes the DAS cluster systems of one department and the IBM Blue Gene/L system and its networks in detail.


Presentation Transcript

Background Information
• Recent trends in the marketplace of high performance computing. Strohmaier, Dongarra, Meuer, Simon. Parallel Computing, 2005.

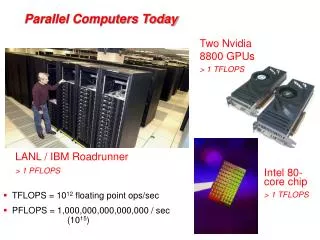

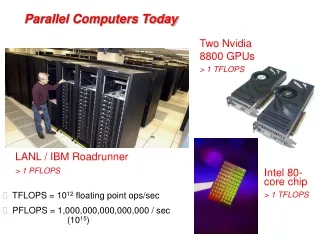

Short history of parallel machines
• 1970s: vector computers
• 1990s: Massively Parallel Processors (MPPs)
  • Standard microprocessors, special network and I/O
• 2000s:
  • Cluster computers (using standard PCs)
  • Advanced architectures (BlueGene)
  • Comeback of vector computers (Japanese Earth Simulator)
  • IBM Cell/BE
• 2010s:
  • Multi-cores, GPUs
  • Cloud data centers

Clusters
• Cluster computing
  • Standard PCs/workstations connected by a fast network
  • Good price/performance ratio
  • Exploit existing (idle) machines or use (new) dedicated machines
• Cluster computers vs. supercomputers (MPPs)
  • Processing power similar: both based on microprocessors
  • Communication performance was the key difference
  • Modern networks (Myrinet, Infiniband, 10G Ethernet) have bridged this gap

Overview
• Cluster computers at our department
  • DAS-1: 128-node Pentium-Pro / Myrinet cluster (gone)
  • DAS-2: 72-node dual-Pentium-III / Myrinet-2000 cluster
  • DAS-3: 85-node dual-core dual Opteron / Myrinet-10G
  • DAS-4: 72-node cluster with accelerators (GPUs etc.)
• Part of a wide-area system:
  • Distributed ASCI Supercomputer

DAS-2 Cluster (2002-2006)
• 72 nodes, each with 2 CPUs (144 CPUs in total)
• 1 GHz Pentium-III
• 1 GB memory per node
• 20 GB disk
• Fast Ethernet 100 Mbit/s
• Myrinet-2000 2 Gbit/s (crossbar)
• Operating system: Red Hat Linux
• Part of wide-area DAS-2 system (5 clusters with 200 nodes in total)
(Figure labels: Ethernet switch, Myrinet switch)

DAS-3 Cluster (Sept. 2006)
• 85 nodes, each with 2 dual-core CPUs (340 cores in total)
• 2.4 GHz AMD Opterons (64 bit)
• 4 GB memory per node
• 250 GB disk
• Gigabit Ethernet
• Myrinet-10G 10 Gb/s (crossbar)
• Operating system: Scientific Linux
• Part of wide-area DAS-3 system (5 clusters; 263 nodes), using SURFnet-6 optical network with 40-80 Gb/s wide-area links

DAS-3 Networks (diagram; labels only)
• Components: 85 compute nodes; headnode (10 TB mass storage); Nortel 5530 + 3 * 5510 Ethernet switch; Myri-10G switch; 10 Gb/s Ethernet blade; Nortel OME 6500 with DWDM blade
• Links: 85 * 1 Gb/s Ethernet; 85 * 10 Gb/s Myrinet; 10 Gb/s Myrinet; 10 Gb/s Ethernet; 8 * 10 Gb/s Ethernet (fiber); 1 or 10 Gb/s campus uplink; 80 Gb/s DWDM to SURFnet6

DAS-3 Networks (figure labels: Myrinet, Nortel)

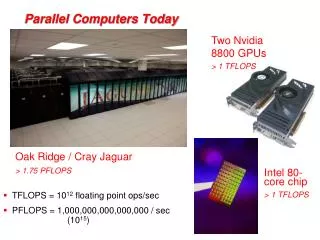

DAS-4 (Sept. 2010)
• 72 nodes (2 quad-core Intel Westmere Xeon E5620, 24 GB memory, 2 TB disk)
• 2 fat nodes with 94 GB memory
• Infiniband network + 1 Gb/s Ethernet
• 16 NVIDIA GTX 480 graphics accelerators (GPUs)
• 2 Tesla C2050 GPUs

DAS-4 performance
• Infiniband network:
  • One-way latency: 1.9 microseconds
  • Throughput: 22 Gbit/s
• CPU performance:
  • 72 nodes (576 cores): 4399.0 GFLOPS
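
The latency and throughput figures above can be combined into a rough estimate of message transfer time. The sketch below assumes a simple linear cost model (time = latency + size / throughput), which the slides do not state; it is a common first approximation, not a measured property of DAS-4. The function names are illustrative only. It also divides the quoted GFLOPS figure over nodes and cores.

```python
# Figures quoted on the DAS-4 performance slide.
LATENCY_S = 1.9e-6        # one-way latency in seconds
THROUGHPUT_BPS = 22e9     # throughput in bits per second
TOTAL_GFLOPS = 4399.0     # quoted for 72 nodes
NODES = 72
CORES = 576


def transfer_time(message_bytes: int) -> float:
    """Estimated one-way time in seconds.

    Assumption (not from the slides): a linear model in which the
    latency is paid once and the payload moves at full throughput.
    """
    return LATENCY_S + (message_bytes * 8) / THROUGHPUT_BPS


def break_even_bytes() -> float:
    """Message size at which transmission time equals the latency."""
    return LATENCY_S * THROUGHPUT_BPS / 8


def gflops_per_core() -> float:
    return TOTAL_GFLOPS / CORES


def gflops_per_node() -> float:
    return TOTAL_GFLOPS / NODES


if __name__ == "__main__":
    for size in (64, 1024, 64 * 1024, 1024 * 1024):
        print(f"{size:>8} bytes: {transfer_time(size) * 1e6:8.2f} microseconds")
    print(f"break-even size: {break_even_bytes():.0f} bytes")
    print(f"per core: {gflops_per_core():.2f} GFLOPS")
    print(f"per node: {gflops_per_node():.2f} GFLOPS")
```

Under this model, messages much smaller than the break-even size are dominated by latency, and larger ones by throughput, which is why both numbers are reported for a cluster network.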

Blue Gene/L System
• Chip: 2 processors; 2.8/5.6 GF/s; 4 MB
• Compute Card: 2 chips (1x2x1); 5.6/11.2 GF/s; 1.0 GB
• Node Card: 32 chips (4x4x2), 16 compute cards, 0-2 I/O cards; 90/180 GF/s; 16 GB
• Rack: 32 Node Cards; 2.8/5.6 TF/s; 512 GB
• System: 64 Racks (64x32x32); 180/360 TF/s; 32 TB
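
The peak figures in the Blue Gene/L packaging hierarchy follow from multiplying the per-chip value up through the levels. The sketch below is a consistency check under that reading of the slide; the slide gives two peak values per level, and the code treats them simply as a low and a high figure without interpreting them. The data layout and function names are illustrative.

```python
# (level name, units of the previous level it contains, quoted peak in GF/s)
LEVELS = [
    ("Chip", 1, (2.8, 5.6)),
    ("Compute card", 2, (5.6, 11.2)),
    ("Node card", 16, (90.0, 180.0)),
    ("Rack", 32, (2800.0, 5600.0)),        # 2.8/5.6 TF/s
    ("System", 64, (180000.0, 360000.0)),  # 180/360 TF/s
]


def derived_peaks(chip_gflops: float) -> list[tuple[str, float]]:
    """Multiply the per-chip peak up through the packaging levels."""
    peaks = []
    value = chip_gflops
    for name, multiplier, _quoted in LEVELS:
        value *= multiplier
        peaks.append((name, value))
    return peaks


def total_chips() -> int:
    """Number of chips in the full system."""
    count = 1
    for _name, multiplier, _quoted in LEVELS:
        count *= multiplier
    return count


if __name__ == "__main__":
    low = derived_peaks(2.8)
    high = derived_peaks(5.6)
    for (name, lo), (_n, hi), (_l, _m, quoted) in zip(low, high, LEVELS):
        print(f"{name:<13} derived {lo:>10.1f}/{hi:>10.1f} GF/s, "
              f"quoted {quoted[0]:>10.1f}/{quoted[1]:>10.1f} GF/s")
    print("chips in system:", total_chips())
```

The derived values differ from the quoted ones only by rounding (for example 89.6 versus 90 GF/s per node card), and the chip count equals 64 x 32 x 32.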

Blue Gene/L Networks
• 3 Dimensional Torus
  • Interconnects all compute nodes (65,536)
  • Virtual cut-through hardware routing
  • 1.4 Gb/s on all 12 node links (2.1 GB/s per node)
  • 1 µs latency between nearest neighbors, 5 µs to the farthest
  • Communications backbone for computations
  • 0.7/1.4 TB/s bisection bandwidth, 68 TB/s total bandwidth
• Global Collective
  • One-to-all broadcast functionality
  • Reduction operations functionality
  • 2.8 Gb/s of bandwidth per link
  • Latency of one-way traversal: 2.5 µs
  • Interconnects all compute and I/O nodes (1024)
• Low Latency Global Barrier and Interrupt
  • Latency of round trip: 1.3 µs
• Ethernet
  • Incorporated into every node ASIC
  • Active in the I/O nodes (1:8-64)
  • All external comm. (file I/O, control, user interaction, etc.)
• Control Network
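
The 3D torus figures above can be reproduced with a little arithmetic. The sketch below is an illustration, not IBM's routing logic: it assumes the 64x32x32 dimensions from the system slide, reads the "12 node links" as 6 neighbours with one link in each direction, and counts each outgoing link once for the total bandwidth. All function names are hypothetical.

```python
DIMS = (64, 32, 32)      # torus dimensions from the system slide
LINK_GBITS = 1.4         # Gb/s per link, as quoted
LINKS_PER_NODE = 12      # assumed: 6 neighbours x 2 directions


def neighbors(x: int, y: int, z: int, dims=DIMS) -> list[tuple[int, int, int]]:
    """The six nearest neighbours of a node, with wrap-around at the edges."""
    result = []
    for axis in range(3):
        for step in (-1, 1):
            coord = [x, y, z]
            coord[axis] = (coord[axis] + step) % dims[axis]
            result.append(tuple(coord))
    return result


def hop_distance(a, b, dims=DIMS) -> int:
    """Minimum number of hops between two nodes on the torus."""
    total = 0
    for ai, bi, size in zip(a, b, dims):
        d = abs(ai - bi)
        total += min(d, size - d)  # wrap-around may be shorter
    return total


def max_hops(dims=DIMS) -> int:
    """Hop count to the farthest node: half of each dimension."""
    return sum(size // 2 for size in dims)


def node_bandwidth_gbytes() -> float:
    """Per-node bandwidth in GB/s over all 12 links."""
    return LINKS_PER_NODE * LINK_GBITS / 8


def total_bandwidth_tbytes(dims=DIMS) -> float:
    """Total in TB/s, counting each node's 6 outgoing links once."""
    nodes = dims[0] * dims[1] * dims[2]
    return nodes * 6 * LINK_GBITS / 8 / 1000


if __name__ == "__main__":
    print("neighbours of (0, 0, 0):", neighbors(0, 0, 0))
    print("max hops:", max_hops())
    print(f"per-node bandwidth: {node_bandwidth_gbytes():.1f} GB/s")
    print(f"total bandwidth: {total_bandwidth_tbytes():.1f} TB/s")
```

With these assumptions the per-node value matches the quoted 2.1 GB/s and the total comes out close to the quoted 68 TB/s. The wrap-around links are what keep the farthest node at 64 hops instead of 125 in a mesh of the same size.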