Sorting

Explore key sorting algorithms such as Insertion Sort, Bubble Sort, Shell Sort, Merge Sort, and Quick Sort. Learn about time complexity, inversions, and the importance of choosing the right pivot. Dive deep into analysis and practical applications in data structures and algorithm theory.

Sorting • What makes it hard? • Chapter 7 in DS&AA • Chapter 8 in DS&PS

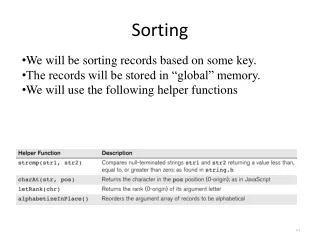

Insertion Sort • Algorithm • Conceptually, add elements one at a time to a sorted array or list, starting with an empty array (list). • Works as an incremental or batch algorithm. • Analysis • In the best case, the input is already sorted: time is O(N). • In the worst case, the input is reverse sorted: time is O(N^2). • The average case (by a loose argument) is O(N^2). • Inversion: a pair of elements that is out of order • The number of inversions is the critical variable for determining the algorithm's time cost. • Each swap of adjacent elements removes exactly 1 inversion.
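
A minimal Java sketch of the insertion sort described above. The class and method names are illustrative, not from the slides. It returns the number of adjacent swaps, which equals the number of inversions in the input.

```java
final class InsertionSortSketch {
    // Sorts a in place by adjacent swaps and returns how many swaps were made.
    static long sort(int[] a) {
        long swaps = 0;
        for (int i = 1; i < a.length; i++) {
            // a[0..i-1] is already sorted; sink a[i] left until it is in place.
            for (int j = i; j > 0 && a[j - 1] > a[j]; j--) {
                int tmp = a[j - 1];
                a[j - 1] = a[j];
                a[j] = tmp;
                swaps++; // each adjacent swap removes exactly one inversion
            }
        }
        return swaps;
    }

    public static void main(String[] args) {
        int[] data = {5, 4, 3, 2, 1}; // reverse sorted: the worst case
        long swaps = sort(data);
        System.out.println(java.util.Arrays.toString(data) + " swaps=" + swaps);
    }
}
```

On an already sorted input the inner loop never runs, which is the O(N) best case. On a reverse-sorted input every pair is an inversion, so the swap count is N*(N-1)/2.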

Inversions • What is the average number of inversions, over all inputs? • Let A be any array of distinct integers. • Let revA be the reverse of A. • Note: if the pair (i,j) is in order in A, it is out of order in revA, and vice versa. • The total number of pairs (i,j) is N*(N-1)/2, so the average number of inversions is N*(N-1)/4, which is Θ(N^2). • Corollary: any algorithm that removes only a single inversion at a time takes Ω(N^2) time on average. • To do better, we need to remove more than one inversion at a time.
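
The pairing argument can be checked on a small example. The sketch below (illustrative names, brute-force counting, distinct elements assumed) counts inversions in an array and in its reverse; the two counts should add up to N*(N-1)/2.

```java
final class InversionCountSketch {
    // Counts pairs (i, j) with i < j and a[i] > a[j] by brute force, O(N^2).
    static long count(int[] a) {
        long inversions = 0;
        for (int i = 0; i < a.length; i++) {
            for (int j = i + 1; j < a.length; j++) {
                if (a[i] > a[j]) inversions++;
            }
        }
        return inversions;
    }

    // Returns a new array holding the elements of a in reverse order.
    static int[] reversed(int[] a) {
        int[] r = new int[a.length];
        for (int i = 0; i < a.length; i++) r[i] = a[a.length - 1 - i];
        return r;
    }

    public static void main(String[] args) {
        int[] a = {3, 1, 4, 2, 5};
        long n = a.length;
        // prints 3 + 7 = 10
        System.out.println(count(a) + " + " + count(reversed(a)) + " = " + n * (n - 1) / 2);
    }
}
```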

BubbleSort • One of the best-known sorting algorithms • Algorithm: • for j = n-1 down to 1 …. O(n) passes • for i = 0 to j-1 ….. O(j) steps per pass • if A[i] and A[i+1] are out of order, swap them (that’s the bubble) …. O(1) • Analysis • Bubblesort is O(n^2) • Appropriate for small arrays • Appropriate for nearly sorted arrays • Comparisons versus swaps?
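
The loop structure above can be sketched in Java as follows. The early exit when a pass makes no swaps is an addition that is not on the slide; it is what makes the algorithm cheap on nearly sorted input.

```java
final class BubbleSortSketch {
    // Sorts a in place; after the pass for a given j, a[j] holds its final value.
    static void sort(int[] a) {
        for (int j = a.length - 1; j >= 1; j--) {
            boolean swapped = false;
            for (int i = 0; i < j; i++) {
                if (a[i] > a[i + 1]) { // out of order: swap (the "bubble")
                    int tmp = a[i];
                    a[i] = a[i + 1];
                    a[i + 1] = tmp;
                    swapped = true;
                }
            }
            if (!swapped) return; // assumption beyond the slide: stop once a pass is clean
        }
    }

    public static void main(String[] args) {
        int[] data = {4, 2, 9, 1, 7};
        sort(data);
        System.out.println(java.util.Arrays.toString(data));
    }
}
```

Note that the inner index stops at j-1, so the comparison of A[i] with A[i+1] never reads past position j.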

Shell Sort: introduced in 1959 by Shell • Motivated by the inversion result: elements need to move far in a single step • Still quadratic in the worst case for simple schedules such as the one below • Seen mostly in textbooks • Of historical and theoretical interest; not fully understood. • Algorithm (with schedule 1, 3, 5): • bubble sort the elements spaced 5 apart • bubble sort the elements 3 apart • bubble sort the elements 1 apart • Faster than insertion sort in practice, but still O(N^2) in the worst case for this kind of schedule • No one knows the best schedule
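
A hedged sketch of the schedule above in Java. One substitution to note: each pass here is a gapped insertion sort, which is the usual way Shell sort is written, rather than the gapped bubble sort named on the slide. The `gaps` parameter is illustrative and must end with 1 for the result to be fully sorted.

```java
final class ShellSortSketch {
    // Sorts a in place using the given gaps in order, e.g. {5, 3, 1}.
    static void sort(int[] a, int[] gaps) {
        for (int gap : gaps) {
            // Gapped insertion sort: every chain a[i], a[i-gap], a[i-2*gap], ... ends up sorted.
            for (int i = gap; i < a.length; i++) {
                int value = a[i];
                int j = i;
                while (j >= gap && a[j - gap] > value) {
                    a[j] = a[j - gap]; // shift the larger element gap positions to the right
                    j -= gap;
                }
                a[j] = value;
            }
        }
    }

    public static void main(String[] args) {
        int[] data = {9, 8, 7, 6, 5, 4, 3, 2, 1, 0};
        sort(data, new int[]{5, 3, 1});
        System.out.println(java.util.Arrays.toString(data));
    }
}
```

A single move in the gap-5 pass can remove several inversions at once, which is the point of the inversion argument.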

Divide and Conquer: Merge Sort • Let A be an array of integers of length N • Define Sort(A) recursively via Sort(A, 0, N), which sorts the half-open range [low, high) • Define int[] Sort(A, low, high) • if (high - low <= 1) return a copy of A[low..high) • else • mid = (low+high)/2 • temp1 = Sort(A, low, mid) • temp2 = Sort(A, mid, high) • return Merge(temp1, temp2)

Merge • int[] Merge(int[] temp1, int[] temp2) • int[] temp = new int[temp1.length + temp2.length] • int i = 0, j = 0, k = 0 • while (i < temp1.length and j < temp2.length) • if (temp1[i] < temp2[j]) temp[k++] = temp1[i++] • else temp[k++] = temp2[j++] • copy whatever remains of temp1 or temp2 into temp • return temp • Analysis of Merge: • time: O(temp1.length + temp2.length) • memory: O(temp1.length + temp2.length)
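
The Sort and Merge pseudocode from these two slides can be sketched as runnable Java along the following lines. The class name is illustrative, the range is treated as half-open [low, high), and ties are taken from the left half so the sort is stable.

```java
import java.util.Arrays;

final class MergeSortSketch {
    // Returns a new sorted array; the input is left untouched.
    static int[] sort(int[] a) {
        return sort(a, 0, a.length);
    }

    // Sorts the half-open range a[low..high) and returns it as a new array.
    static int[] sort(int[] a, int low, int high) {
        if (high - low <= 1) {
            return Arrays.copyOfRange(a, low, high); // zero or one element: already sorted
        }
        int mid = low + (high - low) / 2;
        return merge(sort(a, low, mid), sort(a, mid, high));
    }

    // Merges two sorted arrays in O(left.length + right.length) time and space.
    static int[] merge(int[] left, int[] right) {
        int[] out = new int[left.length + right.length];
        int i = 0, j = 0, k = 0;
        while (i < left.length && j < right.length) {
            out[k++] = (left[i] <= right[j]) ? left[i++] : right[j++];
        }
        while (i < left.length) out[k++] = left[i++];   // leftovers from the left half
        while (j < right.length) out[k++] = right[j++]; // leftovers from the right half
        return out;
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(sort(new int[]{6, 2, 8, 1, 9, 3})));
    }
}
```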

Analysis of Merge Sort • Time • Let N be the number of elements • Number of levels is O(logN) • At each level, O(N) work • Total is O(N*logN) • This is the best possible for comparison-based sorting. • Space • At each level, O(N) temporary space • Space can be freed, but calls to new are costly • Needs O(N) space • Bad: better to have an in-place sort • Quick Sort (chapter 8) is the sort of choice.

Quicksort: Algorithm • QuickSort: the fastest algorithm in practice, on average • QuickSort(S) • 1. If the size of S is 0 or 1, return S • 2. Pick an element v in S (the pivot) • 3. Construct L = all elements less than v and R = all elements greater than v (other elements equal to v may go to either side). • 4. Return QuickSort(L), then v, then QuickSort(R) • The algorithm can be done in situ (in place). • On average it runs in O(NlogN), but it can take O(N^2) time • This depends on the choice of pivot.
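
One way to carry out steps 1 to 4 in place is sketched below. The pivot choice (the middle element) and the Lomuto-style partition are assumptions made for brevity, not something the slides specify. Elements equal to the pivot end up on the right side.

```java
final class QuickSortSketch {
    static void sort(int[] a) {
        sort(a, 0, a.length - 1);
    }

    // Sorts the inclusive range a[lo..hi] in place.
    static void sort(int[] a, int lo, int hi) {
        if (lo >= hi) return;          // step 1: size 0 or 1
        int p = partition(a, lo, hi);  // steps 2 and 3
        sort(a, lo, p - 1);            // step 4: L, then v (already at p), then R
        sort(a, p + 1, hi);
    }

    // Moves elements less than the pivot to the left; returns the pivot's final index.
    static int partition(int[] a, int lo, int hi) {
        swap(a, lo + (hi - lo) / 2, hi); // assumed pivot: middle element, parked at the end
        int pivot = a[hi];
        int store = lo;
        for (int i = lo; i < hi; i++) {
            if (a[i] < pivot) swap(a, i, store++);
        }
        swap(a, store, hi);
        return store;
    }

    static void swap(int[] a, int i, int j) {
        int tmp = a[i];
        a[i] = a[j];
        a[j] = tmp;
    }

    public static void main(String[] args) {
        int[] data = {3, 7, 3, 1, 9, 0, 3};
        sort(data);
        System.out.println(java.util.Arrays.toString(data));
    }
}
```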

Quicksort: Analysis • Worst case: • T(N) = worst-case sorting time • T(1) = 1 • With a bad pivot, T(N) = T(N-1) + N • Via a telescoping argument (expand and add) • T(N) = O(N^2) • Average case (text argument) • Assume all subproblem sizes are equally likely • Note: the chance that the pivot is the ith smallest element is 1/N • Let T(N) be the average cost to sort N elements

Analysis continued • T(left branch) = T(right branch) on average, so • T(N) = 2*(T(0)+T(1)+…+T(N-1))/N + N, where N is the cost of partitioning • Multiply by N: • N*T(N) = 2*(T(0)+…+T(N-1)) + N^2 (*) • Subtract the N-1 case of (*): • N*T(N) - (N-1)*T(N-1) = 2*T(N-1) + 2N - 1 • Rearrange and drop the -1: • N*T(N) = (N+1)*T(N-1) + 2N • Divide by N(N+1): • T(N)/(N+1) = T(N-1)/N + 2/(N+1)

Last Step • Substitute N-1, N-2, ..., 2 for N • T(N-1)/N = T(N-2)/(N-1) + 2/N • … • T(2)/3 = T(1)/2 + 2/3 • Add: • T(N)/(N+1) = T(1)/2 + 2*(1/3 + 1/4 + … + 1/(N+1)) • = 2*(1 + 1/2 + … + 1/(N+1)) - 5/2, since T(1) = 1 • = O(logN) • Hence T(N) = O(N logN) • The literature gives a more accurate proof. • For best results, choose the pivot as the median of 3 random values from the array.
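
As a sanity check on the derivation, the sketch below evaluates the averaged recurrence numerically and compares it with the telescoped expression (N+1)*(2*(1 + 1/2 + … + 1/(N+1)) - 5/2). It assumes T(0) = 0, which gives T(1) = 1 as in the worst-case slide; the names are illustrative. Because the derivation drops a -1 term, the closed form should sit slightly above the exact values.

```java
final class QuickSortRecurrenceSketch {
    // t[n] follows T(n) = 2*(T(0)+...+T(n-1))/n + n, with the assumed base T(0) = 0.
    static double[] averageCost(int maxN) {
        double[] t = new double[maxN + 1];
        double prefix = 0.0; // running sum T(0) + ... + T(n-1)
        for (int n = 1; n <= maxN; n++) {
            t[n] = 2.0 * prefix / n + n;
            prefix += t[n];
        }
        return t;
    }

    // Telescoped bound: (n+1) * (2*H(n+1) - 5/2), where H(m) = 1 + 1/2 + ... + 1/m.
    static double telescopedBound(int n) {
        double h = 0.0;
        for (int k = 1; k <= n + 1; k++) h += 1.0 / k;
        return (n + 1) * (2.0 * h - 2.5);
    }

    public static void main(String[] args) {
        double[] t = averageCost(1000);
        for (int n : new int[]{10, 100, 1000}) {
            double nLogN = n * Math.log(n) / Math.log(2);
            System.out.println("N=" + n + " T(N)=" + t[n]
                    + " bound=" + telescopedBound(n) + " N*log2(N)=" + nLogN);
        }
    }
}
```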

Lower Bound for Sorting • Theorem: any algorithm that sorts by comparisons must use at least log(N!) comparisons in the worst case. Hence comparison-based sorting takes Ω(N logN) time. • Proof: • N items can be arranged in N! ways. • Consider a decision tree where each internal node is a comparison. • Each possible input ordering goes down its own path • So the number of leaves is at least N! • A binary tree with N! leaves has depth at least log(N!) • log(N!) = log1 + log2 + … + log(N) is Ω(N logN) • Proof: use the partition trick • log(N/2) + log(N/2+1) + … + log(N) > N/2*log(N/2)
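
To get a feel for the bound, the sketch below computes log2(N!) as a sum of logarithms and compares it with the partition-trick estimate N/2*log2(N/2) and with N*log2(N). Names are illustrative, and logarithms are taken base 2 since each comparison has two outcomes.

```java
final class ComparisonLowerBoundSketch {
    // log2(n!) = log2(1) + log2(2) + ... + log2(n), summed to avoid overflowing n!.
    static double log2Factorial(int n) {
        double sum = 0.0;
        for (int k = 2; k <= n; k++) sum += Math.log(k) / Math.log(2);
        return sum;
    }

    // Fewest comparisons any comparison sort needs in the worst case, by the decision-tree bound.
    static int minComparisons(int n) {
        return (int) Math.ceil(log2Factorial(n));
    }

    public static void main(String[] args) {
        for (int n : new int[]{4, 8, 16, 1024}) {
            double half = (n / 2.0) * (Math.log(n / 2.0) / Math.log(2));
            double full = n * (Math.log(n) / Math.log(2));
            System.out.println("N=" + n + " N/2*log2(N/2)=" + half
                    + " log2(N!)=" + log2Factorial(n) + " N*log2(N)=" + full);
        }
    }
}
```

For example, sorting 4 items needs at least 5 comparisons in the worst case, because log2(24) is about 4.58.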

Summary • For online sorting, use heapsort. • Online: get elements one at a time • Offline or batch: have all elements available • For small collections, bubble sort is fine • For large collections, use quicksort • You may hybridize the algorithms, e.g. • use quicksort until the size is below some k • then use bubble sort • Sorting is important and well-studied and often inefficiently done. • Libraries often contain sorting routines, but beware: the quicksort routine in Visual C++ seems to run in quadratic time. Java sorts in Collections are fine.
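
The hybrid suggested above might look like the following sketch. The cutoff value 8 is a hypothetical choice for k, and the pivot is a deterministic median of the first, middle, and last elements rather than of three random values; both are assumptions for illustration.

```java
final class HybridSortSketch {
    static final int CUTOFF = 8; // hypothetical k: ranges smaller than this use bubble sort

    static void sort(int[] a) {
        quick(a, 0, a.length - 1);
    }

    // Quicksort on the inclusive range a[lo..hi], handing small ranges to bubble sort.
    static void quick(int[] a, int lo, int hi) {
        if (hi - lo + 1 < CUTOFF) {
            bubble(a, lo, hi);
            return;
        }
        int p = partition(a, lo, hi);
        quick(a, lo, p - 1);
        quick(a, p + 1, hi);
    }

    static void bubble(int[] a, int lo, int hi) {
        for (int j = hi; j > lo; j--) {
            for (int i = lo; i < j; i++) {
                if (a[i] > a[i + 1]) swap(a, i, i + 1);
            }
        }
    }

    // Lomuto-style partition around the median of a[lo], a[mid], a[hi].
    static int partition(int[] a, int lo, int hi) {
        swap(a, medianIndex(a, lo, lo + (hi - lo) / 2, hi), hi);
        int pivot = a[hi];
        int store = lo;
        for (int i = lo; i < hi; i++) {
            if (a[i] < pivot) swap(a, i, store++);
        }
        swap(a, store, hi);
        return store;
    }

    // Returns whichever of i, j, k indexes the median of the three values.
    static int medianIndex(int[] a, int i, int j, int k) {
        if ((a[i] <= a[j]) == (a[j] <= a[k])) return j;
        if ((a[j] <= a[i]) == (a[i] <= a[k])) return i;
        return k;
    }

    static void swap(int[] a, int i, int j) {
        int tmp = a[i];
        a[i] = a[j];
        a[j] = tmp;
    }

    public static void main(String[] args) {
        int[] data = {12, 5, 9, 1, 14, 3, 8, 7, 2, 11, 6, 10, 4, 13, 0};
        sort(data);
        System.out.println(java.util.Arrays.toString(data));
    }
}
```

In practice the cutoff would be tuned by measurement; library sorts commonly use insertion sort rather than bubble sort for the small ranges.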