Advanced Classifiers for Efficient Feature Selection and Improved Performance

Explore advanced classifiers like Ridge Regression and SNoW for better face detection. Learn to apply AdaBoost for feature selection and enhanced results in your projects.

Presentation Transcript

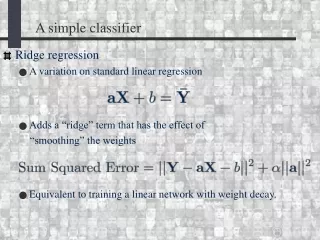

A simple classifier
• Ridge regression
  • A variation on standard linear regression
  • Adds a “ridge” term (a penalty on the squared size of the weights) that has the effect of “smoothing” the weights
  • Equivalent to training a linear network with weight decay
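The weight-decay view of ridge regression can be sketched in a few lines. This is a minimal illustration, not the presenter's code: the function names `ridge_fit` and `ridge_predict`, the toy data, the learning rate, and the choice to average the squared error over the examples are all assumptions made for the example.

```python
def ridge_fit(X, y, lam=1.0, lr=0.01, epochs=2000):
    """Gradient descent on mean squared error + lam * ||w||^2 (hypothetical helper)."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(epochs):
        grad = [0.0] * d
        for xi, yi in zip(X, y):
            err = sum(wj * xj for wj, xj in zip(w, xi)) - yi
            for j in range(d):
                grad[j] += 2.0 * err * xi[j] / n
        # Weight decay step: the gradient of lam * ||w||^2 is 2 * lam * w,
        # so every update also pulls each weight toward zero.
        w = [wj - lr * (gj + 2.0 * lam * wj) for wj, gj in zip(w, grad)]
    return w


def ridge_predict(w, x):
    """Use the sign of the linear output as the class label (+1 or -1)."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) >= 0 else -1


# Toy data (assumed): first column is a constant bias input, labels are +1 / -1.
X = [[1.0, 2.0], [1.0, 1.0], [1.0, -1.0], [1.0, -2.0]]
y = [1, 1, -1, -1]

w_plain = ridge_fit(X, y, lam=0.0)  # ordinary least squares
w_ridge = ridge_fit(X, y, lam=1.0)  # ridge: same fit plus weight decay
```

With the ridge term switched on, the learned weights are smaller in magnitude than the plain least-squares weights, while the predicted signs on this toy data stay the same.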

A “Strong” Classifier: SNoW (Sparse Network of Winnows)
• Roth et al. (2000): the best reported face detector at the time
• 1. Turn each pixel into a sparse, binary vector
• 2. Activation = sign( )
• 3. Train with the Winnow update rule
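The three steps above can be sketched with a single Winnow unit. This is a rough illustration under stated assumptions, not the SNoW implementation of Roth et al.: the argument of the activation is missing from the transcript, so the sketch assumes a thresholded sum of the weights of the active features; it uses one unit rather than a network of them; and the names `pixels_to_active_features` and `WinnowUnit`, the threshold, the promotion factor, and the toy images are all hypothetical.

```python
def pixels_to_active_features(pixels, levels=256):
    """Step 1: one binary feature per (position, intensity) pair.

    Only the indices of the active (value 1) features are returned,
    which is what makes the representation sparse.
    """
    return [pos * levels + int(value) for pos, value in enumerate(pixels)]


class WinnowUnit:
    """A single linear threshold unit trained with the Winnow rule (assumed form)."""

    def __init__(self, theta, alpha=2.0):
        self.theta = theta    # decision threshold (assumption)
        self.alpha = alpha    # promotion/demotion factor (assumption)
        self.weights = {}     # sparse storage; any weight not stored is 1.0

    def score(self, active):
        return sum(self.weights.get(i, 1.0) for i in active)

    def predict(self, active):
        # Step 2 (assumed): sign of the weighted sum relative to the threshold.
        return 1 if self.score(active) >= self.theta else -1

    def update(self, active, label):
        # Step 3: weights change only on a mistake, and only multiplicatively.
        if self.predict(active) == label:
            return False
        factor = self.alpha if label == 1 else 1.0 / self.alpha
        for i in active:
            self.weights[i] = self.weights.get(i, 1.0) * factor
        return True


# Toy 4-pixel "images" with 4 grey levels (assumed data): +1 = face, -1 = non-face.
images = [[3, 3, 0, 0], [3, 2, 0, 0], [0, 0, 3, 3], [0, 1, 3, 3]]
labels = [1, 1, -1, -1]

unit = WinnowUnit(theta=4.0)
for _ in range(5):  # a few passes over the data
    for img, lab in zip(images, labels):
        unit.update(pixels_to_active_features(img, levels=4), lab)
```

Because only the weights of active features are touched on a mistake, each update costs time proportional to the number of pixels, not to the full size of the binary feature space.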

AdaBoost for Feature Selection
• Viola and Jones (2001) used AdaBoost as a feature selection method
• For each round of AdaBoost:
  • For each patch, train a classifier using only that one patch
  • Select the best one as the classifier for this round
  • Reweight the distribution over the training examples based on that classifier
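The round structure above can be sketched as follows. This is a simplified illustration, not the Viola and Jones detector: each column of `X` stands in for one patch, the per-patch classifier is assumed to be a threshold stump, and the names `stump_predict`, `best_stump`, `adaboost_select`, and `strong_predict`, as well as the toy data, are hypothetical.

```python
import math


def stump_predict(value, thr, polarity):
    """Threshold classifier on one feature: returns +1 or -1."""
    return polarity if value >= thr else -polarity


def best_stump(values, labels, weights):
    """Train a classifier using only one feature: pick the threshold and
    polarity with the lowest weighted error."""
    best = (float("inf"), 0.0, 1)
    for thr in sorted(set(values)):
        for polarity in (1, -1):
            err = sum(w for v, y, w in zip(values, labels, weights)
                      if stump_predict(v, thr, polarity) != y)
            if err < best[0]:
                best = (err, thr, polarity)
    return best


def adaboost_select(X, y, rounds):
    n, d = len(X), len(X[0])
    weights = [1.0 / n] * n
    chosen = []
    for _ in range(rounds):
        # One candidate classifier per feature, each using only that feature.
        candidates = []
        for j in range(d):
            column = [row[j] for row in X]
            err, thr, pol = best_stump(column, y, weights)
            candidates.append((err, j, thr, pol))
        # The best candidate becomes the classifier for this round.
        err, j, thr, pol = min(candidates)
        err = min(max(err, 1e-10), 1.0 - 1e-10)  # avoid log(0) and division by zero
        alpha = 0.5 * math.log((1.0 - err) / err)
        chosen.append((j, thr, pol, alpha))
        # Reweight: misclassified examples gain weight, correct ones lose it.
        weights = [w * math.exp(-alpha * yi * stump_predict(row[j], thr, pol))
                   for w, row, yi in zip(weights, X, y)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return chosen


def strong_predict(chosen, x):
    score = sum(alpha * stump_predict(x[j], thr, pol) for j, thr, pol, alpha in chosen)
    return 1 if score >= 0 else -1


# Toy data (assumed): only the middle feature separates the two classes.
X = [[5, 1, 0], [1, 2, 1], [4, 8, 0], [2, 9, 1]]
y = [-1, -1, 1, 1]
chosen = adaboost_select(X, y, rounds=2)
```

The indices stored in `chosen` are the selected features, so the same loop that builds the boosted classifier also performs the feature selection.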