Distributed databases and the 3D project

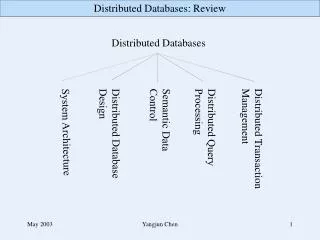

Distributed databases and the 3D project. Outline. What is 3D 3D Architecture and status 3D Testbed and performace Experiment deployment plans. 3D. Distributed Deployment of Databases

Distributed databases and the 3D project

E N D

Presentation Transcript

Distributed databases and the 3D project Stefan Stonjek: 3D

Outline • What is 3D • 3D Architecture and status • 3D Testbed and performace • Experiment deployment plans Stefan Stonjek: 3D

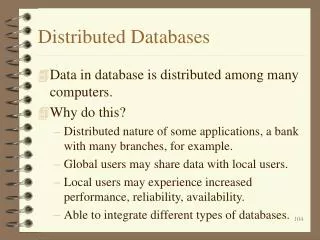

3D • Distributed Deployment of Databases • LCG project, initiated by LHC experiments to co-ordinate the set-up of database services and facilities for relational data transfers as part of the LCG infrastructure • The project goal is to provide a consistent way of accessing database services at tier-0 and collaborating LCG tier sites to achieve a more scalable and available access to non-event data Stefan Stonjek: 3D

Why a LCG Database Deployment Project? • LCG provides an infrastructure for distributed access to file based data and file replication • Physics applications (and grid services) require a similar services for data stored in relational databases • Several applications and services already use RDBMS • Several sites have already experience in providing RDBMS services • Increase the availability and scalability of LCG and experiment components • Allow applications to access data in a consistent and location independent way • Connect existing database services via data replication mechanisms • Simplify a shared deployment and administration of this infrastructure during 24 x 7 operation Stefan Stonjek: 3D

3D is not • Store all database data • Experiments are free to deploy databases and distribute data under their responsibility • Setup a single monolithic distributed database system • Given constraints like WAN connections one can not assume that a single synchronously updated database will provide sufficient availability • Setup a single vendor system • Technology independence and a multi-vendor implementation will be required to minimize the long term risks and to adapt to the different requirements/constraints on different tiers • Impose a CERN centric infrastructure to participating sites Stefan Stonjek: 3D

LCG 3D Service Architecture Oracle Streams http cache (SQUID) Cross DB copy & MySQL/SQLight Files M O S S T1- db back bone - all data replicated - reliable service O O F T2 - local db cache -subset data -only local service T0 - autonomous reliable service • Online DB • autonomous reliable service O M S S R/O Access at Tier 1/2(at least initially) Stefan Stonjek: 3D

Oracle Streams Architecture Stefan Stonjek: 3D

FroNtier & OEM SOURCE DATABASE FNAL CERN CERN CNAF BNL Sinica GridKA RAL 3D Testbed Configuration insert into emp values ( 03, “Manuel”, ….) create table emp ( id number, name varchar2, ….) Stefan Stonjek: 3D

LCG Database Deployment Plan • After October ‘05 workshop a database deployment plan has been presented to LCG GDB and MB • http://agenda.cern.ch/fullAgenda.php?ida=a057112 • Two production phases • March - Oct ‘06 : partial production service • Production service (parallel to existing testbed) • H/W requirements defined by experiments/projects • Subset of LCG tier 1 sites: ASCC, CERN, BNL, CNAF, GridKA, IN2P3, RAL • Oct ‘06- onwards : full production service • Adjusted h/w requirements (defined at summer ‘06 workshop) • Remaining tier 1 sites joined: PIC, NIKHEF, NDG, TRIUMF Stefan Stonjek: 3D

Oracle Licenses for Tier 1 • 3D collected license needs from experiments and s/w projects • After validation with T1 site responsibles:152 processor licenses (incl. Grid Services and Castor) • CERN negotiated with Oracle a proposal with attractive conditions • T1 sites agreed to the proposal • FNAL had already acquired their licenses • All Tier 1 sites should now be covered for s/w and support! • Oracle client will join LCG s/w distribution Stefan Stonjek: 3D

Database Software Status • Persistency Framework Project (POOL & COOL) • CORAL, a reliable generic RDBMS interface for Oracle, MySQL, SQLight and FroNTier -> LCG 3D project • Provides db lookup, failover, connection pooling, authentication, monitoring • COOL and POOL can access all back-ends via CORAL • CORAL also used as separate package by ATLAS/CMS online • Improved COOL versioning functionalities (user tags and hierarchical tags) • CMS online replication tests with POOL/ORA • Latency cut by factor tow via increased commit frequency • Suspected influence of POOL/ORA meta data handling not confirmed • Significant improvements of PVSS performance • Now reaching target of 150k values logged per second • Showed scalability in cluster environment • Significant effort from IT DES/PSS/CO and experiments • Input available for sizing online database setups Stefan Stonjek: 3D

Tier 1 Site Status • Phase 1 Sites • ASGC, BNL, CNAF, IN2P3, RAL - DB clusters available, part of 3D throughput tests • GridKA-DB setup is delayed because, lack of coverage during vacation of main DBA • Phase 2 Sites • TRIUMF - regular attendance in 3D planning meetings • PIC, NIKHEF/SARA - DBA contact established • NDGF - early discussions Stefan Stonjek: 3D

LCG 3D Throughput Tests • Scheduled for May - extended until end of June • Use the production database clusters at tier 1 and obtain a first estimate for the replication throughput which can be achieved with the setup • Input to experiment models for calibration / tag data flow • Tests started on time, but progress was slower then planned • Main reasons • Server setup problem (often db storage) at sites • Firewall configuration • DBA coverage during vacation period (several hires in the queue) • Throughput optimization need Oracle experts to be involved Stefan Stonjek: 3D

Preliminary Results CERN to CERN • Condition-like workload • small (10 column) rows • 10, 20 and 50 MB user data • Factor ~5 for DB log data • Conclusions so far • Apply is the bottleneck • Parallelism helps! • Throttling (flow control) works • Queues size at destinations need appropriate size • Regular contact with Patricia McElroy - Oracle Principal Product Manager Distributed Systems CERN to CNAF Eva Dafonte Perez Stefan Stonjek: 3D

Replication Throughput • Online-Offline (LAN) • Tier 0 - Tier 1 (WAN) • ~ 10-100 MB/min achieved (little optimisation so far) • Strong dependency on row size and commit frequency • WAN reaches ~50% of LAN throughput Eva Dafonte Perez Stefan Stonjek: 3D

Throughput vs Row Size • Replication rate of 100 MB/s reached for 2kB rows Eva Dafonte Perez Stefan Stonjek: 3D

Experiment Deployment Plans • ATLAS • Databases online / Tier-0 / Tier-1 / T2 (mysql) • Oracle streams for conditions replication (COOL) • LHCb • Databases online / Tier-0 / Tier-1 • Oracle streams for conditions replication (COOL) • ATLAS and LHCb interested in FroNtier cache for conditions data in COOL • CMS • Databases online / Tier-0 - DB cache Tier-1 and higher • FroNtier/POOL for conditions data distribution (cache at T1/T2) • Oracle streams as fallback • ATLAS, LHCB & CMS: Oracle streams for online to offline replication • ALICE • Databases online / Tier-0 - Files Tier-1 and higher • Alice s/w for copy/transformation, no 3D service reques Stefan Stonjek: 3D

TAG database testing • TAG database tests so far concentrated on technical aspects • ~50 GB TAG data from ATLAS 2005 ‘Rome’ production uploaded at CERN • Some limited end-user physicist testing; feedback to refine content • June 2006 (now): Tier-0 scaling test for 3 weeks • Uploading TAGs to latest DB schema as part of Tier-0 reconstruction • Peak rate of 200 Hz x ~1kB data; expect 400GB in 3 weeks • New ATLAS DBAs instrumental in setting up optimised Oracle schema/procedure • No Oracle streaming to Tier-1 sites yetJu • July-August 2006: TAG replication to selected Tier 1 sites • Using 3D-provided Oracle-streams-based replication from Tier 0 • Measure performance; understand dependencies on Tier 0 load/update model • TAG files are also distributed to Tier 1s; alternative is to update Tier 1 databases from files, as is done at Tier 0 • September 2006: next Tier-0 test • Include Oracle streams to Tier-1s (maybe for part of testing period) • Testing with ‘real’ simulated data useful for end-user physicists if possible • Start to exercise TAG queries against Tier-1 database replicas • Also plans for tests exploring ATLAS streaming model, and upload of TAGs for current ATLAS Monte Carlo production campaign • Again, emphasis on useful TAG content to gain physicist feedback • Scope / details / resources for these tests currently being discussed in ATLAS Stefan Stonjek: 3D

Relational database data • Replication of relational-database based conditions data (COOL and others): • Tier-0 hosts master copy of all data in Oracle (O(1 TB/year)) • Oracle Streams technology used to replicate data to Oracle servers at Tier-1 • Native Oracle technology, for keeping a replica in sync - ‘duplicates’ all database writes in slave servers by extracting data from master server’s change logs • Works equally well for COOL and other relational database data (application-neutral) • All Tier-1 sites should have local access to conditions data from Oracle • Performant-enough access for reconstruction of full RAW data samples • Options for Tier-2s: • Access Oracle server of nearest Tier-1 • OK for small scale access, limited by network latencies and load on Tier-1 server • Extract needed COOL data into an SQLite file (tools exist) • A ‘one shot’ replication, only practical for a subset of data (e.g. for simulation use case) • Maintain a ‘live’ database copy in MySQL - run a local MySQL condDB server • Tool being developed to synchronise two COOL databases and copy recent updates • Will probably be needed for sites doing significant calibration work • Again, only practical for subsets of the full conditions database Stefan Stonjek: 3D

Frontier • Frontier is an interesting alternative to traditional database replication • A fourth (read-only) technology for CORAL - database access requests are translated to http page requests • These are served by a Tomcat web server sitting in front of a relational database - server translates page request back to SQL and queries real relational database • Server returns result as web page (can be gzipped to avoid XML space overheads) • Frontier client (CORAL) translates web page request back to SQL result for client program (e.g COOL) • Putting a web proxy cache (squid) between client and server allows queries to be cached • When many clients make the same query (= request same web page), only the first one will go all the way to the database, rest will be satisfied from squid cache • Reduces queries on the server, and network traffic • In a distributed environment, could have e.g. squid caches at Tier-1s or even at local Tier-2s, to satisfy most requests as locally and as quickly as possible • First steps in trying this out for ATLAS conditions data (CMS more advanced) • Many questions (e.g. stale caches), but could be an attractive alternative for Tier-2s - deploy a squid cache instead of a MySQL replica Stefan Stonjek: 3D

Requirements No update with respect to the numbers already reported.

Work Plan - Conditions - COOL July • Set up streaming to Tier-1s • Test the replication Tier-0 Tier-1s • Set up streaming between a LHCb managed RAC (Online) and the CERN one • Test replication • CERN Online • Online CERN Tier-1s October

Frontier/SQUID Tests • Stress tests with many clients at CERN to validate FroNTier and SQUID production setup at T0 • Obtained cached and un-cached client access rates • Maximum # of connections per server box • Validated DNS failover • CMS Frontier tests at FNAL • Focus on connection retry and failover and CORAL integration • CMS July release may pickup LCG AA s/w release which is currently prepared • Setting up an additional node for ATLAS FroNTier tests with COOL Stefan Stonjek: 3D

CMS Distributed DB Access Status • Squids will be ready at most sites (CMS SC4 sites) by July 15, 2006 ( as of June 15: 8/8 for Tier-1, 10/28 for Tier-2) • The entire software/service stack is ready including: • POOL database repository • FroNTier servlets running on 3 boxes in CERN/IT • CMS Software framework (CMSSW), • POOL/CORAL/Frontier_client • Site-local-config for configuration at sites • Initial calibration and alignment data for several sub-detectors is available for testing Stefan Stonjek: 3D

CMS FroNTier Test Plans • Testing will be Mid-July through August for use in CSA06 in October. • Work will include the following: • Performance for various tuning options • Payload compression levels • Direct CERN and local-site squid access • Reliability under various failure scenarios • Server redundancy failover for FroNTier @ CERN • Squid failover at Tier 1 centers • Reliability and performance at simulated full scale operation • Simultaneous access at Compute Centers of 100’s of CE’s • Synchronized, multi-site, access of CERN FroNTier servers • Evaluation of monitoring and operations support model Stefan Stonjek: 3D

IT DB usage autumn 06 • 07-08.06: CMS combined detector test: • Complete DB data chain set up: detector→TierX • Application validation against validation server ongoing • Conditions streaming to production server set up and optimization in progress • Production server will be used as T0 conditions repository and source for distribution to Tier0+X centers. Stefan Stonjek: 3D

Timeline • May-June • Replication throughput phase, validation of production setups • Junly-October • Throughput phase closed, experiment application and throughput test start • Experiment ramp-up test with production setup of phase 1 sites • 11 July • 3D DBA day to plan database setup options with new tier 1 sites. Hosted by GridKA (after similar meetings at RAL, CNAF) • End of August/early September • 5 Day Oracle Admin Course @ CERN for Experiment/Site Database Teams • 11-12 September (tbc) • 3D workshop (experiments and sites) defining October setup and service • October • Full service open at all tier 1 sites Stefan Stonjek: 3D

Summary • 3D is already distributing databases to some sites • Oracle streams is used • Experiments plan to use it • Experiment specific test must be next step Stefan Stonjek: 3D

The End Stefan Stonjek: 3D

Tier 1 Hardware Setup • Propose to setup for first 6 month • ATLAS/LHCb: 2/3 dual-cpu database nodes with 2GB or more • Setup as RAC cluster (preferably) per experiment • ATLAS: 3 nodes with 300GB storage (after mirroring) • LHCb: 2 nodes with 100GB storage (after mirroring) • Shared storage (eg FibreChannel) proposed to allow for clustering • CMS: 2-3 dual-cpu Squid nodes with 1GB or more • Squid s/w packaged by CMS will be provided by 3D • 100GB storage per node • Recent MB: 1 node setup for Tier 2’s requested Stefan Stonjek: 3D

OEM - Server Utilization Stefan Stonjek: 3D

Oracle Instant Client Distribution • The issue of client distribution has been discussed with Oracle and an agreement has been achieved • The instant client can be integrated into the LCG middleware and application distributions • As long as the included license file is preserved and adhered to • The SPI project in the Application Area will from now on bundle the software as part of AA releases. • Experiments and LCG middleware should take advantage and pick up validated client releases from this single source. • Version management will happen as for other AA packages via the established channels for external packages Stefan Stonjek: 3D

FroNTier Stress Tests Stefan Stonjek: 3D

Role of Tier-1s • Role of Tier-1s according to the ATLAS computing model • Long term access to and archiving of fraction of RAW data • Reprocessing of RAW data with new reconstruction and calibration constants • Collaboration-wide access to resulting processed data (ESD, AOD, TAG, …) • Managed production of derived datasets for physics groups • Some calibration processing (especially that requiring RAW data) • Host simulated data processed at associated Tier-2s and others • Database requirements to support this: primarily TAGs and conditions data • TAG database ( few 100 quantities per event, for fast first selection) • Copy of TAG data for all ‘active’ reconstruction passes • TAG data collated in Oracle at Tier-0 and copied to Tier-1s (e.g. via Oracle Streams) • Support TAG database queries from physics groups and individuals to contstuct samples for analysis • Conditions database • Copy of all conditions data required for Tier-1 reprocessing and user analysis • Conditions data (from online and calibration) collated at Tier-0, streamed to Tier-1 Stefan Stonjek: 3D

Conditions database testing • ATLAS using LCG COOL conditions database in production mode now • Have around 25 GB of data, mainly from subdetector commissioning activities • Some limited dedicated tests of Oracle streams (online -> offline, CERN -> RAL -> Oxford) using COOL as example application • Achieving rates of 10-30 MB/minute in these tests (combined LCG 3D/ATLAS effort) • Ready to exploit Oracle streams to Tier-1 in production as soon as available: • Conditions data from ATLAS commissioning: • Steady input of conditions data, more subdetectors keep joining • E.g. combined barrel calorimeters test coming up in next month • Data being accumulated at CERN … limited SQLite replication of subsets • Oracle streams to Tier-1 will allow outside analysis using latest conditions data • Already have technology to ship event data to remote institutes … will be highly valuable • Conditions data in calibration/alignment challenge • Towards end of 2006, process significant miscalibrated MC samples, run calibration algorithms and reprocess • Need ‘live’ replication of new calibration data from CERN to reprocessing centres Stefan Stonjek: 3D

Calibration / alignment model • First pass calibration done at CERN (except muon stream, see later) • In 24 hours after end of fill, process and analyse calibration streams, produce and verify first pass alignment constants… • Processing resources are part of CERN Tier-0/CAF • Calibration will also depend on previous calibration - amount of ‘per run’ recalibration will not be known until experience with real data is gained • … Prompt reconstruction of physics data, distribution to Tier-1s, Tier-2s, etc. • Then, study pass 1 data, prepare new calibrations ready for reprocessing • ATLAS expects to reprocess whole data sample 1-2 times per year, at Tier-1s • Calibration will be based on detailed analysis of AOD, ESD and some RAW data • Processing done primarily at Tier-2 (and Tier-1) centres • Calibrations will be uploaded from originating sites to CERN central databases • Probably file-based uploading - see later • New calibrations distributed to Tier-1 centres for subsequent raw data reprocessing • Once raw data is reprocessed and distributed, process can be repeated Stefan Stonjek: 3D

Conditions data model • ATLAS conditions database contains all non-event data needed for simulation, reconstruction and analysis • Calibration/alignment data, also DCS (slow controls) data, subdetector and trigger configuration, monitoring, … • Key concept is data stored by ‘interval of validity’ (IOV) - run/event or timestamp • Some meta-data may be stored elsewhere (luminosity blocks, run level information) • Several technologies employed: • Relational databases: COOL for IOVs and some payload data, other relational database tables referenced by COOL • COOL databases can be stored in Oracle, MySQL DBs, or SQLite file-based DBs • Accessed by ‘CORAL’ software (common database backend-independent software layer) - CORAL applications are independent of underlying database • Mixing technologies an important part of database distribution strategy • File based data (persistified calibration objects) - stored in files, indexed / referenced by COOL • File based data will be organised into datasets and handled using DDM (same system as used for event data) Stefan Stonjek: 3D

File-based conditions data • Some conditions data stored in files: • Large calibration data objects, stored using POOL technology (as event data) • Other types of data, e.g. files of monitoring histograms • Organise into conditions datasets using standard ATLAS DDM tools • Expect O(100 GB/year) of calibration data - small compared to event data • Perhaps more for histograms/monitoring data • Reconstruction/analysis jobs will require local access to specified datasets • Stored on DDM-managed local storage, as for event data being processed, or even downloaded to worker node • DDM / DQ2 instance to manage the storage and maintain catalogues could be at Tier-2, or at Tier-1 • … but Tier-2 sites must be ‘DDM-aware’ • End users will want to download specific datasets, e.g. histogram sets for their subdetector, download locally to Tier-2 or even to their laptops • Again using DDM end-user tools - retrieve datasets from local Tier-2 or nearest Tier-1 Stefan Stonjek: 3D

Calibration data challenge • So far in ATLAS, Tier-2s have only really done simulation/reconstruction • With static replicas of conditions data in SQLite files, or preloaded MySQL replicas - required conditions data already known in advance • ATLAS calibration data challenge (late 2006) will change this • Reconstruct misaligned/miscalibrated data, derive calibrations, rereconstruct and iterate - as close as possible to real data • Will require ‘live’ replication of new data out to Tier-1/2 centres • Technologies to be used @ Tier-2 • Will need COOL replication either by local MySQL replicas, or via Frontier • Currently just starting on ATLAS tests of Frontier - need to get experience • Decision in a few months on what to use for calibration data challenge • Frontier is also of interest in online environment (database replication for trigger farm) • Will definitely need DDM replication of new conditions datasets (sites subscribe to evolving datasets) • External sites will submit updates as COOL SQLite files to be merged into central CERN Oracle databases Stefan Stonjek: 3D

Muon calibration use case • A few Tier-2 sites designated as muon ‘calibration centres’ • Receive special stream of muon data extracted from level 2 trigger: ~ 100 GB/day • Probably transferred via Tier-1 for tape backup • Process this locally at Tier-2 on a farm of O(100 machines) • Store intermediate results in a local Oracle-based calibration database, which is replicated to CERN using Oracle streams replication • Calibration results (to be used in prompt reconstruction) will be derived from this data and entered into COOL in the usual way • Time critical operation - prompt reconstruction needs these results in < 24 hours • Goes beyond the calibration requirements of a standard Tier-2 site • Need for dedicated local Oracle database expertise and higher ‘quality of service’ and response time for problems Stefan Stonjek: 3D

Concluding remarks • Little experience of calibration/alignment activities so far, especially in an organised production environment • Tier-2 have concentrated on simulation/reconstruction of simulated data • Some requirements on Tier-2s are clear: • Need for CPU resources for calibration/alignment • Access to event and conditions datasets using ATLAS DDM tools • Access to local SQLite-based database replicas of parts of conditions database • Others are not so clear: • Need for dedicated MySQL service for live conditions data ? • Need for Froniter squid caches ? • … will become clearer in next few months and from experience with calibration data challenge • Probably will not have ‘standard’ requirements for a Tier-2 • A lot will depend on what the users at that Tier-2 want to do (simulation,analysis, calibration,..) Stefan Stonjek: 3D

Pixel Pixel Strips Strips EMDB ECAL ECAL HCAL HCAL DDD RPC RPC Logbook DT DT ES ES LHC data CSC CSC Trigger Trigger DAQ DAQ DCS DCS Create rec conDB data set Offline Reconstruction Conditions DB ONline subset Online Master Data Storage Conditions Calibration Poolification Bat 513 Configurations Conditions Production Validation Development Offline Reconstruction Conditions DB OFfline subset Configuration Conditions Master copy Tier 0 Stefan Stonjek: 3D

CMS Squid Deployment • Squids deployed at several Tier 1 and 2 sites. • Tier 1: LCG: ASCC,IN2P3, PIC, RAL, CNAF, FZK, CERN and OSG: FNAL • Tier 2:LCG: Bari, CIEMAT, DESY, Taiwan, OSG: UCSD, Purdue, Caltech • Remaining Tier 2 sites for SC4 goals: • LCG: Belgium, Legnaro, Budapest, Estonia, CSCS, GRIF, Imperial, Rome, Pisa, NCU/NTU, ITEP, SINP, JINR, IHEP, KNU and OSG: Florida, Nebraska, Wisconsin, MIT. • In progress, plan to finish in the next month. • Request WLCG support for Tier-2 Squid installation • Minimum specs: 1GHz CPU, 1GByte mem, GBit network, 100 GB disk. • Needs to be well connected (network-wise) to worker nodes, and have access to WAN and LAN if on Private Network. • Having 2 machines for failover is a useful option for T-2 but not required. • Sites perform Squid installation using existing instructions • Installation should be completed and tested by July 15, 2006.

LHCb DB Requirements and Work Plan Marco Clemencic LHCb 3D Meeting 22-06-2006

Overview • Introduction • Requirements • Plans for Jul-Oct • CondDB • LFC • Production Phase • Conclusions

Work Plan - LFC July • Replication tests ongoing • CERN CNAF • Large scale tests using available Tier-1s • LFC setup at Tier-1s • Oracle streaming setup October

Production Phase (October) • CondDB and LFC up and running • Master copy at CERN(RAC in the PIT will not be ready before end of the year) • Replicas at Tier-1s • GRID access

Conclusions • Requested resources are sufficient for start-up • Setup and tests should expose problems or limitations • September deadline for corrections to the requirements should be ok