Parallel Algorithms



Computation Models • Goal of computation model is to provide a realistic representation of the costs of programming. • Model provides algorithm designers and programmers a measure of algorithm complexity which helps them decide what is “good” (i.e. performance-efficient)

Goal for Modeling • We want to develop computational models which accurately represent the cost and performance of programs • If the model is poor, the optimum in the model may not coincide with the optimum observed in practice [Figure: "Model" and "Real World" plots with two marked points, optimum A and optimum B]

Models of Computation What’s a model good for?? • Provides a way to think about computers. Influences design of: • Architectures • Languages • Algorithms • Provides a way of estimating how well a program will perform. Cost in model should be roughly same as cost of executing program

The Random Access Machine Model RAM model of serial computers: • Memory is a sequence of words, each capable of containing an integer. • Each memory access takes one unit of time • Basic operations (add, multiply, compare) take one unit time. • Instructions are not modifiable • Read-only input tape, write-only output tape

Has RAM influenced our thinking? • Language design: • No way to designate registers, cache, DRAM. • Most convenient disk access is as streams. • How do you express atomic read/modify/write? • Machine & system design: • It’s not very easy to modify code. • Systems pretend instructions are executed in-order. • Performance analysis: • Primary measures are operations/sec (MFlop/sec, MHz, ...) • What’s the difference between Quicksort and Heapsort??

What about parallel computers • RAM model is generally considered a very successful “bridging model” between programmer and hardware. • “Since RAM is so successful, let’s generalize it for parallel computers ...”

PRAM [Parallel Random Access Machine] PRAM composed of: • P processors, each with its own unmodifiable program. • A single shared memory composed of a sequence of words, each capable of containing an arbitrary integer. • a read-only input tape. • a write-only output tape. PRAM model is a synchronous, MIMD, shared address space parallel computer. (Introduced by Fortune and Wyllie, 1978)

PRAM model of computation Shared memory • p processors, each with local memory • Synchronous operation • Shared memory reads and writes • Each processor has unique id in range 1-p

Characteristics • At each unit of time, a processor is either active or idle (depending on id) • All processors execute same program • At each time step, all processors execute same instruction on different data (“data-parallel”) • Focuses on concurrency only

More PRAM taxonomy • Different protocols can be used for reading and writing shared memory. • EREW - exclusive read, exclusive write A program isn’t allowed to have two processors access the same memory location at the same time. • CREW - concurrent read, exclusive write • CRCW - concurrent read, concurrent write Needs protocol for arbitrating write conflicts • CROW – concurrent read, owner write Each memory location has an official “owner” • PRAM can emulate a message-passing machine by partitioning memory into private memories.

Sub-variants of CRCW • Common CRCW • CW iff all processors writing same value • Arbitrary CRCW • Arbitrary value of write set stored • Priority CRCW • Value of min-index processor stored • Combining CRCW
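The sub-variants differ only in how a set of simultaneous writes to one cell is resolved. A minimal Python sketch of that resolution step follows; the function name `resolve_writes`, the policy strings, and the `combine` parameter are illustrative assumptions, not part of the slides.

```python
from functools import reduce


def resolve_writes(requests, policy, combine=None):
    """Return the value stored when several processors write one cell.

    requests: non-empty list of (processor_id, value) pairs for the same cell.
    policy:   "common", "arbitrary", "priority" or "combining" (names assumed).
    """
    if not requests:
        raise ValueError("no write requests")
    values = [value for _, value in requests]
    if policy == "common":
        # The write is legal only if all writers agree on the value.
        if any(v != values[0] for v in values):
            raise ValueError("common CRCW: writers disagree")
        return values[0]
    if policy == "arbitrary":
        # Any one writer may win; this sketch simply takes the first listed.
        return values[0]
    if policy == "priority":
        # The processor with the smallest index wins.
        return min(requests, key=lambda r: r[0])[1]
    if policy == "combining":
        # All written values are merged with an associative operator.
        return reduce(combine, values)
    raise ValueError("unknown policy: " + policy)
```

For example, `resolve_writes([(3, 7), (1, 9)], "priority")` stores 9, because processor 1 has the smallest index.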

Why study PRAM algorithms? • Well-developed body of literature on design and analysis of such algorithms • Baseline model of concurrency • Explicit model • Specify operations at each step • Scheduling of operations on processors • Robust design paradigm

Work-Time paradigm • Higher-level abstraction for PRAM algorithms • WT algorithm = (finite) sequence of time steps with arbitrary number of operations at each step • Two complexity measures • Step complexity T(n) • Work complexity W(n) • A WT algorithm is work-efficient if W(n) = Θ(T_S(n)), where T_S(n) is the running time of the optimal sequential algorithm

Designing PRAM algorithms • Balanced trees • Pointer jumping • Euler tours • Divide and conquer • Symmetry breaking • . . .

Balanced trees • Key idea: Build balanced binary tree on input data, sweep tree up and down • “Tree” not a data structure, often a control structure (e.g., recursion)

Alg : Sum • Given: Sequence a of n = 2^k elements • Given: Binary associative operator + • Compute: S = a_1 + ... + a_n

WT description of sum
integer B[1..n]
forall i in 1 : n do
    B[i] := a_i
enddo
for h = 1 to k do
    forall i in 1 : n/2^h do
        B[i] := B[2i-1] + B[2i]
    enddo
enddo
S := B[1]
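The WT program above can be simulated sequentially. The sketch below is one possible Python rendering; the name `wt_sum` and the `op` parameter are assumptions. Each `forall` is modelled by reading all operands before any result is written back, which is what the synchronous semantics require.

```python
def wt_sum(a, op=lambda x, y: x + y):
    """Balanced-tree reduction of a sequence whose length is a power of two."""
    n = len(a)
    k = n.bit_length() - 1
    if n == 0 or (1 << k) != n:
        raise ValueError("n must be a power of two")
    B = [None] + list(a)          # B[1..n], index 0 unused (1-based as in the slide)
    for h in range(1, k + 1):     # k = lg n sequential levels
        m = n >> h                # n / 2^h active positions at this level
        # forall i in 1 : m -- all reads happen before any write
        level = [op(B[2 * i - 1], B[2 * i]) for i in range(1, m + 1)]
        B[1:m + 1] = level
    return B[1]
```

With string concatenation as the operator, `wt_sum(["a", "b", "c", "d"])` returns `"abcd"`: the grouping differs from a left-to-right loop, but associativity gives the same result.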

Points to note about WT pgm • Global program: no references to processor id • Contains both serial and concurrent operations • Semantics of forall • Order of additions different from sequential order: associativity critical

Analysis of scan operation • Algorithm is correct • Θ(lg n) steps, Θ(n) work • EREW model • Two variants • Inclusive: as discussed • Exclusive: s_1 = I (the identity of +), s_k = x_1 + ... + x_(k-1) • If n is not a power of 2, pad to the next power

Complexity measures of Sum • Recall definitions of step complexity T(n) and work complexity W(n) • Concurrent execution reduces number of steps

How to do prefix sum? • Input: Sequence x of n = 2^k elements, binary associative operator + • Output: Sequence s of n = 2^k elements, with s_k = x_1 + ... + x_k • Example: x = [1, 4, 3, 5, 6, 7, 0, 1] s = [1, 5, 8, 13, 19, 26, 26, 27]

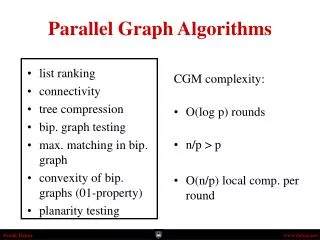
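One standard balanced-tree way to compute the inclusive prefix sums is sketched below in Python. It is not necessarily the exact scheme drawn in the figure, and the name `prefix_sums` is an assumption. Adjacent pairs are combined, the half-length problem is solved recursively, and the results are spread back out. Each level corresponds to a constant number of parallel steps, and the total work is n + n/2 + n/4 + ... = Θ(n).

```python
def prefix_sums(x, op=lambda a, b: a + b):
    """Inclusive scan of a sequence whose length is a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("n must be a power of two")
    if n == 1:
        return list(x)
    # Up-sweep: combine adjacent pairs (one parallel step).
    y = [op(x[2 * i], x[2 * i + 1]) for i in range(n // 2)]
    # Recurse on n/2 elements: z[j] = x[0] + ... + x[2j+1].
    z = prefix_sums(y, op)
    # Down-sweep: every position is filled independently (one parallel step).
    s = [None] * n
    for i in range(n):
        if i == 0:
            s[i] = x[0]
        elif i % 2 == 1:
            s[i] = z[i // 2]
        else:
            s[i] = op(z[i // 2 - 1], x[i])
    return s
```

On the slide's example, `prefix_sums([1, 4, 3, 5, 6, 7, 0, 1])` returns `[1, 5, 8, 13, 19, 26, 26, 27]`.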

List Ranking • List ranking problem • Given a singly linked list L with n objects, compute for each node its distance to the end of the list • If d denotes the distance: • node.d = 0 if node.next = nil • node.d = node.next.d + 1 otherwise • Serial algorithm: O(n) • Parallel algorithm • Assign one processor to each node • Assume there are as many processors as list objects • For each node i, perform • i.d = i.d + i.next.d • i.next = i.next.next // pointer jumping

List Ranking - Pointer Jumping • List_ranking(L) • for each node i, in parallel do • if i.next = nil then i.d = 0 • else i.d = 1 • while exists a node i, such that i.next != nil do • for each node i, in parallel do • if i.next != nil then • i.d = i.d + i.next.d // i updates i itself • i.next = i.next.next • Analysis • After one pointer-jumping step, the list is transformed into two (interleaved) lists • After the next step, four (interleaved) lists • Each pointer-jumping step doubles the number of lists and halves their length • After about log n steps, all lists contain only one node • Total time: O(log n)
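A sequential Python simulation of List_ranking is sketched below. The array representation (`nxt[i]` holds the index of node i's successor, `None` at the tail) and the name `list_rank` are assumptions made for the example. Fresh copies of `d` and `nxt` are built in every round so that all reads see the values from before the round, mirroring the synchronous PRAM step.

```python
def list_rank(nxt):
    """Distance of every node to the end of the list, by pointer jumping."""
    n = len(nxt)
    nxt = list(nxt)
    d = [0 if nxt[i] is None else 1 for i in range(n)]
    while any(p is not None for p in nxt):
        new_d, new_nxt = list(d), list(nxt)
        for i in range(n):                    # "for each node i, in parallel"
            if nxt[i] is not None:
                new_d[i] = d[i] + d[nxt[i]]   # i updates itself
                new_nxt[i] = nxt[nxt[i]]      # pointer jump
        d, nxt = new_d, new_nxt               # all writes take effect together
    return d
```

For the five-node list 0 → 1 → 2 → 3 → 4, `list_rank([1, 2, 3, 4, None])` returns `[4, 3, 2, 1, 0]` after three rounds.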

List Ranking - Discussion • Synchronization is important • In step 8 (i.next = i.next.next), all processors must read the right-hand side before any processor writes the left-hand side • The list ranking algorithm is EREW • If we assume that in step 7 (i.d = i.d + i.next.d) all processors first read i.d and then read i.next.d • If j.next = i, i and j do not read i.d concurrently • Work performed • O(n log n) work, since n processors run for O(log n) time • Work efficiency • A PRAM algorithm is work-efficient w.r.t. another algorithm if the work of the two algorithms is within a constant factor • Is the list ranking algorithm work-efficient w.r.t. the serial algorithm? • No, because O(n log n) versus O(n) • Speedup • S = n / log n

Parallel Prefix on a List • Prefix computation • Input <x_1, x_2, .., x_n>, a binary, associative operator ⊗ • Output <y_1, y_2, .., y_n> • Prefix computation: y_k = x_1 ⊗ x_2 ⊗ .. ⊗ x_k • Example • if x_k = 1 for k = 1..n and ⊗ = + • Then y_k = k, for k = 1..n • Serial algorithm: O(n) • Notation • [i, j] = x_i ⊗ x_(i+1) ⊗ .. ⊗ x_j • [k, k] = x_k • [i, k] ⊗ [k+1, j] = [i, j] • Idea: perform prefix computation on a linked list so that • each node k contains [k, k] = x_k initially • finally each node k contains [1, k] = y_k

Parallel Prefix on a List (2) • List_prefix(L, X) // L: list, X: <x_1, x_2, .., x_n> • for each node i, in parallel • i.y = x_i • while exists a node i such that i.next != nil do • for each node i, in parallel do • if i.next != nil then • i.next.y = i.y ⊗ i.next.y // i updates its successor • i.next = i.next.next • Analysis • Initially the k-th node has [k,k] as its y-value and points to the (k+1)-th node • At the first iteration, • the k-th node fetches [k+1,k+1] from its successor, • performs [k,k] ⊗ [k+1,k+1], resulting in [k,k+1], and • updates its successor • At the second iteration, • the k-th node fetches [k+1,k+2] from its successor, • performs [k-1,k] ⊗ [k+1,k+2], resulting in [k-1,k+2], and • updates its successor
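List_prefix can be simulated in the same way as list ranking. In this Python sketch the array layout (`nxt[i]` is the successor index, `None` at the tail) and the name `list_prefix` are assumptions. Because every node is the successor of at most one other node, the writes to `i.next.y` within a round never collide.

```python
def list_prefix(nxt, x, op):
    """Prefix computation on a linked list by pointer jumping.

    nxt[i]: index of node i's successor, or None at the tail.
    x[i]:   initial value stored at node i.
    op:     binary associative operator.
    """
    n = len(nxt)
    nxt = list(nxt)
    y = list(x)
    while any(p is not None for p in nxt):
        new_y, new_nxt = list(y), list(nxt)
        for i in range(n):                          # "for each node i, in parallel"
            if nxt[i] is not None:
                new_y[nxt[i]] = op(y[i], y[nxt[i]])  # i updates its successor
                new_nxt[i] = nxt[nxt[i]]
        y, nxt = new_y, new_nxt
    return y
```

With all inputs equal to 1 and addition as the operator, `list_prefix([1, 2, 3, 4, None], [1] * 5, lambda a, b: a + b)` returns `[1, 2, 3, 4, 5]`, matching the slide's example y_k = k.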

Parallel Prefix on a List (4) • Running time: O(log n) • After about log n iterations, all lists contain only one node • Work performed: O(n log n) • Speedup • S = n / log n

Pointer jumping • Fast parallel processing of linked data structures (lists, trees) • Convention: Draw trees with edges directed from children to parents • Example: Finding the roots of forest represented as parent array P • P[i] = j if and only if (i, j) is a forest edge • P[i] = i if and only if i is a root

Algorithm (Roots of forest)
forall i in 1:n do
    S[i] := P[i]
    while S[i] != S[S[i]] do
        S[i] := S[S[i]]
    endwhile
enddo
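A lock-step Python simulation of the roots-of-forest algorithm is sketched below. It assumes 0-based indices and a hypothetical function name `find_roots`. Unlike the per-processor `while` in the WT program, the sketch advances all nodes together, one pointer-jumping round at a time, until no node changes.

```python
def find_roots(P):
    """Return S with S[i] = root of the tree containing node i.

    P is the parent array of a forest; P[i] == i marks a root.
    """
    n = len(P)
    S = list(P)
    # Keep jumping while some node's pointer can still move closer to its root.
    while any(S[i] != S[S[i]] for i in range(n)):
        # One synchronous round: every node reads S[S[i]] from the old array.
        S = [S[S[i]] for i in range(n)]
    return S
```

For the forest `P = [0, 0, 1, 2, 4, 4]` (a chain 3 → 2 → 1 → 0 and an edge 5 → 4), the result is `[0, 0, 0, 0, 4, 4]` after two rounds.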

Analysis of pointer jumping • Termination detection? • At each step, tree distance between i and S[i] doubles unless S[i] is a root • CREW model • Correctness by induction on h (the maximum height of a tree in the forest) • O(lg h) steps, O(n lg h) work • T_S(n) = O(n) • Not work-efficient unless h is constant

Concurrent Read – Finding Roots • This is a CREW algorithm • Suppose exclusive read is used; what will the running time be? • Initially only one node i has the root information • First iteration: another node reads from node i • In total two nodes are filled • Second iteration: another two nodes can read from those two nodes • In total four nodes are filled • k-th iteration: another 2^(k-1) nodes are filled, 2^k in total • If there are n nodes, k = log n • So Find_root with exclusive read takes about log n iterations • O(log log n) (CREW, for a balanced tree of height O(log n)) vs. O(log n) (exclusive read)

Euler tours • Technique for fast optimal processing of tree data • Euler circuit of directed graph: directed cycle that traverses each edge exactly once • Represent (rooted) tree by Euler circuit of its directed version

Trees = balanced parentheses • ((()())()(()()())) • Key property: The parenthesis subsequence corresponding to a subtree is balanced.

Computing the Depth • Problem definition • Given a binary tree with n nodes, compute the depth of each node • Serial algorithm takes O(n) time • A simple parallel algorithm • Starting from root, compute the depths level by level • Still O(n) because the height of the tree could be as high as n • Euler tour algorithm • Uses parallel prefix computation

Computing the Depth (2) • Euler tour: a cycle that traverses each edge of a graph exactly once • Use the directed version of the tree: replace each undirected edge by two directed edges • Any directed version of a tree has an Euler tour, obtained by traversing the tree in DFS order; the tour forms a linked list • Employ 3n processors • Each node i has fields i.parent, i.left, i.right • Each node i has three processors, i.A, i.B, and i.C • The three processors of each node are linked as follows (the right-hand side is the processor that comes next in the tour) • i.A = i.left.A if i.left != nil; i.B if i.left = nil • i.B = i.right.A if i.right != nil; i.C if i.right = nil • i.C = i.parent.B if i is the left child; i.parent.C if i is the right child; nil if i.parent = nil

Computing the Depth (3) • Algorithm • Construct the Euler tour for the tree: O(1) time • Assign 1 to all A processors, 0 to all B processors, -1 to all C processors • Perform a parallel prefix computation along the tour • The depth of each node resides in its C processor • Running time: O(log n) • More precisely log 3n, since the tour has 3n elements • EREW because there is no concurrent read or write • Speedup • S = n/log n
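The construction can be checked with a small Python sketch. The array-based tree representation (`left`, `right`, `parent` holding node indices or `None`) and the function name are assumptions, and a sequential walk along the tour stands in for the parallel prefix step, so the sketch illustrates the +1 / 0 / -1 bookkeeping rather than the O(log n) running time.

```python
def depths_by_euler_tour(left, right, parent):
    """Depth of every node of a binary tree via the A/B/C Euler tour."""
    n = len(parent)

    def A(i): return 3 * i          # element visited when entering node i
    def B(i): return 3 * i + 1      # element visited between the two subtrees
    def C(i): return 3 * i + 2      # element visited when leaving node i

    succ = [None] * (3 * n)
    val = [0] * (3 * n)
    for i in range(n):              # O(1) per node, independent across nodes
        succ[A(i)] = A(left[i]) if left[i] is not None else B(i)
        succ[B(i)] = A(right[i]) if right[i] is not None else C(i)
        if parent[i] is None:
            succ[C(i)] = None
        elif left[parent[i]] == i:
            succ[C(i)] = B(parent[i])
        else:
            succ[C(i)] = C(parent[i])
        val[A(i)], val[B(i)], val[C(i)] = 1, 0, -1

    # Prefix sums along the tour (sequential stand-in for parallel prefix).
    depth = [None] * n
    total = 0
    e = A(parent.index(None))       # the tour starts at the root's A element
    while e is not None:
        total += val[e]
        if e % 3 == 2:              # a C element holds its node's depth
            depth[e // 3] = total
        e = succ[e]
    return depth
```

For a root 0 with children 1 (left) and 2 (right), where node 1 has a left child 3, `depths_by_euler_tour([1, 3, None, None], [2, None, None, None], [None, 0, 0, 1])` returns `[0, 1, 1, 2]`.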

Broadcasting on a PRAM [Figure: a broadcaster B, shared memory M, and eight processors P] • “Broadcast” can be done on CREW PRAM in O(1) steps: • Broadcaster sends value to shared memory • Processors read from shared memory • Requires lg(P) steps on EREW PRAM.
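The lg(P) bound on an EREW PRAM comes from doubling: in each step every processor that already holds the value copies it to one processor that does not, so no cell is read or written twice within a step. A minimal Python sketch, with an assumed function name and an assumed per-processor `cell` array:

```python
def erew_broadcast(value, p):
    """Spread `value` to p cells by recursive doubling; return (cells, steps)."""
    cell = [None] * p
    cell[0] = value                 # the broadcaster's own cell
    have = 1                        # cells 0 .. have-1 already hold the value
    steps = 0
    while have < p:
        # One parallel step: cell i is read only by processor i + have,
        # and cell i + have is written only by that processor.
        for i in range(min(have, p - have)):
            cell[i + have] = cell[i]
        have = min(2 * have, p)
        steps += 1
    return cell, steps
```

With eight processors, `erew_broadcast(7, 8)` fills all eight cells in 3 steps.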

Concurrent Write – Finding Max • Finding max problem • Given an array of n elements, find the maximum(s) • Sequential algorithm is O(n) • Data structure for parallel algorithm • Array A[1..n] • Array m[1..n]. m[i] is true if A[i] is the maximum • Use n^2 processors • Fast_max(A, n) • for i = 1 to n do, in parallel • m[i] = true // A[i] is potentially maximum • for i = 1 to n, j = 1 to n do, in parallel • if A[i] < A[j] then • m[i] = false • for i = 1 to n do, in parallel • if m[i] = true then max = A[i] • return max • Time complexity: O(1)
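Fast_max can be traced with a sequential Python sketch; the name `fast_max` is an assumption. The nested loops stand in for the n^2 processors, so the sketch takes O(n^2) time rather than O(1). All writes to a given `m[i]` store the same value `False`, which is what makes the algorithm legal on a common CRCW PRAM.

```python
def fast_max(A):
    """Maximum of A, following the structure of the CRCW Fast_max."""
    n = len(A)
    m = [True] * n                      # A[i] is potentially the maximum
    for i in range(n):                  # one (i, j) pair per processor
        for j in range(n):
            if A[i] < A[j]:
                m[i] = False            # concurrent writers all agree on False
    result = None
    for i in range(n):                  # every surviving i writes the same value
        if m[i]:
            result = A[i]
    return result
```

For example, `fast_max([3, 9, 2, 9, 5])` returns 9; both positions holding 9 keep `m[i]` true and write the same result.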

Concurrent Write – Finding Max • Concurrent write • In steps 4 and 5, processors with A[i] < A[j] write the same value ‘false’ into the same location m[i] • This actually implements m[i] = (A[i] ≥ A[1]) ∧ … ∧ (A[i] ≥ A[n]) • Is this work efficient? • No: n^2 processors for O(1) time • O(n^2) work vs. O(n) for the sequential algorithm • What is the time complexity with exclusive write? • Initially all elements “think” that they might be the maximum • First iteration: compare n/2 pairs • n/2 elements might be the maximum • Second iteration: n/4 elements might be the maximum • (log n)-th iteration: one element is the maximum • So finding the max with exclusive write takes O(log n) • O(1) (CRCW) vs. O(log n) (EREW)

Simulating CRCW with EREW • CRCW algorithms are faster than EREW algorithms • How much faster? • Theorem • A p-processor CRCW algorithm can be no more than O(log p) times faster than the best p-processor EREW algorithm for the same problem • Proof by simulating CRCW steps with EREW steps • Assumption: parallel sorting of n items takes O(log n) time with n processors • When CRCW processor p_i writes a datum x_i into a location l_i, EREW processor p_i writes the pair (l_i, x_i) into a separate location A[i] • Note this EREW write is exclusive, while the CRCW write may be concurrent • Sort A by l_i • O(log p) time by assumption • Compare adjacent elements in A • For each group of entries with the same location, only one processor, say the first, writes x_i into the global memory location l_i • Note this is also exclusive • Total time per simulated CRCW step: O(log p)
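The write phase of the simulation can be sketched in Python as follows. The function name, the request format (`requests[i]` is a `(location, value)` pair or `None` for an idle processor), and the use of Python's built-in sort in place of the assumed O(log p) parallel sort are all assumptions of the example.

```python
def simulate_concurrent_write(memory, requests):
    """Apply one CRCW write step using only exclusive writes.

    memory:   list standing in for shared memory (modified in place).
    requests: requests[i] = (location, value) from processor i, or None.
    """
    # Each processor i writes its own entry of A: an exclusive write.
    A = [(req[0], i, req[1]) for i, req in enumerate(requests) if req is not None]
    # Sort by location (ties broken by processor id).
    A.sort()
    # Each entry is compared with its left neighbour; only the first entry
    # of a run with equal locations writes, so every cell has one writer.
    for k, (loc, _proc, val) in enumerate(A):
        if k == 0 or A[k - 1][0] != loc:
            memory[loc] = val
    return memory
```

With `memory = [0, 0, 0, 0]` and `requests = [(2, 10), (1, 20), (2, 30), None, (1, 40)]`, the call leaves `memory == [0, 20, 10, 0]`: for each contested location the lowest-numbered writer is the one that survives the comparison.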