Kernels for Non-linear Algorithms in Data Analysis

Explore the fundamentals of kernels in data analysis, comparing various statistical methods, and the importance of kernel selection to enhance algorithm performance. Learn about feature spaces, the kernel trick, modularity in kernel methods, proper kernel definition, and stability of kernel algorithms. Discover the significance of reproducing Kernel Hilbert spaces and the utility of learning kernels for improved generalization. Gain insights into Rademacher complexity, generalization bounds, and margin bounds in classification problems.

Presentation Transcript

Introduction to Kernels (chapters 1,2,3,4) Max Welling October 1 2004

Introduction
• What is the goal of (pick your favorite name):
  - Machine Learning
  - Data Mining
  - Pattern Recognition
  - Data Analysis
  - Statistics
• Automatic detection of non-coincidental structure in data.
• Desiderata:
  - Robust algorithms: insensitive to outliers and wrong model assumptions.
  - Stable algorithms: generalize well to unseen data.
  - Computationally efficient algorithms: large datasets.

Let's Learn Something
What is the common characteristic (structure) among the following statistical methods?
1. Principal components analysis
2. Ridge regression
3. Fisher discriminant analysis
4. Canonical correlation analysis
Answer: They all consider linear combinations of the input vector: $f(x) = w^T x$. Linear algorithms are very well understood and enjoy strong guarantees (convexity, generalization bounds). Can we carry these guarantees over to non-linear algorithms?

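Feature Spaces
A non-linear mapping $\phi: x \mapsto \phi(x)$ takes the inputs to a feature space F, which may be:
1. a high-D space
2. an infinite-D countable space
3. a function space (Hilbert space)
Example: [equation not preserved in the transcript]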

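Ridge Regression (duality)
Problem: $\min_w \sum_{i=1}^{\ell} (y_i - w^T x_i)^2 + \lambda \|w\|^2$, with targets $y_i$, inputs $x_i$, and regularization term $\lambda \|w\|^2$.
Solution: $w = (X^T X + \lambda I_d)^{-1} X^T y$ (d×d inverse) $= X^T (X X^T + \lambda I_\ell)^{-1} y$ (ℓ×ℓ inverse) $= X^T (G + \lambda I_\ell)^{-1} y$ (Gram matrix $G = X X^T$, $G_{ij} = \langle x_i, x_j \rangle$) $= \sum_{i=1}^{\ell} \alpha_i x_i$ (linear combination of the data).
Dual representation: $\alpha = (G + \lambda I_\ell)^{-1} y$.
Here X is the ℓ×d matrix whose rows are the inputs $x_i^T$, and y is the vector of targets.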
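The equivalence of the primal and dual ridge solutions can be checked numerically. The following is a minimal sketch in dependency-free Python, assuming the standard ridge objective with regularization weight `lam`; the toy data and the helper names (`solve`, `ridge_primal`, `ridge_dual`) are illustrative and not taken from the slides.

```python
# Primal vs. dual ridge regression on a toy dataset (pure Python, no dependencies).

def transpose(A):
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    Bt = transpose(B)
    return [[sum(a * b for a, b in zip(row, col)) for col in Bt] for row in A]

def matvec(A, v):
    return [sum(a * b for a, b in zip(row, v)) for row in A]

def add_ridge(A, lam):
    # Returns A + lam * I for a square matrix A.
    n = len(A)
    return [[A[i][j] + (lam if i == j else 0.0) for j in range(n)] for i in range(n)]

def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [list(map(float, A[i])) + [float(b[i])] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):
        z[i] = (M[i][n] - sum(M[i][j] * z[j] for j in range(i + 1, n))) / M[i][i]
    return z

def ridge_primal(X, y, lam):
    # w = (X^T X + lam I_d)^{-1} X^T y : a d x d system.
    Xt = transpose(X)
    return solve(add_ridge(matmul(Xt, X), lam), matvec(Xt, y))

def ridge_dual(X, y, lam):
    # alpha = (G + lam I_l)^{-1} y with Gram matrix G = X X^T : an l x l system.
    G = matmul(X, transpose(X))
    alpha = solve(add_ridge(G, lam), y)
    w = matvec(transpose(X), alpha)  # w = sum_i alpha_i x_i
    return w, alpha

# Toy data: 4 inputs in 2 dimensions (rows of X), with targets y.
X = [[1.0, 2.0], [2.0, 0.5], [0.0, 1.0], [3.0, 1.5]]
y = [1.0, 2.0, 0.5, 3.0]
lam = 0.1

w_primal = ridge_primal(X, y, lam)
w_dual, alpha = ridge_dual(X, y, lam)
print("primal w:", w_primal)
print("dual   w:", w_dual)
```

The dual route touches the inputs only through the Gram matrix, which is what makes a kernel substitution possible. In practice a linear algebra library would replace the hand-written solver.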

Kernel Trick
Note: In the dual representation we used the Gram matrix to express the solution.
Kernel trick: Replace $G_{ij} = \langle x_i, x_j \rangle$ by $G_{ij} = \langle \phi(x_i), \phi(x_j) \rangle = k(x_i, x_j)$, where k is the kernel.
If we use algorithms that only depend on the Gram matrix G, then we never have to know (or compute) the actual features $\phi(x)$. This is the crucial point of kernel methods.
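A small numerical illustration of the kernel trick, as a sketch: for 2-D inputs, the degree-2 polynomial kernel $k(x,y) = \langle x, y \rangle^2$ equals the inner product of the explicit features $(x_1^2, x_2^2, \sqrt{2} x_1 x_2)$. The choice of this kernel and the names `phi` and `poly2_kernel` are assumptions made for illustration, not taken from the slides.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def phi(x):
    # Explicit feature map for a 2-D input (illustrative choice).
    x1, x2 = x
    return (x1 * x1, x2 * x2, math.sqrt(2.0) * x1 * x2)

def poly2_kernel(x, y):
    # Same inner product, computed without ever forming phi(x).
    return dot(x, y) ** 2

x, y = (1.0, 2.0), (3.0, -1.0)
explicit = dot(phi(x), phi(y))   # inner product in feature space
implicit = poly2_kernel(x, y)    # kernel evaluation in input space
print(explicit, implicit)
```

Both routes give the same number, but the kernel evaluation works in the input space only, which matters when the feature space is very high-dimensional or infinite-dimensional.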

Modularity
Kernel methods consist of two modules:
1) The choice of kernel (this is non-trivial)
2) The algorithm which takes kernels as input
Modularity: any kernel can be used with any kernel algorithm.
Some kernel algorithms:
- support vector machine
- Fisher discriminant analysis
- kernel regression
- kernel PCA
- kernel CCA
Some kernels:

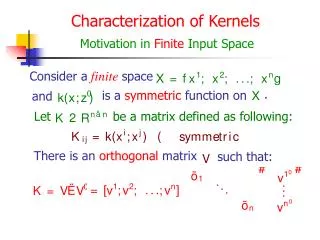

What is a proper kernel?
Definition: A finitely positive semi-definite function $k(x,y)$ is a symmetric function of its arguments for which the matrices formed by restriction to any finite subset of points are positive semi-definite.
Theorem: A function $k(x,y)$ can be written as $k(x,y) = \langle \phi(x), \phi(y) \rangle$, where $\phi(x)$ is a feature map, iff $k(x,y)$ satisfies the semi-definiteness property.
Relevance: We can now check whether $k(x,y)$ is a proper kernel using only properties of $k(x,y)$ itself, i.e. without the need to know the feature map!
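The definition suggests a simple numerical spot check, sketched here: build the Gram matrix on a finite set of points, verify symmetry, and probe the quadratic form $v^T G v$ with random vectors. Such a probe can refute positive semi-definiteness on that point set but cannot prove it. The function names, the test points and the two candidate functions are assumptions made for illustration.

```python
import math
import random

def gram(kernel, points):
    return [[kernel(a, b) for b in points] for a in points]

def quadratic_form(G, v):
    n = len(v)
    return sum(v[i] * G[i][j] * v[j] for i in range(n) for j in range(n))

def looks_psd(kernel, points, trials=200, tol=1e-9, seed=0):
    """Spot check of the finitely PSD property on one point set.

    Returns False as soon as the Gram matrix is asymmetric or some random
    vector v gives v^T G v < 0; True means no violation was found.
    """
    rng = random.Random(seed)
    G = gram(kernel, points)
    n = len(points)
    if any(abs(G[i][j] - G[j][i]) > tol for i in range(n) for j in range(n)):
        return False
    for _ in range(trials):
        v = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if quadratic_form(G, v) < -tol:
            return False
    return True

def rbf(a, b):
    # Gaussian (RBF) function on scalars: a proper kernel.
    return math.exp(-(a - b) ** 2)

def distance(a, b):
    # Symmetric, but not a kernel: its Gram matrix has a zero diagonal.
    return abs(a - b)

points = [0.0, 0.5, 1.3, 2.0]
print("rbf passes:     ", looks_psd(rbf, points))
print("distance passes:", looks_psd(distance, points))
```

For the distance function a violation can also be seen by hand: with $v = (1, -1, 0, 0)$ the quadratic form equals $-2\,|x_1 - x_2| < 0$.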

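Reproducing Kernel Hilbert Spaces
The proof of the above theorem proceeds by constructing a very special feature map (note that other feature maps may give rise to the same kernel): $\phi: x \mapsto k(x, \cdot)$, i.e. we map to a function space.
Definition of the function space: $f(\cdot) = \sum_{i=1}^{m} \alpha_i k(x_i, \cdot)$ for any m and points $x_i$, with inner product $\langle f, g \rangle = \sum_{i=1}^{m} \sum_{j=1}^{m'} \alpha_i \beta_j k(x_i, x'_j)$ for $g(\cdot) = \sum_{j=1}^{m'} \beta_j k(x'_j, \cdot)$.
Reproducing property: $\langle f, \phi(x) \rangle = \langle \sum_i \alpha_i k(x_i, \cdot), k(x, \cdot) \rangle = \sum_i \alpha_i k(x_i, x) = f(x)$, and in particular $\langle \phi(x), \phi(y) \rangle = k(x, y)$.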

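Mercer’s Theorem
Theorem: Let X be compact and k(x,y) a symmetric continuous function such that $T_k f = \int_X k(\cdot, x) f(x)\,dx$ is a positive semi-definite operator, i.e. $\int_X \int_X k(x,y) f(x) f(y)\,dx\,dy \ge 0$ for all $f \in L_2(X)$. Then there exists an orthonormal feature basis of eigen-functions $\{\phi_i\}$ with eigenvalues $\lambda_i \ge 0$ such that $k(x,y) = \sum_{i=1}^{\infty} \lambda_i \phi_i(x) \phi_i(y)$.
Hence: k(x,y) is a proper kernel.
Note: Here we construct feature vectors in L2, whereas the RKHS construction was in a function space.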

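Learning Kernels
• All information is tunneled through the Gram matrix: an information bottleneck.
• The real art is to pick an appropriate kernel.
• E.g. take the RBF kernel with width c, e.g. $k(x,y) = \exp(-\|x-y\|^2 / c)$:
  - if c is very small: G = I (all data are dissimilar): over-fitting
  - if c is very large: G = $\mathbf{1}\mathbf{1}^T$, the all-ones matrix (all data are very similar): under-fitting
We need to learn the kernel. Here are some ways to combine kernels to improve them:
- $\alpha k_1 + \beta k_2$ with $\alpha, \beta \ge 0$ (kernels form a cone)
- $k_1 \cdot k_2$ (products of kernels)
- $p(k_1)$ for any polynomial p with positive coefficients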
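The two limiting cases of the RBF width can be reproduced on a toy point set. This is a sketch that assumes the kernel has the form $k(x,y) = \exp(-\|x-y\|^2 / c)$; the slide's exact parametrization of c is not preserved, and the points and the name `rbf_gram` are illustrative.

```python
import math

def rbf_gram(points, c):
    # Assumed form of the RBF kernel: k(x, y) = exp(-||x - y||^2 / c).
    def k(a, b):
        sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
        return math.exp(-sq_dist / c)
    return [[k(a, b) for b in points] for a in points]

points = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0)]

G_small = rbf_gram(points, c=0.01)  # very narrow kernel: G is close to I
G_large = rbf_gram(points, c=1e4)   # very wide kernel: G is close to all ones

for name, G in (("small c", G_small), ("large c", G_large)):
    print(name)
    for row in G:
        print("  ", ["%.4f" % value for value in row])
```

With a near-identity Gram matrix every point looks unrelated to every other point, so the algorithm can only memorize the training set. With a near-constant Gram matrix all points look alike, so nothing can be distinguished.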

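Stability of Kernel Algorithms
Our objective for learning is to improve generalization performance: cross-validation, Bayesian methods, generalization bounds, ...
Call $\hat{E}_S[f(x)] = 0$ a pattern in a sample S. Is this pattern also likely to be present in new data: $E_P[f(x)] \approx 0$?
We can use concentration inequalities (McDiarmid’s theorem) to prove that:
Theorem: Let $S = \{x_1, \dots, x_\ell\}$ be an IID sample from P and define the sample mean of f(x) as $\bar{f} = \frac{1}{\ell} \sum_{i=1}^{\ell} f(x_i)$. Then it follows that $P\left( \|\bar{f} - E_P[f]\| \le \frac{R}{\sqrt{\ell}} \left( 2 + \sqrt{2 \ln \frac{1}{\delta}} \right) \right) \ge 1 - \delta$, where $R = \sup_x \|f(x)\|$.
That is, the probability that the sample mean and the population mean differ by less than $\epsilon = \frac{R}{\sqrt{\ell}} ( 2 + \sqrt{2 \ln (1/\delta)} )$ is more than $1 - \delta$, independent of P!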

Rademacher Complexity
Problem: we only checked the generalization performance for a single fixed pattern f(x). What if we want to search over a function class F?
Intuition: we need to incorporate the complexity of this function class. Rademacher complexity captures the ability of the function class to fit random noise:
$\hat{R}_\ell(F) = E_\sigma \left[ \sup_{f \in F} \left| \frac{2}{\ell} \sum_{i=1}^{\ell} \sigma_i f(x_i) \right| \right]$ (empirical RC), with $\sigma_i$ uniformly distributed on $\{-1, +1\}$.
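For a small finite function class the empirical Rademacher complexity can be computed exactly by enumerating all sign vectors. The sketch below assumes the definition $\hat{R}_\ell(F) = E_\sigma[\sup_{f \in F} |\frac{2}{\ell} \sum_i \sigma_i f(x_i)|]$ and represents each function by its values on the sample; the two toy classes and the name `empirical_rc` are illustrative.

```python
from itertools import product

def empirical_rc(function_values):
    """Exact empirical Rademacher complexity of a finite function class.

    function_values[k][i] holds f_k(x_i), i.e. each function is represented
    by its values on the sample. All 2^l sign vectors are enumerated, so
    this is only feasible for small samples.
    """
    ell = len(function_values[0])
    total = 0.0
    for sigma in product((-1, 1), repeat=ell):
        total += max(
            abs(2.0 / ell * sum(s * v for s, v in zip(sigma, values)))
            for values in function_values
        )
    return total / 2 ** ell

ell = 6
# A class with one constant function: it cannot adapt to the noise.
single = [[1.0] * ell]
# A class realizing every +/-1 labeling of the sample: it fits any noise.
all_labelings = [list(map(float, labels)) for labels in product((-1, 1), repeat=ell)]

rc_single = empirical_rc(single)
rc_rich = empirical_rc(all_labelings)
print("single function:", rc_single)
print("all labelings:  ", rc_rich)
```

The richer class reaches the maximum value 2 under this normalization, because for every noise vector it contains a function that matches the noise exactly.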

Generalization Bound
Theorem: Let F be a class of functions that map to [0,1] (e.g. loss functions). Then, with probability at least $1 - \delta$ over random draws of samples of size $\ell$, every f in F satisfies:
$E_P[f(x)] \le \hat{E}_S[f(x)] + \hat{R}_\ell(F) + 3 \sqrt{\frac{\ln(2/\delta)}{2\ell}}$
Relevance: A pattern found in the sample, $\hat{E}_S[f] = 0$, will also be present in a new data set if the last 2 terms are small:
- the complexity of the function class F is small
- the number of training data is large

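Linear Functions (in feature space)
Consider the function class $F_B = \{ f: x \mapsto \langle w, \phi(x) \rangle, \ \|w\| \le B \}$ and a sample $S = \{x_1, \dots, x_\ell\}$.
Then the empirical RC of $F_B$ is bounded by: $\hat{R}_\ell(F_B) \le \frac{2B}{\ell} \sqrt{\mathrm{tr}(K)}$, where $K_{ij} = k(x_i, x_j)$.
Relevance: Since $\{ x \mapsto \sum_{i=1}^{\ell} \alpha_i k(x_i, x), \ \alpha^T K \alpha \le B^2 \} \subseteq F_B$, it follows that if we control the norm $\|w\|^2 = \alpha^T K \alpha$ in kernel algorithms, we control the complexity of the function class (regularization).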
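The trace bound can be compared with the exact empirical Rademacher complexity on a toy sample. This sketch assumes the linear kernel (so $\phi(x) = x$) and uses the fact that the supremum over $\|w\| \le B$ of $|\frac{2}{\ell} \sum_i \sigma_i \langle w, x_i \rangle|$ equals $\frac{2B}{\ell} \|\sum_i \sigma_i x_i\|$ by Cauchy-Schwarz. The data and function names are illustrative.

```python
import math
from itertools import product

def exact_rc_linear(X, B):
    """Exact empirical RC of {x -> <w, x> : ||w|| <= B} for a small sample.

    For fixed signs the supremum over w is attained at w parallel to
    sum_i sigma_i x_i, giving (2B / l) * ||sum_i sigma_i x_i||.
    """
    ell, dim = len(X), len(X[0])
    total = 0.0
    for sigma in product((-1, 1), repeat=ell):
        s = [sum(si * xi[d] for si, xi in zip(sigma, X)) for d in range(dim)]
        total += 2.0 * B / ell * math.sqrt(sum(c * c for c in s))
    return total / 2 ** ell

def trace_bound(X, B):
    # (2B / l) * sqrt(tr(K)), with K the linear-kernel Gram matrix,
    # whose trace is sum_i <x_i, x_i>.
    trace_K = sum(sum(c * c for c in x) for x in X)
    return 2.0 * B / len(X) * math.sqrt(trace_K)

X = [(1.0, 0.0), (0.0, 2.0), (1.0, 1.0), (-1.0, 2.0), (2.0, 1.0)]
B = 1.0

exact = exact_rc_linear(X, B)
bound = trace_bound(X, B)
print("exact empirical RC:", exact)
print("trace bound:       ", bound)
```

The gap between the two numbers comes from Jensen's inequality: the expected norm of $\sum_i \sigma_i x_i$ is at most the square root of its expected squared norm, which equals $\mathrm{tr}(K)$.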

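Margin Bound (classification)
Theorem: Choose c > 0 (the margin).
F: $f(x,y) = -y\,g(x)$, $y \in \{+1, -1\}$, with g a linear function in feature space of norm at most 1.
S: $\{(x_1, y_1), \dots, (x_\ell, y_\ell)\}$, an IID sample.
$\delta \in (0,1)$: probability of violating the bound.
Then, with probability at least $1 - \delta$:
$P(y \ne \mathrm{sign}(g(x))) \le \frac{1}{\ell c} \sum_{i=1}^{\ell} \xi_i + \frac{4}{\ell c} \sqrt{\mathrm{tr}(K)} + 3 \sqrt{\frac{\ln(2/\delta)}{2\ell}}$, with slack variables $\xi_i = (c - y_i g(x_i))_+$. The left-hand side is the probability of misclassification.
Relevance: We bound our classification error on new samples. Moreover, we have a strategy to improve generalization: choose the margin c as large as possible such that all samples are correctly classified, $\xi_i = 0$ (e.g. support vector machines).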