Mining the Web Traces: Workload Characterization, Performance Diagnosis, and Applications


Mining the Web Traces: Workload Characterization, Performance Diagnosis, and Applications. Lili Qiu Microsoft Research Performance’2002, Rome, Italy September 2002. Motivation. Why do we care about Web traces? Content providers How do users get to the Web site?


Mining the Web Traces: Workload Characterization, Performance Diagnosis, and Applications. Lili Qiu, Microsoft Research. Performance’2002, Rome, Italy, September 2002

Motivation • Why do we care about Web traces? • Content providers • How do users get to the Web site? • Why do users leave the Web site? Is poor performance the cause? • What content are users interested in? • How do users’ interests vary in time? • How do users’ interests vary across different places? • Where are the performance bottlenecks?

Motivation (Cont.) • Web hosting companies • Accounting & billing • Server selection • Provisioning server farms: where to place servers • ISPs • How to save bandwidth by using proxy caches? • Traffic engineering & provisioning • Researchers • Where are the performance bottlenecks? • How to improve Web performance? • Examples: Traffic measurements have influenced the design of HTTP (e.g., persistent connections and pipelining) and TCP (e.g., initial congestion window)

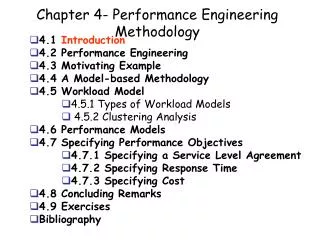

Tutorial Outline • Background • Web workload characterization • Performance diagnosis • Applications of traces • Bibliography

Part I: Background • Web components • Web transaction • Web protocols • Types of Web traces

Web Components • Web clients • An application that establishes connections to send Web requests • E.g., Mosaic, Netscape Navigator, IE • Web servers • An application that accepts connections to service requests by sending back responses • E.g., Apache, IIS • Web proxies (optional) • Web replicas (optional) • [Figure: Web clients reach Web servers across the Internet, optionally through proxies and replicas]

Internet Protocol Stack Application layer (HTTP, Telnet, FTP, DNS) Transport layer (TCP,UDP) Network layer (IP) Datalink layer (Ethernet, ATM) Physical layer (coaxial cable, optical fiber)

Web Protocols • [Figure from [KR01]: HTTP messages are carried in TCP segments, which are carried in IP packets over Ethernet and SONET links between the end hosts]

Web Protocols (Cont.) • DNS [AL01] • An application layer protocol responsible for translating hostnames to IP addresses and vice versa (e.g., perf2002.uniroma2.it ↔ 160.80.2.140) • TCP [JK88] • A transport layer protocol that does error control and flow control • Hypertext Transfer Protocol (HTTP) • HTTP 1.0 [BLFF96] • The most widely used HTTP version • A “stop and wait” protocol • HTTP 1.1 [GMF+99] • Adds persistent connections, pipelining, caching, compression

Example of a Web Transaction • 1. DNS query (browser to DNS server) • 2. Set up TCP connection (browser to Web server) • 3. HTTP request (browser to Web server) • 4. HTTP response (Web server to browser)

HTTP 1.0 • HTTP request
  Request = Simple-Request | Full-Request
  Simple-Request = "GET" SP Request-URI CRLF
  Full-Request = Request-Line *( General/Request/Entity Header ) CRLF [ Entity-Body ]
  Request-Line = Method SP Request-URI SP HTTP-Version CRLF
  Method = "GET" | "HEAD" | "POST" | extension-method
• Example: GET /info.html HTTP/1.0

HTTP 1.0 (Cont.) • HTTP response
  Response = Simple-Response | Full-Response
  Simple-Response = [ Entity-Body ]
  Full-Response = Status-Line *( General/Response/Entity Header ) CRLF [ Entity-Body ]
• Example:
  HTTP/1.0 200 OK
  Date: Mon, 09 Sep 2002 06:07:53 GMT
  Server: Apache/1.3.20 (Unix) (Red-Hat/Linux) PHP/4.0.6
  Last-Modified: Mon, 29 Jul 2002 10:58:59 GMT
  Content-Length: 21748
  Content-Type: text/html
  …
  <21748 bytes of the current version of info.html>
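The request and response grammar above can be exercised with a small parser. This is a minimal sketch, not a complete HTTP implementation: the function name `parse_http_response` is hypothetical, the response is a shortened stand-in for the slide's example with a placeholder body, and nothing is sent over the network.

```python
def parse_http_response(raw: bytes):
    """Split a Full-Response into (version, status, reason, headers, body)."""
    # The blank line (CRLF CRLF) separates the header section from the body.
    head, _, body = raw.partition(b"\r\n\r\n")
    lines = head.decode("iso-8859-1").split("\r\n")
    # Status-Line = HTTP-Version SP Status-Code SP Reason-Phrase
    version, status, reason = lines[0].split(" ", 2)
    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return version, int(status), reason, headers, body


# The slide's example request: a Full-Request with no header lines.
request = b"GET /info.html HTTP/1.0\r\n\r\n"

# A shortened stand-in for the slide's response (placeholder body).
body = b"<html>hello</html>"
response = b"".join([
    b"HTTP/1.0 200 OK\r\n",
    b"Content-Type: text/html\r\n",
    b"Content-Length: " + str(len(body)).encode("ascii") + b"\r\n",
    b"\r\n",
    body,
])

version, status, reason, headers, payload = parse_http_response(response)
print(version, status, reason, headers["content-type"], len(payload))
```

Header names are lowercased because HTTP header names are case-insensitive; a trace-processing script would typically do the same before aggregating.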

HTTP 1.1 • Connection management • Persistent connections [Mogul95] • Use one TCP connection for multiple HTTP requests • Pros: • Reduce the overhead of connection setup and teardown • Avoid TCP slow start • Cons: • head-of-line blocking • increase servers’ state • Pipeline [Pad95] • Send multiple requests without waiting for a response between requests • Pros: avoid the round-trip delay of waiting for each response • Cons: connection abortion is harder to deal with

HTTP 1.1 (Cont.) • Caching • Continues to support the notion of expiration used in HTTP 1.0 • Adds a Cache-Control header to handle the issues of cacheability and semantic transparency [KR01] • E.g., no-cache, only-if-cached, no-store, max-age, max-stale, min-fresh, … • Others • Range request • Content negotiation • Security • …
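To make the Cache-Control directives concrete, here is a minimal sketch of how a trace-analysis script might parse the header value. The function names are hypothetical, and the freshness check is deliberately simplified (it looks only at no-store, no-cache, and max-age), so it is an illustration rather than a full HTTP 1.1 caching model.

```python
def parse_cache_control(value: str) -> dict:
    """Parse a Cache-Control value into {directive: True | int | str}.

    Simplification: splits on commas, so quoted arguments that themselves
    contain commas are not handled.
    """
    directives = {}
    for part in value.split(","):
        name, sep, arg = part.strip().partition("=")
        name = name.strip().lower()
        if not name:
            continue
        arg = arg.strip().strip('"')
        if not sep:
            directives[name] = True          # e.g. no-cache, no-store
        elif arg.isdigit():
            directives[name] = int(arg)      # e.g. max-age=3600
        else:
            directives[name] = arg
    return directives


def usable_without_revalidation(directives: dict, age_seconds: int) -> bool:
    """Simplified check: may a cache reuse the entry without contacting the server?"""
    if "no-store" in directives or "no-cache" in directives:
        return False
    max_age = directives.get("max-age")
    return type(max_age) is int and age_seconds < max_age


d = parse_cache_control("public, max-age=3600")
print(d, usable_without_revalidation(d, 120))
```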

Types of Web Traces • Application level traces • Flow level traces • Packet level traces • Collection method: monitor a network link • Available tools: tcpdump, libpcap • Concerns: packet dropping, timestamp accuracy

Tutorial Outline • Background • Web workload characterization • Performance diagnosis • Applications of traces • Bibliography

Part II: Web Workload Characterization • Overview • Content dynamics • Access dynamics • Common pitfalls • Case studies

Overview • Process of trace analyses • Common analysis techniques • Common analysis tools • Challenges in workload characterization

Process of trace analyses • Collect traces • Define key metrics to characterize • Process traces • Draw inferences from the data • Apply the traces or insights gained from the trace analyses to design better protocols & systems

Common Analysis Techniques - Statistics • Mean • Median • Variance and standard deviation • Geometric mean • Confidence interval • A range of values that has a specified probability of containing the parameter being estimated • Example: 95% confidence interval 10 ≤ x ≤ 20
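The summary statistics listed above can be computed with Python's standard library. This is a sketch under stated assumptions: the function name `summarize` is hypothetical, and the 95% confidence interval uses the normal approximation (z = 1.96), which is reasonable for large samples but not for very small ones.

```python
import math
import statistics


def summarize(samples, z=1.96):
    """Return basic summary statistics for a list of positive numbers."""
    n = len(samples)
    mean = statistics.mean(samples)
    stdev = statistics.stdev(samples)          # sample standard deviation
    # Geometric mean via the mean of logarithms (requires positive samples).
    geometric_mean = math.exp(statistics.mean(math.log(x) for x in samples))
    # Normal-approximation confidence interval for the mean (an assumption).
    half_width = z * stdev / math.sqrt(n)
    return {
        "mean": mean,
        "median": statistics.median(samples),
        "variance": statistics.variance(samples),
        "stdev": stdev,
        "geometric_mean": geometric_mean,
        "ci95": (mean - half_width, mean + half_width),
    }


print(summarize([1, 2, 4, 8, 16]))
```

For skewed data such as response sizes, the median and geometric mean are often more informative than the mean, which a few very large values can dominate.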

Common Analysis Techniques – Statistics (Cont.) • Cumulative distribution function (CDF) • F(a) = P(x ≤ a) • Probability density function (PDF) • Derivative of CDF: f(x) = dF(x)/dx • Check for heavy-tailed distribution • Plot the complementary distribution, P(x > a), on log-log axes and check its tail • Example: Pareto distribution, P[X > x] ~ x^(−α) • If α ≤ 2, the distribution has infinite variance (a heavy tail) • If α ≤ 1, the distribution has infinite mean
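The heavy-tail check can be sketched as follows: compute the empirical complementary distribution, take logarithms of both axes, and fit a straight line to the upper tail. For Pareto-like data the slope is roughly the negative of the tail index. All function names are hypothetical, the 10% tail cutoff is an arbitrary choice, and the demo uses evenly spaced Pareto quantiles instead of a real trace.

```python
import math


def llcd_points(samples):
    """Return (log10 x, log10 P[X > x]) for each positive sample value."""
    xs = sorted(samples)
    n = len(xs)
    points = []
    for i, x in enumerate(xs):
        ccdf = (n - i - 1) / n   # fraction of samples above x (distinct values assumed)
        if x > 0 and ccdf > 0:   # the largest sample has ccdf 0 and is skipped
            points.append((math.log10(x), math.log10(ccdf)))
    return points


def tail_slope(points, tail_fraction=0.1):
    """Least-squares slope of the upper tail of the log-log plot."""
    k = max(2, int(len(points) * tail_fraction))
    tail = points[-k:]
    mean_x = sum(p[0] for p in tail) / k
    mean_y = sum(p[1] for p in tail) / k
    sxx = sum((p[0] - mean_x) ** 2 for p in tail)
    sxy = sum((p[0] - mean_x) * (p[1] - mean_y) for p in tail)
    return sxy / sxx


def pareto_quantiles(alpha, n, scale=1.0):
    """Deterministic stand-in for a trace: evenly spaced Pareto quantiles."""
    return [scale * (1 - (i + 0.5) / n) ** (-1 / alpha) for i in range(n)]


slope = tail_slope(llcd_points(pareto_quantiles(alpha=1.5, n=10000)))
print("estimated tail index:", -slope)
```

On real traces the fit is sensitive to where the tail is assumed to begin, so it is worth plotting the points and choosing the cutoff by eye before trusting the slope.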

Common Analysis Techniques – Data Fitting • Visually compare empirical distribution with standard distributions • Chi-squared tests [AS86,Jain91] • If X² is smaller than the chi-squared critical value at the chosen significance level, then the two distributions are close, where X² = Σ_i (O_i − E_i)² / E_i and O_i and E_i are the observed and expected frequencies in bin i • Kolmogorov-Smirnov tests [AS86,Jain91] • Compare two distributions by finding the maximum difference between the two variables’ cumulative distribution functions • Quantile-quantile plots [AS86,Jain91] • Compare two distributions by plotting the inverse of the cumulative distribution function F⁻¹(x) for the two variables and finding the best-fit line
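As an illustration of the Kolmogorov-Smirnov and quantile-quantile comparisons, the sketch below computes the two-sample K-S statistic (the maximum gap between two empirical CDFs) and the point pairs for a Q-Q plot. Function names are hypothetical, the Q-Q helper assumes both samples have the same size, and turning the statistic into a pass/fail decision still requires a critical value from a table or a statistics package.

```python
import bisect


def ecdf(sorted_samples, x):
    """Empirical CDF: fraction of samples less than or equal to x."""
    return bisect.bisect_right(sorted_samples, x) / len(sorted_samples)


def ks_statistic(a, b):
    """Maximum absolute difference between the empirical CDFs of a and b."""
    a, b = sorted(a), sorted(b)
    # Both ECDFs are step functions, so the maximum gap occurs at a sample point.
    return max(abs(ecdf(a, x) - ecdf(b, x)) for x in a + b)


def qq_pairs(a, b):
    """Pair up matching quantiles of two equal-sized samples for a Q-Q plot."""
    if len(a) != len(b):
        raise ValueError("this sketch assumes equal sample sizes")
    return list(zip(sorted(a), sorted(b)))


print(ks_statistic([1, 2, 3, 4], [3, 4, 5, 6]))
print(qq_pairs([3, 1, 2], [30, 10, 20]))
```

If the Q-Q pairs fall close to a straight line, the two samples come from distributions of the same shape, possibly differing in location and scale.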

Common Analysis Tools • Scripting languages • Perl, awk, … • Databases • SQL, … • Statistics packages • Matlab, S, … • Programs

Challenges in Workload Characterization • Each of the Web components provides a limited perspective on the functioning of the Web • Workload characteristics vary both in space and in time • [Figure: clients, proxies, replicas, and servers connected through the Internet]

Views from Clients • Capture clients’ requests to all servers • Pros • Know details of client activities, such as requests satisfied by browser caches and client aborts • Able to record detailed information, as this does not impose significant load on a client browser • Cons • Need to modify browser software • Hard to deploy for a large number of clients

Views from Web Servers • Capture all clients’ requests (except those satisfied by caches) to a single server • Pros • Relatively easy to deploy/change logging software • Cons • Requests satisfied by browser & proxy caches will not appear in the logs • May not log detailed information to ensure fast processing of requests

Views from Web Proxies • Depending on the proxy’s location • A proxy close to clients sees requests from a small client group to a large number of servers • A proxy close to the servers sees requests from a large client group to a small number of servers • Pros • More diverse …? • Cons • Requests satisfied by browser caches will not appear in the logs • May not log detailed information to ensure fast processing of requests • Does not have full information …?

Workload Variation • Vary with measurement points • Vary with sites being measured • Information servers (news sites), e-commerce servers, query servers, streaming servers, upload servers • US vs. Italy • … • Vary with the clients being measured • Internet clients vs. wireless clients • University clients vs. home users • … • Vary in time • Day vs. night • Weekday vs. weekend • Changes with new applications, recent events …

Part II: Web Workload • Overview • Content dynamics • Access dynamics • Common pitfalls • Case studies

Content Dynamics • File size distribution • File update patterns • How often files are updated • How much files are updated

File Size Distribution • Two definitions • D1: Size of all files on a Web server • D2: Size of all files transferred by a Web server • D1 ≠ D2, because some files can be transferred multiple times or not to completion, and other files are not transferred at all • Studies show that the distribution of file sizes under both definitions exhibits heavy tails (i.e., P[F > x] ~ x^(−α), 0 < α < 2)

File Update Interval • Varies in time • Hot events and fast-changing events require more frequent updates, e.g., the World Cup • Varies across sites • Depending on server update policy • Predictability • Study of MSNBC logs shows that modification history yields a rough predictor of the future modification interval → TTL based [PQ00]

Extent of Change upon Modifications • Studies show that most file modifications are small → delta encoding can be very useful

Part II: Web Workload • Motivation • Limitations of workload measurements • Content dynamics • Access Dynamics • File popularity distribution • Temporal stability • Spatial locality • User session and request arrivals & duration • Synthetic workload generation • Common pitfalls • Case studies

Document Popularity • Web requests follow a Zipf-like distribution • Request frequency ∝ 1/i^α, where i is a document’s popularity rank • The value of α depends on the point of measurement • Between 0.6 and 1 for client traces and proxy traces • Close to or larger than 1 for server traces [ABC+96, PQ00] • The value of α varies over time (e.g., larger during hot events)
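One common way to estimate α from a trace is a least-squares line fit of log(frequency) against log(rank); the slope is −α. The sketch below assumes per-document request counts have already been extracted from the log. The function name is hypothetical, and fitting the whole rank range in this way is a simplification that real studies often refine.

```python
import math


def fit_zipf_alpha(counts):
    """Estimate alpha in frequency ~ 1/rank^alpha by log-log least squares."""
    ranked = sorted((c for c in counts if c > 0), reverse=True)
    if len(ranked) < 2:
        raise ValueError("need at least two documents with requests")
    xs = [math.log(rank) for rank in range(1, len(ranked) + 1)]
    ys = [math.log(c) for c in ranked]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return -sxy / sxx   # slope is -alpha


# Synthetic counts that follow 1/i^0.8 exactly (not data from any trace).
synthetic = [1000 / i ** 0.8 for i in range(1, 201)]
print(fit_zipf_alpha(synthetic))
```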

Impact of the α value • Larger α means accesses are more concentrated on popular documents → caching is more beneficial • 90% of the accesses are accounted for by • Top 36% of files in proxy traces [BCF+99, PQ00] • Top 10% of files in small departmental server logs reported in [AW96] • Top 2-4% of files in MSNBC traces
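The effect of α on concentration can be illustrated with an idealized Zipf-like model: compute what fraction of the most popular files is needed to cover 90% of accesses. This is a sketch with a hypothetical function name and a synthetic population of 10,000 files, so its output illustrates the trend only and is not expected to reproduce the percentages measured in the traces above.

```python
def fraction_of_files_for_coverage(alpha, num_files, coverage=0.9):
    """Fraction of top-ranked files whose accesses reach the given coverage."""
    weights = [1 / rank ** alpha for rank in range(1, num_files + 1)]
    target = coverage * sum(weights)
    running = 0.0
    for rank, weight in enumerate(weights, start=1):
        running += weight
        if running >= target:
            return rank / num_files
    return 1.0


for alpha in (0.7, 1.0, 1.4):
    share = fraction_of_files_for_coverage(alpha, num_files=10000)
    print(f"alpha={alpha}: top {share:.1%} of files cover 90% of accesses")
```

The share of files needed shrinks as α grows, which is why a cache of fixed size is more effective on workloads with larger α.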

Temporal Stability • Metrics • Coarse-grained: likely duration that a currently popular file remains popular • e.g., overlap between the set of popular documents on day 1 and day 2 • Fine-grained: how soon a requested file will be requested again • e.g., LRU stack distance [ABC+96] • Example: with the LRU stack (top to bottom) File 5, File 4, File 3, File 2, File 1, a request for File 2 has stack distance = 4 and moves File 2 to the top: File 2, File 5, File 4, File 3, File 1
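The LRU stack distance can be computed by replaying the request stream against a list kept in most-recently-used order. This is a minimal sketch with a hypothetical function name; it uses a plain list for clarity, which takes time proportional to the stack depth per request and would need a faster structure for long traces.

```python
def lru_stack_distances(requests):
    """Return the LRU stack distance of each request (None for first references)."""
    stack = []        # index 0 is the most recently used item
    distances = []
    for item in requests:
        if item in stack:
            depth = stack.index(item) + 1   # 1 = re-request of the top item
            stack.remove(item)
        else:
            depth = None                    # never seen before
        stack.insert(0, item)               # the requested item moves to the top
        distances.append(depth)
    return distances


# Files 1..5 are requested once each, leaving the stack as 5, 4, 3, 2, 1;
# the final request for File 2 then has stack distance 4.
print(lru_stack_distances([1, 2, 3, 4, 5, 2]))
```

Small stack distances indicate strong temporal locality: an LRU cache holding k objects hits exactly on the requests whose stack distance is at most k.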

Spatial Locality • Refers to whether users in the same geographical location or the same organization tend to request the same documents • E.g., degree to which requests are shared locally vs. globally

Spatial Locality (Cont.) Domain membership is significant except when there is a “hot” event of global interest

User session and request arrivals & duration • User’s workload at three levels • Session: a consecutive series of requests from a user to a Web site • Click: a user action to request a page, submit a form, etc. • Request: each click generates one or more HTTP requests • Exponential distribution [LNJV99,KR01] • Session inter-arrival times • Heavy-tail distribution [KR01] • # clicks in a session, most in the range of 4-6 [Mah97] • # embedded references in a Web page • Think time: time between clicks • Active time: time to download a Web page and its embedded images
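Server and proxy logs record requests, not sessions, so sessions have to be inferred. The sketch below uses one common heuristic: a gap longer than an idle timeout between two requests from the same user starts a new session. The 30-minute timeout, the function names, and the `(user, timestamp)` input format are all assumptions for illustration and do not come from the studies cited above.

```python
from collections import defaultdict


def split_sessions(requests, idle_timeout=1800.0):
    """Group (user, timestamp) pairs into sessions separated by idle gaps.

    idle_timeout is in seconds; 1800 s is an assumed, commonly used default.
    """
    by_user = defaultdict(list)
    for user, timestamp in requests:
        by_user[user].append(timestamp)

    sessions = []
    for user, stamps in by_user.items():
        stamps.sort()
        current = [stamps[0]]
        for timestamp in stamps[1:]:
            if timestamp - current[-1] > idle_timeout:
                sessions.append((user, current))   # gap too long: close session
                current = [timestamp]
            else:
                current.append(timestamp)
        sessions.append((user, current))
    return sessions


def inter_request_gaps(stamps):
    """Gaps between consecutive requests in one session (a proxy for think time)."""
    return [later - earlier for earlier, later in zip(stamps, stamps[1:])]


log = [("a", 0), ("a", 10), ("a", 5000), ("b", 3)]
for user, stamps in split_sessions(log):
    print(user, stamps, inter_request_gaps(stamps))
```

Because one click can generate several HTTP requests, request gaps mix think time with download time; separating the two requires grouping a page with its embedded objects first.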

Common Pitfalls • Misconception: trace analyses are all about writing scripts & plotting nice graphs • Trace analyses are for better design of systems, and implications are often more important than raw data • Challenges • Trace collection: where to monitor, how to collect (e.g., efficiency, privacy, accuracy) • Identify important metrics, and understand why they are important • Sound measurement requires discipline • Draw implications from data analyses • Understanding the limitations of the traces • No trace is representative: workload changes in time and in space • Try to diversify data sets (e.g., collect over different places and different sites) before jumping to conclusions • Do not draw inferences beyond what the data show

Part II: Web Workload • Motivation • Limitations of workload measurements • Content dynamics • Access dynamics • Common pitfalls • Case studies • Boston University client log study • UW proxy log study • MSNBC server log study • Mobile log study

Case Study I: BU Client Log Study • Analyze clients’ browsing pattern and their impact on network traffic [CBC95] • One of the few client log studies • Approach • Modify Mosaic and distribute it to machines in CS Dept. at Boston Univ. to collect client traces in 1995 • Major Findings • Power law distributions • Distribution of document sizes • Distribution of user requests for documents • # requests to documents as a function of their popularity

Case Study II: UW Proxy Log Study • Approaches [WVS+99a, WVS+99b] • Deploy a passive network sniffer between the Univ. of Washington and the rest of the Internet in May 1999

Major Findings • Members of an organization are more likely to request the same documents than a random set of clients • Significant fraction of uncacheable documents • Significant fraction of audio/video content

Case Study III: MSNBC Server Log Study • MSNBC server site • a large news site • server cluster with 40 nodes • 25 million accesses a day (HTML content alone) • Period studied: Aug. – Oct. 99 & Dec. 17, 98 flash crowd • Server logs • HTTP access logs • Content Replication System (CRS) logs • HTML content logs • Data analysis • Content dynamics • Access dynamics

Major Findings • Content dynamics • Modification history is a rough predictor • Frequent but minimal file modifications • Access dynamics • Set of popular files remains stable for days • Domain membership has a significant bearing on client accesses except during a flash crowd of global interest • Zipf-like distribution of file popularity, but with a much larger α than at proxies • Accesses to old documents account for most first-time misses → hard to anticipate such accesses and eliminate these first-time misses

Case Study IV: Mobile Log Study • A popular commercial Web site for mobile clients • Content • news, weather, stock quotes, email, yellow pages, travel reservations, entertainment etc. • Services • Notification • Browse • Period studied • 3.25 million notifications in Aug. 20 – 26, 2000 • 33 million browse requests in Aug. 15 – 26, 2000