Overview of Backpropagation Algorithm and Support Vector Machines in Supervised Learning


Presentation Transcript

Synaptic Dynamics II: Supervised Learning. The Backpropagation Algorithm and Support Vector Machines. Jing Liu, 2004.11.3

History of BP Algorithm • Rumelhart [1986] popularized the BP algorithm in the Parallel Distributed Processing edited volume in the late 1980s. • The BP algorithm overcame the limitations of the perceptron algorithm, limitations that Minsky and Papert [1969] had carefully enumerated. • BP's popularity begot waves of criticism: the BP algorithm often failed to converge and, at best, converged to local error minima. • The criticism also challenged BP's historical priority. • It further questioned whether BP learning was new at all, arguing that the algorithm did not offer a new kind of learning.

Feedforward Sigmoidal Representation Theorems • Feedforward sigmoidal architectures can in principle represent any Borel-measurable function to any desired accuracy, provided the network contains enough "hidden" neurons between the input and output neuronal fields. • So an MLP can solve nonlinearly separable classification problems as well as function approximation problems.

Feedforward Sigmoidal Representation Theorems • We can interpret the theorem in two ways: • To improve a network's classification ability, we must use a multilayer network with at least one hidden layer. • On the other hand, when feedforward sigmoidal networks finely approximate complicated functions, they may suffer a corresponding "explosion" in the number of hidden neurons and interconnecting synapses.

How can an MLP learn? • We know only the random samples $(X_i, Y_i)$ and the vector error $Y_i - N(X_i)$, where $N(X_i)$ is the network's output for input $X_i$.

BP Algorithm • The principle of the BP algorithm: • Working signals are propagated forward to the output neurons. • Error signals are propagated backward toward the input field.
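The forward/backward principle can be checked numerically: the gradient obtained by sending the output error backward should match a finite-difference estimate of the same derivative. The sketch below assumes a small, hypothetical network (two inputs, two sigmoid hidden neurons, one sigmoid output, a bias stored as the last weight of each row, random weights) and the per-sample error E = ½(d − y)²; none of these choices come from the slides.

```python
import math
import random


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def forward(W1, W2, x):
    """Forward pass: working signals flow from the inputs to the output.

    W1[j] holds the weights of hidden neuron j, W2 those of the single
    output neuron; the last weight in each row is a bias (input fixed at 1).
    """
    xb = list(x) + [1.0]
    hb = [sigmoid(sum(w * xi for w, xi in zip(row, xb))) for row in W1] + [1.0]
    y = sigmoid(sum(w * hi for w, hi in zip(W2, hb)))
    return xb, hb, y


def loss(W1, W2, x, d):
    """Instantaneous squared error E = 1/2 (d - y)^2 for one sample."""
    _, _, y = forward(W1, W2, x)
    return 0.5 * (d - y) ** 2


def backward(W1, W2, x, d):
    """Backward pass: the output error is propagated toward the inputs.

    Returns (dE/dW1, dE/dW2) with the same shapes as W1 and W2.
    """
    xb, hb, y = forward(W1, W2, x)
    e = d - y                          # output error signal
    delta_out = e * y * (1.0 - y)      # sigmoid'(v) = y (1 - y)
    grad_W2 = [-delta_out * hi for hi in hb]
    grad_W1 = []
    for j in range(len(W1)):
        h_j = hb[j]
        # hidden local gradient: its own slope times the delta sent back to it
        delta_j = h_j * (1.0 - h_j) * delta_out * W2[j]
        grad_W1.append([-delta_j * xi for xi in xb])
    return grad_W1, grad_W2


# Compare one backpropagated derivative with a central finite difference.
random.seed(0)
W1 = [[random.uniform(-1, 1) for _ in range(3)] for _ in range(2)]
W2 = [random.uniform(-1, 1) for _ in range(3)]
x, d = (0.5, -0.3), 1.0
g1, g2 = backward(W1, W2, x, d)

eps = 1e-6
W_plus = [row[:] for row in W1]
W_plus[0][1] += eps
W_minus = [row[:] for row in W1]
W_minus[0][1] -= eps
numeric = (loss(W_plus, W2, x, d) - loss(W_minus, W2, x, d)) / (2 * eps)
print(g1[0][1], numeric)  # the two values should agree closely
```

A gradient check like this is a cheap way to confirm a hand-written backward pass before using it for training.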

BP Algorithm • The BP algorithm for the learning process of multilayer networks: • The error signal of the jth output neuron is: • The instantaneous summed squared error of the output is: • The objective function of the learning process is:
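In the standard textbook form of these three quantities, assuming that $d_j(n)$ is the desired response and $y_j(n)$ the actual output of output neuron $j$ at iteration $n$, $C$ is the set of output neurons, and $N$ is the number of training samples:

```latex
e_j(n) = d_j(n) - y_j(n)
\qquad
E(n) = \frac{1}{2}\sum_{j \in C} e_j^{2}(n)
\qquad
E_{\mathrm{av}} = \frac{1}{N}\sum_{n=1}^{N} E(n)
```

The factor $\tfrac{1}{2}$ is a convention that cancels when $E(n)$ is differentiated.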

BP Algorithm • In the case of sample-by-sample learning: • The gradient of E(n) at the nth iteration is:
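For an output neuron $j$, the gradient follows from the chain rule. This is a sketch assuming the usual definitions: induced local field $v_j(n) = \sum_i w_{ji}(n)\,y_i(n)$, activation $y_j(n) = \varphi(v_j(n))$, error $e_j(n) = d_j(n) - y_j(n)$, and $E(n) = \tfrac{1}{2}\sum_{j} e_j^{2}(n)$:

```latex
\frac{\partial E(n)}{\partial w_{ji}(n)}
  = \frac{\partial E(n)}{\partial e_j(n)}
    \frac{\partial e_j(n)}{\partial y_j(n)}
    \frac{\partial y_j(n)}{\partial v_j(n)}
    \frac{\partial v_j(n)}{\partial w_{ji}(n)}
  = e_j(n)\cdot(-1)\cdot\varphi'\!\left(v_j(n)\right)\cdot y_i(n)
  = -\,e_j(n)\,\varphi'\!\left(v_j(n)\right)\,y_i(n)
```

For the logistic function $\varphi(v) = 1/(1+e^{-v})$, the derivative is $\varphi'(v_j) = y_j(1 - y_j)$, so it can be computed from the forward-pass output alone.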

BP Algorithm • The correction applied to weight $w_{ji}$ is: where • If j is an output neuron: • If j is a hidden neuron:
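A common form of this correction (the delta rule), assuming learning rate $\eta$, local gradient $\delta_j(n)$, error $e_j(n) = d_j(n) - y_j(n)$, induced local field $v_j(n)$, and neurons $k$ in the layer to the right of hidden neuron $j$:

```latex
\Delta w_{ji}(n) = -\eta\,\frac{\partial E(n)}{\partial w_{ji}(n)} = \eta\,\delta_j(n)\,y_i(n)

\delta_j(n) = e_j(n)\,\varphi'\!\left(v_j(n)\right)
  \qquad \text{(j an output neuron)}

\delta_j(n) = \varphi'\!\left(v_j(n)\right)\sum_{k}\delta_k(n)\,w_{kj}(n)
  \qquad \text{(j a hidden neuron)}
```

A hidden neuron has no desired response of its own, so its local gradient is assembled from the local gradients of the neurons it feeds, weighted by the connecting synapses. This is the step that sends the error backward.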

Flowchart of the BP Algorithm • Initialize the weights • Calculate the output of each layer • Calculate the error of the output layer • Calculate the local gradient δ for all neurons • Modify the weights of the output layer • Modify the weights of the hidden layer
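The flowchart steps can be sketched as a sample-by-sample training loop. This is a minimal illustration, not the presentation's own code. It assumes one hidden layer of sigmoid neurons, a single sigmoid output, biases stored as the last weight of each row, and hypothetical defaults (`n_hidden=4`, `eta=0.5`, `epochs=5000`, `seed=0`) applied to XOR.

```python
import math
import random


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def predict(W1, W2, x):
    """Forward pass of a 1-hidden-layer sigmoid net (bias = last weight)."""
    xb = list(x) + [1.0]
    hb = [sigmoid(sum(w * xi for w, xi in zip(row, xb))) for row in W1] + [1.0]
    return sigmoid(sum(w * hi for w, hi in zip(W2, hb)))


def total_error(W1, W2, samples):
    """Summed squared error 1/2 * sum (d - y)^2 over the training set."""
    return sum(0.5 * (d - predict(W1, W2, x)) ** 2 for x, d in samples)


def train_bp(samples, n_hidden=4, eta=0.5, epochs=5000, seed=0):
    """Sample-by-sample BP following the flowchart steps."""
    rng = random.Random(seed)
    n_in = len(samples[0][0])
    # Step 1: initialize weights to small random values
    W1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)] for _ in range(n_hidden)]
    W2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden + 1)]
    for _ in range(epochs):
        for x, d in samples:
            # Step 2: calculate the output of each layer
            xb = list(x) + [1.0]
            h = [sigmoid(sum(w * xi for w, xi in zip(row, xb))) for row in W1]
            hb = h + [1.0]
            y = sigmoid(sum(w * hi for w, hi in zip(W2, hb)))
            # Step 3: calculate the error of the output layer
            e = d - y
            # Step 4: local gradients for all neurons (using the old W2)
            delta_out = e * y * (1.0 - y)
            delta_hid = [h[j] * (1.0 - h[j]) * delta_out * W2[j] for j in range(n_hidden)]
            # Step 5: modify output-layer weights, dw = eta * delta * input
            for j in range(n_hidden + 1):
                W2[j] += eta * delta_out * hb[j]
            # Step 6: modify hidden-layer weights
            for j in range(n_hidden):
                for i in range(n_in + 1):
                    W1[j][i] += eta * delta_hid[j] * xb[i]
    return W1, W2


XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
W1, W2 = train_bp(XOR)
for x, d in XOR:
    print(x, d, round(predict(W1, W2, x), 3))
```

All hidden local gradients are computed before any weight changes, matching the flowchart's order. Depending on the seed and the number of hidden neurons, a run can still stall in a poor local minimum, which is one of the problems listed for the BP algorithm.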

Some Improvements for the BP Algorithm • The problems of the BP algorithm: • It converges slowly. • The objective function has local minima. • Methods to improve the BP algorithm include conjugate gradient descent, using higher-order derivatives, and adding a momentum term.

Adding a Momentum Term to the BP Algorithm • Here we modify the weights of the neurons with:
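One common form of the momentum update (the generalized delta rule), assuming learning rate $\eta$, local gradient $\delta_j(n)$, input signal $y_i(n)$ from neuron $i$, and a momentum constant $\alpha$:

```latex
\Delta w_{ji}(n) = \alpha\,\Delta w_{ji}(n-1) + \eta\,\delta_j(n)\,y_i(n),
\qquad 0 \le \alpha < 1
```

Setting $\alpha = 0$ recovers the plain BP correction. With $\alpha > 0$, steps along directions where the gradient keeps the same sign accumulate, and steps where the gradient alternates in sign partly cancel, which damps oscillation.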

Support Vector Machines • SVMs were proposed for binary classification problems, based on the principle of Structural Risk Minimization.
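The standard hard-margin formulation, assuming linearly separable training data $\{(\mathbf{x}_i, y_i)\}_{i=1}^{l}$ with class labels $y_i \in \{-1, +1\}$ (here $y_i$ is a label, not a network output). The hyperplane $\mathbf{w}\cdot\mathbf{x} + b = 0$, scaled so the closest points satisfy $|\mathbf{w}\cdot\mathbf{x}_i + b| = 1$, has margin $2/\lVert\mathbf{w}\rVert$. Maximizing the margin is therefore equivalent to:

```latex
\min_{\mathbf{w},\,b}\ \frac{1}{2}\lVert \mathbf{w} \rVert^{2}
\quad \text{subject to} \quad
y_i\left(\mathbf{w}\cdot\mathbf{x}_i + b\right) \ge 1,
\qquad i = 1,\dots,l
```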

Support Vector Machines • To obtain the largest margin, we derive the optimal classification function: • Replacing the dot product with an inner-product kernel function:
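The kernel substitution can be illustrated in two parts. First, a degree-2 polynomial kernel $K(\mathbf{x}, \mathbf{z}) = (\mathbf{x}\cdot\mathbf{z})^2$ equals an ordinary dot product after an explicit feature map, so the classifier never needs to form that map. Second, the decision function takes the form $f(\mathbf{x}) = \operatorname{sgn}\left(\sum_i \alpha_i y_i K(\mathbf{x}_i, \mathbf{x}) + b\right)$. The sketch assumes 2-D inputs and a hypothetical toy problem: support vectors (1, 0) labeled +1 and (−1, 0) labeled −1. For that problem the hard-margin solution is w = (1, 0), b = 0, which corresponds to α₁ = α₂ = 0.5.

```python
import math


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def phi(x):
    """Explicit degree-2 feature map for 2-D input: (x1^2, sqrt(2) x1 x2, x2^2)."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def poly2_kernel(x, z):
    """K(x, z) = (x . z)^2, i.e. phi(x) . phi(z) computed without phi."""
    return dot(x, z) ** 2


def linear_kernel(x, z):
    return dot(x, z)


def svm_decision(x, support_vectors, labels, alphas, b, kernel):
    """f(x) = sign(sum_i alpha_i * y_i * K(x_i, x) + b)."""
    s = sum(a * y * kernel(sv, x)
            for sv, y, a in zip(support_vectors, labels, alphas)) + b
    return 1 if s >= 0 else -1


# Kernel trick: both expressions give the same number (up to rounding).
x, z = (1.0, 2.0), (3.0, -1.0)
print(dot(phi(x), phi(z)), poly2_kernel(x, z))

# Hypothetical toy SVM: points (1, 0) -> +1 and (-1, 0) -> -1.
SV = [(1.0, 0.0), (-1.0, 0.0)]
LABELS = [1, -1]
ALPHAS = [0.5, 0.5]  # gives w = sum_i alpha_i y_i x_i = (1, 0)
print(svm_decision((2.0, 5.0), SV, LABELS, ALPHAS, 0.0, linear_kernel))
print(svm_decision((-0.5, 3.0), SV, LABELS, ALPHAS, 0.0, linear_kernel))
```

Passing `poly2_kernel` instead of `linear_kernel` changes the feature space the classifier works in without changing `svm_decision`. In a real SVM, however, the α values would have to be re-solved for that kernel.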

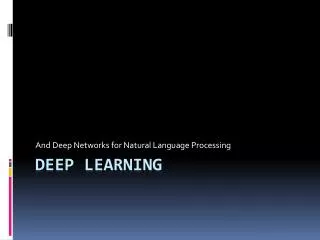

Support Vector Machines • [Figure: panels (i), (j), (k), (l)]