Processes (and Threads)


Processes (and Threads) • (Processes) • (Inter-process interactions) • (Inter-process communication) • Threads • Thread/IPC Problems+Solutions

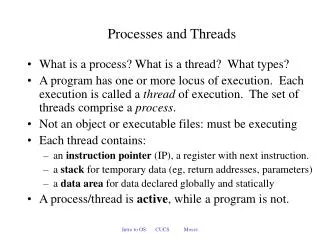

Processes: Modes of Operation • Monolithic/Sequential Abstractions: Processes (+children) • Divide and Conquer (Client-Server): the overall process's actions are executed by parallel sub-process activities: “threads” • Allows distinct threads to block independently (i.e., only part of a process is blocked while other threads can proceed) • Concurrency & resource control at a finer granularity (on a multi-processor: discrete threads per processor for a single process) • …of value mainly if concurrency (esp. on 1 CPU) allows the work to be spread across CPU, I/O, memory, etc. tasks

Threads: Flow Control within a Process • Process Model (1 Thread Model) (Unix/Windows) • Each process is a discrete, distinct (sequential) control flow • Each process/child has its own PC, SP, registers + address space • Processes interact via IPC • Thread Model (mini/“lightweight” processes, N Threads) • Each thread runs independently though sequentially (like a process) • Each thread has its own PC, SP (like a process) • A thread can spawn sub-threads (like a process) • A thread can request services (like a process) • A thread has “state” ready:running:blocked (like a process)

But all threads share exactly the same address space • access to all global variables within the process • access to all files within the shared address space • can read, write, delete files and stacks • Shared address space: concurrency!!!
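A minimal sketch of the shared address space, assuming POSIX threads are available. The names `shared_value`, `writer`, and `observe_shared_value` are hypothetical and chosen only for illustration: one thread writes a global variable and the creating thread reads the same memory after joining it.

```c
#include <assert.h>
#include <pthread.h>

/* Hypothetical global: it lives in the one address space all threads share. */
static int shared_value = 0;

static void *writer(void *arg) {
    (void)arg;
    shared_value = 42;          /* visible to every thread in the process */
    return NULL;
}

/* Returns what the calling thread sees after the writer thread has finished. */
int observe_shared_value(void) {
    pthread_t tid;
    shared_value = 0;
    int rc = pthread_create(&tid, NULL, writer, NULL);
    assert(rc == 0);            /* sketch: abort if the thread cannot start */
    pthread_join(tid, NULL);    /* join orders the write before the read below */
    return shared_value;
}
```

Calling `observe_shared_value()` from `main` (compiled with `cc -pthread`) should yield the value written by the other thread, because no copy of the variable is ever made.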

Thread Models • Dispatcher/Worker Model (a dispatcher hands work to workers) • Pipeline Model: producer-consumer model; not useful for file servers etc., great for database servers • Team Model • File servers: e.g., a client wants a file replicated on multiple servers: one thread per server

Thread Usage: A multi-threaded Web server • A multi-process/child model would also work but entails much higher overhead: process creation, context switching, scheduling, discrete address spaces, discrete resource allocation, etc. • The worker threads can handle multiple read access requests

Dispatcher:

    while (TRUE) {
        get_next_request(&buf);
        hand_off_work(&buf);
    }

Worker:

    while (TRUE) {
        wait_for_work(&buf);
        look_for_page_in_cache(&buf, &page);
        if (page_not_in_cache(&page)) {
            read_page_from_disk(&buf, &page);
        }
        return_page(&page);
    }
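The dispatcher/worker loops can be sketched with POSIX threads as follows. This is an illustration under stated assumptions, not the server from the slide: requests are plain integers, the bounded queue, the `-1` shutdown sentinel, and `run_dispatcher` are hypothetical, and the page lookup is replaced by a counter.

```c
#include <assert.h>
#include <pthread.h>

#define QUEUE_CAP   8
#define NUM_WORKERS 3

static int queue[QUEUE_CAP];
static int head, tail, count;
static int handled;                       /* requests served so far */
static pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER;
static pthread_cond_t not_empty = PTHREAD_COND_INITIALIZER;
static pthread_cond_t not_full  = PTHREAD_COND_INITIALIZER;

/* Dispatcher side: enqueue one request, blocking while the queue is full. */
static void hand_off_work(int req) {
    pthread_mutex_lock(&lock);
    while (count == QUEUE_CAP)
        pthread_cond_wait(&not_full, &lock);
    queue[tail] = req;
    tail = (tail + 1) % QUEUE_CAP;
    count++;
    pthread_cond_signal(&not_empty);
    pthread_mutex_unlock(&lock);
}

/* Worker side: dequeue one request, blocking while the queue is empty. */
static int wait_for_work(void) {
    pthread_mutex_lock(&lock);
    while (count == 0)
        pthread_cond_wait(&not_empty, &lock);
    int req = queue[head];
    head = (head + 1) % QUEUE_CAP;
    count--;
    pthread_cond_signal(&not_full);
    pthread_mutex_unlock(&lock);
    return req;
}

static void *worker(void *arg) {
    (void)arg;
    for (;;) {
        int req = wait_for_work();
        if (req < 0)
            break;                        /* sentinel: shut this worker down */
        /* A real server would look the page up and return it here. */
        pthread_mutex_lock(&lock);
        handled++;
        pthread_mutex_unlock(&lock);
    }
    return NULL;
}

/* Dispatcher: start workers, hand off num_requests requests, then stop them. */
int run_dispatcher(int num_requests) {
    pthread_t workers[NUM_WORKERS];
    head = tail = count = handled = 0;
    for (int i = 0; i < NUM_WORKERS; i++) {
        int rc = pthread_create(&workers[i], NULL, worker, NULL);
        assert(rc == 0);
    }
    for (int r = 0; r < num_requests; r++)
        hand_off_work(r);
    for (int i = 0; i < NUM_WORKERS; i++)
        hand_off_work(-1);                /* one sentinel per worker */
    for (int i = 0; i < NUM_WORKERS; i++)
        pthread_join(workers[i], NULL);
    return handled;
}
```

Because every worker shares the queue through one address space, handing off work costs a lock and a signal rather than a process creation or an IPC message.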

Implementations: User-level vs. Kernel-level • User-level threads package: each user process defines its own thread policies! Flexible mgmt., scheduling, etc. …but the kernel has NO knowledge of the threads, so it gives no support for any problems • Kernel-level: threads package managed by the kernel

Hybrid Implementations • Multiplexing user-level threads onto kernel-level threads (each kernel thread possesses a limited sphere of user-thread control)

Many-to-One Model • Many user-level threads mapped to a single kernel thread • Solaris Green Threads • GNU Portable Threads • + Flexible: thread mgmt. in user space • - Process blocks if a thread blocks • - Only 1 thread can access the kernel at a time: no multi-processor support

One-to-One Model • Each user-level thread maps (is bound) to a kernel thread • Windows 95/98/NT/2000/XP • Linux • Solaris v9+ • + Max. concurrency • - Each user thread needs a kernel thread (expensive!)

Multiplexed: Many-to-Many Model • Allows many user-level threads to be mapped to many kernel threads • Allows the OS to create/manage a limited number of kernel threads • Solaris (prior to v9; v9+ is one-to-one) • Windows NT/2000 with the ThreadFiber package • + Large # of threads • + If a user thread blocks, the kernel schedules another for execution • - Less concurrency than one-to-one

Two-level Model • Similar to M:M, except that it also allows a user thread to be bound to a kernel thread • HP-UX • Tru64 UNIX • Solaris 8 and earlier

Windows XP Threads • Implements one-to-one mapping • Each thread contains • A unique thread id • Processor register set • Separate user and kernel stacks • Private data storage area • The register set, stacks, and private storage area are known as the context of the thread • The primary data structures of a thread include: • ETHREAD (executive thread block), kernel space • KTHREAD (kernel thread block), kernel space • TEB (thread environment block), user space

XP Thread Data Structures • ETHREAD: thread start address, pointer to parent process • KTHREAD: scheduling & synchronization info, kernel stack • TEB (user space): thread identifier, user stack, thread-local storage

Linux Threads • Linux refers to them as tasks rather than threads • Linux does not distinguish between processes and threads • Thread creation is done through the clone() system call, with flags indicating what is shared between parent and child • clone() allows a child task to share the address/file/signal space of the parent task (process)
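A hedged, Linux-only sketch of the clone() behaviour described above, using the glibc wrapper declared in `<sched.h>`. The 64 KiB stack size and the names `child_fn`, `shared_counter`, and `run_clone_demo` are assumptions made for illustration. With `CLONE_VM` the child task runs in the parent's address space, so its write is visible to the parent.

```c
#define _GNU_SOURCE             /* must precede the includes to expose clone() */
#include <assert.h>
#include <sched.h>
#include <signal.h>
#include <stdlib.h>
#include <sys/types.h>
#include <sys/wait.h>

/* volatile: it is modified by another task sharing this memory */
static volatile int shared_counter = 0;

static int child_fn(void *arg) {
    (void)arg;
    shared_counter = 7;         /* with CLONE_VM this writes the parent's memory */
    return 0;
}

/* Returns the value the parent sees after the child task has exited, or -1. */
int run_clone_demo(void) {
    const size_t stack_size = 64 * 1024;      /* assumed size for the sketch */
    char *stack = malloc(stack_size);
    if (stack == NULL)
        return -1;
    shared_counter = 0;
    /* The stack grows downward on common architectures, so pass its top.
       SIGCHLD lets the parent reap the child with waitpid(). */
    pid_t pid = clone(child_fn, stack + stack_size, CLONE_VM | SIGCHLD, NULL);
    if (pid == -1) {
        free(stack);
        return -1;
    }
    waitpid(pid, NULL, 0);
    free(stack);
    return shared_counter;
}
```

Dropping `CLONE_VM` from the flags would give the child its own copy of memory, which is roughly the fork()-like end of the same spectrum; further flags such as `CLONE_FILES` and `CLONE_SIGHAND` control the file and signal sharing mentioned in the slide.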

Java Threads (& States) • Java threads are managed by the JVM • Java threads may be created by: • Extending Thread class • Implementing the Runnable interface

Thread Types • LWP (Lightweight Processes) • Kernel Threads • User Threads

LWP: Lightweight Process • Kernel supported/controlled user thread • shares the process/user address space, NOT kernel space • each LWP can be independently assigned to a separate CPU • very similar to processes in terms of their actions; however, LWPs have no global name space associated with them: they are invisible to all processes except the parent! (~ Unix pipe | op.: cat | print…) • simply an interface between the user process and the kernel threads!

Solaris 2.x [figure: tasks 1-3, user threads, bound and unbound LWPs, kernel threads, kernel scheduler, CPUs]

Kernel Threads • Windows XP, Solaris, Linux, Mac OS X • created/destroyed by kernel (not user) • shares kernel text, global data, kernel stack • independently schedulable by kernel without belonging inside a process • started similar to a process: fork(), exec(), sleep(), wakeup() • very useful for handling asynchronous I/O or interrupts • Data Structure • saved copy of kernel registers • priority and scheduling info • pointers for scheduler queues • pointers to kernel stack • pointers to LWP

Handling Async. I/O & Interrupts • T1 needs to perform async. I/O: it creates thread T2 • T2 issues the I/O request & blocks • T1 continues execution via other threads (if no precedence constraints) • I/O completes: T2 receives the notification of I/O and notifies thread T1 • T1 processes the notification & continues • T2 terminates
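The T1/T2 timeline can be sketched with a helper thread and a condition variable. This is an assumption-laden illustration: the blocking I/O is replaced by a constant result, and `struct io_request`, `io_thread`, and `run_async_io` are hypothetical names.

```c
#include <assert.h>
#include <pthread.h>

/* Hypothetical request record shared between T1 and T2. */
struct io_request {
    pthread_mutex_t lock;
    pthread_cond_t  done_cv;
    int done;
    int result;
};

/* T2: performs the (simulated) blocking I/O, then notifies T1. */
static void *io_thread(void *arg) {
    struct io_request *req = arg;
    int result = 1234;                    /* stand-in for a blocking read */
    pthread_mutex_lock(&req->lock);
    req->result = result;
    req->done = 1;
    pthread_cond_signal(&req->done_cv);   /* notification of I/O completion */
    pthread_mutex_unlock(&req->lock);
    return NULL;                          /* T2 terminates */
}

/* T1: starts the I/O, keeps working, then processes the notification. */
int run_async_io(void) {
    struct io_request req;
    pthread_t t2;
    pthread_mutex_init(&req.lock, NULL);
    pthread_cond_init(&req.done_cv, NULL);
    req.done = 0;
    req.result = 0;

    int rc = pthread_create(&t2, NULL, io_thread, &req);
    assert(rc == 0);

    long other_work = 0;                  /* T1 continues while T2 handles I/O */
    for (int i = 0; i < 1000; i++)
        other_work += i;
    (void)other_work;

    pthread_mutex_lock(&req.lock);
    while (!req.done)                     /* loop guards against spurious wakeups */
        pthread_cond_wait(&req.done_cv, &req.lock);
    int result = req.result;
    pthread_mutex_unlock(&req.lock);

    pthread_join(t2, NULL);
    pthread_cond_destroy(&req.done_cv);
    pthread_mutex_destroy(&req.lock);
    return result;
}
```

The `done` flag matters as much as the signal: if T2 finishes before T1 starts waiting, T1 sees the flag already set and never blocks.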

Pop-Up Threads (no wait blocking – sync) • A new thread is created only when a message arrives (async, demand-based responses without the process being blocked waiting)

User Threads • Thread mgmt. done by user-level thread libraries (POSIX, Win32, Java Threads) • at user level (kernel can be totally unaware of thread creation) • user libraries (MACH: c-threads, POSIX: p-threads), NOT the kernel, provide all sync., scheduling and resource mgmt. functions! • consume no kernel resources; fast! • usually bound to LWPs [figure: processes, user threads, LWPs, kernel threads, CPUs]

Thread POSIX Standards – p-threads • Strictly guidelines: specifications, not implementations • API for thread creation and synchronization • The API specifies the behavior of the thread library; the implementation is up to the developers of the library • Common in UNIX OS’s (Solaris, Linux, Mac OS X)
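A small sketch of the creation half of the p-threads API, using only `pthread_create` and `pthread_join`. The helper names `square` and `sum_of_squares` and the `MAX_THREADS` limit are hypothetical. Each thread squares one private slot, and the creator sums the slots after joining.

```c
#include <assert.h>
#include <pthread.h>

#define MAX_THREADS 16

/* Thread body: square the integer this thread was handed. */
static void *square(void *arg) {
    int *n = arg;
    *n = (*n) * (*n);
    return NULL;
}

/* Spawns one thread per value and returns the sum of the squared values. */
int sum_of_squares(const int *values, int n) {
    pthread_t tids[MAX_THREADS];
    int slots[MAX_THREADS];
    assert(n >= 0 && n <= MAX_THREADS);

    for (int i = 0; i < n; i++) {
        slots[i] = values[i];             /* each thread gets its own slot */
        int rc = pthread_create(&tids[i], NULL, square, &slots[i]);
        assert(rc == 0);
    }

    int sum = 0;
    for (int i = 0; i < n; i++) {
        pthread_join(tids[i], NULL);      /* wait before reading the slot */
        sum += slots[i];
    }
    return sum;
}
```

The same source should build unchanged on any conforming implementation (for example with `cc -pthread`), which is the point of specifying behavior rather than implementation.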

Scheduler Activations • Goal: mimic the functionality of kernel threads • gain the performance of user-space threads • Avoid unnecessary user/kernel transitions • Kernel assigns virtual processors to each process • allows the runtime system to allocate threads to processors • Problem: fundamental reliance on the kernel (lower layer) calling procedures in user space (higher layer)

Thread Mgmt. • How to make threads react like threads and not processes • After a process is created by the OS, it can spawn threads without kernel knowledge, so how does the kernel control them, say, for termination? • Static threads: • # of threads determined at compile time • each thread assigned a specific stack • very simple, very inflexible • Dynamic threads: • on-demand creation • thread creation calls (pointer to procedure, stack size allocation, scheduling, …) • Access Condition Issues?
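For the dynamic case, a thread creation call with an explicit stack size might look like the sketch below, assuming POSIX thread attributes. `task` and `spawn_with_stack` are hypothetical names, and the stack size is whatever the caller requests.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

/* Thread body: record that the thread actually ran. */
static void *task(void *arg) {
    *(int *)arg = 1;
    return NULL;
}

/* Creates one thread on demand with the requested stack size.
   Returns 0 if the thread was created and ran, -1 otherwise. */
int spawn_with_stack(size_t stack_bytes) {
    pthread_attr_t attr;
    pthread_t tid;
    int ran = 0;

    if (pthread_attr_init(&attr) != 0)
        return -1;
    /* Fails with EINVAL if the size is below the implementation minimum. */
    if (pthread_attr_setstacksize(&attr, stack_bytes) != 0) {
        pthread_attr_destroy(&attr);
        return -1;
    }
    int rc = pthread_create(&tid, &attr, task, &ran);  /* pointer to procedure */
    pthread_attr_destroy(&attr);
    if (rc != 0)
        return -1;

    pthread_join(tid, NULL);
    assert(ran == 1);
    return 0;
}
```

A call such as `spawn_with_stack(1 << 20)` requests a 1 MiB stack; a static-thread design would instead fix both the thread count and the stacks before the program starts.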

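Making Single-Threaded Code Multithreaded • Conflicts between threads over the use of a global variable! • Example: the CPU scheduler switches to T2 before T1 has read the global variable (e.g., errno set by an access call); T2 overwrites it and T1's access code is lost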

Making Single-Threaded Code Multithreaded • Avoid global variables completely? • Allow threads to have private global variables: each thread keeps a private copy of errno and other global variables
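Private global variables can be sketched with C11 thread-local storage, assuming a compiler that supports `_Thread_local`. The variable `my_errno` is a hypothetical stand-in for errno, and `main_copy_after_workers` is an illustrative helper. Each thread writes its own copy, so the caller's copy is untouched.

```c
#include <assert.h>
#include <pthread.h>

/* Hypothetical errno-like variable: one private copy per thread. */
static _Thread_local int my_errno;

static void *worker(void *arg) {
    int *slot = arg;
    my_errno = *slot;          /* writes this thread's copy only */
    *slot = my_errno * 10;     /* reads the same private copy back */
    return NULL;
}

/* Runs two workers and returns the calling thread's own copy of my_errno. */
int main_copy_after_workers(int results[2]) {
    pthread_t tids[2];
    my_errno = 5;              /* the caller's private copy */
    results[0] = 1;
    results[1] = 2;
    for (int i = 0; i < 2; i++) {
        int rc = pthread_create(&tids[i], NULL, worker, &results[i]);
        assert(rc == 0);
    }
    for (int i = 0; i < 2; i++)
        pthread_join(tids[i], NULL);
    return my_errno;           /* unchanged by the workers */
}
```

Older code bases reach the same effect with `pthread_key_create` and `pthread_setspecific`; the storage class keyword is just the shorter route.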

Thread Mgmt.: Processes/Threads? • # of resources in a system: finite • Traditional Unix (life was easy!) • single thread of control • multiprogramming: 1 process actually executing • non-preemptive processes • Distributed systems, multi-threading, etc. • How do we handle issues of resource constraints, ordering of processes/threads, precedence relations, access control for global parameters, shared memory and IPC such that the solutions are fair, efficient, and deadlock/race-free?

Race Conditions/Mutual Exclusion • The segment of a process's code where a shared resource is accessed (changing global variables, writing files, etc.) is called the Critical Section (CS) • If several processes access and modify shared data concurrently, the outcome can depend solely on the order of accesses (sans ordered control): Race Condition • In a situation involving shared memory, shared files, shared address spaces and IPC, how do we find ways of prohibiting more than 1 process from accessing shared data at the same time and of providing ordered access? Mutual Exclusion (ME) [Generalization of the Race Condition Problems]
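One common way to enforce mutual exclusion between threads is a mutex around the critical section. The sketch below assumes POSIX threads; `counter`, `add_many`, and `count_with_mutex` are hypothetical names. Without the lock, the increments from different threads could interleave and some updates would be lost.

```c
#include <assert.h>
#include <pthread.h>

#define MAX_THREADS 8

static long counter;                                  /* shared data */
static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;

static void *add_many(void *arg) {
    long n = *(const long *)arg;
    for (long i = 0; i < n; i++) {
        pthread_mutex_lock(&counter_lock);            /* enter critical section */
        counter++;                                    /* read-modify-write */
        pthread_mutex_unlock(&counter_lock);          /* leave critical section */
    }
    return NULL;
}

/* Runs nthreads threads that each increment the counter per_thread times. */
long count_with_mutex(int nthreads, long per_thread) {
    pthread_t tids[MAX_THREADS];
    assert(nthreads > 0 && nthreads <= MAX_THREADS);
    counter = 0;
    for (int i = 0; i < nthreads; i++) {
        int rc = pthread_create(&tids[i], NULL, add_many, &per_thread);
        assert(rc == 0);
    }
    for (int i = 0; i < nthreads; i++)
        pthread_join(tids[i], NULL);
    return counter;
}
```

With the lock in place the result is always `nthreads * per_thread`, whatever order the scheduler picks; removing the lock and unlock calls turns the same program into a demonstration of the race condition.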