Types of research design – experiments

Types of research design – experiments • Chapter 8 in Babbie & Mouton (2001) • Introduction to all research designs • All research designs have specific objectives they strive for • They have different strengths and limitations • They have validity considerations Source: web.uct.ac.za/.../Types%20of%20research%20d...

Validity considerations • When we say that a knowledge claim (or proposition) is valid, we make a JUDGEMENT about the extent to which the relevant evidence supports the truth of that claim • Is the interpretation of the evidence given the only possible one, or are there other plausible ones? • "Plausible rival hypotheses" = potential alternative explanations/claims • e.g. New York City's "zero tolerance" crime fighting strategy in the 1980s and 1990s - the reverse of the "broken windows" effect

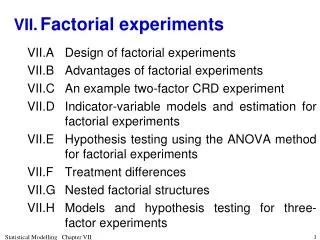

The logic of causal social research in the controlled experiment • Explanatory rather than descriptive • Different from correlational research - one variable (the IV) is manipulated and the effect of that manipulation is observed on a second variable (the DV) • If … then … • E.g. • "Animals respond aggressively to crowding" (causal) • "People with premarital sexual experience have more stable marriages" (noncausal)

Three pairs of components: • Independent and dependent variables • Pre-testing and post-testing • Experimental and control groups

Components • Variables • Dependent (DV) • Independent (IV) • Pre-testing and post-testing • O X O • Experimental and control groups • To off-set the effects of the experiment itself; to detect effects of the experiment itself

The generic experimental design: • R O1 X O2 (experimental group) / R O3 O4 (control group), where R = random assignment, O = observation, X = treatment • The IV is an active variable; it is manipulated • The participants who receive one level of the IV are equivalent in all ways to those who receive other levels of the IV
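
One common way to read the generic design numerically is to compare the change in the experimental group (O2 - O1) with the change in the control group (O4 - O3). The sketch below assumes the four observations are group means; the function name `difference_in_gains` and the example numbers are hypothetical and are not taken from the slides.

```python
def difference_in_gains(o1: float, o2: float, o3: float, o4: float) -> float:
    """Estimate the effect of X in the design  R O1 X O2 / R O3 O4.

    o1, o2: pre-test and post-test means of the experimental group.
    o3, o4: pre-test and post-test means of the control group.
    """
    experimental_gain = o2 - o1   # change with the treatment
    control_gain = o4 - o3        # change without the treatment
    return experimental_gain - control_gain


# Hypothetical means: both groups start at 50; the experimental group
# ends at 75 and the control group at 60.
effect = difference_in_gains(50, 75, 50, 60)
print(effect)
```

Subtracting the control group's gain removes whatever change would have happened without the treatment, which is why the control group matters in this design.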

Sampling • 1. Selecting subjects to participate in the research • Careful sampling to ensure that results can be generalized from sample to population • The relationship found might only exist in the sample; need to ensure that it exists in the population • Probability sampling techniques

Sampling • 2. How the sample is divided into two or more groups is important • The aim is to make the groups similar when they start off • randomization - every subject has an equal chance of being assigned to each group • matching - similar to quota sampling procedures • match the groups in terms of the most relevant variables; e.g. age, sex, and race
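
Randomization can be sketched in a few lines. This is a minimal illustration, assuming two equally sized groups and a hypothetical list of participant IDs; the function name `randomize` is made up for this example.

```python
import random


def randomize(subjects, seed=None):
    """Randomly split subjects into an experimental and a control group.

    Shuffling gives every subject the same chance of landing in either group.
    """
    rng = random.Random(seed)      # seeded only so the example is repeatable
    shuffled = list(subjects)      # copy, so the caller's list is untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


participants = [f"P{i:02d}" for i in range(1, 21)]  # 20 hypothetical IDs
experimental, control = randomize(participants, seed=1)
print(len(experimental), len(control))
```

Matching would instead pair subjects on variables such as age, sex, and race before assigning one member of each pair to each group.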

Variations on the standard experimental design • One-shot case study X O • No real comparison

A famous one-group posttest-only design • Milgram's study on obedience • Obedience to authority • The willingness of subjects to follow the experimenter's (E's) orders to give painful electrical shocks to another subject • A real, important issue here: how could "ordinary" citizens, like many Germans during the Nazi period, do these incredibly cruel and brutal things? • If a person is under allegiance to a legitimate authority, under what conditions will the person defy the authority if s/he is asked to carry out actions clearly incompatible with basic moral standards?

One-group pre-test post-test design • O1 X O2

Example • We want to find out whether a family literacy programme enhances the cognitive development of preschool-age children. • Find 20 families with a 4-year-old child, and enrol each family in a high-quality family literacy programme • Administer a pre-test to the 20 children - they score a mean of, say, 50 on the cognitive test • The families participate in the programme for twelve months • Administer a post-test to the 20 children; now they score a mean of 75 on the test - a gain of 25

Two claims/conclusions: • 1 The children gained 25 points on average in terms of their cognitive performance • 2 The family literacy programme caused the gain in scores • VALIDITY - are there rival explanations for the second claim?
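
The gap between the two claims can be made concrete with a toy calculation. The sketch below assumes, purely for illustration, that a programme effect and twelve months of maturation simply add up; the function `observed_gain` and both scenarios are hypothetical.

```python
def observed_gain(programme_effect: float, maturation: float) -> float:
    """Pre-test to post-test gain when both influences simply add up.

    The additive model is an assumption made for illustration only.
    """
    pretest = 50.0
    posttest = pretest + programme_effect + maturation
    return posttest - pretest


# Scenario A: the programme produces the whole gain.
gain_a = observed_gain(programme_effect=25, maturation=0)
# Scenario B: the children simply matured over twelve months.
gain_b = observed_gain(programme_effect=0, maturation=25)
print(gain_a, gain_b)
```

Both scenarios yield the same O1 and O2, so a one-group pre-test post-test design supports claim 1 but cannot, on its own, separate the programme from a rival explanation such as maturation.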

Static-group comparison • X O (experimental group) / O (comparison group)

Evaluating research (experiments) • We know the structure of research • We understand designs • We know the requirements of "good" research • Then we can evaluate a study • Is it good? Can we believe its conclusions? • Back to plausible rival hypotheses

Validity in designs • If the design is not valid, then the conclusions drawn are not supported; it is like not doing research at all • Validity of designs comes in two parts: • Internal validity • can the design sustain the conclusions? • External validity • can the conclusions be generalized to the population?

Internal validity • Each design is only capable of supporting certain types of conclusions • e.g. only experiments can support conclusions about causality • Says nothing about whether the results can be applied to the real world (generalization) • Generally, the more controlled the situation, the higher the internal validity • The conclusions drawn from experimental results may not accurately reflect what has gone on in the experiment itself

Sources of internal invalidity • These sources are often discussed as part of experiments, but can be applied to all designs (e.g. see reactivity) • History • Historical events may occur that will be confounded with the IV • Especially in field research (compare the control in a laboratory, e.g. nonsense syllables in memory studies)

Maturation • Changes over time can be caused by a natural learning process • People naturally grow older, tired, or bored over time

Testing (reactivity) • People realize they are being studied, and respond the way they think is appropriate • The very act of studying something may change it • In qualitative research, the "on stage" effects

The Hawthorne studies • Improved performance because of the researcher's presence - people became aware that they were in an experiment, or that they were given special treatment • Especially for people who lack social contacts, e.g. residents of nursing homes, chronic mental patients

Placebo effect • When a person expects a treatment or experience to change her/him, the person changes, even when the "treatment" is known to be inert or ineffective • Medical research • "The bedside manner", or the power of suggestion

Experimenter expectancy • Pygmalion effect - self-fulfilling prophecies of e.g. teachers' expectancies about student achievement • Experimenters may prejudge their results - experimenter bias • Double blind experiments: • Both the researcher and the research participant are "blind" to the purpose of the study. • They don't know what treatment the participant is getting

Instrumentation • Instruments with low reliability lead to inaccurate findings/missing phenomena • e.g. human observers become more skilled over time (from pretest to posttest) and so report more accurate scores at later time points

Statistical regression to the mean • Studying extreme scorers can lead to inflated differences, which would not occur in moderate scorers: on retesting, extreme scores tend to move back toward the average even without any treatment
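
Regression to the mean is easy to see in a small simulation. The sketch below is illustrative only: it assumes each observed score is a stable true score plus independent measurement noise, and all distribution parameters and the sample size are invented for the example.

```python
import random
import statistics

rng = random.Random(0)   # fixed seed so the run is repeatable
N = 2000

# Each observed score = stable true score + independent measurement noise.
true_scores = [rng.gauss(50, 10) for _ in range(N)]
test1 = [t + rng.gauss(0, 10) for t in true_scores]
test2 = [t + rng.gauss(0, 10) for t in true_scores]  # no treatment in between

# Select the 10% of subjects with the highest scores on the first test.
cutoff = sorted(test1, reverse=True)[N // 10 - 1]
extreme = [i for i in range(N) if test1[i] >= cutoff]

mean_first = statistics.mean(test1[i] for i in extreme)
mean_retest = statistics.mean(test2[i] for i in extreme)
print(round(mean_first, 1), round(mean_retest, 1))
```

The extreme group scores lower on the retest although nothing was done to it, so a study that selects subjects for their extreme pre-test scores can mistake this drift for a treatment effect.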

Selection biases • Selecting subjects for the study, and assigning them to the E-group and C-group • Look out for studies using volunteers

Attrition • Sometimes called experimental (or subject) mortality • If subjects drop out, the results are biased toward those who remained • e.g. comparing the effectiveness of family therapy with discussion groups for treatment of drug addiction • addicts with the worst prognosis are more likely to drop out of the discussion group • this will make it look like family therapy does less well than discussion groups, because the "worst cases" were still in the family therapy group
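
The drug-addiction example can be illustrated with made-up numbers. In this sketch the outcome scores are hypothetical and deliberately identical in both groups, so any difference in the final means comes from attrition alone.

```python
import statistics

# Hypothetical outcome scores (higher = better). Both groups are identical
# at the start, so neither treatment is actually more effective.
family_therapy = [20, 30, 40, 50, 60, 70, 80, 90]
discussion_group = [20, 30, 40, 50, 60, 70, 80, 90]

# The two subjects with the worst prognosis drop out of the discussion group.
discussion_completers = sorted(discussion_group)[2:]

mean_family = statistics.mean(family_therapy)
mean_discussion = statistics.mean(discussion_completers)
print(mean_family, mean_discussion)
```

The discussion group ends with the higher mean only because its weakest cases are no longer counted, which is the bias the slide describes.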

Diffusion or imitation of treatments • When subjects can communicate with each other, they may pass on some information about the treatment (IV)

Compensation • In real life, people may feel sorry for the C-group, which does not get "the treatment", and try to give them something extra • e.g. compare usual day care for street children with an enhanced day treatment condition • service providers may very well complain about inequity, and provide some enhanced service to the children receiving usual care

Compensatory rivalry • The C-group may "work harder" to compete better with the E-group

Demoralization • Opposite to compensatory rivalry • The C-group may feel deprived, and give up • e.g. giving unemployed high school dropouts a second chance at completing matric via a special education programme • if we assign some of them to a control group that receives "no treatment", they may very well become profoundly demoralized

External validity • Can the findings of the study be generalized? • Do they speak only of our sample, or of a wider group? • To what populations, settings, treatment variables (IVs), and measurement variables can the findings be generalized?

External validity • Mainly questions about three aspects: • Research participants • Independent variables, or manipulations • Dependent variables, or outcomes • Says nothing about the truth of the result that we are generalizing • External validity only has meaning once the internal validity of a study has been established • Internal validity is the basic minimum without which an experiment is uninterpretable

External validity • Our interest in answering research questions is rarely restricted to the specific situation studied - our interest is in the variables, not the specific details of a piece of research • But studies differ in many ways, even if they study the same variables: • operational definitions of the variables • subject population studied • procedural details • observers • settings • Generally, bigger samples with valid measures lead to better external validity

Sources of external invalidity • Subject selection - selecting a sample that does not represent the population well will prevent generalization • Interaction between the testing situation and the experimental stimulus • When people have been sensitized to the issues by the pre-test • They respond differently to the questionnaires the second time (post-test) • Operationalization

Operationalization • We take a variable with wide scope and operationalize it in a narrow fashion • Will we find the same results with a different operationalization of the same variable?

Field experiments • "natural" - e.g. disaster research • Static-group comparison type • Non-equivalent experimental and control groups

Strengths and weaknesses • Strengths • Control • Manipulating the IV • Sorting out extraneous variables • Weaknesses • Artificiality - a generalization problem • Expense • Limited range of questions