RStats Statistics and Research Camp 2014


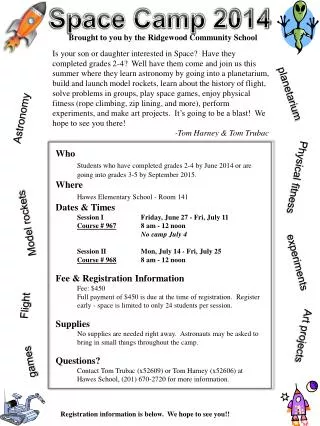

The RStats Statistics and Research Camp 2014, led by Dr. Helen Reid of Missouri State University, focuses on keeping current with quantitative methods in research. The camp covers best practices and common pitfalls in data analysis, including the benefits and limitations of Null Hypothesis Significance Testing (NHST). Participants learn to apply advanced techniques to improve their research and to make professional connections. The agenda includes sessions on data analysis best practices, moderated regression, effect size and power analysis, meta-analysis, and structural equation modeling.


Presentation Transcript

Helen Reid, PhD Dean of the College of Health and Human Services Missouri State University

Welcome • Goal: keeping up with advances in quantitative methods • Best practices: use familiar tools in new situations • Avoid common mistakes Todd Daniel, PhD RStats Institute

Coffee Advice Ronald A. Fisher

Cambridge, England 1920 Box, 1976 Dr. Muriel Bristol

Familiar Tools • Null Hypothesis Significance Testing (NHST) • a.k.a. Null Hypothesis Decision Making • a.k.a. Statistical Hypothesis Inference Testing p < .05 The probability of finding these results given that the null hypothesis is true

Benefits of NHST • All students trained in NHST • Easier to engage researchers • Results are not complex Everybody is doing it

Statistically Significant Difference What does p < .05 really mean? Common misreadings, none of which is correct: • There is a 95% chance that the alternative hypothesis is true • This finding will replicate 95% of the time • If the study was repeated, the null would be rejected 95% of the time

The Earth is Round, p < .05 What you want… • The probability that an hypothesis is true given the evidence What you get… • The probability of the evidence assuming that the (null) hypothesis is true. Cohen, 1994

Null: There are no flying pigs H0: P = 0 • Random sample of 30 pigs • One can fly 1/30 = .033 • What kind of test? • Chi-Square? • Fisher exact test? • Binomial? Why do you even need a test?

Daryl Bem and ESP • Assumed random guessing p = .50 • Found subject success of 53%, p < .05 • Too much power? • Everything counts in large amounts • What if Bem set p = 0 ? • One clairvoyant v. group that guesses 53% In the real world, the null is always false.
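
The "too much power" point can be illustrated with a minimal sketch: hold the hit rate at 53% and vary only the sample size. The sample sizes below are hypothetical and are not taken from Bem's studies; the p-value is an exact one-sided binomial tail under the null of random guessing (p = .50).

```python
from math import comb

def binom_upper_tail(k: int, n: int) -> float:
    """Exact one-sided p-value P(X >= k) for X ~ Binomial(n, 0.5)."""
    # Under p = .50 every outcome sequence has probability 1 / 2**n,
    # so the tail probability is a ratio of two exact integers.
    favourable = sum(comb(n, i) for i in range(k, n + 1))
    return favourable / 2 ** n

# Hypothetical sample sizes; the hit rate is fixed at 53% for each.
p_values = {n: binom_upper_tail(53 * n // 100, n) for n in (100, 1_000, 10_000)}

for n, p in p_values.items():
    print(f"n = {n:>6}: hit rate 53%, one-sided p = {p:.4g}")
```

The same 53% is nowhere near significant with 100 trials but falls below .05 once the number of trials is large enough, which is why the p-value alone says little about the size of an effect.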

Problems with NHST • Cohen (1994) reservations • Non-replicable findings • Poor basis for policy decisions • False sense of confidence NHST is “a potent but sterile intellectual rake who leaves in his merry path a long train of ravished maidens but no viable scientific offspring” - Paul Meehl Cohen, 1994

What to do then? • Learn basic methods that improve your research (learn NHST) • Learn advanced techniques and apply them to your research (RStats Camp) • Make professional connections and access professional resources

Agenda • 9:30 Best Practices (and Poor Practices) in Data Analysis • 11:00 Moderated Regression • 12:00 – 12:45 Lunch (with Faculty Writing Retreat) • 1:00 Effect Size and Power Analysis • 2:00 Meta-Analysis • 3:00 Structural Equation Modeling

RStats Statistics and Research Camp 2014 Best Practices and Poor Practices Session 1 R. Paul Thomlinson PhD Burrell

Poor Practices Common Mistakes

Mistake #1 No Blueprint

Mistake #2 Ignoring Assumptions Pre-Checking Data Before Analysis

Assumptions Matter • Data: I call you and you don’t answer. • Conclusion: you are mad at me. • Assumption: you had your phone with you. If my assumptions are wrong, it prevents me from looking at the world accurately

Assumptions for Parametric Tests • "Assumptions behind models are rarely articulated, let alone defended. The problem is exacerbated because journals tend to favor a mild degree of novelty in statistical procedures. Modeling, the search for significance, the preference for novelty, and the lack of interest in assumptions -- these norms are likely to generate a flood of nonreproducible results." • David Freedman, Chance 2008, v. 21 No 1, p. 60

Assumptions for Parametric Tests • "... all models are limited by the validity of the assumptions on which they ride." Collier, Sekhon, and Stark, Preface (p. xi) to Freedman, David A., Statistical Models and Causal Inference: A Dialogue with the Social Sciences. • Parametric tests based on the normal distribution assume: • Interval or Ratio Level Data • Independent Scores • Normal Distribution of the Population • Homogeneity of Variance

Assessing the Assumptions • Assumption of Interval or Ratio Data • Look at your data to make sure you are measuring using scale-level data • This is common and easily verified

Independence • Techniques are least likely to be robust to departures from assumptions of independence. • Sometimes a rough idea of whether or not model assumptions might fit can be obtained by either plotting the data or plotting residuals obtained from a tentative use of the model. Unfortunately, these methods are typically better at telling you when the model assumption does not fit than when it does.

Independence • Assumption of Independent Scores • Done during research construction • Each individual in the sample should be independent of the others • The errors in your model should not be related to each other. • If this assumption is violated: • Confidence intervals and significance tests will be invalid.

Assumption of Normality • You want your distribution to not be skewed • You want your distribution to not have kurtosis • At least, not too much of either

Normally Distributed Something or Other • The normal distribution is relevant to: • Parameters • Confidence intervals around a parameter • Null hypothesis significance testing • This assumption tends to get incorrectly translated as ‘your data need to be normally distributed’.

Assumption of Normality • Both skew and kurtosis can be measured with a simple test run for you in SPSS • Values exceeding +3 or -3 indicate a very skewed distribution
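
Outside SPSS, skew and kurtosis can be approximated by hand. The sketch below uses the plain moment-based formulas, which is an assumption on my part: SPSS reports bias-adjusted versions with standard errors, so its numbers will differ somewhat in small samples. The slide does not say whether the ±3 cutoff applies to the raw statistic or to the statistic divided by its standard error, so the sketch only reports the raw values. Both data sets are made up for illustration.

```python
from statistics import fmean

def skew_and_excess_kurtosis(values):
    """Return (skewness, excess kurtosis) computed from central moments."""
    n = len(values)
    mean = fmean(values)
    deviations = [v - mean for v in values]
    m2 = sum(d ** 2 for d in deviations) / n  # second central moment
    m3 = sum(d ** 3 for d in deviations) / n  # third central moment
    m4 = sum(d ** 4 for d in deviations) / n  # fourth central moment
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0      # 0 for a normal distribution
    return skewness, excess_kurtosis

# Hypothetical data sets.
symmetric = [1.0, 2.0, 3.0, 4.0, 5.0]
right_skewed = [1.0, 1.0, 2.0, 2.0, 3.0, 10.0]

for name, data in (("symmetric", symmetric), ("right_skewed", right_skewed)):
    skew, kurt = skew_and_excess_kurtosis(data)
    print(f"{name}: skew = {skew:.3f}, excess kurtosis = {kurt:.3f}")
```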

Kolmogorov-Smirnov Test Tests if data differ from a normal distribution Significant = non-Normal data Non-Significant = Normal data Non-Significant is the ideal Tests of Normality SPSSExam.sav

The P-P Plot Normal Not Normal

Histograms & Stem-and-Leaf Plots Normal-ish Bi-Modal SPSSExam.sav Double-click on Histogram in Output window to add the normal curve

When does the Assumption of Normality Matter? • Normality matters most in small samples • The central limit theorem allows us to forget about this assumption in larger samples. • In practical terms, as long as your sample is fairly large, outliers are a much more pressing concern than normality

Assessing the Assumptions • Assumption of Homogeneity of Variance • Only necessary when comparing groups • Levene’s Test

Assessing Homogeneity of Variance: Graphs Number of hours of ringing in ears after a concert Homogeneous Heterogeneous

Assessing Homogeneity of Variance: Numbers • Levene’s Test • Tests if variances in different groups are the same. • Significant = Variances not equal • Non-Significant = Variances are equal • Non-Significant is ideal • Variance Ratio (VR) • With 2 or more groups • VR = Largest variance/Smallest variance • If VR < 2, homogeneity can be assumed.
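
The variance ratio is simple enough to compute by hand. A minimal sketch, with made-up group names and scores, applying the VR < 2 rule from the slide:

```python
from statistics import variance

def variance_ratio(groups):
    """Largest sample variance divided by smallest sample variance."""
    variances = [variance(g) for g in groups]
    return max(variances) / min(variances)

# Hypothetical hours of ringing in the ears after two concerts.
ringing_hours = {
    "concert_a": [1.0, 2.0, 3.0],
    "concert_b": [2.0, 4.0, 6.0],
}

vr = variance_ratio(ringing_hours.values())
verdict = "homogeneity can be assumed" if vr < 2 else "variances look unequal"
print(f"VR = {vr:.2f}: {verdict}")
```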

Mistake #3 Ignoring Missing Data

Missing Data It is the lion you don’t see that eats you

Amount of Missing Data • APA Task Force on Statistical Inference (1999) recommended that researchers report patterns of missing data and the statistical techniques used to address the problems such data create • Report as a percentage of complete data • “Missing data ranged from a low of 4% for attachment anxiety to a high of 12% for depression.” • If calculating total or scale scores, impute the values for the items first, then calculate scale
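
Reporting the amount of missing data per variable takes only a count. A minimal sketch, assuming missing values are stored as `None`; the records and variable names are invented for illustration:

```python
# Hypothetical records; None marks a missing response.
records = [
    {"attachment_anxiety": 3.2, "depression": 11.0},
    {"attachment_anxiety": None, "depression": 14.0},
    {"attachment_anxiety": 2.8, "depression": None},
    {"attachment_anxiety": 4.1, "depression": None},
]

def percent_missing(rows, variable):
    """Percentage of rows in which `variable` is missing."""
    missing = sum(1 for row in rows if row[variable] is None)
    return 100.0 * missing / len(rows)

for variable in ("attachment_anxiety", "depression"):
    print(f"{variable}: {percent_missing(records, variable):.0f}% missing")
```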

Pattern of Missing Data • Missing Completely At Random (MCAR) • No pattern; not related to variables • Accidentally skipped one; got distracted • Missing At Random (MAR) • Pattern does not differ between groups • Not Missing At Random (NMAR) • Parents who feel competent are more likely to skip the question about interest in parenting classes

Pattern of Missing Data Distinguish between MCAR and MAR • Create a dummy variable with two values: missing and non-missing • SPSS: recode new variable • Test the relation between dummy variable and the variables of interest • If not related: data are either MCAR or NMAR • If related: data are MAR or NMAR • Little’s (1988) MCAR Test • Missing Values Analysis add-on module in SPSS 20 • If the p value for this test is not significant, the data are treated as MCAR
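
The dummy-variable check can be sketched without SPSS. The example below flags missing depression scores and compares age between the two groups using Welch's t statistic. The data are hypothetical, and Welch's t is my choice of test; the slide only says to "test the relation". A p-value would need a t distribution, which the standard library does not provide, so only the statistic is computed.

```python
from math import sqrt
from statistics import fmean, variance

# Hypothetical data; None marks a missing depression score.
ages = [25.0, 31.0, 42.0, 38.0, 55.0, 61.0, 47.0, 29.0]
depression = [10.0, None, 14.0, 9.0, None, None, 12.0, 11.0]

# Dummy variable: 1 = missing, 0 = non-missing.
missing_flag = [1 if score is None else 0 for score in depression]

def welch_t(group_a, group_b):
    """Welch's t statistic for two independent groups."""
    se = sqrt(variance(group_a) / len(group_a) + variance(group_b) / len(group_b))
    return (fmean(group_a) - fmean(group_b)) / se

age_when_missing = [a for a, flag in zip(ages, missing_flag) if flag == 1]
age_when_present = [a for a, flag in zip(ages, missing_flag) if flag == 0]

t_stat = welch_t(age_when_missing, age_when_present)
print(f"Welch t for age by missingness: {t_stat:.3f}")
```

A large statistic would suggest that missingness is related to age, which rules out MCAR for this variable.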

What if my Data are NMAR? • You’re not screwed • Report the pattern and amount of missing data

Deletion Listwise Deletion • Cases with any missing values are deleted from analysis • Default procedure for SPSS • Problems • If the cases are not MCAR remaining cases are a biased subsample of the total sample • Analysis will be biased • Loss of statistical power • Dataset of 302 respondents dropped to 154 cases
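
Listwise deletion amounts to keeping only the complete cases. A minimal sketch with invented cases, showing how quickly the sample shrinks:

```python
# Hypothetical cases; None marks a missing value.
cases = [
    {"id": 1, "x": 4.0, "y": 2.0, "z": 7.0},
    {"id": 2, "x": None, "y": 3.0, "z": 6.0},
    {"id": 3, "x": 5.0, "y": None, "z": 8.0},
    {"id": 4, "x": 6.0, "y": 1.0, "z": 9.0},
]

def listwise_delete(rows):
    """Keep only rows with no missing value on any variable."""
    return [row for row in rows if all(v is not None for v in row.values())]

complete = listwise_delete(cases)
print(f"{len(cases)} cases before, {len(complete)} after listwise deletion")
```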

Deletion Pairwise Deletion • Cases are excluded only if data are missing on a required variable • Correlating five variables: case that was missing data on one variable would still be used on the other four • Problems • Uses different cases for each correlation (n fluctuates) • Difficult to compare correlations • May mess with multivariate analyses
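
The fluctuating n under pairwise deletion is easy to see by counting the usable cases for each pair of variables. A minimal sketch with invented columns:

```python
from itertools import combinations

# Hypothetical columns of equal length; None marks a missing value.
data = {
    "x": [4.0, None, 5.0, 6.0, 3.0],
    "y": [2.0, 3.0, None, 1.0, 4.0],
    "z": [7.0, 6.0, 8.0, None, None],
}

def pairwise_n(columns):
    """Number of cases with both values present, for every pair of variables."""
    counts = {}
    for a, b in combinations(columns, 2):
        counts[(a, b)] = sum(
            1
            for va, vb in zip(columns[a], columns[b])
            if va is not None and vb is not None
        )
    return counts

counts = pairwise_n(data)
for (a, b), n in counts.items():
    print(f"r({a}, {b}) would be based on n = {n}")
```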

Imputation Mean Substitution • Missing values are imputed with the mean value of that variable • Problems • Produces biased means with data that are MAR or NMAR • Underestimates variance and correlations • Experts strongly advise against this method
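
The variance problem with mean substitution shows up even in a tiny made-up example: the mean is unchanged, but every imputed value sits exactly at the center, so the spread shrinks.

```python
from statistics import fmean, variance

# Hypothetical scores; None marks a missing value.
scores = [2.0, 4.0, None, 6.0, 8.0, None]

observed = [s for s in scores if s is not None]
observed_mean = fmean(observed)

# Mean substitution: every missing score becomes the observed mean.
imputed = [observed_mean if s is None else s for s in scores]

print(f"mean:     observed = {fmean(observed):.2f}, imputed = {fmean(imputed):.2f}")
print(f"variance: observed = {variance(observed):.2f}, imputed = {variance(imputed):.2f}")
```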

Imputation Regression Substitution • Existing scores are used to predict missing values • Problems • Produces unbiased means under MCAR or MAR • Produces biases in the variances • Experts advise against this method

Imputation Pattern-Matching Imputation • Hot-Deck Imputation • Values are imputed by finding participants who match the case with missing data on other variables • Cold-Deck Imputation • Information from external sources is used to determine the matching variables • Does not require specialized programs • Has been used with survey data • Reduces the amount of variation in the data

Stochastic Imputation Stochastic Imputation Methods • Stochastic = random • Does not systematically change the mean; gives unbiased variance estimates • Maximum Likelihood (ML) Strategies • Observed data are used to estimate parameters, which are then used to estimate the missing scores • Provides “unbiased and efficient” parameters • Useful for exploratory factor analysis and internal consistency calculations

Stochastic Imputation Multiple Imputation (MI) • Create several imputed data sets (3 – 5) • Analyze each data set and save the parameter estimates • Average the parameter estimates to get an unbiased parameter estimate • Most complex procedure • Computer-intensive
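
The create, analyze, and average steps can be sketched as follows. This is a toy illustration only: the imputation step draws each missing value at random from the observed scores, which is a stand-in I have assumed in place of a real imputation model, and full multiple imputation also pools the variances of the estimates rather than only their average. The data, seed, and number of imputations are all hypothetical.

```python
import random
from statistics import fmean

# Hypothetical scores; None marks a missing value.
scores = [12.0, None, 15.0, 9.0, None, 11.0, 14.0, None]
observed = [s for s in scores if s is not None]

def impute_once(values, donors, rng):
    """Fill each missing value with a random draw from the observed scores."""
    return [rng.choice(donors) if v is None else v for v in values]

rng = random.Random(2014)  # fixed seed so the sketch is repeatable
m = 5                      # number of imputed data sets

# Analyze each imputed data set (here the "analysis" is just the mean)...
estimates = [fmean(impute_once(scores, observed, rng)) for _ in range(m)]

# ...then average the parameter estimates across data sets.
pooled_mean = fmean(estimates)
print(f"estimates: {[round(e, 2) for e in estimates]}")
print(f"pooled mean: {pooled_mean:.2f}")
```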