Parallel Computing at RIT

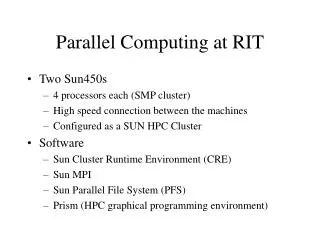



Presentation Transcript

Parallel Computing at RIT

- Two Sun450s
  - 4 processors each (SMP cluster)
  - High-speed connection between the machines
  - Configured as a Sun HPC Cluster
- Software
  - Sun Cluster Runtime Environment (CRE)
  - Sun MPI
  - Sun Parallel File System (PFS)
  - Prism (HPC graphical programming environment)

Your Environment

- First, CRE, MPI, and friends are available only on Parasite and Paradise
- Paths and all that
  - /opt/SUNWhpc is the root for the HPC tools
  - /opt/SUNWhpc/bin is where the executables live
  - /opt/SUNWhpc/include is where the header files live
- There are also docs and examples directories

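Hello.c

    #include <stdio.h>
    #include <string.h>
    #include <mpi.h>

    int main( int argc, char** argv )
    {
        int myRank;             /* rank of this process */
        int p;                  /* total number of processes */
        int source;             /* rank of the sending process */
        int dest = 0;           /* every greeting goes to process 0 */
        int tag = 0;            /* message tag */
        char message[ 100 ];    /* buffer for the greeting */
        MPI_Status status;      /* status of the receive */

        MPI_Init( &argc, &argv );
        MPI_Comm_rank( MPI_COMM_WORLD, &myRank );
        MPI_Comm_size( MPI_COMM_WORLD, &p );

        if ( myRank != 0 ) {
            /* Every process except 0 sends a greeting; the count
               includes the terminating '\0' */
            sprintf( message, "Greetings from process %d", myRank );
            MPI_Send( message, strlen( message ) + 1, MPI_CHAR,
                      dest, tag, MPI_COMM_WORLD );
        } else {
            /* Process 0 receives the greetings in rank order and prints them */
            for ( source = 1; source < p; source++ ) {
                MPI_Recv( message, 100, MPI_CHAR, source, tag,
                          MPI_COMM_WORLD, &status );
                printf( "%s (status = %d)\n", message, status.MPI_ERROR );
            }
        }

        MPI_Finalize();
        return 0;
    }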
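The send count in Hello.c is strlen( message ) + 1 so that the terminating '\0' travels with the text, and the receive side assumes the greeting fits in 100 chars. A minimal sketch of a helper that makes both points explicit is shown here; `format_greeting` is a hypothetical name and is not part of the original slides.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical helper, not part of the original slides.
 * Formats the greeting for `rank` into buf (capacity cap bytes) and
 * returns the number of chars to pass as the MPI_Send count, i.e. the
 * string length plus one for the terminating '\0'.
 * Returns -1 if the greeting does not fit in buf. */
int format_greeting(char *buf, size_t cap, int rank)
{
    int len;

    assert(buf != NULL);
    len = snprintf(buf, cap, "Greetings from process %d", rank);
    if (len < 0 || (size_t)len >= cap) {
        return -1;  /* encoding error or truncated output */
    }
    return len + 1;
}
```

In Hello.c, the non-zero ranks could call `format_greeting( message, sizeof message, myRank )` and pass the returned value as the count argument of MPI_Send, skipping the send if the result is -1.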

Compiling and Running

    parasite> cc hello.c -o hello -I/opt/SUNWhpc/include -R/opt/SUNWhpc/lib -L/opt/SUNWhpc/lib -lmpi
    parasite> mprun -np 4 hello
    Greetings from process 1 (status = 0)
    Greetings from process 2 (status = 0)
    Greetings from process 3 (status = 0)
    parasite> mprun -np 7 hello
    Greetings from process 1 (status = 0)
    Greetings from process 2 (status = 0)
    Greetings from process 3 (status = 0)
    Greetings from process 4 (status = 0)
    Greetings from process 5 (status = 0)
    Greetings from process 6 (status = 0)
    parasite> mprun -np 8 hello
    mprun: no_mp_jobs: Not enough resources available
    parasite>